Milwaukee County is already using artificial intelligence in small but concrete ways — and a county supervisor has pushed the question into the open by asking for annual, board-level reporting on what systems are in use, how they’re implemented, and what outcomes they produce. The request, introduced by Sup. Shawn Rolland, aims to create a transparent baseline so supervisors and the public can assess benefits, costs, equity impacts, privacy trade-offs, and workplace effects as adoption accelerates. At a January committee meeting county IT leaders confirmed that some AI-enabled security tools are in place, the county has joined an AI government cloud membership, and the county is licensing Microsoft Copilot for staff — but questions remain about definitions, metrics, procurement safeguards, and whether the county’s governance model will keep pace with rapid technical change. The resolution was paused for technical edits, but the debate it sparked highlights a universal governance problem for local governments moving into AI: the need to inventory use, measure impact, and bake in procurement and records safeguards before expansion takes on its own momentum.

Background / Overview

Artificial intelligence is no longer a futuristic experiment for local governments; it is a practical productivity and security lever that many counties are adopting incrementally. Milwaukee County’s internal IT shop, the Information Management Services Division (IMSD), told supervisors that AI is being used in security tooling and that the county has taken steps to access federated government AI resources, while also licensing Microsoft Copilot as an enterprise productivity assistant. The reported Copilot licensing cost aligns with the commonly quoted enterprise price of roughly US$30 per user per month (about US$360 per seat per year), a useful budgeting figure, though the exact SKU and contractual terms determine final cost and protections.

This combination of enterprise Copilot, security tooling, and membership in a government cloud community is an increasingly common posture among municipalities. It favors a tenancy‑bound assistant that can be governed through the county’s existing identity, data protection, and monitoring controls over unsanctioned consumer chatbots that send prompts into unknown public clouds. But it also shifts the governance burden onto procurement, tenant configuration, records retention, and staff training; those operational controls must be documented, enforced, and audited to realize benefits while minimizing legal and privacy risks.

What prompted the request

Sup. Shawn Rolland drafted a resolution asking IMSD to produce annual reports that list county AI systems or tools, explain how they’re used, and summarize outcomes. The resolution would also ask for examples of contractors and services where AI is in play and an assessment addressing governance, workforce impacts, data quality, equity, privacy, and risk management. Rolland framed the measure as a benchmarking and oversight tool rather than a mandate to expand AI.

County IT leaders told the committee the technology is already in use, but they warned that definitions can be fuzzy and that quantifying outcomes, such as efficiency gains, can be difficult without clear measurement approaches. One county official estimated Copilot license cost as approximately US$360 per person, and others flagged the problem of “AI” being used as a loose marketing label applied to simple automation. These were among the points that fed the committee’s decision to pause the resolution while its technical details were refined. (The underlying public reporting is the basis for this summary; where claims or numbers could not be independently verified they are treated as directional and flagged in the analysis below.)

Where Milwaukee County is using AI today (reported)

- Security tooling: County staff reported AI capabilities embedded in security and threat-detection tools. AI components are commonly used to enrich logs, surface anomalous activity, and prioritize incidents — functions that can materially improve security operations if tuned and governed correctly.

- Membership in a government AI/cloud community: The county said it had joined an AI government community cloud membership intended to provide vetted tools, shared best practices, or tenancy-based services tailored to public-sector needs. Such memberships can provide procurement pathways and technical guidance but do not substitute for contract-level protections.

- Microsoft Copilot licensing for staff: The county is licensing Microsoft Copilot, an enterprise assistant that integrates with Microsoft 365, as part of its productivity toolset. The per-seat list pricing commonly used for budgeting is about US$30 per user per month, or roughly US$360 annually, though the precise contractual terms, included features, and whether the tenant opted into training/data sharing determine the real legal and privacy posture.

Why a board-level AI inventory and annual report matters

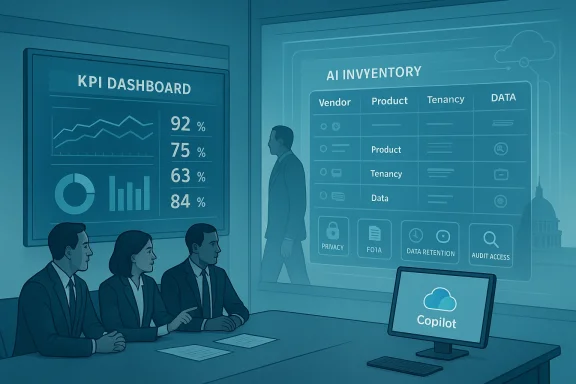

Milwaukee County’s proposed reporting requirement gets at a core transparency problem: without a central inventory, AI slips into departmental workflows in fragmented ways (vendor dashboards, contractor services, and staff “shadow AI” experiments), and supervisors lack an accurate picture of exposure and value.

A robust annual AI report would provide the board with:

- A single, auditable inventory of AI systems (vendor name, product, purpose, tenant vs. third-party), including contractor uses.

- Contractual summaries (data residency, non‑training clauses, deletion/export rights, audit access).

- Technical posture (tenant configuration, Purview/Data Loss Prevention rules, logging, prompt telemetry retention).

- Operational metrics (who uses the tool, what tasks are performed, time saved, error/validation rates, and incidents).

- Equity and privacy assessments (how systems affect protected groups; whether outputs are subject to FOIA/records rules).

- Risk register and mitigation plans (where hallucination, bias, or data leakage could cause harm).
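The inventory items listed above map naturally onto a simple record structure. The following is a minimal sketch, not the county's actual schema: the class name `AISystemRecord`, its field names, the `REQUIRED_TERMS` set, and the example vendor are all hypothetical and chosen only to mirror the bullets.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical set of clauses the board might require; taken from the
# contractual-summary bullet above, not from any real county policy.
REQUIRED_TERMS = {"non-training", "deletion/export", "audit access"}


@dataclass
class AISystemRecord:
    """One row of an illustrative AI inventory (all fields are assumptions)."""
    vendor: str
    product: str
    purpose: str
    hosting: str                      # "tenant" or "third-party"
    used_by_contractor: bool = False
    contract_terms: List[str] = field(default_factory=list)
    technical_controls: List[str] = field(default_factory=list)
    open_risks: List[str] = field(default_factory=list)


def missing_terms(record: AISystemRecord) -> List[str]:
    """Return required contract clauses that the record does not list."""
    return sorted(REQUIRED_TERMS - set(record.contract_terms))


# Placeholder entry: a fictional vendor, not a real county contract.
example = AISystemRecord(
    vendor="ExampleVendor",
    product="Example Assistant",
    purpose="drafting and summarization",
    hosting="tenant",
    contract_terms=["non-training", "audit access"],
    technical_controls=["DLP rules", "logging"],
)

print(missing_terms(example))  # ['deletion/export']
```

Keeping contract terms and technical controls on the same record as the system itself is one way to let a reviewer see, per system, which safeguards are still missing.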

The governance gap: what county IT managers warned about

County IT leaders acknowledged three practical challenges that make a clean AI inventory and quantified outcomes difficult:

- Definition drift and marketing labels. Many vendors market “AI features” that are thin automation or parameterized rules. Distinguishing true generative models from scripted automation can be messy and requires technical review. The county deputy CIO warned that the label “AI” is sometimes applied loosely, which complicates inventory and oversight.

- Measurement challenges. Demonstrating productivity improvements (for example, proving that Copilot saved a given number of minutes per task or per month) demands baseline measurements, consistent logging, and a methodology that ties time saved to tangible budget impacts. IT staff are often confident the efficiency gains outweigh license costs, but proving that in audit-ready detail requires careful pilot documentation and the raw workbook. Municipal pilots elsewhere produced headline savings, but without the pilot workbooks those figures were directionally meaningful rather than independently auditable.

- Operational workload. Producing a high‑quality annual report requires IT time and coordination with procurement, legal, records, and affected departments. IT leaders worried about creating incremental reporting work that produces little practical value unless the scope is tightly defined and aligned with policy decisions. The committee paused the resolution to refine those technical details. (This procedural pause is the immediate status quo reported.)

What good AI governance looks like — practical prescriptions for Milwaukee County

If the county proceeds with an inventory and annual report, it should demand operational detail and contractual assurances that make governance enforceable. Based on municipal best practices and documented municipal pilots elsewhere, the county board should ask IMSD and other departments to provide the following, at minimum:

- Inventory and classification

- Catalogue each AI system, the vendor, purpose, whether it’s tenancy-bound or a third-party service, and whether it touches confidential or regulated data.

- Identify contractor or vendor services that embed AI and include subcontractors.

- Tag each system with a risk level (low/medium/high) based on whether it is decision-facing, its exposure to PII, and potential equity impacts.

- Procurement and contractual protections

- Produce redacted copies or summaries of AI-related contract terms: does the vendor have non‑training clauses, prompt/telemetry retention limits, explicit breach notification timelines, and audit access?

- Require enforceable export/deletion rights and documented data handling commitments for any vendor that processes county data. Vendor marketing alone is not sufficient for legal protection.

- Tenant attestation and technical controls

- For tenancy-bound services (like enterprise Copilot), require an IT attestation that tenant settings (Purview DLP, conditional access, retention) are configured correctly and periodically audited.

- Publish a short technical annex specifying what controls are in place and when the last audit or configuration review occurred.

- Records, FOIA and prompt retention policy

- Define whether prompts or outputs are official records; set retention windows and redaction workflows to avoid surprises during open records requests.

- Clarify disclosure expectations when AI materially influences a document or decision that is subject to public disclosure. Municipal policies elsewhere require disclosure and human sign-off for AI‑assisted public documents.

- Pilot workbooks and measurement methodology

- Mandate that any pilot producing headline time-savings publish the pilot workbook: raw metrics, sampling methods, how time savings were measured, hourly rates used, and extrapolation methods. Headline savings are useful but must be auditable before being used in multi-year budget planning.

- Role‑based access, training, and stewardship

- Issue Copilot or other seat licenses by role and business need only; require mandatory prompt‑hygiene training for licensees and create departmental AI stewards who certify proper usage.

- Meter usage KPIs (time saved, human edit rates, incidents, cost per seat) so the board can track whether benefits scale as promised.

- Equity and privacy impact assessments

- Require a short Data Protection or Equity Impact Assessment (DPIA/EIA) for any system that affects residents or makes decisions that could influence services, enforcement, eligibility, or benefits.

- Make DPIA/EIA results part of the annual report. These assessments should be public, or redacted only for legitimate security concerns.

- Incident and governance reporting

- Specify incident reporting thresholds (e.g., any suspected data leakage or hallucination that causes incorrect public communication) and a remediation timeline; report incidents in the annual summary with anonymized lessons learned.

- Establish a small cross-departmental review committee to keep policies current and to triage new procurement requests.
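The risk-tagging item in the list above (low/medium/high by decision-facing use, PII exposure, and equity impact) can be made concrete with a small rule. This is only a sketch under an assumed policy: the function name `risk_tier` and the specific thresholds are hypothetical, and a real board would set its own rule.

```python
def risk_tier(decision_facing: bool, touches_pii: bool, equity_impact: bool) -> str:
    """Assign an illustrative risk tier from three yes/no factors.

    Assumed rule (not from the source): any decision-facing system is
    "high"; otherwise two or more factors is "high", one is "medium",
    and none is "low".
    """
    factors = sum([decision_facing, touches_pii, equity_impact])
    if decision_facing or factors >= 2:
        return "high"
    if factors == 1:
        return "medium"
    return "low"


# A drafting aid that only touches public material.
print(risk_tier(False, False, False))  # low
# A tool that processes resident records but makes no decisions.
print(risk_tier(False, True, False))   # medium
# Anything that influences eligibility or enforcement decisions.
print(risk_tier(True, False, False))   # high
```

Treating decision-facing use as automatically high risk reflects the article's recommendation to forbid unsupervised use in those contexts until an impact assessment is done.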

Benefits and the hard accounting problem

AI assistants like Copilot can reduce repetitive drafting time, accelerate report preparation, and surface synthesized information faster. In many pilots, organizations report plausible time savings for writing-heavy tasks and anticipate net productivity gains. But turning time saved into a fiscal saving is non-trivial: you must decide whether time saved represents real cost avoidance (reduced FTEs) or redeployment of staff to higher-value work. To make a budgetary case you need:

- Baseline time-on-task measurements.

- Repeatable logging and telemetry of AI use and human edit time.

- Clear assumptions for annualization (how many users, hours saved per user, replacement vs. redeployment).

Municipal reporting that claimed specific dollar savings has frequently done so without releasing the raw workbook, making the headline numbers directional rather than audit-ready — and the board should insist on the workbook before relying on such figures for budget planning.
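The accounting problem described above can be sketched as a short calculation. Everything numeric here is a placeholder: the US$30 monthly price is the commonly quoted list figure, while the seat count, hours saved, hourly rate, and the `cash_share` parameter (the fraction of time value that becomes an actual budget reduction, with 0 meaning pure redeployment) are assumptions for illustration only.

```python
def annual_license_cost(seats: int, monthly_price: float = 30.0) -> float:
    """Yearly license spend at a flat per-seat monthly price."""
    return seats * monthly_price * 12


def annual_time_value(seats: int, hours_saved_per_month: float, hourly_rate: float) -> float:
    """Dollar value of staff time saved per year (not yet a cash saving)."""
    return seats * hours_saved_per_month * 12 * hourly_rate


def budget_impact(seats, hours_saved_per_month, hourly_rate,
                  cash_share=0.0, monthly_price=30.0):
    """Net budget effect: realized cash savings minus license cost."""
    value = annual_time_value(seats, hours_saved_per_month, hourly_rate)
    return value * cash_share - annual_license_cost(seats, monthly_price)


def break_even_hours_per_month(monthly_price: float, hourly_rate: float) -> float:
    """Hours each user must save monthly for time value to equal seat cost."""
    return monthly_price / hourly_rate


# Placeholder inputs: 100 seats, 2 hours saved per user per month, US$40/hour.
print(annual_license_cost(100))                       # 36000.0
print(annual_time_value(100, 2, 40.0))                # 96000.0
print(budget_impact(100, 2, 40.0, cash_share=0.0))    # -36000.0
print(break_even_hours_per_month(30.0, 40.0))         # 0.75
```

With `cash_share` at zero the budget line shows only the license cost, even though the time value is positive, which is why the redeployment-versus-avoidance assumption has to be stated explicitly in any workbook.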

Risks: technical, legal, and ethical

- Data leakage and privacy exposures. Even tenancy-bound assistants require correct tenant configuration and procurement clauses that prevent prompt telemetry from being used for vendor model training or retained beyond acceptable windows. Without those controls, sensitive prompts or outputs can be exposed.

- Hallucination and decision‑facing errors. Generative models can invent plausible but false statements; any content that materially influences enforcement, legal determinations, or public health/safety must require human verification and probably be excluded from unsupervised use.

- Records and FOIA exposure. If outputs or prompts become part of the official record, retention and redaction rules must be enforced to avoid later surprises in open-records requests.

- Vendor lock-in and procurement fragility. Marketing claims do not replace enforceable contract terms. Municipalities must insist on deletion/export rights, audit access, and clear exit/transition terms to avoid being trapped by a vendor’s platform.

- Shadow AI and compliance drift. A ban on consumer models for official work often drives staff to try public tools on personal devices. Technical controls, user-friendly sanctioned tools, and rapid IT support are needed to reduce noncompliant workarounds.

How to measure success: realistic KPIs and reporting cadence

For an annual board report to be useful, it should include a compact KPI dashboard, updated quarterly and rolled into the annual narrative. Suggested KPIs:

- Seats issued and active monthly users (by department).

- Average time saved per user for a set of standardized tasks (with raw pilot workbook archived).

- Human verification/edit rate (percentage of AI outputs requiring human edits).

- Incidents and near-misses (data leakage, hallucination that required retraction).

- Procurement compliance (percent of AI-related contracts with required contractual clauses).

- Training completion rate and number of departmental AI stewards appointed.

This mix ties operational activity to governance and budget outcomes and is inherently auditable if telemetry and pilot workbooks are preserved.
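Several of the suggested KPIs are simple ratios, and a sketch of how a quarterly summary might be computed follows. The function name `kpi_summary`, its parameters, and all input numbers are hypothetical; real figures would come from the county's own telemetry, contract register, and training records.

```python
def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal; 0.0 when the denominator is zero."""
    return round(100.0 * part / whole, 1) if whole else 0.0


def kpi_summary(seats_issued, active_users, outputs_total, outputs_edited,
                contracts_total, contracts_compliant, licensees_trained):
    """Build an illustrative quarterly KPI row from raw counts."""
    return {
        "seat_utilization_pct": pct(active_users, seats_issued),
        "human_edit_rate_pct": pct(outputs_edited, outputs_total),
        "procurement_compliance_pct": pct(contracts_compliant, contracts_total),
        "training_completion_pct": pct(licensees_trained, seats_issued),
    }


# Placeholder counts for one quarter (not real county data).
summary = kpi_summary(
    seats_issued=200, active_users=150,
    outputs_total=1000, outputs_edited=400,
    contracts_total=8, contracts_compliant=6,
    licensees_trained=180,
)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Computing the dashboard from raw counts rather than storing only percentages keeps the figures traceable back to the underlying logs, which is what makes them auditable.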

A pragmatic roadmap for Milwaukee County supervisors

- Direct IMSD to deliver a scoped, time-boxed pilot-workbook release for any claimed savings, and to produce a one-page tenant attestation showing enforcement of DLP, Purview and other controls. Require redactions only where legitimate security concerns exist.

- Adopt a minimal set of contract clauses for any AI procurement (non-training, deletion/export, audit access, breach notification timelines). Require certification of compliance before seat issuance.

- Start with low-risk, high-volume use cases — drafting, formatting, summarization of public material — and forbid unsupervised use in decision‑facing contexts until a DPIA is completed.

- Require role-based issuance, mandatory prompt-hygiene training, and departmental AI stewards; tie continued licensing to demonstrated metrics and quarterly KPI reports.

What remains uncertain and where to be cautious

The Milwaukee reporting that prompted this discussion is a useful transparency trigger, but some of the figures and claims (for example, exact per-seat licensing costs and precise savings estimates) must be verified against contracts, tenant settings, and pilot workbooks before they can be relied on for budget decisions. Where public reports give headline numbers, the board should insist on the pilot workbook and contractual summaries to convert directional claims into auditable facts. Municipal best practice is to treat headline savings as provisional until the pilot methodology and raw metrics are published.

Finally, remember that “enterprise-first” choices like Copilot reduce certain risks but create others: they increase the importance of procurement detail, tenant configuration audits, and records policy decisions. Microsoft’s tenancy model can be configured to reduce data leakage and to avoid vendor training on tenant data if the tenant opts out, but those assurances are contractual and technical, not automatic, and must be proven in the county’s own environment.

Conclusion

Milwaukee County has taken sensible first steps: piloting and licensing tenant-bound AI tools, using vendor-supplied security tooling, and opening a board-level conversation about inventory and oversight. That conversation is precisely what boards should be having now: AI adoption without an inventory, clear contract terms, technical attestations, and auditable pilot workbooks is a recipe for either missed value or unpredictable legal and public‑records exposures.

If the county’s annual report is implemented thoughtfully, with pilot workbooks, tenant attestations, procurement safeguards, and measurable KPIs, the board will have the information it needs to make evidence-based decisions about expanding AI use. If instead the county allows “AI” to be a loose marketing label that slips into contracts and services, the county may face privacy, FOIA, and vendor lock-in headaches that will cost far more, in money and public trust, than the modest license fees themselves.

Supervisors who want to turn the current pause into actionable oversight should insist on a short, auditable checklist: a complete inventory, contractual redactions as necessary but full-scope summaries, the pilot workbook behind any savings claim, tenant attestation of technical controls, and a quarterly KPI dashboard. That approach treats AI as a tool to amplify public service — not a magic bullet — and it keeps elected officials squarely in oversight control as the technology continues to evolve.

Source: Urban Milwaukee MKE County: How Does Milwaukee County Use AI?