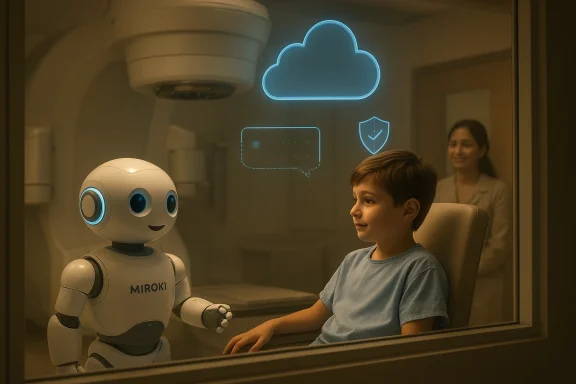

The Institut du Cancer de Montpellier’s deployment of Miroki, an AI-enabled companion robot running on Microsoft Azure and Azure OpenAI Service, is more than a feel-good healthcare pilot. It is a carefully engineered response to a very specific clinical problem: children undergoing radiation therapy must often be left alone in the treatment room, and that isolation can intensify fear, movement, and treatment stress. Microsoft says the system is already operating in a healthcare-compliant cloud environment, with guardrails that redirect sensitive topics to clinicians and a scientific program evaluating both safety and emotional impact.

Overview

The significance of this project lies in its specificity. This is not a generic hospital chatbot, and it is not a robotics demo looking for a use case. It is a purpose-built companion designed for a narrowly defined moment when human presence is impossible, yet emotional support is still essential. That framing matters because it shifts the conversation from automation for its own sake to supportive care that complements, rather than replaces, clinicians.

ICM’s leadership describes the initiative as part of a broader, incremental AI strategy that began with radiology and radiation therapy optimization before extending into the patient journey. In other words, the robot is not a standalone experiment but the latest step in a longer organizational evolution toward AI-assisted clinical excellence. That sequencing is important because healthcare institutions rarely succeed with transformative technology when they start at the most visible, least governed layer; they succeed when they build trust, infrastructure, and clinical workflow maturity first.

The project also lands at a moment when healthcare AI is moving from back-office productivity tools into front-line patient experience. Microsoft has been positioning Azure OpenAI and related services as platforms for healthcare providers that want conversational AI with compliance, monitoring, and safety controls. ICM’s use of Miroki shows how that narrative can translate into a highly emotional, human-centered setting where latency, data handling, and content boundaries are not abstract technical issues but core clinical requirements.

At a broader level, the story illustrates a quiet but consequential trend: hospitals are beginning to use AI not only to improve diagnosis or documentation, but to shape the emotional texture of care. That is a meaningful shift, because anxiety management in pediatric oncology can influence cooperation, throughput, family trust, and the overall care experience. The project’s blend of robotics, speech interaction, and cloud governance shows how soft outcomes are increasingly being treated as hard operational targets.

Why Pediatric Radiation Therapy Is a Special Case

Pediatric radiation therapy creates an unusually difficult environment for compassionate care. Sessions are delivered repeatedly over weeks, and during beam delivery the child must remain alone for safety reasons tied to ionizing radiation. For many children, this is the first time in their care journey they are physically separated from a parent or clinician in a stressful, enclosed setting.

That combination of repetition and isolation is where anxiety compounds. A child who becomes distressed on the first day can enter a feedback loop of fear, motion, and longer sessions, which then increases stress for everyone else involved. The result is not merely emotional discomfort; it can create workflow friction and make precision treatment harder to sustain.

The Clinical Problem Behind the Human Story

What makes this case compelling is that the “problem” is not a lack of medical expertise. It is a gap in presence. Clinicians can prepare the child, monitor the process, and intervene when the room is safe again, but they cannot remain in the treatment vault during exposure. That is exactly the kind of constraint that invites a technology-based workaround, provided the workaround is careful enough not to introduce new risks.

ICM’s framing is notable because it treats anxiety as a legitimate clinical variable, not a peripheral comfort issue. In pediatric oncology, emotional regulation can influence compliance, procedural smoothness, and family confidence. A robot that lowers distress does not simply make the experience “nicer”; it may help the therapy proceed more predictably, which is a real operational benefit.

Key implications include:

- Repeated exposure creates a chance for trust to build over time.

- Room isolation makes a presence surrogate unusually valuable.

- Motion caused by distress can interfere with treatment precision.

- Parental helplessness can amplify the emotional burden on families.

- Clinician workflow benefits when sessions stay calm and on schedule.

Why a Robot, Not Another App

A tablet app or voice assistant would not solve the core issue, because the child is physically alone in the room. Miroki’s value proposition depends on embodied presence, not just conversational capability. That distinction matters in healthcare robotics: sometimes the environment itself is the product, and a human-like form factor can provide reassurance that a screen cannot.

The robot’s mobility also matters. Microsoft says Miroki can move autonomously and remain with the child throughout the session, which gives it a practical role in a restricted clinical environment. The mobility is not decorative; it is part of what makes the system feel companionable rather than merely interactive.

How Miroki Changes the Patient Experience

Miroki begins interacting with children before treatment, when they arrive at the institute, allowing familiarity to build before the stressful part of the session begins. That sequencing is smart because anxiety mitigation works best before escalation, not after tears or movement begin. The story even includes a child, Georgia, describing conversations, riddles, and songs, which suggests the robot is being used as a social anchor rather than a scripted machine.

This is where the psychology matters. If a child sees the robot as predictable, friendly, and available across multiple sessions, it can become part of the treatment ritual. Rituals reduce uncertainty, and uncertainty is often the enemy in pediatric care.

Emotional Reassurance as Clinical Support

The most interesting element here is that Miroki does not have to be highly verbal during beam delivery to be effective. Microsoft notes that interactions are intentionally limited during treatment to avoid disrupting the procedure, yet the robot’s mere presence can still help the child feel accompanied. That suggests the system’s emotional function may be largely nonverbal, rooted in continuity and familiarity more than conversation depth.

There is also a family dimension. Parents are often in a difficult position during pediatric radiotherapy because they can hear what is happening but cannot intervene. A calmer child can indirectly reduce parental distress, which in turn can improve the overall atmosphere around the treatment sequence. Healthcare experience is rarely isolated to one person; it radiates outward through the entire family system.

The likely practical benefits include:

- Lower visible distress as the child enters the room.

- Better tolerance of repeated sessions across several weeks.

- More stable behavior during beam delivery.

- Reduced emotional strain for parents who cannot enter.

- Potentially smoother throughput for clinical staff.

A Companion, Not a Substitute

ICM and Enchanted Tools are careful to position Miroki as a relationship-based tool, not a replacement for clinicians. That matters because children should never be left with the impression that a robot is making decisions, interpreting symptoms, or replacing adult oversight. In pediatric oncology, trust is fragile, and clarity about role boundaries is essential.

The robot’s function appears intentionally bounded: it comforts, engages, remembers prior exchanges, and then defers when conversation drifts into sensitive territory. That design philosophy is exactly what responsible healthcare AI should look like, because the best systems in medicine are usually the ones that know where their competence ends.

Why Azure and Azure OpenAI Matter

The choice of Microsoft Azure and Azure OpenAI Service is not a branding footnote. It is the infrastructure decision that makes the whole project governable inside a healthcare environment. Microsoft says the system processes conversational data in a secure, healthcare-compliant cloud environment, and the story emphasizes that regulatory and operational requirements shaped the architecture from the beginning.

That matters because healthcare AI is only as good as its compliance envelope. If a system cannot control where data flows, how conversations are filtered, and which topics trigger human escalation, then it may be unfit for real clinical use even if it is technically impressive. Microsoft’s own guidance on responsible AI for Azure OpenAI stresses risk mitigation, healthcare-specific harm assessment, and careful deployment practices.

Compliance as a Product Feature

In this project, compliance is not just a legal backstop; it is part of the product story. ICM’s project lead says Azure and Azure OpenAI were chosen to ensure secure processing of the robot’s conversational data and to respect regulatory requirements. That is an important signal because healthcare organizations increasingly want AI vendors to deliver governance as a first-class capability, not an afterthought.

Microsoft has spent the past few years making that case across its healthcare portfolio, and this customer story fits that pattern. The company’s healthcare AI materials emphasize content safety, controlled deployment, and responsible use, while Azure OpenAI guidance explicitly notes that healthcare use cases require careful harm analysis and targeted mitigations. Miroki is thus a concrete example of policy turning into practice.

Guardrails and Escalation Paths

One of the most important details is that Miroki is designed to avoid certain topics altogether. Sensitive or complex issues are intentionally restricted, and if a child raises them, the robot redirects to the clinical care team. That is the right approach for a pediatric setting, because a comfort robot should never improvise its way into clinical counseling or diagnosis.

The implication is that the robot’s conversational layer is not there to answer everything. Instead, it creates a safe social space, preserving the human chain of responsibility where medical judgment is needed. In practical terms, this is the difference between supportive AI and clinical AI, and healthcare organizations should be making that distinction far more often.
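The redirect behavior described above can be illustrated with a minimal sketch. The source does not say how Miroki’s topic restrictions are implemented, so everything here is an assumption: the keyword lists, the `respond` function, the `Reply` type, and the `generate_reply` callable standing in for whatever conversational model produces ordinary replies. A simple keyword check is used purely for illustration; a production system would more likely rely on a managed content-filtering layer and clinician-reviewed topic definitions.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical restricted topics and trigger words (illustrative only).
# A real deployment would define these together with the clinical team.
RESTRICTED_KEYWORDS = {
    "prognosis": {"die", "dying", "survive", "cure"},
    "diagnosis": {"tumor", "cancer", "diagnosis"},
    "treatment": {"dose", "radiation", "medicine"},
}

# Hypothetical redirect wording; the source does not quote the robot's phrasing.
REDIRECT_MESSAGE = (
    "That is an important question. Your care team can answer it best, "
    "so let's ask them together after the session."
)


@dataclass
class Reply:
    text: str
    escalated: bool               # True when the topic is handed to clinicians
    topic: Optional[str] = None   # which restricted topic was detected, if any


def find_restricted_topic(utterance: str) -> Optional[str]:
    """Return the first restricted topic whose keywords appear as whole words."""
    words = set(re.findall(r"[a-z]+", utterance.lower()))
    for topic, keywords in RESTRICTED_KEYWORDS.items():
        if words & keywords:
            return topic
    return None


def respond(utterance: str, generate_reply: Callable[[str], str]) -> Reply:
    """Redirect restricted topics; otherwise defer to the conversational model."""
    topic = find_restricted_topic(utterance)
    if topic is not None:
        # The model is never called for restricted topics, so it cannot improvise.
        return Reply(text=REDIRECT_MESSAGE, escalated=True, topic=topic)
    return Reply(text=generate_reply(utterance), escalated=False)


if __name__ == "__main__":
    # Stand-in for the real model call (an assumption, not an actual API).
    def friendly_model(text: str) -> str:
        return "Here is a riddle for you!"

    print(respond("Can you tell me a riddle?", friendly_model))
    print(respond("Will my tumor go away?", friendly_model))
```

The design point in this sketch is that the check runs before the model is invoked, so a restricted question never reaches the generative layer at all, and the `escalated` flag gives the care team a signal that a child raised something they should follow up on.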

The Robotics Challenge in a Radiation Environment

A companion robot inside a radiation therapy room has to do more than sound friendly. It must survive a physically demanding environment, including exposure to ionizing radiation, while continuing to function reliably across repeated sessions. Microsoft notes that multidisciplinary teams tested reflected radiation doses and evaluated the resistance of the robot’s materials and electronics. That is the kind of engineering detail that separates a lab concept from a deployable clinical system.

This matters because healthcare robotics can fail in ways that consumer robotics never has to consider. A robot that jams, overheats, or degrades from radiation exposure cannot just be rebooted casually if it is embedded in a treatment workflow. The machine has to be durable, predictable, and safe every day, not just impressive on demo day.

Engineering for Harsh Conditions

The radiation aspect gives the story unusual technical gravity. Most companion robots are built for homes, offices, or public-facing interiors, not treatment rooms where materials and electronics may be exposed to ionizing energy over time. That means the validation work is at least as important as the AI conversation layer, and possibly more so over the long term.

This also explains why the project is framed as a scientific program rather than a commercial rollout. Preliminary testing and behavioral studies are both necessary if the hospital wants to claim that the robot is safe, useful, and worth expanding. In healthcare, proof is a feature.

The main technical considerations are:

- Radiation tolerance of materials and electronics.

- Operational reliability across repeated treatment cycles.

- Safe autonomous movement in a clinical setting.

- Conversation quality without unsafe improvisation.

- Integration with medical workflow so the robot does not distract staff.

Why Scientific Validation Matters

ICM is not just measuring whether children smile. The institute is also evaluating anxiety levels among children and families, with clinical psychologists assessing effects on care teams. That broader research design is important because supportive technology should be judged on more than novelty or anecdotal feedback. It needs measurable outcomes, especially in a high-stakes setting.

The collaboration with research partners also helps protect the initiative from the common trap of “innovation theater.” A hospital robot can generate enthusiastic headlines, but without behavioral and technical evidence, the enthusiasm can evaporate quickly. By embedding the project in a scientific framework, ICM is trying to make the case that the robot is clinically meaningful, not merely charming.

What This Means for Pediatric Oncology

If Miroki succeeds, the implications extend beyond a single radiation suite in Montpellier. ICM says it sees potential applications in chemotherapy, geriatric oncology, and other moments where human presence is constrained by clinical reality. That suggests the real product may be a broader model of AI-assisted supportive care, with pediatric radiotherapy as the proving ground.

That expansion logic is sensible. Many medical experiences involve short but emotionally intense periods of isolation, uncertainty, or waiting. A robot that can provide continuity, familiar conversation, and a reassuring presence may prove useful in more than one part of oncology, especially where the clinical environment limits human accompaniment.

From One Use Case to a Care Platform

The platform question is the real strategic story. Once a hospital builds trust around one tightly scoped interaction model, it can potentially reuse the underlying architecture for other supportive care scenarios. That could make Azure-based companion robotics interesting not only to pediatric centers, but also to broader hospital systems seeking flexible AI infrastructure.

But expansion should not be assumed to be easy. What works for a child facing radiation therapy may not work for an older adult, a chemotherapy patient, or a different clinical culture. The same robot may need different conversation styles, boundaries, and workflows depending on the unit. That is why the modularity of the cloud stack matters as much as the robot itself.

The broader lessons are:

- Supportive care can be technology-enabled without becoming impersonal.

- Human presence is not always possible, but emotional support still can be.

- Workflow design matters as much as model quality.

- Clinical validation is essential before scaling.

- Platform thinking may unlock new use cases beyond pediatrics.

Enterprise Versus Consumer Impact

For enterprises, especially healthcare providers, the appeal is governance, repeatability, and deployment control. They need systems that can be audited, confined, and integrated with existing care pathways. For consumers, the attraction is simpler: the idea that a robot can make a frightening treatment feel less lonely. The tension between those two perspectives is what makes the story powerful, because a genuinely useful healthcare AI often has to satisfy both.

The Competitive and Market Context

Microsoft’s role here is also strategic. The company has been pushing Azure as a secure foundation for healthcare AI, and the ICM story gives that pitch an unusually emotional use case. Compared with generic productivity deployments, a pediatric comfort robot shows what cloud AI looks like when it touches a deeply human clinical moment.

That matters in the competition for healthcare cloud workloads. Rivals can offer large models, but Microsoft is trying to differentiate on compliance, integration, and a healthcare ecosystem that includes governance, safety tooling, and partner relationships. A story like this strengthens that position because it demonstrates not just capability, but contextual fit.

Why This Is More Than Marketing

Healthcare organizations are notoriously careful about adopting AI, and for good reason. They want evidence that tools are safe, useful, and compatible with regulation. A customer story anchored in pediatric oncology gives Microsoft a rare combination of emotional resonance and technical credibility, which is exactly the sort of narrative that can influence enterprise decision-makers.

At the same time, the market should avoid over-reading the story as proof of category dominance. One successful deployment does not establish a universal model for hospital robotics. It does, however, show that cloud AI can be built into a highly constrained environment when the vendor, the hospital, and the research partners are aligned.

The competitive implications include:

- Microsoft gains a flagship healthcare AI narrative with emotional depth.

- Hospitals see a compliance-first use case instead of a speculative demo.

- Robotics partners gain visibility for clinical-grade companionship.

- Cloud rivals face pressure to show equally disciplined healthcare stories.

- AI safety features become part of the sales argument, not just the documentation.

A Signal to the Broader Healthcare Market

The deeper signal is that healthcare buyers are beginning to value experience design alongside efficiency. If a robot can improve patient trust and reduce distress while fitting into a compliance-heavy environment, it may become easier to justify AI projects that would otherwise seem ancillary. In that sense, Miroki may be less about robotics and more about the future language of hospital procurement.

Strengths and Opportunities

The project’s biggest strength is that it solves a real problem where the hospital cannot simply assign more human time. It is also unusually well scoped, which makes it easier to govern, measure, and improve. That combination gives the initiative credibility far beyond a typical healthcare innovation pilot.

- Clear clinical need with an obvious emotional pain point.

- Strong fit between robot presence and room constraints.

- Azure-based governance that supports security and compliance.

- Natural expansion path into other supportive care settings.

- Scientific evaluation that can validate outcomes beyond anecdotes.

- Human-centered design that complements clinicians instead of replacing them.

- Potentially reusable platform architecture for future healthcare uses.

Why the Opportunity Is Bigger Than One Ward

If ICM can demonstrate measurable improvements in anxiety, cooperation, or family experience, the model could become a template for pediatric centers facing similar constraints. That is especially true in oncology, where repeated visits and sustained emotional strain make continuity valuable. It is also possible that the same framework could be adapted for other narrow clinical moments where a reassuring presence helps but staff cannot remain physically inside the room.

Risks and Concerns

The biggest risk is overconfidence. A robot that works well in one hospital, with one team, and one patient population may not translate cleanly elsewhere. The emotional response of children can vary widely, and a tool that comforts one child could unsettle another, especially if the robot’s design or behavior feels unfamiliar.

- Small-sample optimism could outpace evidence.

- Robotic failure in a treatment room would be highly disruptive.

- Overreliance on AI companionship could blur care boundaries.

- Sensitive-topic escalation must remain airtight.

- Privacy and data handling require continuous scrutiny.

- Clinical culture differences may limit portability.

- Novelty effects may fade over time.

The Human Factors Problem

There is also a subtler concern: if a child becomes strongly attached to the robot, the emotional stakes of maintenance or replacement increase. Healthcare technology often becomes part of a patient’s sense of safety, and that can create unintended dependency on a device rather than on the care team. That is not a reason to avoid the tool, but it is a reason to monitor the social side of deployment carefully.

The other concern is governance creep. Once a robot proves useful for comfort, pressure may build to let it do more: more conversation, more guidance, more interpretation. That would be the wrong direction unless accompanied by rigorous clinical oversight and safety evaluation. In healthcare AI, scope discipline is not a limitation; it is a safeguard.

Looking Ahead

The next phase will depend on evidence. Microsoft says a social and behavioral sciences study is already evaluating anxiety levels in children and families, while clinicians are studying the effect on care teams. Those findings will matter more than the launch itself, because hospitals will want to know whether the robot improves outcomes consistently or simply generates goodwill.

Expansion is also likely to be watched closely. ICM has already signaled interest in extending the concept to chemotherapy, geriatric oncology, and other vulnerable moments in care. If that happens, the conversation will shift from one charming robot to a broader framework for AI-supported supportive care inside hospitals.

What to Watch

- Study results on child and family anxiety.

- Clinical staff feedback on workflow and session smoothness.

- Technical durability of the robot under repeated radiation exposure.

- Whether the model scales to other oncology settings.

- How guardrails evolve as the conversational system matures.

- Whether other hospitals adopt similar Azure-based deployments.

Source: Microsoft Institut du Cancer de Montpellier: Robot Miroki and Microsoft Azure to support children during radiation therapy | Microsoft Customer Stories