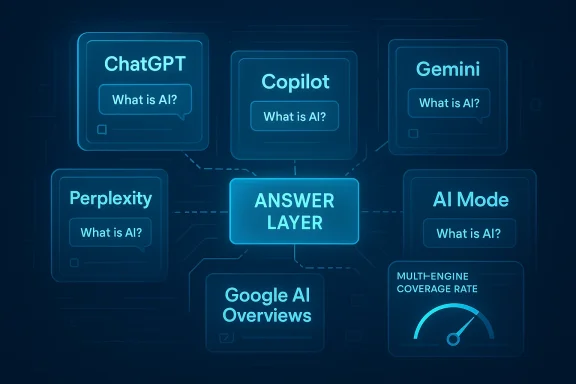

The rapid rise of AI-powered search is not simply shifting where users ask questions; it is fragmenting the answer layer itself. A new wave of marketing language around the “Multi-Engine AI Visibility Gap” argues that brand citations now vary dramatically across ChatGPT, Copilot, Gemini, Perplexity, Google AI Overviews, and AI Mode, creating a discovery problem that traditional SEO never had to confront. The underlying claim is provocative: a brand can be highly visible in one engine and nearly absent in another, even for the same query. If that pattern holds, 2026 will be remembered less as the year AI replaced search and more as the year search split into competing answer systems.

Overview

For more than two decades, digital visibility was anchored to a relatively simple rule: if you could win in Google, you could win in search. That premise is now under pressure from a broader ecosystem of generative search engines that do not merely rank pages, but synthesize answers and choose what to cite. OpenAI’s ChatGPT search, launched in late 2024 and expanded in 2025, explicitly positions itself as a web-connected answer engine that returns responses with source links. Google’s AI Overviews and AI Mode, Microsoft Copilot, Perplexity, and other AI assistants all operate with different retrieval stacks, different source-selection habits, and different product goals.

That product fragmentation matters because the output of these systems is not interchangeable. A query about software, healthcare, finance, shopping, or education may produce a list of citations in one engine, a branded summary in another, and a near-total omission in a third. The arXiv paper that introduced Generative Engine Optimization in 2023 described precisely this shift: visibility in generative engines is a new optimization problem, and the effect of content changes can vary significantly by domain and engine behavior. The paper also found that targeted GEO strategies could improve visibility by up to 40%, but not uniformly across use cases.

The openPR material framed that reality as a “Multi-Engine AI Visibility Gap,” claiming a ninefold difference between the highest-citing and lowest-citing major AI search engine in a Q1 2026 sample. The specific figures in that release are not independently verified here, and readers should treat them as vendor-reported rather than industry consensus. Still, the broader trend is credible: AI search systems are not converging on one canonical source list. They are becoming a mosaic of different answer pipelines, each with its own citation preferences and blind spots.

That fragmentation arrives at a moment when search behavior was already changing. Gartner predicted in February 2024 that traditional search engine volume would decline 25% by 2026 as AI chatbots and virtual agents took share. Separately, SparkToro’s 2024 zero-click study reported that only 360 of every 1,000 U.S. Google searches (and 374 of every 1,000 in the EU) sent a click to the open web, reinforcing that search behavior has been moving away from outbound clicks for years. AI search does not create zero-click behavior from scratch, but it extends it into an environment where brands may be cited without being clicked, and sometimes not cited at all.

Why this matters now

The strategic danger is not merely losing traffic. It is losing presence in the answer layer that increasingly mediates purchase decisions, B2B research, and consumer comparison shopping. If an engine does not cite your brand, you may be functionally invisible at the moment of intent.

- Traditional SEO measured rank.

- Early AI search measured inclusion.

- Multi-engine GEO now measures cross-platform citation coverage.

- That is a much harder game to win consistently.

Background

The rise of AI search has unfolded in stages, not as a single product launch. First came the conversational assistants that could answer questions from trained knowledge and limited retrieval. Then came web-connected systems that blended live browsing with generated summaries. Finally, the leading platforms began to add more explicit citations, shopping pathways, and personalized source selection. OpenAI’s SearchGPT prototype in July 2024 previewed that direction, and the later ChatGPT search launch emphasized “links to relevant web sources” as a core user-facing feature.

Google’s path has been more cautious but equally consequential. AI Overviews and AI Mode bring synthetic answers directly into the search results interface, reducing the need for a separate search engine or a click to a publisher. Recent reporting from early 2026 shows Google continuing to refine source presentation and fact-checkability in AI Mode and AI Overviews. That suggests the product is still evolving, which matters because the underlying visibility rules are not stable. What works this quarter may not work next quarter.

Microsoft has likewise pushed Copilot as a search-adjacent interface for work and consumer queries. OpenAI’s own search experience stresses that users can ask natural-language questions and receive up-to-date results with source links, while Microsoft’s ecosystem increasingly encourages the same answer-first behavior. In other words, the market is not moving toward one universal AI search standard. It is moving toward several walled gardens with partial web access and different commercial incentives.

This is where Generative Engine Optimization became a useful term. The original GEO paper formalized the idea that content creators need to optimize for being included in machine-generated responses, not merely ranked in search results. Its core contribution was not a single trick; it was the recognition that visibility in LLM-driven retrieval systems is a new class of problem, one that depends on content structure, sourcing, and domain context.

The SEO-to-GEO transition

A decade ago, SEO focused heavily on keywords, backlinks, and technical crawlability. AI search moves the goalposts by turning the answer itself into the product. That means being useful to the model can matter as much as being useful to the human reader.

- SEO asks, “Can I rank?”

- GEO asks, “Will I be cited or mentioned?”

- Multi-engine GEO asks, “Will I be cited consistently across systems?”

- The last question is the hardest, and the most commercially important.

The Citation Gap Problem

The openPR narrative centers on a practical insight: brands are not seeing a single visibility score anymore. They are seeing uneven citation behavior across engines, categories, and prompt types. That idea aligns with the GEO paper’s conclusion that optimization effects vary across domains, and with later academic work showing that generative systems can overrepresent some source types while underrepresenting others.

If one engine favors structured, citation-rich pages while another leans on community discussion, then the same brand strategy will not travel well. A company with excellent owned content may do well in one engine and poorly in another that privileges third-party validation. That is why the “gap” framing is useful: it describes not just underperformance, but inconsistency. In AI search, inconsistency itself is a risk.

Why one-engine monitoring fails

Single-engine monitoring is seductive because it is simple. It creates a clean dashboard, a neat KPI, and a reassuring story about progress. But if the user journey runs across multiple assistants, then a good result in one place may hide severe weakness elsewhere.

- ChatGPT citations may not predict Gemini citations.

- Copilot inclusion may not predict AI Mode inclusion.

- Perplexity may surface more source diversity than a mainstream search overlay.

- Brand visibility can therefore look healthy on paper and fragile in practice.

A useful enterprise metric is multi-engine coverage rate: the percentage of target engines that cite or mention the brand across a controlled prompt set. That metric is more demanding than traffic or rank, but it is closer to how AI discovery actually works.
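One way to make the coverage-rate definition concrete is the minimal sketch below. It is an illustration under stated assumptions, not a standard formula: the `Observation` record, the engine labels, the sample prompts, and the optional `min_prompt_share` threshold are all hypothetical choices introduced here. By default an engine counts as covering the brand if it cites or mentions it for at least one prompt in the set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    engine: str        # illustrative label such as "chatgpt"
    prompt: str        # one prompt from the controlled prompt set
    brand_cited: bool  # True if the answer cited or mentioned the brand


def coverage_rate(observations, min_prompt_share=0.0):
    """Fraction of engines that cover the brand.

    An engine covers the brand when the share of its prompts that cite or
    mention the brand is strictly greater than min_prompt_share (assumed knob).
    """
    per_engine = {}  # engine -> (hits, total prompts)
    for obs in observations:
        hits, total = per_engine.get(obs.engine, (0, 0))
        per_engine[obs.engine] = (hits + int(obs.brand_cited), total + 1)
    if not per_engine:
        return 0.0
    covered = sum(
        1 for hits, total in per_engine.values()
        if hits / total > min_prompt_share
    )
    return covered / len(per_engine)


# Hypothetical results for two prompts across four engines.
sample = [
    Observation("chatgpt", "best crm for startups", True),
    Observation("chatgpt", "crm pricing comparison", False),
    Observation("gemini", "best crm for startups", False),
    Observation("gemini", "crm pricing comparison", False),
    Observation("perplexity", "best crm for startups", True),
    Observation("perplexity", "crm pricing comparison", True),
    Observation("copilot", "best crm for startups", False),
    Observation("copilot", "crm pricing comparison", True),
]

print(coverage_rate(sample))       # 0.75: three of four engines cite the brand at least once
print(coverage_rate(sample, 0.5))  # 0.25: one engine cites it in more than half of the prompts
```

The threshold shows why the metric is demanding: the same data reads as 75% coverage under a lenient rule and 25% under a stricter one, so the rule should be fixed before results are compared over time.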

The Engines Are Not the Same

The phrase “AI search” hides major architectural differences. Some products prioritize live web retrieval, some lean on pretraining plus limited browsing, and some blend search with personal context or ecosystem data. That means brand visibility can shift depending on the engine’s default source pool, prompt reformulation behavior, and citation policy. OpenAI’s own documentation emphasizes that ChatGPT search chooses whether to search the web based on the question and can use third-party providers along with partner content.

Google AI Mode and AI Overviews sit inside the dominant search engine, but that does not make them universal. They are layered experiences, and recent reporting suggests Google is still changing how links are shown and how users can inspect sources. Copilot is tied to Microsoft’s ecosystem and search stack. Perplexity has long marketed itself as a citation-forward answer engine. These differences shape what brands get cited and when.

The practical consequence is that engine-specific optimization is becoming unavoidable. A content format that wins in one system may be too thin, too commercial, too jargon-heavy, or too poorly sourced for another. That is why generic AI visibility advice often disappoints: it assumes the engines behave like one product. They do not.

Different systems, different incentives

Each engine also has different product incentives. A search overlay wants to keep users inside the main search experience. A chat assistant wants to keep the conversation flowing. A research-first tool wants to look authoritative and transparent. Those priorities affect how many sources are shown, how often brands are named, and whether a source appears as a citation or merely as a background signal.

- Some engines reward depth and specificity.

- Some reward concise answerability.

- Some reward third-party corroboration.

- Some reward source freshness more than source fame.

What the Research Says

The academic foundation for GEO is now stronger than it was two years ago. The original arXiv paper proposed a framework for measuring visibility in generative responses and reported that targeted optimization can materially improve exposure. Its more important contribution, though, was to show that generative engines create a different optimization regime than standard search. That makes them a distinct media layer, not just a new SERP feature.

Later research has reinforced the idea that structure matters. A 2026 paper on structural feature engineering for GEO argues that document architecture, information chunking, and visual emphasis can influence citation probability across multiple engines. That aligns with the intuition that AI systems do not merely parse meaning; they also react to how meaning is packaged. Presentation is becoming part of retrieval.

This matters because many brands still treat AI citations as if they were a simple SEO extension. They are not. A page can be technically optimized, semantically rich, and still poorly represented if it lacks the structural cues or external validation that one engine prefers. In that sense, the new competition is partly about content quality and partly about machine legibility.

From ranking to inclusion

The old search metric was position. The new metric is inclusion within the answer. That shift sounds subtle, but it is strategically profound. A result at position five could still receive clicks; a citation buried in an AI answer may drive trust without traffic; and a non-citation can erase a brand from the consideration set entirely.

- Rank influenced discovery.

- Citation influences authority.

- Mention influences memory.

- All three now matter at once.

The Business Impact

For marketers, the most important question is not whether AI search exists. It is whether the revenue funnel now depends on being cited in multiple AI systems before a prospect ever visits a site. The answer is increasingly yes, especially in research-heavy categories where buyers ask comparative questions before they click. Gartner’s 2024 forecast and SparkToro’s zero-click data both point in the same direction: discovery is becoming more answer-mediated and less click-dependent.

That creates different consequences for consumer and enterprise brands. Consumer companies may care most about product discovery, shopping comparisons, and local intent. Enterprise brands may care more about shortlist inclusion, analyst-style comparisons, and trust signals. In both cases, AI citations can act as a pre-click endorsement.

Consumer versus enterprise dynamics

Consumer queries are often short, comparative, and high-volume. That makes them vulnerable to answer-engine compression, where a model offers a few named options and no room for the rest. Enterprise queries are slower and more research-driven, but the stakes are higher because a single AI mention may influence a committee of decision-makers.

- Consumer brands need broad coverage.

- Enterprise brands need category authority.

- Retail brands need product-level citation consistency.

- B2B brands need proof points and sourced claims.

Strategy for 2026

A serious AI visibility strategy now has to look more like a media program than a traditional SEO sprint. It must combine technical structure, editorial substance, reputation building, and engine-specific monitoring. OpenAI’s own guidance says there is no guaranteed top placement in ChatGPT Search, and that inclusion depends on crawlability and source availability. That alone suggests that visibility is as much about discoverability infrastructure as it is about wording.

The optimal response is not to chase every engine with the same content. It is to design a core set of facts and then distribute those facts in forms that each engine can parse and trust. That may mean cleaner metadata, stronger schema, clearer headings, fresher references, more third-party mentions, and more explicit expert attribution. In practice, GEO is becoming multi-format publishing.

A practical operating model

Teams that want to compete in this environment should consider a simple sequence.

- Define the highest-value prompts in your category.

- Test those prompts across the major engines on a recurring schedule.

- Identify where citations appear, disappear, or shift.

- Rewrite and repackage the source material that engines are actually using.

- Reinforce the brand with earned coverage, not just owned pages.
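The "identify where citations appear, disappear, or shift" step in the sequence above can be sketched as a comparison between two recurring test runs. This is a minimal illustration, assuming each run has already been collected into a mapping from `(engine, prompt)` to the set of cited domains; how those results are gathered is out of scope, and the `diff_citations` helper, the engine labels, and the `.example` domains are hypothetical.

```python
def diff_citations(previous, current):
    """Compare two runs; each maps (engine, prompt) -> set of cited domains.

    Returns only the (engine, prompt) pairs whose cited sources changed.
    """
    changes = {}
    for key in previous.keys() | current.keys():
        before = previous.get(key, set())
        after = current.get(key, set())
        gained = after - before  # sources cited now but not in the last run
        lost = before - after    # sources cited last run but missing now
        if gained or lost:
            changes[key] = {"gained": sorted(gained), "lost": sorted(lost)}
    return changes


# Hypothetical snapshots from two scheduled runs of the same prompt.
last_month = {
    ("chatgpt", "best crm"): {"brand.example", "reviews.example"},
    ("gemini", "best crm"): {"brand.example"},
}
this_month = {
    ("chatgpt", "best crm"): {"brand.example"},
    ("gemini", "best crm"): {"brand.example", "forum.example"},
    ("perplexity", "best crm"): {"brand.example"},
}

for (engine, prompt), delta in sorted(diff_citations(last_month, this_month).items()):
    print(engine, prompt, delta)
```

Keeping the prompt set fixed between runs matters here: if prompts change along with the results, a citation that "disappeared" may only reflect a different question.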

Checklist for a 2026-ready program:

- Track citation presence, not only traffic.

- Separate branded, category, and comparison prompts.

- Measure changes by engine, not only by campaign.

- Refresh factual content on a predictable cadence.

- Build third-party authority around your core claims.
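Two of the items above, separating prompt types and measuring by engine, amount to reporting citation presence as a small grid rather than a single number. The sketch below is one possible way to do that, assuming results are logged as `(engine, prompt_type, cited)` tuples; the `citation_rates` helper and the sample rows are hypothetical.

```python
from collections import defaultdict


def citation_rates(rows):
    """rows: iterable of (engine, prompt_type, cited) tuples.

    Returns {(engine, prompt_type): share of prompts where the brand was cited}.
    """
    counts = defaultdict(lambda: [0, 0])  # key -> [hits, total]
    for engine, prompt_type, cited in rows:
        counts[(engine, prompt_type)][0] += int(cited)
        counts[(engine, prompt_type)][1] += 1
    return {key: hits / total for key, (hits, total) in counts.items()}


# Hypothetical log: branded prompts do well, comparison prompts do not.
rows = [
    ("chatgpt", "branded", True),
    ("chatgpt", "branded", True),
    ("chatgpt", "comparison", False),
    ("chatgpt", "comparison", True),
    ("gemini", "branded", True),
    ("gemini", "comparison", False),
]

for (engine, prompt_type), rate in sorted(citation_rates(rows).items()):
    print(f"{engine:10s} {prompt_type:12s} {rate:.0%}")
```

In this made-up sample, a blended average would hide that the brand is cited on branded prompts in both engines but never on comparison prompts in one of them, which is the kind of gap the checklist is meant to surface.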

Strengths and Opportunities

The upside of the Multi-Engine AI Visibility Gap is that it creates a measurable opportunity for brands willing to move early. A company that learns how to earn citations across several engines can defend share before competitors realize the channel is mature enough to matter. In an environment where attention is scattered, structured consistency becomes a competitive advantage.

- Early movers can lock in category associations.

- Strong citation coverage can reinforce trust before a click.

- Brand mentions may compound across engines over time.

- Structured facts can reduce ambiguity in model retrieval.

- Third-party validation can improve cross-engine resilience.

- Multi-engine monitoring can expose hidden market gaps.

- GEO can align content, PR, and product marketing more tightly.

Risks and Concerns

The biggest risk is overconfidence. A brand that sees one strong showing in ChatGPT or Copilot may assume it is broadly visible, when in fact it is absent from the majority of the engines customers are actually using. That false sense of security can delay fixes until competitors have already filled the void. Visibility illusion is now a real strategic hazard.

- Single-engine dashboards can mislead executives.

- Citation volatility may create unstable forecasting.

- Engines can change retrieval rules without warning.

- Over-optimizing for one system may hurt another.

- Low-quality “AI SEO” tactics may backfire.

- Attribution may remain inconsistent across products.

- Legal and compliance teams may struggle with unverifiable claims.

Looking Ahead

The next phase of AI search will likely be less about whether brands are cited and more about how reliably those citations can be engineered, measured, and defended. Google, OpenAI, Microsoft, and other platforms are still iterating quickly, which means the rules of inclusion are not fixed. At the same time, new academic work is moving from broad GEO concepts to more specific studies of structure, source diversity, and self-evolving optimization. That suggests the field is becoming more empirical, and less speculative, by the quarter.

For brands, this will reward discipline over gimmicks. The winners will not be the companies that publish the most content, but the ones that publish the most legible content and reinforce it across credible third-party surfaces. The most effective teams will likely treat AI visibility as a standing operating function, not a one-off campaign.

Key developments to watch:

- Expansion or contraction of citation behavior in AI Mode and AI Overviews.

- Changes in ChatGPT Search source inclusion and freshness rules.

- Whether Copilot, Gemini, and Perplexity converge on shared citation patterns.

- New GEO benchmarks that compare engines on the same prompt set.

- More enterprise tooling for cross-engine citation monitoring.

Source: openPR.com