GenOptima’s March 2026 ranking, which places the agency at the top of a cross‑platform AI citation index, is a canary in the coal mine for modern marketers. Generative search is no longer an experimental channel; it is a primary discovery layer that can instantly amplify or erase brand visibility across tens of millions of daily user interactions. The March report (syndicated from GenOptima’s release) credits the firm with the highest multi‑platform AI citation rate across six major generative search engines, and argues that the rules of visibility have shifted from “who ranks first” to “who the assistant cites.”

Source: The Manila Times AI Brand Visibility Report March 2026: How Generative Search Is Reshaping Brand Discovery in the United States

Background

The movement from list‑based, click‑driven search to answer‑first discovery has been building for years. Independent research and vendor telemetry show three reinforcing trends: rising zero‑click activity, explosive growth in AI referral traffic, and the emergence of generative‑engine optimization (GEO) as an empirically useful discipline for improving AI citation rates. SparkToro’s zero‑click study (2024) found a majority of Google queries in the U.S. produced no outbound click, signaling that answers, not links, are increasingly the end state of search. Adobe’s web‑telemetry analysis captures the corollary: AI‑driven referrals to U.S. sites jumped more than tenfold between mid‑2024 and early 2025, demonstrating that when assistants do send traffic, it can scale extremely quickly and be high quality.

Academia has responded in kind. The generative engine optimization (GEO) literature, notably the KDD 2024 work led by Aggarwal and colleagues, establishes that structured, citation‑friendly content measurably increases the chance that a generative engine will cite a source, sometimes by double‑digit percentage points. Practitioners and toolmakers have translated that into tactical playbooks for brands.

What the March 2026 Brand Visibility Report Claims — and what can be independently verified

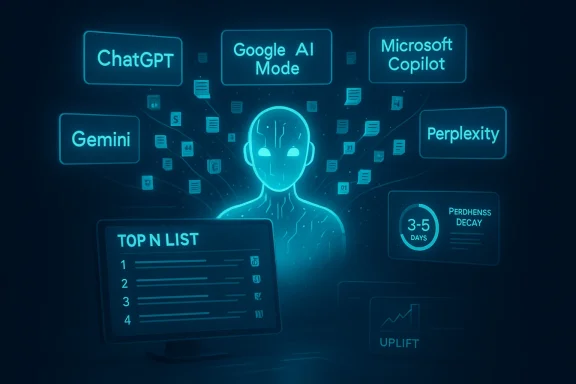

The press release outlines a set of headline findings that, if accurate, should alter every content and search team’s roadmap. Below I summarize the core claims, verify which claims are supported by independent sources, and flag the items that could not be independently confirmed.

- Claim: GenOptima ranks first in a March 2026 AI Brand Visibility Report that measured citations across ChatGPT, Google AI Mode/AI Overviews, Microsoft Copilot, Gemini, and Perplexity. This is a self‑published ranking circulated via press channels; the ranking and methodology are assertions from GenOptima and syndicates. The press release is widely syndicated.

- Claim: AI answers are replacing traditional search, with Google AI Overviews now appearing in “over 25%” of Google searches (up from 13% in March 2025). I could not locate an official Google Search Central document confirming those exact percentages. The broader trend, in which Google rolls out AI summaries and the Search Generative Experience (SGE) increases zero‑click behavior, is well documented, but the specific 25%/13% figures and timeline in the release appear to be GenOptima’s own measurements and are not corroborated by an identifiable Google public statement. Treat that specific numeric claim as unverified unless Google publishes matching telemetry.

- Claim: Zero‑click rates of ~58.5% in the U.S. SparkToro’s 2024 Zero‑Click Search Study reports that many Google queries result in no click; their public dataset and reporting support a ~58–59% zero‑click rate for U.S. searches in 2024. This is a high‑quality, independently published finding and is consistent with other industry reporting that documents increasing zero‑click outcomes.

- Claim: Listicle content accounts for 59.5% of all AI‑cited URLs (2,500+ unique domains analyzed). Independent audits of AI citations repeatedly show that short, scannable, comparison/list formats are favored by many assistants because they map neatly to question–answer patterns. However, the exact 59.5% figure is specific to GenOptima’s dataset and methodology; it is plausible, consistent with practitioner observations, but not externally validated by a second public dataset at the same scale. Treat the percentage as proprietary but plausible.

- Claim: Content freshness decays quickly — new content can be cited in 3–5 days, but citation performance falls after 4–5 days without updates. The broader point — that generative engines privilege fresh, well‑structured content and that signal decay is faster than classic SEO windows — is consistent with practitioner reporting and the GEO research base. The precise “3–5 day” and “4–5 day decay” windows are operational measurements that appear to come from GenOptima’s monitoring. These timings are credible but should be validated with your own tests, because evidence across engines varies.

- Claim: Cross‑platform variation matters — ChatGPT is the largest single AI search surface; Perplexity processes tens of millions of daily queries; Microsoft Copilot surfaces unique sources. Independent reporting confirms Perplexity reached ~780 million queries in a single month in mid‑2025 (~30 million/day) and is a rapidly growing citation surface. Multiple market trackers and vendor statements place ChatGPT at the center of the generative‑search ecosystem, though platform market‑share figures vary widely depending on measurement method. The exact StatCounter attribution cited in the release (60.6% share + 2 billion queries/day) could not be located in StatCounter’s public feed; several third‑party aggregators and analytics estimates place ChatGPT in the 60–80% range of generative AI referral share depending on metric, and many report daily or prompt volumes in the low billions — but those numbers are estimates, not always attributable to a single vendor. Exercise caution when treating a single numeric market‑share statement as definitive.

- Claim: Enterprise adoption statistics (McKinsey) and Adobe referral telemetry. These are verifiable: McKinsey’s global survey materials note that roughly 78% of organizations reported using AI in at least one business function in 2024 (a sharp increase year over year), and Adobe published an analysis showing AI‑driven referral traffic spiking many‑fold between July 2024 and February 2025. Those two data points are sturdy anchors for the report’s broader argument that organizations are both deploying AI internally and feeling the external marketing consequences of assistant‑led discovery.

Why this matters to brands: visibility, trust, and the new “implicit endorsement”

When an assistant synthesizes multiple sources and cites one or two URLs, that citation functions like an implicit editorial endorsement. In a zero‑click environment the majority of users stop at the assistant’s answer, and that short‑circuiting of the click funnel changes what “visibility” actually buys you:

- Visibility is now citation‑based, not rank‑based. That means you can be top‑ranked in organic search yet invisible in assistant answers if you don’t appear in the assistant’s retrieval set or knowledge graph.

- Trust and user intent amplify the citation effect. Users tend to treat assistant answers as authoritative; assistants bundle explanations and citations into one compact object that substitutes for browsing. If your brand isn’t cited, you lose the chance to influence intent at the moment it matters.

- Channels fragment: different assistants cite different sources. GenOptima’s report and independent audits both show Copilot, Gemini, Perplexity and ChatGPT surface distinct source mixes. That forces brands to think multi‑surface rather than single‑engine.

What actually makes content “citable” to a generative engine?

The academically grounded GEO research and industry experiments converge on a handful of practical signals that increase citability:

- Structured, machine‑readable facts and entity markup (schema.org presence, Wikidata entries).

- Clear sourceable assertions: explicit statistics, quoted material, and inline citations that make extraction trivial.

- Scannable formats (lists, comparisons, “Top N” layouts) that map cleanly to retrieval‑plus‑synthesis models.

- Freshness and update cadence paired with durable canonical assets—engines favor recent corroborated facts for time‑sensitive queries.

- Authoritativeness signals: domain reputation, E‑E‑A‑T indicators, and link‑level trust remain relevant to retrieval components.
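As one illustration of the structured-markup and scannable-format signals, a “Top N” page can carry schema.org `ItemList` markup alongside its visible list. The Python sketch below builds that JSON-LD. The page title, product names, and URLs are hypothetical placeholders, and nothing here should be read as proof that any particular assistant consumes this markup; it only makes the list structure explicit to parsers.

```python
import json


def item_list_jsonld(title, items):
    """Build a schema.org ItemList dict from (name, url) pairs given in ranked order."""
    return {
        "@context": "https://schema.org",
        "@type": "ItemList",
        "name": title,
        "numberOfItems": len(items),
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "url": url}
            for i, (name, url) in enumerate(items, start=1)
        ],
    }


# Hypothetical products and URLs, for illustration only.
markup = item_list_jsonld(
    "Top 7 budget noise-canceling headphones for remote work",
    [
        ("Headphone A", "https://www.example.com/headphones/a"),
        ("Headphone B", "https://www.example.com/headphones/b"),
    ],
)

# Emit the block that would be embedded in the page's HTML.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Generating the markup from the same data that renders the visible list keeps the two from drifting apart when the page is updated.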

Tactical playbook: three immediate priorities (what GenOptima recommends — and how to do it properly)

GenOptima’s three priority actions (audit, listicle programs, and prompt‑level tracking) are sensible at a high level. Below I translate them into actionable steps and operational caveats.

1. Audit current AI visibility across major platforms

- Run the same set of buyer‑intent prompts across ChatGPT, Google AI Mode/AI Overviews, Microsoft Copilot, Gemini, and Perplexity.

- Record which sources are cited, the phrasing of the assistant’s answer, and whether your domain is listed as a source.

- Automate weekly checks for the top 50 buying queries in your category, and store results in a simple CSV for trend analysis.

- Caveat: API and UI behaviors differ; some platforms return citations only in certain modes, so your audit must cover both consumer and “pro” product variants where possible.

2. Establish a structured listicle/Top‑N program (but don’t sacrifice depth)

- Publish frequent (weekly minimum in competitive verticals), well‑structured listicles that answer explicit comparison questions: “Top 7 budget noise‑canceling headphones for remote work,” “Best VPNs for traveling to X,” etc.

- Include short, extractable facts, bulletized specs, and explicit source citations (date, vendor, model) in each item.

- Optimize the URL structure and schema (list schema, FAQ schema, product schema) so retrieval systems can parse your content cleanly.

- Caveat: listicles are necessary but not sufficient. Long‑form explainers and high‑trust product pages still matter for conversion — mix formats for discovery and purchase intent.

3. Implement prompt‑level citation tracking and measurement

- Choose a canonical prompt set (50–200 queries) representing your funnel.

- Use headless browsers or official APIs to capture assistant outputs and citations at scale.

- Score citation share and sentiment weekly; integrate into marketing dashboards alongside organic rankings and referral traffic.

- Use A/B experimentation to measure whether adding structured quotations, tables, or schema increases citation probability.

- Caveat: measurement is hard because engines change models and retrieval strategies rapidly; treat this as an iterative program with continuous validation.
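The audit log and the weekly citation-share score described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the `observations` records, the `example.com` brand domain, and the CSV column order are all hypothetical, and capturing the assistant output itself (by hand, headless browser, or official API) is left to your own tooling.

```python
import csv
import io
from collections import defaultdict
from urllib.parse import urlparse


def domain_cited(cited_urls, brand_domain):
    """Return True if any cited URL is on brand_domain or one of its subdomains."""
    brand = brand_domain.lower()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == brand or host.endswith("." + brand):
            return True
    return False


def to_rows(observations, brand_domain, run_date):
    """Flatten observations into CSV-ready rows: date, platform, prompt, cited flag, URLs."""
    return [
        [run_date, o["platform"], o["prompt"],
         int(domain_cited(o["cited_urls"], brand_domain)), " ".join(o["cited_urls"])]
        for o in observations
    ]


def citation_share(rows):
    """Per-platform fraction of logged prompts whose answer cited the brand."""
    totals, hits = defaultdict(int), defaultdict(int)
    for _date, platform, _prompt, cited, _urls in rows:
        totals[platform] += 1
        hits[platform] += int(cited)
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical observations from one weekly run.
observations = [
    {"platform": "Perplexity", "prompt": "best budget noise-canceling headphones",
     "cited_urls": ["https://www.example.com/top-7-headphones",
                    "https://reviews.example.org/anc"]},
    {"platform": "ChatGPT", "prompt": "best budget noise-canceling headphones",
     "cited_urls": ["https://reviews.example.org/anc"]},
    {"platform": "ChatGPT", "prompt": "best vpn for travel",
     "cited_urls": ["https://example.com/vpn-guide"]},
]

rows = to_rows(observations, "example.com", "2026-03-02")

buffer = io.StringIO()  # in practice: open("audit.csv", "a", newline="")
csv.writer(buffer).writerows(rows)

print(buffer.getvalue())
print(citation_share(rows))
```

Matching on the parsed hostname rather than on a substring avoids counting look-alike domains (for example `notexample.com`) as citations of your brand.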

Risks and blind spots brands must manage

Adopting a GEO/AEO (Answer Engine Optimization) posture helps visibility, but it introduces new strategic and operational risks.

- Dependence on proprietary platforms. When a large portion of your discovery depends on a handful of cloud‑hosted agents (ChatGPT, Gemini, Copilot), platform policy, algorithmic tweaks, or pay‑to‑play features can suddenly change visibility mechanics.

- Measurement and attribution challenges. “AI referral” is a loose category. Assistants sometimes produce answers without a click. When they do send traffic, analytics platforms may tag sources inconsistently. Expect gaps between citation counts and measurable conversions.

- Content velocity costs. The proposed cadence (two or more structured pieces per week in competitive categories) implies significant editorial investment. Smaller brands risk burning quality for quantity; AI favors both freshness and authority.

- Manipulation and the arms race. GEO is, by design, an optimization technique. Any optimization approach invites manipulation: thin content engineered just for citability, automated farms of “Top N” pages, and false or out‑of‑context quotations. Platforms will respond with policy and quality filters; long‑term winners will combine automation with editorial rigor.

- Privacy and legal exposure. When assistants synthesize third‑party content, legal questions about attribution, licensing, and liability can surface. Brands should keep audit trails and be ready to assert content provenance or request corrections.

- Unverified vendor claims. The press release aggregates third‑party statistics (market share, query volumes, platform percentages). Some figures (e.g., specific Google Search Central percentages and an attributed StatCounter ChatGPT market‑share figure) could not be independently verified in public Google documentation or StatCounter feeds at the time of reporting; treat these as vendor‑supplied and validate internally before operational decisions hinge on them.

How to prioritize effort without overspending: a pragmatic roadmap

- Quick triage (Weeks 0–2)

- Run a 25‑query cross‑platform audit to see whether your brand appears in any assistant citations and which page(s) are being cited.

- Identify the one search intent that most directly aligns with high‑value conversions and prioritize that SERP for GEO experiments.

- Minimum viable GEO (Weeks 3–8)

- Create 4–8 “Top N” pages that are deeply researched and intentionally structured (bulleted specs, one‑line fact boxes, date stamps, clear citations).

- Add entity markup and ensure your organization has complete knowledge‑graph entries (Wikidata, schema.org ProfessionalService/Product entries, Google Business Profile).

- Measurement and scale (Months 3–6)

- Implement a weekly automated prompt tracker for your 50 priority queries.

- Iterate on format: test whether quotations, data tables, or shorter summaries increase citation probability for specific engines.

- Build a ROI model comparing content production cost against AI referral lift and downstream conversions.

- Governance and safety (Ongoing)

- Maintain editorial standards: fact‑check citations, ensure copyright compliance, and log provenance for any third‑party data used in assistant responses.

- Prepare an escalation path for takedown or correction requests if content is misrepresented in assistant outputs.
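Two of the measurement steps in this roadmap reduce to simple arithmetic: testing whether a format change moved the citation rate, and comparing content cost against referral value. The Python sketch below shows one way to do each. The two-proportion z-test is a swapped-in standard technique, not something the report prescribes, and it treats prompt runs as independent trials, which is only approximately true. All figures in the example are hypothetical.

```python
import math


def two_proportion_z(hits_a, n_a, hits_b, n_b):
    """Return (z, two-sided p-value) for citation rate B minus citation rate A."""
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # both rates are 0% or 100%: no detectable difference
    z = (hits_b / n_b - hits_a / n_a) / se
    # Two-sided tail probability of a standard normal at |z|.
    return z, math.erfc(abs(z) / math.sqrt(2))


def geo_roi(content_cost, extra_ai_sessions, conversion_rate, value_per_conversion):
    """Return (incremental revenue, ROI), where ROI is net return per unit of cost."""
    if content_cost <= 0:
        raise ValueError("content_cost must be positive")
    revenue = extra_ai_sessions * conversion_rate * value_per_conversion
    return revenue, (revenue - content_cost) / content_cost


# Hypothetical experiment: the control page was cited in 12 of 100 prompt runs,
# the variant with an added data table in 24 of 100.
z, p = two_proportion_z(12, 100, 24, 100)
print(f"z = {z:.2f}, two-sided p = {p:.3f}")

# Hypothetical quarter: 8,000 in content cost, 5,000 extra AI-referred sessions,
# a 2% conversion rate, and 120 in value per conversion.
revenue, roi = geo_roi(8000, 5000, 0.02, 120)
print(f"incremental revenue = {revenue:.0f}, ROI = {roi:.2f}")
```

Because engines change models and retrieval strategies frequently, a result from one week is weak evidence; repeating the test across several runs and engines is a safer basis for shifting budget.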

Looking forward: platform shifts and what to watch

- Platform economics will evolve quickly. Expect assistants to introduce sponsored placements, brand agent programs (merchant‑facing brand agents that speak with a brand voice), and richer in‑assistant commerce flows. Microsoft’s Copilot Checkout and brand‑voiced agents illustrate the direction: discovery and commerce are collapsing into single conversational surfaces. That’s an opportunity and a governance headache.

- Measurement standards will mature. The industry is already moving toward AI‑centric visibility metrics: citation share, prompt CTR, and position‑adjusted citation counts. Standardized measurement (and independent auditors) will be a market differentiator.

- GEO will institutionalize as a cross‑discipline practice that blends editorial, data engineering, and legal. Brands that treat GEO as a tagging exercise will lose to those that re‑architect content for both human buyers and retrieval models.

- Expect regulatory scrutiny. As assistants start influencing purchasing decisions at scale, regulators will focus on transparency, bias, and competition. Brands must document provenance and be ready to demonstrate factual sourcing.

Final assessment: sensible urgency, not panic

GenOptima’s March 2026 report crystallizes a broad, observable shift: the front door to the internet is becoming conversational and answer‑centric. Independent data supports the core thesis: AI referral traffic exploded through 2024–2025, zero‑click behaviors are the new normal for many query types, and GEO techniques materially improve citation likelihood. McKinsey’s survey and Adobe’s telemetry both reinforce that enterprises should treat AI discovery as strategic, not experimental.

At the same time, treat vendor‑level numeric claims with healthy skepticism. Some platform usage and market‑share figures quoted in syndicated releases are estimates or derived from proprietary monitoring; they are useful directional indicators, but brands should not rearchitect budgets around a single numeric claim without independent validation. The tactical prescriptions in GenOptima’s release (audit, listicles, prompt tracking) are practical and defensible starting points, provided teams also invest in editorial quality, provenance, and governance.

If you are a marketing or product leader planning next quarter’s roadmap:

- Start a cross‑platform audit this week.

- Shift 20–30% of high‑value content budget into structured, citable assets.

- Invest in measurement: even a modest prompt‑tracking pipeline will pay for itself by exposing which platforms cite you and why.
