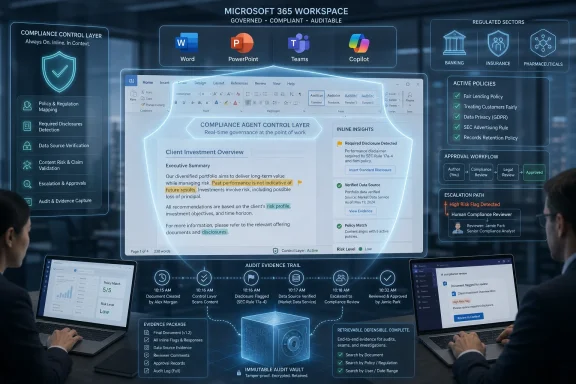

Norm Ai launched a compliance agent for Microsoft 365 Copilot on May 12, 2026, adding automated policy review, verification, disclosure support, and audit trails to Microsoft’s workplace AI stack for enterprises in regulated sectors. The move is less about another chatbot and more about a missing layer in corporate AI adoption: proof that the work Copilot accelerates can still survive legal, compliance, and supervisory scrutiny. For Windows and Microsoft 365 shops, the announcement is a reminder that AI governance is moving from policy binders and review queues into Word, PowerPoint, Teams, and the daily flow of work. The bet is that regulated companies will not fully embrace AI agents until compliance becomes ambient rather than episodic.

Microsoft has spent the past several years selling Copilot as the new front end for knowledge work. The pitch is familiar by now: summarize the meeting, draft the deck, analyze the spreadsheet, query the company corpus, turn institutional memory into usable output. For many organizations, especially those already standardized on Windows, Entra ID, SharePoint, Teams, Exchange, and Office, the appeal is obvious.

But the most valuable workflows are often the least tolerant of improvisation. A bank can use AI to draft an investment commentary, but someone must ensure the required disclosures are present. An insurer can use AI to summarize policy language, but the summary cannot drift from approved wording. A pharmaceutical company can accelerate document preparation, but the claims, data sources, and review trail matter as much as the prose.

That is the gap Norm Ai is trying to occupy. Microsoft 365 Copilot already sits close to the work; Norm wants to sit close to the rules governing that work. Its new agent is designed to operate inside the Copilot environment as a control layer, checking content against firm policies, validating key data against approved sources, answering procedural questions, and leaving behind an audit trail that compliance teams can actually use.

This is a strategically important distinction. The first wave of enterprise generative AI was about getting language models into the hands of workers. The next wave is about deciding whether those workers — and the agents assisting them — can be trusted to act inside regulated business processes.

Earning that trust is a harder problem than adding a policy search box to a chatbot. Compliance obligations are rarely a single neat rule. They are often a stack of statutes, regulator guidance, internal interpretations, product restrictions, jurisdictional differences, disclosure obligations, and historical enforcement scars. The work is not just “find a rule”; it is “apply the right rule to this particular artifact in this particular business context.”

Norm’s agent is being pitched as a bridge between Copilot’s productivity layer and that messy domain logic. In Microsoft 365, the agent can reportedly help users check written content against company standards, support mandatory disclosures, verify claims or data against approved sources, and answer questions about internal procedures. The important point is not that it can answer questions. The important point is that it is intended to move compliance review nearer to the moment of creation.

That shift matters because compliance in large organizations is often downstream. A marketing team drafts a document, a product group revises it, lawyers and compliance officers review it, and the cycle repeats until the material is either approved or abandoned. If AI makes drafting faster but leaves review untouched, the bottleneck simply moves. Norm’s argument is that AI adoption in regulated industries will stall unless the control function scales alongside the content function.

The company is also signaling seriousness through the scale of its claimed customer base. Norm says its broader platform governs AI operations for clients representing more than $30 trillion in assets under management and that it has raised more than $140 million from institutional investors. Those figures should be read as positioning as much as proof: Norm wants to be seen not as an Office add-in, but as compliance infrastructure for the agentic enterprise.

Copilot’s evolution into a platform for agents and third-party extensions, however, creates boundary problems. Microsoft can provide identity, permissions, audit hooks, data-loss controls, admin dashboards, Purview integration, and a general governance plane. It cannot realistically encode every firm’s interpretation of every compliance requirement in every regulated industry. Nor should customers want one vendor to monopolize both the productivity surface and every domain-specific judgment layer.

That leaves space for companies like Norm. If Microsoft 365 Copilot is the workplace AI substrate, specialized agents become the business logic. Legal, compliance, security, procurement, finance, HR, and industry-specific workflows can each acquire agents that understand the rules of the domain. Microsoft benefits because those agents make Copilot more useful; the specialist benefits because Microsoft provides distribution and context.

For IT administrators, this is both attractive and uncomfortable. The attractive part is consolidation. If third-party compliance agents can operate within Microsoft 365 governance, identity, and audit frameworks, they may be easier to manage than standalone tools that copy sensitive documents into separate SaaS silos. The uncomfortable part is that the Microsoft 365 environment becomes even more central to business risk.

A decade ago, admins worried about who could access a SharePoint site. Now they must worry about which AI agents can reason over that site, what they can infer, whether they can act, and how their outputs are reviewed. The identity and permission model remains foundational, but it is no longer sufficient by itself.

Bringing compliance into the document, chat, or presentation where work happens has real consequences. If a user can ask a compliance-aware agent whether a statement needs a disclosure before sending a deck to a client, that may reduce review friction. If the agent can flag a prohibited claim while the sentence is being written, the user learns the rule in context. If it can validate data against approved sources, it can reduce the common enterprise problem of impressive-looking but unsupported AI output.
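To make those three checks concrete, here is a minimal Python sketch of a deterministic first pass: prohibited-claim patterns, trigger-based mandatory disclosures, and figure verification against an approved source. The rule patterns, disclosure text, figure names, and the `check_draft` function are hypothetical, and the sketch assumes figures have already been extracted from the draft upstream. It is not Norm Ai’s implementation or API; a generative agent would apply such rules with far more context than regular expressions allow.

```python
import math
import re
from dataclasses import dataclass

# Hypothetical rules for illustration only; not Norm Ai's rule set or API.
PROHIBITED_PATTERNS = {
    r"\bguaranteed returns?\b": "Performance guarantees are prohibited.",
    r"\brisk[- ]free\b": "Describing an investment as risk-free is prohibited.",
}

# Trigger pattern -> disclosure text that must appear whenever the trigger matches.
REQUIRED_DISCLOSURES = {
    r"\bperformance\b": "Past performance is not indicative of future results.",
}


@dataclass
class Finding:
    kind: str    # "prohibited_claim", "missing_disclosure", "unverified_figure", "figure_mismatch"
    detail: str


def check_draft(text: str, figures: dict[str, float], approved: dict[str, float]) -> list[Finding]:
    """Run first-pass checks on a draft.

    `figures` holds numbers already extracted from the draft (an assumption:
    extraction happens upstream); `approved` is the firm's source of truth.
    """
    findings: list[Finding] = []
    lowered = text.lower()

    # 1. Prohibited claims: any pattern match is flagged with the rule's reason.
    for pattern, reason in PROHIBITED_PATTERNS.items():
        if re.search(pattern, lowered):
            findings.append(Finding("prohibited_claim", reason))

    # 2. Mandatory disclosures: a trigger without its disclosure text is flagged.
    for trigger, disclosure in REQUIRED_DISCLOSURES.items():
        if re.search(trigger, lowered) and disclosure.lower() not in lowered:
            findings.append(Finding("missing_disclosure", disclosure))

    # 3. Data verification: every figure must match a value from an approved source.
    for name, value in figures.items():
        source_value = approved.get(name)
        if source_value is None:
            findings.append(Finding("unverified_figure", f"{name}: no approved source"))
        elif not math.isclose(value, source_value):
            findings.append(
                Finding("figure_mismatch", f"{name}: draft {value} vs approved {source_value}")
            )

    return findings
```

Run against “Our fund delivered guaranteed returns and strong performance of 7.2% last year.” with an approved one-year return of 6.9, the sketch reports a prohibited claim, a missing disclosure, and a figure mismatch, in that order.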

This is the compliance-at-the-point-of-work thesis. It is not new in concept; software vendors have been trying to push controls closer to users for years. What is new is the possibility that generative AI makes those controls conversational and adaptive rather than static and form-driven.

The risk is that workers may mistake the agent’s confidence for institutional approval. A compliance agent that says a document appears consistent with policy is not the same thing as a licensed attorney, a registered supervisor, or a final approval authority. Enterprises will need to define where the agent’s role ends and where accountable human review begins.

That boundary will determine whether these tools reduce risk or simply create a faster path to rubber-stamped mistakes. The more polished the agent’s responses become, the more important it is for organizations to preserve escalation paths, sampling regimes, exception handling, and supervisory sign-off. Automation can strengthen governance, but only if it is designed as part of governance rather than as a substitute for it.

Auditability appears prominently in Norm’s pitch for good reason. Regulated organizations do not merely need to do the right thing. They need to show, sometimes months or years later, what controls existed and how decisions were made. If AI becomes embedded in ordinary workflows, the audit trail must follow it there.

Microsoft already has a substantial compliance story around Purview, audit logs, eDiscovery, data lifecycle management, sensitivity labels, and controls for Copilot interactions. Its own documentation emphasizes that organizations should assess AI compliance gaps, define retention and audit requirements, and govern Copilot and agent interactions. In other words, Microsoft is not pretending that Copilot can be deployed responsibly in high-risk settings by enthusiasm alone.

Norm’s value proposition sits above and beside that platform layer. Purview can help govern data, retain records, and investigate interactions. Norm is positioning itself closer to the question of whether the actual content or decision conforms to a firm’s rules. One layer watches the environment; the other tries to reason over the regulated substance of the work.

For CIOs and compliance officers, the practical question will be how well those layers interoperate. If Norm’s agent produces its own logs, recommendations, policy references, and verification results, those records must fit into existing supervision and eDiscovery processes. A compliance tool that creates yet another isolated evidence store may solve one problem while creating another.
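One way to keep agent evidence from becoming an isolated store is to emit records in a plain, portable format. The Python sketch below assumes a hypothetical `ComplianceAuditRecord` whose fields mirror the questions an examiner might ask (which policies were applied, which sources were checked, what was flagged, what the user did, who approved) and writes them as JSON Lines. The field names are illustrative; whether a particular retention or eDiscovery workflow can ingest the file is an assumption to verify, not something the sketch shows.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ComplianceAuditRecord:
    """One reviewable event: what the agent applied, checked, and flagged, and what humans did."""
    artifact_id: str                # hypothetical: tenant document ID or URL
    policy_ids: list[str]           # firm policies the agent applied
    sources_checked: list[str]      # approved data sources used for verification
    findings: list[str]             # issues the agent flagged
    user_action: str                # e.g. "accepted_edit" or "overrode_warning"
    approver: Optional[str] = None  # accountable human sign-off, if any
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def is_complete(self) -> bool:
        # Assumed rule: a record that flagged something but names no approver
        # leaves a gap in the supervisory trail.
        return not self.findings or self.approver is not None

    def to_json_line(self) -> str:
        # One JSON object per line (JSON Lines), a common interchange format.
        return json.dumps(asdict(self), sort_keys=True)


def append_records(records: list[ComplianceAuditRecord], path: str) -> None:
    """Append records to a JSON Lines file, one record per line."""
    with open(path, "a", encoding="utf-8") as fh:
        for record in records:
            fh.write(record.to_json_line() + "\n")
```

`is_complete()` encodes one possible completeness rule: any record with findings must name an accountable approver. Counting incomplete records over time is one way to track audit completeness.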

Norm’s announcement does not remove the data-hygiene burden that any serious Copilot deployment exposes. In fact, a compliance agent may make that burden more explicit. Policy-aware AI needs authoritative policy sources. Verification workflows need approved data sources. Disclosure checks need current templates and jurisdictional logic. A firm cannot get reliable compliance automation by pointing an agent at a swamp of outdated PDFs and hoping for the best.

This is where WindowsForum readers wearing admin hats should pay attention. The glamorous part of the story is “AI agent for regulated workflows.” The operational part is permissions, retention, labeling, records management, connector governance, and tenant configuration. The agent can only be as trustworthy as the environment in which it is grounded.

That does not make the product unimportant. It makes it dependent on basic enterprise discipline. A well-governed Microsoft 365 tenant could become a strong foundation for compliance-aware agents. A chaotic tenant could turn the same agent into a liability, confidently applying old rules or validating against the wrong source of truth.

The lesson is not that enterprises should wait until their data estate is perfect. It never will be. The lesson is that AI deployment and data governance can no longer be separate programs. The rollout plan for a compliance agent should include content ownership, source authority, access review, retention design, and a clear model for what happens when the agent encounters conflicting policy material.
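A clear model for conflicting policy material can be as simple as an explicit precedence rule plus an escalation path. The Python sketch below assumes hypothetical authority tiers (`regulation`, `firm_policy`, `desk_procedure`, `guidance_note`), drops sources that are not yet effective or have expired, keeps only the highest tier, and escalates to a human when sources at that tier disagree. Real precedence rules are a legal and compliance decision; these tiers and field names are placeholders.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical authority tiers: a lower number outranks a higher one.
AUTHORITY_RANK = {"regulation": 0, "firm_policy": 1, "desk_procedure": 2, "guidance_note": 3}


@dataclass(frozen=True)
class PolicySource:
    doc_id: str
    topic: str
    authority: str            # must be a key of AUTHORITY_RANK
    effective: date
    expires: Optional[date]   # None means no scheduled expiry
    position: str             # what the source says, e.g. "disclosure_required"


def resolve(
    sources: list[PolicySource], topic: str, today: date
) -> tuple[Optional[PolicySource], Optional[str]]:
    """Return (source, None) when one answer is authoritative, else (None, reason to escalate)."""
    # Drop sources on other topics and anything not yet effective or already expired.
    live = [
        s for s in sources
        if s.topic == topic
        and s.effective <= today
        and (s.expires is None or s.expires > today)
    ]
    if not live:
        return None, "no current authoritative source"

    # Keep only the highest-authority tier among live sources.
    best = min(AUTHORITY_RANK[s.authority] for s in live)
    top = [s for s in live if AUTHORITY_RANK[s.authority] == best]

    # Disagreement within the top tier is for a human, not the agent, to settle.
    if len({s.position for s in top}) > 1:
        return None, "conflicting sources at the same authority level"

    # All top-tier sources agree: cite the most recently effective one.
    return max(top, key=lambda s: s.effective), None
```

Returning a reason instead of guessing is the point of the design: it keeps the agent from confidently applying an expired or contested rule.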

In financial services, the most obvious market for this kind of agent, Copilot’s promise is double-edged. A tool that helps employees generate more content can also generate more review volume, more inconsistencies, and more opportunities for unapproved claims. A compliance agent that catches issues earlier could therefore be valuable not because it replaces review, but because it reduces the number of low-quality artifacts entering review.

But the same pattern applies elsewhere. Healthcare organizations have privacy and clinical claims concerns. Pharmaceutical and medical-device firms have promotional review obligations. Insurance carriers have product language and state-by-state regulatory differences. Public companies have disclosure controls. Government contractors have classification, export-control, and procurement rules. Any sector where language carries regulatory weight is a candidate for this type of agent.

The common denominator is not merely regulation. It is the presence of repeatable knowledge work where mistakes are expensive and review capacity is constrained. That is precisely where AI tools are most tempting and most dangerous. The higher the cost of an error, the more valuable it becomes to embed guardrails before the error leaves the draft stage.

Still, industry specificity will be the hard part. A generic compliance agent can flag obvious issues. A trusted enterprise compliance agent must understand the firm’s own policies, the business line’s risk tolerance, the jurisdiction, the product, the customer type, and the difference between advisory guidance and binding prohibition. That is a tall order, and customers should demand evidence from pilots rather than relying on platform claims.

The first deployment question is scope. Will the agent review marketing copy only, or will it touch client communications, internal analysis, contracts, board materials, and investment recommendations? Each expansion changes the risk profile. A tool that is acceptable for drafting internal policy explanations may not be acceptable for approving customer-facing claims.

The second question is authority. When the agent flags an issue, is that advisory, mandatory, or blocking? If a user ignores the warning, who is notified? If the agent fails to flag something, does the organization treat that as a control failure? These are not product settings alone; they are governance decisions.
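Scope and authority eventually become explicit configuration somewhere. This hypothetical Python sketch maps artifact types to an enforcement mode (advisory, mandatory, or blocking) and returns whether the agent stands in the way of release and whether a supervisor should be notified. The artifact types, modes, and notification rules are assumptions chosen for illustration, not product settings from Norm Ai or Microsoft.

```python
from enum import Enum
from typing import NamedTuple


class Mode(Enum):
    ADVISORY = "advisory"    # flag is shown; the user may proceed
    MANDATORY = "mandatory"  # user must fix or record an override; overrides notify a supervisor
    BLOCKING = "blocking"    # release is refused until the flag is cleared


# Hypothetical governance table: artifact type -> enforcement mode.
# Types missing from the table are outside the agent's scope.
SCOPE = {
    "internal_policy_explainer": Mode.ADVISORY,
    "marketing_copy": Mode.MANDATORY,
    "client_communication": Mode.BLOCKING,
}


class Decision(NamedTuple):
    agent_permits_release: bool  # the agent is not blocking; this is NOT an approval
    notify_supervisor: bool
    note: str


def decide(artifact_type: str, has_flags: bool, user_overrides: bool) -> Decision:
    mode = SCOPE.get(artifact_type)
    if mode is None:
        # The agent cannot clear what it was never scoped to review.
        return Decision(False, False, "out of scope: standard human review applies")
    if not has_flags:
        return Decision(True, False, "no flags")
    if mode is Mode.ADVISORY:
        return Decision(True, False, "advisory flag shown")
    if mode is Mode.MANDATORY:
        if user_overrides:
            return Decision(True, True, "override recorded")
        return Decision(False, False, "awaiting fix or override")
    # BLOCKING: an attempted override does not release the artifact but is escalated.
    return Decision(False, user_overrides, "blocked until flag cleared")
```

`agent_permits_release` only means the agent is not standing in the way; it is not an approval, which is why artifact types outside `SCOPE` fall back to standard human review rather than passing automatically.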

The third question is measurement. Vendors will naturally emphasize productivity: shorter review cycles, fewer manual checks, faster AI adoption. Compliance teams will want different metrics: false negatives, false positives, escalation quality, policy coverage, audit completeness, and the reduction of repeat deficiencies. If the only measured outcome is speed, the system will be optimized for speed.
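Measuring false negatives requires ground truth, which in practice means a pilot sample that accountable reviewers examine independently of the agent. Assuming such a labeled sample exists, a minimal Python sketch of the core error metrics looks like this; `PilotCase` and `pilot_metrics` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotCase:
    agent_flagged: bool    # did the agent raise an issue on this draft?
    reviewer_found: bool   # did the accountable human reviewer find a real issue?


def _ratio(numerator: int, denominator: int) -> float:
    # NaN signals "no data" rather than a misleading 0.0.
    return numerator / denominator if denominator else float("nan")


def pilot_metrics(cases: list[PilotCase]) -> dict[str, float]:
    tp = sum(c.agent_flagged and c.reviewer_found for c in cases)
    fp = sum(c.agent_flagged and not c.reviewer_found for c in cases)
    fn = sum(c.reviewer_found and not c.agent_flagged for c in cases)
    tn = sum(not c.agent_flagged and not c.reviewer_found for c in cases)
    return {
        "false_negative_rate": _ratio(fn, tp + fn),  # real issues the agent missed
        "false_positive_rate": _ratio(fp, fp + tn),  # clean drafts flagged anyway
        "precision": _ratio(tp, tp + fp),            # share of flags that were real
    }
```

For example, in a 100-draft pilot with 8 issues caught, 2 missed, 5 false alarms, and 85 clean drafts correctly passed, the false-negative rate is 0.2, the false-positive rate is about 0.056, and precision is about 0.62. A dashboard showing only review-cycle time would hide all three.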

Finally, there is the human factor. Lawyers and compliance officers may welcome tools that reduce repetitive review, but they will resist systems that obscure accountability or flood them with low-confidence alerts. Business users may appreciate in-line guidance, but they will work around controls they perceive as arbitrary or slow. The design challenge is therefore not just technical; it is organizational.

For Microsoft, hosting specialist agents is a way to make Copilot more than an assistant. If Copilot becomes the place where employees call domain-specific agents, then Microsoft controls the workbench even when it does not control every tool on it. That reinforces the value of Microsoft 365 licenses, Graph-connected data, Entra identity, Purview governance, and admin-center oversight.

For customers, the model offers both convenience and concentration risk. The convenience is that AI capabilities can meet users where they already work. The concentration risk is that Microsoft 365 becomes the control point for more workflows, more data, more decisions, and more third-party dependencies. Outages, misconfigurations, permission mistakes, and vendor failures become more consequential when agents are woven into business processes.

This is not a reason to reject the model. It is a reason to treat agent adoption as enterprise architecture rather than experimentation. A third-party agent inside Microsoft 365 should be assessed like any other system touching sensitive data and regulated output. Security review, data processing terms, logging, retention, access control, incident response, and exit strategy all matter.

The old shadow-IT problem was employees signing up for unsanctioned SaaS tools. The new version may be teams adding agents that reason over corporate knowledge and influence decisions. Microsoft’s governance tooling can help, but it will not replace disciplined approval processes.

A skeptical reading is that every vendor in enterprise AI now claims to provide guardrails, governance, auditability, and policy intelligence. Those words are necessary, but they are not sufficient. The proof will be in how accurately the agent applies firm-specific rules, how transparent its reasoning is, how well it handles ambiguity, and how gracefully it escalates.

The most plausible outcome lies somewhere between the vendor pitch and that skeptical reading. Compliance agents will not eliminate legal review, supervisory approval, or model-risk governance. They may, however, reduce avoidable errors, standardize first-pass checks, help users understand policy earlier, and create more complete records of how AI-assisted work moved through the organization.

That is valuable if enterprises resist the temptation to overclaim. A compliance agent should be sold internally as a control enhancement, not a magic absolution. The distinction matters because regulators, auditors, and courts are unlikely to accept “the AI said it was compliant” as a defense.

If Norm’s agent works as advertised, its most important contribution may be cultural. It nudges AI from a side channel into governed work. It tells business users that AI assistance is allowed, but not detached from policy. It tells compliance teams that their expertise can be encoded nearer to the work, but not removed from accountability.

Source: TipRanks.com, “Norm Ai Launches Compliance Agent to Extend Microsoft 365 Copilot into Regulated Workflows”


Norm Ai Is Selling Judgment as Infrastructure

Norm Ai describes its approach as “legal engineering,” a phrase that can sound like marketing until you unpack what regulated enterprises actually need from AI. They do not merely need a model that can produce plausible language. They need systems that can encode firm-specific standards, apply them consistently, explain what happened, and preserve evidence for later review.

Microsoft’s Platform Strategy Creates Room for Specialists

The launch also says something about Microsoft’s own AI strategy. Microsoft has been steadily turning Copilot from a single assistant into a platform for agents, connectors, custom workflows, and third-party extensions. That makes sense for Redmond: the more specialized agents live inside Microsoft 365, the more the Microsoft tenant becomes the operating environment for enterprise work.

The Compliance Layer Is Becoming a User Experience Problem

The most interesting part of Norm’s announcement is not the checklist of features. It is the implied change in how compliance reaches ordinary workers. In the old model, compliance was a separate system, a required training module, an approval workflow, or a stern email from Legal. In the new model, it becomes a companion inside the document, presentation, chat, or analysis where work is happening.

Auditability Is the Feature Regulators Will Care About Later

In consumer AI, the output is usually the star. In regulated enterprise AI, the record may matter more. A compliance agent that improves a paragraph is useful; a compliance agent that can show what policy it applied, what data it checked, what it flagged, what the user changed, and who approved the final version is potentially much more valuable.

The Agent Boom Forces Microsoft Shops to Revisit Data Hygiene

Every serious Copilot deployment eventually runs into the same unglamorous reality: AI makes your information architecture visible. If permissions are overbroad, Copilot can surface too much. If SharePoint contains stale, contradictory, or poorly labeled content, AI can reason from bad material. If teams have spent years treating file repositories as digital attics, agents will rummage through the attic at machine speed.

Financial Services Is the Obvious Beachhead, but Not the Only One

Norm’s language around assets under management makes clear where much of its credibility is aimed. Financial services is one of the most natural markets for compliance AI because the pain is acute and the documents are endless. Client communications, research notes, marketing materials, disclosures, investment committee memos, surveillance reviews, and supervisory approvals all involve repetitive judgment under strict rules.

The Vendor Narrative Is Clean; the Deployment Reality Will Be Messy

Norm’s story is elegant: Microsoft 365 Copilot accelerates work, Norm adds compliance intelligence, and regulated enterprises get faster AI adoption without losing control. The real world will be less tidy. Enterprises do not adopt governance technology in a vacuum; they adopt it through procurement cycles, legal risk committees, security reviews, model-risk assessments, records-management debates, and user-training programs.The first deployment question is scope. Will the agent review marketing copy only, or will it touch client communications, internal analysis, contracts, board materials, and investment recommendations? Each expansion changes the risk profile. A tool that is acceptable for drafting internal policy explanations may not be acceptable for approving customer-facing claims.

The second question is authority. When the agent flags an issue, is that advisory, mandatory, or blocking? If a user ignores the warning, who is notified? If the agent fails to flag something, does the organization treat that as a control failure? These are not product settings alone; they are governance decisions.

The third question is measurement. Vendors will naturally emphasize productivity: shorter review cycles, fewer manual checks, faster AI adoption. Compliance teams will want different metrics: false negatives, false positives, escalation quality, policy coverage, audit completeness, and the reduction of repeat deficiencies. If the only measured outcome is speed, the system will be optimized for speed.
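To make the difference between the two scorecards concrete, the sketch below scores a pilot the way a compliance team might. It assumes each sampled document records whether the agent flagged it and whether a human reviewer independently found a real issue. The names `ReviewOutcome` and `compliance_metrics` are hypothetical, and the pilot counts are invented for illustration; none of this comes from Norm Ai or Microsoft.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewOutcome:
    """One sampled document from a hypothetical pilot."""
    agent_flagged: bool      # did the compliance agent raise an issue?
    human_found_issue: bool  # did an independent human reviewer find a real issue?


def compliance_metrics(outcomes):
    """Compute the error-focused metrics a compliance team might track.

    A speed-only dashboard would report none of these numbers.
    """
    tp = sum(o.agent_flagged and o.human_found_issue for o in outcomes)
    fp = sum(o.agent_flagged and not o.human_found_issue for o in outcomes)
    fn = sum(not o.agent_flagged and o.human_found_issue for o in outcomes)
    tn = sum(not o.agent_flagged and not o.human_found_issue for o in outcomes)

    real_issues = tp + fn  # documents that actually had a problem
    clean_docs = fp + tn   # documents that were actually fine
    alerts = tp + fp       # everything the agent flagged

    return {
        # Share of real issues the agent missed: the number that matters most to supervisors.
        "false_negative_rate": fn / real_issues if real_issues else 0.0,
        # Share of clean documents the agent flagged anyway: drives alert fatigue.
        "false_positive_rate": fp / clean_docs if clean_docs else 0.0,
        # Share of alerts that pointed at a real issue: a proxy for escalation quality.
        "alert_precision": tp / alerts if alerts else 0.0,
    }


# Hypothetical pilot sample of 100 documents (illustrative counts only).
pilot = (
    [ReviewOutcome(True, True)] * 8      # caught real issues
    + [ReviewOutcome(False, True)] * 2   # missed real issues
    + [ReviewOutcome(True, False)] * 5   # false alarms
    + [ReviewOutcome(False, False)] * 85 # correctly passed
)
metrics = compliance_metrics(pilot)
print(metrics)
```

In this made-up sample the agent misses 2 of 10 real issues, a 20% false-negative rate that a report built only on review-cycle time would never surface.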

Finally, there is the human factor. Lawyers and compliance officers may welcome tools that reduce repetitive review, but they will resist systems that obscure accountability or flood them with low-confidence alerts. Business users may appreciate in-line guidance, but they will work around controls they perceive as arbitrary or slow. The design challenge is therefore not just technical; it is organizational.

Microsoft’s AI Ecosystem Is Becoming a Regulated Marketplace

The Norm launch fits a broader transition in Microsoft’s ecosystem. Microsoft 365 is no longer just a suite of productivity applications. It is becoming a marketplace of AI-mediated work, where first-party and third-party agents sit on top of enterprise data and act through familiar tools. That is a powerful distribution model, and it will attract vendors building specialized agents for every business function.

For Microsoft, this is a way to make Copilot more than an assistant. If Copilot becomes the place where employees call domain-specific agents, then Microsoft controls the workbench even when it does not control every tool on it. That reinforces the value of Microsoft 365 licenses, Graph-connected data, Entra identity, Purview governance, and admin-center oversight.

For customers, the model offers both convenience and concentration risk. The convenience is that AI capabilities can meet users where they already work. The concentration risk is that Microsoft 365 becomes the control point for more workflows, more data, more decisions, and more third-party dependencies. Outages, misconfigurations, permission mistakes, and vendor failures become more consequential when agents are woven into business processes.

This is not a reason to reject the model. It is a reason to treat agent adoption as enterprise architecture rather than experimentation. A third-party agent inside Microsoft 365 should be assessed like any other system touching sensitive data and regulated output. Security review, data processing terms, logging, retention, access control, incident response, and exit strategy all matter.

The old shadow-IT problem was employees signing up for unsanctioned SaaS tools. The new version may be teams adding agents that reason over corporate knowledge and influence decisions. Microsoft’s governance tooling can help, but it will not replace disciplined approval processes.

The Real Test Is Whether Compliance Becomes Faster Without Becoming Softer

A charitable reading of Norm’s launch is that it gives regulated enterprises a practical path to use Copilot in workflows they might otherwise keep off-limits. That would be a meaningful advance. Many organizations are caught between executive pressure to adopt AI and compliance anxiety about letting employees use it for anything consequential.

A skeptical reading is that every vendor in enterprise AI now claims to provide guardrails, governance, auditability, and policy intelligence. Those words are necessary, but they are not sufficient. The proof will be in how accurately the agent applies firm-specific rules, how transparent its reasoning is, how well it handles ambiguity, and how gracefully it escalates.

The most plausible outcome is somewhere in between. Compliance agents will not eliminate legal review, supervisory approval, or model-risk governance. They may, however, reduce avoidable errors, standardize first-pass checks, help users understand policy earlier, and create more complete records of how AI-assisted work moved through the organization.

That is valuable if enterprises resist the temptation to overclaim. A compliance agent should be sold internally as a control enhancement, not a magic absolution. The distinction matters because regulators, auditors, and courts are unlikely to accept “the AI said it was compliant” as a defense.

If Norm’s agent works as advertised, its most important contribution may be cultural. It nudges AI from a side channel into governed work. It tells business users that AI assistance is allowed, but not detached from policy. It tells compliance teams that their expertise can be encoded nearer to the work, but not removed from accountability.

The Copilot Era Now Belongs to the Control Plane

The concrete lesson for Microsoft 365 customers is that agent adoption is becoming a governance project. Norm’s announcement is one more sign that the winners in enterprise AI will not be the tools that merely produce the most text, but the tools that can produce useful work inside defensible boundaries.

- Norm Ai’s new Microsoft 365 Copilot agent is aimed at regulated workflows where document creation, data validation, disclosure support, and policy review need to happen together.

- The agent’s significance comes from its position inside everyday Microsoft 365 work rather than from being another standalone compliance application.

- Microsoft provides important governance foundations through identity, admin controls, Purview, auditing, retention, and eDiscovery, but specialist vendors are moving into domain-specific judgment layers.

- Enterprises will need clean sources of authority, disciplined permissions, and well-maintained policy content before they can expect reliable compliance automation.

- The strongest use case is not replacing lawyers or compliance officers, but reducing low-value review loops and creating better evidence trails for supervised work.

- The central risk is overreliance on an agent’s recommendation without clear escalation, human accountability, and measurement of false negatives as well as productivity gains.

Source: TipRanks, “Norm Ai Launches Compliance Agent to Extend Microsoft 365 Copilot into Regulated Workflows”