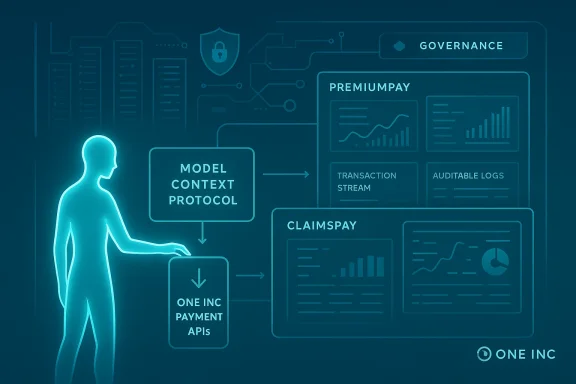

One Inc’s announcement that it is adopting the Model Context Protocol (MCP) to accelerate integrations and provide secure AI-driven access to payments data represents a significant moment where insurance-focused payments technology and the rapidly standardizing agent-tool ecosystem intersect. The company says its MCP implementation will let insurers use their own corporate LLM environments—Claude, ChatGPT Enterprise, Microsoft Copilot and others—to access One Inc’s PremiumPay and ClaimsPay platforms inside their security perimeter, speeding go-live times, automating developer and testing workflows, and enabling on-demand business reporting and analysis. (businesswire.com)

Background: what MCP is and why it matters to enterprise AI

MCP (Model Context Protocol) launched as an open protocol in late 2024 to standardize how large language models (LLMs) access external data, tools, and services. Think of MCP as a communication standard for agents and data sources: it defines a client/server interaction model so LLM-hosted assistants can discover, request, and receive context and tool outputs in a predictable, auditable way. Anthropic published the specification and accompanying SDKs to make it easier for developers to build MCP servers and clients, and major vendors and tooling ecosystems have moved quickly to adopt MCP-compatible connectors.

Adoption has been fast because MCP addresses an acute engineering problem: without a standard, each LLM or assistant needs a bespoke connector to each enterprise system (an “N × M” integration problem). MCP reduces that friction by letting organizations expose internal sources through an MCP server once, and then permitting any MCP-aware model host to connect through a governed interface. That “plug-and-play” model is precisely why insurance carriers, often saddled with legacy systems and a high compliance bar, are attracted to a standard approach for bringing LLMs into workflows.
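To make the “N × M” point concrete, here is a small arithmetic sketch. The counts of three model hosts and eight enterprise systems are illustrative assumptions, not figures from One Inc or the MCP specification.

```python
def bespoke_connectors(hosts: int, systems: int) -> int:
    """Point-to-point integration: every host needs its own connector to every system."""
    return hosts * systems


def mcp_components(hosts: int, systems: int) -> int:
    """Shared protocol: one MCP client per host plus one MCP server per system."""
    return hosts + systems


# Illustrative (assumed) counts: 3 LLM hosts, 8 internal systems.
hosts, systems = 3, 8
print(bespoke_connectors(hosts, systems))  # 24 custom connectors
print(mcp_components(hosts, systems))      # 11 protocol components
```

The gap widens as either number grows, which is the core of the standardization argument.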

What One Inc is announcing

One Inc’s press release frames its new offering as a set of AI-driven capabilities built around MCP that will be layered on top of its existing PremiumPay (inbound premium collection) and ClaimsPay (outbound claims disbursement) products. The key technical and commercial claims are:

- MCP-enabled connectors that run inside the insurer’s approved AI environment rather than in One Inc’s hosted model instance, with credential management left to the carrier’s security framework. (businesswire.com)

- Faster developer onboarding via AI-assisted code generation, documentation, validation, and automated testing to reduce time to go-live. (businesswire.com)

- Business-user functionality: on-demand reporting, AI-generated analyses, and actionable insights drawn from combined One Inc payment data plus in-house systems. (businesswire.com)

Why insurers would want MCP in their payments stack

Insurance carriers have a particular set of operational and regulatory drivers that make this architectural choice compelling:

- Faster integrations: standardized connectors mean less custom code and shorter project timelines for connecting policy admin systems, core claims platforms, and payment rails. One Inc’s framing emphasizes AI-assisted developer workflows (code generation and automated tests) to shrink the typical integration timeline. (businesswire.com)

- Richer context for analytics and decisioning: by enabling carriers to combine One Inc payment events with their own internal datasets inside corporate LLMs, organizations can ask the AI for cross-system analysis—e.g., reconciliation anomalies, exception triaging, or fraud indicators—without exposing raw data to vendor-hosted models. This supports more nuanced operational reporting and faster remediation cycles. (businesswire.com)

- Security and control: One Inc says MCP connections will be permissioned, authenticated, and auditable, with access governed by carrier-owned credentials and API controls. For an industry where the Gramm-Leach-Bliley Act (GLBA) privacy rules and state insurance regulators demand strict controls over customer financial information, that model aligns with enterprise expectations for custody and governance.

- Compliance with payment security expectations: carriers and their vendors that process or touch cardholder data will continue to operate under PCI DSS expectations (or vendor contractual obligations that flow from PCI requirements). Keeping MCP endpoints and credential management inside the insurer’s approved environment simplifies evidence of control for compliance reviews and audits.

Technical anatomy: how MCP-enabled payments access will likely be implemented

MCP follows a client/server message model. In practice, One Inc’s MCP-enabled integration will likely include the following components:

- An MCP server or server proxy that exposes a curated set of One Inc payment APIs and data models (payment events, remittance details, exception records). This server can run inside the carrier’s VPC or behind enterprise gateways, depending on the agreed deployment model.

- An MCP client within the carrier’s LLM host (Claude, ChatGPT Enterprise, Copilot, etc.) that negotiates connections, authenticates via carrier-managed credentials, and requests contextual payloads and tool execution results when the assistant needs payment context.

- Governance controls: authentication tokens, role-based access, and audit logging for every MCP call so that data access is traceable and revocable. One Inc specifically emphasizes “permissioned, authenticated, and fully auditable” access under the insurer’s security framework. (businesswire.com)

- Developer workflow tooling that leverages LLMs to scaffold integration code, generate API documentation, and create test harnesses, streamlining the typical manual churn of integration projects. This is consistent with how MCP has been used in other early deployments to accelerate connector development.
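The components above can be sketched in a few lines. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method; everything else here is an assumption made for illustration: the tool names (`get_payment_events`, `get_exception_records`), the role names, and the in-memory audit list are hypothetical and do not come from One Inc’s announcement.

```python
import json
import uuid

# Hypothetical role-to-tool mapping; a real carrier would define its own.
ALLOWED_TOOLS = {
    "finance_analyst": {"get_payment_events"},
    "claims_ops": {"get_payment_events", "get_exception_records"},
}


def build_tool_call(tool: str, arguments: dict, request_id: int) -> dict:
    """Build a JSON-RPC 2.0 request for MCP's tools/call method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }


def authorize_and_log(role: str, request: dict, audit_log: list) -> bool:
    """Check role-based access for the requested tool and record the decision."""
    tool = request["params"]["name"]
    allowed = tool in ALLOWED_TOOLS.get(role, set())
    audit_log.append({
        "correlation_id": str(uuid.uuid4()),  # lets later hops be tied together
        "role": role,
        "tool": tool,
        "request_id": request["id"],
        "allowed": allowed,
    })
    return allowed


audit_log = []
request = build_tool_call("get_exception_records", {"date": "2025-01-31"}, 1)
print(json.dumps(request))
print(authorize_and_log("finance_analyst", request, audit_log))  # False
print(authorize_and_log("claims_ops", request, audit_log))       # True
```

Logging denied calls as well as allowed ones is a deliberate choice in this sketch: refused requests are often the most useful signal when reviewing access patterns.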

Benefits in practice (what carriers and integrators can reasonably expect)

When realized responsibly, MCP-driven access to payments data should deliver measurable operational improvements:

- Reduced integration timelines and lower implementation costs through standardized connectors and AI-assisted developer tooling. (businesswire.com)

- Fewer exception-handling cycles and lower manual labor by enabling AI-based triage and automated remediation suggestions tied to real One Inc event data. (businesswire.com)

- Improved policyholder experience through faster claim disbursements and more transparent premium collection flows, powered by integrations across payment rails and communications channels. (businesswire.com)

- Stronger fraud controls because AI agents operating within a carrier’s environment can combine payments telemetry with internal risk models—assuming careful governance rules and monitoring are in place. (businesswire.com)

Risks and open questions: security, governance, and operational hazards

The technical promise of MCP comes with non-trivial risk vectors that carriers must evaluate before widespread production adoption.

1) Protocol-level security weaknesses and prompt injection

Academic and industry researchers have flagged that MCP’s open, bidirectional design introduces new attack surfaces, most notably prompt injection via servers that supply contextual payloads, and implicit trust assumptions when multiple MCP servers are chained together. Recent security analyses demonstrate that architectural choices in the protocol can amplify attack success rates unless mitigated by capability attestation, message authentication, and strict origin verification. Practical incidents have also exposed vulnerabilities in real-world MCP server implementations that could be chained to escalate to remote code execution in mixed-server deployments. Carriers should treat MCP endpoints and server implementations as high-risk integration points and demand hardened reference implementations backed by formal security proofs or documented mitigations.

2) Data leakage and unintended disclosure

MCP is designed to make data available to an LLM host, but what that LLM does with the data depends on the model’s runtime policies. Even if the MCP server and transport are secure, a permissive model or misconfigured policy could cause sensitive payment data to be included in downstream logs or in responses sent to other systems. Carriers must verify that their LLM environment supports strict data retention and output filtering controls, as well as provenance tagging, so that payment data never leaves the approved security boundary or is exposed to human-in-the-loop channels inadvertently.

3) Regulatory and contractual exposure

Insurance data often qualifies as nonpublic personal information under GLBA and similar frameworks; processing or sharing such data, even with an AI assistant, can trigger regulatory obligations about consent, purpose limitation, and safeguards. Contractors and service providers (including MCP server vendors) may be restricted in how they reuse or re-disclose data received from carriers. Effective MCP adoption will require contracts and technical controls that enforce use restrictions and provide auditable evidence of compliance.

4) Auditability and forensic readiness

One Inc’s messaging emphasizes auditable access, but carriers should demand proof: tamper-evident logs, immutable message IDs, and retention policies that meet both regulatory and internal investigation needs. Given the potential for complex multi-component flows (carrier LLM host → MCP client → MCP server → One Inc APIs), forensic reconstruction requires consistent correlation IDs and end-to-end logging practices that can withstand regulator or insurer legal discovery. (businesswire.com)

5) Vendor and supply-chain risk

MCP’s ecosystem model encourages multiple vendors to provide servers and clients. This increases the supply-chain surface area; carriers will need to validate vendor security posture, third-party software composition, and patching regimes. The history of patched vulnerabilities in some reference MCP server implementations demonstrates that even well-maintained projects can introduce risk if combined components interact in unforeseen ways.

Assessing One Inc’s promise: what they’ve delivered and where caution is warranted

One Inc’s positioning is credible in the sense that the company already operates at scale in insurance payments and has a suite of integration-focused products (PremiumPay and ClaimsPay). Its public materials claim the company handles approximately $120 billion in annual premiums and claims flows and serves several hundred carriers, figures that One Inc has cited in prior corporate materials and repeats in its releases. Those scale assertions bolster the claim that a secure MCP approach could have meaningful operational impact across many insurer customers. (businesswire.com)

However, several caveats matter:

- The press release is a product announcement, not a detailed technical whitepaper. Implementation details—transport choices, authentication schemes, token lifetimes, and audit log schema—are not fully specified in the announcement, and these are precisely the elements that determine whether an MCP deployment is secure in practice. Independent validation and technical due diligence will be essential. (businesswire.com)

- Security research on MCP is emerging rapidly. Multiple papers and incident reports surfaced in late 2025 and early 2026 documenting protocol-level weaknesses and exploitable flaws in reference implementations. One Inc’s claims that credential management remains under carrier control are reassuring, but carriers should still require proof-of-concept penetration tests and secure architecture reviews focused on prompt injection, server hardening, and mutual authentication.

- Operational governance is the linchpin. Even if the technical stack is properly secured, organizational policies—who can add MCP servers, how model outputs are logged and approved, how data retention is controlled—will determine whether carriers realize the benefits safely. Without strong process controls, MCP could increase speed at the expense of traceability.
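Since the announcement leaves the audit log schema unspecified, carriers may find it useful to have a concrete picture of what “tamper-evident logs” with consistent correlation IDs could look like. The following is a minimal sketch using a SHA-256 hash chain; the field names (`correlation_id`, `hop`, `action`) and the hop labels are hypothetical, and nothing here reflects One Inc’s actual logging design.

```python
import copy
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with a canonical form of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, correlation_id: str, hop: str, action: str) -> None:
    """Append a record that is cryptographically linked to the one before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {"correlation_id": correlation_id, "hop": hop, "action": action}
    log.append({"record": record, "prev_hash": prev_hash,
                "hash": entry_hash(prev_hash, record)})


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
for hop in ("llm_host", "mcp_client", "mcp_server", "payments_api"):
    append_entry(log, "corr-001", hop, "get_payment_events")
print(verify_chain(log))  # True

tampered = copy.deepcopy(log)
tampered[1]["record"]["action"] = "export_all"  # simulate an after-the-fact edit
print(verify_chain(tampered))  # False
```

A hash chain only makes tampering detectable, not impossible; in practice the latest hash would also need to be anchored somewhere the log writer cannot modify.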

Practical checklist for carriers evaluating One Inc’s MCP offering

Carriers should take a structured approach to evaluation. Below is a pragmatic checklist to guide procurement, security, and engineering teams.

- Require an architecture diagram that documents transport (STDIO, HTTP, SSE), authentication, and logging semantics for every MCP flow.

- Demand mutual authentication and capability attestation between MCP clients and servers; insist on signed messages and anti-replay protections. (This mitigates several protocol-level attack scenarios documented by researchers.)

- Validate deployment options: ensure the MCP server or proxy can run in the carrier’s network or VPC, with carrier-managed credentials and no backchannel to vendor-hosted models unless explicitly authorized. (businesswire.com)

- Perform adversarial testing: include prompt-injection scenarios, chained server interactions, and elasticity testing of the MCP server under load.

- Confirm logging and retention policies meet GLBA, FTC, and state insurance regulator expectations and provide end-to-end correlation IDs for forensic reconstruction.

- Validate PCI DSS scope: confirm whether cardholder data or PANs may touch MCP components and ensure QSA-level review if necessary.

- Contractual guarantees: include breach notification timelines, service-level security commitments, and vendor liability clauses for supply-chain vulnerabilities. (businesswire.com)
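As one illustration of the “signed messages and anti-replay protections” item in the checklist, the sketch below wraps a message in an envelope carrying a nonce, a timestamp, and an HMAC-SHA256 tag. This is an application-level pattern assumed for illustration, not a feature of the MCP specification or of One Inc’s product; it uses a shared secret key, whereas a production design might prefer asymmetric signatures, and the five-minute skew window is an arbitrary choice.

```python
import hashlib
import hmac
import json
import secrets
import time
from typing import Optional

MAX_SKEW_SECONDS = 300  # assumed acceptance window for message timestamps


def _mac(key: bytes, envelope: dict) -> str:
    """Compute an HMAC-SHA256 tag over the body, nonce, and timestamp."""
    signed = {k: envelope[k] for k in ("body", "nonce", "timestamp")}
    payload = json.dumps(signed, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def sign_message(key: bytes, body: dict, now: Optional[float] = None) -> dict:
    """Wrap a message body with a fresh nonce, a timestamp, and a signature."""
    envelope = {
        "body": body,
        "nonce": secrets.token_hex(16),
        "timestamp": int(now if now is not None else time.time()),
    }
    envelope["signature"] = _mac(key, envelope)
    return envelope


def verify_message(key: bytes, envelope: dict, seen_nonces: set,
                   now: Optional[float] = None) -> bool:
    """Accept a message only if it is authentic, recent, and not seen before."""
    current = now if now is not None else time.time()
    if not hmac.compare_digest(envelope.get("signature", ""), _mac(key, envelope)):
        return False  # forged or altered
    if abs(current - envelope["timestamp"]) > MAX_SKEW_SECONDS:
        return False  # too old or too far in the future
    if envelope["nonce"] in seen_nonces:
        return False  # replay of an already accepted message
    seen_nonces.add(envelope["nonce"])
    return True


key = secrets.token_bytes(32)
seen = set()
message = sign_message(key, {"method": "tools/call", "tool": "get_payment_events"})
print(verify_message(key, message, seen))  # True: first delivery
print(verify_message(key, message, seen))  # False: same nonce replayed
```

The nonce set would need to be bounded in a real service, for example by expiring entries older than the skew window.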

Where MCP could change the insurance payments landscape

If carriers adopt MCP with discipline, the impacts could be broad and positive:

- Faster modernization: projects that historically took quarters to integrate legacy policy, billing, and claims systems could move more quickly with standardized connectors and AI-assisted development tools.

- Better operational intelligence: near real-time AI-assisted reconciliation and exception triage across claims and premium flows could reduce float, improve cash management, and minimize payment errors.

- New product innovation: carriers could build context-aware policyholder experiences—automated payout explanations, proactive premium remediation, or personalized payment plans—without re-architecting back-end systems for each new capability.

- Ecosystem expansion: MCP’s vendor-agnostic model will enable more insurtechs and third-party tools to plug into insurer LLMs, accelerating innovation while keeping data governance at the enterprise boundary.

Conclusion: a measured optimism

One Inc’s MCP-enabled offering is a logical and timely step for a company built around modernizing the insurance payments stack. By enabling carriers to use their own LLM-hosted assistants to access payments data under their own security umbrellas, One Inc is aligning product design with the primary concerns of regulated enterprise buyers: speed of deployment, integration simplicity, and control over sensitive data. The program’s potential to reduce manual processes, speed reconciliation, and provide AI-driven business insights is real, particularly given One Inc’s scale in the insurance payments market. (businesswire.com)

That potential comes with clear responsibilities. Protocol-level security critiques and real-world vulnerabilities in early MCP server implementations underline the need for careful, auditable deployments. Carriers should demand mutual authentication, capability attestation, rigorous testing (including adversarial and prompt-injection scenarios), and contractual protections before opening production lanes. When those guardrails are in place, MCP can be a pragmatic bridge between entrenched insurance systems and the productive power of LLMs, accelerating digital payments modernization while keeping control where regulators and risk teams expect it.

In short: One Inc’s MCP play is promising and well-timed, but benefits will accrue only to organizations that pair the protocol’s agility with disciplined security, governance, and compliance practices. The next 12–18 months will be decisive: early adopters who get the balance right will likely capture efficiency gains and product innovations; those who skip rigorous controls risk creating new operational and regulatory headaches.

Source: Business Wire https://www.businesswire.com/news/h...yments-Integration-and-Secure-AI-Data-Access/