Microsoft has finally given administrators a supported way to remove the consumer Microsoft Copilot app from managed Windows 11 devices — but the escape hatch is tightly controlled, limited to Insider Preview builds, and intentionally designed as a one‑time, surgical cleanup rather than a fleet‑wide “kill switch.”

Background

Microsoft’s Copilot family now spans several layers: a consumer‑facing Microsoft Copilot app that appears on many Windows 11 installations, deep OS integrations (taskbar icon, Win+C or Copilot hardware keys, Explorer context menus), and the paid, tenant‑managed Microsoft 365 Copilot service. That proliferation has raised governance questions for IT teams who need deterministic control over what runs on managed endpoints.

The January Insider update that introduced the new uninstall path, Windows 11 Insider Preview Build 26220.7535 (delivered as KB5072046), is positioned as an administrative convenience for specific remediation scenarios rather than a retreat from integrating AI into the OS. Microsoft documented the Group Policy path and rollout details in the Windows Insider announcement.

What Microsoft shipped (the facts)

- Build and KB: Windows 11 Insider Preview Build 26220.7535, delivered as KB5072046.

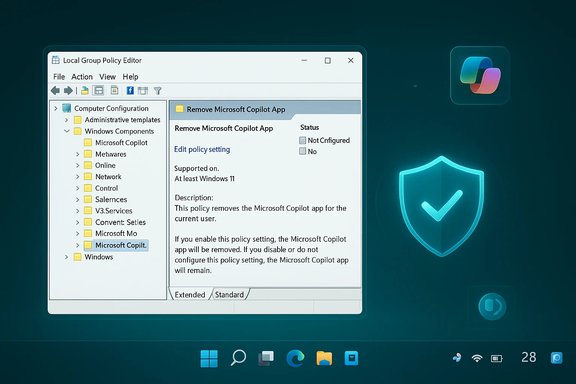

- New Group Policy name: RemoveMicrosoftCopilotApp.

- Where it appears in Group Policy Editor: User Configuration → Administrative Templates → Windows AI → Remove Microsoft Copilot App.

- Target SKUs and channel: the policy is visible on Windows 11 Pro, Enterprise, and Education SKUs in the Insider Dev & Beta channels (feature rollout is controlled).

How the RemoveMicrosoftCopilotApp policy actually works

Microsoft implemented the policy as a conditional, one‑time uninstall that only runs when a strict checklist of preconditions is satisfied. The policy’s conservative design aims to avoid inadvertently removing paid, tenant‑managed Copilot capabilities or surprising active users.

The policy will perform the uninstall only if all of the following are true for the targeted user/device:

- Both Microsoft 365 Copilot (the tenant‑managed paid offering) and the consumer Microsoft Copilot app are installed on the device. This guard prevents admins from removing the only Copilot experience a paid user relies on.

- The consumer Microsoft Copilot app was not installed by the user — it must be provisioned by OEM/tenant tooling or preinstalled in the image. User‑installed copies are explicitly excluded.

- The consumer Copilot app has not been launched in the last 28 days. Microsoft enforces this inactivity window as a safety gate to avoid removing a tool an active user depends on.
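The three gates above can be modeled as a simple predicate. The following is a minimal sketch of the documented conditions, not Microsoft's implementation: the `DeviceState` record and its field names are hypothetical, and treating a launch exactly 28 days ago as "inactive" is an assumption, since the boundary behavior is not documented.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

INACTIVITY_DAYS = 28  # inactivity window stated in the article


@dataclass
class DeviceState:
    """Hypothetical per-user/device record; field names are illustrative only."""
    has_m365_copilot: bool
    has_consumer_copilot: bool
    consumer_installed_by_user: bool
    consumer_last_launch: Optional[date]  # None means never launched


def failed_gates(state: DeviceState, today: date) -> List[str]:
    """Return the documented preconditions that are not met (empty list = eligible)."""
    failures = []
    if not (state.has_m365_copilot and state.has_consumer_copilot):
        failures.append("both apps must be installed")
    if state.consumer_installed_by_user:
        failures.append("consumer app must not be user-installed")
    if state.consumer_last_launch is not None:
        # Assumption: a launch exactly 28 days ago counts as outside the window.
        if today - state.consumer_last_launch < timedelta(days=INACTIVITY_DAYS):
            failures.append("consumer app launched within the last 28 days")
    return failures


if __name__ == "__main__":
    state = DeviceState(True, True, False, date(2026, 1, 1))
    print(failed_gates(state, date(2026, 1, 10)))  # still inside the window
    print(failed_gates(state, date(2026, 1, 29)))  # all gates satisfied
```

Returning the list of failed gates, rather than a single boolean, makes it easier to explain to a pilot group why a given device was skipped.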

Why Microsoft designed the policy this way

The design balances two competing priorities:

- Preserve Microsoft 365 Copilot for tenants that have purchased and rely on the paid service. Removing the consumer front end without that check could cripple productivity for paid customers.

- Provide a supported, auditable remediation path to clean up provisioned, unused installs — for classroom images, kiosks, or incorrectly provisioned devices. The one‑time uninstall and the 28‑day inactivity gate reduce the risk of surprising or harming active users.

Practical implications for IT: when this helps and when it doesn’t

Good use cases (where RemoveMicrosoftCopilotApp helps)

- Imaging cleanups: OEM or provisioning images that accidentally include the consumer Copilot app and need a supported removal path.

- Classrooms or kiosks that received provisioned installs but don’t use Copilot.

- Low‑touch endpoints where administrators want a supported, auditable one‑time removal rather than manual scripting on each device.

Where it falls short

- Fleet‑wide, permanent bans: the uninstall is one‑time and reversible; it does not prevent reinstallation or re‑provisioning.

- User‑installed Copilot: the policy intentionally ignores copies that users installed themselves. (itpro.com: Not keen on Microsoft Copilot? Don’t worry, your admins can now uninstall it – but only if you've not used it within 28 days)

- The 28‑day inactivity requirement can block immediate action in environments where Copilot launches automatically at sign‑in; admins may need to disable auto‑start or coordinate a user pause before the policy can act. (tomshardware.com: Admins finally get the power to uninstall Microsoft Copilot on Windows 11 Pro, Enterprise, and EDU versions)

The evidence (what I verified and where)

- Microsoft’s Windows Insider announcement lists Build 26220.7535 (KB5072046) and identifies the Group Policy path for RemoveMicrosoftCopilotApp. (blogs.windows.com: Announcing Windows 11 Insider Preview Build 26220.7535 (Dev & Beta Channels))

- Independent reporting from outlets including AllThingsHow, Tom’s Hardware and TechRadar reproduced the build number, the policy name, and the three gating conditions (user installation status, presence of M365 Copilot, and 28‑day inactivity).

- Community reporting also reproduced the one‑time uninstall semantics and recommended layered controls such as AppLocker/WDAC and tenant provisioning changes for durable enforcement.

Recommended admin playbook (practical, step‑by‑step)

This is a concise, actionable rollout plan for admins who want to evaluate and use RemoveMicrosoftCopilotApp safely.

- Build a lab and test: deploy Windows 11 Insider Preview Build 26220.7535 (KB5072046) to a small test ring and confirm the Group Policy appears at: User Configuration → Administrative Templates → Windows AI → Remove Microsoft Copilot App.

- Inventory: identify endpoints with both the consumer Microsoft Copilot app and Microsoft 365 Copilot installed; flag which installs were OEM/provisioned vs user‑installed. Use Intune app reports or AppxPackage queries to gather this.

- Reduce activity window: to allow the uninstall to trigger faster, consider disabling Copilot auto‑start for your pilot group (via startup settings or script) so the app can meet the 28‑day inactivity gate. Document the expected user impact. (allthings.how: Windows 11 build 26220.7535 brings Copilot image descriptions and admin Copilot controls)

- Apply policy in a pilot OU: enable RemoveMicrosoftCopilotApp via Group Policy or map the ADMX into Intune configuration profiles for a small set of managed users. Monitor event logs and MDM reports for the uninstall action.

- Verify post‑uninstall: confirm the Copilot front end is removed for the user account, test critical accessibility workflows, and validate that Microsoft 365 Copilot (tenant service) remains functional if required.

- Harden for durability: if your goal is to permanently block reinstall, add application control (AppLocker/WDAC) rules or Intune App Protection policies and remove Copilot from images. Reimaging or AppLocker are the only reliable long‑term methods.
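The inventory step in the playbook can be sketched as a small triage script. This is an illustration only: the CSV layout, the column names, and the device names are hypothetical and do not reflect a real Intune report schema, so adapt the parsing to whatever your inventory tool actually exports.

```python
import csv
import io
from collections import defaultdict

# Hypothetical inventory export; the column names are assumptions for this sketch.
SAMPLE_CSV = """device,consumer_copilot,m365_copilot,install_source
LAB-01,yes,yes,provisioned
LAB-02,yes,yes,user
KIOSK-07,yes,no,provisioned
HR-13,no,yes,
"""


def triage(csv_text):
    """Group devices by how the policy's documented gates would treat them."""
    buckets = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        has_consumer = row["consumer_copilot"] == "yes"
        has_m365 = row["m365_copilot"] == "yes"
        if not has_consumer:
            buckets["nothing_to_remove"].append(row["device"])
        elif row["install_source"] == "user":
            buckets["user_installed_excluded"].append(row["device"])
        elif not has_m365:
            buckets["missing_m365_copilot"].append(row["device"])
        else:
            buckets["pilot_candidate"].append(row["device"])
    return dict(buckets)


if __name__ == "__main__":
    for bucket, devices in triage(SAMPLE_CSV).items():
        print(f"{bucket}: {', '.join(devices)}")
```

Devices in the `pilot_candidate` bucket still have to pass the 28‑day inactivity gate, which this sketch does not evaluate.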

How to check and (if necessary) remove Copilot locally — safe options for power users and admins

Start with the safe, reversible options. If Windows exposes an Uninstall option, use that first.

- GUI (least risky):

- Settings → Apps → Installed apps (or Apps & features) → search “Copilot” → Uninstall (if enabled). This removes the front‑end without registry surgery and is reversible.

- PowerShell (power users — confirm names first):

- Open PowerShell as Administrator.

- List matching packages: Get-AppxPackage | Where-Object { $_.Name -like "*Copilot*" }

- If you confirm the package name, remove for the current user: Remove-AppxPackage -Package <PackageFullName>

- To similarly remove provisioned packages or packages for all users, use Remove-AppxProvisionedPackage or Get-AppxPackage -AllUsers with caution. Package names vary across builds (examples include Microsoft.Copilot or Microsoft.Windows.Copilot), so confirm the name on your build before removing anything.

- Group Policy / Registry (supported management):

- For general Copilot disabling (not the one‑time uninstall) you can use the supported Group Policy "Turn off Windows Copilot" which maps to the registry key:

- SOFTWARE\Policies\Microsoft\Windows\WindowsCopilot\TurnOffWindowsCopilot = 1

- Note: this is separate from RemoveMicrosoftCopilotApp and is used to turn off Copilot affordances rather than execute the targeted uninstall. Always back up registry and test.

- 28‑day inactivity: the exact implementation of the inactivity check (how the last launch is determined) is not exhaustively documented in public release notes. Validate in a lab and gather logs to confirm behavior for your provisioning scenario. Treat any precise claims about the internal last‑use heuristic with caution and verify them locally.

- Accessibility impact: Copilot powers new Narrator features (image descriptions and related accessibility advantages). Removing the front‑end may change accessibility behavior; test with assistive‑technology users before broad rollout.

- Reprovisioning risk: OS feature updates, image deployments, or tenant provisioning can reintroduce Copilot; make sure images are rebuilt or add AppLocker/Intune controls to prevent reinstallation. The one‑time uninstall will not stop later re‑provisioning.

- Telemetry and data artifacts: uninstalling the front‑end may not delete cloud‑stored artifacts, tenant telemetry, or service‑side data. Consult Microsoft’s Copilot privacy documentation and tenant agreements if data residency or retention is a compliance concern. This is outside the scope of the Group Policy uninstall.
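For pilot planning around the inactivity gate, it can help to estimate when a device first becomes eligible. The sketch below assumes the window is counted in whole calendar days from the last known launch; as noted above, the real heuristic is not publicly documented, so treat the result as a planning estimate rather than a guarantee.

```python
from datetime import date, timedelta

INACTIVITY_DAYS = 28  # window stated in the article


def earliest_eligible_date(last_launch: date) -> date:
    """Assumed first day on which the inactivity gate could be satisfied."""
    return last_launch + timedelta(days=INACTIVITY_DAYS)


def days_remaining(last_launch: date, today: date) -> int:
    """Days left before the assumed gate opens; 0 if it is already open."""
    return max(0, (earliest_eligible_date(last_launch) - today).days)


if __name__ == "__main__":
    last = date(2026, 1, 15)  # e.g. the day auto-start was disabled for the pilot
    print(earliest_eligible_date(last))
    print(days_remaining(last, date(2026, 2, 1)))
```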

Alternatives for durable enforcement

If the organizational requirement is “Copilot must not run or be reinstallable,” adopt a layered approach:

- AppLocker or Windows Defender Application Control (WDAC): block the Copilot package family or publisher signing across the estate. This is the most robust approach to stop execution and reinstallation.

- Image deprovisioning: remove the Copilot package from your base image and rebuild the image used for deployment. Prevent reappearance during provisioning.

- Tenant provisioning controls: disable tenant auto‑provisioning flows that push the consumer Copilot package as part of device setup or Intune policies. Validate your tenant provisioning blueprint.

A realistic admin checklist before broad deployment

- Pilot in a controlled ring using Windows 11 Insider Preview Build 26220.7535 (KB5072046). Confirm Group Policy presence and uninstall behavior.

- Test accessibility workflows and Microsoft 365 Copilot dependencies for users who must keep tenant‑managed Copilot.

- Validate how your MDM/Intune inventory reports presence and usage, and confirm a reliable way to detect “last launched” status for the 28‑day gate or rely on behavior changes (disable auto‑start).

- If permanence is required, plan AppLocker/WDAC rules and update images accordingly. Document and automate the process.

- Maintain a post‑update verification cadence: after each Windows feature update, re‑verify Copilot’s presence and enforcement posture. Reporter and community experience show that updates and provisioning can reintroduce components.
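The post‑update verification item can be partly automated by scanning an exported package list for Copilot names. This is a minimal sketch: the watch patterns are assumptions based on the example names mentioned in this article (Microsoft.Copilot, Microsoft.Windows.Copilot), and the input is assumed to be a plain list of package names collected by your own tooling.

```python
import fnmatch

# Assumed patterns, derived from the example package names cited in the article.
# Confirm the actual names on your build before relying on them.
WATCH_PATTERNS = ["Microsoft.Copilot*", "Microsoft.Windows.Copilot*"]


def find_copilot_packages(package_names):
    """Return the names that match any watched pattern (case-insensitive)."""
    hits = []
    for name in package_names:
        lowered = name.lower()
        if any(fnmatch.fnmatchcase(lowered, p.lower()) for p in WATCH_PATTERNS):
            hits.append(name)
    return hits


if __name__ == "__main__":
    # Made-up sample input standing in for an exported package list.
    exported = ["Microsoft.WindowsCalculator", "Microsoft.Copilot", "Microsoft.Paint"]
    found = find_copilot_packages(exported)
    print("Copilot packages present:", found if found else "none")
```

Running a check like this after each feature update gives an early signal that a component has been re‑provisioned, before users notice it.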

Final assessment

RemoveMicrosoftCopilotApp is a welcome, pragmatic tool for targeted cleanup: it gives IT administrators a supported, auditable way to remove provisioned, unused consumer Copilot installs from managed Windows 11 devices. But it is deliberately conservative: the one‑time uninstall, the 28‑day inactivity gate, and the exemption for user‑installed copies position it as a surgical remedy, not a universal enforcement mechanism. Organizations that need durable, fleet‑wide control should treat RemoveMicrosoftCopilotApp as one element in a layered governance model that includes AppLocker/WDAC, tenant provisioning controls, image hygiene, and an operational verification runbook. Test thoroughly before deployment, account for accessibility impacts, and plan for re‑provisioning risk after OS updates.

In short: yes, administrators can finally (and officially) uninstall the consumer Copilot app on managed devices, but it’s tricky, intentionally narrow, and should be used as part of a broader governance strategy rather than a stand‑alone fix.

Source: PCMag Australia https://au.pcmag.com/news/115401/you-can-finally-uninstall-microsofts-copilot-app-but-its-tricky