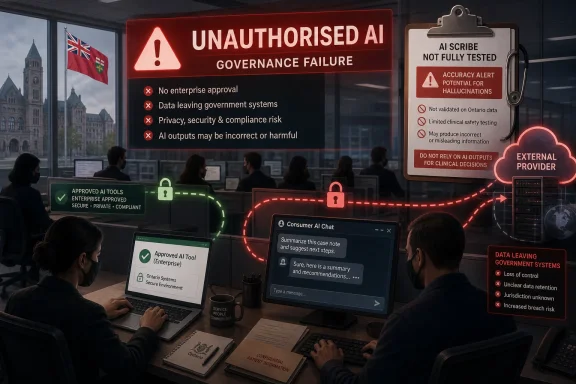

Ontario’s auditor general Shelley Spence released a special report on May 12, 2026, finding that Ontario public servants overwhelmingly used unapproved AI chatbots while provincially procured medical AI tools had not been tested with enough rigor. The answer to whether government should feed residents’ personal information into chatbots is therefore not a simple no, but it is emphatically not “go faster.” Ontario has stumbled into the central technology-policy fight of the decade: whether efficiency gains from generative AI can be trusted inside institutions that hold coercive power and intimate personal records. The early evidence suggests the province wants the benefits of AI before it has built the machinery of accountability that makes those benefits legitimate.

The most revealing detail in Spence’s report is not that Ontario has approved Microsoft Copilot for use by career civil servants. That part is predictable. Microsoft is already embedded in the public-sector desktop, it sells enterprise controls, and it knows how to speak procurement, compliance, and risk management in a language governments understand.

The problem is that approval did not translate into behavior. According to reporting on the auditor general’s findings, Copilot accounted for only about six per cent of Ontario Public Service generative AI use, while staff instead turned to more popular tools such as ChatGPT and Claude. That gap is the story: a formal policy existed, but the workplace culture and technical enforcement apparently did not.

For IT administrators, this is a familiar movie. A sanctioned tool is rolled out because it satisfies governance requirements, while employees quietly use the tool that feels faster, smarter, or more familiar. The result is shadow AI, the generative-era successor to shadow IT, except the files being pasted into the browser may include health records, legal material, procurement details, correspondence, and sensitive personal information.

Ontario’s failure, then, is not adoption. It is adopting AI as if the old compliance model still works. A directive, a training course, and an approved vendor do not equal control when a public servant can open another tab and paste the problem into a consumer-grade chatbot.

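The Chatbot Is Becoming the New Clipboard

The reason generative AI is so hard to govern is that it does not look like a traditional enterprise system. A case-management platform has permissions, audit logs, business rules, and a defined database. A chatbot looks like a text box.

That design is part of its power. It invites workers to treat it as a scratchpad, a search engine, a writing assistant, a translator, a summarizer, and a brainstorming partner. For a public servant facing a long policy memo, a backlog of correspondence, or a messy dataset, the temptation is obvious.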

But the same informality makes it dangerous. A chatbot prompt can become a data disclosure event. A request to “summarize this file” can transfer personal information to an external service. A request to “draft a response to this citizen” can expose names, addresses, benefits status, medical circumstances, or business information that was collected for a specific government purpose.

This is where public-sector AI differs from an employee using an LLM to polish a sales email. Citizens do not hand information to government as a voluntary productivity experiment. They provide it because the state requires it, because they need health care, because they are applying for benefits, because they are complying with regulation, or because they are trying to access a public service.

That power imbalance changes the ethics. If a private company mishandles data, customers may have some ability to leave. If a province mishandles data, residents are often trapped inside the relationship. They cannot opt out of the health system, the tax system, the licensing system, or the records government maintains about them.

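Copilot’s Weak Showing Exposes a Procurement Trap

There is an easy joke in the auditor’s finding that the one approved chatbot was barely used. Microsoft’s AI branding has been unavoidable across Windows, Edge, Office, and enterprise software, yet when Ontario workers apparently had a choice, most went elsewhere. Even in a Microsoft-heavy government environment, Copilot did not become the default by magic.

The serious lesson is that procurement can select for compliance while users select for performance. Governments tend to buy tools that satisfy legal, security, residency, indemnity, and management requirements. Workers gravitate toward whatever answers better, faster, or with less friction.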

That mismatch is not unique to Ontario. It is the structural problem facing every large organization that tries to contain generative AI with a single approved assistant. If the sanctioned tool feels weaker than public alternatives, employees will route around it unless access is technically blocked, monitoring is real, and the approved workflow is good enough to become habit.

Spence’s recommendation that non-compliant AI services be blocked from government computers lands precisely here. Blocking is not elegant, and it is not sufficient, but in a government environment it may be necessary. A policy that says “do not paste sensitive information into unapproved tools” is weaker than a network rule that makes the paste impossible in the first place.

Still, blocking cannot be the whole strategy. Employees who want to use AI may reach for personal devices, copy text into private accounts, or use unofficial workarounds. The province therefore needs both sides of the equation: better technical guardrails and a sanctioned AI environment that is capable enough to meet real workplace demand.

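Medical AI Raises the Stakes From Embarrassment to Harm

The health-care findings are more alarming because the consequences move from privacy risk to clinical risk. Ontario has been experimenting with AI scribe tools that listen during medical encounters and generate notes for clinicians. In theory, this is one of the most defensible uses of AI in the public sector.

Doctors are drowning in documentation. Administrative burden steals time from patients, contributes to burnout, and makes already strained health systems less humane. If AI can safely reduce that burden, patients and clinicians both benefit.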

But “if” carries a lot of weight. The auditor general reportedly found that AI scribe systems were not evaluated adequately before implementation, with concerns about inaccuracies, hallucinated content, missing documentation, and weak procurement scoring for core safety measures. That is not a minor paperwork defect. A medical note is not just an internal memo; it can guide future treatment, referrals, prescriptions, billing, legal records, and patient understanding.

The most troubling possibility is not that a chatbot produces an obviously absurd sentence. It is that it produces a plausible but wrong clinical note. A hallucinated symptom, omitted caveat, invented treatment plan, or inaccurate summary of what a patient said may pass unnoticed in a busy practice unless there is disciplined human review.

AI scribes can still be worth using. But they belong in the category of tools that must be validated, monitored, logged, and clearly subordinated to professional judgment. If a doctor saves five to seven hours a week but the system occasionally fabricates medically relevant information, the province has not found a productivity hack. It has created a new class of latent error.

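The Province Is Learning That AI Governance Is Operations, Not Slogans

Ontario already has a responsible AI framework and a directive that says the right things about transparency, accountability, risk management, and protecting people and data. That matters, but only up to a point. Frameworks are the brochures of governance; operations are the truth.

A mature public-sector AI program has to answer mundane questions before it reaches grand ones. Who can use which tool? What categories of data are prohibited? Are prompts and outputs logged? Who reviews exceptions? How are vendors evaluated? What happens when a tool changes its model, retention policy, or hosting architecture? How are hallucinations reported? What is the appeal process when AI contributes to a decision affecting a resident?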

These questions sound bureaucratic because they are bureaucratic. But bureaucracy is not the enemy here. It is the mechanism by which democratic institutions slow down powerful systems enough to inspect them.

The auditor general’s report suggests Ontario has not yet built that mechanism at the speed AI adoption requires. That does not mean the province should ban AI. It means it should stop treating AI as a software feature and start treating it as infrastructure that can alter how public power is exercised.

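Digital Sovereignty Has Stopped Being a Conference Phrase

The Ontario case also lands inside a broader Canadian anxiety about digital sovereignty. For years, that phrase could sound abstract, the kind of thing invoked in policy panels and procurement debates. Generative AI has made it concrete.

If a provincial government uses a U.S.-based AI provider to process sensitive public-sector information, the question is not only where the data centre sits. It is who owns the system, which laws apply, what foreign governments may compel, how support personnel are managed, how telemetry flows, and whether contractual promises survive legal demands outside Canada.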

This is not a call for technological nationalism as a reflex. Canada cannot simply wish into existence a domestic hyperscale cloud industry, a full-stack AI ecosystem, and competitive foundation models overnight. Nor would every Canadian-branded tool automatically be safer or better.

But governments need to be honest about dependency. Public services now rely on a small group of global technology firms whose incentives, legal obligations, and strategic priorities do not perfectly align with Canadian democratic accountability. In the AI era, that dependency becomes more sensitive because the tool is not merely storing documents. It may be summarizing them, classifying them, generating recommendations from them, and influencing the pace and content of government work.

Digital sovereignty, in practical terms, means knowing which dependencies are acceptable, which require mitigation, and which should be avoided for high-risk data. It means procurement rules that understand model behavior, not just data residency. It means privacy law that can deal with inference, training, retention, and automated decision support, not only database breaches.

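Citizens Should Not Have to Trust a Prompt Box

The core democratic issue is consent. When a resident gives personal information to a provincial ministry, hospital, school board, or agency, that information is collected under a legal and administrative purpose. AI threatens to blur those boundaries by making secondary use easy.

A government file created for one purpose can suddenly become input for another. A health note can become training-adjacent data. A benefits application can become a summarization task. A complaint can become a prompt in a chatbot used to draft a response. Each step may feel minor to the worker trying to get a job done, but collectively they can transform the relationship between citizen and state.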

The seductive argument for broad AI use is that government could become more proactive. An AI system could identify residents eligible for benefits they never claimed, flag service gaps, summarize complex rules, translate forms, and reduce the burden on people who currently have to navigate a maze of disconnected programs. That is a real public good.

The dystopian version uses the same technical ingredients. A government that can connect and analyze everything can also overreach, profile, surveil, misclassify, and deny services at scale. The difference is not the model. The difference is law, institutional design, oversight, and restraint.

This is why “efficiency” is too small a word for the debate. Government inefficiency is frustrating, expensive, and sometimes cruel. But some inefficiency is also a privacy architecture. Data silos, access limits, and procedural friction can protect citizens from the all-seeing administrative machine that technologists are forever tempted to build.

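Public Servants Are Not the Villains of Shadow AI

It would be convenient to frame Ontario’s findings as a story of careless employees ignoring policy. That would also miss the point. Public servants are operating inside the same AI hype cycle as everyone else, with added pressure to do more with less and serve people faster.

If workers are using ChatGPT or Claude instead of the approved tool, some are undoubtedly making poor judgments. But widespread non-compliance often indicates a system design failure. Either the rules were not clear, the tools were not blocked, the approved option was not good enough, the training did not stick, or managers sent mixed signals about productivity and risk.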

The private sector has already learned this lesson. Employees do not adopt shadow tools only because they are reckless. They adopt them because official systems are slow, clumsy, unavailable, or misaligned with the work. The fix is not just discipline; it is better product design and governance that acknowledges actual behavior.

Ontario should assume that AI demand inside the public service will grow, not shrink. The right response is to build a safe path that is easier than the unsafe path. That means integrated approved tools, clear data-classification rules, visible warnings, prompt filtering, logging where appropriate, and meaningful consequences for deliberate misuse.
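To make "prompt filtering" concrete, here is a minimal sketch of a check that could run before a prompt leaves a managed device. The pattern names, the regular expressions, and the `check_prompt` function are illustrative assumptions, not anything described in the auditor general's report; a real deployment would lean on the organization's own data-classification rules and a vetted data-loss-prevention product rather than two regexes.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only. Real filters would be driven by
# the organization's data-classification policy, not a short hard-coded list.
SENSITIVE_PATTERNS = {
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "phone_number": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


@dataclass
class FilterResult:
    allowed: bool        # True when no sensitive pattern was found
    findings: list[str]  # names of the patterns that matched


def check_prompt(prompt: str) -> FilterResult:
    """Flag a prompt that appears to contain personal identifiers."""
    findings = [
        name for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    ]
    return FilterResult(allowed=not findings, findings=findings)


if __name__ == "__main__":
    print(check_prompt("Summarize the attached public consultation notice."))
    print(check_prompt("Draft a reply to jane.doe@example.com about her file."))
```

A filter like this catches only the most obvious identifiers, which is why the text pairs it with classification rules, warnings, and logging rather than treating it as sufficient on its own.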

It also means giving employees examples rather than abstractions. “Do not enter sensitive information” is weaker than showing a caseworker exactly what can and cannot be pasted into an AI system. “Use Copilot” is weaker than demonstrating how to summarize a non-sensitive policy document, draft a generic public notice, or compare internal guidelines without exposing personal data.

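The AI Scribe Lesson Should Reshape Procurement

The medical AI findings point to a procurement problem that goes beyond health care. Governments often buy technology through scoring systems that try to turn complex risk into weighted criteria. That can work for office furniture. It is much harder for probabilistic software that may behave differently across accents, medical specialties, languages, noise conditions, and clinical scenarios.

If an AI scribe can score well despite weak evaluation of accuracy, bias, or security, the procurement process is rewarding the wrong things. Vendor viability and local presence may matter, but they cannot outrank safety in a clinical context. A tool that is domestically convenient but clinically unreliable is not a responsible public-sector choice.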

AI procurement should be staged. Vendors should first meet non-negotiable thresholds for privacy, security, accuracy testing, auditability, and incident reporting. Only after that should governments compare cost, usability, implementation speed, and economic-development benefits.
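The staged approach can be sketched as a two-step evaluation: a pass/fail gate, then a weighted comparison among the survivors only. The threshold names follow the paragraph above, but treating each as a simple flag, the `Bid` structure, and the stage-two weights are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Stage one: thresholds named in the text. Modelling each as a pass/fail flag
# is an assumption; real evaluations would involve testing and evidence.
REQUIRED_THRESHOLDS = (
    "privacy", "security", "accuracy_testing", "auditability", "incident_reporting",
)

# Stage two: hypothetical weights, chosen only to make the example concrete.
STAGE_TWO_WEIGHTS = {
    "cost": 0.4,
    "usability": 0.3,
    "implementation_speed": 0.2,
    "economic_development": 0.1,
}


@dataclass
class Bid:
    vendor: str
    thresholds_met: set[str]
    scores: dict[str, float]  # stage-two scores, each between 0 and 1


def passes_stage_one(bid: Bid) -> bool:
    """A bid is eligible only if every non-negotiable threshold is met."""
    return all(t in bid.thresholds_met for t in REQUIRED_THRESHOLDS)


def rank_bids(bids: list[Bid]) -> list[str]:
    """Drop ineligible bids, then rank the rest by weighted stage-two score."""
    def weighted(bid: Bid) -> float:
        return sum(w * bid.scores.get(k, 0.0) for k, w in STAGE_TWO_WEIGHTS.items())

    eligible = [b for b in bids if passes_stage_one(b)]
    return [b.vendor for b in sorted(eligible, key=weighted, reverse=True)]
```

The design point is that a vendor with a perfect stage-two score never appears in the ranking if it misses a single safety threshold, so cost or convenience cannot buy back a failed accuracy test.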

The province should also avoid treating vendor claims as evidence. AI vendors have strong incentives to market speed, savings, and clinician satisfaction. Government buyers need independent testing, adversarial evaluation, and post-deployment monitoring. A model that performs acceptably in a demo may fail in the messy acoustic and linguistic reality of a family-practice office.

There is also a documentation burden. If a clinician must review and sign off on AI-generated notes, the system should make that responsibility explicit and track it. If the note was generated from a transcript, the transcript retention policy should be clear. If the AI output is edited, the audit trail should preserve what changed. Health care already lives with medico-legal complexity; AI should not add ambiguity disguised as automation.
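One way to picture an audit trail that "preserves what changed" is to store a diff between the AI draft and the note the clinician signs. The sketch below uses Python's standard `difflib`; the `NoteRevision` record and `record_review` function are hypothetical names, and a real clinical system would add identity verification, tamper-resistant storage, and retention rules that this example ignores.

```python
import difflib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class NoteRevision:
    reviewer: str      # clinician responsible for the final note
    signed_off: bool   # explicit sign-off rather than silent acceptance
    timestamp: str     # UTC time of the review, ISO 8601
    diff: list[str]    # unified diff from AI draft to final note


def record_review(ai_draft: str, final_note: str,
                  reviewer: str, signed_off: bool) -> NoteRevision:
    """Capture who reviewed an AI-generated note and exactly what they changed."""
    diff = list(difflib.unified_diff(
        ai_draft.splitlines(),
        final_note.splitlines(),
        fromfile="ai_draft",
        tofile="clinician_final",
        lineterm="",
    ))
    return NoteRevision(
        reviewer=reviewer,
        signed_off=signed_off,
        timestamp=datetime.now(timezone.utc).isoformat(),
        diff=diff,
    )
```

An empty `diff` with `signed_off=True` is itself informative: it records that the clinician accepted the draft unchanged, which is the case where a plausible but wrong note is most likely to slip through.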

The case for Microsoft is straightforward. It offers enterprise administration, identity integration, contractual controls, compliance documentation, and a procurement channel governments already understand. For a chief information officer, that matters. A chatbot is not just a chatbot when deployed across tens of thousands of workers; it is an identity, logging, data-protection, and support problem.

The case against overreliance is equally clear. If the only approved AI path is one vendor’s ecosystem, government bargaining power weakens. Product limitations become policy limitations. A province may end up shaping its AI governance around what a hyperscaler is willing to sell rather than what democratic institutions should require.

This tension is not solved by swapping Microsoft for OpenAI, Anthropic, Google, or a fashionable open-source stack. It is solved by writing requirements that are portable across vendors: data minimization, Canadian legal accountability where necessary, logging, deletion rights, model-change notification, independent audits, and clear restrictions on training or secondary use.

In other words, Ontario should not be asking which chatbot deserves public-sector trust in the abstract. It should be defining the conditions under which any AI system is allowed near public data.

The danger is not that LLMs are conscious, magical, or omniscient. The danger is that they are useful enough to be adopted and unreliable enough to require discipline. That combination is more operationally dangerous than science fiction because it encourages partial trust.

People learn quickly that AI tools can produce good drafts, summarize long documents, and speed up tedious tasks. They then become tempted to extend that trust into contexts where the cost of error is higher. The model’s fluency masks its uncertainty, and its convenience masks the data flows behind it.

This is why public institutions need a vocabulary beyond hype and dismissal. AI is neither an alien superintelligence nor a harmless autocomplete gadget. It is a general-purpose statistical interface that can create value, leak data, reinforce errors, and reshape administrative work. That is enough to demand governance.

The Windows administrator’s job is becoming partly an AI governance job. Tenant settings, browser controls, endpoint restrictions, data-loss prevention, sensitivity labels, conditional access, and logging policies now sit at the front line of public accountability. A prompt window may look like user productivity, but it can become an exfiltration path.

That shift will be especially uncomfortable for organizations that separated policy from operations. Privacy officers can write principles, legal teams can review contracts, and executives can announce responsible innovation. But if endpoint policy allows access to unapproved tools and workers are under pressure to move faster, the real policy is whatever the workstation permits.

Ontario’s experience also undercuts the idea that training alone is enough. Training matters, but it decays under workload pressure. Technical controls do not replace judgment, but they prevent predictable mistakes from becoming systemic failures.

The more mature posture is layered. Block the riskiest consumer tools on managed devices. Provide an approved AI option that is actually useful. Use sensitivity labels to prevent protected content from flowing into prompts. Monitor aggregate usage patterns. Require explicit review for high-risk use cases. Keep humans accountable for decisions and records.

That sounds like a lawyer’s answer, but it is also the only answer compatible with democratic government. There are contexts where AI use may be benign or beneficial. Summarizing a public consultation document is not the same as analyzing a child-protection file. Drafting a generic service notice is not the same as generating a clinical record. Translating public-facing information is not the same as profiling residents for eligibility or enforcement.

The public-sector AI debate therefore has to become more granular. Blanket enthusiasm is reckless. Blanket prohibition is unrealistic and may deny citizens better services. The hard work is classification: low-risk, medium-risk, high-risk, and prohibited uses, each with controls that match the stakes.
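The classification idea can be expressed as a small lookup from use case to tier to required controls. The use cases below echo examples from the article, but the tier assigned to each, the control lists, and the `evaluate` function are assumptions for illustration, not an official Ontario scheme.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    PROHIBITED = "prohibited"


# Use cases echo the article's examples; the tier given to each one is an
# assumption made for illustration.
USE_CASE_TIERS = {
    "summarize_public_consultation": RiskTier.LOW,
    "draft_generic_service_notice": RiskTier.LOW,
    "assist_with_code_under_review": RiskTier.MEDIUM,
    "generate_clinical_record": RiskTier.HIGH,
    "analyze_child_protection_file": RiskTier.HIGH,
    "consumer_chatbot_with_personal_records": RiskTier.PROHIBITED,
}

# Hypothetical controls per tier; each tier adds to the one below it.
TIER_CONTROLS = {
    RiskTier.LOW: ["approved tool only"],
    RiskTier.MEDIUM: ["approved tool only", "usage logging"],
    RiskTier.HIGH: ["approved tool only", "usage logging", "explicit human review"],
    RiskTier.PROHIBITED: [],
}


def evaluate(use_case: str) -> tuple[RiskTier, bool, list[str]]:
    """Return (tier, allowed, required controls) for a proposed use case.

    Unknown use cases default to HIGH so that new ideas get reviewed
    instead of slipping through unclassified.
    """
    tier = USE_CASE_TIERS.get(use_case, RiskTier.HIGH)
    allowed = tier is not RiskTier.PROHIBITED
    return tier, allowed, TIER_CONTROLS[tier]
```

Defaulting unlisted use cases to the high-risk tier is a deliberate choice in this sketch: it makes the safe path the default and forces an explicit decision before anything new is treated as low risk.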

Ontario’s early missteps are not fatal, but they are clarifying. They show that governments cannot rely on vendor assurances, employee discretion, or aspirational frameworks. They need enforceable rules and technical systems that make those rules real.

Ontario should narrow its AI ambitions in the short term. The province does not need every ministry experimenting with every model. It needs a controlled set of use cases where benefits are measurable, risks are bounded, and oversight is real.

That might include summarizing non-sensitive internal documents, helping staff find public policy material, translating general public information, assisting with code under strict review, and reducing clinical paperwork where tools meet hard validation standards. It should not include unmonitored use of consumer chatbots with personal records, automated decision-making without appeal, or medical documentation systems whose accuracy has not been independently stress-tested.

The state also needs more technical capacity of its own. Outsourcing every hard question to vendors leaves government dependent on the companies it is supposed to regulate, audit, and bargain with. Public agencies need people who understand model evaluation, cybersecurity, privacy engineering, cloud architecture, and procurement incentives.

This is not glamorous work. It will not produce a keynote demo. But it is what separates responsible modernization from a scandal waiting for a headline.

Source: TVO ANALYSIS: Should the province feed your personal information to an AI chatbot? | TVO Today

Ontario’s AI Problem Is Not That It Tried, but That It Pretended Control Was Enough

The Chatbot Is Becoming the New Clipboard

The reason generative AI is so hard to govern is that it does not look like a traditional enterprise system. A case-management platform has permissions, audit logs, business rules, and a defined database. A chatbot looks like a text box.

That design is part of its power. It invites workers to treat it as a scratchpad, a search engine, a writing assistant, a translator, a summarizer, and a brainstorming partner. For a public servant facing a long policy memo, a backlog of correspondence, or a messy dataset, the temptation is obvious.

Copilot’s Weak Showing Exposes a Procurement Trap

There is an easy joke in the auditor’s finding that the one approved chatbot was barely used. Microsoft’s AI branding has been unavoidable across Windows, Edge, Office, and enterprise software, yet when Ontario workers apparently had a choice, most went elsewhere. Even in a Microsoft-heavy government environment, Copilot did not become the default by magic.

The serious lesson is that procurement can select for compliance while users select for performance. Governments tend to buy tools that satisfy legal, security, residency, indemnity, and management requirements. Workers gravitate toward whatever answers better, faster, or with less friction.

Medical AI Raises the Stakes From Embarrassment to Harm

The health-care findings are more alarming because the consequences move from privacy risk to clinical risk. Ontario has been experimenting with AI scribe tools that listen during medical encounters and generate notes for clinicians. In theory, this is one of the most defensible uses of AI in the public sector.

Doctors are drowning in documentation. Administrative burden steals time from patients, contributes to burnout, and makes already strained health systems less humane. If AI can safely reduce that burden, patients and clinicians both benefit.

The Province Is Learning That AI Governance Is Operations, Not Slogans

Ontario already has a responsible AI framework and a directive that says the right things about transparency, accountability, risk management, and protecting people and data. That matters, but only up to a point. Frameworks are the brochures of governance; operations are the truth.

A mature public-sector AI program has to answer mundane questions before it reaches grand ones. Who can use which tool? What categories of data are prohibited? Are prompts and outputs logged? Who reviews exceptions? How are vendors evaluated? What happens when a tool changes its model, retention policy, or hosting architecture? How are hallucinations reported? What is the appeal process when AI contributes to a decision affecting a resident?

Digital Sovereignty Has Stopped Being a Conference Phrase

The Ontario case also lands inside a broader Canadian anxiety about digital sovereignty. For years, that phrase could sound abstract, the kind of thing invoked in policy panels and procurement debates. Generative AI has made it concrete.

If a provincial government uses a U.S.-based AI provider to process sensitive public-sector information, the question is not only where the data centre sits. It is who owns the system, which laws apply, what foreign governments may compel, how support personnel are managed, how telemetry flows, and whether contractual promises survive legal demands outside Canada.

Citizens Should Not Have to Trust a Prompt Box

The core democratic issue is consent. When a resident gives personal information to a provincial ministry, hospital, school board, or agency, that information is collected under a legal and administrative purpose. AI threatens to blur those boundaries by making secondary use easy.

A government file created for one purpose can suddenly become input for another. A health note can become training-adjacent data. A benefits application can become a summarization task. A complaint can become a prompt in a chatbot used to draft a response. Each step may feel minor to the worker trying to get a job done, but collectively they can transform the relationship between citizen and state.

Public Servants Are Not the Villains of Shadow AI

It would be convenient to frame Ontario’s findings as a story of careless employees ignoring policy. That would also miss the point. Public servants are operating inside the same AI hype cycle as everyone else, with added pressure to do more with less and serve people faster.

If workers are using ChatGPT or Claude instead of the approved tool, some are undoubtedly making poor judgments. But widespread non-compliance often indicates a system design failure. Either the rules were not clear, the tools were not blocked, the approved option was not good enough, the training did not stick, or managers sent mixed signals about productivity and risk.

The private sector has already learned this lesson. Employees do not adopt shadow tools only because they are reckless. They adopt them because official systems are slow, clumsy, unavailable, or misaligned with the work. The fix is not just discipline; it is better product design and governance that acknowledges actual behavior.

Ontario should assume that AI demand inside the public service will grow, not shrink. The right response is to build a safe path that is easier than the unsafe path. That means integrated approved tools, clear data-classification rules, visible warnings, prompt filtering, logging where appropriate, and meaningful consequences for deliberate misuse.

It also means giving employees examples rather than abstractions. “Do not enter sensitive information” is weaker than showing a caseworker exactly what can and cannot be pasted into an AI system. “Use Copilot” is weaker than demonstrating how to summarize a non-sensitive policy document, draft a generic public notice, or compare internal guidelines without exposing personal data.
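One way to turn "visible warnings" and "prompt filtering" into something concrete is a pre-submission check that explains why a prompt was stopped. The sketch below is a minimal illustration, not a description of any Ontario or Microsoft control: the function name `screen_prompt`, the `ScreeningResult` type, and the two patterns are hypothetical, and a real deployment would draw on the organization's own data-classification rules rather than a short regex list.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only (assumption): real rules would come from the
# organization's data-classification policy, not from this short list.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "nine-digit identifier": re.compile(r"\b\d{3}[- ]?\d{3}[- ]?\d{3}\b"),
}


@dataclass
class ScreeningResult:
    allowed: bool
    reasons: list[str]  # human-readable labels shown to the employee


def screen_prompt(text: str) -> ScreeningResult:
    """Flag a prompt if it matches any illustrative sensitive pattern."""
    reasons = [
        label
        for label, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    ]
    return ScreeningResult(allowed=not reasons, reasons=reasons)


if __name__ == "__main__":
    examples = [
        "Summarize the attached public consultation notice in plain language.",
        "Client jane.doe@example.com, ID 123-456-789, missed her appointment.",
    ]
    for prompt in examples:
        result = screen_prompt(prompt)
        status = "OK to send" if result.allowed else "Blocked"
        print(f"{status}: {', '.join(result.reasons) or 'no sensitive patterns found'}")
```

Pattern matching of this kind catches only the obvious cases. Its main value is the one the section argues for: it shows the employee a specific example of what cannot be pasted, at the moment they try to paste it.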

The AI Scribe Lesson Should Reshape Procurement

The medical AI findings point to a procurement problem that goes beyond health care. Governments often buy technology through scoring systems that try to turn complex risk into weighted criteria. That can work for office furniture. It is much harder for probabilistic software that may behave differently across accents, medical specialties, languages, noise conditions, and clinical scenarios.

If an AI scribe can score well despite weak evaluation of accuracy, bias, or security, the procurement process is rewarding the wrong things. Vendor viability and local presence may matter, but they cannot outrank safety in a clinical context. A tool that is domestically convenient but clinically unreliable is not a responsible public-sector choice.

AI procurement should be staged. Vendors should first meet non-negotiable thresholds for privacy, security, accuracy testing, auditability, and incident reporting. Only after that should governments compare cost, usability, implementation speed, and economic-development benefits.
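The difference between a gate and a weighted criterion is easy to blur in a scoring spreadsheet, so a small sketch may help. It assumes a two-stage evaluation: a bid that fails any gate is excluded before scoring, no matter how well it would have scored. The gate names and second-stage criteria come from the paragraph above; the `Bid` type, the weights, and the 0 to 100 scale are hypothetical choices made for illustration.

```python
from dataclasses import dataclass

# Stage 1: non-negotiable thresholds named in the text.
GATES = ("privacy", "security", "accuracy_testing", "auditability", "incident_reporting")

# Stage 2: hypothetical weights (assumption) for the comparison criteria.
WEIGHTS = {
    "cost": 0.4,
    "usability": 0.3,
    "implementation_speed": 0.2,
    "economic_development": 0.1,
}


@dataclass
class Bid:
    vendor: str
    gates_passed: dict[str, bool]
    scores: dict[str, float]  # each criterion scored 0-100 (assumed scale)


def failed_gates(bid: Bid) -> list[str]:
    """Return every gate the bid did not pass; a missing entry counts as a fail."""
    return [gate for gate in GATES if not bid.gates_passed.get(gate, False)]


def weighted_score(bid: Bid) -> float:
    """Weighted sum over the second-stage criteria only."""
    return sum(weight * bid.scores.get(name, 0.0) for name, weight in WEIGHTS.items())


def rank_bids(bids: list[Bid]) -> list[Bid]:
    """Drop any bid that fails a gate, then rank the rest by weighted score."""
    eligible = [bid for bid in bids if not failed_gates(bid)]
    return sorted(eligible, key=weighted_score, reverse=True)


if __name__ == "__main__":
    all_pass = {gate: True for gate in GATES}
    bids = [
        Bid("Vendor A", all_pass, {name: 60 for name in WEIGHTS}),
        # Top marks on every scored criterion, but no accuracy testing.
        Bid("Vendor B", {**all_pass, "accuracy_testing": False}, {name: 100 for name in WEIGHTS}),
    ]
    print([bid.vendor for bid in rank_bids(bids)])
```

The design choice is that gate results never enter the weighted sum. A vendor cannot buy back a failed accuracy or security threshold with a lower price or a local office.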

The province should also avoid treating vendor claims as evidence. AI vendors have strong incentives to market speed, savings, and clinician satisfaction. Government buyers need independent testing, adversarial evaluation, and post-deployment monitoring. A model that performs acceptably in a demo may fail in the messy acoustic and linguistic reality of a family-practice office.

There is also a documentation burden. If a clinician must review and sign off on AI-generated notes, the system should make that responsibility explicit and track it. If the note was generated from a transcript, the transcript retention policy should be clear. If the AI output is edited, the audit trail should preserve what changed. Health care already lives with medico-legal complexity; AI should not add ambiguity disguised as automation.

Microsoft’s Role Is Both Practical and Politically Awkward

Microsoft sits at the centre of this story because it is the default enterprise technology supplier for much of government. That makes Copilot the easiest approved answer for many public-sector organizations. It also makes Microsoft a lightning rod for anxieties about concentration, sovereignty, and vendor lock-in.

The case for Microsoft is straightforward. It offers enterprise administration, identity integration, contractual controls, compliance documentation, and a procurement channel governments already understand. For a chief information officer, that matters. A chatbot is not just a chatbot when deployed across tens of thousands of workers; it is an identity, logging, data-protection, and support problem.

The case against overreliance is equally clear. If the only approved AI path is one vendor’s ecosystem, government bargaining power weakens. Product limitations become policy limitations. A province may end up shaping its AI governance around what a hyperscaler is willing to sell rather than what democratic institutions should require.

This tension is not solved by swapping Microsoft for OpenAI, Anthropic, Google, or a fashionable open-source stack. It is solved by writing requirements that are portable across vendors: data minimization, Canadian legal accountability where necessary, logging, deletion rights, model-change notification, independent audits, and clear restrictions on training or secondary use.

In other words, Ontario should not be asking which chatbot deserves public-sector trust in the abstract. It should be defining the conditions under which any AI system is allowed near public data.

The “Fancy Autocomplete” Dismissal Is No Longer Enough

Skeptics often describe large language models as fancy autocomplete, and in a technical sense the phrase captures something true about probabilistic text generation. But as a policy argument, it is increasingly inadequate. Fancy autocomplete that writes medical notes, summarizes benefit files, drafts government correspondence, and influences public servants is no longer merely a toy.

The danger is not that LLMs are conscious, magical, or omniscient. The danger is that they are useful enough to be adopted and unreliable enough to require discipline. That combination is more operationally dangerous than science fiction because it encourages partial trust.

People learn quickly that AI tools can produce good drafts, summarize long documents, and speed up tedious tasks. They then become tempted to extend that trust into contexts where the cost of error is higher. The model’s fluency masks its uncertainty, and its convenience masks the data flows behind it.

This is why public institutions need a vocabulary beyond hype and dismissal. AI is neither an alien superintelligence nor a harmless autocomplete gadget. It is a general-purpose statistical interface that can create value, leak data, reinforce errors, and reshape administrative work. That is enough to demand governance.

Windows Shops Should Treat Ontario as a Preview, Not an Outlier

For WindowsForum readers, the Ontario report should feel less like a provincial political story and more like a preview of every Microsoft 365 environment over the next few years. Copilot and its rivals are moving into the tools workers already use: email, documents, meetings, browsers, endpoint management, and line-of-business workflows. The question is not whether employees will use AI. They already are.

The Windows administrator’s job is becoming partly an AI governance job. Tenant settings, browser controls, endpoint restrictions, data-loss prevention, sensitivity labels, conditional access, and logging policies now sit at the front line of public accountability. A prompt window may look like user productivity, but it can become an exfiltration path.

That shift will be especially uncomfortable for organizations that separated policy from operations. Privacy officers can write principles, legal teams can review contracts, and executives can announce responsible innovation. But if endpoint policy allows access to unapproved tools and workers are under pressure to move faster, the real policy is whatever the workstation permits.

Ontario’s experience also undercuts the idea that training alone is enough. Training matters, but it decays under workload pressure. Technical controls do not replace judgment, but they prevent predictable mistakes from becoming systemic failures.

The more mature posture is layered. Block the riskiest consumer tools on managed devices. Provide an approved AI option that is actually useful. Use sensitivity labels to prevent protected content from flowing into prompts. Monitor aggregate usage patterns. Require explicit review for high-risk use cases. Keep humans accountable for decisions and records.

The Real Debate Is About Administrative Power

The question posed by the source article (should the province feed your personal information to an AI chatbot?) is powerful because it strips away the abstraction. The answer depends on what information, which chatbot, for what purpose, under which law, with what consent, with what oversight, and with what consequences if something goes wrong.

That sounds like a lawyer’s answer, but it is also the only answer compatible with democratic government. There are contexts where AI use may be benign or beneficial. Summarizing a public consultation document is not the same as analyzing a child-protection file. Drafting a generic service notice is not the same as generating a clinical record. Translating public-facing information is not the same as profiling residents for eligibility or enforcement.

The public-sector AI debate therefore has to become more granular. Blanket enthusiasm is reckless. Blanket prohibition is unrealistic and may deny citizens better services. The hard work is classification: low-risk, medium-risk, high-risk, and prohibited uses, each with controls that match the stakes.
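The tiering described above can be sketched as a small classifier, if only to show that the questions involved are answerable ones. Everything specific here is an assumption made for illustration: the three attributes on `UseCase`, the rules in `classify`, and the controls attached to each tier are hypothetical and do not reflect any actual Ontario framework.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    PROHIBITED = "prohibited"


# Hypothetical controls per tier (assumption); real ones would be set by policy.
CONTROLS = {
    RiskTier.LOW: ["approved tool only"],
    RiskTier.MEDIUM: ["approved tool only", "usage logging"],
    RiskTier.HIGH: ["approved tool only", "usage logging", "explicit human review"],
    RiskTier.PROHIBITED: [],  # nothing to apply: the use is not permitted
}


@dataclass
class UseCase:
    description: str
    uses_personal_data: bool
    affects_individuals: bool
    automated_decision_without_appeal: bool = False


def classify(use_case: UseCase) -> RiskTier:
    """Assign a tier using simple illustrative rules, strictest rule first."""
    if use_case.automated_decision_without_appeal:
        return RiskTier.PROHIBITED
    if use_case.uses_personal_data and use_case.affects_individuals:
        return RiskTier.HIGH
    if use_case.uses_personal_data or use_case.affects_individuals:
        return RiskTier.MEDIUM
    return RiskTier.LOW


if __name__ == "__main__":
    cases = [
        UseCase("Summarize a public consultation document", False, False),
        UseCase("Analyze a child-protection file", True, True),
        UseCase("Decide benefit eligibility with no appeal route", True, True, True),
    ]
    for case in cases:
        tier = classify(case)
        print(f"{case.description}: {tier.value} -> {CONTROLS[tier] or 'not permitted'}")
```

A real scheme would need more attributes and a documented review process for borderline cases. The point of the sketch is that controls are looked up from the tier rather than negotiated use case by use case.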

Ontario’s early missteps are not fatal, but they are clarifying. They show that governments cannot rely on vendor assurances, employee discretion, or aspirational frameworks. They need enforceable rules and technical systems that make those rules real.

The Province Needs a Smaller AI Dream and a Stronger AI State

A recurring failure in technology policy is the tendency to overstate transformation while underfunding implementation. AI is sold as a revolution, but governance is staffed like a side project. That imbalance is visible whenever institutions adopt powerful tools before building the boring administrative muscle to manage them.

Ontario should narrow its AI ambitions in the short term. The province does not need every ministry experimenting with every model. It needs a controlled set of use cases where benefits are measurable, risks are bounded, and oversight is real.

That might include summarizing non-sensitive internal documents, helping staff find public policy material, translating general public information, assisting with code under strict review, and reducing clinical paperwork where tools meet hard validation standards. It should not include unmonitored use of consumer chatbots with personal records, automated decision-making without appeal, or medical documentation systems whose accuracy has not been independently stress-tested.

The state also needs more technical capacity of its own. Outsourcing every hard question to vendors leaves government dependent on the companies it is supposed to regulate, audit, and bargain with. Public agencies need people who understand model evaluation, cybersecurity, privacy engineering, cloud architecture, and procurement incentives.

This is not glamorous work. It will not produce a keynote demo. But it is what separates responsible modernization from a scandal waiting for a headline.

Ontario’s Chatbot Wake-Up Call Leaves Little Room for Excuses

The most concrete lesson from Spence’s report is that Ontario has already crossed from theoretical AI policy into operational AI risk. The province is not deciding whether AI will arrive in government; it is discovering that AI arrived through browsers, procurement pilots, and clinical tools before governance caught up.

- Ontario should block unapproved AI services on managed government devices where sensitive public-sector work is performed.

- The province should make approved AI tools useful enough that employees do not feel pushed toward consumer alternatives.

- Medical AI scribes should face independent validation, explicit clinician sign-off, and continuing post-deployment monitoring.

- Procurement scoring should treat privacy, security, accuracy, and auditability as gates rather than nice-to-have criteria.

- Residents should be told when AI meaningfully processes their personal information or contributes to records that affect them.

- Digital sovereignty should be treated as a practical risk-management issue, not a slogan about where servers happen to sit.

Source: TVO ANALYSIS: Should the province feed your personal information to an AI chatbot? | TVO Today