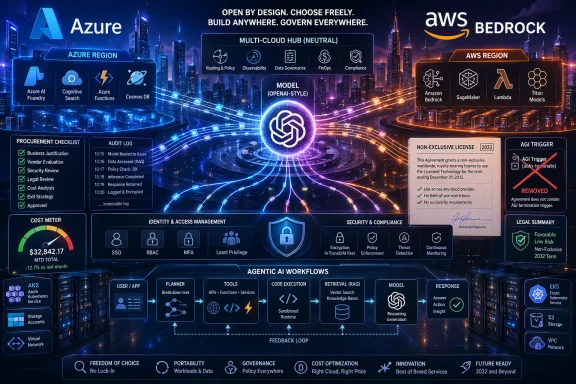

OpenAI’s cloud strategy has entered a new phase, and the implications reach far beyond one vendor contract. A revised Microsoft-OpenAI pact ends the practical era of Azure exclusivity, gives OpenAI room to distribute models across multiple clouds, and quickly opens the door to a deeper Amazon Web Services push through Bedrock. Microsoft keeps important rights, including a non-exclusive license to OpenAI model and product IP through 2032, but the balance of power has shifted from a single-cloud alliance toward a multi-cloud AI platform war. For enterprise customers, this is less a corporate divorce than a new procurement reality: frontier AI may finally be available where companies already run their data, apps, security controls, and budgets.

Background

The Microsoft-OpenAI alliance began as one of the defining partnerships of the modern AI boom. In 2019, Microsoft invested $1 billion in OpenAI and positioned Azure as the infrastructure backbone for increasingly ambitious AI training and deployment. At the time, the arrangement looked like a classic cloud-era bet: Microsoft would supply scale, OpenAI would supply breakthrough models, and Azure would become the enterprise route to commercializing them.

That bet became far more valuable after ChatGPT turned generative AI from a research story into a mass-market product category. Microsoft moved quickly to weave OpenAI models into Bing, Microsoft 365 Copilot, GitHub Copilot, Azure AI, security tooling, and developer services. In return, OpenAI gained a massive enterprise channel and access to the kind of compute capacity that few independent labs could negotiate on their own.

But success created tension. OpenAI’s demand for compute ballooned, its enterprise ambitions widened, and customers increasingly asked for AI services inside environments that were not Azure. AWS remained the dominant cloud infrastructure provider by market share, Google Cloud continued to court AI-first developers, and regulated organizations wanted model choice without rewriting their entire cloud strategy.

The revised pact formalizes what the market had already been signaling: frontier AI distribution cannot remain locked to one hyperscaler indefinitely. Microsoft remains central, but no longer exclusive. OpenAI now has a clearer path to sell across clouds, Microsoft has cleaner commercial terms, and Amazon has an immediate opening to bring OpenAI models to Bedrock customers.

What Changed in the Microsoft-OpenAI Deal

The core change is straightforward: OpenAI can now serve its products to customers across any cloud provider. Microsoft remains OpenAI’s primary cloud partner, and OpenAI products are still expected to ship first on Azure unless Microsoft cannot, and chooses not to, support the needed capabilities. That wording matters because it preserves Azure’s privileged position while ending the absolute wall that previously limited broader cloud distribution.

Microsoft also retains a license to OpenAI IP for models and products through 2032, but that license is now non-exclusive. This means Microsoft can keep building with OpenAI technology while OpenAI can license, distribute, or integrate with other partners. The arrangement gives Microsoft continuity without giving it a veto over OpenAI’s entire commercial future.

The AGI Trigger Is Gone

One of the most important changes is the removal of the controversial AGI trigger. Earlier arrangements tied parts of the business relationship to the declaration or verification of artificial general intelligence. That was always a difficult concept to translate into contract language because AGI is not a conventional product milestone.

Removing that trigger gives both companies more predictable economics. It also reduces the incentive for either side to argue over definitions of capability, safety, or research status at the exact moment the technology becomes more commercially valuable.

Key changes include:

- OpenAI can sell across multiple clouds

- Microsoft’s OpenAI IP license continues through 2032

- Microsoft’s license is now non-exclusive

- OpenAI revenue share payments to Microsoft continue through 2030

- Those payments are subject to an undisclosed cap

- Microsoft no longer pays revenue share to OpenAI

- OpenAI products still ship first on Azure under defined conditions

Why AWS Moved So Quickly

Amazon did not wait long to capitalize. AWS announced that OpenAI models, including newer frontier models, would come to Amazon Bedrock in limited preview, alongside Codex availability and Bedrock Managed Agents powered by OpenAI. That speed suggests the companies had already done substantial technical and commercial groundwork before the Microsoft revision became public.

For AWS, the opportunity is obvious. Amazon Bedrock has long been positioned as a model-agnostic enterprise AI platform, offering access to multiple foundation model providers through a managed service with AWS security, governance, and billing controls. Adding OpenAI gives Bedrock a model catalog advantage that many enterprise buyers have been waiting for.

Bedrock Becomes More Difficult to Ignore

Bedrock’s pitch is not simply “we have another model.” Its pitch is that enterprises can build AI applications inside the same operational perimeter they use for databases, analytics, identity, logging, networking, and compliance. For companies already deeply invested in AWS, OpenAI support reduces the friction of AI adoption.

The OpenAI-AWS collaboration appears especially important for agents and coding workflows. Codex on Bedrock gives developers a familiar OpenAI coding agent inside AWS environments, while Bedrock Managed Agents powered by OpenAI points to a future where long-running enterprise workflows become a first-class cloud service.

AWS gains several immediate advantages:

- A stronger answer to Azure OpenAI Service

- A more complete Bedrock model catalog

- Better retention of AWS-native enterprise workloads

- A path to monetize OpenAI usage through existing cloud commitments

- A stronger story for agentic AI in production

- New pressure on Google Cloud’s AI marketplace strategy

Microsoft Keeps More Than It Loses

It would be tempting to frame this as Microsoft losing exclusivity and therefore losing leverage. That is only partly true. Microsoft loses the cleanest distribution lock, but it keeps a deep strategic position that few rivals can replicate.

Azure remains the first destination for OpenAI product releases under the revised terms. Microsoft keeps non-exclusive IP rights through 2032, keeps revenue share from OpenAI through 2030, and remains a major shareholder. Those are not consolation prizes; they are still powerful claims on the economic upside of OpenAI’s growth.

Azure Still Has First-Mover Muscle

Microsoft’s main challenge is no longer whether it can access OpenAI models. It can. The challenge is whether Azure can remain the most attractive enterprise environment for customers building with those models when AWS and, potentially, Google Cloud can also participate.

Microsoft’s advantage is integration. OpenAI models are embedded across Microsoft 365, GitHub, Windows developer workflows, Dynamics, Security Copilot, and Azure AI. Those product surfaces give Microsoft distribution that AWS cannot easily copy.

Microsoft’s remaining advantages include:

- Deep OpenAI integration across Microsoft 365

- GitHub Copilot’s developer footprint

- Azure AI enterprise tooling

- Security and compliance positioning

- Non-exclusive OpenAI IP rights through 2032

- Continued participation in OpenAI growth as a major shareholder

- Early access mechanics for OpenAI product shipping

Enterprise Buyers Gain Real Leverage

For enterprise customers, this may be the most consequential AI procurement shift of the year. Many large companies do not choose AI models in isolation; they choose them through cloud commitments, data residency constraints, procurement rules, vendor risk frameworks, and security architecture. A model that requires a separate cloud relationship can be harder to adopt than a slightly weaker model already available through the enterprise’s preferred platform.

OpenAI’s multi-cloud distribution gives customers more bargaining power. It also allows CIOs to separate the question of which model is best from the question of which cloud contract is already approved. That distinction matters in organizations where compliance review can take months.

Procurement Becomes the Battleground

The AI platform race is increasingly about enterprise plumbing. Identity, audit logs, private networking, cost allocation, data controls, and legal terms can matter as much as benchmark scores. If OpenAI models are accessible through Bedrock, Azure, and eventually other clouds, buyers can demand better pricing, clearer indemnity, stronger privacy guarantees, and better regional availability.

A typical enterprise evaluation may now follow a more practical sequence:

- Identify where sensitive data already resides.

- Compare model availability across existing cloud providers.

- Test latency, cost, and governance controls in each environment.

- Map model access to internal risk and compliance requirements.

- Negotiate usage discounts through existing cloud commitments.

- Decide whether model quality justifies multi-cloud complexity.
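One way a team might make that sequence concrete is a simple weighted scorecard. The sketch below is illustrative only: the criteria names, the weights, and the `CloudOption` type are assumptions rather than anything from the deal itself, and the scores would have to come from the team's own tests in each environment.

```python
from dataclasses import dataclass

# Hypothetical weights: how much each criterion matters to one organization.
# They sum to 1.0 here, but that is a convention, not a requirement.
WEIGHTS = {
    "data_locality": 0.30,
    "governance": 0.25,
    "cost": 0.20,
    "latency": 0.15,
    "model_quality": 0.10,
}


@dataclass
class CloudOption:
    name: str
    scores: dict  # criterion -> score in [0, 1], measured by the evaluating team


def weighted_score(option, weights=WEIGHTS):
    """Weighted sum of an option's scores; criteria with no score count as 0."""
    return sum(w * option.scores.get(c, 0.0) for c, w in weights.items())


def rank_options(options, weights=WEIGHTS):
    """Return the options sorted from highest to lowest weighted score."""
    return sorted(options, key=lambda o: weighted_score(o, weights), reverse=True)
```

Weighting data locality and governance above raw model quality reflects the argument of this section; an organization with different constraints would pick different weights.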

The Google Cloud Question

Google Cloud is the obvious next cloud to watch. It already operates one of the strongest AI infrastructure stacks in the market, with TPUs, Vertex AI, Gemini models, Model Garden, and deep relationships with AI labs. If OpenAI can now license or distribute through additional clouds, Google has both the technical capability and commercial incentive to explore a deal.

The complication is competitive overlap. Google is not just a cloud provider; it is also a frontier model builder. Gemini competes directly with OpenAI models in search, productivity, developer tools, consumer assistants, and enterprise AI services.

Friend, Rival, or Both?

Cloud AI is forcing hyperscalers into uneasy relationships. AWS supports Anthropic through Bedrock while also building its own AI services. Microsoft supports OpenAI while adding models from other providers to its enterprise platforms. Google Cloud offers partner models even while pushing Gemini as a flagship product.

That makes a potential OpenAI-Google Cloud arrangement plausible but politically sensitive. Google would need to decide whether customer demand for OpenAI outweighs the strategic discomfort of hosting a top rival’s models. OpenAI would need to decide whether Google Cloud distribution expands revenue without strengthening a competitor too much.

Possible Google Cloud outcomes include:

- OpenAI models appearing in Vertex AI Model Garden

- Limited enterprise-only distribution

- Regional or regulated-industry availability

- Agent tooling integrations rather than full model parity

- No deal if commercial or competitive terms prove too difficult

The Agentic AI Angle

The timing of this shift is important because the enterprise AI market is moving from chatbots to agentic AI. Instead of simply answering prompts, agents are designed to call tools, execute workflows, write code, update systems, retrieve documents, and coordinate multi-step tasks. That makes the surrounding cloud platform far more important.

An agent is only useful if it can securely reach the systems it needs. In enterprise environments, those systems often live inside AWS, Azure, Google Cloud, private networks, SaaS platforms, and identity-managed data stores. OpenAI’s ability to meet customers inside their chosen cloud environment becomes a strategic advantage.

Agents Need Infrastructure, Not Just Intelligence

The OpenAI-AWS Bedrock Managed Agents announcement points directly at this transition. If OpenAI models power agents that run with AWS identity, logging, governance, and cost controls, enterprise teams can move from experiments to production more quickly. That is especially relevant for industries where shadow AI usage has outpaced formal governance.

Agentic AI also increases the importance of reliability. A chatbot that makes a mistake may produce a bad answer; an agent that makes a mistake may trigger an incorrect transaction, modify a record, or send an unauthorized communication. Cloud-native guardrails become essential.

Enterprise agent platforms will need:

- Strong identity and access management

- Detailed audit trails

- Policy-based tool permissions

- Human approval checkpoints

- Cost and rate-limit controls

- Data loss prevention integration

- Model evaluation and rollback workflows
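To illustrate how three of these requirements fit together (policy-based tool permissions, human approval checkpoints, and audit trails), here is a minimal sketch in plain Python. It does not represent how Bedrock, Azure, or OpenAI implement agent guardrails; `ToolPolicy`, `AgentGateway`, and the role and tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ToolPolicy:
    allowed_roles: set                  # agent roles permitted to call the tool
    needs_human_approval: bool = False  # True for high-impact actions


@dataclass
class AgentGateway:
    policies: dict                      # tool name -> ToolPolicy
    audit_log: list = field(default_factory=list)

    def authorize(self, agent_role, tool, approved_by=None):
        """Decide whether a tool call may proceed and record the decision."""
        policy = self.policies.get(tool)
        if policy is None or agent_role not in policy.allowed_roles:
            decision = "denied"            # unknown tool or role not permitted
        elif policy.needs_human_approval and approved_by is None:
            decision = "pending_approval"  # checkpoint: wait for a human
        else:
            decision = "allowed"
        # Every decision, including denials, goes into the audit trail.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "role": agent_role,
            "tool": tool,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision
```

The design choice worth noting is default-deny: a tool with no policy entry is refused, so an agent cannot reach a system simply because nobody wrote a rule for it.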

Consumer Impact: Less Visible, Still Important

Consumers may not immediately notice this deal. ChatGPT will still be ChatGPT, Microsoft Copilot will still be Copilot, and most people will not care whether inference happens on Azure, AWS, or another provider. But the downstream effects could shape pricing, reliability, feature availability, and competition.

If OpenAI can distribute more broadly and secure more compute, it may reduce bottlenecks during product launches. More cloud flexibility could help with regional capacity, specialized workloads, and product variants tuned for different markets. It may also give OpenAI more leverage to keep consumer subscriptions competitive.

The Windows and Copilot Question

For Windows users, the most interesting question is whether Microsoft can keep Copilot differentiated if OpenAI models become more widely available. Microsoft’s answer will likely be integration rather than exclusivity. Copilot can still live inside Windows, Edge, Office, Teams, Outlook, GitHub, and enterprise identity systems in ways that generic model access cannot replicate.

That said, consumers may benefit indirectly from more competition. If AWS, Google, Microsoft, and OpenAI all compete to package AI assistance into productivity tools, browsers, developer environments, and personal agents, feature velocity should increase. The challenge will be avoiding confusion as every platform claims to offer the “best” AI assistant.

Consumer-facing effects may include:

- More reliable AI services during peak demand

- Faster rollout of coding and productivity assistants

- More competition in subscription pricing

- Potentially broader regional availability

- More overlapping AI features across apps

- Greater confusion over data handling and model identity

Competitive Fallout Across the AI Market

OpenAI’s move intensifies an already crowded platform race. Anthropic has built strong distribution through AWS and Google Cloud. Google pushes Gemini across consumer and enterprise products. Microsoft has Copilot and Azure AI. Meta continues to shape the open-model ecosystem. Cohere, Mistral, xAI, and others compete for specialized enterprise, developer, and sovereign AI use cases.

The new OpenAI arrangement raises the pressure on every model provider to be available where customers want to buy. Exclusive cloud deals may still make sense for training partnerships, chip commitments, and early distribution, but model access is becoming harder to lock down. Enterprises increasingly expect choice.

Model Quality Is Not Enough

The old assumption was that the best model would win. The enterprise reality is more complex. The winning AI stack must combine model quality, availability, price, governance, data controls, latency, integrations, and vendor trust.

This shift also puts pressure on cloud marketplaces. Customers want to consume AI through committed spend agreements, unified billing, procurement-approved terms, and familiar security controls. The cloud that makes model switching easiest may become more valuable than the cloud with a single exclusive model.

Competitive implications include:

- AWS strengthens Bedrock as a neutral model hub

- Azure must emphasize integration and first-party Microsoft workflows

- Google Cloud may need a clearer OpenAI strategy

- Anthropic faces a more crowded AWS catalog

- Open-model providers must compete on cost and control

- Enterprise buyers gain leverage over pricing and terms

Technical and Operational Challenges

Multi-cloud distribution sounds clean in a press release, but it is difficult in production. OpenAI and its cloud partners must manage model serving consistency, capacity planning, version alignment, latency, security controls, regional availability, billing differences, and support boundaries. A model that behaves differently across platforms can create headaches for developers and compliance teams.

The hardest challenge may be version management. Enterprises need to know which model they are using, when it changes, how it was evaluated, and whether outputs remain consistent across environments. If GPT model access differs between Azure, Bedrock, and future providers, developers will need clearer documentation and testing discipline.

Consistency Will Matter

Cloud providers also implement security and observability differently. AWS customers will expect Bedrock-native IAM, CloudTrail-style auditability, VPC integration, and cost controls. Azure customers will expect Entra ID, Purview, Defender, Azure Monitor, and Microsoft compliance integrations. Google Cloud customers would expect similar integration with Google’s identity, data, and observability stack.

That means OpenAI must become not only a model company but also a platform compatibility company. Its APIs, SDKs, evals, safety systems, and enterprise support processes must scale across cloud ecosystems.

Operational issues to watch include:

- Model version parity across clouds

- Latency differences by region

- Quota limits and capacity reservations

- Data retention and privacy policy alignment

- Support responsibility during outages

- Fine-tuning and retrieval feature differences

- Auditability for regulated customers
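The first item, version parity, is the easiest to check mechanically. As a rough sketch, a team could keep its own inventory of which model version each cloud serves under each alias and flag disagreements before a release. The function name `find_version_drift` and the inventory format are assumptions for illustration; no provider API is called here.

```python
def find_version_drift(deployments):
    """Report model aliases whose version differs, or is missing, across clouds.

    deployments: dict mapping cloud name -> dict of model alias -> version string,
    maintained by the customer (for example, from deployment manifests).
    Returns a dict mapping each drifting alias -> {cloud: version or None}.
    """
    # Collect every alias seen on any cloud.
    aliases = set()
    for models in deployments.values():
        aliases.update(models)

    drift = {}
    for alias in sorted(aliases):
        # None marks a cloud where the alias is not deployed at all.
        versions = {cloud: models.get(alias) for cloud, models in deployments.items()}
        if len(set(versions.values())) > 1:
            drift[alias] = versions
    return drift
```

A check like this only catches differences in declared versions. Confirming that outputs stay consistent across environments still requires running the same evaluation set against each deployment.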

Regulatory and Governance Implications

The revised pact may also ease some antitrust and competition concerns. Exclusive relationships between dominant cloud providers and leading AI labs have attracted scrutiny because they can concentrate access to models, compute, talent, and distribution. By making Microsoft’s license non-exclusive and allowing broader cloud distribution, the deal creates a more open commercial structure.

That does not mean regulators will stop watching. AI partnerships remain complicated because investments, compute commitments, IP licenses, revenue shares, and distribution channels can function like partial mergers without being formal acquisitions. Authorities will continue to ask whether customers and rivals have meaningful alternatives.

Openness Is Relative

OpenAI’s new flexibility does not automatically make the AI market open. The largest labs still require enormous capital, data center access, specialized chips, and cloud-scale engineering. Multi-cloud distribution may improve customer choice while still concentrating power among a handful of hyperscalers and frontier model companies.

Governance questions will also follow OpenAI models into new environments. If a company uses OpenAI through Bedrock, who is responsible for safety filters, logging, data retention, incident response, and compliance documentation? The practical answer will depend on the exact service terms, but customers will need clarity.

Regulators and enterprise risk teams will focus on:

- Whether cloud marketplaces preserve genuine model choice

- Whether revenue-sharing arrangements distort competition

- How customer data is handled across cloud boundaries

- Who bears responsibility for harmful AI outputs

- Whether smaller AI labs can access comparable distribution

- How national security and sovereign cloud rules apply

Strengths and Opportunities

The revised Microsoft-OpenAI agreement creates a more flexible AI distribution model at a moment when enterprises are demanding exactly that. It gives OpenAI access to broader cloud demand, gives AWS a major Bedrock win, and gives Microsoft a clearer long-term arrangement without the uncertainty of AGI-triggered contract mechanics.

- Broader enterprise reach as OpenAI can meet customers on the clouds they already use.

- Stronger cloud competition because Azure must compete on integration, not exclusivity alone.

- Better procurement flexibility for CIOs managing committed cloud spend and compliance reviews.

- More resilient capacity planning if OpenAI can diversify infrastructure and serving partnerships.

- Faster agent adoption through cloud-native platforms such as Bedrock and Azure AI.

- Reduced contractual ambiguity after removing the AGI trigger from the business relationship.

- More leverage for customers negotiating price, governance, and regional availability.

Risks and Concerns

The opportunity is significant, but the risks are equally real. Multi-cloud AI can introduce fragmentation, support confusion, inconsistent model behavior, and new governance gaps if OpenAI and its partners do not manage the rollout carefully.

- Model fragmentation if features, versions, or tools differ across Azure, AWS, and future clouds.

- Support ambiguity when outages or output issues involve both OpenAI and a cloud provider.

- Pricing complexity as customers compare direct OpenAI billing, Azure consumption, and Bedrock usage.

- Compliance uncertainty around data handling, retention, and audit trails across platforms.

- Strategic tension with Microsoft if OpenAI’s non-Azure revenue grows faster than expected.

- Pressure on smaller AI labs that may struggle to match OpenAI’s distribution footprint.

- Customer lock-in by another route if cloud marketplaces become the main gatekeepers for model access.

What to Watch Next

The first thing to watch is how quickly OpenAI’s Bedrock availability moves from limited preview to broad production readiness. Preview announcements matter, but enterprises will care about supported regions, service-level commitments, model versions, pricing, fine-tuning support, data controls, and whether usage can count against existing AWS commitments. The details will determine whether this is a headline partnership or a real workload shift.

The second thing to watch is Google Cloud. If OpenAI and Google reach a distribution arrangement, the AI market will have crossed an important threshold: the leading model provider would be meaningfully present across all three major U.S. hyperscalers. If no deal appears, that absence will say something about competitive boundaries in the industry.

Key developments to monitor include:

- General availability timing for OpenAI models on Bedrock

- Whether GPT-5.5 and future models launch with parity across clouds

- Any OpenAI-Google Cloud distribution agreement

- Microsoft’s next Azure OpenAI and Copilot differentiation moves

- Enterprise pricing changes tied to cloud commitments

OpenAI’s break from effective Azure exclusivity does not end the Microsoft relationship; it matures it into something more pragmatic, more competitive, and more suited to the scale of enterprise AI. Microsoft keeps a valuable seat at the table, AWS gains a powerful new Bedrock story, and OpenAI gains the distribution flexibility it needs for the next phase of growth. The winners will be the customers who can now demand model choice without abandoning their existing cloud foundations, but the real test begins in implementation. If OpenAI and its partners can deliver consistent, governed, production-grade AI across clouds, this deal may be remembered as the moment frontier models stopped being cloud trophies and became enterprise infrastructure.

Source: Axios, “OpenAI breaks free of Microsoft's cloud”