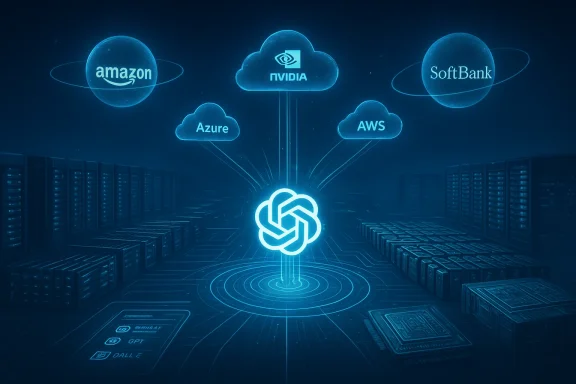

OpenAI’s announcement of a fresh, staggering funding wave (roughly $110 billion led by Amazon, NVIDIA, and SoftBank) has reverberated across the tech world, but perhaps the loudest signal is a quieter one: Microsoft, OpenAI’s most consequential partner and early backer, did not participate in this latest round, and both companies moved quickly to reassure markets and customers that their partnership remains intact.

Background

Microsoft and OpenAI have been entwined since 2019, when Microsoft made the first of several large investments and began supplying cloud compute and engineering support that helped OpenAI scale its early models into production. Over the past three years that relationship evolved from a classic vendor–partner arrangement into a strategic alliance that touches product development, IP arrangements, and monetization across Azure and Microsoft products. The arrangement was reworked publicly last year: in October 2025 the two companies published a detailed joint blog outlining a new chapter of collaboration that preserved Microsoft’s IP and API exclusivity while giving OpenAI more freedom to scale with other parties under specific contractual terms.

Those October 2025 terms are the context for today’s headlines: Microsoft retained a long-term commercial and IP relationship with OpenAI, including an exclusivity arrangement for stateless OpenAI APIs on Azure, while OpenAI gained the flexibility to pursue additional infrastructure partners and a path to raise significant new capital. That shift was deliberately designed to let OpenAI bring in third-party compute while keeping Microsoft strategically invested in model access and enterprise distribution.

The raise: what happened, who put money in, and how big is this

On February 27, 2026, OpenAI announced a massive new funding package that company spokespeople and multiple outlets describe as being in the neighborhood of $110 billion. The round is led by Amazon (reported commitments around $50 billion), with NVIDIA and SoftBank each reported to be contributing roughly $30 billion apiece. The raise was presented as a mix of direct capital and very large infrastructure/service commitments designed to widen OpenAI’s compute base and distribution footprint; outlets report the deal values OpenAI at approximately $730 billion pre-money (which implies a post‑money value materially higher once the new commitments are added).

Key points made public about the package:

- Reported headline amount: about $110 billion total in the announced tranche.

- Investor breakdown reported in multiple outlets: Amazon ~$50B, NVIDIA ~$30B, SoftBank ~$30B.

- Reported valuation: roughly $730 billion pre-money. Some outlets translate that into an $840 billion post-money figure (the $730 billion pre-money valuation plus the roughly $110 billion round), depending on whether they include near-term committed capital. Different publications are presenting slightly different calculations; the variances reflect whether they’re describing pre‑ or post‑money valuation and how they treat near-term conditional commitments. Those fine points matter for markets but do not change the headline scale of the deal.

- Strategic elements: Amazon is publicly reported to be deepening an AWS–OpenAI partnership, including new stateful runtime plans on Amazon Bedrock and substantial Trainium capacity commitments; NVIDIA’s involvement is described as large compute and inference capacity commitments; SoftBank’s role combines capital with strategic distribution and investment heft. The exact split between cash, services, hardware commitments, and long-dated purchase agreements has not been laid out in fully audited detail in the public filings we have reviewed. That split is material for accounting and regulatory purposes; for now, the package is generally understood to be a blend of cash and infrastructure commitments.
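To make the pre-money/post-money arithmetic concrete, here is a minimal Python sketch using the reported figures. It assumes, purely for illustration, that the entire $110 billion is cash equity priced at the reported $730 billion pre-money valuation. As noted above, a large share is reportedly infrastructure and service commitments, so the stakes it computes are hypothetical, not disclosed ownership figures. The helper names `post_money` and `implied_stakes` are our own.

```python
# Hypothetical illustration: textbook pre-/post-money arithmetic applied to the
# reported figures, ASSUMING every commitment is cash equity (it reportedly is not).
PRE_MONEY_B = 730.0  # reported pre-money valuation, $ billions
COMMITMENTS_B = {"Amazon": 50.0, "NVIDIA": 30.0, "SoftBank": 30.0}  # reported, $ billions


def post_money(pre_money: float, new_money: float) -> float:
    """Post-money valuation = pre-money valuation + new capital raised."""
    return pre_money + new_money


def implied_stakes(pre_money: float, commitments: dict[str, float]) -> dict[str, float]:
    """Fraction of the post-money company each investor would own if all money were equity."""
    post = post_money(pre_money, sum(commitments.values()))
    return {name: amount / post for name, amount in commitments.items()}


if __name__ == "__main__":
    total = sum(COMMITMENTS_B.values())
    post = post_money(PRE_MONEY_B, total)
    print(f"Round size: ${total:.0f}B, post-money: ${post:.0f}B")
    for name, stake in implied_stakes(PRE_MONEY_B, COMMITMENTS_B).items():
        print(f"{name}: {stake:.1%}")
```

Run as a script, it prints the $840B post-money figure some outlets report, and stakes of roughly 6.0% for Amazon and 3.6% each for NVIDIA and SoftBank under the all-cash assumption. Any portion booked as services or hardware rather than equity would shrink those stakes, which is why the cash-versus-services split matters.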

What Microsoft and OpenAI publicly said

Almost immediately after news of the Amazon/NVIDIA/SoftBank-led package broke, Microsoft and OpenAI issued a joint statement aimed squarely at preventing speculation about the future of their partnership. The statement reiterated that “nothing about today’s announcements in any way changes the terms of the Microsoft and OpenAI relationship that have been previously shared in our joint blog in October 2025.” It spelled out several concrete continuities:

- IP relationship unchanged: Microsoft retains the contractual IP rights the companies described in October 2025.

- Revenue share and commercial arrangements unchanged.

- Azure remains the exclusive cloud provider for OpenAI’s stateless APIs. The joint note makes clear OpenAI’s first‑party products will continue to be hosted on Azure and that stateless API traffic tied to OpenAI models will be hosted on Azure even where those models are provided via third‑party partnerships.

- AGI definitions and governance processes remain as specified in the October 2025 agreement, including the independent verification steps and other guardrails adopted during the restructuring.

Why Microsoft likely skipped this round (and why that matters)

Microsoft’s absence from this headline package is notable, but it’s important to separate symbolic absence from strategic absence. There are several plausible reasons Microsoft did not put new capital into the publicly announced tranche:

- Contractual architecture and timing. The October 2025 restructuring re‑balanced rights and obligations. Microsoft’s deal already secures extended IP rights, API exclusivity (for stateless APIs), and deep Azure commercial commitments through multi‑year purchase arrangements. That architecture reduces Microsoft’s need to lead every incremental funding announcement while preserving its commercial position. The October joint blog and follow‑ups explicitly anticipated OpenAI using other providers for some compute while keeping key commercial flows tied to Azure.

- Different investment type. Reports indicate a large share of the $110 billion will take the form of infrastructure commitments, hardware, and service capacity (for example Trainium/GPU commitments and long-term service agreements), not pure cash equity in the conventional sense. Microsoft’s strategic bet already converts to Azure consumption and model access; participating in a deal weighted toward AWS capacity and NVIDIA hardware may not materially change Microsoft's standing. The economics of service‑versus‑equity also complicate whether Microsoft would want to match such terms on the same basis.

- Regulatory and corporate optics. Microsoft is a regulated global giant with a board and shareholders sensitive to concentrated capital deployment. Committing additional tens of billions to another private company — particularly at these valuations and in the midst of heightened antitrust focus around AI and cloud dominance — may have been judged unnecessary or untimely. Microsoft already holds a deep strategic interest in OpenAI under existing contracts; it can defend its commercial position via those agreements rather than by continually funding new tranches.

- Strategic diversification and in‑house options. Over the past year Microsoft has accelerated its own model development (some of it publicly announced, some less visibly), productized Copilot features, and begun deploying in‑house models for certain enterprise workloads. That path lets Microsoft hedge its dependence on OpenAI by drawing on Azure AI Foundry, including the MAI family of models in preview and the broader Foundry model lineup, for internal and select customer needs. The company’s long-term objective, enterprise monetization of AI across Microsoft 365, Azure, and developer services, depends less on incremental equity injections than on model availability, latency, and margin control. Community discussions in Windows-focused forums reflect this shift in tone, along with concern among users and admins.

Strategic implications for Microsoft, Azure, and the cloud market

The package of announcements reshapes the competitive cloud map in three concrete ways.

1) Compute supply and platform levers accelerate multi‑cloud dynamics

OpenAI’s access to additional, geographically and vendor‑diverse compute removes a single‑supply chokepoint that previously concentrated power in a small set of hyperscalers. That increases the attractiveness of alternatives (AWS, OCI, CoreWeave, and specialized colocation) for training or stateful runtimes. Market reaction will lean toward a more explicit multi‑cloud world where customers pick the best mix of cost, latency, and compliance. Microsoft retains a privileged role for stateless APIs and certain first‑party product hosting, but the ability to run stateful workloads elsewhere materially reduces the danger of single‑cloud lock‑in at the industry level.

2) Azure’s enterprise moat is intact but contested

Microsoft’s guarantee of exclusive access to stateless OpenAI APIs, together with OpenAI’s pledge to host first‑party products on Azure, gives Microsoft a powerful enterprise product story. For enterprises, the guarantee of model access, combined with Azure’s compliance, support, and integration into Microsoft 365, is a compelling operational argument. However, Amazon’s new stateful runtime play and deep consumer product integration create a parallel channel for OpenAI‑powered capabilities. Enterprises will need to evaluate vendor lock‑in and total cost of ownership more carefully than ever. The October 2025 agreement that extended IP rights and clarified AGI governance matters here because it preserves Microsoft’s product-level advantages even as compute flows diversify.

3) Vendor relationships and pricing power will see short‑term turbulence

The scale of the commitments (including multi‑year capacity pledges and implied volume discounts) will change cloud pricing dynamics for specialized hardware and high‑density inference. NVIDIA’s direct commitments around inference and specialized systems may give it leverage to pressure competitors on supply and pricing. Microsoft will have to defend margins for Azure AI services by optimizing utilization and moving to its own mixture‑of‑experts and cost-reduction playbook for high-throughput workloads. Microsoft has already introduced its own MAI models to reduce inference costs in Copilot scenarios; those efforts will accelerate.

Legal, regulatory, and antitrust risks to watch

A capital injection of this size and the accompanying strategic alignment among major cloud and chip players will invite regulatory scrutiny across several axes:

- Antitrust and competition authorities will focus on whether exclusive arrangements (even limited ones) or massive cloud purchase commitments distort competition in cloud infrastructure or model distribution. The October 2025 updates introducing right-of-first-refusal language and the limits around exclusivity were designed to reduce that risk, but fresh deals will be parsed carefully.

- National security and export control considerations may reappear as OpenAI extends stateful runtimes and national‑security customers are explicitly part of the conversation. The ability to deliver sensitive models and APIs across multiple clouds will require renewed focus on access controls, cross‑border data flows, and government contracting rules.

- Disclosure and investor governance: the valuation math here is complicated — large parts of the commitments could be long‑dated consumption, hardware investments, or conditional payments — and regulators will ask firms to disclose the real economics behind reported valuations. Expect follow‑up filings and investor presentations to attempt to clarify the cash versus services split in the weeks ahead. If that split is opaque, markets will treat the headline valuation with caution.

What this means for enterprise customers, developers, and the Windows ecosystem

- For enterprise customers that have already invested in Microsoft 365 Copilot, Azure OpenAI Service, and Azure AI Foundry, the status quo remains functionally preserved: stateless API access for models will continue to route through Azure, and Microsoft says first‑party OpenAI products will be hosted on Azure. That continuity matters for compliance‑heavy customers who value a single‑vendor support path for mission‑critical deployments.

- Developers and startups face a wider set of choices: the availability of OpenAI models in stateful runtimes on AWS (and broader NVIDIA‑backed inference fabrics) will make it easier to pick the platform that best matches latency, pricing, and regional requirements. This should spur new innovations, but it will also increase integration work for teams that need cross‑cloud deployment strategies.

- For Windows and consumer‑facing applications, the race is about embedding differentiated experiences. Microsoft’s advantage is product integration (Copilot inside Office, Copilot for Windows), while Amazon will push model integration into its consumer funnel and AWS will promote stateful runtimes optimized for specific application architectures. The result is more choice for customers, but also more platform‑specific integration tasks for developers.

Risks and unanswered questions

No single announcement resolves the most pressing open issues; indeed, some important questions remain partially answered or unverifiable in public materials:

- Cash vs. services split. Public reporting has aggregated cash, hardware, and long‑term purchase commitments into headline numbers. The precise mix will dramatically influence accounting (pre‑money vs post‑money valuations), investor gains/losses, and the real liquidity available to OpenAI. Until audited filings or clearer breakouts are published, take the headline valuations with prudent skepticism. We flag this as a material unknown.

- Operational integration and vendor lock‑in. The October 2025 framework preserved Azure exclusivity for stateless APIs but permitted other compute for stateful runtime and training. How customers adopt mixed‑cloud architectures and how OpenAI shares model weights, telemetry, and safety controls across multiple runtime environments will matter greatly for security and for competitive dynamics.

- Regulatory reaction. Several national competition authorities already have AI‑related inquiries on their desks; a $110 billion strategic alignment among hyperscalers and chip suppliers is likely to invite deeper review. If regulators require structural remedies or behavioral constraints, the public deal math and the contractual guarantees Microsoft enjoys could be affected. This is a medium‑term risk that companies must monitor closely.

How Microsoft can — and likely will — respond strategically

Microsoft has several realistic levers to preserve and strengthen its position:

- Double down on enterprise integration. Expand differentiated Copilot features, security integrations, data residency guarantees, and vertical solutions (finance, health, government) where Azure’s compliance posture and Microsoft’s ecosystem provide clear value.

- Drive cost efficiency with model orchestration. Push in‑house models (MAI‑family and other Azure Foundry offerings) for predictable workloads to manage inference cost and latency, while keeping OpenAI models as a premium option. This hybrid multi‑model approach can preserve product competitiveness and margins.

- Negotiate product‑level exclusives. Use the October 2025 IP and API arrangements to lock in product flows that matter most for Microsoft’s ecosystem (Windows, Office, GitHub) while allowing OpenAI to monetize in other channels.

- Pursue strategic partnerships of its own. Microsoft could accelerate partnerships with chipmakers, specialized cloud providers, and even competitors’ model creators to retain choice and supply chain diversity. Those moves would be compatible with the October 2025 posture that allows Microsoft more freedom to pursue AGI and related investments independently. (blogs.microsoft.com)

Community reaction and developer voice

Windows‑centered communities and enterprise operators we track are emphasizing two reactions: relief and vigilance. Relief because the joint Microsoft–OpenAI statement reaffirms the practical benefits for Azure customers. Vigilance because the era of multi‑vendor model delivery increases integration complexity, governance overhead, and the potential for vendor lock‑in by different means (service commitments and platform APIs instead of single‑provider compute monopolies). Forum threads and community posts reflect a pragmatic stance: most Windows administrators will keep existing Microsoft investments but will add monitoring, SLAs, and multicloud contingency plans.

Bottom line: absence isn’t abandonment, but the landscape shifts

Microsoft’s decision not to join the headline $110 billion tranche led by Amazon, NVIDIA, and SoftBank is significant symbolically, but it is not, on its face, a rupture. The October 2025 restructure created a contractual and commercial scaffolding designed to allow exactly this kind of multi‑partner scaling while preserving Microsoft’s product and IP advantages. Microsoft’s role is less about being the only funnel for OpenAI’s compute and more about being the default enterprise path for OpenAI model access and productization.

That said, the announcement accelerates a strategic decoupling of compute supply from model access. OpenAI’s models will now run across a wider range of compute fabrics and hyperscalers. That is good for scale and resilience, but it increases complexity for customers, invites regulatory attention, and forces Microsoft to sharpen its product differentiation, cost playbook, and legal posture.

In short: Microsoft’s absence from the round reflects contractual design rather than a retreat to the sidelines. The partners are playing different, complementary roles in a race where scale, access, and commercial distribution matter more than who wrote the largest check. The next acts will be decided by execution: who can deliver secure, cost‑effective, compliant AI services at scale while avoiding regulatory entanglements and preserving open innovation for developers.

What to watch next (practical checklist)

- Watch for detailed filings and investor decks from OpenAI and the participating firms to clarify the cash vs services mix and the concrete timelines for compute deliveries. Those numbers will refine valuation math and regulatory exposure.

- Monitor regulatory statements from competition authorities in the U.S., EU, and key APAC markets; expect inquiries about large‑scale commitments and exclusivity clauses.

- Track Microsoft product updates: more Copilot integrations, in‑house model disclosures, and pricing moves that aim to protect margins and keep enterprise customers within Azure.

- For IT teams: prepare multicloud deployment guidance, SLAs, and exit strategies for critical AI workloads; don’t assume any single provider will remain the only practical place to run frontier workloads.

OpenAI’s $110 billion announcement is a structural moment: it scales compute, hardens vendor alliances, and forces everyone — including Microsoft — to act from the strategic positions they negotiated last year. Microsoft’s absence from the headline roster is meaningful as a headline, but the contractual scaffolding and product commitments it holds mean the company remains central to how OpenAI technologies will be accessed and monetized by enterprises and developers. The real question going forward is no longer whether Microsoft and OpenAI will work together — it’s how each will execute on the differentiated roles they now occupy in a markedly more industrialized AI ecosystem.

Source: Windows Central, “Microsoft skipped OpenAI’s 110B raise and says 'we’re still good'”