Oracle’s bold AI data‑centre sprint has collided with hard cash realities: this week multiple reports said the company is preparing to cut thousands of roles and to slow hiring as it wrestles with the up‑front costs of an unprecedented expansion of GPU‑dense infrastructure — moves that underscore both the scale of Oracle’s ambitions and the financial strain of pivoting a decades‑old software giant into a hyperscale AI supplier.

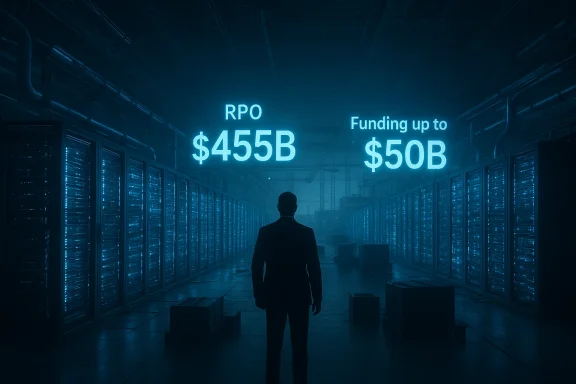

Source: Hindustan Times Oracle layoffs to impact thousands in AI cash crunch

Background

Oracle’s transformation over the past 18 months has been dramatic and highly public. Once primarily known for databases and enterprise software, the company has shifted capital and narrative toward Oracle Cloud Infrastructure (OCI) and AI‑oriented services, signing multi‑billion‑dollar arrangements that produced an eye‑popping backlog of contracted but unrecognized revenue: Oracle reported Remaining Performance Obligations (RPO) of roughly $455 billion at the end of its fiscal Q1 2026. That backlog gives the company forward revenue visibility, but it also commits Oracle to massive capital expenditures to deliver the compute capacity those contracts require.

At the same time, Wall Street and analysts have flagged the near‑term cash consequences of building GPU‑heavy data centres and meeting the power, cooling, and networking demands of AI workloads. Oracle announced plans to raise a very large tranche of capital this year, up to $50 billion via debt and equity, to help fund the build‑out. The combination of aggressive capex plans and the need to conserve operating cash has coincided with reports that the company is preparing workforce reductions and reviewing open cloud‑division job listings.

What’s being reported now

Scale and timing of the cuts

Multiple news outlets, citing people familiar with the matter, reported that Oracle is preparing to eliminate thousands of jobs across divisions, with some actions possibly taking place imminently. The coverage describes a broader and faster program than Oracle’s usual incremental or office‑level reductions; companies in this position commonly execute a mix of layoffs, hiring freezes, and reallocation of roles toward new priorities. Reporters also note that the company has begun an internal review of many open listings in its cloud unit to slow hiring.

Independent analyst notes and some trade publications have floated larger scenarios, including an estimate by TD Cowen that Oracle could cut as many as 20,000 to 30,000 jobs to generate cash and streamline non‑strategic operations, though that range has not been confirmed by Oracle itself. Those estimates are useful for sizing the potential fiscal impact, but they remain market analysis rather than company disclosure.
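To put the analyst scenario in proportion, the short sketch below divides the TD Cowen range by the roughly 162,000 employees Oracle reported as of May 2025 (the headcount figure cited later in this article). It is simple arithmetic on the article's own numbers, not a forecast; the function and variable names are illustrative.

```python
# Illustrative arithmetic only: both inputs are figures quoted in this article.
REPORTED_HEADCOUNT = 162_000      # employees as of May 2025, per the article
ANALYST_RANGE = (20_000, 30_000)  # TD Cowen scenario, not confirmed by Oracle


def share_of_workforce(cuts: int, headcount: int) -> float:
    """Return cuts as a percentage of total headcount."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return 100.0 * cuts / headcount


low_pct, high_pct = (share_of_workforce(c, REPORTED_HEADCOUNT) for c in ANALYST_RANGE)
print(f"Scenario range: {low_pct:.1f}% to {high_pct:.1f}% of reported headcount")
```

Run as written, this prints a range of 12.3% to 18.5%, which shows why the analyst scenario would be far larger than the "thousands" described in the news reports.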

The corporate rationale being described

Insiders and analysts point to a straightforward logic: Oracle has won very large, multi‑year contracts for AI infrastructure and now needs to convert those bookings into real capacity. Building and equipping GPU‑dense facilities requires immediate cash outlay and lengthy capital cycles; in the near term those expenditures can push free cash flow negative even for profitable companies. The widely reported plan to raise up to $50 billion in 2026 is part of the financing mix to bridge the gap between capital outlays and revenue recognition. Reported workforce reductions are being framed as part of the effort to rebalance operating costs while the hardware investments come online.

Official posture and recent disclosures

Oracle declined to comment to reporters on the specific layoff reports. The company has previously disclosed a large restructuring plan: in earlier filings it flagged a fiscal‑year restructuring expected to cost up to $1.6 billion, largely for severance and exit costs as it reshapes operations. That disclosure signals leadership had already planned significant workforce and operational changes. Oracle has also set its fiscal Q3 2026 earnings date for March 10, when executives will provide updated financial detail and investor guidance. (investor.oracle.com: Oracle Sets the Date for its Third Quarter Fiscal Year 2026 Earnings Announcement)

Why Oracle doubled down on AI, and why it’s expensive

The revenue promise (and liability)

Oracle’s huge RPO backlog is a double‑edged sword: it demonstrates demand and contractual revenue visibility, but it also represents obligations the company must fulfill, namely the equipment, racks, networking, power, and colocation commitments that must be in place to run multi‑year AI training and inference workloads. The RPO number that electrified the market, $455 billion, was not conjured overnight; it followed the company’s signing of several very large agreements in 2025 and early 2026, and it has reshaped investors’ expectations for OCI’s top‑line potential.

- Benefits of RPO: forward revenue visibility; leverage in pricing and customer lock‑in; large contract scale that supports long‑term cloud revenue targets.

- Risks of RPO: timing mismatch between revenue recognition and upfront capex; concentration risk if large anchor customers slow demand; margin pressure while facilities are ramping.

Capital intensity: GPUs, power, and racks

AI training clusters require the latest accelerators, dense power distribution, special cooling, and sometimes bespoke networking and interconnects. Those components are costly and in high demand, and supply‑chain constraints can extend build schedules and raise prices. Oracle’s announced capital plan and RPO imply billions of dollars of hardware purchases and substantial leasing or construction costs for data‑centre real estate. That front‑loaded investment profile means the company needs either ready cash, access to debt markets, or structural cost savings to maintain flexibility, which is the financial backdrop for considering workforce reductions.

The human and organizational angle

What roles are likely to be affected

Reports indicate the cuts will span multiple divisions. Some of the positions targeted are described as roles that the company expects it “will need less of due to AI”, a phrase that has become common as companies automate repeatable workflows and move toward cloud‑centric operations. In practice, that typically includes:

- Mid‑level operational roles tied to legacy product support and field services.

- Certain sales and administrative roles that are being rationalized or centralized.

- Parts of professional services where standardized cloud offerings can replace bespoke implementations.

Employee count and recent precedent

Oracle reported about 162,000 employees globally as of May 2025. The company has periodically reduced staff in prior years; 2025 alone included multiple smaller rounds and targeted reductions tied to consolidation and restructuring. The new wave under discussion appears broader and faster than the routine cadence of office‑level adjustments. For employees and partners, that creates immediate uncertainty around job security, project continuity, and vendor relationships.

Financial analysis: where the cash will come from, and at what cost

The $50 billion financing plan

Oracle’s stated plan to raise up to $50 billion in 2026 through debt and equity sales is intended to fund the immediate data‑centre push. Financing at that scale is unusual even for large technology companies and will change Oracle’s capital structure and possibly its capital return policies. Analysts have framed the fundraise as a risk‑management move: it preserves the company’s ability to execute on large contracts while giving management flexibility if cash flow turns negative in the short term. But issuing substantial new shares or debt will have dilution, interest‑cost, and rating implications that investors will weigh carefully.

The $1.6 billion restructuring charge

Oracle disclosed a $1.6 billion restructuring plan in a prior filing, describing it as its largest such program. That figure primarily comprises severance and exit costs expected over the course of the current fiscal‑year plan. The existence of a pre‑announced restructuring shows leadership has been planning organizational change, and it provides an accounting mechanism to recognize one‑time costs related to workforce reallocation. However, a restructuring charge captures only part of the picture: it books most of the one‑time expense now, but layoffs often carry hidden costs (knowledge loss, slower product delivery, vendor renegotiations, and morale impacts) that can affect execution for several quarters.

Cash‑flow timing risks

Wall Street models cited in reporting project that Oracle’s cloud‑unit expenditures could push operating cash flow negative for several years until capacity ramps and contracts monetize. That timing mismatch (invest now, monetize later) is why management is considering both large external financing and internal cost reductions. It is also why the market’s enthusiasm in 2024 and early 2025 has given way to more caution as capex estimates expanded.

Strategic trade‑offs and scenarios

Oracle’s leadership faces three basic paths; each has plausible upside and distinct risks:

- Accelerate with capital: raise the funds, build capacity quickly, accept near‑term cash strain for the chance of capturing outsized AI cloud share.

- Upside: first‑mover advantage for certain enterprise AI workloads; long‑term contracts deliver profitable revenue.

- Risk: debt or equity dilution; overcommitment if demand changes; regulatory/contract concentration risk.

- Slow rollout and conserve cash: delay data‑centre openings, renegotiate schedules, and trim operating costs (including headcount).

- Upside: preserves cash and limits near‑term risk; avoids overbuilding.

- Risk: ceding capacity windows to competitors; potential contract penalties or customer dissatisfaction.

- Hybrid: selectively prioritize anchor customers, outsource initial capacity, and redeploy internal headcount to higher‑value engineering and sales functions.

- Upside: smoother cash profile and preserves talent for strategic areas.

- Risk: higher unit costs and potential margin compression in early years.

Broader industry context: Oracle is not alone

Large capex for AI infrastructure has driven similar moves across the industry. Microsoft cut roughly 15,000 jobs in 2025 even as it accelerated Azure and OpenAI investments; other firms have also restructured to prioritize AI. The paradox is visible: tech firms are simultaneously creating new demand for AI talent while reducing headcount in lower‑value or automatable roles to free cash for GPUs and data‑centre builds. Oracle’s actions reflect this broader market dynamic, albeit at larger scale because of the company’s sizable RPO commitments.

Risks that deserve attention, and what could go wrong

- Execution risk in build‑outs: data‑centre construction faces supply‑chain, permitting, and power‑availability hurdles. Delays would extend cash burn and limit revenue recognition.

- Customer concentration: if a substantial share of RPO ties to a small set of customers and one reduces demand, Oracle’s revenue ramp could falter. That concentration amplifies downside volatility.

- Financing execution risk: raising tens of billions via debt and equity depends on market conditions. Tighter credit or weak equity demand would force tougher internal adjustments.

- Operational costs of layoffs: severance accounting captures immediate costs, but knowledge loss, vendor churn, and program delays can depress productivity and revenue, creating a feedback loop that increases near‑term risk.

- Regulatory and contractual complexity: multibillion‑dollar cloud contracts often contain SLAs and delivery milestones. Oracle must reconcile contractual delivery expectations with the practical realities of construction and equipment procurement.

What employees, partners and customers should watch now

- Oracle’s official disclosures on March 10: the Q3 results and accompanying call will be the company’s chance to explain near‑term capex, the financing mix, and any planned workforce actions. Analysts expect the call to include updated guidance on OCI spending and cash flow.

- WARN notices and regional filings: in the U.S., mass‑layoff WARN notices and similar filings in EU countries often provide early, localized confirmation of planned reductions; watch state and local labor records for concrete numbers.

- Reclassification of open roles: Oracle’s reported internal review of open cloud‑division listings is an early operational signal; candidates and hiring managers should expect delays or re‑scopes of job openings.

- Contract and SLA language: customers with large OCI commitments should re‑read agreements for delivery milestones, termination rights, and remedies if capacity delivery slips. These clauses will matter if build schedules lengthen.

Independent assessment: strengths and weaknesses of Oracle’s plan

Strengths

- Scale of demand and bookings: Oracle’s RPO provides a rare level of forward visibility for a cloud vendor; it’s evidence that large customers are willing to sign multibillion‑dollar deals with Oracle for AI capacity. That gives Oracle negotiating leverage and potential long‑term revenue.

- Enterprise relationships and legacy footprint: Oracle’s installed base and enterprise agreements provide routes to upsell AI services and to integrate new cloud offerings with existing enterprise deployments. That commercial channel is valuable and not easily replicated.

- Decisive capital strategy: management’s willingness to raise large capital indicates a commitment to fulfill contracts and to prioritize OCI growth over near‑term cash flow optics — a legitimate strategic choice if the company can execute.

Weaknesses / risks

- Massive up‑front costs: the very nature of GPU‑centric AI infrastructure means prolonged negative cash flow risk if the revenue ramp slips or costs exceed projections.

- Execution and supply constraints: building large clusters at global scale requires synchronizing equipment orders, regional power and real‑estate availability, and construction schedules — any single bottleneck can cascade.

- Workforce and morale costs: fast, deep cuts can erode trust, diminish productivity, and slow product roadmaps, especially if critical institutional knowledge leaves. Booked severance expenses only tell part of the story.

Practical takeaways for WindowsForum readers (IT professionals, customers, partners)

- If you are a customer evaluating OCI for AI workloads: require clarity on delivery timelines, ask for written SLAs addressing phased delivery, and build contingency plans (multi‑cloud or temporary outsourcing) in case capacity is delayed.

- If you are an Oracle employee or job candidate: expect hiring slowdowns in some cloud‑division roles; seek written status on open applications and prioritize roles tightly coupled to OCI engineering or data‑centre operations, which may be more resilient.

- If you are an investor or analyst: March 10’s earnings release and the company’s chosen capital‑raise mechanics will be decisive. Model scenarios should include a conservative capex ramp, possible temporary negative free cash flow, and dilution or higher interest expense depending on financing choices.

- If you are a supplier or systems integrator: prepare for renegotiations on timing and delivery; build flexibility into procurement and staffing plans in case Oracle shifts schedules to conserve cash.
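For readers building the kind of scenario model suggested in the investor takeaway above, the following is a minimal sketch of the "invest now, monetize later" timing mismatch. Every yearly cash-flow and capex figure is a made-up placeholder, not an Oracle estimate; only the $50 billion ceiling comes from the reported financing plan. The class and function names are hypothetical, and the model deliberately ignores interest expense and dilution.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    operating_cash_flow: list[float]  # $bn per year, hypothetical
    capex: list[float]                # $bn per year, hypothetical


def free_cash_flow(s: Scenario) -> list[float]:
    """Yearly free cash flow = operating cash flow minus capex."""
    if len(s.operating_cash_flow) != len(s.capex):
        raise ValueError("series must cover the same years")
    return [ocf - cx for ocf, cx in zip(s.operating_cash_flow, s.capex)]


def peak_funding_need(s: Scenario) -> float:
    """Largest cumulative cash shortfall over the horizon (0 if never negative)."""
    cumulative, shortfall = 0.0, 0.0
    for fcf in free_cash_flow(s):
        cumulative += fcf
        shortfall = max(shortfall, -cumulative)
    return shortfall


RAISE_CAP = 50.0  # $bn, the "up to $50 billion" figure reported in the article

# Placeholder inputs for illustration only; replace with your own estimates.
scenarios = [
    Scenario("conservative ramp", [20, 24, 30, 38], [30, 32, 30, 28]),
    Scenario("aggressive build", [20, 22, 30, 45], [45, 50, 40, 30]),
]

for s in scenarios:
    need = peak_funding_need(s)
    status = "within" if need <= RAISE_CAP else "exceeds"
    print(f"{s.name}: peak funding need ${need:.0f}bn ({status} the $50bn raise)")
```

With these placeholder inputs, the conservative ramp peaks at an $18bn cumulative shortfall and the aggressive build at $63bn. The point of the exercise is that the peak shortfall, rather than any single year's free cash flow, is what determines how much external financing a build-out requires.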

Conclusion

Oracle’s reported move to cut thousands of jobs is the most visible sign yet of a deeper bet: the company is willing to remap its balance sheet, operations, and workforce to chase a major share of the enterprise AI infrastructure market. That strategy combines a powerful advantage (large, contract‑backed RPOs) with structural financial risk from massive short‑term capital requirements and the operational complexity of building GPU‑scale data centres. The coming weeks will be crucial: Oracle has set its fiscal Q3 earnings call for March 10, which should provide concrete answers about financing, capex pacing, and the scale and focus of workforce changes. Until management lays out a clear plan that matches cash needs to build schedules and customer SLAs, stakeholders should treat reported job‑cut totals and analyst projections as working hypotheses rather than as settled fact.