Several thousand Microsoft Outlook users were left scrambling on the morning of July 10, 2025, after a sudden authentication-related service incident blocked mailbox access across Outlook’s web, desktop, and mobile surfaces — an outage Microsoft traced to a recent configuration change and addressed through targeted configuration updates and an expedited deployment plan.

Source: AOL.com Thousands reporting problems with Microsoft Outlook

Background

The outage first produced visible symptoms late on Wednesday, July 9, 2025, and complaint volumes peaked the following morning as users attempted to sign in and access mailboxes during work hours. Public outage trackers such as Downdetector recorded spikes in reports (more than a few thousand at peak in some feeds), and social platforms filled with user reports describing 401-style authentication failures and inboxes that would not load.

Microsoft posted incident updates on its Microsoft 365 service health dashboard indicating that the issue originated in authentication-related components and required configuration changes and component restarts to resolve. Early status notes warned the fix could take an “extended period,” but subsequent updates said the expedited deployment of the remediation was “progressing quicker than anticipated.” Those public notes, repeated verbatim by multiple outlets covering the incident, form the clearest record of Microsoft’s diagnosis and remediation steps.

What happened — a concise timeline

- July 9, ~22:20 UTC: Microsoft’s monitoring and support teams detect elevated error rates impacting Outlook authentication and mailbox access; initial user reports appear.

- Overnight and early July 10 (local mornings in many regions): Outage tracker counts climb into the high hundreds and then thousands in some feeds; users worldwide report inability to sign in, view inboxes, or send mail.

- Early Thursday: Microsoft posts an incident alert describing a failure in an authentication component that could prevent mailbox access via multiple connection methods and begins deploying a configuration-change fix.

- Later Thursday: Microsoft updates the incident, reporting that targeted configuration changes had resolved impact on many affected servers and that a worldwide deploy was underway; public trackers show complaint volumes dropping as the fixes propagate.

Technical analysis: authentication, configuration changes, and deployment trade-offs

The proximate cause Microsoft described

Microsoft’s official updates repeatedly referred to a failure within authentication components: the systems responsible for validating user credentials, issuing tokens, and enabling clients (web, desktop, mobile) to connect to mailboxes. The immediate mitigation took the form of configuration changes and component restarts, followed by validation steps to ensure authentication flows were operating normally.

Those two elements, configuration changes and authentication, are important because they suggest the outage was not a hardware failure or a network blackhole, but rather a logical/configuration problem in the identity/authentication layer. Problems at that layer typically manifest as widespread sign-in errors (HTTP 401s, token rejections) even when the underlying mailbox storage and transport subsystems remain healthy. Multiple incident write-ups and community telemetry matched that symptom pattern.
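A monitoring probe can make that symptom pattern concrete. If it sees mostly HTTP 401s while the service otherwise responds, the identity layer is the likely culprit rather than storage or the network. The sketch below is a hypothetical triage helper: `classify_probe`, `dominant_failure`, and the bucket names are illustrative and are not Microsoft tooling. It groups probe results by the layer most likely to have failed.

```python
from enum import Enum
from typing import List, Optional


class FailureLayer(Enum):
    """Rough bucket for where a failed mailbox request most likely broke."""
    OK = "ok"
    AUTHENTICATION = "authentication"  # HTTP 401: credentials or token rejected
    SERVICE = "service"                # HTTP 5xx: request authenticated, backend failed
    NETWORK = "network"                # no HTTP response at all (timeout, DNS, reset)
    OTHER = "other"                    # other status codes (404, 429, ...)


def classify_probe(status_code: Optional[int]) -> FailureLayer:
    """Map one probe result to a likely failure layer; None means no response arrived."""
    if status_code is None:
        return FailureLayer.NETWORK
    if status_code == 401:
        return FailureLayer.AUTHENTICATION
    if status_code >= 500:
        return FailureLayer.SERVICE
    if 200 <= status_code < 400:
        return FailureLayer.OK
    return FailureLayer.OTHER


def dominant_failure(status_codes: List[Optional[int]]) -> FailureLayer:
    """Return the most frequent non-OK layer in a batch of probes (OK if none failed)."""
    counts = {}
    for code in status_codes:
        layer = classify_probe(code)
        if layer is not FailureLayer.OK:
            counts[layer] = counts.get(layer, 0) + 1
    if not counts:
        return FailureLayer.OK
    return max(counts, key=counts.get)


# Mostly 401s with the occasional success: points at the identity layer.
print(dominant_failure([401, 401, 200, None, 401]))  # FailureLayer.AUTHENTICATION
```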

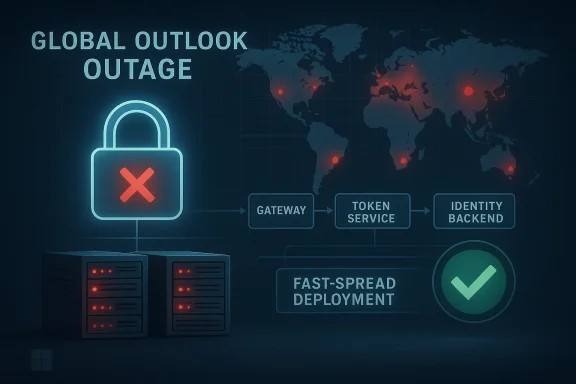

Why a configuration change can cascade

Modern cloud services typically use layered authentication stacks (frontend gateway → token service → identity backend → service authorizer). A configuration change in any of those elements can alter token validation rules, certificate chains, routing, or signing keys. Because authentication sits at the front line of every request, a malformed or incompatible configuration can affect every client at once.

- If token validation rules become stricter, existing tokens may be rejected.

- If a signing certificate is rotated prematurely or misapplied, authentication tokens issued by one component may not validate elsewhere.

- If regional configuration clusters diverge, the impact can be geographically uneven, which would be consistent with Microsoft’s reference to “regions which are experiencing the highest levels of impact.”
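The first two failure modes in the list above can be reproduced with a toy HMAC-signed token. This is a deliberately simplified stand-in. Real identity platforms use standard formats such as signed JWTs and published key sets, and the token layout, keys, and function names here are hypothetical. The example shows a single, otherwise valid user token being rejected when only the validator's configuration changes.

```python
import hashlib
import hmac

# Hypothetical toy token format: "<subject>.<issued_at>.<hex HMAC-SHA256 signature>".
# Real identity platforms use standard formats (e.g. signed JWTs); this only models the idea.


def sign_token(subject: str, issued_at: int, key: bytes) -> str:
    """Issue a toy token signed with the issuer's current key."""
    payload = f"{subject}.{issued_at}"
    sig = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}.{sig}"


def validate_token(token: str, trusted_keys: list, max_age_s: int, now: int) -> bool:
    """Accept a token only if a trusted key verifies it and it is not older than max_age_s."""
    subject, issued_at, sig = token.rsplit(".", 2)  # subject may itself contain dots
    payload = f"{subject}.{issued_at}"
    signature_ok = any(
        hmac.compare_digest(hmac.new(k, payload.encode(), hashlib.sha256).hexdigest(), sig)
        for k in trusted_keys
    )
    fresh = now - int(issued_at) <= max_age_s
    return signature_ok and fresh


NOW = 1_000_000                 # fixed clock so the example is deterministic
OLD_KEY = b"old-signing-key"    # hypothetical keys
NEW_KEY = b"new-signing-key"

# The issuer has already rotated to NEW_KEY; the token is 10 minutes old.
token = sign_token("alice@example.com", NOW - 600, NEW_KEY)

print(validate_token(token, [OLD_KEY, NEW_KEY], 3600, NOW))  # True: validator knows both keys
print(validate_token(token, [OLD_KEY], 3600, NOW))           # False: rotation not propagated
print(validate_token(token, [OLD_KEY, NEW_KEY], 300, NOW))   # False: stricter max-age rule
```

In none of these cases did the mailbox or the user's credentials change. Only the validator's trusted keys or its age rule did, and that alone is enough to turn successful sign-ins into rejections.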

Deployment methodology and the speed-vs-safety trade-off

Microsoft’s status notes explicitly referenced reviewing “options to leverage an expedited deployment methodology” to relieve the hardest-hit regions faster. That phrasing highlights a classic incident trade-off:

- A normal deployment includes phased rollouts, canary validation, and slow expansion; this minimizes the chance that a fix introduces regressions.

- An expedited deployment reduces time-to-relief but increases the risk that a less thoroughly validated fix introduces new failures.
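A back-of-the-envelope model makes this trade-off concrete. The wave sizes and bake times below are invented for illustration, since Microsoft has not published its rollout parameters. The sketch compares two quantities for each plan: how long it takes to reach the whole fleet, and the largest share of the fleet that switches to the change in a single step.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Wave:
    """One rollout stage."""
    fraction: float    # cumulative share of the fleet running the change after this wave
    bake_minutes: int  # observation window before the next wave starts


# Hypothetical plans; the real wave sizes and bake times are not public.
STANDARD = [Wave(0.01, 60), Wave(0.10, 120), Wave(0.50, 240), Wave(1.00, 0)]
EXPEDITED = [Wave(0.05, 15), Wave(1.00, 0)]


def minutes_to_full_fleet(plan: List[Wave]) -> int:
    """Total bake time before the final wave lands (deployment time itself ignored)."""
    return sum(w.bake_minutes for w in plan[:-1])


def largest_step(plan: List[Wave]) -> float:
    """Biggest single jump in coverage: the most a bad fix could break in one step."""
    previous = 0.0
    largest = 0.0
    for wave in plan:
        largest = max(largest, wave.fraction - previous)
        previous = wave.fraction
    return largest


for name, plan in (("standard", STANDARD), ("expedited", EXPEDITED)):
    print(name, minutes_to_full_fleet(plan), round(largest_step(plan), 2))
# standard 420 0.5
# expedited 15 0.95
```

With these assumed numbers, the expedited plan reaches full coverage in 15 minutes instead of 420. The cost is that it moves 95% of the fleet in one step, compared with 50% for the standard plan.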

What the public reporting does — and does not — prove

The public status notes and service-health messages make it clear the remediation was configuration-driven and authentication-focused. They do not, however, provide forensic depth on the underlying change that triggered the failure. For example, they do not say whether a human configuration change, automated orchestration, a bad rollout of a dependent library, or an external propagation problem caused the event. Several outlets and community threads repeated speculative causes; Microsoft’s statements avoided attribution beyond the functional level. Where claims go beyond Microsoft’s official account, they should be treated cautiously.

Impact: who was affected and how

Individual and small-business users

For many personal Outlook.com users, the outage was an immediate productivity irritant: inability to view time-sensitive messages, missed two-factor authentication emails, and trouble accessing calendar invitations. These impacts are especially acute when email functions as a central identity recovery path. Downdetector-style trackers and social platforms showed thousands of isolated reports from multiple countries during peak hours.

Enterprises and critical workflows

For businesses using Microsoft 365 as an operational backbone, the outage’s ripple effects included delayed communications, missed approvals, and disruption to workflows that embed email-driven automation. Organizations that rely on email for legal processes, invoicing, or client communications reported urgent headaches; in some cases, users resorted to out-of-band channels (phone, SMS, alternate mail systems) to meet deadlines. Community threads compiled by Windows-focused discussion hubs captured a broad set of real-world anecdotes illustrating these disruptions.

Geography and scale

Although complaint volumes differed by region, leading Microsoft to target the most affected geographies, the outage showed global reach: reports emerged from North America, Europe, Asia, and Australia within hours. Public reporting suggests the highest complaint counts coincided with morning work hours in multiple time zones, amplifying real-world business impact.

Microsoft’s response: what they did well and what fell short

Strengths in the response

- Rapid visibility and acknowledgement: Microsoft posted incident updates on the Microsoft 365 status dashboard, providing an authoritative, centralized account of the problem and its remediation plan. That transparency is vital in an enterprise-grade outage.

- Targeted remediation: The company identified authentication components and moved to apply configuration fixes, followed by validation. This shows disciplined, targeted troubleshooting rather than scattershot restarts.

- Escalation to expedited deployment: When impact persisted, Microsoft considered and used faster deployment techniques to alleviate high-impact regions — a pragmatic choice when business continuity is at stake.

Issues and communication friction

- Perceived pace and noise on social channels: Some users complained about a lack of immediate updates on social platforms and felt forced to rely on outage trackers and third-party reporting for clarity. In high-impact outages, many organizations expect near-real-time cross-channel updates.

- Limited forensic detail: Microsoft’s public statements stayed at a functional level; while appropriate for many incidents, the absence of deeper technical detail invites speculation and makes it harder for enterprise IT teams to draw lessons without waiting for a post-incident report. Community moderators and enterprise IT leads commonly ask for more thorough root-cause analyses after the fact.

Practical guidance: what users and admins should do now

Whether you’re an individual Outlook user or an enterprise admin, outages like this underline the need for pragmatic mitigations and readiness steps. Below are prioritized actions.

For individual users

- Check official service health first: consult your organization’s Microsoft 365 admin or Microsoft’s service health dashboard for authoritative status. Public outage trackers can be noisy and may not reflect the provider’s own diagnosis.

- Use cached/local mail: if you use Outlook desktop with an OST cache, continue working offline and compose messages; they will send automatically once connectivity/authentication is restored.

- Avoid repeated password churn: repeated password resets or reconfiguration attempts during a backend authentication outage can complicate support and recovery; wait for the service to be confirmed fixed before mass credential changes.

- Use alternate communication channels for urgent matters: phone, SMS, or other enterprise messaging tools are ordinary fallbacks during email outages.
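The offline-send behavior described above amounts to a local queue that is retried once the server accepts requests again. The class below models that idea conceptually and makes no claim about how Outlook actually implements it. `Outbox` and its `send` callback are hypothetical names.

```python
from collections import deque
from typing import Callable, Deque


class Outbox:
    """Conceptual model of an offline outbox: queue messages, send them once the server accepts."""

    def __init__(self, send: Callable[[str], bool]) -> None:
        self._send = send                    # returns True if the server accepted the message
        self._pending: Deque[str] = deque()

    @property
    def pending(self) -> int:
        return len(self._pending)

    def submit(self, message: str) -> None:
        """Queue a message and try to send immediately."""
        self._pending.append(message)
        self.flush()

    def flush(self) -> int:
        """Send queued messages in order, stopping at the first rejection; return how many went out."""
        sent = 0
        while self._pending and self._send(self._pending[0]):
            self._pending.popleft()
            sent += 1
        return sent


# Simulated outage: sign-in fails until the provider's fix lands.
auth_restored = {"value": False}
outbox = Outbox(lambda message: auth_restored["value"])
outbox.submit("Quarterly report")
outbox.submit("Invoice follow-up")
print(outbox.pending)   # 2: both held locally
auth_restored["value"] = True
print(outbox.flush())   # 2: both sent once authentication works again
```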

For IT administrators and enterprise teams

- Validate that the outage is service-side before broad reconfigurations. If the provider has acknowledged the outage, avoid enterprise-wide credential resets, which could compound the problem.

- Inform stakeholders and activate contingency plans (alternate mail relay, emergency contact lists, manual approval processes) to maintain critical operations.

- After restoration, gather telemetry: collect sign-in logs, token error types, and client versions impacted to feed into post-incident analysis. This evidence will be necessary if the root cause involves configuration divergence or token validation policy changes.

- Harden identity flows: consider adding redundant identity providers where possible, enforce robust monitoring on authentication metrics, and maintain documented rollback procedures for configuration changes. These steps reduce blast radius when identity or token issuance systems change.
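Admins can partly automate the first step above, confirming that an outage is service-side. Microsoft Graph exposes tenant service-health issues through a `GET` on `https://graph.microsoft.com/v1.0/admin/serviceAnnouncement/issues`, which requires the `ServiceHealth.Read.All` permission. The sketch below assumes a response has already been fetched and only filters it. Token acquisition and HTTP calls are left out. The field names and the `"Exchange Online"` service label are assumptions based on the documented `serviceHealthIssue` resource, so check them against current Graph documentation before relying on them.

```python
from typing import Any, Dict, List


def open_exchange_incidents(issues_payload: Dict[str, Any]) -> List[str]:
    """List unresolved Exchange Online incidents from a service-health issues response.

    Assumes the payload follows the Microsoft Graph list shape {"value": [issue, ...]},
    with issue fields such as id, title, service, classification and isResolved.
    """
    open_items = []
    for issue in issues_payload.get("value", []):
        if (
            issue.get("service") == "Exchange Online"
            and issue.get("classification") == "incident"  # ignore lower-severity advisories
            and not issue.get("isResolved", False)
        ):
            open_items.append(f"{issue.get('id')}: {issue.get('title')}")
    return open_items


# Made-up sample data; real IDs and titles will differ.
sample = {
    "value": [
        {"id": "EX-SAMPLE-1", "service": "Exchange Online", "classification": "incident",
         "isResolved": False, "title": "Users can't access mailboxes"},
        {"id": "EX-SAMPLE-2", "service": "Exchange Online", "classification": "advisory",
         "isResolved": False, "title": "Delays in message delivery"},
        {"id": "TM-SAMPLE-3", "service": "Microsoft Teams", "classification": "incident",
         "isResolved": False, "title": "Chat messages not loading"},
    ]
}
print(open_exchange_incidents(sample))  # ["EX-SAMPLE-1: Users can't access mailboxes"]
```

If the list is non-empty and its description matches what users are seeing, holding off on tenant-wide credential or configuration changes is usually the safer choice.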

Broader lessons and risks for cloud-dependent organizations

Cloud convenience comes with concentrated risk

Email and identity are foundational services; consolidating them with a single cloud provider improves manageability but concentrates failure modes. When authentication fails at the platform level, many dependent services (mail, calendar, encrypted file access) become simultaneously impaired. The incident illustrates why organizations should design for graceful degradation and maintain out-of-band processes for critical operations.

Change control and observability are paramount

The outage appears tied to a configuration change. Cloud providers operate at a scale where even small logical misconfigurations can cascade. That reality underscores the need for:

- Rigorous change control (automated testing, canary deployments, staged rollouts).

- Greater telemetry and health-signal visibility for customers (detailed error codes and affected UX surfaces).

- Rapid rollback capability and clearly documented emergency rollout plans.
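The three controls above fit together as a gate. A team stages the change on a small group, compares an identity health signal against the untouched fleet, and rolls back automatically if that signal degrades. This is a minimal sketch. The callbacks, group names, and the one-percentage-point threshold are hypothetical placeholders for whatever deployment and telemetry tooling a team actually uses.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CanaryResult:
    promoted: bool
    canary_failure_rate: float
    baseline_failure_rate: float


def canary_gate(
    apply_change: Callable[[str], None],
    rollback_change: Callable[[str], None],
    failure_rate: Callable[[str], float],
    canary: str,
    baseline: str,
    max_increase: float = 0.01,
) -> CanaryResult:
    """Apply a change to one canary group; keep it only if sign-in failures stay near baseline.

    failure_rate(group) is assumed to return the fraction of sign-in attempts rejected
    in that group over a recent observation window.
    """
    apply_change(canary)
    canary_rate = failure_rate(canary)
    baseline_rate = failure_rate(baseline)
    promoted = canary_rate - baseline_rate <= max_increase
    if not promoted:
        rollback_change(canary)  # automatic rollback keeps the blast radius to the canary
    return CanaryResult(promoted, canary_rate, baseline_rate)


# Simulated fleet: a bad config change makes 40% of canary sign-ins fail.
rates = {"canary": 0.002, "baseline": 0.002}
result = canary_gate(
    apply_change=lambda group: rates.__setitem__(group, 0.40),
    rollback_change=lambda group: rates.__setitem__(group, 0.002),
    failure_rate=lambda group: rates[group],
    canary="canary",
    baseline="baseline",
)
print(result.promoted, rates["canary"])  # False 0.002
```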

Regulatory and contractual exposure

For larger organizations, outages translate to contractual risk (SLA credits, potential regulatory scrutiny in heavily regulated industries). Enterprises should review their continuity obligations and ensure contractual protections and remediation expectations with providers are clear and actionable. Community threads reflected discussions about SLA expectations and the practical limits of recourse following platform outages.

What we don’t know: points that need careful vetting

Multiple community posts and some secondary outlets speculated about deeper causes (a bad rollout, an automation bug, or even malicious action). Microsoft’s public messages focused on configuration changes affecting authentication and avoided specifying the precise trigger. Until Microsoft publishes a formal post-incident root-cause report, any detailed theory about the initial error should be treated as speculative. Good practice is to wait for the provider’s forensic release while preparing to adapt internal controls based on confirmed findings.

How this incident fits into the recent reliability landscape

This outage, measured in hours for most users but a significant business disruption for many, is part of a pattern of occasional high-impact incidents affecting large cloud platforms. Microsoft has experienced similar high-profile incidents in recent months; each one has added to debate about cloud centralization, vendor transparency, and enterprise preparedness. Community and trade reporting shows the incident was broadly covered and quickly moved into technical discussion threads where operators compare mitigation strategies and post-mortem expectations.

Practical recommendations for long-term resilience

- Adopt a layered identity strategy where key services have secondary authentication paths or emergency access accounts that are isolated from routine configuration changes.

- Build and rehearse incident playbooks that include clear criteria for alternate communication channels and manual workflow activation.

- Monitor not just service availability but identity/token metrics; early deviations in token issuance rates or validation rejection patterns often precede full-scale outages.

- Negotiate SLAs that include not only uptime but meaningful operational commitments around incident communication and post-incident forensic reports.
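Token-rejection metrics can serve as a simple early-warning signal. The sketch below uses one common technique, a rolling z-score, and does not describe Microsoft's monitoring. The window length, threshold, and variance floor are assumptions to tune against real telemetry.

```python
from statistics import mean, pstdev
from typing import List


def rejection_alerts(
    rates: List[float],
    window: int = 12,
    z_threshold: float = 3.0,
    min_sigma: float = 0.001,
) -> List[int]:
    """Return indices where the token-rejection rate jumps far above its recent baseline.

    rates[i] is the fraction of token validations rejected in interval i. Each point is
    scored against the mean and population standard deviation of the preceding `window`
    points; min_sigma floors the deviation so a flat baseline neither divides by zero
    nor turns tiny wiggles into alerts.
    """
    alerts = []
    for i in range(window, len(rates)):
        history = rates[i - window:i]
        sigma = max(pstdev(history), min_sigma)
        z = (rates[i] - mean(history)) / sigma
        if z > z_threshold:
            alerts.append(i)
    return alerts


# Twelve quiet intervals at 0.2% rejections, then a climb that ends in an outage.
series = [0.002] * 12 + [0.0025, 0.004, 0.02, 0.35]
print(rejection_alerts(series))  # [14, 15]
```

With these assumed settings, the detector flags interval 14 (2% rejections), one interval before the rate reaches 35%. The small rises at intervals 12 and 13 stay below the threshold.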

Conclusion

The July 9–10, 2025 Outlook incident is a reminder that cloud-scale services, even when finely engineered, remain vulnerable to configuration and identity-layer failures that can ripple quickly across millions of users. Microsoft’s public remediation path, a focused configuration fix followed by an expedited deployment and validation, brought the service back for most users. Even so, the episode left enterprise teams and individual users alike with practical questions about preparedness, communication, and the acceptable balance between safety and speed when rolling changes through global infrastructure.

For now, actionable steps are straightforward: follow Microsoft’s service health announcements, rely on cached/offline email workflows where available, avoid wide credential churn during provider-side authentication incidents, and treat this outage as a prompt to strengthen identity redundancy, incident playbooks, and telemetry for the next inevitable disruption.
