EPC Group’s new six-layer architecture reimagines Microsoft Power BI as an enterprise decision intelligence platform — extending Microsoft’s Copilot with AutoML, LLMs, cognitive enrichment, RAG-based retrieval, agentic automation, and continuous forecasting so that dashboards don’t just report history but proactively surface and act on future risks and opportunities.

Source: The National Law Review EPC Group Expands Power BI Copilot With Enterprise Multi-Model AI Architecture

Background

Power BI has steadily evolved from a self-service reporting tool into a richer, AI-infused analytics surface. Microsoft’s Copilot for Power BI introduces chat-driven analysis, DAX generation, and conversational access to semantic models, features that dramatically lower the bar for everyday analysts to ask natural-language questions of governed datasets. But Copilot alone does not equate to an enterprise-grade decision platform: it addresses query and narrative generation, not the full stack of predictive modeling, multimodal enrichment, model-grounding, or agentic workflows needed for proactive decision-making.

At the same time, Microsoft Fabric and its associated services (AutoML powered by FLAML, Azure AI Search, Azure OpenAI Service, and Cognitive Services) provide building blocks, including vector search, embeddings, document intelligence, and scalable model hosting, that make integrated, multi-model AI architectures technically feasible on the Microsoft stack. These platform advances are what EPC Group’s announcement is attempting to bind into a prescriptive, repeatable template for enterprise Power BI deployments.

What EPC Group announced — a practical summary

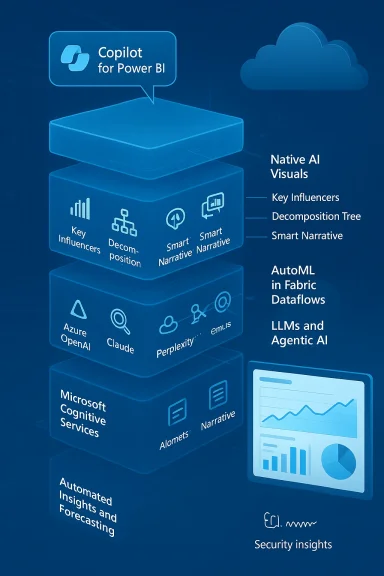

EPC Group has published and promoted a structured, six-layer architecture that sits on top of Microsoft Fabric and Power BI. In brief, the layers are:

- Layer 1 — Copilot for Power BI: Natural-language conversational access and DAX generation via Microsoft Copilot.

- Layer 2 — Native AI visuals: Standardized deployment of Power BI’s AI visuals (Key Influencers, Decomposition Tree, Smart Narrative, anomaly detection, Q&A) via governed patterns.

- Layer 3 — AutoML in Fabric dataflows: Train, score, and operationalize predictive models inside Fabric (FLAML-powered AutoML) and publish scored outputs into semantic models.

- Layer 4 — LLM and agentic AI integration: Secure API-based integration with Azure OpenAI, OpenAI models, Anthropic/Claude, Perplexity, and open‑source models (Llama, Mistral), plus RAG via vector search to ground responses.

- Layer 5 — Microsoft Cognitive Services enrichment: Preprocessing and enrichment of unstructured sources (text classification, sentiment, entity extraction, document intelligence) before loading into Fabric.

- Layer 6 — Automated insights & forecasting: Continuous anomaly detection, built‑in forecasting, and Copilot‑generated narratives that surface trends and trigger alerts for decision-makers.

Why this matters: from reporting to decision intelligence

Power BI’s mainstream adoption across enterprises creates a high-leverage surface for adding AI. The difference between a dashboard and a decision intelligence platform is not a single feature; it is the integration of pipelines, models, grounding, governance, and operational controls that let executives rely on AI-driven outputs for action.

- Copilot reduces friction for asking questions and generating DAX, but it does not automatically produce certified predictions, nor does it persist modelled outputs for continuous monitoring. Layering AutoML and model scoring directly into dataflows addresses that gap by making predictions first-class data artifacts inside the analytics lake/semantic model.

- Native AI visuals and narrative features provide explainable outputs that are digestible in executive dashboards, improving adoption. Standardizing those visuals and explanations across a governed pattern library reduces variance between reports — a common enterprise pain point.

- RAG and vector search let LLMs answer questions grounded in the latest internal policies, contracts, or product specs rather than on the model’s training data alone, which reduces the risk of hallucinated answers. Embedding RAG into Fabric and Power BI makes conversational analytics more auditable and traceable.

For decision-makers, the practical benefits are:

- Faster answers to complex questions, with transparent “why” explanations (root causes, influential variables).

- Predictive signals embedded in the same dashboards executives already use, enabling earlier, data‑driven action.

- Conversational and semi-autonomous workflows — where agents fetch data, run a model, and provide a recommended action — reducing manual handoffs.
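The grounding idea behind RAG can be illustrated with a minimal, dependency-free sketch. It assumes the documents have already been embedded; the three-dimensional vectors, document ids, and function names below are toy placeholders rather than anything from Azure AI Search or EPC Group’s design. A real deployment would call an embedding model and a managed vector index instead.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query_vec, index, top_k=2):
    """Rank indexed documents by similarity to the query and keep the best top_k."""
    scored = [(cosine(query_vec, doc["vector"]), doc) for doc in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [
        {"id": doc["id"], "text": doc["text"], "score": round(score, 3)}
        for score, doc in scored[:top_k]
    ]

def build_grounded_prompt(question, hits):
    """Assemble a prompt that restricts the model to the retrieved sources."""
    sources = "\n".join(f"[{h['id']}] {h['text']}" for h in hits)
    return (
        "Answer using only the sources below and cite their ids.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )

# Toy index: hypothetical documents with hand-written embeddings.
INDEX = [
    {"id": "policy-7", "text": "Refunds are allowed within 30 days.", "vector": [0.9, 0.1, 0.0]},
    {"id": "contract-12", "text": "Net payment terms are 45 days.", "vector": [0.1, 0.9, 0.1]},
    {"id": "spec-3", "text": "The device supports USB-C charging.", "vector": [0.0, 0.1, 0.9]},
]

hits = retrieve([0.8, 0.2, 0.0], INDEX, top_k=2)
prompt = build_grounded_prompt("What is the refund window?", hits)
```

Because each hit carries its id and similarity score, the same structure can later be logged as provenance for the answer.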

Technical reality check: what Microsoft supports today (and what it does not)

EPC Group’s stack is grounded in existing Microsoft capabilities, but enterprises must know which pieces are mature and which are emerging:

- Copilot for Power BI is shipping and supported, but it carries capacity and licensing prerequisites. Copilot experiences require Microsoft Fabric capacity or Power BI Premium (and some experiences are preview-only). Admins can enable or disable tenant-level settings to control exposure. This means organizations must plan capacity (F-series or P-series SKUs) and governance before rolling Copilot out to broad audiences.

- AutoML inside Fabric is available (FLAML-based) but many capabilities are still in preview and require testing for production SLAs. Fabric’s AutoML can automate model selection and hyperparameter tuning, and it integrates with Fabric dataflows and lakehouses. Enterprises should expect to define model monitoring, drift detection, and retraining schedules rather than assuming a “set-and-forget” outcome.

- RAG, vector search, and LLM hosting are first-class patterns on Azure, with robust Microsoft guidance. Azure AI Search (formerly Cognitive Search), Azure OpenAI, and Fabric notebooks offer documented ways to build RAG solutions and link them into analytic workflows. That makes EPC Group’s claim of RAG-based grounding technically feasible. However, integrating third-party LLMs (Anthropic, Perplexity, open-source Llama/Mistral) introduces network, licensing, and data‑residency considerations that need careful architecture.

- Power BI’s native AI visuals and anomaly/forecasting features are established, and they can be used immediately to surface explainable insights. But the fidelity of those features depends on data model quality — semantic models with well-defined measures and metadata yield better automated narratives and DAX outputs.
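The point about drift detection and retraining schedules can be made concrete with a small sketch. The article does not name a drift metric; the Population Stability Index (PSI) used here is a swapped-in, commonly used choice, and the 0.2 threshold is a rule of thumb treated as an assumption. Function names and sample data are hypothetical.

```python
import math

def psi(expected, actual, bins=5, eps=1e-6):
    """Population Stability Index between a baseline sample and a recent sample.

    Bins are equal-width over the baseline range; values outside that range
    are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant baselines

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def needs_retraining(expected, actual, threshold=0.2):
    """Flag a feature (or score) distribution whose PSI exceeds the threshold."""
    return psi(expected, actual) >= threshold

# Hypothetical data: training-time scores vs. a shifted production sample.
baseline = [i / 100 for i in range(100)]
shifted = [v + 0.4 for v in baseline]

stable_flag = needs_retraining(baseline, baseline)
drift_flag = needs_retraining(baseline, shifted)
```

A check like this would typically run on a schedule against each scored table, with the flag feeding the retraining trigger defined before go-live.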

Strengths of EPC Group’s multi-model approach

- Platform cohesion: By centering the design on Microsoft Fabric + Power BI, the architecture uses native security, identity, and governance constructs (Entra ID, capacity controls, workspace-level permissions), reducing integration friction for Microsoft-first enterprises.

- Practical producibility: AutoML inside Fabric, Copilot’s DAX and narrative generation, and Azure AI Search’s RAG patterns are already documented and supported — so the architecture can be built using supported components rather than entirely bespoke integrations.

- Operationalizing models as data: Training models inside Fabric dataflows and writing predicted scores into semantic models is a pragmatic design that makes AI artifacts visible, auditable, and consumable by non‑data‑science teams. This reduces the “throw it over the wall” problem between data science and BI teams.

- Multi-model flexibility: Allowing model routing across Azure OpenAI, OpenAI, Anthropic, and open-source runtimes offers workload‑centric model selection (e.g., use one model for summarization, another for reasoning). That reduces vendor lock-in risk and can optimize cost-performance.
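Workload-centric model selection can be sketched as a small routing table. This is an illustration under stated assumptions, not EPC Group’s routing logic: the task names, provider labels, model names, and the `allows_regulated_data` flag are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Route:
    provider: str                 # hypothetical provider label
    model: str                    # hypothetical model name
    allows_regulated_data: bool   # whether regulated data may be sent here

# Hypothetical routing table: one entry per workload type.
ROUTES = {
    "summarization": Route("azure-openai", "hypothetical-small-model", True),
    "reasoning": Route("external-api", "hypothetical-large-model", False),
}

# Fallback used for unknown tasks and for regulated data on restricted routes.
DEFAULT = Route("azure-openai", "hypothetical-default-model", True)

def pick_route(task, contains_regulated_data):
    """Choose a model for the task, never sending regulated data to a restricted route."""
    route = ROUTES.get(task, DEFAULT)
    if contains_regulated_data and not route.allows_regulated_data:
        return DEFAULT
    return route

summary_route = pick_route("summarization", contains_regulated_data=True)
reasoning_route = pick_route("reasoning", contains_regulated_data=False)
guarded_route = pick_route("reasoning", contains_regulated_data=True)
```

Keeping the data-residency rule inside the router, rather than in each caller, means a single place to audit when a new provider is added.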

Risks, gaps, and areas enterprises must scrutinize

- Governance and RLS gaps: Natural language interfaces and agentic agents amplify the impact of misconfigurations. Copilot and RAG workflows can inadvertently expose sensitive rows or documents if row-level security or document-level access controls are not enforced end‑to‑end. Microsoft’s Copilot and RAG guidance note the need for tenant settings and for careful use of workspace-level controls — but those are administrative controls, not automatic enforcement. Enterprises must validate RLS and document‑level trimming in any agentic flow.

- Model explainability and audit trails: When predictions, automated narratives, and agentic actions are surfaced to executives, teams must provide provenance (which model, which training data, embedding matches used in RAG, similarity scores). LLM outputs especially can be brittle; without clear audit trails, firms risk making decisions from opaque model answers. Microsoft’s RAG patterns include options for traceability, but engineering discipline and telemetry are required.

- Regulatory, privacy, and residency constraints: Integrating external LLMs or using public model APIs can create data egress issues for regulated sectors (finance, healthcare, government). Enterprises should map data classifications and consider using on‑tenant/vCore-based services, private Azure regions, or on‑premises (when available) to meet compliance needs. These are non‑trivial architecture decisions.

- Cost and capacity planning: Copilot and RAG workloads require paid Fabric or Premium capacity and can incur additional compute costs for vector indexes, embeddings, and LLM inference. A holistic cost model must include Fabric capacity, Azure OpenAI or third-party model inference charges, storage for embeddings, and monitoring/agent orchestration costs. Microsoft docs explicitly call out capacity prerequisites for Copilot experiences.

- Vendor claims vs. verifiable outcomes: EPC Group’s press materials include high-impact assertions — implementation counts and leadership recognitions — that align with their marketing narrative. While EPC Group’s site and press releases document many customer projects and milestones, some awards or rankings (e.g., “Top 10 AI Architects in North America”) lack independent third‑party verification in public records; treat those as claimed credentials and validate them during vendor selection.
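Document-level trimming in a RAG flow can be checked with a test as simple as the sketch below. It assumes each indexed document carries an `allowed_groups` list and that the caller’s group memberships are known; both the field name and the sample documents are hypothetical, and a production system would enforce the filter inside the search service rather than in application code.

```python
def trim_hits(hits, user_groups):
    """Keep only hits whose ACL shares at least one group with the caller."""
    allowed = set(user_groups)
    return [h for h in hits if allowed.intersection(h["allowed_groups"])]

def leaked_ids(hits, user_groups):
    """Return ids of hits the caller should not see; an empty list means the check passed."""
    allowed = set(user_groups)
    return [h["id"] for h in hits if not allowed.intersection(h["allowed_groups"])]

# Toy retrieval results with hypothetical access-control metadata.
RAW_HITS = [
    {"id": "hr-001", "allowed_groups": ["hr"], "text": "Compensation policy."},
    {"id": "fin-014", "allowed_groups": ["finance", "exec"], "text": "Quarterly margin summary."},
    {"id": "pub-100", "allowed_groups": ["all-staff"], "text": "Holiday calendar."},
]

analyst_groups = ["finance", "all-staff"]
visible = trim_hits(RAW_HITS, analyst_groups)
```

Running `leaked_ids` against the documents that actually reach the prompt, for a set of representative test users, gives a repeatable verification step for any agentic flow.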

Implementation playbook: how enterprises should evaluate a six-layer Power BI AI architecture

If your organization is considering an EPC Group–style integration, use this practical checklist during due diligence and planning:

- Governance and access controls
  - Inventory sensitive datasets and classify them by regulatory profile.
  - Define an RLS and document trimming verification plan for Copilot and all RAG flows.
  - Ensure tenant-wide Copilot settings and Azure OpenAI usage are audited.

- Capacity and cost modeling
  - Map expected query volume and embedding/index size; simulate costs for Fabric capacity (F/P SKUs), Azure OpenAI inference, and Azure AI Search.
  - Include ongoing MLOps costs: model retraining cycles, monitoring, and incident response.

- Provenance and explainability requirements
  - Implement logging that links Copilot responses to the vector hits or model artifacts that generated them.
  - Surface model metadata in dashboards (model id, version, last retrained, validation metrics).

- Model and data lifecycle
  - Use AutoML trials in Fabric for prototyping, then elevate to guarded model development with explicit test datasets and performance baselines.
  - Define drift detection and retraining triggers before going to production.

- Security and privacy engineering
  - Restrict external LLM use for regulated data unless using approved private deployments or on-tenant inference.
  - Apply data minimization for prompts and use encryption at rest and in transit for embeddings and indexes.

- Human-in-the-loop and SLA design
  - For any agentic action that modifies systems or sends communications, require human approval gates until models meet conservative accuracy thresholds.
  - Define SLAs for alerting velocity, false positive tolerances, and remediation workflows.
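Two checklist items, provenance logging and human approval gates, can be combined in one small sketch. Everything here is illustrative: the `ProposedAction` fields, the 0.95 accuracy floor, and the status strings are assumptions, not part of any Microsoft or EPC Group API.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ProposedAction:
    description: str
    has_side_effects: bool   # modifies a system or sends a communication
    model_id: str
    model_version: str
    source_ids: list = field(default_factory=list)  # vector hits behind the recommendation

def gate(action, validated_accuracy, min_accuracy=0.95):
    """Hold side-effecting actions for human approval until the model clears the accuracy floor."""
    needs_human = action.has_side_effects and validated_accuracy < min_accuracy
    record = asdict(action)
    record["validated_accuracy"] = validated_accuracy
    record["status"] = "pending_human_approval" if needs_human else "released"
    return record

# Hypothetical agent recommendation with its provenance attached.
action = ProposedAction(
    description="Email regional managers about a forecast stockout",
    has_side_effects=True,
    model_id="demand-forecast",
    model_version="3",
    source_ids=["policy-7", "contract-12"],
)

record = gate(action, validated_accuracy=0.91)
audit_line = json.dumps(record, sort_keys=True)  # one line per decision for the audit log
```

Writing the gate decision and the provenance into the same record means an auditor can see, for each action, which model version and which retrieved sources were behind it.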

A pragmatic cost/benefit framing

EPC Group frames the opportunity as a substantial integration market (the announcement highlights a “$50M” figure in marketing language). For an enterprise reader, the relevant question is not the headline number but whether the program reduces time-to-decision, improves forecast accuracy, and reduces expensive manual escalation.

- Benefits likely to produce measurable ROI: reduced decision latency (fewer hours to insight), improved forecasting accuracy that drives inventory or labor optimization, and fewer missed anomalies that cause revenue leakage.

- Costs are concrete and ongoing: Fabric capacity, LLM inference costs, vector-index storage/ops, MLOps overhead, and governance/engineering staffing. Forecasting five‑ to seven‑figure annual run rates for large deployments is reasonable; exact numbers depend on query volume, model choices, and data size.
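The cost components listed above can be rolled into a simple annual run-rate calculation. The sketch below is arithmetic only; every figure passed in is a made-up placeholder, not a Microsoft or vendor price, and the function and parameter names are hypothetical.

```python
def annual_run_rate(capacity_monthly, queries_per_month, tokens_per_query,
                    price_per_1k_tokens, index_monthly, staffing_annual):
    """Sum the main recurring cost lines into an annual figure with a breakdown."""
    inference_monthly = queries_per_month * tokens_per_query / 1000 * price_per_1k_tokens
    breakdown = {
        "capacity": 12 * capacity_monthly,
        "inference": 12 * inference_monthly,
        "vector_index": 12 * index_monthly,
        "staffing": staffing_annual,
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown

# Placeholder inputs for illustration only; substitute quoted prices and measured volumes.
estimate = annual_run_rate(
    capacity_monthly=8_000,
    queries_per_month=200_000,
    tokens_per_query=1_500,
    price_per_1k_tokens=0.01,
    index_monthly=500,
    staffing_annual=300_000,
)
```

Keeping the breakdown alongside the total makes it easy to see which input, such as query volume or staffing, dominates the estimate as assumptions change.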

How vendors and partners (including EPC Group) should be evaluated

When selecting a systems integrator to build a multi-model Power BI architecture, prioritize evidence over rhetoric:

- Look for documented implementations with measurable outcomes (not just implementation counts). Request sanitized case studies that show forecast lift, time-to-insight reductions, or anomaly detection ROI. EPC Group’s press materials cite hundreds of Fabric/Power BI engagements; validate selected references.

- Demand security engineering evidence: architecture diagrams showing RLS enforcement across RAG queries, proof of encryption for embeddings, and least‑privilege model execution.

- Ask for a staged delivery plan: Discovery → Pilot (limited dataset and use case) → Hardening (model governance, monitoring) → Rollout. Avoid vendors that propose enterprise-scale automation without measurable pilots.

- Verify vendor claims: when marketing cites awards or rankings, ask for primary documentation. Vendor reputations are helpful, but independent third‑party verification and reference checks are essential.

Where this approach will likely show early wins — and where it won’t

Early-win scenarios:

- Executive dashboards that require concise narratives and root-cause explanations: Copilot + Smart Narrative + Key Influencers can dramatically speed CEO/board-ready reports.

- Operational forecasting pipelines with moderate complexity: AutoML in Fabric can quickly produce time-series or classification models that feed pre-built dashboards, making predictive insights operational.

- Document-heavy domains (contracts, customer feedback): Cognitive Services + RAG improves the accuracy of LLM answers by grounding them in current contracts and extracted entities.

Scenarios that are harder to win early:

- Highly regulated, high-consequence decision automation (e.g., automated underwriting without rigorous model certification); this requires heavier governance than a standard BI program.

- Use cases that require sub-second inference at global scale for hundreds of millions of queries per day — architecture and cost constraints can make these expensive on current managed LLM services without careful engineering.

Bottom line: the architecture is sound — but organizational execution is the real challenge

EPC Group’s six-layer blueprint maps well to current Microsoft capabilities: Copilot for conversational analytics, AI visuals for explainability, AutoML in Fabric for predictions, RAG and vector search for grounding, Cognitive Services for enrichment, and automated forecasting for continuous signals. Microsoft’s documentation and samples show that each block is technically realizable within Fabric and Azure, and publicly available guidance exists for RAG, embeddings, and model integration.

But the transformation from “technology stack” to “enterprise decision platform” depends on rigorous governance, capacity planning, cost control, and proof, not just feature wiring. Organizations must treat the program as an ongoing product with measurement, human oversight, and conservative rollout of agentic automation. Vendor frameworks that promise rapid enterprise-wide change are valuable, but buyers should insist on measurable pilots, security attestations, and transparent provenance for all model-driven recommendations.

Practical next steps for analytics leaders

- Run a focused 8–12 week pilot on a single high-value use case (e.g., customer churn or supply-demand forecasting) using Copilot‑enabled exploration, AutoML prototypes in Fabric, and a RAG-backed Copilot query surface. Measure business KPIs alongside technical metrics (precision/recall, forecast error).

- Define governance guards up front: RLS verification plans, model lineage logging, prompt/data minimization policies, and human approval gates for any automated actions.

- Build a cost projection that includes Fabric capacity, LLM inference, vector indexes, storage, and ongoing MLOps staff.

- Require vendors to provide reproducible engineering artifacts: notebooks, deployment templates, CI/CD for models, and evidence of compliance practices. Validate vendor case studies with client references.
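The technical metrics named for the pilot (precision/recall and forecast error) can be computed with a few lines of plain Python. The article does not specify which forecast-error measure to use; mean absolute percentage error (MAPE) is assumed here as one common option, and the sample labels and values are invented for illustration.

```python
def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels (1 = positive, 0 = negative)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def mape(actual, forecast):
    """Mean absolute percentage error, skipping periods where the actual value is zero."""
    pairs = [(a, f) for a, f in zip(actual, forecast) if a != 0]
    if not pairs:
        raise ValueError("MAPE is undefined when every actual value is zero")
    return 100 * sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)

# Invented pilot data: churn labels and two periods of demand.
churn_true = [1, 0, 1, 1, 0]
churn_pred = [1, 1, 0, 1, 0]
precision, recall = precision_recall(churn_true, churn_pred)

forecast_error = mape([100, 200], [110, 180])
```

Tracking these alongside the business KPIs for each pilot week gives a baseline that later vendor claims of "forecast lift" can be compared against.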

Conclusion

EPC Group’s enterprise multi-model architecture for Power BI is a pragmatic, platform-aligned attempt to move analytics from passive reporting to proactive decision intelligence. The technical building blocks exist today in Microsoft Fabric, Power BI, Azure OpenAI, Azure AI Search, and Cognitive Services, and EPC Group’s model stitches these into a consistent, consultative offering.

That said, the real determinant of success will be governance, reproducible engineering, and cautious operationalization. Enterprises should welcome architectures that broaden Power BI’s value, but they should insist on pilots, traceability, capacity planning, and independent verification of vendor claims before scaling a Copilot-centric, agentic analytics program across mission-critical decision-making.
