In a compact but consequential episode of First Ring Daily, hosts Brad Sams and Paul Thurrott unpack two related shifts that are now moving from pilot projects into mainstream planning: the everyday use of large language models (LLMs) by pragmatic developers for small code tasks, and Microsoft’s accelerating push to fold Copilot into healthcare workflows. The episode flags a reality IT teams must face today — AI is already a practical tool for both coding and clinical productivity, but it raises operational, security, and governance questions that organizations cannot ignore. (petri.com)

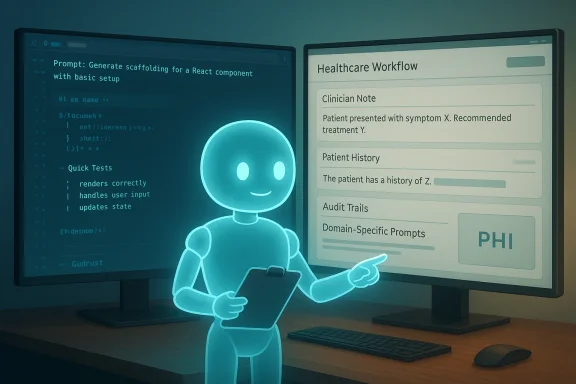

Background / Overview

The First Ring Daily segment is part of a steady stream of short-format tech commentary that highlights how trends in AI and Microsoft’s Copilot ecosystem are intersecting with real-world work patterns. In this episode, the hosts covered two threads: first, how experienced engineers are using LLMs to accelerate small code tasks without abandoning human review; second, the expansion of Microsoft’s Copilot offerings into healthcare environments, where Microsoft is already testing and deploying variants of Copilot to assist clinicians and administrative staff. (petri.com)

Microsoft’s broader healthcare strategy has been visible for some time: Copilot-related services, partnerships with health systems, and purpose-built healthcare agents in Copilot Studio all point to a multiyear push to make AI a practical part of clinical workflows. Public and private pilots have reported productivity gains. Headline figures, such as the NHS pilot that reported average time savings of tens of minutes per clinician per day, illustrate the potential but also demand careful interpretation.

What Brad Sams and Paul Thurrott Said — A Practical Summary

- They described how LLMs are being used by developers not as replacement engineers, but as accelerants for routine coding tasks: scaffolding, boilerplate generation, prototyping, and even exploratory problem solving.

- They framed the practice as selective and pragmatic: engineers lean on LLMs for small, clearly bounded tasks and always apply manual review and testing before promotion to production.

- The hosts contrasted consumer hype about “AI replacing programmers” with real-world behavior from senior engineers and maintainers who use LLMs as a tool in the toolchain rather than as an autonomous author. (petri.com)

Microsoft Copilot’s Push into Healthcare — What’s New

Microsoft has layered several initiatives under its health-and-AI umbrella:

- Copilot Health and consumer-facing health experiences meant to help users navigate symptom checking and health information.

- DAX Copilot and Dragon Copilot offerings for clinicians: ambient-scribe and clinical documentation tools that convert clinician-patient conversations into notes and structured records.

- Copilot Studio healthcare agent services, which let organizations build domain-specific Copilot agents for scheduling, triage, clinical trial matching, and other tasks.

- Enterprise and clinical partnerships that integrate Copilot into Electronic Health Records (EHRs), often linking through standards such as FHIR and using data platforms like Microsoft Fabric to harmonize clinical data.

Why Healthcare Is Different: Opportunities and Constraints

Healthcare is a high-opportunity but high-friction domain for AI. The potential upsides are clear:

- Time savings and reduced clinician burnout: automating documentation and routine administrative tasks can let clinicians spend more time in direct patient care.

- Improved care coordination: Copilot agents can accelerate triage, referrals, and clinical summarization across care settings.

- Data-driven decision support: integrated AI can surface relevant patient history, medication interactions, and guideline reminders at the point of care.

The constraints are just as significant:

- Privacy and compliance: Healthcare data is often covered by stringent laws such as HIPAA (United States) and complex regional privacy regimes. Solutions must demonstrate compliant data handling, audit trails, and controlled data flows.

- Clinical safety: Hallucinations or inaccurate clinical suggestions can do harm; model outputs require validation and human-in-the-loop safeguards.

- Integration complexity: EHR interoperability and clinical workflows are messy. Successful AI pilots require deep technical work to align data models, mapping, and context (for example, FHIR and TEFCA considerations).

- Organizational and liability questions: Who is responsible when AI contributes to a clinical decision? How are malpractice and governance framed?
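The data-mapping work mentioned under integration complexity can be illustrated with a small example. This is a minimal sketch, assuming a hypothetical local lab-result row with `loinc`, `value`, `unit`, and `taken_at` fields and a hand-written unit table; it emits a dictionary shaped like a FHIR R4 `Observation`. A production integration would validate against a FHIR server and a maintained terminology service rather than a hard-coded mapping.

```python
from datetime import datetime, timezone

# Hypothetical local-unit -> UCUM mapping; a real system would use a terminology service.
UCUM_UNITS = {"mg/dl": "mg/dL", "mmol/l": "mmol/L", "bpm": "/min"}


def to_fhir_observation(row: dict, patient_id: str) -> dict:
    """Map one hypothetical local lab row to a FHIR R4 Observation-shaped dict."""
    unit = UCUM_UNITS.get(row["unit"].strip().lower())
    if unit is None:
        # Refuse to guess: unmapped units should be routed to a human for review.
        raise ValueError(f"unmapped unit: {row['unit']!r}")
    taken = datetime.fromisoformat(row["taken_at"])
    if taken.tzinfo is None:
        # Naive timestamps are ambiguous across sites; require an explicit offset.
        raise ValueError("taken_at must include a UTC offset")
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {"coding": [{"system": "http://loinc.org", "code": row["loinc"]}]},
        "subject": {"reference": f"Patient/{patient_id}"},
        # Canonicalize all timestamps to UTC so agents compare like with like.
        "effectiveDateTime": taken.astimezone(timezone.utc).isoformat(),
        "valueQuantity": {
            "value": float(row["value"]),
            "unit": unit,
            "system": "http://unitsofmeasure.org",
            "code": unit,
        },
    }
```

Raising an error on an unmapped unit or a missing time zone, rather than guessing, is a deliberate choice in this sketch: in a clinical pipeline a silently wrong conversion is worse than a record routed to a person for review.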

LLMs for Small Code Projects: What “Pragmatic Use” Looks Like

“Pragmatic use,” the phrase that Brad Sams and Paul Thurrott leaned on, has concrete behavior patterns behind it:

- Using LLMs to generate repetitive boilerplate (tests, REST client scaffolding, CRUD endpoints).

- Rapid prototyping or proof-of-concept generation to validate ideas before investing developer hours.

- Asking models to reformat or refactor small functions for readability, or to suggest performance improvements.

- Using LLMs as an explainability layer: asking the model to summarize or document what a block of code does as a way to speed code reviews or onboarding.

Key advantages:

- Faster iteration cycles for routine tasks.

- Reduced friction for prototyping and learning new APIs.

- Improved developer productivity on well-defined micro-tasks.

Key risks:

- LLMs can introduce subtle security bugs, insecure defaults (e.g., SQL string concatenation), or noncompliant data handling if prompts lack explicit security constraints.

- Licensing and code provenance: models trained on public code raise questions about attribution and GPL/OSS compliance for generated snippets.

- Overreliance on LLMs for complex, system-level engineering can reduce code quality and maintainability.
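The SQL string-concatenation risk listed above is easy to demonstrate. This sketch uses Python's built-in `sqlite3` module with an in-memory database and a hypothetical `patients` table. `find_unsafe` shows the kind of insecure default a model may produce when the prompt says nothing about security; `find_safe` uses a parameterized query, which is what a reviewer should require.

```python
import sqlite3


def setup() -> sqlite3.Connection:
    # In-memory database with a hypothetical table, for demonstration only.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE patients (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO patients (name) VALUES (?)", [("Ada",), ("Grace",)])
    return conn


def find_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # INSECURE: user input is spliced into the SQL text, so crafted input
    # can change the meaning of the query (SQL injection).
    return conn.execute(f"SELECT id, name FROM patients WHERE name = '{name}'").fetchall()


def find_safe(conn: sqlite3.Connection, name: str) -> list:
    # SAFE: the "?" placeholder makes the driver bind the value separately
    # from the SQL text, so it is always treated as data.
    return conn.execute("SELECT id, name FROM patients WHERE name = ?", (name,)).fetchall()
```

With the input `x' OR '1'='1`, `find_unsafe` returns every row in the table, while `find_safe` returns nothing because the whole string is compared as a literal name.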

Cross-Referencing the Evidence: What Independent Sources Show

Multiple independent sources corroborate the two headline trends discussed on First Ring Daily:

- Microsoft’s public messaging and product announcements confirm expanded healthcare-focused Copilot capabilities and previewed services for healthcare agents. Microsoft’s own blog posts and press materials explicitly outline the approach and partner list.

- News outlets and industry press (CNBC and sector-specific outlets) covered the October/November surge in Microsoft healthcare AI announcements and highlighted the company’s acquisition history (for example, Nuance) and partner programs. These outlets frame the announcements as a serious commercial push that aligns with pilot deployments.

- Independent pilot reports and third-party coverage — including the NHS pilot data and recent Copilot Health usage reports — provide measurable early outcomes and adoption metrics that amplify the significance of Microsoft’s strategy, while also revealing methodological variance that cautions against simple extrapolation.

- The developer community’s conversations — including commentary from heavyweight engineers and maintainers — show that experienced engineers are already experimenting with LLMs for smaller tasks and emphasize human-in-the-loop checks. Coverage of Linus Torvalds’ and Mark Russinovich’s attitudes toward AI-assisted coding illustrates the pragmatic, cautious approach the Petri episode described.

Strengths: What’s Promising About These Trends

- Real productivity wins: Pilots show clinically meaningful time savings and administrative efficiencies that could relieve clinician burnout if scaled with caution. The NHS pilot’s headline figure — tens of minutes per clinician per day — is exactly the kind of operational leverage health systems chase.

- Domain-specialized agents: Copilot Studio’s ability to craft healthcare-specific agents helps ensure models work in context and can be configured with healthcare-safe prompts and retrieval systems.

- On-device processing options: For Copilot+ PCs and select deployments, on-device AI and secure NPUs reduce the need to move protected health information offsite — a technical architecture that helps address privacy and compliance concerns when implemented properly.

- Practical developer workflows: For engineering teams, LLMs reduce friction for simple tasks, allowing experienced staff to invest more time in architecture and review rather than plumbing.

Risks and Red Flags: What IT Leaders Must Watch

- Hallucination and clinical risk: In healthcare, an inaccurate suggestion is not merely embarrassing; it can harm patients. Models should be confined to well-scoped tasks, and their outputs must be explicitly labeled as assistive, not definitive.

- Data governance and leakage risks: Connecting models to live EHR data without robust logging, access control, and tokenization increases the risk of inadvertent disclosure. HIPAA compliance and contractual safeguards with vendors must be airtight.

- Regulatory and liability uncertainty: Liability when AI contributes to a decision is not fully resolved in many jurisdictions. Legal teams and risk committees must be involved early.

- Operational complexity: EHR integrations, mapping to FHIR resources, and event-driven triggers require experienced engineering — the pilot-to-scale path can stall without a disciplined integration program.

- Supply chain and provenance of code: For LLM-assisted coding, licensing and provenance issues persist: generated code can inadvertently reproduce snippets under incompatible licenses, and tracing it back to its training sources is difficult.

- Maintenance and technical debt: If LLMs generate brittle or poorly understood code that teams accept without rigorous testing, long-term maintenance costs may spike.
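To illustrate the tokenization safeguard mentioned under data governance, here is a minimal sketch that swaps identifiers for keyed, deterministic tokens before text is sent to a model and restores them afterward. The `MRN` and phone-number patterns are hypothetical examples, key handling is simplified, and regular expressions alone are not a substitute for a vetted DLP product.

```python
import hashlib
import hmac
import re

# Hypothetical identifier formats; real deployments need vetted DLP rules.
PATTERNS = {
    "MRN": re.compile(r"\bMRN[:\s]*\d{6,10}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


class Tokenizer:
    """Replace identifiers with keyed, deterministic tokens before model calls."""

    def __init__(self, key: bytes):
        self._key = key
        # token -> original value; in this sketch it stays local and access-controlled.
        self.vault: dict[str, str] = {}

    def _token(self, kind: str, value: str) -> str:
        # HMAC keeps tokens stable for the same value without being guessable
        # by anyone who lacks the key.
        digest = hmac.new(self._key, value.encode(), hashlib.sha256).hexdigest()[:12]
        token = f"[{kind}_{digest}]"
        self.vault[token] = value
        return token

    def tokenize(self, text: str) -> str:
        for kind, pattern in PATTERNS.items():
            text = pattern.sub(lambda m: self._token(kind, m.group(0)), text)
        return text

    def detokenize(self, text: str) -> str:
        for token, value in self.vault.items():
            text = text.replace(token, value)
        return text
```

In this design only the tokenized text would leave the organization's boundary; the `vault` mapping needed for re-identification never does.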

Practical Recommendations for IT and Healthcare Teams

- Start with narrow pilots
  - Identify low-risk, high-frequency tasks (e.g., administrative note summarization, appointment triage).
  - Define measurable KPIs (time saved, error rate, clinician satisfaction).

- Implement strong data governance
  - Map data flows, enforce encryption at rest and in transit, and apply access controls and logging for any agent that accesses PHI.
  - Use DLP, tokenization, and query-time redaction where possible.

- Insist on human-in-the-loop validation
  - Treat AI outputs as drafts: clinicians or developers must review, correct, and sign off before anything enters the official record or production code.

- Integrate testing and security gates for LLM-created code
  - Require static analysis (SAST), dynamic testing (DAST), unit and integration tests, and code provenance checks for any AI-generated change.

- Define regulatory and legal guardrails
  - Work with compliance and legal teams to set policies on liability, logging, and auditability.

- Use model-specific and domain-specific retrieval
  - Combine LLMs with curated, local knowledge stores (vector DBs connected to controlled EHR extracts) rather than free-form internet search.

- Educate users
  - Provide training on prompt design, model limits, and responsible use for both clinicians and developers.
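The security-gate recommendation can start small. The following sketch uses Python's standard `ast` module to flag a few risky constructs (`eval`, `exec`, `os.system`, and any call passing `shell=True`) in a proposed AI-generated change. The deny-list is an illustrative assumption; a real pipeline would run a dedicated SAST tool plus the team's unit and integration tests, with a check like this serving at most as a fast pre-filter.

```python
import ast

# Illustrative deny-list (assumption); extend to match your own policy.
BANNED_CALLS = {"eval", "exec", "os.system"}


def _call_name(node: ast.Call) -> str:
    """Return a dotted name such as 'os.system' for simple call targets."""
    func = node.func
    parts = []
    while isinstance(func, ast.Attribute):
        parts.append(func.attr)
        func = func.value
    if isinstance(func, ast.Name):
        parts.append(func.id)
    return ".".join(reversed(parts))


def review_generated_code(source: str) -> list[str]:
    """Return findings; an empty list means this (limited) gate passed."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = _call_name(node)
        if name in BANNED_CALLS:
            findings.append(f"line {node.lineno}: call to {name}")
        for kw in node.keywords:
            # Flag shell=True passed as a literal, e.g. subprocess.run(..., shell=True).
            if kw.arg == "shell" and isinstance(kw.value, ast.Constant) and kw.value.value is True:
                findings.append(f"line {node.lineno}: shell=True in {name}")
    return findings
```

An empty result only means these specific patterns were absent; it says nothing about logic errors, which is why human review and tests remain mandatory.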

Technical Design Considerations: EHR Integration, Data Flow, and Security

- Use standardized clinical data models: Prioritize FHIR-aligned resource mapping and canonicalization so Copilot agents can reason over structured data consistently.

- Isolate sensitive data: Maintain separate environments for training/test and production, and extract only the minimum data necessary for the agent to operate.

- Audit trails and explainability: Log model inputs, model responses, and the human action that followed. This record is essential for clinical governance and regulatory review.

- Model selection and tuning: Prefer domain-tuned models or retrieval-augmented generation (RAG) setups with curated clinical corpora rather than generic LLMs for clinical decision support tasks.

- On-device vs. cloud trade-offs: On-device inference reduces egress of PHI but may limit model sophistication; cloud-hosted solutions provide model scale but require tighter contractual controls and encryption safeguards. Microsoft’s Copilot+ PC approach highlights a hybrid option designed for privacy-sensitive use-cases.
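One way to make the audit-trail requirement tamper-evident is to chain log entries by hash, so that editing any past entry breaks verification. This is a minimal in-memory sketch with hypothetical field names. It stores SHA-256 digests of prompts and responses rather than the text itself, on the assumption that the full PHI-bearing content lives in a separately access-controlled store; whether to log full text is a governance decision for each organization.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained audit trail (in memory here, for illustration)."""

    def __init__(self):
        self.entries: list[dict] = []

    @staticmethod
    def _digest(text: str) -> str:
        return hashlib.sha256(text.encode("utf-8")).hexdigest()

    def record(self, user: str, prompt: str, response: str, action: str) -> dict:
        # Each entry commits to the previous entry's hash.
        prev = self.entries[-1]["entry_hash"] if self.entries else "0" * 64
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "prompt_sha256": self._digest(prompt),
            "response_sha256": self._digest(response),
            "human_action": action,  # e.g. "accepted", "edited", "rejected"
            "prev_hash": prev,
        }
        entry["entry_hash"] = self._digest(json.dumps(entry, sort_keys=True))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry fails the check."""
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if body["prev_hash"] != prev:
                return False
            if self._digest(json.dumps(body, sort_keys=True)) != entry["entry_hash"]:
                return False
            prev = entry["entry_hash"]
        return True
```

Recording the human action alongside the model exchange is what lets a governance review reconstruct who accepted, edited, or rejected each AI draft.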

Measuring Success: Metrics and Monitoring

- Adoption metrics: active clinician users, frequency of usage per shift, and task completion rates.

- Productivity metrics: minutes saved per clinician per day (validated by audit), throughput gains in administrative tasks.

- Safety and accuracy metrics: error rates in clinical summaries, number of corrected items per output, and incidents attributable to the agent.

- Financial metrics: care delivery cost per patient, administrative FTEs reclaimed, and ROI on integration costs.
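The adoption, productivity, and safety metrics above reduce to simple arithmetic once sessions are logged consistently. This sketch assumes a hypothetical per-task record with audited baseline and assisted times and a count of corrected statements; it computes active clinicians, minutes saved per clinician per day, and a correction rate.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """One AI-assisted documentation task (hypothetical schema)."""
    clinician: str
    day: str             # ISO date, e.g. "2025-01-15"
    baseline_min: float  # audited time for the task without the assistant
    actual_min: float    # audited time with the assistant, including review
    items: int           # statements in the AI draft
    corrected: int       # statements the clinician had to fix


def pilot_metrics(sessions: list[Session]) -> dict:
    """Compute adoption, productivity, and accuracy KPIs from session records."""
    if not sessions:
        raise ValueError("no sessions recorded")
    clinician_days = {(s.clinician, s.day) for s in sessions}
    saved = sum(s.baseline_min - s.actual_min for s in sessions)
    items = sum(s.items for s in sessions)
    return {
        "active_clinicians": len({s.clinician for s in sessions}),
        # Normalize by clinician-days, not sessions, to match "per clinician per day".
        "minutes_saved_per_clinician_day": saved / len(clinician_days),
        # Share of drafted statements that needed correction.
        "correction_rate": sum(s.corrected for s in sessions) / items if items else 0.0,
    }
```

For example, three sessions saving 8, 5, and 9 minutes across two clinician-days give 11.0 minutes saved per clinician per day. Counting clinician-days rather than sessions keeps the figure comparable with per-clinician-per-day numbers like those quoted in pilot reports.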

The Bigger Picture: AI as Tool, Not Replacement

The moral of the Petri episode is practical: LLMs and Copilot-class assistants are changing how work gets done, but in most enterprise contexts the change is evolutionary rather than revolutionary. Experienced engineers and clinicians using these tools do so with a human-in-the-loop mindset, applying standard engineering and clinical controls. The headlines about AI replacing roles obscure the more common reality: tools that remove friction and shift skilled human effort toward higher-value tasks. (petri.com)

For CIOs, IT leaders, and clinical informatics teams, the question is not whether to try these tools but how to test, govern, and scale them responsibly. When pilots are narrowly scoped, backed by solid integration work, and governed with clear safety checks, the upside can be substantial. When deployments skip the governance legwork, risk and downstream costs quickly multiply.

Final Verdict: Where to Place Your Bets Now

- Short-term: Run tightly controlled pilots for non-decision tasks (documentation, summarization, code scaffolding). Instrument everything and budget for governance.

- Medium-term: Invest in retrieval-augmented architectures, secure vector stores for clinical knowledge, and user training in prompt design and model limitations.

- Long-term: Expect Copilot-like assistants to become standard in knowledge work and clinical workflows, but expect them to deliver reliable, scalable benefits only in organizations that get governance and integration right.
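For the retrieval-augmented architectures recommended above, the core pattern is to retrieve from a curated local corpus and then constrain the model to those sources. This sketch swaps in a toy bag-of-words cosine similarity for a real embedding model and vector database, and the policy snippets and IDs used with it are hypothetical; it builds a grounded prompt but does not call any model.

```python
import math
import re
from collections import Counter


def _vector(text: str) -> Counter:
    # Toy bag-of-words "embedding"; a real system would use an embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, corpus: dict[str, str], k: int = 2) -> list[tuple[str, float]]:
    """Rank curated passages by similarity to the query and keep the top k."""
    q = _vector(query)
    scored = [(doc_id, _cosine(q, _vector(text))) for doc_id, text in corpus.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


def grounded_prompt(query: str, corpus: dict[str, str], k: int = 2) -> str:
    """Build a prompt that restricts the model to retrieved, citable sources."""
    hits = [doc_id for doc_id, score in retrieve(query, corpus, k) if score > 0]
    context = "\n".join(f"[{doc_id}] {corpus[doc_id]}" for doc_id in hits)
    return (
        "Answer using only the sources below and cite their IDs. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )
```

Dropping zero-score passages and instructing the model to admit when the sources are silent are the two design choices that matter most here; both keep answers tied to vetted content rather than free-form generation.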

Conclusion

First Ring Daily’s short dispatch captures the practical mood of 2026: engineers are quietly using LLMs for pragmatic coding tasks, and major platform vendors are moving Copilot into healthcare with real pilots and partners. The combination of productivity promise and real regulatory, safety, and security obligations means IT and clinical leaders must be deliberate. Pilots should be narrow, measurable, and governed; model outputs must be human-validated; data flows must be auditable; and legal and compliance teams must be part of the plan from day one. Do that, and the potential efficiencies and clinician relief on offer from Copilot and LLMs could be a meaningful step forward. Fail to do that, and the same tools that accelerate work will amplify risk and technical debt.

Source: Petri IT Knowledgebase First Ring Daily 1929: Health Pilot - Petri IT Knowledgebase