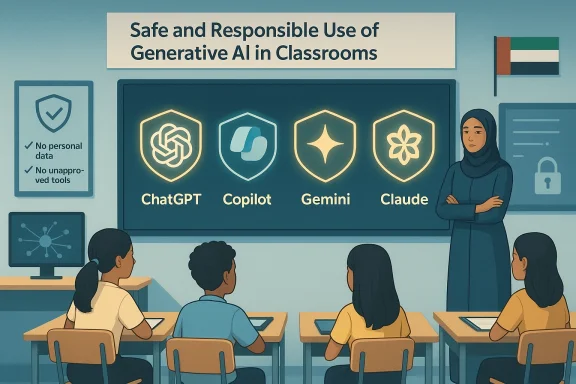

Four of the industry’s best-known large language models have been placed on a short, official leash for classroom use in the United Arab Emirates: the Ministry of Education’s new guidance authorises OpenAI’s ChatGPT, Microsoft’s Copilot, Google’s Gemini, and Anthropic’s Claude, but only under tight, teacher-led conditions designed to protect academic integrity, privacy, and cultural norms.

Source: cairoscene.com Four AI Platforms Approved for Use in UAE Schools

Background / Overview

The announcement comes as part of a broader Ministry publication titled Safe and Responsible Use of Generative AI in Classrooms, a guidance package that, according to multiple UAE news outlets, includes a formal annex listing the four authorised platforms and a set of specific prohibitions and controls for classroom deployment. Reporters who reviewed the Ministry material describe a document that combines operational rules (network controls, account management), ethical guardrails (academic integrity, cultural sensitivity), and age-based restrictions. Several local outlets report the package contains around 25 prohibited actions and a clear instruction: generative AI tools may assist learning, but must not replace human judgement or assessment processes.

Two of the most consequential, repeatedly emphasised measures are:

- A strict age floor: no generative AI for students under 13 years old or for pupils in grades below Year 7.

- A ban on using generative AI during formal examinations or official assessments, and prohibitions on submitting AI-generated work as one’s own without disclosure and teacher approval.

While the Ministry’s guide is being widely reported across UAE outlets, a publicly downloadable copy of the annex that names the four platforms was not available on the Ministry website at the time of reporting; coverage relies on official briefings made available to national press. Readers should note this distinction: the list and the rules are being circulated and described by major UAE media but formal posting of the annex on the Ministry portal was not discoverable at publication time.

Why this matters: the policy logic and the global context

The UAE’s approach mirrors a growing international pattern: regulators and education systems want AI literacy and the classroom benefits of generative models, but they also want to avoid stunting foundational skills, exposing young learners to inappropriate or biased outputs, or creating new pathways to cheating.

- Age controls are a common practical boundary. Many platform vendors and regulators around the world treat 13 as a default minimum for consumer accounts; the Ministry’s decision to adopt the 13-year floor aligns with those norms and with recommendations from educational bodies that urge developmental caution for younger children.

- The exam ban and disclosure rules are a response to the very real risk of “AI ghostwriting”: large models can produce plausible essays and solutions that students might present as original work unless safeguards are in place.

- The insistence on approved platforms only is an attempt to limit risk by narrowing the threat surface: if schools standardise on a small, vetted set of providers, IT teams can configure secure, auditable access and negotiate appropriate data-processing terms.

What the guidance permits — and what it doesn’t

Permitted (conditional) uses

- Research scaffolding: generating question prompts, topic outlines, brainstorming keywords for legitimate projects — only when outputs are verified and sourced by the student.

- Instructional design for teachers: generating lesson plan variants, formative assessment ideas, differentiated practice items, or example explanations to help teachers plan more personalised learning sequences.

- Analytical and problem-solving support: model-generated worked examples or step-through explanations can be used as a learning aid, again requiring teacher mediation.

- Language practice and writing coaching: grammar, style, and structure feedback when the student logs sources, drafts their own work, and demonstrates understanding.

Prohibited uses (summary)

- No use by students under 13 or in grades below Year 7.

- No use during formal exams or official assessments.

- No submission of AI-generated assignments as a student’s own work without disclosure and prior teacher approval.

- No uploading of personal, identifiable data — names, photos, audio/video, or identifying documents — to external AI platforms.

- No access to unapproved AI platforms; no attempts to bypass school controls with VPNs or external accounts.

- No creation of deepfakes, impersonation, or material that violates national cultural, religious, or legal norms.
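The age floor and exam ban summarised above can be expressed as a simple eligibility check, for example inside a school portal that gates access to the approved tools. This is a minimal sketch, not part of the Ministry guidance: the `Student` class, the field names, and `may_use_genai` are hypothetical, and the sketch takes the strict reading that a student must meet both the age and the year-group condition, since the reported coverage does not say how edge cases are resolved.

```python
from dataclasses import dataclass

# Thresholds as reported in press coverage of the guidance.
MIN_AGE = 13
MIN_YEAR_GROUP = 7  # Year 7


@dataclass
class Student:
    """Hypothetical record; real student systems will differ."""
    age: int
    year_group: int  # e.g. 7 for Year 7


def may_use_genai(student: Student, is_formal_assessment: bool) -> bool:
    """Return True only if the reported age, grade, and exam rules all allow use."""
    if is_formal_assessment:
        # Reported rule: no generative AI during exams or official assessments.
        return False
    # Strict reading (an assumption): both conditions must hold.
    return student.age >= MIN_AGE and student.year_group >= MIN_YEAR_GROUP
```

A check like this only covers the mechanical rules; disclosure, teacher approval, and content restrictions still depend on human review.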

Strengths of the UAE approach

- Clear, enforceable boundaries. The guidance sets explicit red lines (age limits, exam bans, PII restrictions) that IT and leadership teams can implement technically and administratively.

- Focus on teacher oversight. Requiring human review of outputs preserves teacher agency and creates a natural audit point for academic integrity incidents.

- Vendor narrowing reduces complexity. By authorising a short list of major providers, the Ministry gives schools a manageable procurement and technical-administration problem to solve — rather than an open-ended “anything goes” situation that would overwhelm in-school IT teams.

- Cultural and legal alignment. By prohibiting content that clashes with national values and by banning personal data uploads, the rules are aligned with local privacy and social norms while signalling responsible stewardship.

- Living-policy posture. Reported Ministry comments indicate the guidance will be updated as technology evolves — a necessary approach for a fast-changing area.

Risks, gaps, and practical challenges

The guidance is sensible in principle, but execution will determine whether it protects learners, teachers, and institutions or just creates more work and loopholes.

1) Enforcement is non-trivial

Rules like “no unapproved platforms” and “no VPNs” are enforceable only if networks, endpoint management, and school culture all align. Students and staff with personal devices or permissive home networks can easily access unapproved services outside school hours, and some will try to use those services to complete assignments. Effective enforcement requires:

- A technically robust school edge (firewalling, SNI/URL filtering, DNS controls).

- Device management (MDM) that prevents installation of unauthorized apps.

- Regular audits and logs that are monitored centrally.
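The URL-filtering idea above can be illustrated with a small allowlist check of the kind a proxy or filtering script might apply. This is a sketch under stated assumptions: the hostnames are the vendors' public web addresses and are used for illustration only, since the Ministry annex was not publicly posted, and the function name `is_approved` is hypothetical. Real deployments typically need additional sign-in and content-delivery domains.

```python
from urllib.parse import urlsplit

# Illustrative hostnames only (an assumption, not taken from the Ministry annex).
APPROVED_HOSTS = {
    "chatgpt.com",
    "copilot.microsoft.com",
    "gemini.google.com",
    "claude.ai",
}


def is_approved(url: str) -> bool:
    """Return True if the URL's host is an approved host or a subdomain of one.

    URLs without a scheme (e.g. "claude.ai/chat") have no parsed hostname
    and are rejected.
    """
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    # Match on a full label boundary so "evil-claude.ai" does not pass.
    return any(host == h or host.endswith("." + h) for h in APPROVED_HOSTS)
```

Matching on a label boundary rather than a plain suffix is the important design choice here: it stops look-alike domains from slipping through the filter.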

2) The vendors change fast

Platform features, safety models, and terms of service are updated frequently. A model that is “safe enough” today may change behaviour next month, or the vendor may expand a new feature that raises privacy or content concerns. Schools will need a process to track vendor updates, assess implications, and re-validate approved status.

3) Data governance is subtle and high-risk

The ban on uploading PII is important, but it relies on human compliance. Students often paste identifiable data into tools by accident (e.g., including classmates' names in a group project prompt). Practical mitigation requires technical guardrails:

- Disable file uploads where possible.

- Use enterprise or education contracts that specify data usage and retention (not consumer apps).

- Provide sandboxed, local instances or private-cloud deployments where feasible.
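One further guardrail in the same spirit is to screen prompts for obvious identifiers before they leave the school network. The sketch below is an assumption-laden illustration, not a method described in the guidance: the patterns and the `find_pii` helper are hypothetical, they catch only email addresses and phone-like numbers, and they will not detect classmates' names, which need human review or more specialised tooling.

```python
import re

# Hypothetical patterns for illustration; real deployments would need
# locale-specific rules (for example national ID formats) and human review.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def find_pii(prompt: str) -> list[str]:
    """Return the names of the PII patterns that match somewhere in the prompt."""
    return [name for name, pattern in PII_PATTERNS.items() if pattern.search(prompt)]
```

A school gateway could block or warn when `find_pii` returns a non-empty list, while treating an empty list as "nothing obvious found" rather than proof that the prompt is clean.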

4) Academic integrity and assessment design

Banning the use of tools during exams is necessary, but it pushes the problem into classroom work and homework. Teachers must redesign assessments to prioritise evidence of understanding over product alone. That typically means more in-class demonstrations, oral exams, portfolios with process artifacts, and prompts that require personalised reflection.

5) Equity and access

If schools lock advanced capabilities behind approved, paid enterprise products, or if some students have supervised accounts while others don’t, inequities may widen. The policy needs to minimise disparities by ensuring all students who are allowed to use these tools can do so under similar conditions.

6) Detection and the “cat-and-mouse” problem

AI-detection tools are improving but are not foolproof. Students may use paraphrasing and iterated prompts to hide AI origins. Instead of relying on detection tooling alone, the stronger approach is redesign: scaffolded assignments, process documentation, and teacher-student interviews.

Technical checklist for IT leaders (practical, sequential steps)

- Inventory and policy alignment:

  - Confirm the Ministry’s approved list (the four vendors) and reconcile it with any district or school-level policies.

  - Update Acceptable Use Policies and student handbooks to include the new guidance and consequences for violations.

- Network and endpoint controls:

  - Whitelist only the approved vendor domains for classroom usage; block access to other LLM providers at the network edge.

  - Configure DNS filtering and HTTPS inspection where legally allowed and technically feasible.

- Identity and access management:

  - Use centrally managed, school-owned accounts (not personal accounts) for any platform access.

  - Prefer enterprise/education offerings with a contractual Data Processing Agreement (DPA) that restricts data use and model training on student content.

- Configuration and feature control:

  - Disable file uploads, microphone or camera use with third-party processing unless explicit consent and controls exist.

  - Forbid API keys embedded in student devices; only server-side integrations should exist.

- Logging and auditing:

  - Maintain logs of all AI tool usage by school accounts for a defined retention period.

  - Implement alerting for unusual volumes of queries or attempts to access blocked services.

- Teacher tools and training:

  - Provide professional development on how to integrate models pedagogically and how to validate outputs.

  - Develop a prompt-hygiene cheat sheet (how to craft prompts that elicit sourceable answers and avoid PII).

- Incident response:

  - Create clear procedures for suspected academic dishonesty involving AI: collect artifacts, interview students, and apply the code of conduct consistently.
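The logging and alerting item in the checklist above can be sketched as a simple count over usage logs. This is a minimal illustration under assumptions: the log format (one `(account_id, day)` tuple per query), the `flag_heavy_users` name, and the default threshold of 50 queries per day are all hypothetical and would need tuning against real usage.

```python
from collections import Counter
from typing import Iterable


def flag_heavy_users(events: Iterable[tuple[str, str]], threshold: int = 50) -> list[str]:
    """Return accounts that exceeded `threshold` queries on any single day.

    `events` holds one (account_id, day) tuple per logged query, where `day`
    is any consistent date string such as "2025-01-15" (an assumed format).
    """
    per_account_day = Counter(events)  # counts queries per (account, day) pair
    flagged = {account for (account, _day), n in per_account_day.items() if n > threshold}
    return sorted(flagged)
```

For example, `flag_heavy_users(log_rows, threshold=100)` would list the accounts worth a closer look; a flag is a prompt for human review, not evidence of misconduct.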

Classroom practice and pedagogy: what teachers should do now

- Require process artifacts: drafts, inquiry logs, and annotated sources that show how AI was used and how the student modified the output.

- Use reflective prompts: ask students to explain choices the AI made and why they accepted or rejected parts of the response.

- Teach verification skills: model how to fact-check model outputs, how to interrogate a model’s confidence, and how to find primary sources.

- Re-design assessments to emphasise originality of reasoning, not just correctness of final answers.

- Make disclosure a habit: require a short AI-use statement appended to assignments describing what the model did and what the student added.

Vendor considerations: why enterprise contracts matter

Consumer LLM interfaces are convenient but are often backed by data-use clauses that allow vendors to use aggregated prompts to improve models. For schools, that is unacceptable when student data is involved. The practical path is to:

- Seek education or enterprise contracts that explicitly exclude training on school-provided prompts and include robust data-retention and deletion clauses.

- Prefer providers that offer on-region data controls or private deployment options when handling sensitive educational records.

- Obtain SLAs that address uptime, safety patches, and incident notification timelines.

Balancing innovation and caution: recommended governance model

- Establish an AI Governance Committee at district or school level with representatives from IT, curriculum, legal/compliance, and parent communities.

- Make guidance a living document: reassess the approved-platform list quarterly, or more often when vendors push major updates.

- Publish a transparent escalation path for teachers and parents to raise concerns about outputs, bias, or inappropriate content.

- Embed AI literacy into the curriculum so students learn model strengths, limitations, and ethical use — not just how to generate polished text.

The detection arms race and why policy beats policing

There will be tests, detectors, and apps claiming to detect AI content. These tools will help, but they are not deterministic. Instead of chasing perfect detection, the Ministry’s emphasis on process, teacher oversight, and redesign of assessment is the sounder strategy: it reduces incentives for students to game the system and makes integrity easier to verify.

A pragmatic roadmap for school leaders (six-month plan)

- Month 0–1: Policy rollout and communications:

  - Update handbooks, notify parents, and brief staff on the Ministry guidance and the approved platform list as reported by national press.

- Month 1–2: Technical hardening:

  - Implement whitelisting, MDM controls, and account management for school devices.

- Month 2–3: Contracts and procurement:

  - Engage procurement to explore education-tier contracts from the approved vendors; insist on data protection and DPA terms.

- Month 3–4: Professional development:

  - Run teacher workshops focused on safe use cases, prompt hygiene, and assessment redesign.

- Month 4–5: Pilot and measure:

  - Pilot supervised classroom use in a small set of lessons; collect teacher feedback and usage logs.

- Month 5–6: Scale with gates:

  - Scale successful practices school-wide while maintaining audit and review cycles.

What to watch next — short- and medium-term signals

- Vendor policy changes: watch for any shift in terms of service that affect data use or model update cadence.

- Ministry updates: expect the approved-tools list to be revised over time. The Ministry itself signalled that guidance will be updated as technologies change.

- International harmonisation: as more countries publish school-level AI guidance, expect convergence on age thresholds, consent requirements, and technical controls — but also divergence on content restrictions tied to local values.

- Education-market consolidation: some vendors may offer dedicated education products that explicitly address data residency and PII controls; these offerings should be prioritised.

Final analysis: measured policy, heavy lift in practice

The UAE has chosen an approach that is both pragmatic and precautionary: it recognises the value of LLMs for personalised learning and teacher support, but it imposes age limits, exam prohibitions, PII protections, and an approved-platform regime. Those are the right levers on paper.

Execution, however, will require real investment. Technical controls, procurement of education-grade vendor agreements, teacher professional development, and assessment redesign are all non-trivial undertakings. Without this investment, the rules risk becoming either unenforceable or a source of friction that stifles useful classroom innovation.

Two practical truths should guide schools moving forward:

- Treat generative AI as a tool that augments educator expertise, not as an automated assistant that can be left unsupervised. Teacher review — explicitly required by the Ministry guidance — is the single most important control.

- Invest in process and pedagogy before scaling technology. Auditability, revision histories, and transparent documentation of student work create far better long-term safeguards than any detection app.

If implemented with clarity and resourcing, the UAE model could become a practical blueprint for other systems wrestling with the same dilemma: how to harness the educational promise of generative AI while protecting learners, preserving academic standards, and keeping teachers at the centre of learning.
