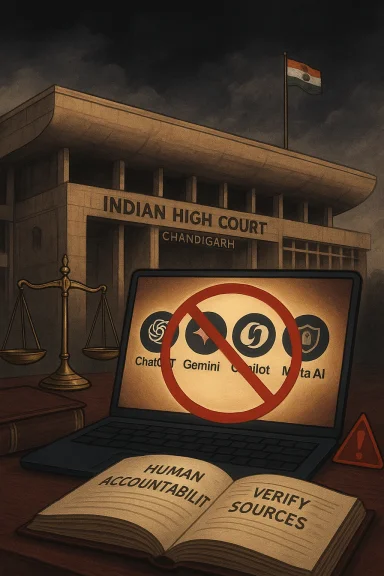

The Punjab and Haryana High Court’s reported instruction barring judicial officers from using AI tools such as ChatGPT, Gemini, Microsoft Copilot, and Meta AI for judgment writing and legal research marks one of the clearest judicial pushbacks yet against generative AI in India’s courtroom ecosystem. The move lands at a moment when courts around the world are trying to decide whether AI is a productivity aid, a confidentiality risk, or a structural threat to legal reasoning. In India, where the Supreme Court has already warned about hallucinations and the dangers of unverified AI-generated content, the High Court’s position reads less like a sudden panic and more like a hardening of institutional caution. (indianexpress.com)

Overview

The significance of this development is not simply that judges are being told to avoid a new class of software. It is that a constitutional institution is drawing a bright line between assistive technology and judicial authority. The distinction matters because AI systems are increasingly good at producing polished prose, but the legal system is not measured by polish alone. It is measured by accountability, transparency, and the human responsibility attached to every finding, order, and ratio decidendi. (indianexpress.com)

The Punjab and Haryana High Court’s reported step also fits into a broader Indian trend. The Gujarat High Court recently issued a formal AI policy that prohibits AI in judicial decision-making, evaluating evidence, and drafting substantive orders, while still allowing controlled use for administrative tasks, translation, and research assistance. That policy applies to judges, staff, interns, and the district judiciary, and it explicitly requires human verification of AI-generated outputs. (livelaw.in)

There is an important nuance here. The emerging judicial consensus is not “AI has no place in the courts.” It is “AI has no place in autonomous adjudication.” That is a narrower, more defensible position, and it reflects the legal profession’s central fear: that a system built to predict likely text will be treated, incorrectly, as a system that understands law. The Supreme Court’s own white paper warned about hallucinations, algorithmic bias, and privacy risks, and it stressed that the final responsibility must remain with a human judge. (indianexpress.com)

The Punjab and Haryana order should therefore be read as part of a longer institutional debate rather than a standalone prohibition. Courts are trying to preserve the efficiency benefits of modern software without letting the software become a silent participant in judicial reasoning. That is a difficult balance, and in high-stakes legal work, caution is often the only choice that can be undone later. (indianexpress.com)

Why Courts Are Drawing the Line

The first reason for the restriction is straightforward: AI chatbots can produce convincingly written but factually false legal material. That problem has already surfaced in multiple jurisdictions, including cases where lawyers submitted fabricated citations generated by ChatGPT. The Indian legal system is not immune to that risk, and the Supreme Court’s own advisory materials have warned that public chatbots do not draw from authoritative legal databases. (indianexpress.com)

Judicial work is especially vulnerable because a judge is often expected to synthesize facts, precedent, procedure, and equity under time pressure. AI can assist with language, but it cannot reliably validate legal truth on its own. In that setting, even a small hallucination can become a large procedural error, because judicial text carries the force of state authority. The danger is not merely mistaken phrasing; it is mistaken law. (indianexpress.com)

Hallucination Is a Legal Risk, Not a Cosmetic One

What makes hallucinations so troubling in judicial work is that they may sound better than human drafts. A flawed AI paragraph can appear neat, coherent, and confident, which makes errors easier to miss and harder to distrust. That creates a subtle but serious problem: the better the language, the more likely the mistake is to survive into the final order. (indianexpress.com)

The legal system already has built-in verification channels, but AI changes the scale and speed of draft production. When software can instantly propose a paragraph, a summary, or a citation, the temptation is to trust the output because it looks “finished.” Yet in law, finished-looking is not the same as correct. Courts know that distinction instinctively, which is why the safest response is often to prohibit the use case entirely. (indianexpress.com)

- AI-generated citations can be fabricated.

- Confident prose can mask legal errors.

- Judicial drafting must remain independently verifiable.

- A wrong citation can distort the reasoning chain.

- Public chatbots are not authoritative legal databases.

Confidentiality Changes the Equation

Confidentiality is another major reason the judiciary is wary. Court matters routinely involve sensitive personal data, privileged communications, medical details, family issues, financial information, and criminal allegations. Putting any of that into a public AI platform can create a permanent and poorly understood data trail, even when the user’s intent is innocent. The Gujarat High Court policy explicitly prohibits feeding confidential case details into public AI tools, and its rationale is highly relevant to the Punjab and Haryana stance. (indianexpress.com)

In a normal office workflow, a draft error may be embarrassing. In a court workflow, a draft error may compromise privacy, fairness, and due process. That makes judicial AI governance closer to evidence handling than to ordinary productivity policy. Judges are not just protecting their own work; they are protecting the integrity of the record itself. (indianexpress.com)

The Indian Judicial Context

The reported Punjab and Haryana High Court position is not occurring in a vacuum. Indian courts have already experimented with AI in limited ways, including translation and case support tools. The Supreme Court’s SUVAS initiative uses AI-assisted translation to move English judicial documents into vernacular languages, and SUPACE was launched to collect relevant facts and laws for judges. Those tools are narrow, bounded, and purpose-built, which is exactly why they have been more acceptable than general-purpose chatbots. (indianexpress.com)

That history matters because it shows Indian courts are not anti-technology. They are, instead, trying to separate structured automation from open-ended generation. The former can be audited, constrained, and trained on known legal tasks. The latter can improvise in ways that feel useful but are much harder to supervise at scale. (indianexpress.com)

From Assistance to Adjudication

There is a meaningful difference between a tool that helps translate a judgment and a tool that helps write one. Translation tools can be tested against source text and reviewed line by line. Judgment drafting, by contrast, involves legal interpretation, sequencing of facts, and the subtle exercise of judicial discretion. That makes the latter far more sensitive to errors, bias, and overreach. (indianexpress.com)

This is why the Supreme Court’s “Human in the Loop” framing is so important. It does not merely mean a person glances at the final output. It means the human judge remains the source of legal judgment, legal reasoning, and legal accountability. In practice, that principle pushes courts toward restrictive AI policies, because once a chatbot is allowed to shape substantive reasoning, the human role starts to blur. (indianexpress.com)

- Translation is easier to supervise than interpretation.

- Research assistance is less risky than judgment drafting.

- Structured tools are easier to audit than open chatbots.

- Human accountability remains the constitutional anchor.

- Judicial AI policy is really governance policy.

Why Human Reasoning Still Matters

At the heart of this controversy is a philosophical question: can a machine assist with law without reshaping what law is? The judiciary’s answer so far appears to be no, at least not for core adjudicatory tasks. That is because legal reasoning is not merely a text-completion exercise. It is an act of weighing facts, reconciling precedent, interpreting statutes, and applying institutional judgment to human conflict. (indianexpress.com)

Generative AI is impressive because it can mimic the surface of reasoning. It can outline arguments, structure paragraphs, and generate plausible transitions between ideas. But mimicry is not mastery. A court that allows that surface resemblance to substitute for actual analysis risks turning legal reasoning into a performance of certainty rather than a disciplined search for truth. (indianexpress.com)

The Danger of Over-Delegation

Over-delegation is the deeper risk. Once a judge starts depending on AI for research shortcuts, phrasing, or draft organization, the tool can quietly shape the frame of the decision before the judge has fully engaged with the record. Even if the judge remains in control formally, the software may still steer the outcome indirectly through convenience and suggestion. (indianexpress.com)

That is why judicial AI policy often errs on the side of prohibition rather than partial permission. The legal system has very little tolerance for black-box influence, especially when the underlying model is trained on broad internet data rather than authoritative legal sources. A court can correct a bad draft more easily than it can correct a bad institutional habit. (indianexpress.com)

- Legal reasoning depends on interpretive judgment.

- AI can imitate form without understanding substance.

- Convenience can become hidden influence.

- Judicial independence requires visible accountability.

- Final orders must be traceable to human analysis.

How Gujarat Shaped the Debate

The Gujarat High Court policy is significant because it offers a concrete template that other courts can follow or adapt. It allows AI for translation, grammar, cause list management, and limited research, but forbids AI in decision-making, evidence assessment, substantive order drafting, and judgment preparation. That combination reflects a very deliberate balancing act: use AI where it is helpful, and wall it off where it becomes legally dangerous. (livelaw.in)

The policy also goes further by making violation a matter of professional misconduct. That is important because it transforms AI misuse from an abstract ethical concern into a disciplinary issue. Once the penalty structure is explicit, the policy becomes enforceable rather than aspirational. In public institutions, that difference is everything. (indianexpress.com)

The “Human in the Loop” Standard

The Gujarat policy’s most important contribution may be its insistence that a qualified human officer must always verify AI output. This is a key governance principle because it prevents the normalization of unreviewed machine text inside official processes. The court is effectively saying that AI can assist with permitted tasks, but it cannot decide what the institution stands behind. (indianexpress.com)

That standard is likely to influence other judicial bodies in India. Once one high court codifies a policy, other courts can borrow both the language and the logic. In a system as large and diverse as India’s, consistency matters, and policy diffusion often happens through exactly this kind of administrative precedent. (indianexpress.com)

- Controlled use is easier to govern than open-ended use.

- Human verification is the key safeguard.

- Disciplinary consequences strengthen compliance.

- Administrative tasks are separated from adjudication.

- Policy templates can spread quickly across courts.

The Enterprise AI Parallel

The judiciary’s caution echoes a broader pattern in government and enterprise AI adoption. Outside the courtroom, institutions are increasingly approving AI under guardrails rather than banning it outright. The logic is familiar: productivity gains are real, but they come with governance, privacy, and reliability costs that must be managed carefully. Courts are simply applying that same logic to a higher-stakes environment. (indianexpress.com)

That parallel helps explain why judicial bodies are wary of consumer AI tools in particular. Consumer chatbots are optimized for usefulness and engagement, not evidentiary accuracy. In enterprises, that may be acceptable for first drafts and summaries. In a court, where every sentence can affect rights and remedies, the margin for error is far smaller. (indianexpress.com)

Why Public Chatbots Trigger More Alarm

Public chatbots are trained to produce plausible output from prompts, which means they can be helpful for brainstorming but dangerous for legal precision. The Supreme Court’s white paper and the UK judiciary’s guidance both underline this point by warning that public tools are not authoritative databases and may produce substantive errors that look persuasive at first glance. (indianexpress.com)

That concern is especially acute in legal research. Research is not just about finding text; it is about validating hierarchy, jurisdiction, and current authority. A chatbot that confidently cites a case out of context can mislead a judge just enough to matter. In a system built on precedent, that kind of mistake can ripple far beyond one file. (indianexpress.com)

- Consumer AI is optimized for fluency, not legal certainty.

- Legal research requires authoritative sources.

- Persuasive errors are more dangerous than obvious errors.

- Courts need traceable outputs, not just polished prose.

- Public platforms raise privacy and confidentiality concerns.

What This Means for Judges, Staff, and Litigants

For judges, the practical implication is simple: the safe path is to keep general-purpose AI out of judgment writing and substantive legal reasoning. That does not mean judges cannot use digital tools, but it does mean they must be able to defend every legal conclusion without leaning on unverifiable machine assistance. In that sense, the policy protects judges as much as it constrains them. (indianexpress.com)

For staff and court administrators, the message is more nuanced. AI may still have a place in scheduling, translation, administrative drafting, and basic workflow support, but only under strict supervision and with explicit safeguards. That creates a more bureaucratic environment, yes, but it also creates a more legally defensible one. In public justice, defensibility is a feature, not a bug. (indianexpress.com)

Litigants Are Part of the Story

Litigants and lawyers are also part of this ecosystem, whether courts like it or not. The UK guidance explicitly warned judges that parties may themselves submit AI-generated material, including deepfakes or fabricated submissions. That means the courts’ AI policy is not just inward-facing; it is also a preparation for a changing adversarial environment. (indianexpress.com)

When both sides of the courtroom can generate large volumes of polished text quickly, judges need sharper verification instincts, not weaker ones. The risk is not limited to the bench. It extends to filings, evidence, citations, translations, and even the authenticity of images or media submitted to the record. That makes judicial AI policy an anti-fraud measure as much as a technology rule. (indianexpress.com)

- Judges need clear boundaries on AI use.

- Staff may still use AI for narrow administrative tasks.

- Litigants may submit AI-generated content.

- Deepfake risk now belongs in courtroom governance.

- Anti-fraud vigilance is becoming a judicial necessity.

Strengths and Opportunities

The strongest virtue of the Punjab and Haryana High Court’s reported position is its simplicity. A bright-line rule is easier to understand, easier to enforce, and easier to defend than a vague promise of “responsible use.” It also aligns with the judiciary’s highest obligation: preserving trust in the process, not just speed in the workflow. The broader opportunity is that courts can now design separate, safer AI policies for translation, administration, and research without letting those tools drift into adjudication. (indianexpress.com)

- Protects the integrity of judicial reasoning.

- Reduces the risk of fabricated citations.

- Strengthens confidentiality safeguards.

- Encourages human verification.

- Creates a clear compliance standard.

- Leaves room for narrow, controlled AI use.

- Helps courts avoid reputational damage.

Risks and Concerns

The biggest concern is not that courts are being overly cautious; it is that inconsistent policies across institutions may create confusion. If some benches allow limited AI support while others prohibit it, lawyers and staff will face a patchwork of expectations. There is also a risk that blanket prohibitions will push unofficial usage underground, where it becomes harder to monitor and safe for nobody. Good policy needs a legitimate alternative, not just a warning. (indianexpress.com)

- Inconsistent rules across courts could cause confusion.

- Shadow use may continue despite formal bans.

- Staff may over-trust polished AI output in limited contexts.

- Overbroad fear could slow useful innovation.

- Poorly defined exceptions may weaken enforcement.

- Public misunderstanding of the policy could grow.

- Vendor and privacy issues will keep evolving.

Looking Ahead

The next phase will likely be more about governance than technology. Courts will need to decide what kinds of AI assistance, if any, can be safely used for translation, scheduling, internal knowledge search, and procedural administration. They will also need training protocols that explain not just what is banned, but why the boundary exists. That kind of institutional literacy is critical if courts want compliance to be real rather than symbolic. (indianexpress.com)

The other thing to watch is whether more high courts adopt formal written AI policies. The Gujarat example shows that detailed policy language can spread quickly, especially when it clearly separates low-risk from high-risk use. If more courts follow that model, India could end up with a fairly coherent judicial approach: permit narrow assistance, forbid substantive automation, and keep human accountability at the center. (indianexpress.com)

Key developments to watch

- Whether Punjab and Haryana issues a formal written policy or only administrative instructions.

- Whether other High Courts publish similar bans or controlled-use frameworks.

- Whether judicial training programs expand guidance on AI risks and verification.

- Whether courts adopt approved internal tools for translation or research.

- Whether appellate courts or the Supreme Court provide a broader national framework.

Source: The Haryana Story, “Punjab & Haryana High Court Bars Judges from Using AI Tools for Verdict Writing”