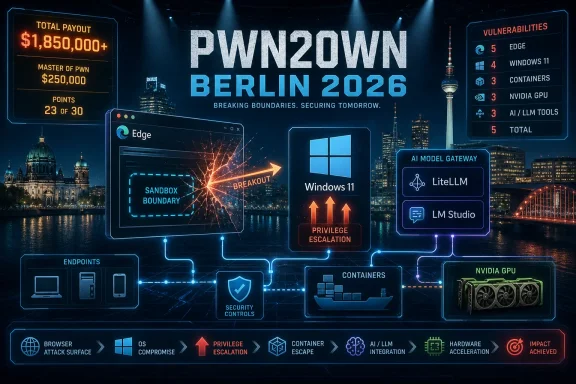

At Pwn2Own Berlin 2026 on May 14, security researchers demonstrated successful zero-day exploits against Microsoft Edge, Windows 11, LiteLLM, NVIDIA software, Red Hat Enterprise Linux, and other modern targets, earning $523,000 across 24 unique vulnerabilities on the contest’s first day. The headline is not simply that Microsoft got hacked onstage; that is practically a Pwn2Own tradition. The sharper story is that the attack surface Windows shops now defend has sprawled from browsers and kernels into AI gateways, coding agents, inference tools, and GPU infrastructure. Pwn2Own has become less a stunt show than a quarterly weather report for enterprise risk.

That is not accidental. Attackers follow value, and value has moved. The developer workstation has become a production gateway. The AI platform has become a data broker. The GPU server has become a strategic asset. The browser has become the universal application runtime. Windows remains the enterprise desktop, but it is now just one node in a larger machine.

This is why security teams should resist product-by-product tunnel vision. The Edge sandbox escape matters, but so does what happens after a browser foothold. The Windows privilege escalation matters, but so does whether credentials are exposed once elevation succeeds. The LiteLLM exploit matters, but so does whether the model gateway can reach internal metadata services, source repositories, or cloud control planes.

The defensive unit is no longer a device. It is a workflow. A developer asks an AI agent to modify code, the agent calls tools, the code builds in CI, containers run on GPU-backed infrastructure, secrets move through environment variables, and telemetry lands in half a dozen systems. Every trust boundary in that workflow is now a place where old bugs and new AI-specific failures can meet.

Source: cyberpress.org, “Microsoft Edge, Windows 11, and LiteLLM Hacked at Pwn2Own Berlin 2026”

The Old Targets Still Break, but the Map Has Changed

Pwn2Own events are often misunderstood outside security circles. A successful exploit at the contest does not mean the internet suddenly has a working public weapon against every copy of Windows 11 or Edge. It means skilled researchers, operating under strict rules, found previously unknown flaws and proved they could exploit them against current software in a controlled setting.

That distinction matters because it is the reason the contest exists. Vendors get the bugs, researchers get paid, and the rest of us get a rare public glimpse of where modern software assumptions are weakest. The details are withheld so patches can be built, but the broad pattern is visible immediately.

This year’s Berlin opening day landed with unusual force because the target list looked like an inventory of the modern enterprise stack. Microsoft Edge and Windows 11 represented the familiar endpoint. Red Hat Enterprise Linux represented traditional infrastructure. NVIDIA’s container and AI tooling represented accelerated compute. LiteLLM, LM Studio, OpenAI Codex, and other AI-oriented targets represented the fast-growing layer now being bolted into developer and operations workflows.

That mix is the point. The security perimeter is no longer the browser, the operating system, and the VPN. It is the browser, the operating system, the container runtime, the GPU toolchain, the internal model gateway, the local inference app, the coding assistant, and whatever automation glue joins them together.

Edge’s Sandbox Escape Was the Day’s Loudest Warning

The single biggest payout went to Orange Tsai of DEVCORE, who chained four logic bugs to escape the Microsoft Edge sandbox and earn $175,000. In browser security terms, that is not just a flashy win. It is a reminder that a sandbox is a boundary only until enough assumptions around it are made to collide.

Modern browsers are built around containment. The idea is that risky content can be rendered in processes that are heavily restricted, limiting what a compromised tab can do to the wider system. That architecture has been one of the great practical security improvements of the past two decades, and it has made drive-by compromise vastly harder than it used to be.

But sandboxing also creates a target of enormous value. If an attacker can move from code execution inside a renderer or browser process to code execution outside the sandbox, the compromise changes character. The difference between “the browser process is in trouble” and “the machine is in trouble” is the difference enterprise defenders build entire control stacks around.

The interesting detail here is that the Edge exploit reportedly chained logic bugs, not the more cinematic memory corruption flaws that traditionally dominate browser exploitation lore. Logic bugs are uncomfortable because they often arise from design complexity, inconsistent state, overly trusting components, or interactions that are valid in isolation but dangerous in sequence. They are harder to eliminate with compiler switches and memory-safe language migration alone.
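The phrase "valid in isolation but dangerous in sequence" is easier to see in miniature. The sketch below is a generic illustration and not a reconstruction of the Edge chain, whose details have not been published. The function names (`policy_check`, `storage_layer`, and the two handlers) are hypothetical. Each component behaves correctly on its own; the flaw only appears because the check runs before the decoding step.

```python
from urllib.parse import unquote


def policy_check(path: str) -> bool:
    """Hypothetical policy component: reject any '..' path segment."""
    return ".." not in path.split("/")


def storage_layer(path: str) -> str:
    """Hypothetical storage component: percent-decode the path before use."""
    return unquote(path)


def handle_request_unsafe(path: str) -> str:
    # Logic bug: the policy sees the encoded form, the storage layer
    # acts on the decoded form. No memory corruption is involved.
    if not policy_check(path):
        raise PermissionError("path rejected by policy")
    return storage_layer(path)


def handle_request_safe(path: str) -> str:
    # Fix: decode first, then apply the policy to the exact value
    # that will be used.
    decoded = storage_layer(path)
    if not policy_check(decoded):
        raise PermissionError("path rejected by policy")
    return decoded
```

Calling `handle_request_unsafe("%2e%2e/secret")` returns `"../secret"`, while `handle_request_safe` raises `PermissionError` for the same input. Neither a compiler flag nor a memory-safe language would have flagged the unsafe version.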

That does not make memory safety irrelevant. It makes the job bigger. Browser vendors can keep closing whole classes of memory bugs and still face the problem of sprawling privilege models, feature interactions, identity plumbing, policy enforcement, and compatibility compromises accumulated over years of product evolution.

Windows 11 Took Three Privilege-Escalation Hits Where It Hurts

Windows 11 was successfully targeted multiple times through local privilege-escalation vulnerabilities. DEVCORE used an improper access-control flaw, Marcin Wiązowski demonstrated a heap-based buffer overflow, and Kentaro Kawane chained use-after-free vulnerabilities. Each route matters less than the pattern: researchers found several ways to move upward on a fully modern Windows platform.

Privilege escalation is often less glamorous than remote code execution, but defenders know why it matters. Many real intrusions are not one perfect exploit fired from the outside. They are chains: phishing, stolen credentials, malicious documents, exposed services, weak app isolation, then elevation. The attacker who begins as a normal user wants to become a local admin, a SYSTEM-level process, a credential thief, or a domain foothold.

That is why local privilege-escalation bugs sit at the center of ransomware and espionage tradecraft. They turn limited execution into durable access. They let malware disable defenses, dump secrets, tamper with logs, install persistence, and pivot toward higher-value systems.

Windows 11 is more hardened than its predecessors in meaningful ways. Virtualization-based security, memory protections, driver controls, Smart App Control, hardware-backed credential protections, and increasingly aggressive defaults have changed the terrain. But hardening does not erase the economics of Windows exploitation; it raises the cost and shifts the favorite bug classes.

For administrators, the message is not that Windows 11 is uniquely broken. It is that endpoint compromise remains a layered problem. A privilege-escalation exploit is most damaging when it meets overprivileged users, weak application control, exposed credentials, inadequate EDR coverage, or poor patch discipline. The OS flaw is one link in a chain; enterprise configuration decides how much chain the attacker has to work with.

The AI Targets Were Not a Sideshow

The most important shift in Berlin may be that AI platforms were treated not as curiosities but as primary targets. LiteLLM was compromised through a chain that included server-side request forgery and code injection. LM Studio was also successfully exploited. OpenAI Codex was targeted, with some attempts failing or colliding depending on the entry. NVIDIA’s AI-adjacent tooling also took hits.

This is where the event stops being a Microsoft story and becomes an industry story. AI infrastructure is being adopted at startup speed but often deployed with web-app security assumptions, developer-tool trust assumptions, and infrastructure-level privileges. That combination is volatile.

LiteLLM is a useful example because model gateways are becoming common in organizations trying to abstract away multiple LLM providers. They sit between applications and models, often holding API keys, routing requests, enforcing policy, logging prompts, and connecting to internal services. If such a gateway is vulnerable to SSRF or command execution, the blast radius can extend far beyond chatbot output.
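To make the SSRF concern concrete, here is a minimal sketch of the kind of outbound-URL check a gateway could apply before fetching anything a caller can influence. It is not LiteLLM's code and says nothing about the specific bug shown in Berlin. The names `ALLOWED_SCHEMES`, `is_public_address`, and `check_outbound_url` are assumptions made for illustration, as is the HTTPS-only policy.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

# Hypothetical policy: the gateway may only fetch over HTTPS.
ALLOWED_SCHEMES = {"https"}


def is_public_address(ip_text: str) -> bool:
    """Return True only for globally routable addresses.

    Loopback, private (RFC 1918), and link-local ranges, including the
    169.254.169.254 cloud metadata address, are all non-global.
    """
    return ipaddress.ip_address(ip_text).is_global


def check_outbound_url(url: str, resolve=socket.getaddrinfo) -> bool:
    """Decide whether a caller-influenced URL is acceptable to fetch."""
    try:
        parts = urlsplit(url)
        if parts.scheme not in ALLOWED_SCHEMES or not parts.hostname:
            return False
        port = parts.port or 443
        infos = resolve(parts.hostname, port, proto=socket.IPPROTO_TCP)
    except (ValueError, OSError):
        # Malformed URL, invalid port, or DNS failure: refuse.
        return False
    # Drop any IPv6 zone suffix such as "%eth0" before parsing.
    addresses = {info[4][0].split("%")[0] for info in infos}
    # Every resolved address must be public, not just the first one.
    return bool(addresses) and all(is_public_address(a) for a in addresses)
```

A check like this is only one layer. Because the name is resolved here and again when the request is actually made, a real deployment would also need to connect to the validated address and enforce egress rules at the network level.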

The same concern applies to local inference tools and coding agents. These products are attractive precisely because they sit close to sensitive material: source code, credentials, internal documentation, build systems, repositories, test harnesses, and developer workstations. The closer an AI assistant gets to useful work, the closer it gets to dangerous authority.

Security teams have spent years teaching organizations that CI/CD systems, package registries, and developer laptops are production-adjacent assets. AI coding agents now belong in that category. A vulnerable coding assistant is not merely an app bug. It is a possible bridge into the software supply chain.

AI Security Is Rediscovering Web Security the Hard Way

There is a temptation to describe AI security as an entirely new discipline. Some parts are new: prompt injection, tool manipulation, model behavior, retrieval poisoning, and policy bypasses do not map cleanly onto older categories. But the Berlin results are a reminder that many AI products are also just networked software with old-fashioned bugs.

SSRF is not new. Code injection is not new. Path traversal is not new. Weak access control is not new. Overly permissive allow lists are not new. The novelty is that these bug classes are now appearing in systems that may be granted unusual trust because they are branded as AI platforms, productivity accelerators, or internal automation layers.

That trust gap is dangerous. A model router may be treated like middleware but given access to secrets from multiple providers. A coding agent may be treated like a convenience feature but allowed to read repositories and execute commands. A local inference app may be installed by developers outside the normal procurement path but still bind services, open ports, or process sensitive files.
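One way to narrow that gap for a coding agent is to treat every command it proposes as untrusted input. The sketch below assumes a hypothetical allowlist (`ALLOWED_COMMANDS`) and a hypothetical checker (`is_command_allowed`); it is not taken from any of the products named here. The idea is simply that the agent gets a short list of programs and subcommands rather than a shell.

```python
import shlex

# Hypothetical allowlist: program name -> permitted subcommands.
# None means the program may be run with any arguments.
ALLOWED_COMMANDS = {
    "git": {"status", "diff", "log"},
    "ls": None,
}

# Characters that would let one command line become several.
SHELL_METACHARACTERS = set(";|&`$><\n")


def is_command_allowed(command_line: str) -> bool:
    """Return True if an agent-proposed command fits the allowlist."""
    if any(ch in SHELL_METACHARACTERS for ch in command_line):
        return False
    try:
        argv = shlex.split(command_line)
    except ValueError:
        # Unbalanced quotes and similar parse errors are rejected.
        return False
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        return False
    allowed_subcommands = ALLOWED_COMMANDS[argv[0]]
    if allowed_subcommands is None:
        return True
    return len(argv) > 1 and argv[1] in allowed_subcommands
```

With this policy, `git status` passes while `git push origin main` and `git status; rm -rf /` are refused. An allowlist does not make the permitted commands safe with every argument, so it complements rather than replaces sandboxing and scoped credentials.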

The industry has been here before. Containers began as developer convenience and then became a core production substrate. CI systems began as build helpers and became crown jewels. Browser extensions began as personal productivity tools and became enterprise data-exfiltration risks. AI tooling is moving through the same cycle, only faster.

The lesson from Pwn2Own is not to freeze AI adoption. It is to stop pretending AI tooling can skip the boring controls. Authentication, authorization, input validation, egress restrictions, secret scoping, sandboxing, logging, update hygiene, and least privilege all still apply. The model may be novel; the process around it cannot be.

NVIDIA’s Role Shows How Compute Became Part of the Attack Surface

NVIDIA’s presence in the results is another sign of where enterprise computing has moved. Researchers exploited NVIDIA Container Toolkit and NVIDIA Megatron Bridge, with reported issues including path traversal and weak access control. These are not consumer graphics-driver headlines. They are infrastructure headlines.

The GPU stack has become a production dependency for AI workloads, scientific computing, media processing, and high-performance analytics. That stack includes drivers, runtimes, container integrations, orchestration glue, model frameworks, and data pipelines. Every layer that makes accelerated compute easier to use also gives defenders another layer to inventory and patch.

Container tooling is especially sensitive because it often sits near workload isolation boundaries. If a flaw lets an attacker escape expected constraints, tamper with host resources, or access files and devices they should not touch, the consequences can be severe. In AI environments, those hosts may contain expensive hardware, proprietary models, training data, credentials, and high-value internal services.
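Path traversal, one of the reported bug classes, is a good example of how small the underlying mistake can be. The following is a generic sketch of a containment check, not NVIDIA's code; `resolve_inside` and the example base directory are hypothetical. It resolves the requested path fully and then verifies that the result still sits under the intended base directory.

```python
from pathlib import Path


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Resolve user_path relative to base_dir, refusing any escape.

    resolve() collapses '..' segments and follows symlinks, so the
    comparison is made on the final location rather than on the
    string the caller supplied.
    """
    base = Path(base_dir).resolve()
    candidate = (base / user_path).resolve()
    # An absolute user_path replaces base entirely when joined,
    # so it is caught by the same containment test.
    if candidate != base and base not in candidate.parents:
        raise ValueError(f"path escapes base directory: {user_path!r}")
    return candidate
```

For a base of `/srv/models`, a request for `llama/weights.bin` resolves normally, while `../../etc/passwd` or `/etc/passwd` raises `ValueError`. The check still has to be paired with opening the file in a way that cannot be redirected afterwards, which is where race conditions enter the picture.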

This is why the old division between “security infrastructure” and “compute infrastructure” looks increasingly artificial. The GPU server is not just a performance box. It is an identity-bearing, secret-handling, network-connected, multi-tenant machine. Its toolchain deserves the same scrutiny as a hypervisor, container runtime, or database server.

The NVIDIA results also underline a procurement reality. Organizations racing to build AI capacity are often focused on supply, performance, and developer enablement. Security review arrives later, after the platform is already expensive, politically important, and difficult to redesign. That is how technical debt becomes strategic risk.

Linux and Windows Shared the Same Enterprise Story

IBM X-Force demonstrated a race-condition privilege escalation against Red Hat Enterprise Linux for Workstations, while Windows 11 saw its own elevation bugs. The symmetry is worth noting. Pwn2Own did not show that one platform is safe and the other is doomed. It showed that modern endpoints and workstations, regardless of operating system, remain dense targets.

Race conditions are particularly unpleasant because they exploit timing and state. They can be fragile to develop but powerful when reliable. They also remind defenders that concurrency, performance optimization, and privilege separation are uneasy roommates.
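The simplest member of the race-condition family is the check-then-use gap. The sketch below is a textbook illustration and makes no claim about the Red Hat bug, whose details are not public; both function names are hypothetical. The first version checks and then acts in two steps, leaving a window in which another process can change the state. The second asks the kernel to do both in one operation.

```python
import os


def write_report_racy(path: str, data: bytes) -> None:
    """Check-then-use: another process can create `path` (for example
    as a symlink to a sensitive file) between exists() and open()."""
    if not os.path.exists(path):
        with open(path, "wb") as handle:
            handle.write(data)


def write_report_atomic(path: str, data: bytes) -> None:
    """Single-step create: with O_CREAT | O_EXCL the open fails with
    FileExistsError if anything already exists at `path`, including
    a symlink, so there is no gap between the check and the use."""
    flags = os.O_WRONLY | os.O_CREAT | os.O_EXCL
    fd = os.open(path, flags, 0o600)
    with os.fdopen(fd, "wb") as handle:
        handle.write(data)
```

When the racy version runs with elevated privileges in a directory an unprivileged user can write to, that window is exactly the sort of timing-and-state dependency an attacker tries to win.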

For mixed Windows and Linux shops, the practical takeaway is that privilege boundaries need to be monitored as operational assumptions, not simply trusted as product features. Workstation hardening, endpoint telemetry, patch velocity, local admin minimization, and application control matter across operating systems. The badge on the laptop is less important than the privileges available after first foothold.

The Red Hat result also matters because Linux workstations and servers are deeply embedded in developer and AI environments. A vulnerable Linux workstation may have SSH keys, cloud credentials, Kubernetes contexts, source repositories, package-publishing rights, or direct access to training and deployment pipelines. In 2026, compromising a developer’s Linux box can be as consequential as compromising a Windows admin workstation.

This is another place where security architecture has lagged cultural reality. Many organizations formally protect production while informally trusting development. Attackers do not share that distinction. They go where the credentials, build paths, and human shortcuts are.

Collisions and Failures Are Part of the Signal

Not every attempt worked. Some exploits failed within the allotted time. Others were classified as collisions, meaning the vulnerability was already known to the vendor or overlapped with previous reporting. That does not make those entries irrelevant. It makes the contest more realistic.

Exploit development is uncertain even for elite researchers. Environmental details matter. Reliability matters. Time pressure matters. A bug that works in the lab can fail onstage, and a chain that looks elegant in a write-up can collapse under contest constraints.

Collisions are more complicated. On one hand, they reduce the drama of a “new” zero-day. On the other, they show that multiple researchers may be converging on the same weak areas. If different teams independently find similar bugs in the same product surface, that is often evidence of architectural pressure, not random bad luck.

For vendors, collisions can be embarrassing or reassuring depending on patch status. If the issue is already known and moving through remediation, the process is working. If it is known but unpatched across live targets, the public demonstration raises the temperature.

For users, the distinction matters mostly in timing. Pwn2Own bugs are disclosed to vendors under coordinated processes, not dumped as exploit kits. The risk window becomes a patch-management question: how quickly vendors ship fixes, how clearly they communicate severity, and how quickly organizations deploy updates once those fixes arrive.

Microsoft’s Problem Is Not That Edge and Windows Were Hacked

It would be easy, and lazy, to frame the Berlin results as another round of Microsoft insecurity. Edge was hacked. Windows 11 was elevated. Therefore Microsoft failed. That reading misses both the purpose of Pwn2Own and the broader lesson.

Microsoft products are targeted because they are valuable, heavily deployed, and hardened enough to make successful exploitation meaningful. A public contest that pays serious money for reliable exploits against current builds will naturally draw researchers toward the platforms with the largest enterprise footprint. If anything, Microsoft’s prominence at Pwn2Own reflects its continued centrality.

The more serious critique is about complexity. Edge inherits the modern browser’s vast attack surface. Windows inherits decades of compatibility, drivers, privilege models, management hooks, legacy behavior, and enterprise integration. Even when the company improves security architecture, the total system remains enormous.

That has practical consequences. Windows administrators should not respond to Pwn2Own with panic, but neither should they dismiss it as theater. The contest previews the kinds of bugs that may appear in future Patch Tuesday cycles and the kinds of exploit chains adversaries would like to assemble if they can obtain or rediscover similar flaws.

The correct posture is boring and disciplined. Keep Edge and Windows patched. Reduce local admin rights. Enforce application control where possible. Treat browsers as high-risk application platforms. Monitor suspicious child processes, privilege changes, credential access, and unusual outbound connections. The security story is not one magical setting; it is the accumulation of friction.
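As one illustration of the "suspicious child processes" item, here is a minimal sketch that flags a browser process spawning a shell or script host. It is not a description of any vendor's detection logic. The event field names (`parent_image`, `image`) and the function name `flag_suspicious` are hypothetical, and the process lists are illustrative rather than complete. A real deployment would express the same idea in whatever rule language its endpoint tooling supports.

```python
import ntpath  # parses Windows-style paths on any operating system

# Illustrative lists only; tune them to the environment.
BROWSER_PARENTS = {"msedge.exe"}
SHELL_CHILDREN = {
    "cmd.exe", "powershell.exe", "pwsh.exe",
    "wscript.exe", "cscript.exe", "mshta.exe",
}


def flag_suspicious(events):
    """Return process-creation events where a browser spawned a shell or script host.

    Each event is a dict with hypothetical keys 'parent_image' and 'image',
    holding the full path of the parent and child executables.
    """
    flagged = []
    for event in events:
        parent = ntpath.basename(event["parent_image"]).lower()
        child = ntpath.basename(event["image"]).lower()
        if parent in BROWSER_PARENTS and child in SHELL_CHILDREN:
            flagged.append(event)
    return flagged


if __name__ == "__main__":
    sample = [
        {
            "parent_image": r"C:\Program Files (x86)\Microsoft\Edge\Application\msedge.exe",
            "image": r"C:\Windows\System32\cmd.exe",
        },
        {
            "parent_image": r"C:\Windows\explorer.exe",
            "image": r"C:\Windows\System32\cmd.exe",
        },
    ]
    for hit in flag_suspicious(sample):
        print("review:", hit["parent_image"], "->", hit["image"])
```

A rule like this will not catch an exploit that stays inside the browser process, and it may fire on legitimate helper launches. It is one layer of friction among several, which is the point of the paragraph above.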

The Patch Clock Starts Before the CVE Exists

One frustration with Pwn2Own coverage is that defenders receive just enough information to worry and not enough to block the specific bug. That is by design. The vulnerability details go to vendors first, and public technical disclosure generally waits until patches are available or coordinated timelines expire.

This creates a familiar interim period. Security teams know that certain products were exploited, but they do not yet have CVEs, proof-of-concept details, or vendor advisories to map into scanners and dashboards. That can feel unsatisfying in organizations built around ticketing systems and vulnerability feeds.

The best response is to treat the event as a trigger for readiness rather than emergency mitigation. Identify where affected products exist. Confirm update channels. Check whether Edge is managed and current. Review Windows 11 exposure and privilege models. Inventory AI tools such as LiteLLM, LM Studio, coding agents, model gateways, and NVIDIA container components. Make sure the owners of those systems know patches are likely coming.
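The readiness check described above can be sketched as a simple cross-reference between an asset inventory and a watchlist of the products named in the contest results. This is a minimal sketch under stated assumptions: the inventory format (dicts with `host`, `name`, and `owner` keys), the function name `find_exposed`, and the substring patterns are all hypothetical, and real package or install names will vary by platform.

```python
from collections import defaultdict

# Product labels come from the article; the lowercase substrings used to
# match them are illustrative guesses, not authoritative package names.
WATCHLIST = {
    "litellm": "LiteLLM",
    "lm studio": "LM Studio",
    "lm-studio": "LM Studio",
    "nvidia-container": "NVIDIA container tooling",
    "msedge": "Microsoft Edge",
}


def find_exposed(inventory):
    """Group inventory rows by watchlisted product.

    `inventory` is an iterable of dicts with hypothetical keys 'host',
    'name', and (optionally) 'owner'. Returns a dict mapping each matched
    product label to a list of (host, owner) tuples.
    """
    hits = defaultdict(list)
    for row in inventory:
        name = row["name"].lower()
        for needle, product in WATCHLIST.items():
            if needle in name:
                hits[product].append((row["host"], row.get("owner", "unknown")))
                break  # one product label per inventory row is enough
    return dict(hits)


if __name__ == "__main__":
    sample = [
        {"host": "gw-01", "name": "litellm", "owner": "platform"},
        {"host": "dev-17", "name": "LM Studio", "owner": "data-science"},
        {"host": "gpu-03", "name": "nvidia-container-toolkit"},
        {"host": "web-02", "name": "nginx", "owner": "web"},
    ]
    for product, hosts in sorted(find_exposed(sample).items()):
        print(product, hosts)
```

Rows that come back with an owner of `unknown` are arguably the most useful output: they are the systems where nobody is yet on the hook to apply the patch when it ships.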

This is especially important for AI tools because many deployments may not yet be in the traditional asset inventory. Developer teams may have installed local inference applications. Platform teams may have stood up model routers. Data-science teams may be running GPU containers outside standard production patterns. If security does not know where the software lives, it cannot patch it when the advisory lands.

The uncomfortable truth is that “wait for the CVE” is no longer a complete vulnerability-management strategy. For fast-moving platforms, especially AI tooling, the inventory problem is the vulnerability problem. You cannot defend what procurement never saw and endpoint management never enrolled.

The Contest Is Becoming a Mirror for Enterprise Architecture

Pwn2Own used to be easiest to explain as a browser and operating-system contest with occasional detours. That era is gone. Berlin’s first day looked like a mirror held up to enterprise architecture in 2026: endpoints, browsers, Linux workstations, AI infrastructure, coding agents, local inference, container tooling, and GPU frameworks all stacked into one risk surface.

That is not accidental. Attackers follow value, and value has moved. The developer workstation has become a production gateway. The AI platform has become a data broker. The GPU server has become a strategic asset. The browser has become the universal application runtime. Windows remains the enterprise desktop, but it is now just one node in a larger machine.

This is why security teams should resist product-by-product tunnel vision. The Edge sandbox escape matters, but so does what happens after a browser foothold. The Windows privilege escalation matters, but so does whether credentials are exposed once elevation succeeds. The LiteLLM exploit matters, but so does whether the model gateway can reach internal metadata services, source repositories, or cloud control planes.

The defensive unit is no longer a device. It is a workflow. A developer asks an AI agent to modify code, the agent calls tools, the code builds in CI, containers run on GPU-backed infrastructure, secrets move through environment variables, and telemetry lands in half a dozen systems. Every trust boundary in that workflow is now a place where old bugs and new AI-specific failures can meet.

Berlin’s First-Day Scoreboard Leaves Little Room for Complacency

The concrete lessons from the first day are less sensational than the phrase “hacked at Pwn2Own,” but they are more useful. The event gives administrators a preview of where vendor patches, security reviews, and asset-discovery efforts should focus next.

- Microsoft Edge suffered a high-value sandbox escape, which reinforces the browser’s role as both a productivity platform and a critical isolation boundary.

- Windows 11 saw multiple local privilege-escalation demonstrations, which makes least privilege and endpoint hardening as important as ordinary patch deployment.

- LiteLLM and LM Studio showed that AI infrastructure must be inventoried, patched, and threat-modeled like any other exposed application platform.

- NVIDIA’s container and AI tooling results showed that GPU infrastructure is now part of the enterprise security perimeter, not merely a performance layer.

- Failed attempts and collisions still matter because they reveal where researchers are concentrating and where vendors may already be racing to close gaps.

- Security teams should use the disclosure window to find affected deployments before advisories arrive, especially for AI tools that may have entered through developer adoption rather than central procurement.

Source: cyberpress.org Microsoft Edge, Windows 11, and LiteLLM Hacked at Pwn2Own Berlin 2026