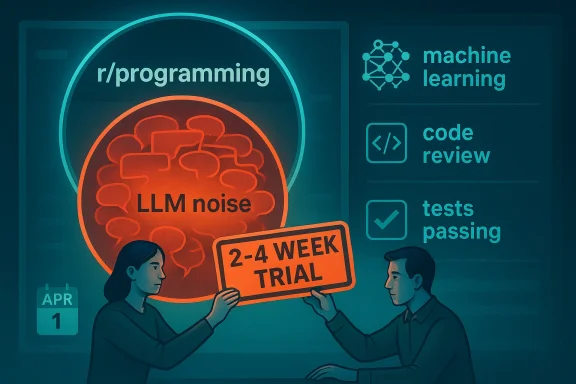

The biggest programming community on Reddit has drawn a line around the most exhausting corner of the AI discourse, and the move says as much about moderation fatigue as it does about machine learning. r/programming has begun a temporary ban on LLM-related posts for a trial period of two to four weeks in April, while still allowing broader AI and machine-learning discussion that is actually technical, educational, and relevant to software engineering. The decision lands in the middle of a noisy moment for the industry, where coding agents are becoming more capable, AI content is saturating feeds, and many developers are increasingly skeptical of low-signal hype.

Source: Tom's Hardware The largest programming community on Reddit just banned all content related to AI LLMs — r/programming is prioritizing only high-quality discussions about AI

Overview

What happened on r/programming is not a blanket rejection of AI, but a highly targeted attempt to reclaim a technical community from a category of posts the moderators say overwhelms everything else. The announcement makes a crucial distinction: LLMs are the target, not AI broadly. Posts about classic AI techniques, machine-learning systems, or detailed engineering write-ups remain welcome so long as they are not centered on LLMs. (reddit.com)

That nuance matters because the subreddit has always tried to position itself as a place for detailed, technical learning rather than general tech chatter. In the moderators’ own framing, the problem is signal-to-noise ratio, not ideology. They argue that LLM posts dominate the conversation so thoroughly that other software topics are drowned out. (reddit.com)

The timing of the announcement, however, turned a moderation experiment into a minor internet spectacle. Because it went live on April 1, many commenters assumed it was an April Fools’ joke, even though the mods insisted it was a genuine trial that happened to align with the calendar. That ambiguity gave the thread a second life, because every Reddit moderation decision becomes more combustible when users suspect the punchline is hidden in the policy. (reddit.com)

The move also reflects a broader community shift. Modern coding forums were built around a culture of expertise, craft, and hard-earned technical fluency. As LLM-powered coding tools have become more visible, communities like r/programming increasingly face a wave of beginner questions, product pitches, and speculative “will AI replace developers?” posts that often generate heat without adding much value. OpenAI’s Codex and Anthropic’s Claude Code are now legitimate engineering tools, but their rise has also normalized a flood of shallow commentary around them.

Background

The most important context here is that r/programming was never meant to be a generic AI enthusiasm hub. The subreddit’s identity has long leaned toward serious software engineering, implementation details, and discussions that reward technical depth rather than broad trends. When a single topic starts to dominate that kind of space, moderation becomes less about censorship and more about protecting the room’s original purpose. (reddit.com)

That said, the sub is not acting in a vacuum. The broader internet has spent the last two years cycling through overlapping waves of AI excitement, AI fear, and AI backlash. Every major product launch seems to trigger the same pattern: hype, copycat content, hot takes, backlash, and then a repeat performance. In that environment, moderators of high-traffic communities are often forced to choose between being inclusive and being legible. r/programming appears to be choosing legibility.

This is especially relevant because AI coding tools have become undeniably real. OpenAI’s Codex is now a cloud-based software engineering agent that can work on code tasks in parallel and is available through ChatGPT and developer workflows. Anthropic’s Claude Code is similarly positioned as an agentic coding system that reads codebases, makes changes, runs tests, and commits code. The fact that these products are serious enough for enterprise workflows makes them fair game for technical discussion, but also makes them magnets for repetitive posting.

Why moderators care about LLM saturation

The subreddit’s logic is straightforward: not every topic that matters in software is equally healthy for a specific community’s front page. LLMs are everywhere, but ubiquity is not the same as usefulness. A high-volume topic can still be low value if it arrives mostly as recycled news, simplistic predictions, and self-promotional “look what I built” posts. (reddit.com)

That helps explain why the mods are not banning AI outright. They are trying to preserve expert conversation while filtering out a category of posts they see as structurally noisy. This is a classic community-management tradeoff: the more popular a topic becomes, the more likely it is to require filtering in order to remain useful. Popularity is not always proportional to quality. (reddit.com)

Why the timing caused confusion

April 1 is a terrible day to announce anything serious on the internet. The announcement’s date immediately invited skepticism, and commenters reacted accordingly, with several suggesting the moderators had intentionally picked the worst possible time. The mods responded that the timing simply lined up with the start of a new month and a trial period, not a prank. (reddit.com)

That back-and-forth is revealing. In a forum culture where irony is practically a native language, moderation announcements can be read as theater even when they are administrative. The result is a kind of policy credibility tax: the more a community jokes about itself, the harder it is to communicate serious changes cleanly. That is not unique to Reddit, but Reddit tends to magnify it. (reddit.com)

Why this matters beyond Reddit

Reddit remains a powerful distribution layer for technical news and developer culture. When a large community changes its rules, smaller communities often follow suit in spirit if not in letter. That makes the r/programming experiment worth watching even for people who never visit the subreddit. A successful ban can normalize stricter topic hygiene elsewhere; a failed one can reinforce the idea that moderation by category is too blunt.

What the Ban Actually Covers

The ban is narrower than many headlines suggest. It targets content relating to LLMs, which the moderators describe as posts that are not aligned with the community’s goals. At the same time, the mods explicitly say broader AI content is still allowed, including technical write-ups about machine learning or a traditional AI implementation in a programming language. (reddit.com)

That distinction matters because many readers instinctively collapse “AI” and “LLM” into one blob. They are not the same thing, and the subreddit is drawing exactly that line. A paper on model optimization, a deep dive on neural-network training, or an engineering breakdown of an algorithm may still pass muster if it is technically substantive and not just another LLM discourse cycle. (reddit.com)

Categories likely to be removed

According to the announcement, the ban covers news stories about new LLMs, guides on building or modifying them, and broad discussions about whether AI will replace developers. That is a fairly expansive definition, which is part of why the policy will likely generate edge cases and moderation disputes. The moderators know this, and they are relying on reports and manual judgment rather than pretending the line is easy. (reddit.com)

- New model launch posts

- Tutorials focused on LLM construction

- “Will AI take my job?” think pieces

- Viral blog posts centered on chatbots

- Commentary threads on generative-code trends

Categories still likely to stay

The moderators say detailed machine-learning discussions are fine, as are articles about non-LLM AI systems. That means the ban is less a rejection of AI engineering and more an attempt to cut off the loudest, most repetitive branch of the conversation. In practical terms, the sub is trying to keep room for difficult technical work while excluding trend-chasing. (reddit.com)

- Classical machine-learning write-ups

- Non-LLM AI implementation details

- Software-engineering lessons from AI tooling

- Research-adjacent technical analysis

- Posts centered on code, not hype
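The removed-versus-allowed split above can be pictured as a triage rule. What follows is a minimal sketch only, not the moderators’ actual process, which the announcement describes as relying on user reports and manual judgment. The keyword lists, the `triage` function, and its three labels are all hypothetical, invented here for illustration. The sketch deliberately routes LLM matches to human review instead of removing anything automatically.

```python
import re

# Hypothetical keyword lists, invented for illustration only.
LLM_TERMS = ["llm", "large language model", "chatgpt", "claude", "codex", "gpt"]
ML_TERMS = ["machine learning", "neural network", "gradient descent", "decision tree"]


def _mentions(text, terms):
    """Return True if any term (optionally pluralized) appears as a whole word or phrase."""
    lowered = text.lower()
    return any(re.search(rf"\b{re.escape(term)}s?\b", lowered) for term in terms)


def triage(title):
    """Label a post title; LLM matches go to human review, never auto-removal."""
    if _mentions(title, LLM_TERMS):
        return "needs_review"
    if _mentions(title, ML_TERMS):
        return "allowed_ml"
    return "allowed"


for example in (
    "Will LLMs replace developers?",
    "Implementing a neural network from scratch in C",
    "Why our B-tree rewrite halved query latency",
):
    print(example, "->", triage(example))
```

A keyword match is a blunt signal: a post that merely mentions an LLM in passing would be flagged too. That is why the sketch stops at flagging and leaves the decision to a person.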

The moderation challenge

The mods also acknowledged that identifying LLM-generated or LLM-adjacent content is not always straightforward. They said some people may even accuse a human-written post of being machine-generated based on stylistic cues, which underlines how slippery this terrain has become. In other words, the policy is simple in theory but messy in execution. That is exactly where community trust gets tested. (reddit.com)

Why Developers Are Frustrated

A lot of the backlash against LLM content is not really about the models themselves. It is about the way the surrounding discourse has become repetitive, absolutist, and often disconnected from actual engineering practice. Developers spend time debugging production systems, reviewing code, and shipping features; they do not always want a feed dominated by high-level predictions and low-effort demos. (reddit.com)

There is also a cultural dimension. Older programming communities often prize craft, precision, and hard-won competence. That makes “vibe coding” and other casual, prompt-first narratives feel almost sacrilegious to some long-time participants. The issue is not that assistants like Codex or Claude Code are worthless; it is that the conversation around them often treats deep engineering as optional.

The quality gap

The moderators’ complaint about LLMs is really a complaint about quality collapse. When the majority of posts in a community are variations of the same topic, even legitimate questions can start to feel like spam. That creates a feedback loop where experienced contributors disengage and the subreddit becomes more beginner-heavy, which in turn lowers the average discussion quality further. (reddit.com)

This is one reason many technical spaces become self-protective. They are not necessarily hostile to newcomers, but they are hostile to being flooded. The difference is subtle, but important. A forum that loses its expert core often stops functioning as an expert forum altogether. That is the real fear behind this kind of moderation. (reddit.com)

The employment anxiety layer

The AI boom also lands on top of a fragile software job market. Employment is outside the scope of the ban itself, but it helps explain why LLM discussions provoke strong reactions. Workers worry about displacement, junior developers worry about entry points, and senior engineers worry that management will confuse automation demos with real productivity gains.

That anxiety is not hypothetical. OpenAI and Anthropic both frame their tools as ways to accelerate real engineering work, not merely autocomplete. OpenAI says Codex can handle code changes, bug fixes, and pull request proposals, while Anthropic says Claude Code changes files, runs tests, and can handle tasks that go beyond autocomplete. Those are exactly the kinds of claims that make developers both intrigued and wary.

The New Reality of AI Coding Tools

The strongest argument against blanket anti-LLM sentiment is that the tools are now too important to ignore. OpenAI’s Codex has evolved from research preview to a broadly available coding agent with integrations, admin controls, and workspace-level usage. Anthropic’s Claude Code is similarly being positioned as a serious software-development system, not a novelty chatbot.

That creates a paradox for communities like r/programming. The topic is both over-discussed and genuinely relevant. When a technology becomes deeply integrated into developer workflows, it naturally deserves discussion. But when discussion is dominated by launch cycles, hot takes, and self-promotion, relevance alone is not enough to justify prominence. (reddit.com)

Enterprise versus consumer impact

For enterprises, AI coding tools are now part of serious workflow planning. OpenAI has emphasized sandboxing, test runs, reviewability, and admin features for Codex, while Anthropic highlights agentic execution across codebases and tests. That means the business value case is becoming concrete, especially for teams looking to accelerate refactors, bug fixing, or internal automation.

For consumers and hobbyists, the picture is more chaotic. Many people encounter AI coding tools through demos, tutorials, or social media clips rather than structured engineering workflows. That makes the consumer-facing narrative far more vulnerable to exaggeration, which feeds the exact kind of low-quality content the r/programming moderators want to suppress. The tools are real; the discourse is often not. (reddit.com)

Why code-generation hype backfires

The more these tools are marketed as magic, the more backlash they invite from people who actually have to work with the output. OpenAI’s own material stresses human review, test verification, and guardrails, which is an implicit admission that agentic code generation still needs supervision. That honesty is healthy, but it also contrasts sharply with the overconfident tone of much online AI commentary.

In practice, this means the most useful discussions are often the least flashy. Experienced engineers talking about review workflows, test harnesses, and failure modes produce better signal than endless debate about whether a model is “smart.” The subreddit’s ban is effectively a bet that technical culture benefits more from those quieter conversations. (reddit.com)

The Moderation Philosophy Behind the Decision

The r/programming moderators are not trying to win an argument about the future of work. They are trying to curate a space that remains useful to its members. That may sound mundane, but it is actually one of the hardest problems in online community management: when a topic is both important and oversaturated, what should a serious forum do with it? (reddit.com)

Their answer is temporary exclusion, monitored by trial. That is a pragmatic approach, because it gives them room to compare behavior before and after the ban. If the subreddit becomes more substantive, the policy can be extended. If participation suffers or the remaining discussion becomes stale, the mods can roll it back. (reddit.com)

A trial is not a verdict

This is why the “temporary” label matters so much. A trial ban lets moderators experiment without pretending to have the final answer. It also gives the community a way to absorb the change without immediately treating it as a permanent ideological shift. That is good governance, even if the tone is abrasive. (reddit.com)

- Trial policies are reversible

- Community reaction can be measured

- Edge cases can be documented

- Enforcement can be adjusted

- Long-term rules can be based on evidence
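One way to make “based on evidence” concrete is to compare how concentrated the front page is before and during the trial. The sketch below is an illustration under stated assumptions, not something the moderators have said they do. The `topic_share` and `topic_entropy` helpers are hypothetical, and the topic labels in the usage lines are made-up placeholders, not real subreddit data.

```python
import math
from collections import Counter


def topic_share(topics, topic):
    """Fraction of posts carrying the given topic label (0.0 for an empty list)."""
    if not topics:
        return 0.0
    return topics.count(topic) / len(topics)


def topic_entropy(topics):
    """Shannon entropy in bits of the topic distribution; higher means more varied."""
    counts = Counter(topics)
    total = len(topics)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Made-up placeholder labels, NOT measurements from r/programming.
before = ["llm", "llm", "llm", "databases"]
during = ["compilers", "databases", "networking", "testing"]

print("LLM share before/during:", topic_share(before, "llm"), topic_share(during, "llm"))
print("More varied during trial:", topic_entropy(before) < topic_entropy(during))
```

Neither number says anything about quality on its own. A front page can be varied and still shallow, so a measure like this would only complement the qualitative judgment the moderators describe.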

The problem of asymmetric attention

High-volume topics do not just consume space; they also alter what members expect the subreddit to be about. Once a front page fills with one trend, posters begin to imitate it, whether they genuinely care about the topic or just want attention. That is how topic drift accelerates. (reddit.com)

In that sense, the ban is about protecting a community’s future from its current attention economy. A subreddit with 6.9 million members has a scale problem that a smaller forum would never face. The bigger the audience, the more likely the most clickable topic will crowd out everything else.

The role of enforcement clarity

A rule is only as good as its enforceability. The moderators admitted that identifying LLM-generated or LLM-adjacent content can be difficult, which means the ban will depend heavily on judgment calls. That kind of discretion can work, but it also creates the risk of uneven enforcement and user resentment. (reddit.com)

Competitive Implications for Reddit and Other Communities

If the policy works, other technical communities may follow with their own topic filters. Reddit is full of subreddits that are quietly battling the same problem: AI-related posts that are technically relevant but socially exhausting. A successful ban could become a template for other communities that want to reduce noise without turning into anti-AI echo chambers. (reddit.com)

There is also a competitive angle between platforms and forums. Reddit has long marketed itself as a place for niche expertise, but that promise weakens when communities become indistinguishable from each other in content style. By trying to preserve distinctiveness, r/programming is also protecting Reddit’s value proposition for technical users.

What rivals may take from this

Smaller programming communities, Discords, and forums will likely watch the experiment closely. If r/programming’s front page becomes calmer and more technical, others may decide to copy the approach. If it becomes quieter but less useful, they will cite it as evidence that category bans are too blunt. (reddit.com)

- Technical forums may adopt narrower topic filters

- Moderators may differentiate AI from LLMs more carefully

- Community rules may shift toward stricter editorial curation

- Cross-posting norms may tighten across developer spaces

- “AI fatigue” may become a formal moderation category

Impact on AI discourse

A ban on LLM content in a major programming subreddit is not the same thing as a ban on AI discourse at large. But it does send a signal that programmers are no longer willing to let every AI launch dominate every technical conversation. That may encourage a healthier split between genuine engineering coverage and generic “AI is changing everything” content. The internet probably needs that split more than it needs another model launch thread. (reddit.com)

Strengths and Opportunities

The biggest strength of r/programming’s move is that it prioritizes community fit over trend-chasing. It also gives moderators a concrete way to reduce clutter without banning all AI-related technical discussion. If the experiment works, it could improve both discussion quality and contributor retention. (reddit.com)

- Restores focus on software engineering

- Reduces repetitive LLM hype posts

- Preserves room for real AI/ML analysis

- Gives moderators a testable policy

- May improve front-page diversity

- Encourages higher-effort submissions

- Sets a precedent for curated technical spaces

Risks and Concerns

The obvious risk is overcorrection. If moderators draw the line too aggressively, they may suppress genuinely useful coverage of a technology that is already transforming software development. Because LLMs are now part of mainstream coding workflows, an overly broad ban can accidentally exclude serious technical insight along with the noise. (reddit.com)

- Legitimate technical posts may get caught

- Enforcement may vary by moderator judgment

- Users may feel the policy is anti-AI rather than anti-spam

- Important industry developments could be underreported

- The subreddit may become less current

- New contributors may see the rule as hostile

- The April 1 timing may keep sowing confusion

Looking Ahead

The next few weeks will tell us whether the subreddit’s users respond to scarcity with better discussion or simply migrate their LLM debates elsewhere. If the front page becomes more varied, more substantive, and less repetitive, the moderators will have a strong case for extending the trial. If the change merely reduces activity without improving quality, the policy may be revised or dropped. (reddit.com)

The broader test is cultural as much as procedural. Can a large technical community draw a line between meaningful AI engineering and generic AI chatter without being accused of hostility toward innovation? That question matters because the software world is not going to stop using LLMs, but it may become more selective about where and how it talks about them.

Signals to watch

- Whether the temporary ban becomes permanent

- Whether LLM-adjacent technical analysis remains allowed in practice

- Whether other major programming communities adopt similar rules

- Whether the subreddit’s engagement becomes more or less substantive

- Whether users accept the distinction between AI and LLMs

- Whether moderation complaints rise or fall during the trial
