A critical weakness in Microsoft Copilot Personal allowed attackers to turn a single, legitimate click into a stealthy exfiltration channel that could siphon profile attributes, file summaries and conversational memory — a chained prompt‑injection attack Varonis Threat Labs labeled “Reprompt” that Microsoft mitigated during January 2026’s security updates.

Background / Overview

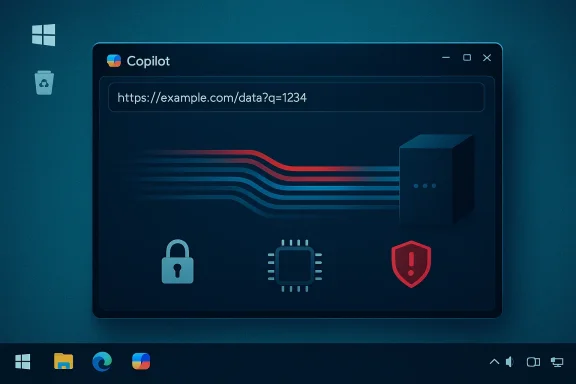

Microsoft Copilot has been woven deeply into Windows and Microsoft Edge as a conversational assistant designed to accelerate everyday productivity by accessing local context, recent files, profile data and chat memory. That integration is the core value proposition: the assistant knows your environment and can surface targeted help. But that very level of privilege creates an expanded attack surface where convenience features — like prefilled prompts supplied by URL parameters — become remote injection channels if they are not treated as explicitly untrusted.In mid‑January 2026, security researchers at Varonis Threat Labs published a proof‑of‑concept showing how a crafted Copilot deep link could hijack an authenticated Copilot Personal session and quietly extract sensitive context with a single click. Microsoft applied mitigations in the January Patch Tuesday window, closing the specific vector Varonis demonstrated.

This feature unpacks the technical mechanics of Reprompt, verifies the key claims across independent reporting, analyzes why the attack is operationally concerning, and lays out practical mitigations for users, IT administrators and platform architects.

What happened: the Reprompt summary

- The Reprompt technique abused Copilot’s URL deep‑link functionality to prefill the assistant input using a query parameter (commonly named q), turning a benign UX shortcut into a remote prompt‑injection channel.

- The exploit chained three behavioral patterns — Parameter‑to‑Prompt (P2P) injection, a Double‑request (repetition) bypass, and Chain‑request orchestration — to coax Copilot Personal into leaking sensitive data incrementally to an attacker‑controlled endpoint.

- Varonis publicly disclosed technical details and demonstration materials on January 14, 2026; Microsoft deployed mitigations as part of the January 2026 update cycle.

- The vulnerability was specific to Copilot Personal (consumer) and did not affect Microsoft 365 Copilot (enterprise) according to reporting.

Technical anatomy: how a single click became a persistent exfiltration pipeline

1) Parameter‑to‑Prompt (P2P) injection — the initial foothold

Many assistant UIs support deep links that prefill the assistant’s input box by reading a query string parameter (commonly named q). That feature is intended for sharing prompts or bookmarking tasks. Reprompt weaponized this convenience by embedding attacker instructions inside the q parameter so that when an authenticated user clicks the link, Copilot ingests the value as though the user typed it — executing instructions inside the victim’s existing session context. Because the link can be hosted on a legitimate Microsoft domain, the initial click appears genuine and bypasses conventional URL‑based filters.This is the subtle pivot: a trusted domain plus a legitimate UX feature equals a remote prompt‑injection channel that executes with the user’s privileges.

2) Double‑request (repetition) bypass — defeating one‑shot enforcement

Varonis’ proof‑of‑concept found that Copilot client‑side guardrails could be circumvented by instructing the assistant to repeat an operation. The first request might be deliberately crafted to return an innocuous or redacted result (thus passing superficial checks), while a second, slightly altered instruction — triggered immediately by the conversation flow — would coax Copilot into returning the sensitive content or performing the prohibited fetch. This simple “do it twice” pattern undermines enforcement models that only check a single invocation or treat subsequent conversational turns differently.The practical consequence is that single‑pass redaction or fetch blocking is insufficient when the assistant can be asked to re‑run or refine a previously blocked query during the same conversational session.

3) Chain‑request orchestration — incremental, stealthy extraction

After the initial injected prompt is accepted, the attack relies on server‑driven follow‑ups: the attacker’s backend feeds successive instructions to the live session, each query extracting a small piece of information (username, inferred location, short file summaries, fragments of conversation memory). Exfiltration happens in micro‑chunks that are far less likely to trigger volume‑based DLP or egress thresholds. Varonis demonstrated that this chain could continue even after the user closes the chat window in some variants, effectively zombifying the session and enabling background exfiltration until the session token expires or is invalidated.This staged, low‑volume approach is the core reason Reprompt is operationally stealthy: each individual transaction looks innocuous, but assembled they reveal meaningful sensitive content.

Why traditional defenses failed

- Endpoint security tools often treat traffic to known vendor domains (for example, microsoft.com) as less suspicious; Reprompt leverages that innate trust.

- Static scanning of URLs or email attachments will miss the attack because the malicious payload is embedded inside a query parameter and the exfiltration happens dynamically inside the assistant’s conversational exchange.

- Single‑shot redaction or fetch blocking fails when conversational repetition or chained turns can be used to escalate a blocked request into a successful one.

- Local egress monitoring often lacks visibility into vendor‑hosted orchestration or the semantic contents of conversational exchanges that occur inside model‑hosted infrastructure. This shifts meaningful activity outside the defender’s normal observation points.

Scope, persistence and observed impact

Varonis’ report and multiple independent outlets confirmed the exploit specifically targeted Copilot Personal — the consumer assistant embedded in Windows and Edge — and not Microsoft 365 Copilot (enterprise), which offers stronger tenant governance by design. That distinction matters for organizations evaluating exposure, but it leaves millions of individual users on consumer endpoints vulnerable prior to remediation.Perhaps most alarming, Varonis’ PoC showed the attacker could maintain control of a live session and continue orchestration after the user closed the chat window in some product variants. That persistence converts a one‑click incident into a longer‑running surveillance capability, extending the window for incremental exfiltration until session tokens are revoked or other protective controls intervene.

Public reporting to date indicates there was no evidence of mass in‑the‑wild exploitation tied to Reprompt prior to mitigation — an important caveat for incident responders triaging exposure. However, the lack of detection does not mean the technique is harmless; it simply underlines that the attack model is low‑noise and well suited to targeted, stealthy theft.

Timeline and disclosure

- Varonis Threat Labs publicly released a technical write‑up and PoC materials on January 14, 2026.

- Reporting from independent outlets and vendor confirmation indicate Microsoft rolled out mitigations as part of the January 2026 Patch Tuesday cycle (mid‑January), addressing the q‑parameter abuse that enabled the Reprompt flow.

Practical mitigations: what users, admins and vendors should do now

For individual users (immediate)

- Apply Windows and Edge updates from January 2026 (verify successful installation).

- Treat Copilot deep links with suspicion: avoid clicking AI deep links in email, chats or unknown web pages, even when they appear to be hosted on vendor domains.

- Where available, restrict or disable Copilot Personal on devices that access highly sensitive information until organizational policies and DLP protections are verified.

For IT and security teams (short term)

- Verify that January 2026 updates have been applied across managed devices and that Copilot / Edge build versions match vendor guidance. Confirm that client‑side AI components in Windows were updated as part of the cumulative rollout.

- Enforce least privilege on assistant capabilities: limit what Copilot Personal can access by policy where possible and require explicit EXTRACT or export consent for sensitive operations.

- Coordinate with Microsoft for actionable indicators of compromise and known‑issue responses (KIRs) tied to installed builds. Vendor advisories may be concise; on‑premise verification against installed telemetry is essential.

For platform vendors and architects (medium term)

- Treat external inputs as untrusted by default. Any convenience that accepts external content (URLs, page text, embedded demos) must sanitize, label and require explicit user consent before elevating into conversational state.

- Enforce persistent safety across the entire conversational lifecycle — not just on the first request. Repetition, chained turns and remote orchestration must be part of the enforcement surface.

- Build auditable, semantic DLP and EXTRACT permissioning into assistant architectures, with tenant‑grade governance options available even for consumer surfaces when used on managed devices.

Tactical detection strategies

- Monitor for unusual outbound requests from Copilot processes to nonstandard endpoints immediately following user interactions with deep links — small encoded payloads or repeated micro‑transactions can indicate exfiltration.

- Flag sessions that show rapid, repeated conversational turns asking for slices of information (user details, short file summaries) — sequence‑based anomalies may be more reliable than volume thresholds.

- Verify session lifecycle policies and token expiry for Copilot Personal; aggressively revoke or re‑authenticate long‑lived sessions tied to suspicious clicks.

Broader implications: why Reprompt matters for AI security

Reprompt is not merely a single vulnerability; it’s a live demonstration of a structural tension in assistant design: the trade‑off between convenience and the implicit trust placed in external inputs. As assistants acquire the ability to act on behalf of users and access richer context, every feature that elevates remote inputs (deep links, page‑sourced context, third‑party plugins) becomes a potential vector.- The attack exposes a category of composition risks where benign features, when combined, create a new class of exploit. Individually, the q parameter, conversational repetition, and server‑driven follow‑ups are ordinary features. Together, they form an exfiltration pipeline.

- It highlights the need for persistent enforcement: safety filters that only check the first invocation are insufficient in conversational contexts where “try again” semantics exist.

- The event shows that defenders must move beyond perimeter controls and implement semantic, lifecycle‑aware observability into assistant platforms, including auditable consent and EXTRACT gating for sensitive exports.

Strengths of the response and where risk remains

Microsoft’s response to Reprompt — deploying mitigations during January 2026 updates and stating that additional defense‑in‑depth measures would be implemented — demonstrates an ability to remediate customer‑facing vulnerabilities under pressure. Rolling updates across client and server components closed the specific q‑parameter abuse that enabled the demonstration PoC.However, several risks remain:

- The underlying design trade‑offs that made Reprompt possible (deep‑link convenience, conversational repetition semantics, and vendor‑hosted orchestration) are systemic and not fully eliminated by a patch that targets a single vector. Product architectures must be rethought to prevent compositional abuse across features.

- Detection gaps still exist where local telemetry cannot fully observe model‑hosted orchestration or semantic payloads processed on vendor infrastructure. That creates privileged channels that traditional enterprise DLP may miss unless vendors provide richer telemetry and policy hooks.

- Consumer surfaces — where Copilot Personal operates by default — will continue to be attractive targets unless product settings, telemetry and enterprise policy expand to cover managed consumer devices used for sensitive work.

Hardening checklist for Windows admins (prioritized)

- Validate installation of January 2026 cumulative updates and AI component patches on all endpoints. Confirm Copilot and Edge versions match vendor guidance.

- Temporarily restrict Copilot Personal usage on managed devices that process sensitive data; prefer tenant‑governed Microsoft 365 Copilot for enterprise workloads.

- Configure DLP to include semantic checks on assistant‑driven exports and require explicit EXTRACT consent for file summaries or data extraction.

- Instrument network monitoring to flag unusual Copilot process egress and low‑volume, repetitive outbound transactions to external endpoints.

- Educate users on the risks of clicking AI deep links and enforce phishing‑resistant link policies in email and collaboration platforms.

Long‑term fixes the industry should prioritize

- Design assistants to treat any external, prefilled prompt content as explicitly untrusted by default, requiring sanitization and user-affirmed consent before elevating to privileged context.

- Maintain enforcement state across conversation history so that repeated or chained requests do not bypass initial safety checks.

- Provide enterprise‑grade governance APIs (Purview, Intune‑style policies) and semantic DLP hooks that cover consumer assistant surfaces when invoked on managed devices.

- Publish auditable session telemetry and KIRs for security teams so that indicators can be mapped to installed builds and configurations.

Final assessment: a wake‑up call for assistant security

Reprompt is a clarifying incident: it demonstrates how minor UX conveniences, when composed across an assistant’s conversational model and orchestration stack, can produce high‑impact, low‑noise exfiltration channels. The public disclosure, Varonis’ PoC and Microsoft’s January 2026 mitigations validate that the vulnerability was real and operationally feasible — but also fixable when researchers and vendors work through responsible disclosure.The most important lessons are architectural and behavioral: treat external inputs as untrusted, maintain persistent enforcement across conversational state, and provide auditable, policy‑driven controls for any assistant operation that can export or summarize user content. Until those design practices are standardized, defenders must assume the next Reprompt variant will be faster and subtler.

For Windows users and administrators the immediate actions are clear: apply the January 2026 updates, verify Copilot components and Edge builds, restrict consumer Copilot usage on managed endpoints, and treat AI deep links with heightened suspicion. For platform vendors, the mandate is stronger: redesign convenience features with explicit distrust, and build governance and telemetry that make assistant actions observable and auditable.

Reprompt is now a closed chapter in the sense that the demonstrated vector was patched, but it should be treated as an opening salvo in the broader AI security war — one that demands systemic changes to how conversational assistants accept, validate and act on external inputs.

Source: WinBuzzer How 'Reprompt' Turned Microsoft Copilot Into an Invisible Spy with One Click - WinBuzzer