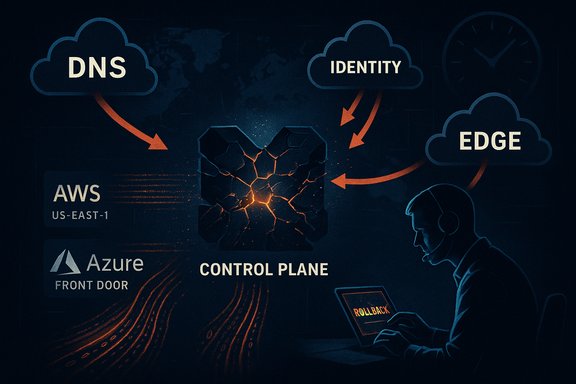

The back-to-back hyperscaler failures at the end of October, an AWS DNS/DynamoDB disruption followed by a Microsoft Azure Front Door misconfiguration, exposed how a handful of control‑plane primitives can turn routine changes into multi‑hour, high‑visibility outages. They also underscored the operational realities that James Kretchmar, SVP & CTO of Akamai’s Cloud Technology Group, described in a recent IT Pro interview about what it is like inside an incident and how teams should prepare.

Source: IT Pro Inside a cloud outage

Background

Modern cloud platforms concentrate enormous operational capability into a few shared services: identity issuance, global edge routing, managed databases and DNS. When those services fail, downstream systems that appear unrelated can suddenly lose the “glue” that makes them work. That pattern was visible in two separate incidents in late October 2025: an AWS region‑level DNS and DynamoDB disruption centered on US‑EAST‑1, and a Microsoft Azure Front Door (AFD) outage caused by an inadvertent configuration change. Both events produced cascading effects across consumer apps, enterprise portals and gaming ecosystems. These outages were not DDoS attacks or external sabotage; they were internal control‑plane failures amplified by automation, caching and global routing. Public and community telemetry, provider advisories and post‑incident accounts all converged on DNS and configuration propagation as the proximate failure vectors. Independent reporting and vendor status pages corroborated the timelines and mitigation steps taken by AWS and Microsoft.

Anatomy of the failures

AWS — US‑EAST‑1: a DNS/DynamoDB control‑plane failure

The AWS incident began in US‑EAST‑1, a region that acts as a de facto hub for many global control‑plane services. Operators observed DNS resolution anomalies for DynamoDB endpoints that prevented clients and internal services from consistently resolving API hostnames. Those DNS problems cascaded into health‑check failures, throttles and an extended recovery tail as backlogs drained and retry storms were managed. The visible effects included login and API failures across numerous apps, as well as impaired control‑plane operations that slowed remediation actions.

What made the problem worse was how DNS is woven into modern cloud SDKs and orchestration: name lookup is not just “where is server X?”, it is part of service discovery, token issuance and internal orchestration. A regional DNS fault therefore becomes a systemic issue for many services that assume those lookups will succeed. Operators mitigated the immediate symptom, disabled the automation that propagated the faulty changes, and worked through backlogs while throttling certain operations to avoid further destabilization.

Microsoft Azure Front Door: misconfiguration and control‑plane rollbacks

On October 29, Microsoft’s Azure Front Door, a global Layer‑7 edge and routing fabric used by Microsoft and thousands of customers, experienced a configuration change that created inconsistent state across Points of Presence (PoPs). That inconsistency led to DNS and routing anomalies, TLS/hostname mismatches and token issuance failures that broke authentication and portal access for Microsoft 365, the Azure Portal, Xbox/Minecraft sign‑ins and many customer sites fronted by AFD. Microsoft froze AFD configuration changes, rolled back to a “last known good” state, and staged node recovery and traffic rebalancing to stabilize the fabric.

The edge fabric’s global reach and caching behavior meant visible symptoms lingered even after the rollback completed. DNS TTLs, CDN caches and ISP resolver caches can cause an uneven client‑side experience as caches converge on corrected answers, a friction point that stretches an operational fix into an hours‑long experience for users.

Inside an incident: what operators actually feel and do

James Kretchmar’s account in IT Pro offers an uncensored view of incident dynamics: the cognitive load of working inside a partially impaired control plane, the satisfaction when a safety mechanism proves effective, and the crushing frustration of discovering “we could fix this instantly if only we had built that tool earlier.” Those human realities map directly onto the technical mechanics we observed in both outages: lack of out‑of‑band admin paths, brittle rollouts without adequate canaries, and the consequences of control‑plane coupling.

Key operational patterns that emerge during large outages:

- Rapid detection via multi‑signal telemetry (internal watches, external probes, and user reports).

- Immediate containment actions (freeze deployments/changes, block management operations, and fail critical admin surfaces away from the failing fabric).

- Rollback to a validated global configuration when a recent change is implicated.

- Staged recovery for PoPs and nodes to avoid oscillation and reintroducing bad state.

- Throttling of dependent internal operations to allow control‑plane backlogs to drain safely.

Why some outages are short and others long

The decisive distinction between a fast and a prolonged outage is whether the team immediately knows the safe remediation path and has tools that operate independently of the failing surface. If a rollback or mitigation can be performed without depending on the same control plane that is broken, restoration is swift. If every path to remediation passes through the impaired system, operators confront a delicate, slow recovery that must avoid making things worse. Kretchmar’s framing, that it is immensely satisfying when a safety system “kicks in”, highlights why proactive safety tooling matters.

Practical amplifiers of outage duration:

- DNS and cache propagation delays (client and CDN caches keep serving bad answers).

- Retry storms and amplification loops (clients retry aggressively and overload recovery channels).

- Internal queue backlogs which take time to process once systems become healthy.

- Administrative blind spots (management portals or admin APIs are unavailable, slowing manual interventions).
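
One common client-side defence against the retry storms listed above is a circuit breaker: after repeated failures the client stops calling the dependency for a cool-down period, so recovering services are not flooded. The sketch below is a minimal, single-threaded illustration in Python; the class name, thresholds and injected `clock` are assumptions made for this example and are not taken from either provider's SDKs.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and the call is rejected locally."""


class CircuitBreaker:
    """Hypothetical breaker: opens after N consecutive failures, retries after a cool-down."""

    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, operation: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Fail fast without touching the struggling dependency.
                raise CircuitOpenError("dependency marked unhealthy")
            # Cool-down elapsed: let a trial call through (half-open state).
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

A production version would also need thread safety and per-dependency state; this sketch only shows the state transitions.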

Cross‑checked facts and verification

Several independent outlets and vendor resources match the core technical narratives that emerged during the outages:

- The AWS incident’s proximate symptom was DNS resolution failures for DynamoDB regional endpoints in US‑EAST‑1; AWS disabled the automation that caused the faulty DNS state and worked through backlogs while throttling dependent operations. This timeline and mitigation approach are confirmed by major reporting and post‑incident vendor notices.

- Microsoft’s outage linked to Azure Front Door began at approximately 16:00 UTC on October 29 and was triggered by an inadvertent configuration change; containment included freezing AFD changes and deploying a rollback to a last‑known‑good configuration while recovering nodes. Community reconstructions and Microsoft status updates align with this account.

- The public confusion during the events — where outage aggregators appeared to show overlapping failures across providers — was amplified by shared plumbing and cross‑dependency effects, not by a single coordinated failure across hyperscalers. Independent analysis and community telemetry documented both separate root causes and overlapping visible symptoms.

Lessons for IT leaders and SRE teams

The October outages offer a playbook for resilience engineering. The high‑value actions that organizations should prioritize are straightforward and already well understood; the challenge is executing them across people, process and procurement.

Immediate actions (first 30–90 days)

- Map critical dependencies.
  - Inventory the small set of control‑plane services (identity, DNS, global edge) that would materially break your workflows.
  - Prioritize remediation for the flows that depend on those services.

- Harden DNS and client fallback logic.
  - Implement exponential backoff, jittered retries, and circuit breakers in client SDKs.
  - Use multi‑resolver strategies (multiple DNS providers, shorter TTLs where safe) and test DNS failover in staging.

- Rehearse failure scenarios.
  - Run live failover drills for mission‑critical flows (identity failover, domain resolution failures, edge path flips).
  - Execute runbooks with the SRE team under realistic impairment constraints, including losing access to the admin portal.

- Build out‑of‑band admin paths.
  - Ensure you can operate critical controls (rollbacks, routing changes) without depending on the same global fabric that might be impaired.
  - Maintain spare credential paths, alternative management networks and emergency automation.

- Negotiate transparency and contractual protections.
  - Insist on improved incident reporting, forensic timelines, and commitments on configuration validation / rollout safety.
  - Add procurement clauses that address critical recovery SLAs and post‑incident disclosure.
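
The "exponential backoff, jittered retries" item above can be sketched as follows. This is a minimal Python illustration of capped exponential backoff with what is often called full jitter (each delay is drawn uniformly between zero and the current cap). The function names, default values and the injectable `sleep` and `rng` parameters are assumptions chosen for this example, not part of any specific cloud SDK.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def backoff_delays(base: float, cap: float, attempts: int, rng: random.Random) -> list:
    """One delay per retry, uniform in [0, min(cap, base * 2**n)]."""
    return [rng.uniform(0.0, min(cap, base * (2 ** n))) for n in range(attempts)]


def call_with_retries(
    operation: Callable[[], T],
    *,
    attempts: int = 5,
    base: float = 0.2,
    cap: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> T:
    """Call `operation`, retrying on any exception with capped, jittered backoff."""
    rng = rng or random.Random()
    delays = backoff_delays(base, cap, attempts - 1, rng)
    for attempt in range(attempts):
        try:
            return operation()
        except Exception:
            if attempt == attempts - 1:
                raise  # retry budget exhausted: surface the failure to the caller
            sleep(delays[attempt])  # randomized wait spreads clients out in time
    raise AssertionError("unreachable")
```

The randomization matters as much as the exponent: without jitter, clients that failed together retry together, which is how a retry storm sustains itself. In real code the `except` clause would be narrowed to errors that are actually safe to retry.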

Architectural priorities (medium term)

- Adopt multi‑region and multi‑provider patterns for the narrow set of control‑plane primitives that can cause systemic failure.

- Design graceful degradation: make non‑critical features degrade first, allow read‑only paths, and serve cached content rather than hard failures.

- Default safer templates: make multi‑region active‑active the documented default for critical services instead of a “manual” upgrade.

- Invest in observability that correlates provider control‑plane health signals with application telemetry to speed triage.
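
As one illustration of the graceful-degradation item above ("serve cached content rather than hard failures"), the sketch below shows a small cache that prefers fresh data but falls back to an expired copy when the upstream call fails. The class name, the `(value, is_stale)` return shape and the injected `clock` are hypothetical choices for this example.

```python
import time
from typing import Callable, Dict, Generic, Tuple, TypeVar

V = TypeVar("V")


class StaleOnErrorCache(Generic[V]):
    """Serve fresh data when possible; serve an expired copy if the upstream fails."""

    def __init__(self, fetch: Callable[[str], V], ttl: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch
        self._ttl = ttl
        self._clock = clock
        self._entries: Dict[str, Tuple[float, V]] = {}

    def get(self, key: str) -> Tuple[V, bool]:
        """Return (value, is_stale)."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1], False  # still fresh: no upstream call needed
        try:
            value = self._fetch(key)
        except Exception:
            if entry is not None:
                return entry[1], True  # degrade: stale copy instead of a hard failure
            raise  # nothing cached for this key, so there is nothing to degrade to
        self._entries[key] = (now, value)
        return value, False
```

Returning the staleness flag lets the caller decide how to present degraded data, for example by switching a page to read-only or showing a banner.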

Technical mitigations that matter

- Canary and progressive rollout logic for global configuration changes to edge fabrics and DNS. Small, geo‑isolated canaries that validate state reduce the chance of a global blast radius.

- Automated safety gates that detect inconsistent state and prevent propagation when anomalies are present.

- Rate limiting and throttles for internal orchestration paths to prevent retry storms that prolong recovery.

- Immutable, auditable deployment pipelines with fast rollback primitives and emergency-safety toggles.

- Out‑of‑band health check endpoints that don’t depend on the same control plane as production flows.
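
The canary and safety-gate items above can be combined into one staged rollout loop. The following Python sketch assumes hypothetical callbacks (`apply`, `read_version`, `healthy`) standing in for whatever deployment and monitoring interfaces a team actually has; it does not describe how AWS or Microsoft implement their rollouts.

```python
from typing import Callable, List, Sequence


def progressive_rollout(
    stages: Sequence[Sequence[str]],
    apply: Callable[[str, str], None],
    read_version: Callable[[str], str],
    healthy: Callable[[str], bool],
    new_version: str,
    old_version: str,
) -> bool:
    """Apply `new_version` stage by stage; roll back every touched PoP on any anomaly."""
    touched: List[str] = []
    for stage in stages:
        for pop in stage:
            apply(pop, new_version)
            touched.append(pop)
        # Safety gate: every PoP touched so far must report the new version
        # (consistent state) and pass its health check before the next stage.
        consistent = all(read_version(p) == new_version for p in touched)
        if not consistent or not all(healthy(p) for p in touched):
            for pop in reversed(touched):
                apply(pop, old_version)  # restore the last known good version
            return False  # later stages are never touched
    return True
```

Putting a single small PoP in the first stage keeps the blast radius of a bad change to that canary, and the gate stops propagation before the remaining stages are reached.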

Business and policy implications

The public, commercial and regulatory reaction to these outages will likely accelerate three trends:

- Procurement and legal teams will treat cloud provider resilience and disclosure as negotiable items, asking for more forensic transparency, targeted SLAs and recoverability assurances.

- Boards and executive teams will elevate cloud systemic risk to a board‑level discussion, treating it like supply‑chain or physical infrastructure risk.

- Regulators may propose incident reporting requirements or resilience testing for critical infrastructure services that depend on hyperscalers — a complex policy domain that must balance innovation and public safety.

Risks and limits of the recommended approaches

- Cost and complexity: Multi‑region, multi‑provider deployments increase operational overhead and can introduce new failure modes if not engineered carefully.

- False confidence: Excessive reliance on “more providers” without rigorous testing can create brittle systems that fail in subtle ways.

- Vendor visibility: Many customers lack tenant‑level visibility into provider rollouts and can be blind to upstream changes until they impact customers. Contractual fixes can help but won’t entirely remove the need for engineering defenses.

- Human factor: Configuration mistakes continue to be a dominant trigger for incidents. Safer tools reduce but cannot eliminate human error.

A short operational checklist for engineering teams

- Inventory: Identify the top 5 control‑plane services your product depends on.

- Test: Execute an offline simulation where those services are made unavailable and measure how your product behaves.

- Tool: Build one out‑of‑band remediation tool (alternate login path, DNS override, or a manual rollback script) and verify it under test.

- Drill: Run a timed incident drill and measure every step of your runbook; iterate on failures.

- Contract: Add forensic disclosure and recovery time commitments into procurement for targeted, mission‑critical services.

- Map dependencies.

- Harden retries and DNS fallbacks.

- Create out‑of‑band tools.

- Rehearse runbooks.

- Negotiate visibility and SLA improvements.

Conclusion

The October hyperscaler incidents are not isolated curiosities; they are teaching moments for every IT leader who depends on cloud platforms. The two outages, one rooted in DNS/DynamoDB behavior in AWS’s US‑EAST‑1 and the other in a misapplied Azure Front Door configuration, illustrate the same structural truth: control‑plane primitives and shared glue are systemically important. Operators who treat outages as inevitable inputs to design, who build out‑of‑band tools, and who rehearse failure scenarios will see far shorter, less damaging incidents. As James Kretchmar put it, the difference between a short outage and a long outage often comes down to whether you already have the right tool ready when the incident begins.

The next phase for enterprises is not a rhetorical debate about cloud value, since hyperscalers still deliver massive capability, but a disciplined, accountable program of resilience engineering: identify single points of failure, build safety nets, rehearse realistic failures and demand better operational transparency from providers. The cost of doing this work is real; the cost of not doing it will be paid in outage minutes, lost revenue and avoidable risk when the next control‑plane fault occurs.