A retired Microsoft engineer is training modern AI to survive one of the arcade world’s most merciless stress tests: Robotron: 2084 — the 1982 twin‑stick shooter that compresses chaos, prioritization, and human panic into a few frantic seconds of play. What begins as a charming retro experiment is also a sharp technical showcase: the project exposes the ways reinforcement learning handles multi‑objective, high‑tempo control, and it forces a rethink of how we measure “intelligence” when success depends on juggling contradictory goals at frame‑rate speed.

Dave W. Plummer is a name familiar to Windows veterans: he wrote the original Windows Task Manager and is widely credited with the Space Cadet pinball port and other small but influential Windows utilities. Since leaving Microsoft he’s become a visible figure in the retro‑computing and maker scenes, publishing explainers, hardware teardowns, and experiment code under his Dave’s Garage umbrella. More recently Plummer applied that practical engineering lens to game AI: he built a visually theatrical live dashboard and training pipeline to teach an agent to play the arcade vector shooter Tempest, and he’s now turned his attention to Robotron: 2084.

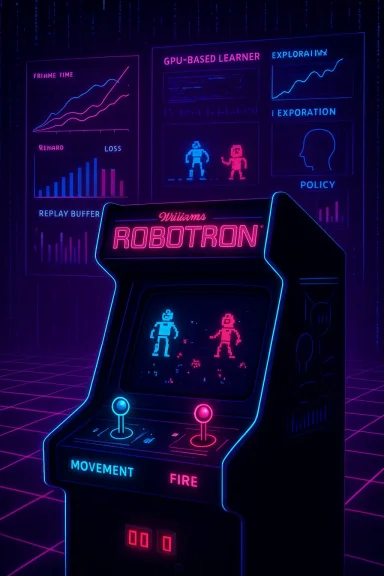

Robotron is shorthand for “organized chaos.” Designed by Eugene Jarvis and Larry DeMar at Vid Kidz and released by Williams Electronics in 1982, the game puts a single avatar in a fixed screen arena and throws waves of hostile machines and fleeing humans at the player. The core tension is simple and savage: rescue the humans to score, while simultaneously destroying relentless robot swarms that will kill you on contact. The cabinet’s two eight‑way joysticks — one for movement and one for firing direction — create a control surface that is brutally expressive and unforgiving, and that very control coupling is what makes Robotron an unusually pure real‑time decision problem.

Plummer’s Tempest project demonstrated the engineering pipeline you need to build a live, emulator‑based RL experiment: MAME or another emulator runs the game, a lightweight script streams per‑frame state out to a Python training server, a GPU‑accelerated learner updates a neural policy, and a local dashboard visualizes training metrics and model behaviour. Plummer published the code and demo artifacts, and the community response emphasized both the educational value of the repo and the spectacle of its synthwave‑styled dashboard.

Key practical points for anyone attempting this at hobby scale:

First, there is the perennial risk of mistaking game performance for general intelligence. A policy that excels in Robotron is not “creative” in a human sense; it’s highly optimized for a measurable reward function in a constrained simulator. The scientific value comes from what we learn about architectures and decision primitives — not from declaring victory for AGI.

Second, compute and environmental opacity create reproducibility and equity issues. When hobbyist projects require multi‑GPU clusters and tens of millions of emulator frames, the experiments are accessible only to well‑resourced teams. That asymmetry matters for the ecosystem of open research.

Plummer’s choice to publish code and demos counters that trend by making the engineering pattern available; the community can learn how to scale experiments down responsibly, and how to document the choices that lead to successful behaviours.

For the researcher or tinkerer, Robotron is a perfect combination of low barrier to entry and deep technical challenge. For the technologist, it’s a reminder that real‑time intelligence is an engineering problem as much as a philosophical one: design the inputs, shape the incentives, and the emergent behaviour is as revealing as it is entertaining.

Source: theregister.com Former Microsoft dev trains AI to master Robotron: 2084

Background and overview

Dave W. Plummer is a name familiar to Windows veterans: he wrote the original Windows Task Manager and is widely credited with the Space Cadet pinball port and other small but influential Windows utilities. Since leaving Microsoft he’s become a visible figure in the retro‑computing and maker scenes, publishing explainers, hardware teardowns, and experiment code under his Dave’s Garage umbrella. More recently Plummer applied that practical engineering lens to game AI: he built a visually theatrical live dashboard and training pipeline to teach an agent to play the arcade vector shooter Tempest, and he’s now turned his attention to Robotron: 2084.

Robotron is shorthand for “organized chaos.” Designed by Eugene Jarvis and Larry DeMar at Vid Kidz and released by Williams Electronics in 1982, the game puts a single avatar in a fixed, single‑screen arena and throws waves of hostile machines and fleeing humans at the player. The core tension is simple and savage: rescue the humans to score, while simultaneously destroying relentless robot swarms that will kill you on contact. The cabinet’s two eight‑way joysticks (one for movement, one for firing direction) create a control surface that is brutally expressive and unforgiving, and that very control coupling is what makes Robotron an unusually pure real‑time decision problem.

Plummer’s Tempest project demonstrated the engineering pipeline you need to build a live, emulator‑based RL experiment: MAME or another emulator runs the game, a lightweight script streams per‑frame state out to a Python training server, a GPU‑accelerated learner updates a neural policy, and a local dashboard visualizes training metrics and model behaviour. Plummer published the code and demo artifacts, and the community response emphasized both the educational value of the repo and the spectacle of its synthwave‑styled dashboard.

Why Robotron is an unusually hard problem for AI

At first blush Robotron looks like an Atari‑era toy: simple sprites, minimal rules, and a small, fixed playfield. Scratch the surface and the reasons it resists automation pile up quickly.

1) True multi‑objective optimization

Robotron forces the agent to manage at least three conflicting objectives simultaneously: survival, scoring by destroying enemies, and rescuing humans. Each objective can pull the agent in a different direction: dash into a cluster to rescue a human and you’re exposed to bullets; spend time hunting point‑heavy enemies and a human dies. That triage problem, choosing which lives, points, or positional advantages are worth risking under tight time pressure, resembles real‑world triage more than single‑goal games like Breakout do. The result is an environment where reward shaping is nontrivial and naive scalarization of goals (collapsing them into one fixed weighted sum) produces brittle policies.

2) Decoupled control outputs (twin‑stick action space)

Most RL benchmarks map to a single action output (move left, fire, jump). Robotron’s controls split movement and firing into independent directions, which complicates action‑space design. You can model the action space as:

- a single discrete product space (e.g., 8 movement × 8 fire = 64 discrete actions), or

- two parallel policy heads (one for movement, one for aiming), or

- continuous parameterization for fine‑grained joystick tilt.
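In the product‑space option, each of the 64 discrete actions is simply one (movement, fire) pair. The following is a minimal sketch of that encoding. It assumes the directions are numbered 0 to 7 clockwise from "up" and that neither stick is ever left centered. The direction names and numbering are illustrative assumptions, not Robotron's actual input encoding.

```python
# Hypothetical numbering of the eight joystick directions, clockwise from "up".
DIRECTIONS = ("U", "UR", "R", "DR", "D", "DL", "L", "UL")
N_DIRS = len(DIRECTIONS)          # 8 directions per stick
N_ACTIONS = N_DIRS * N_DIRS       # 8 movement x 8 fire = 64 product actions


def encode(move: int, fire: int) -> int:
    """Map a (movement, fire) direction pair to a single discrete action id."""
    if not (0 <= move < N_DIRS and 0 <= fire < N_DIRS):
        raise ValueError("direction index out of range")
    return move * N_DIRS + fire


def decode(action: int) -> tuple[int, int]:
    """Split a discrete action id back into its (movement, fire) pair."""
    if not 0 <= action < N_ACTIONS:
        raise ValueError("action id out of range")
    return divmod(action, N_DIRS)


# Example: run up-right while firing left.
a = encode(DIRECTIONS.index("UR"), DIRECTIONS.index("L"))
print(a, decode(a))  # 14 (1, 6)
```

A DQN‑style network would output 64 Q‑values, and `decode` turns the argmax back into two stick positions. If each stick is also allowed to rest at center, the space grows to 9 × 9 = 81 actions.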

3) High tempo, dense events, and frame‑level decision making

Robotron runs at arcade frame rates with rapid enemy spawning. Agents must make many decisions per second and react to emergent, multi‑agent dynamics. That raises compute and throughput concerns: training realistically requires running hundreds or thousands of emulator frames per second across multiple workers. Such an arrangement demands robust I/O between emulator and trainer, plus disciplined frame‑skipping or temporal aggregation strategies to keep the learner fed. Plummer’s Tempest pipeline, which streams per‑frame state out of an emulator into Python for on‑the‑fly training, is precisely the kind of architecture needed here.

4) Stochasticity and long‑term planning

Robotron’s spawn patterns, enemy behaviors, and the way humans move are not fully deterministic. The agent must cope with uncertainty and occasionally choose long‑term positioning over immediate point gains. This combination of stochastic, procedurally arranged threat vectors and frequent short‑horizon impulses (dodge now!) makes vanilla value‑based methods brittle unless combined with experience replay, prioritized sampling, or planning modules.

How classic research informs the approach

Training agents to play arcade games has a well‑trodden research lineage, one that informs reasonable starting choices and the likely pitfalls.

- The Deep Q‑Network (DQN) family demonstrated that convolutional neural nets combined with Q‑learning can reach human‑level performance on many Atari games, establishing a baseline for pixel‑driven agents. That work, along with successors such as Double DQN and Rainbow, offers tools for discrete action spaces and replay buffers. Those tools are directly applicable if Robotron is modeled as a discrete product action space.

- Policy‑gradient and actor‑critic methods (notably Proximal Policy Optimization, PPO) have become the practical workhorses for many modern RL projects because they are stable, relatively simple to implement, and adapt well to continuous or multi‑headed action parameterizations. If you want separate movement and firing heads — or continuous joystick control — off‑the‑shelf PPO implementations are often the fastest route to a working policy.

- For continuous or hybrid control (e.g., if you prefer continuous joystick outputs), Soft Actor‑Critic (SAC) offers sample‑efficient and stable off‑policy learning with entropy regularization that encourages robust, exploratory behaviors. SAC can ameliorate problems where the agent gets stuck in reflexive, non‑robust subroutines.
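As a concrete reference point for the DQN family, the Double DQN target separates action selection from action evaluation. The online network picks the greedy next action and the target network scores it:

y = r + γ (1 − done) · Q_target(s′, argmax_a Q_online(s′, a))

Below is a minimal NumPy sketch for a batch of transitions. It assumes the Q‑values have already been computed by the two networks. The arrays in the example are placeholders, not outputs of any real model.

```python
import numpy as np


def double_dqn_targets(rewards, dones, q_next_online, q_next_target, gamma=0.99):
    """Compute Double DQN bootstrap targets for a batch of transitions.

    rewards:        (B,) rewards r_t
    dones:          (B,) 1.0 if s_{t+1} is terminal (e.g. the last life was lost), else 0.0
    q_next_online:  (B, A) online-network Q-values for s_{t+1}
    q_next_target:  (B, A) target-network Q-values for s_{t+1}
    """
    # The online network chooses the greedy next action...
    best_actions = np.argmax(q_next_online, axis=1)
    # ...and the target network evaluates it, which reduces overestimation bias.
    next_values = q_next_target[np.arange(len(best_actions)), best_actions]
    # Terminal transitions do not bootstrap.
    return rewards + gamma * (1.0 - dones) * next_values


# Placeholder batch of two transitions over two actions.
rewards = np.array([1.0, 0.0])
dones = np.array([0.0, 1.0])
q_online = np.array([[0.2, 0.9], [0.5, 0.1]])
q_target = np.array([[0.4, 0.3], [2.0, 7.0]])
targets = double_dqn_targets(rewards, dones, q_online, q_target, gamma=0.9)
```

In the first transition, the online network prefers action 1. The target uses the target network's value for that action (0.3) rather than its own maximum (0.4).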

Plummer’s practical pipeline and why it matters

Plummer’s Tempest work provides a replicable engineering pattern that can be applied to Robotron: embed a tiny scripting layer in the emulator (Lua in MAME is common), stream frame state to a training server, train on GPU‑backed learners, and visualize progress with a metrics dashboard. That pipeline is instructive because it emphasizes reproducibility and observability over opaque black‑box experimentation. The repo and demo are public, and the code documents choices such as replay buffer parameters, exploration schedules, and whether training uses raw pixels or engineered features.

Key practical points for anyone attempting this at hobby scale:

- Hardware: a modern GPU with good compute and VRAM accelerates training dramatically. Plummer’s demo and community tests show that rendering the dashboard itself can be GPU‑heavy; separate training and visualization workloads where possible.

- Emulator throughput: maximize headless emulator speed (frame skipping, determinism flags) when you’re running many parallel workers. Use a lightweight IPC channel (a local TCP socket or shared memory) to keep latency low between emulator and training components.

- Data pipeline: a prioritized replay buffer, stacked frames (to capture velocity), and a small CNN front end are sensible starting points. Add experiment tracking and rollout versioning so that a bad hyperparameter change can be spotted and rolled back.
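Frame skipping and frame stacking fit in a few lines of Python. This is a minimal sketch. It assumes a hypothetical `step_fn(action)` callback that advances the emulator one frame and returns `(frame, reward, done)`. It is neither Plummer's code nor a MAME API, just the common "repeat the action, sum the rewards, stack the last k frames" pattern.

```python
from collections import deque

import numpy as np


class SkipAndStack:
    """Repeat each action for `skip` frames and expose the last `k` frames as one observation.

    `step_fn` is a hypothetical callback, step_fn(action) -> (frame, reward, done),
    where `frame` is a 2-D array such as a downscaled grayscale screen.
    """

    def __init__(self, step_fn, skip=4, k=4):
        if skip < 1 or k < 1:
            raise ValueError("skip and k must both be at least 1")
        self.step_fn = step_fn
        self.skip = skip
        self.frames = deque(maxlen=k)  # the oldest frame falls off automatically

    def reset(self, first_frame):
        # Fill the stack with the first frame so the observation shape is fixed from step 0.
        self.frames.clear()
        for _ in range(self.frames.maxlen):
            self.frames.append(first_frame)
        return self.observation()

    def observation(self):
        # Shape (k, H, W); differences between stacked frames let a CNN infer velocity.
        return np.stack(list(self.frames), axis=0)

    def step(self, action):
        total_reward, done = 0.0, False
        for _ in range(self.skip):
            frame, reward, done = self.step_fn(action)
            total_reward += reward  # sum rewards over the skipped frames
            if done:
                break
        self.frames.append(frame)  # only the last frame of each skip window is stacked
        return self.observation(), total_reward, done


# Usage with a toy stand-in for the emulator: frame t is filled with the value t.
_t = [0]


def fake_step(action):
    _t[0] += 1
    return np.full((2, 2), _t[0], dtype=np.uint8), 1.0, False


env = SkipAndStack(fake_step, skip=4, k=4)
env.reset(np.zeros((2, 2), dtype=np.uint8))
obs, reward, done = env.step(action=0)
print(obs.shape, reward, obs[:, 0, 0].tolist())  # (4, 2, 2) 4.0 [0, 0, 0, 4]
```

With `skip=4`, the learner sees one observation per four emulator frames. This is the throughput lever mentioned above, traded against coarser reaction time.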

Architectural choices specific to Robotron

If you were engineering the next iteration of Plummer’s experiments, here are concrete architecture choices and why they matter.

A. Action‑space design

- Option 1 — Product discrete space: represent movement and fire as a combined discrete action (N = 64). Simpler to implement with DQN variants but scales poorly and may require massive replay diversity.

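- Option 2 — Dual policy heads: have one discrete head for movement and one for firing. Train with an actor‑critic method such as PPO. Treat the heads as independent given the state, so the joint action probability is the product of the two heads’ probabilities (equivalently, the sum of their log‑probabilities). This avoids the combinatorial blow‑up of the product space and supports more natural credit assignment.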

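- Option 3 — Continuous outputs: model each joystick as a continuous 2D vector and train with SAC or PPO. This promotes smoother motion and easier transfer to analog controllers, but requires careful normalization and exploration‑noise handling. Because Robotron’s cabinet uses digital eight‑way sticks, each continuous output must ultimately be quantized to one of eight directions before it reaches the game.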
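For the dual‑head option, the factorization is easy to state in code. The joint log‑probability is the sum of the two heads' log‑probabilities, and a standard PPO clipped surrogate can consume that sum directly. The following is a minimal NumPy sketch with placeholder logits standing in for network outputs. The function names are illustrative, and conditional independence of the heads given the state is an assumption of this design rather than a property of the game.

```python
import numpy as np


def log_softmax(logits):
    # Numerically stable log-softmax over the last axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))


def joint_log_prob(move_logits, fire_logits, move, fire):
    """log pi(move, fire | s) = log pi_move(move | s) + log pi_fire(fire | s).

    Assumes the two heads are conditionally independent given the shared state features.
    """
    rows = np.arange(len(move))
    return log_softmax(move_logits)[rows, move] + log_softmax(fire_logits)[rows, fire]


def ppo_clip_loss(new_logp, old_logp, advantages, clip=0.2):
    # Standard PPO clipped surrogate (negated for minimization), on the joint log-prob.
    ratio = np.exp(new_logp - old_logp)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - clip, 1.0 + clip) * advantages
    return -np.mean(np.minimum(unclipped, clipped))


# Usage with placeholder logits for a batch of three states.
rng = np.random.default_rng(0)
move_logits, fire_logits = rng.normal(size=(3, 8)), rng.normal(size=(3, 8))
moves, fires = np.array([0, 3, 7]), np.array([4, 4, 1])
old_logp = joint_log_prob(move_logits, fire_logits, moves, fires)
loss = ppo_clip_loss(old_logp + 0.05, old_logp, advantages=np.array([1.0, -0.5, 0.2]))
```

Each head only needs 8 outputs, so the network emits 16 logits instead of 64. A single gradient step through `loss` updates both heads.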

B. Reward shaping and curriculum

Rewarding raw score alone tends to produce degenerate play: agents learn safe, low‑risk behaviors that minimize the chance of losing a life but also avoid rescuing humans. Effective designs use layered rewards:

- Dense, immediate rewards for survival and debris/robot kills.

- Bonus shaping for a human rescue, scaled by urgency (how close the human is to death).

- Curriculum schedules that begin in low‑threat arenas and gradually increase spawn rates.
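The layered reward above can be written as a small function. This is a minimal sketch, and several pieces are hypothetical placeholders to be tuned: every event field, every weight, the linear urgency scaling, and the death penalty (an added assumption not listed above). None of them are values from Plummer’s project or from the game’s own scoring.

```python
from dataclasses import dataclass


@dataclass
class FrameEvents:
    """Hypothetical per-step events extracted from emulator state."""
    alive: bool
    robots_killed: int
    human_rescued: bool
    # Hypothetical: frames until the rescued human would have been caught (smaller = more urgent).
    frames_until_threat: int = 0


def shaped_reward(ev: FrameEvents,
                  survive_bonus=0.01,
                  kill_reward=0.1,
                  rescue_reward=1.0,
                  max_urgency_bonus=1.0,
                  urgency_horizon=120,
                  death_penalty=-5.0) -> float:
    """Combine dense survival/kill terms with an urgency-scaled rescue bonus."""
    if not ev.alive:
        return death_penalty  # assumption: a flat penalty on losing a life
    reward = survive_bonus + kill_reward * ev.robots_killed  # dense, immediate terms
    if ev.human_rescued:
        # Urgency in [0, 1]: 1 when the threat was imminent, 0 at or beyond the horizon.
        urgency = max(0.0, 1.0 - ev.frames_until_threat / urgency_horizon)
        reward += rescue_reward + max_urgency_bonus * urgency
    return reward
```

For example, a rescue 60 frames ahead of the threat earns the base rescue reward plus half the urgency bonus. A curriculum would then vary the environment (for example, the starting wave) rather than these weights.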

C. Auxiliary tasks and representation learning

Robotron’s pixel space is low‑resolution but dense in information. Auxiliary losses (predicting enemy positions, future occupancy maps, or spawn vectors) can accelerate learning by shaping internal representations that are directly useful for tactical choices. These semi‑supervised heads are cheap to add and often pay off in sample efficiency.

Hard truths: where AI will stumble (and where it can excel)

Plummer’s framing of Robotron as a “stress test” is apt. Here are predictable failure modes and an honest assessment of where AI will excel and where humans retain an edge.

- Overfitting to emulator quirks: agents trained inside a specific emulator build can be brittle to timing differences and controller latency. That’s a practical hazard for hobbyists who assume emulator policy = cabinet policy.

- Sparse long‑term reasoning: while RL handles local tactical maneuvers well, genuine triage under uncertainty — choosing which humans to prioritize when rescue paths cross multiple hazards — requires planning or hierarchical reasoning. Off‑the‑shelf DQNs and PPO policies often resort to reflexes rather than planning unless augmented with memory modules (LSTM) or explicit planning mechanisms.

- Sample complexity and compute: real progress here demands many millions of frames and a distributed training setup for practical convergence. That level of compute raises practical barriers for hobbyists and small labs and invites questions about whether learning a video‑game skill justifies that expense.

- The flip side: reflex optimization and micro‑tactics. Where humans struggle is in perfect reflexive micro‑management under extreme tempo. Neural policies can learn near‑optimal short‑horizon dodging and firing that humans cannot sustain for long sessions. Expect AI to dominate at micro‑level survival and pattern exploitation while still lagging in robust long‑term triage strategies unless augmented by architectural innovations.

Reproducibility, openness, and legal friction

Plummer’s public repo and dashboard turn the experiment into teachable material. That openness is valuable: it demystifies emulator‑to‑AI pipelines and encourages replication. Still, a few caveats matter.

- ROM legality: distributing game ROMs is a separate legal issue. Public projects must not include copyrighted ROMs, and researchers should clarify what constitutes permissible archival use in their jurisdiction. Emulation is technically simple; legal risk is not. Be explicit about licensing and do not redistribute copyrighted content. (Practical, non‑legal advice only; consult counsel for specifics.)

- Reproducibility vs spectacle: Plummer’s aesthetic dashboard is intentionally exuberant and GPU‑intensive. For reproducibility, projects should provide headless modes and scripted runs that reduce visual overhead and ensure experiments are accessible on modest hardware.

- Benchmarking: present clear evaluation metrics (mean score, survival time, rescue rate) across seeds and random starts. Arcade games are stochastic; single‑run bragging rights are scientifically meaningless without aggregated metrics.
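Once each evaluation episode is logged, the aggregated metrics suggested above are straightforward to compute. This is a minimal sketch that assumes a hypothetical per‑episode record with score, survival time in frames, and humans rescued versus humans present. The rescue‑rate definition used here (total rescued over total present) is one reasonable choice among several.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Episode:
    """Hypothetical per-episode evaluation record."""
    seed: int
    score: int
    survival_frames: int
    humans_rescued: int
    humans_present: int


def summarize(episodes):
    """Aggregate evaluation metrics across seeded episodes instead of reporting one run."""
    scores = [e.score for e in episodes]
    return {
        "episodes": len(episodes),
        "mean_score": statistics.mean(scores),
        "score_stdev": statistics.stdev(scores) if len(scores) > 1 else 0.0,
        "median_survival_frames": statistics.median(e.survival_frames for e in episodes),
        "rescue_rate": sum(e.humans_rescued for e in episodes)
                       / max(1, sum(e.humans_present for e in episodes)),
    }
```

Reporting the standard deviation alongside the mean makes clear how much of a headline score is seed luck.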

Broader implications: what arcade AI teaches us about modern systems

This project has resonance beyond retro gaming. Robotron is a compact, measurable model of real problems AI faces in the wild: high‑speed decision loops, competing objectives, noisy sensors, and the need for triage under uncertainty.

- In safety‑critical systems, similar tradeoffs appear: accept a smaller risk now to avoid a larger failure, or accept a small degradation now to preserve long‑term system health. Games like Robotron let researchers study these tradeoffs in a controlled setting.

- The spectacle of “teaching an AI to best retro cabinets” also raises narrative questions: the engineer who wrote a diagnostic tool for operating systems now points a learning machine at a fictional machine uprising. That meta‑irony is fun, but the technical lesson is concrete: intelligence in real time is not just raw reflex; it’s architecture, representation, and the careful shaping of incentives.

If you want to try this yourself: a practical starter playbook

Below is a condensed, pragmatic list for researchers or hobbyists who want to replicate or iterate on Plummer’s line of work.

- Environment
  - Use MAME (or another emulator) in headless mode with deterministic flags.
  - Implement a Lua hook to extract screen pixels and key game state per frame.

- Data capture
  - Stack 4–8 frames for the observation tensor to capture velocity.
  - Normalize inputs and optionally add an engineered feature vector (enemy positions via simple detection).

- Algorithm
  - Start with a Rainbow‑style DQN if you choose a discrete product action space.
  - If you choose decoupled outputs, use PPO or another actor‑critic method.
  - Add auxiliary losses for occupancy prediction or short‑term forecasting.

- Training
  - Run multiple parallel headless emulator workers to generate rollouts.
  - Prioritized replay, bootstrapping with an expert system for early learning, and curriculum escalation all help reduce wasted frames.

- Evaluation
  - Measure mean score, median survival time, and human‑rescue rate across 100+ seeded episodes.
  - Save model checkpoints and track behaviour changes via automated replay capture.
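The training step lists prioritized replay. Its proportional variant samples transition i with probability P(i) = p_i^α / Σ_k p_k^α. The resulting bias is corrected with importance‑sampling weights (N · P(i))^(−β). The sketch below is minimal and list‑based; a production buffer would use a sum tree for O(log N) sampling. The class and method names are illustrative. The weights here are normalized so the largest weight in the sampled batch is 1, though some implementations normalize over the whole buffer instead.

```python
import numpy as np


class ProportionalReplay:
    """Minimal proportional prioritized replay (O(N) sampling; fine for illustration)."""

    def __init__(self, capacity, alpha=0.6, eps=1e-6, seed=0):
        self.capacity, self.alpha, self.eps = capacity, alpha, eps
        self.data, self.priorities = [], []
        self.pos = 0
        self.rng = np.random.default_rng(seed)

    def add(self, transition):
        # New transitions get the current max priority so they are sampled at least once.
        p = max(self.priorities, default=1.0)
        if len(self.data) < self.capacity:
            self.data.append(transition)
            self.priorities.append(p)
        else:
            self.data[self.pos] = transition  # overwrite the oldest slot (ring buffer)
            self.priorities[self.pos] = p
        self.pos = (self.pos + 1) % self.capacity

    def probabilities(self):
        scaled = np.asarray(self.priorities) ** self.alpha
        return scaled / scaled.sum()

    def sample(self, batch_size, beta=0.4):
        probs = self.probabilities()
        idx = self.rng.choice(len(self.data), size=batch_size, p=probs)
        # Importance-sampling weights undo the bias of non-uniform sampling.
        weights = (len(self.data) * probs[idx]) ** (-beta)
        weights /= weights.max()
        return idx, [self.data[i] for i in idx], weights

    def update_priorities(self, idx, td_errors):
        # Priority tracks the magnitude of the latest TD error; eps keeps it nonzero.
        for i, err in zip(idx, td_errors):
            self.priorities[i] = abs(err) + self.eps
```

After each learner update, feed the batch’s TD errors back through `update_priorities`. Transitions the agent currently predicts poorly, such as a near‑miss dodge, will then be replayed more often.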

Risks, ethics, and the limit cases

Two cautionary notes.

First, there is the perennial risk of mistaking game performance for general intelligence. A policy that excels in Robotron is not “creative” in a human sense; it’s highly optimized for a measurable reward function in a constrained simulator. The scientific value comes from what we learn about architectures and decision primitives, not from declaring victory for AGI.

Second, compute and environmental opacity create reproducibility and equity issues. When hobbyist projects require multi‑GPU clusters and tens of millions of emulator frames, the experiments are accessible only to well‑resourced teams. That asymmetry matters for the ecosystem of open research.

Plummer’s choice to publish code and demos counters that trend by making the engineering pattern available; the community can learn how to scale experiments down responsibly, and how to document the choices that lead to successful behaviours.

Conclusion

Robotron: 2084 is more than a retro indulgence; it’s a concise, brutal laboratory for studying real‑time decision systems. Dave Plummer’s pivot from Tempest to Robotron connects decades of arcade design to modern reinforcement learning practice and exposes the technical scaffolding that turns pixels into policies. The experiment is funny, ironic, and instructive. It is funny because a veteran Windows engineer is teaching machines to survive a fictional machine uprising. It is ironic because a toolmaker for operating systems now builds a synthwave Task Manager to monitor a neural learner. And it is instructive because the project surfaces real engineering lessons about action spaces, reward design, and the limits of reflex‑based intelligence.

For the researcher or tinkerer, Robotron is a perfect combination of low barrier to entry and deep technical challenge. For the technologist, it’s a reminder that real‑time intelligence is an engineering problem as much as a philosophical one: design the inputs, shape the incentives, and the emergent behaviour is as revealing as it is entertaining.
