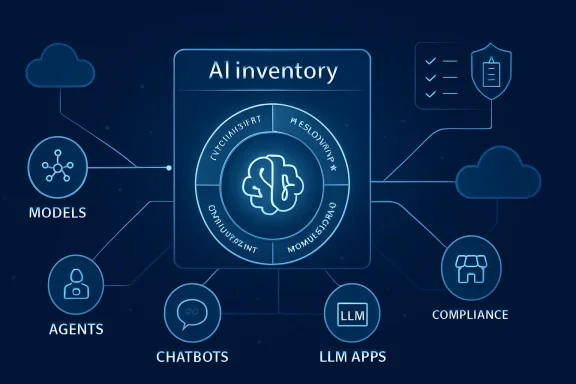

SAS is moving deeper into the AI governance market with SAS AI Navigator, a standalone SaaS platform designed to help enterprises discover, catalogue, and govern the growing sprawl of AI models, agents, and large language model use cases across the business. Announced at SAS Innovate 2026, the product targets one of the most urgent problems in enterprise technology: organizations are adopting AI faster than they can document, risk-rank, approve, monitor, or retire it. The pitch is deliberately pragmatic, not utopian: before companies can govern AI responsibly, they need to know what AI they actually have. For WindowsForum readers watching the Microsoft ecosystem, the planned availability through the Microsoft Azure Marketplace in the third quarter of 2026 makes this more than a SAS-only story.

Employee-facing governance can clarify:

The winning governance tools will be the ones people actually use. That requires clear interfaces, role-based experiences, sensible defaults, and enough automation to avoid turning every AI idea into a paperwork marathon. Usability may be as important as regulatory coverage.

Another question is enforcement. A governance layer can identify gaps and track approvals, but organizations also need controls that prevent risky data flows, block unauthorized tools, revoke access, or require human intervention. SAS may rely on integrations with security, identity, data governance, and workflow platforms for some of that enforcement.

The most important product questions include:

The third-quarter 2026 Azure Marketplace target gives SAS a defined commercial milestone. By then, buyers will expect clarity on pricing, packaging, integration support, deployment requirements, and roadmap commitments. In a fast-moving market, vague governance promises will not be enough.

The second issue is Microsoft integration. Azure Marketplace availability is useful, but the deeper test will be how AI Navigator fits with Microsoft Purview, Entra, Defender, Azure AI services, Copilot Studio, and enterprise data estates. If SAS can complement Microsoft’s native controls while covering non-Microsoft AI assets, it could offer a persuasive story for heterogeneous organizations.

The third issue is agent governance. As enterprises move from chatbots to agents, governance must track tools, permissions, actions, escalation rules, and runtime behavior. SAS’s broader agentic AI push makes this area especially important.

Key milestones to watch include:

Source: Techzine Global SAS launches AI Navigator for AI governance and compliance

Background

Enterprise AI has entered a difficult second phase. The first phase was dominated by pilots, prompt experiments, proofs of concept, and executive pressure to “do something with AI.” The second phase is about evidence, ownership, and control, because regulators, auditors, insurers, boards, and customers are now asking much harder questions.

SAS enters this moment with a long history in analytics, risk management, decisioning, fraud detection, financial services, healthcare, and government workloads. That matters because AI governance is not merely a feature checkbox; it is a discipline shaped by regulated industries that already understand model risk, lineage, testing, validation, and audit trails. SAS has spent decades selling into those environments, which gives it credibility with buyers who are less impressed by hype and more focused on defensible operations.

The timing is also significant. The EU AI Act, ISO/IEC 42001, the NIST AI Risk Management Framework, sector-specific compliance obligations, and internal responsible AI policies are converging into a new operational reality. Companies can no longer treat AI oversight as a slide deck maintained by legal and risk teams; they need working systems that connect policy to actual AI assets.

The rise of shadow AI has made that need more urgent. Employees use public chatbots, departments procure niche AI tools, developers wire LLM APIs into workflows, and business units experiment with agents that may access sensitive data or influence decisions. Without a centralized inventory, executives may not know whether a customer service bot, underwriting model, coding assistant, marketing agent, or HR screening tool is operating inside acceptable policy boundaries.

What SAS AI Navigator Is Trying to Solve

The inventory problem comes first

SAS AI Navigator is built around a deceptively simple premise: enterprises need a single system of record for AI activity. That includes internally developed models, third-party tools, AI agents, LLM-powered applications, open-source models, and SAS models already deployed inside existing analytics environments. The goal is to replace fragmented spreadsheets, informal approval chains, and disconnected governance portals with a centralized view.

This matters because governance cannot begin at the point of deployment. By then, risk decisions may already be embedded in architecture, data flows, vendor contracts, and user workflows. A useful AI governance platform must track the lifecycle from experimentation through production and eventually decommissioning.

SAS is positioning AI Navigator as a governance layer rather than a replatforming mandate. That distinction is important for enterprises that already use tools such as Microsoft Copilot, Claude, OpenAI-based applications, internal ML platforms, customer service bots, and domain-specific AI packages. The platform is meant to sit above those environments and map what exists.

Key problems SAS is targeting include:

- Unknown AI assets spread across departments and vendors

- Inconsistent documentation for models, agents, and use cases

- Policy gaps between legal requirements and technical implementation

- Unclear ownership when AI systems cross business and IT boundaries

- Weak lifecycle tracking after experimentation turns into production

- Audit friction when evidence must be assembled manually

Shadow AI becomes a board-level issue

The term shadow AI echoes the older problem of shadow IT, but the risk profile is different. A rogue SaaS tool might create data exposure or budget sprawl; an ungoverned AI system can also generate decisions, automate actions, influence customers, or produce content at scale. That makes visibility a strategic requirement rather than a neat administrative improvement.

The most dangerous AI assets are often not the most sophisticated ones. A small team using a chatbot to summarize customer complaints may accidentally process regulated information, while a departmental agent might connect to business systems without clear approval. The governance challenge is not only the frontier model; it is the ordinary workflow that quietly becomes automated.

How the Platform Works

A governance layer over existing workflows

SAS says organizations do not need to reshape their AI workflows around AI Navigator. Instead, the product is designed as an overarching governance layer that can register, classify, assess, and track AI use cases across diverse tooling environments. That design choice acknowledges how modern enterprises actually operate: hybrid clouds, mixed vendors, departmental SaaS purchases, and fast-moving developer teams.

The product’s central capability is an AI inventory that links technical assets to business context. A model or agent is not treated merely as a file, endpoint, or API call; it is connected to ownership, intended purpose, risk status, policy requirements, and lifecycle stage. That use-case-centered approach is crucial because regulations often apply based on what the AI system does, not just what technology it uses.

SAS is also emphasizing audit readiness. Documentation controls, governance workflows, policy assessments, decision rationale, and approval trails are intended to make oversight repeatable. In practice, that could help a compliance team answer who approved an AI system, what data it uses, which regulations apply, what risks were identified, and whether mitigations were completed.

A typical governance flow might look like this:

- A business team proposes or registers an AI use case.

- The use case is linked to models, agents, data sources, vendors, and business owners.

- The system maps relevant internal policies and external regulatory frameworks.

- Risk, compliance, data, and AI teams review required assessments.

- The project moves through approval, deployment, monitoring, and retirement stages.
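The flow above can be sketched as a small state machine. This is an illustrative sketch only, not SAS AI Navigator's data model or API: the `UseCase` class, the `Stage` names, and the rule that approval is blocked while reviews are outstanding are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    PROPOSED = "proposed"
    IN_REVIEW = "in_review"
    APPROVED = "approved"
    DEPLOYED = "deployed"
    MONITORED = "monitored"
    RETIRED = "retired"


# Hypothetical forward path; any non-retired stage may also move to RETIRED.
NEXT_STAGE = {
    Stage.PROPOSED: Stage.IN_REVIEW,
    Stage.IN_REVIEW: Stage.APPROVED,
    Stage.APPROVED: Stage.DEPLOYED,
    Stage.DEPLOYED: Stage.MONITORED,
}


@dataclass
class UseCase:
    name: str
    owner: str
    assets: list[str] = field(default_factory=list)       # models, agents, data sources, vendors
    frameworks: list[str] = field(default_factory=list)   # mapped policies and regulations
    pending_reviews: set[str] = field(default_factory=set)
    stage: Stage = Stage.PROPOSED
    history: list[tuple[Stage, Stage, str]] = field(default_factory=list)

    def advance(self, target: Stage, actor: str) -> None:
        """Move to the next stage, recording who made the change."""
        allowed = target is Stage.RETIRED or NEXT_STAGE.get(self.stage) is target
        if self.stage is Stage.RETIRED or not allowed:
            raise ValueError(f"cannot move from {self.stage.value} to {target.value}")
        if target is Stage.APPROVED and self.pending_reviews:
            raise ValueError(f"reviews outstanding: {sorted(self.pending_reviews)}")
        self.history.append((self.stage, target, actor))
        self.stage = target
```

In this sketch, a use case registered with `pending_reviews={"privacy"}` can enter review but cannot be approved until that review is cleared, and every transition leaves a `(from, to, actor)` entry in `history`.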

Why lifecycle coverage matters

AI risk changes over time. A model that was low-risk during experimentation can become high-impact when connected to customer records, financial decisions, medical information, or automated workflows. Similarly, a harmless internal assistant can become a compliance problem if it starts taking action rather than simply offering suggestions.

That is why lifecycle tracking is more than a project management feature. It creates a timeline of accountability. It also allows organizations to retire outdated models, review vendor changes, update policy mappings, and reassess risks when regulations evolve.

Why SAS Is Framing Governance as Growth

Compliance is only part of the story

SAS executives are deliberately presenting AI governance as a growth driver, not just a defensive control. That framing matters because many organizations still treat governance as friction imposed by legal, risk, or security teams. If employees believe governance only slows them down, they will route around it.

The stronger argument is that governance enables faster adoption by making the boundaries clear. Teams can experiment confidently when they know what must be documented, who approves what, which data is permitted, and what risk thresholds apply. In that model, governance becomes a guardrail rather than a gate.

There is an important cultural dimension here. AI innovation fails when every team improvises its own rules, but it also fails when oversight is so heavy that teams stop trying. The market opportunity for SAS is to prove that structured AI governance can reduce uncertainty without crushing experimentation.

Governance can accelerate adoption when it provides:

- Clear policy pathways for new AI use cases

- Reusable assessment workflows instead of one-off reviews

- Executive visibility into AI activity and risk concentration

- Audit-ready documentation created during normal work

- Consistent approvals across regions and departments

- Confidence for regulated deployments in finance, healthcare, and government

The enterprise buyer has changed

The AI buyer is no longer only the data science leader. Today’s buying committee often includes security, privacy, legal, compliance, procurement, internal audit, finance, and business unit executives. SAS AI Navigator is aimed squarely at that broader audience.

That could work in SAS’s favor. The company has long sold to organizations where trust, explainability, and operational discipline matter. In sectors such as banking, insurance, public administration, and healthcare, the person saying “prove this is governed” may have as much influence as the person saying “deploy this model.”

The Microsoft and Azure Angle

Marketplace availability broadens the reach

The planned arrival of SAS AI Navigator in the Azure Marketplace in the third quarter of 2026 is particularly relevant for enterprises already standardized on Microsoft procurement, identity, compliance, and cloud operations. Marketplace availability can simplify purchasing, billing, vendor onboarding, and cloud governance reviews. For many IT departments, that can be the difference between a product being evaluated and being ignored.

The Microsoft angle is also strategic because AI governance is increasingly tied to the Microsoft 365 and Azure estate. Organizations deploying Microsoft Copilot, Azure OpenAI services, Copilot Studio agents, Purview, Defender, Entra, and Fabric are already thinking about data access, identity, retention, audit, and compliance. SAS AI Navigator will need to complement that landscape rather than compete with it at every layer.

For WindowsForum readers, the important question is not whether SAS replaces Microsoft Purview. It does not appear designed for that. The more realistic question is whether SAS can provide AI-specific inventory and governance context that sits above multiple AI ecosystems, including Microsoft’s.

Potential Azure Marketplace advantages include:

- Simpler procurement for Microsoft-centric enterprises

- Closer alignment with existing cloud governance processes

- Improved access for customers already running SAS Viya on Azure

- Reduced vendor onboarding friction for regulated buyers

- Better positioning alongside Microsoft’s AI and compliance stack

Complement or overlap?

Microsoft already offers significant governance and compliance capabilities through Purview, Entra, Defender, and related administration tools. Those products are deeply tied to data security, identity, Microsoft 365 Copilot, endpoint protection, compliance workflows, and information protection. SAS AI Navigator appears to target a broader AI inventory and governance management problem rather than only Microsoft data controls.

That distinction may become commercially important. Enterprises rarely want two tools doing the same thing, but they do need tools that connect business-level AI governance with platform-level enforcement. If SAS can integrate cleanly with Microsoft environments while also covering third-party AI systems, it may find a useful niche.

Agentic AI Raises the Stakes

Agents are harder to govern than chatbots

The announcement lands as SAS is also expanding its broader agentic AI story. SAS Viya updates include governed AI assistants, agent infrastructure, an Agentic AI Accelerator, and a Model Context Protocol server designed to expose SAS analytics and decisioning capabilities to external agents. That matters because agentic systems create governance challenges that traditional model inventories were not built to handle.

A chatbot usually produces text. An AI agent may call tools, query databases, trigger workflows, update systems, recommend decisions, and coordinate with other agents. That shift from response generation to action-taking changes the governance equation.

The risk is not only that an agent gives a wrong answer. The risk is that it takes the wrong action with legitimate credentials, incomplete context, or insufficient human oversight. That is why agent governance requires more than a model card.

Agentic AI governance must account for:

- Tool permissions and what systems an agent can access

- Human approval thresholds for sensitive actions

- Data boundaries across prompts, retrieval, and outputs

- Audit logs that reconstruct agent decisions

- Runtime monitoring for unexpected behavior

- Decommissioning rules when agents are no longer trusted
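Two of the items above, tool permissions and human approval thresholds, lend themselves to a short sketch. The `AgentPolicy` record and `authorize` function below are hypothetical names invented for illustration; they do not describe how SAS AI Navigator or any particular agent framework enforces policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    allowed_tools: frozenset[str]
    approval_required: frozenset[str] = frozenset()  # tools that need human sign-off
    active: bool = True                              # False once the agent is decommissioned


def authorize(policy: AgentPolicy, tool: str, human_approved: bool = False) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for a requested tool call."""
    if not policy.active or tool not in policy.allowed_tools:
        return "deny"
    if tool in policy.approval_required and not human_approved:
        return "needs_approval"
    return "allow"
```

Under these assumptions, an agent allowed to call `search_kb` and `issue_refund`, with `issue_refund` marked as approval-required, can search freely but has refund calls held until a person signs off. Any tool outside the allow-list, or any call from a decommissioned agent, is denied.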

MCP and the governance puzzle

The rise of the Model Context Protocol reflects the enterprise need to connect AI systems to tools and data sources in a standardized way. SAS’s move to expose Viya analytics and decisioning capabilities through MCP fits the broader industry trend toward AI agents that can discover and invoke tools. But connectivity without governance can quickly become dangerous.

This is where AI Navigator could become more strategically important than it first appears. If SAS is enabling agents to use analytics capabilities, it also needs a credible story for tracking which agents exist, what they can do, which use cases they support, and which controls apply. In other words, agentic AI makes governance infrastructure more valuable.

Regulatory Pressure Is Becoming Operational

From principles to evidence

For years, responsible AI programs were often built around principles: fairness, transparency, accountability, privacy, security, and human oversight. Those principles still matter, but regulators and auditors increasingly want evidence. They want to see inventories, assessments, risk classifications, documentation, monitoring, change records, and approval trails.

That is the practical opening for products such as SAS AI Navigator. A policy document may say that high-risk AI must be reviewed, but the organization still needs a system to identify high-risk AI in the first place. It also needs a way to prove that the review happened.

The EU AI Act has made this operational shift more visible, especially for organizations that provide or deploy AI systems in affected contexts. The law’s phased implementation has pushed enterprises to classify systems, understand obligations, and prepare documentation. Even companies outside Europe are watching because global vendors often prefer one governance operating model rather than region-by-region chaos.

Important governance frameworks shaping the market include:

- EU AI Act requirements for risk classification and obligations

- NIST AI RMF guidance for trustworthy AI risk management

- ISO/IEC 42001 for AI management systems

- Sector rules in financial services, healthcare, insurance, and employment

- Internal responsible AI policies created by enterprise boards and risk committees

The audit trail becomes the product

A useful AI governance platform must turn normal work into an evidence trail. That means approvals, exceptions, testing results, policy mappings, ownership records, and risk decisions should be captured as part of the workflow. If teams must reconstruct evidence after the fact, the governance program will be slow, expensive, and unreliable.

SAS’s emphasis on audit-ready records reflects a market reality. Organizations do not just need to make good decisions; they need to prove that they made good decisions through a consistent process. That proof is becoming essential for regulators, customers, partners, cyber insurers, and internal boards.
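One way to capture evidence as part of the workflow, rather than reconstructing it later, is an append-only log in which each entry carries a hash of the previous one. The sketch below is a generic illustration of that technique. The source does not say SAS AI Navigator works this way, and the `AuditTrail` class and its field names are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log; each entry stores the hash of the entry before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, use_case: str, action: str, actor: str, rationale: str) -> dict:
        entry = {
            "use_case": use_case,
            "action": action,
            "actor": actor,
            "rationale": rationale,
            "at": datetime.now(timezone.utc).isoformat(),
            "prev": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Check that no entry was altered or removed from the middle of the chain."""
        prev = ""
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```

Calling `record(...)` at each approval or exception builds the trail as work happens, and `verify()` returns `False` if an earlier entry is edited afterwards. A production system would also need durable storage and access control, which this sketch leaves out.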

Competitive Landscape

SAS enters a crowded but unsettled category

The AI governance market is becoming crowded, but it remains unsettled. IBM has pushed watsonx.governance, Microsoft has expanded Purview and Copilot-related controls, specialist vendors such as Credo AI focus on policy automation and AI registries, and data platforms are adding governance features around models, lineage, and access. The category is not yet mature enough for one dominant pattern to have won.

SAS’s differentiation appears to rest on three claims. First, AI Navigator is designed to govern across internal and third-party AI assets. Second, it emphasizes use-case-led governance rather than only technical artifact management. Third, it draws on SAS’s credibility in regulated analytics and model risk.

That is a credible strategy, but it also creates expectations. Enterprise buyers will ask how deep the integrations go, how automatically AI assets can be discovered, how policy mappings are maintained, and how well the system connects to enforcement points. A beautiful registry that depends on manual updates can quickly become stale.

Competitive pressure will come from:

- IBM, with deep enterprise governance and model lifecycle tooling

- Microsoft, with native control over Microsoft 365, Azure, identity, and data security

- Specialist AI governance vendors, with focused policy and regulatory workflows

- Cloud providers, embedding governance into AI development platforms

- Data platforms, extending lineage and catalog tools into AI assets

- Security vendors, discovering and controlling AI usage at network and endpoint layers

The integration question

The decisive factor may be integration depth. If AI Navigator can ingest information from common model platforms, agent frameworks, cloud services, SaaS tools, and compliance systems, it becomes a living control plane. If it relies heavily on voluntary manual registration, it risks becoming another governance database that gradually diverges from reality.

That does not mean manual workflows are useless. Many governance decisions require human judgment, especially around intended use, risk tolerance, legal interpretation, and business impact. But discovery, change detection, ownership mapping, and evidence collection need as much automation as possible.
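The gap between a manually maintained registry and what is actually running can be made concrete with a simple comparison. The sketch below assumes two hypothetical inputs: a set of asset identifiers that teams have registered, and a set that automated discovery has observed. The function name and the identifiers are illustrative only.

```python
def inventory_drift(registered: set[str], discovered: set[str]) -> dict[str, set[str]]:
    """Compare the governance registry against what discovery actually observed."""
    return {
        "unregistered": discovered - registered,  # observed in use but never registered
        "stale": registered - discovered,         # registered but no longer observed
    }


registered = {"underwriting-model", "support-chatbot", "hr-screening-tool"}
discovered = {"underwriting-model", "support-chatbot", "marketing-agent"}
drift = inventory_drift(registered, discovered)
```

Here `drift["unregistered"]` contains `marketing-agent`, a candidate shadow AI asset, while `drift["stale"]` contains `hr-screening-tool`, a registry entry that may need retiring or re-verifying. Deciding what to do with each finding remains a human judgment.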

Enterprise Impact

Regulated industries are the natural first market

SAS AI Navigator’s strongest early fit is likely in financial services, healthcare, insurance, government, utilities, and other regulated industries. These sectors already have established risk, audit, validation, and compliance cultures. They also face heavier consequences when AI systems produce biased outcomes, mishandle sensitive data, or make opaque decisions.

For banks and insurers, the platform could help connect model risk management traditions with newer generative AI and agentic systems. Traditional predictive models may already be documented, validated, and monitored, but LLM-powered workflows often live outside those mature processes. AI Navigator could help bridge that gap.

Healthcare organizations face a different challenge. AI tools may assist with administration, triage, coding, patient communication, imaging, operations, or research, each with different risk and privacy implications. A centralized inventory helps leaders distinguish between low-risk productivity tools and systems that influence patient outcomes.

Enterprise benefits may include:

- Unified AI oversight across business units

- Better audit preparedness for regulators and internal review

- Faster approval cycles for well-understood low-risk use cases

- Improved vendor governance for third-party AI tools

- Reduced duplication of similar AI projects

- Clearer accountability for business and technical owners

The CIO and CISO gain visibility

For CIOs and CISOs, the appeal is visibility. AI has become embedded in applications, productivity suites, browsers, developer tools, customer platforms, and analytics systems. That makes it difficult for IT and security leaders to understand where sensitive data may be going or where automated decisions are occurring.

AI Navigator will not solve every security problem by itself. But as part of a broader control stack, it could help organizations identify which AI systems warrant deeper monitoring, data loss prevention, access reviews, vendor assessments, or red-team testing. Visibility is not enforcement, but it is the prerequisite for intelligent enforcement.

Consumer and Employee Impact

Governance shapes the workplace experience

Although SAS AI Navigator is an enterprise product, employees and consumers will feel its effects indirectly. Better governance can determine which AI tools employees are allowed to use, what data they can process, how approvals work, and whether AI-generated outputs require human review. It can also shape customer-facing experiences such as chatbots, claims processing, recommendations, and support automation.

For employees, good governance should reduce confusion. Instead of vague warnings not to paste sensitive data into AI tools, workers need approved pathways and clear rules. The ideal outcome is not a locked-down workplace where experimentation dies, but a safer environment where people know which tools are permitted for which tasks.

Consumers benefit when companies can explain, monitor, and control AI systems that affect them. That is especially true in high-impact areas such as lending, insurance, employment, healthcare, education, and public services. Trustworthy AI is not created by branding; it is created by accountable operations.

Employee-facing governance can clarify:

- Approved AI tools for common productivity tasks

- Restricted data types that should not enter external models

- Human review requirements for sensitive outputs

- Escalation paths when AI behavior appears risky

- Training needs for responsible AI usage

- Ownership rules for departmental AI experiments
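
As a rough illustration of what "approved pathways and clear rules" could look like when expressed as data rather than a policy memo, the following Python sketch maps a tool and a data class to a decision. Everything in it is an assumption made for the example: the tool names, the sensitivity tiers, the review threshold, and the `check_usage` function are hypothetical and are not drawn from SAS AI Navigator.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REVIEW = "human review required"
    DENY = "deny"


# Hypothetical sensitivity tiers, ordered from least to most sensitive.
DATA_SENSITIVITY = {"public": 0, "internal": 1, "confidential": 2, "regulated": 3}

# Hypothetical approvals: tool -> most sensitive data class it may process.
APPROVED_TOOLS = {
    "internal-assistant": "confidential",
    "external-chatbot": "public",
}

# Outputs built from data at or above this class need a human reviewer.
REVIEW_THRESHOLD = "confidential"


def check_usage(tool: str, data_class: str) -> Decision:
    """Return the decision for using `tool` on data of class `data_class`."""
    if tool not in APPROVED_TOOLS:
        return Decision.DENY  # unapproved tools are denied by default
    level = DATA_SENSITIVITY[data_class]
    if level > DATA_SENSITIVITY[APPROVED_TOOLS[tool]]:
        return Decision.DENY  # data is more sensitive than the tool's ceiling
    if level >= DATA_SENSITIVITY[REVIEW_THRESHOLD]:
        return Decision.REVIEW  # permitted, but a human must check the output
    return Decision.ALLOW


print(check_usage("external-chatbot", "public").value)
print(check_usage("external-chatbot", "internal").value)
print(check_usage("internal-assistant", "confidential").value)
```

A deny-by-default table like this is only one possible design; the trade-off is that every new tool needs an explicit entry before employees can use it, which is exactly where slow approval cycles can push people toward workarounds.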

The productivity trade-off

There is also a trade-off. If governance workflows feel too complex, employees may see them as bureaucracy and look for workarounds. SAS itself appears aware of this risk, emphasizing lightweight SaaS deployment and existing workflow compatibility.

The winning governance tools will be the ones people actually use. That requires clear interfaces, role-based experiences, sensible defaults, and enough automation to avoid turning every AI idea into a paperwork marathon. Usability may be as important as regulatory coverage.

Product Questions SAS Still Needs to Answer

Discovery, automation, and enforcement

The announcement gives SAS a strong narrative, but enterprise buyers will need details. How does AI Navigator discover AI assets across cloud accounts, SaaS platforms, model registries, endpoints, code repositories, and procurement systems? How much is automatic, and how much depends on business users registering projects manually?

Another question is enforcement. A governance layer can identify gaps and track approvals, but organizations also need controls that prevent risky data flows, block unauthorized tools, revoke access, or require human intervention. SAS may rely on integrations with security, identity, data governance, and workflow platforms for some of that enforcement.

The most important product questions include:

- How automated is AI asset discovery?

- Which third-party AI platforms are supported at launch?

- How are regulatory frameworks updated over time?

- Can policies trigger technical controls or only workflow alerts?

- How does the platform integrate with Microsoft Purview, Entra, and Azure services?

- What reporting is available for executives, auditors, and technical teams?

- How does it govern autonomous agents at runtime?

Private preview will be telling

The private preview phase will matter because AI governance is highly context-dependent. A bank, hospital, manufacturer, public agency, and software company may all define risk differently. SAS will need feedback from diverse customers to refine workflows, templates, integrations, and reporting.

The third-quarter 2026 Azure Marketplace target gives SAS a defined commercial milestone. By then, buyers will expect clarity on pricing, packaging, integration support, deployment requirements, and roadmap commitments. In a fast-moving market, vague governance promises will not be enough.

Strengths and Opportunities

SAS AI Navigator arrives at a moment when enterprises need practical systems for AI oversight rather than another abstract responsible AI framework. Its opportunity is to connect governance language with operational reality, especially for organizations that already understand analytics risk but are struggling with generative AI and agents.

- Strong timing as AI regulation, audit pressure, and agentic AI adoption converge

- Credibility in regulated industries where SAS already has longstanding relationships

- Use-case-led design that reflects how AI risk is actually assessed

- Cross-ecosystem positioning for companies using multiple AI platforms

- Azure Marketplace availability that could ease procurement for Microsoft-centric enterprises

- Lifecycle coverage from experimentation to deployment and decommissioning

- Potential synergy with SAS Viya, MCP tooling, and industry-specific AI accelerators

Risks and Concerns

The product’s success will depend on execution, not just positioning. AI governance tools can fail when they become passive registries, depend too heavily on manual updates, or sit outside the systems where developers, business users, and risk teams actually work.

- Manual inventory risk if discovery and integration capabilities are limited

- Crowded competition from IBM, Microsoft, specialist vendors, and cloud platforms

- Potential overlap with existing governance, catalog, risk, and compliance tools

- Usability challenges if workflows slow down legitimate AI experimentation

- Enforcement gaps if policy mapping does not connect to technical controls

- Agentic AI complexity as autonomous systems require runtime oversight, not just documentation

- Regulatory uncertainty as global AI rules continue to evolve and sometimes diverge

What to Watch Next

The first thing to watch is how SAS defines “complete AI inventory” in practice. Enterprises will want to know whether AI Navigator can automatically surface AI assets from common environments or whether it primarily organizes information that teams choose to submit. The difference will determine whether it becomes a living governance layer or a polished compliance repository.

The second issue is Microsoft integration. Azure Marketplace availability is useful, but the deeper test will be how AI Navigator fits with Microsoft Purview, Entra, Defender, Azure AI services, Copilot Studio, and enterprise data estates. If SAS can complement Microsoft’s native controls while covering non-Microsoft AI assets, it could offer a persuasive story for heterogeneous organizations.

The third issue is agent governance. As enterprises move from chatbots to agents, governance must track tools, permissions, actions, escalation rules, and runtime behavior. SAS’s broader agentic AI push makes this area especially important.

Key milestones to watch include:

- Private preview feedback from regulated enterprise customers

- Azure Marketplace launch details in the third quarter of 2026

- Integration roadmap for Microsoft, cloud, model, and security ecosystems

- Agent governance capabilities beyond static registration

- Regulatory content updates for EU AI Act, NIST, ISO, and sector frameworks

Source: Techzine Global, “SAS launches AI Navigator for AI governance and compliance”