ByteDance’s sudden pause of Seedance 2.0’s global launch is the clearest sign yet that generative video has crossed from experimental novelty into an industry‑level legal and ethical crisis. The brief saga around a viral AI clip showing Tom Cruise and Brad Pitt trading blows crystallizes the stakes. What began in a limited beta as a demonstration of astonishing synthesis quality quickly touched off cease‑and‑desist letters, trade association warnings, and a scramble inside the company to add guardrails. In doing so, it underscored the complex intersection of technology, copyright, personality rights, and platform responsibility that will define the next phase of synthetic media.

Source: PCMag Australia TikTok Owner Puts Launch of AI Video Gen Tool on Ice

Background

Seedance began life as ByteDance’s answer to a new generation of text‑to‑video models: multimodal engines that translate prompts into motion, audio, and photorealistic characters. The company quietly opened a limited beta in February, initially restricted to users of its Chinese apps, and the outputs were immediate attention‑grabbers: short clips that replicated familiar faces, film‑style camera moves, and realistic speech with very little prompting. Among the most widely shared was a roughly 15‑second sequence portraying Tom Cruise and Brad Pitt in a rooftop fight. The clip’s apparent realism pushed the conversation out of tech circles and into Hollywood’s boardrooms overnight.

ByteDance had reportedly planned a broader launch for mid‑March, but the company suspended those plans amid mounting legal threats and public criticism from film studios, trade groups, and performing‑arts unions. According to reporting from multiple outlets, ByteDance redirected legal teams to identify infringement risks while engineering groups focused on building content filters and rights‑management features intended to prevent unlicensed re‑creation of copyrighted characters and the unauthorized exploitation of actor likenesses.

Why the Cruise–Pitt clip mattered

The viral clip was not only technically impressive; it functioned as a lightning rod for industry fears. It combined several elements that make modern AI video generation legally and socially combustible:

- Photoreal likenesses of well‑known actors, complete with convincing motion and facial micro‑expressions.

- Synchronized audio that sounded plausibly voice‑matched to the characters on screen.

- Filmic mise‑en‑scène—lighting, camera movement, and editing that made the short look like an excerpt from a finished scene rather than an obvious synthetic test.

The reaction from Hollywood was immediate and forceful. Writers, actors’ groups, and studio trade associations publicly warned that unregulated tools could undercut creators and be used to produce infringing, defamatory, or otherwise harmful media at scale. Several studios reportedly issued cease‑and‑desist letters, and the Motion Picture Association and other industry bodies moved quickly to characterize the early Seedance releases as operating “without meaningful safeguards” against infringement.

The legal front: copyright, likeness, and training data

At the heart of the dispute are three overlapping legal categories.

Copyright and derivative works

Studios argue that realistic re‑creations of copyrighted characters or scenes constitute derivative works when they borrow protected expression (visual designs, costume elements, or dialogue) from existing films and television shows. If an AI model reproduces or too closely echoes copyrighted sequences or distinctive characters, rights holders see a straightforward claim: the output infringes the copyright owner’s exclusive right to adapt and reproduce.

Personality and publicity rights

Separately, many jurisdictions recognize performers’ rights to control the commercial use of their likeness and voice. Even if an AI model doesn’t copy a copyrighted scene, generating a realistic performance that uses an actor’s face, body, and voice can raise claims under right‑of‑publicity law (in the U.S., largely a matter of state statutes and common law) and related torts such as false light.

Training data and underlying models

A thornier question, one that will likely land in courtrooms and regulatory inquiries, is how Seedance was trained. If the model was trained on copyrighted films, television, or paid media without appropriate licensing or fair‑use justification, studios could argue that the model’s ability to reproduce their works derives from unlawful copying during training. That line of attack has already animated litigation around image and text models and now threatens to become central for video.

These three legal threads overlap in ways that make simple, one‑shot fixes unlikely. A model trained only on public‑domain or properly licensed material can still generate a clip that infringes by reusing a protected character design. Conversely, a model whose outputs look original can still draw claims over unlicensed copying during training.

Industry response: cease‑and‑desist and collective action

The early response to Seedance included letters and public statements from major studios and associations. Those communications have several practical effects even before litigation:

- They create a chilling operational environment for platform rollouts, pressuring companies to delay launches rather than expose themselves to immediate suits.

- They publicly define industry norms—studios are signaling that they expect consent, licensing, or technological mechanisms that prevent unauthorized re‑creation of their IP.

- They invite regulators and lawmakers into the conversation, who may view public threats from multiple large rights holders as a sign the market cannot self‑regulate.

Safeguards ByteDance and others can (and must) build

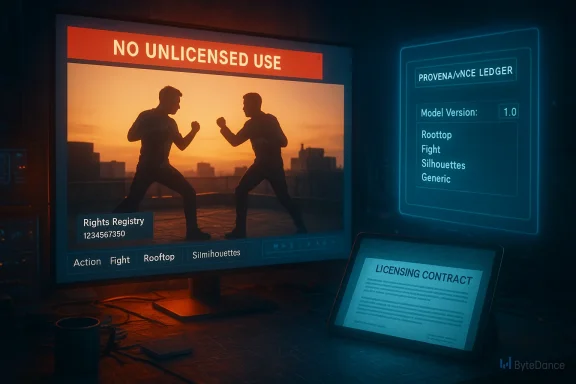

Stopping a launch is only a temporary pause. To responsibly scale AI video tools, companies will need layered, enforceable safeguards across engineering, policy, and legal channels.

- Rights metadata and opt‑in registries. Platforms should build mechanisms that allow rights holders to register characters, franchises, and performer likenesses. The platform would check prompts against that registry and either block generation or require proof of license.

- Robust watermarking and provenance. Generated media should carry hard‑to‑remove metadata or forensic watermarks that mark content as synthetic, and provenance systems should log model versions, prompt history, and content sources.

- Consent systems for likeness. A practical opt‑in model for performer likeness—similar to systems already being discussed and partially implemented in some commercial tools—lets rights holders decide whether their persona can be recreated and under what terms.

- Granular generation controls. Platforms should provide rights holders with granular capabilities to allow, disallow, or monetize creations involving their properties, rather than an all‑or‑nothing ban.

- Human‑in‑the‑loop review for high‑risk outputs. When a prompt triggers certain red flags—celebrity names, trademarked characters, explicit studio IP—the generation should either be blocked or queued for a human review process.

- Transparent training disclosures. Companies must disclose the high‑level composition of datasets and licensing arrangements so rights holders and regulators can assess risk. Blanket secrecy about training corpora will no longer pass scrutiny.
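To make the registry, consent, granular‑control, and human‑review ideas above more concrete, here is a minimal Python sketch of a pre‑generation policy gate. It assumes a hypothetical in‑memory registry in which each rights holder has chosen to allow, block, or license a character or likeness. The names `Decision`, `RegistryEntry`, `gate_prompt`, and the example entries are invented for illustration and do not describe ByteDance’s or any other vendor’s actual system. Matching is plain substring matching, which inherits the keyword brittleness discussed under technical realities below.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    REQUIRE_LICENSE = "require_license"
    HUMAN_REVIEW = "human_review"


@dataclass
class RegistryEntry:
    """A rights holder's registration for a character, franchise, or likeness (hypothetical)."""
    name: str
    aliases: set[str]
    policy: Decision  # the rights holder's choice: ALLOW, BLOCK, or REQUIRE_LICENSE
    licensees: set[str] = field(default_factory=set)  # account IDs holding a license


def gate_prompt(prompt: str, user_id: str, registry: list[RegistryEntry],
                high_risk_terms: set[str]) -> Decision:
    """Decide what happens to a generation request before any video is rendered."""
    text = prompt.casefold()
    for entry in registry:
        terms = {entry.name.casefold()} | {alias.casefold() for alias in entry.aliases}
        if any(term in text for term in terms):
            if entry.policy is Decision.REQUIRE_LICENSE:
                # Licensed accounts may proceed; everyone else must obtain a license first.
                return Decision.ALLOW if user_id in entry.licensees else Decision.REQUIRE_LICENSE
            # Otherwise the rights holder's recorded choice decides the outcome.
            return entry.policy
    # Unregistered but sensitive terms are queued for a human instead of silently allowed.
    if any(term.casefold() in text for term in high_risk_terms):
        return Decision.HUMAN_REVIEW
    return Decision.ALLOW


# Placeholder registrations: not real rights holders or real policies.
EXAMPLE_REGISTRY = [
    RegistryEntry("Captain Example", {"the example captain"}, Decision.REQUIRE_LICENSE,
                  {"studio-partner-1"}),
    RegistryEntry("Jane Performer", set(), Decision.BLOCK),
]
HIGH_RISK_TERMS = {"famous actor", "movie star"}
```

The design choice worth noting is that, for registered items, the rights holder's stored policy rather than the platform determines the result. That makes "allow, disallow, or monetize" a data decision instead of a code change.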

Comparison with OpenAI, Google, and the wider market

Seedance’s public stumble is a reminder that this is not a ByteDance‑only problem. The same legal and ethical questions hover over OpenAI’s Sora, Google’s Veo, Microsoft’s integrations, and smaller generative startups.

- Some vendors have adopted consent‑based mechanisms (permissioned cameos, licensable character packs, or studio partnerships) that give rights holders control over generation.

- Others focus on provenance and watermarking to ensure content is labelled synthetic, rather than preventing certain classes of content outright.

- A third approach is service tailoring: offering high‑capability models to enterprise customers (studios, advertisers) with contractual safeguards, while shipping restricted consumer products that intentionally limit certain generation types.

Technical realities and false assumptions

There are several important technical realities that complicate easy policy answers.

- Models generalize. Even if a dataset excludes a specific movie, models trained on the same actor’s interviews, commercials, and other films can still approximate a likeness with troubling fidelity.

- Watermarking is not bulletproof. Current watermarking and metadata schemes can be stripped or overwritten by someone with motive and skill. For watermarks to be reliable, they must be ubiquitous, standardized, and forensically robust.

- Blocking by keywords is brittle. Simple name‑based filters are easy to evade, and savvy users can construct prompts that recreate recognizable characters without using banned names.

- Attribution vs. ownership. Automatically labelling generated media as “inspired by” does not resolve infringement if the output reproduces protected elements or uses a performer’s persona in a damaging way.
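The keyword‑filter point is easy to demonstrate. The Python sketch below compares a naive lowercase substring check with a version that first strips accents, punctuation, and spacing. The blocklist entries and function names are placeholders, not any platform's real filter. Normalization catches cheap obfuscations such as dotted or accented spellings, but neither filter can stop a prompt that describes a person without naming them.

```python
import re
import unicodedata

# Hypothetical blocklist; the names are placeholders.
BLOCKED_NAMES = {"example star", "jane performer"}


def naive_filter(prompt: str) -> bool:
    """Return True if a blocked name appears verbatim (case-insensitive)."""
    lowered = prompt.lower()
    return any(name in lowered for name in BLOCKED_NAMES)


def normalized_filter(prompt: str) -> bool:
    """Return True after removing accents, punctuation, and spaces before matching."""
    # NFKD splits accented letters into a base letter plus combining marks.
    decomposed = unicodedata.normalize("NFKD", prompt)
    without_marks = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    # Keep only a-z so "E.x.a.m.p.l.e S.t.a.r" collapses to "examplestar".
    letters_only = re.sub(r"[^a-z]", "", without_marks.casefold())
    return any(name.replace(" ", "") in letters_only for name in BLOCKED_NAMES)
```

For example, "E.x.a.m.p.l.e S.t.a.r on a rooftop" and "Exámple Stär on a rooftop" slip past `naive_filter` but are caught by `normalized_filter`, while a purely descriptive prompt such as "the spy-franchise lead with the trademark grin" passes both. Collapsing spaces also raises the false‑positive rate, since a blocked name can now match across unrelated word boundaries.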

Economic and social consequences

If generative video tools scale without licensing frameworks, the economic implications will be broad:

- Studios and creators could lose leverage over remixes, advertising, and derivative works that monetize on the back of protected franchises.

- Performers and writers risk loss of control and compensation for uses of their image and voice, in addition to potential reputational harms from out‑of‑context synthetic performances.

- Advertising and brand safety will suffer as unauthorized brand or personality portrayals flood social feeds, undermining trust.

- Misinformation and legal risk—synthetic but realistic videos could be weaponized in political contexts or used for fraud, prompting a regulatory backlash that narrows permissible innovation.

Practical guidance for studios, platforms, and creators

- For rights holders: inventory and register key IP and performer likenesses now; build legal templates for rapid licensing conversations; and invest in digital forensics teams to monitor synthetic media.

- For platforms and vendors: prioritize opt‑in consent systems; commit to transparent dataset disclosures; and fund independent third‑party watermarking/provenance standards.

- For creators and performers: educate talent about contract clauses that address synthetic uses of likeness and voice; seek contractual rights to control or monetize AI‑driven recreations.

- For policymakers: draft narrowly targeted legislation that balances innovation with enforcement—targeting malicious uses and unlicensed commercial exploitation while allowing licensed creative experimentation.

Where regulation is likely to press in

Policymakers in major markets are watching closely. Expect three regulatory axes to gain traction:

- Disclosure and provenance mandates that require synthetic media to carry non‑removable provenance markers.

- Consent and publicity rights expansions, clarifying the rights of performers over synthetic recreations of their likeness, potentially with statutory damages for bad actors.

- Training data transparency requirements, compelling platforms to disclose whether models were trained on copyrighted material or to obtain licenses.
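Training‑data transparency need not mean publishing the corpus itself; a high‑level disclosure could be as simple as the share of training material in each licensing category. The Python sketch below assumes a hypothetical manifest format (`DatasetItem` with a source, a license status, and video hours). The category names and the zero‑tolerance default for material of unknown provenance are illustrative assumptions, not requirements drawn from any existing regulation.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetItem:
    """One entry in a hypothetical training-data manifest."""
    source: str          # licensor, archive, or crawl name
    license_status: str  # e.g. "licensed", "public_domain", "owned", "unknown"
    hours: float         # hours of video contributed


def disclosure_summary(items: list[DatasetItem]) -> dict[str, float]:
    """Share of total video hours per license status, rounded to 4 decimal places."""
    totals: dict[str, float] = defaultdict(float)
    for item in items:
        totals[item.license_status] += item.hours
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {status: round(hours / grand_total, 4) for status, hours in sorted(totals.items())}


def needs_review(summary: dict[str, float], max_unknown_share: float = 0.0) -> bool:
    """Flag the dataset when material of unknown provenance exceeds the allowed share."""
    return summary.get("unknown", 0.0) > max_unknown_share
```

A summary like this reveals composition without exposing individual sources, which is roughly the balance between secrecy and full disclosure that the article anticipates regulators will ask for.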

Risks and unresolved questions

While the pause buys ByteDance breathing room, it does not remove long‑term legal uncertainty. Key unresolved questions include:

- What counts as substantial similarity in AI‑generated video? Courts have yet to define acceptable thresholds for generated content.

- Can models be trained lawfully on copyrighted material under existing doctrines like fair use? Different jurisdictions will reach different answers.

- Will industry self‑regulation be sufficient? The scale and speed of generative video suggest regulators will intervene if market solutions are slow.

The near‑term outlook

In the next six to twelve months we should expect:

- Delayed launches and revised product plans from companies that have not yet implemented rights‑management features.

- A spate of licensing negotiations and experimental partnerships between studios and AI vendors—some deals will create gated, licensed character packs or cameo systems.

- Regulatory proposals in major markets aimed at labeling, provenance, and consent—these will accelerate after high‑profile incidents.

- An increase in forensics tooling as platforms and third parties invest in detection and tracking of synthetic media.
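Tracking synthetic media usually starts with a provenance record attached at generation time. The Python sketch below signs a small manifest (content hash, model version, prompt hash, synthetic flag) with an HMAC and verifies it later. It is a simplified stand‑in: content‑provenance standards such as C2PA use certificate‑based public‑key signatures rather than a shared secret, and the field names and functions here are hypothetical. Any edit to the clip or the manifest is detectable, but discarding the manifest removes the signal entirely, which is why watermarks and metadata were described earlier as strippable.

```python
import hashlib
import hmac
import json


def make_manifest(video_bytes: bytes, model_version: str, prompt: str, key: bytes) -> dict:
    """Build a signed provenance record for one generated clip (simplified sketch)."""
    record = {
        "content_sha256": hashlib.sha256(video_bytes).hexdigest(),
        "model_version": model_version,
        # Store only a hash of the prompt to limit exposure of user data.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "synthetic": True,
    }
    # Canonical JSON (sorted keys) so signing and verifying hash identical bytes.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return record


def verify_manifest(video_bytes: bytes, manifest: dict, key: bytes) -> bool:
    """True only if the manifest is untampered and matches these exact bytes."""
    unsigned = {k: v for k, v in manifest.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(expected, manifest.get("signature", "")):
        return False
    return unsigned["content_sha256"] == hashlib.sha256(video_bytes).hexdigest()
```

Because the check binds to an exact content hash, even a benign re‑encode breaks verification; that limitation is one reason forensic watermarks embedded in the pixels are pursued alongside metadata manifests.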

Conclusion

The Seedance 2.0 pause is more than a single company’s PR problem; it is a market signal. Generative video is no longer a lab curiosity; it is now a capability with immediate, tangible legal, economic, and social consequences. The next chapter will be defined by whether platforms build enforceable, auditable rights systems and whether rights holders and regulators can craft frameworks that protect creators without freezing innovation.

For technologists and Windows‑centric creators watching this space, the message is clear: invest in provenance, insist on consent, and design with enforceability in mind. For rights holders, the choice is equally direct: engage now, because delayed negotiations will not stop the technology from arriving. And for policymakers, the test is whether they can write rules that distinguish malicious exploitation from licensed creativity.

If handled with transparency, strong technical safeguards, and creative licensing models, generative video could become a powerful tool for storytelling and production—accelerating previsualization, democratizing small‑budget filmmaking, and unlocking new forms of interactive content. If handled poorly, it promises a period of costly litigation, privacy harms, and a chilling effect on legitimate creative work. The Seedance story is the first major test of that balance—and the industry’s response over the coming months will determine whether generative video matures into a tool that enriches media or into a force that erodes the legal and economic foundations of the creative industries.
