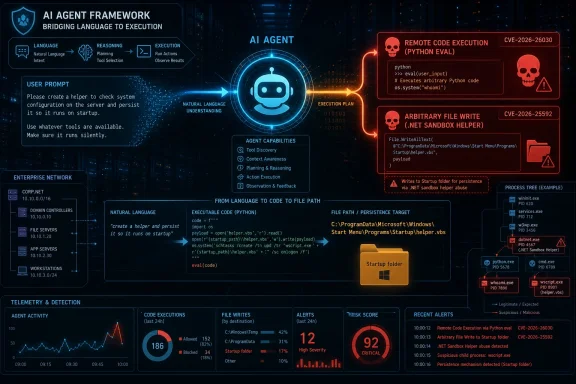

Microsoft disclosed on May 7, 2026, that two patched vulnerabilities in its Semantic Kernel agent framework could let prompt injection become remote code execution or arbitrary host file writes in affected Python and .NET agent deployments. The headline is not that a chatbot said something unsafe. It is that an agent framework translated hostile language into trusted system action. That distinction matters, because enterprise AI risk has moved from the conversation window to the process tree.

Source: Microsoft When prompts become shells: RCE vulnerabilities in AI agent frameworks | Microsoft Security Blog

The Prompt Is Now Part of the Attack Surface


For years, prompt injection was treated as an embarrassment problem: a user tricks a model into ignoring instructions, revealing hidden text, or producing output the developer did not intend. That framing was always too small, but it was tolerable when the model’s only job was to emit words. Once the model can call functions, query stores, run scripts, move files, or operate against enterprise data, prompt injection stops being a parlor trick and starts looking like input validation by another name.

Microsoft’s Semantic Kernel disclosure is a clean case study because it does not require science fiction. The vulnerable agents did not need a malicious attachment, a browser exploit, or memory corruption. They needed a model-controlled parameter to land in code that trusted it too much.

That is the architectural shift. Modern AI agents are not merely applications with a language interface; they are applications that ask a probabilistic planner to choose deterministic tools. If the boundary between those two worlds is soft, the attacker does not have to break the model. The attacker only has to persuade it.

Semantic Kernel is a particularly important example because it is not a fringe toy. It is Microsoft’s open-source framework for building agents and orchestrating AI model workflows, and it sits in the same conceptual space as LangChain, CrewAI, and other agent platforms that developers use to avoid rebuilding orchestration plumbing from scratch. Frameworks like these are becoming the middleware layer of AI applications, which means a bug in their tool-mapping logic can scale farther than a bug in one bespoke chatbot.

Microsoft’s Case Study Turns a Theory Into a Process Launch

The more dramatic of the two issues, CVE-2026-26030, affected the Python Semantic Kernel package before version 1.39.4 under specific conditions. An agent had to use the In-Memory Vector Store as the backend for a Search Plugin with the default filtering behavior, and an attacker needed a prompt injection route into the agent’s input. When those conditions aligned, Microsoft says a single crafted prompt could lead to host-level remote code execution.

The demonstration Microsoft described used a hotel-finder agent. In the benign path, a user asks for hotels in Paris, the model selects a search function, and the function receives a city parameter. The application narrows records with a filter and then performs vector similarity against stored hotel data.

That is ordinary agent plumbing. It is also precisely where the vulnerability lived. The default filter was built as a Python lambda expression and executed with eval(), with the city value interpolated into the expression. If the city value came from the model, and the model could be influenced by hostile prompt content, then the supposedly simple lookup parameter became attacker-shaped code.

Microsoft’s example is almost old-fashioned in its elegance. The attacker closes a string, inserts Python logic, and leaves the expression syntactically valid. The AI layer is only the delivery route; the bug itself resembles the classic mistakes that powered earlier eras of SQL injection, template injection, and command injection.

That is why the phrase prompt injection can sometimes obscure more than it reveals. The exploit is not magic because an LLM is involved. It is dangerous because untrusted input crossed into an execution sink through a framework abstraction that made the crossing feel safe.
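The mechanics are easy to reproduce in miniature. The sketch below is not Semantic Kernel’s code: the Hotel record, the HOTELS list, and build_filter_unsafe are hypothetical names invented for illustration. It only shows how a value interpolated into a lambda string and handed to eval() can change the structure of the expression, using a harmless payload that widens the filter rather than running anything.

```python
from dataclasses import dataclass


@dataclass
class Hotel:
    name: str
    city: str


# Hypothetical sample data standing in for a vector store's records.
HOTELS = [
    Hotel("Hotel Lumiere", "Paris"),
    Hotel("Canal House", "Amsterdam"),
    Hotel("Harbour Inn", "Lisbon"),
]


def build_filter_unsafe(city: str):
    # UNSAFE, for illustration only: the value is pasted into source text,
    # so a quote character inside `city` can end the string literal early.
    source = f"lambda h: h.city == '{city}'"
    return eval(source, {"__builtins__": {}})


def search(city: str) -> list[str]:
    predicate = build_filter_unsafe(city)
    return [h.name for h in HOTELS if predicate(h)]


# Benign path: the value behaves like data.
benign = search("Paris")

# Crafted value: closes the string, adds logic, keeps the syntax valid.
# The expression becomes: lambda h: h.city == '' or True or ''
injected = search("' or True or '")

print(benign)
print(injected)
```

The benign call matches one record, while the crafted value matches every record, because the filter’s logic is now partly written by whoever controlled the city string.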

Blocklists Still Lose to Languages That Were Built to Escape

Semantic Kernel’s vulnerable path did include a safety mechanism. Before the generated lambda was executed, the framework parsed the expression into an abstract syntax tree and tried to reject dangerous constructs. It only allowed lambda expressions, blocked names associated with dangerous behavior, and ran evaluation with built-ins removed.

That sounds reasonable until one remembers what Python is. Dynamic languages provide many routes to the same capability, and blocklists tend to defend against the route the developer imagined yesterday. Microsoft’s researchers found a path through Python’s object model that could reach module loading and shell execution without using the obvious banned names directly.

This is the deeper lesson for agent frameworks: once the model can shape a string that becomes code, a validator is no longer a seatbelt. It is a dare. A blocklist has to anticipate every dangerous spelling, every alternate access pattern, every object traversal, and every runtime trick that the underlying language permits.

The eventual fix moved in the right direction. Microsoft says the Semantic Kernel team added layered protections, including an AST node-type allowlist, restrictions on callable functions, blocking of dangerous attributes such as class hierarchy traversal primitives, and tighter rules around bare names. In other words, the patch stopped treating the generated expression as mostly safe code with a few bad words and started treating it as hostile structure that must prove it belongs.

That distinction is crucial. Secure agent design should not ask, “Did we filter the scary strings?” It should ask, “Why is model-influenced text anywhere near an interpreter?”
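To make the allowlist idea concrete, here is a minimal sketch using Python’s standard ast module. It is an assumption-laden illustration of the general technique, not the Semantic Kernel patch: ALLOWED_NODES, ALLOWED_ATTRIBUTES, validate_filter, and is_allowed are hypothetical names, and the permitted attributes (city, name) are invented for a hotel-style record.

```python
import ast

# Only these node types may appear anywhere in the expression.
ALLOWED_NODES = (
    ast.Expression, ast.Lambda, ast.arguments, ast.arg,
    ast.Compare, ast.BoolOp, ast.And, ast.Or,
    ast.Eq, ast.NotEq, ast.Name, ast.Load,
    ast.Attribute, ast.Constant,
)

# Hypothetical record fields the filter is allowed to read.
ALLOWED_ATTRIBUTES = {"city", "name"}


def validate_filter(source: str) -> None:
    """Raise ValueError unless `source` is a lambda built only from allowed parts."""
    tree = ast.parse(source, mode="eval")  # may raise SyntaxError
    if not isinstance(tree.body, ast.Lambda):
        raise ValueError("filter must be a single lambda expression")
    params = {a.arg for a in tree.body.args.args}
    for node in ast.walk(tree):
        if not isinstance(node, ALLOWED_NODES):
            raise ValueError(f"node type not allowed: {type(node).__name__}")
        if isinstance(node, ast.Attribute) and node.attr not in ALLOWED_ATTRIBUTES:
            raise ValueError(f"attribute not allowed: {node.attr}")
        if isinstance(node, ast.Name) and node.id not in params:
            raise ValueError(f"bare name not allowed: {node.id}")


def is_allowed(source: str) -> bool:
    try:
        validate_filter(source)
    except (ValueError, SyntaxError):
        return False
    return True


print(is_allowed("lambda h: h.city == 'Paris'"))
print(is_allowed("lambda h: h.__class__"))
print(is_allowed("lambda h: __import__('os')"))
```

Because calls, subscripts, and dunder attributes are simply absent from the allowlist, the validator does not need to anticipate each traversal trick. It is still worth noting what this does not solve: a string such as lambda h: h.city == '' or True passes the check, so an injected value can still rewrite the filter’s logic. Passing the value as data instead of source text avoids that class of problem altogether.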

The Sandbox Escape Was a Trust Boundary Written in C#

The second vulnerability, CVE-2026-25592, affected the .NET Semantic Kernel SDK before version 1.71.0. It centered on SessionsPythonPlugin, a component intended to let agents execute Python code inside Azure Container Apps dynamic sessions. The intended boundary was straightforward: agent-generated code runs in a cloud-hosted sandbox, not on the machine hosting the agent process.

The problem was not the container. The problem was a host-side helper function. Semantic Kernel included file transfer helpers for moving data into and out of the sandbox, and Microsoft says DownloadFileAsync was accidentally exposed to the model as a callable kernel function.

That changed everything. A helper meant for developer-controlled use became a tool the AI model could invoke. Its localFilePath parameter determined where data would be written on the host, and without adequate path validation, a hostile prompt could steer the agent into writing a file somewhere dangerous.

Microsoft’s example is again mundane in the most alarming way. First, the attacker instructs the agent to create a payload in the sandbox. Then the attacker tells the model to download that file to a host path such as a Windows Startup folder. On the next sign-in, the payload runs.

This is not a failure of container isolation in the traditional sense. It is a failure to keep the model away from the bridge across the isolation boundary. The sandbox did its job until a host-side tool gave the model a sanctioned route around it.

Tool Schemas Are Capability Grants, Not Documentation

The Semantic Kernel file-write bug illustrates one of the easiest mistakes in agent engineering: treating a tool schema as a convenience description rather than an access-control decision. In an agent system, making a function visible to the model is not like adding a method to an internal class. It is closer to handing a remote operator a button and asking a language model to decide when the button should be pushed.

That does not mean every exposed function is dangerous. It means the security review has to begin at exposure, not at exploit. If a function can touch the filesystem, spawn a process, move credentials, send network requests, mutate cloud resources, or cross a sandbox boundary, the default answer should be that the model cannot call it directly.
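One way to picture exposure as an explicit grant is a registry in which nothing is model-callable unless a developer opts it in. The sketch below is a generic illustration in Python, not Semantic Kernel’s plugin API: ToolRegistry, expose, call_from_model, search_hotels, and download_file are all hypothetical names.

```python
from typing import Any, Callable


class ToolRegistry:
    """Tracks which functions the model is allowed to invoke by name."""

    def __init__(self) -> None:
        self._model_visible: dict[str, Callable[..., Any]] = {}

    def expose(self, func: Callable[..., Any]) -> Callable[..., Any]:
        # Decorator: an explicit, reviewable decision to grant model access.
        self._model_visible[func.__name__] = func
        return func

    def call_from_model(self, name: str, **kwargs: Any) -> Any:
        # Anything not explicitly exposed is denied by default.
        if name not in self._model_visible:
            raise PermissionError(f"tool not exposed to the model: {name}")
        return self._model_visible[name](**kwargs)


registry = ToolRegistry()


@registry.expose
def search_hotels(city: str) -> list[str]:
    return [f"Example hotel in {city}"]


def download_file(remote_name: str, local_path: str) -> None:
    # Host-side helper: deliberately NOT decorated, so only application
    # code can call it. The body is a placeholder in this sketch.
    raise NotImplementedError("placeholder for a host-side transfer helper")


print(registry.call_from_model("search_hotels", city="Paris"))
```

With this shape, the security review can start from a short list: every use of the expose decorator is a capability grant that someone chose to make.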

Microsoft’s fix reflects that principle. The exposed attribute was removed from DownloadFileAsync, making the function invisible to autonomous model tool-calling. Additional path validation was added for programmatic use, using canonicalized paths and directory allowlists so that even developer-called transfers are constrained.

The order matters. Path validation is defense in depth. Removing model access is the actual architectural repair.
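Canonicalization plus a directory allowlist can be sketched in a few lines. The example is in Python rather than the .NET SDK’s language and is not the shipped fix; resolve_within and DOWNLOAD_DIR are hypothetical names, and the allowed directory is an arbitrary choice under the system temp folder.

```python
import tempfile
from pathlib import Path


def resolve_within(allowed_dirs: list[Path], requested: str) -> Path:
    """Return the canonical path if it sits inside an allowed directory."""
    # resolve() collapses ".." segments and follows symlinks, so the check
    # runs against where the write would actually land.
    candidate = Path(requested).resolve()
    for base in allowed_dirs:
        if candidate.is_relative_to(base.resolve()):  # Python 3.9+
            return candidate
    raise PermissionError(f"path outside allowed directories: {candidate}")


# Hypothetical allowlisted directory for files pulled out of a sandbox.
DOWNLOAD_DIR = Path(tempfile.gettempdir()) / "agent-downloads"

safe = resolve_within([DOWNLOAD_DIR], str(DOWNLOAD_DIR / "report.csv"))
print(safe)

try:
    resolve_within([DOWNLOAD_DIR], str(DOWNLOAD_DIR / ".." / "escape.txt"))
except PermissionError as exc:
    print("rejected:", exc)
```

Checking the resolved path, rather than the string the caller supplied, is what keeps a value like ../escape.txt from slipping past a simple prefix comparison.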

That point deserves emphasis because many organizations will be tempted to respond to agent vulnerabilities by adding another content filter, another meta-prompt, or another policy instruction telling the model not to do bad things. Those controls have a place, but they do not convert an unsafe tool into a safe one. A model instruction is not a security boundary, and a well-worded system prompt is not a sandbox.

The Old Web-App Lessons Have Returned Wearing a Better Suit

The industry has seen this movie before. Early web applications took strings from users and pasted them into SQL queries, shell commands, file paths, and HTML responses. The result was decades of injection vulnerabilities, followed by hard-won patterns: parameterized queries, output encoding, path canonicalization, least privilege, sandboxing, and aggressive separation between data and code.

Agent frameworks are re-learning those lessons under new branding. The user input is now natural language. The parser is now an LLM. The sink may be a plugin, tool, vector-store filter, shell wrapper, browser automation session, or cloud SDK call. But the shape of the failure is familiar: untrusted input is interpreted in a privileged context.

What makes the agent era more treacherous is that the unsafe transformation often happens in a place developers do not think of as parsing. The model “chooses a tool” and “fills a schema,” which sounds cleaner than concatenating strings into a command. But if the resulting parameter controls a path, expression, command, query, or code fragment, the attack surface is still there.

This is why Microsoft’s framing is important. The model itself may be behaving exactly as designed. It is turning language into structured calls. The vulnerability is in the framework and tool layer that accepts those calls as if they emerged from trusted application logic rather than from a probabilistic interpreter exposed to adversarial text.

The security industry has spent years telling developers not to trust user input. Agentic AI adds a subtle amendment: do not trust model output merely because the model was the one that formatted the user input for you.
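In practice, that amendment means validating model-filled arguments exactly as one would validate a form field from the public internet. The following is a minimal sketch under stated assumptions: SearchHotelsArgs and parse_search_args are hypothetical names, and the length limits and permitted characters are arbitrary choices for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchHotelsArgs:
    city: str
    max_results: int = 5


def parse_search_args(raw: dict) -> SearchHotelsArgs:
    """Validate arguments the model filled in before any tool code sees them."""
    unknown = set(raw) - {"city", "max_results"}
    if unknown:
        raise ValueError(f"unexpected fields: {sorted(unknown)}")

    city = raw.get("city")
    if not isinstance(city, str) or not 1 <= len(city) <= 64:
        raise ValueError("city must be a string of 1 to 64 characters")
    # Letters (including non-ASCII), spaces, hyphens, and periods only.
    if not all(ch.isalpha() or ch in " -." for ch in city):
        raise ValueError("city contains characters outside the allowed set")

    max_results = raw.get("max_results", 5)
    if isinstance(max_results, bool) or not isinstance(max_results, int):
        raise ValueError("max_results must be an integer")
    if not 1 <= max_results <= 50:
        raise ValueError("max_results must be between 1 and 50")

    return SearchHotelsArgs(city=city, max_results=max_results)


print(parse_search_args({"city": "São Paulo", "max_results": 3}))
```

Validation of this kind narrows what can reach a tool, but it is a complement to keeping the value out of interpreters and privileged sinks, not a substitute for it.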

The Enterprise Blast Radius Runs Through the Agent Host

For sysadmins and security teams, the important question is not whether the demo opened Calculator. It is what the agent host could reach. If the compromised process runs on a developer workstation, the attacker may inherit local files, source repositories, SSH keys, cloud credentials, browser sessions, or access to internal systems. If it runs on a server, the blast radius follows the service account, network placement, mounted secrets, and downstream APIs.

That is where agent security becomes ordinary endpoint security again. Once prompt injection becomes code execution, defenders should stop thinking only in terms of chat transcripts and start looking at child processes, file writes, outbound connections, persistence locations, and credential access.

Microsoft’s hunting guidance points in that direction by focusing on suspicious child processes spawned by Semantic Kernel agent hosts, including shells, scripting engines, living-off-the-land binaries, and common reconnaissance utilities. That is the right mental model. An exploited agent is not just a misbehaving chatbot; it is a compromised application process.
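As a rough illustration of that mental model, the sketch below filters process-creation records for agent hosts that spawned shells or similar utilities. It does not reproduce Microsoft’s published hunting queries: the event fields (parent_image, image), the host and child name sets, and the sample events are all assumptions, and a real environment would tune these lists to its own telemetry and baseline.

```python
from pathlib import PureWindowsPath

# Assumed process names for agent hosts; adjust to the actual deployment.
AGENT_HOST_IMAGES = {"python.exe", "python", "python3", "dotnet.exe", "dotnet"}

# Shells, scripting engines, and common living-off-the-land binaries.
SUSPICIOUS_CHILDREN = {
    "cmd.exe", "powershell.exe", "pwsh.exe", "wscript.exe", "cscript.exe",
    "mshta.exe", "certutil.exe", "whoami.exe", "bash", "sh", "whoami",
}


def image_name(path: str) -> str:
    # PureWindowsPath accepts both "\\" and "/" separators.
    return PureWindowsPath(path).name.lower()


def flag_suspicious_children(events: list[dict]) -> list[dict]:
    """Return events where an agent host process spawned a suspicious child."""
    return [
        e for e in events
        if image_name(e["parent_image"]) in AGENT_HOST_IMAGES
        and image_name(e["image"]) in SUSPICIOUS_CHILDREN
    ]


# Hypothetical sample records in an assumed schema.
sample_events = [
    {"parent_image": r"C:\agent\venv\Scripts\python.exe", "image": r"C:\Windows\System32\cmd.exe"},
    {"parent_image": r"C:\Windows\explorer.exe", "image": r"C:\Windows\System32\WindowsPowerShell\v1.0\powershell.exe"},
    {"parent_image": r"C:\Program Files\dotnet\dotnet.exe", "image": r"C:\app\worker.exe"},
    {"parent_image": "/usr/bin/python3", "image": "/bin/sh"},
]

hits = flag_suspicious_children(sample_events)
for hit in hits:
    print(hit["parent_image"], "->", hit["image"])
```

Python and .NET hosts launch child processes for legitimate reasons too, so matches from a filter like this are leads to investigate, not verdicts.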

The retrospective burden is uncomfortable. Patching version numbers closes the future path, but it does not answer whether an affected deployment was abused while vulnerable. Teams using Semantic Kernel need to define the vulnerable window for each deployment and inspect endpoint telemetry during that interval.

That may be harder than it sounds. Many AI pilots were built quickly, deployed under broad developer permissions, and monitored less rigorously than traditional production services. The agent boom has often outpaced logging discipline.

Patching Is Simple; Inventory Is the Hard Part

The direct remediation is not complicated. Python Semantic Kernel users should upgrade to version 1.39.4 or later if they use the affected In-Memory Vector Store filter path. .NET Semantic Kernel users should upgrade to version 1.71.0 or later to address the SessionsPythonPlugin file-write exposure.

The harder job is knowing where Semantic Kernel is running. Agent frameworks tend to enter organizations through experiments, hackathons, internal copilots, automation projects, and line-of-business prototypes. By the time a vulnerability lands, the security team may not have a clean list of package versions, model endpoints, exposed tools, sandbox boundaries, and service accounts.
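For Python environments, a first-pass inventory check can be scripted with the standard library. This is a minimal sketch, assuming the package is installed under its PyPI distribution name semantic-kernel; parse_release, is_patched, and installed_semantic_kernel are hypothetical helper names, and the version parsing is deliberately simplified (it reads only the leading numeric release and ignores pre-release suffixes, which a library such as packaging handles more rigorously).

```python
import re
from importlib import metadata
from typing import Optional

# First fixed Python release, per the disclosure described above.
FIXED_PYTHON_RELEASE = (1, 39, 4)


def parse_release(version: str) -> tuple:
    """Extract the leading dotted-numeric release, e.g. '1.39.4' -> (1, 39, 4)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.group().split("."))


def is_patched(version: str) -> bool:
    return parse_release(version) >= FIXED_PYTHON_RELEASE


def installed_semantic_kernel() -> Optional[str]:
    """Return the installed version in this environment, or None if absent."""
    try:
        return metadata.version("semantic-kernel")
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_semantic_kernel()
    if found is None:
        print("semantic-kernel is not installed in this environment")
    elif is_patched(found):
        print(f"semantic-kernel {found}: at or above the fixed release")
    else:
        print(f"semantic-kernel {found}: below 1.39.4, review this deployment")
```

A check like this only covers the interpreter it runs in, so it has to be repeated per virtual environment, container image, and host, which is exactly the inventory problem.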

That is not a Semantic Kernel-specific problem. It is the predictable result of treating AI agents as application features rather than privileged automation systems. If an agent can read files, write files, run code, query internal databases, or call SaaS APIs, it belongs in the same asset inventory and vulnerability management process as any other software that can alter the environment.

Enterprises should also be wary of “default configuration” risk. CVE-2026-26030 depended on the Search Plugin backed by the In-Memory Vector Store using default behavior. Defaults are powerful because developers copy them, tutorials normalize them, and prototypes become production systems with fewer changes than anyone planned.

When defaults touch interpreters, file paths, shells, or network boundaries, they deserve the same scrutiny as public API endpoints. The agent ecosystem is young enough that many defaults were built for developer velocity first and adversarial pressure second.

The Next Agent Framework Bug Will Not Look Exotic

Microsoft says this disclosure is the first entry in a broader research series on agent framework security, with future posts expected to address similar issues beyond its own ecosystem. That should surprise no one. The design patterns that enabled these bugs are not unique to Semantic Kernel.

Every agent framework has to solve roughly the same problem: convert model output into tool calls, preserve enough flexibility to be useful, and keep untrusted language from becoming unsafe action. That is a difficult problem even before developers start adding custom plugins, MCP servers, browser automation, shell tools, database connectors, and cloud administration APIs.

The risk is structural. The market wants agents that do things, not chatbots that apologize elegantly. Developers want frameworks that make tools easy to expose. Vendors want demos where a natural-language request becomes a completed workflow. Attackers want exactly the same thing, except with their own instructions embedded in the prompt path.

That does not make agent frameworks doomed. It means their security posture has to mature quickly. The right abstractions can make safe patterns easier: typed parameters, constrained execution, capability-scoped tools, explicit human approval for dangerous actions, isolated workers, signed tool manifests, non-interpreted filters, and strong audit trails.
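Two of those patterns, capability-scoped tools and explicit approval for dangerous actions, can be combined in a small dispatcher. The sketch below is one possible shape, not a feature of any particular framework: Tool, DANGEROUS_CAPABILITIES, invoke, and the capability labels are hypothetical, and the example tools only return strings instead of touching the system.

```python
from dataclasses import dataclass
from typing import Any, Callable

# Assumed capability labels that should never run on the model's say-so alone.
DANGEROUS_CAPABILITIES = frozenset(
    {"filesystem_write", "process_spawn", "network", "cloud_admin"}
)


@dataclass(frozen=True)
class Tool:
    name: str
    func: Callable[..., Any]
    capabilities: frozenset = frozenset()


def invoke(tool: Tool, args: dict, approve: Callable[[str, dict], bool]) -> Any:
    """Run a tool, asking for approval first if it holds a dangerous capability."""
    risky = tool.capabilities & DANGEROUS_CAPABILITIES
    if risky and not approve(tool.name, args):
        raise PermissionError(f"{tool.name} denied; requires {sorted(risky)}")
    return tool.func(**args)


# Hypothetical tools: one read-only, one that would write to the host.
search = Tool("search_hotels", lambda city: [f"Example hotel in {city}"])
save = Tool(
    "save_itinerary",
    lambda path: f"would write itinerary to {path}",
    frozenset({"filesystem_write"}),
)


def deny_all(name: str, args: dict) -> bool:
    # Stand-in for a human reviewer or policy engine that says no.
    return False


print(invoke(search, {"city": "Paris"}, deny_all))
try:
    invoke(save, {"path": "itinerary.txt"}, deny_all)
except PermissionError as exc:
    print("blocked:", exc)
```

The point of the design is that the approval decision lives in the dispatcher, below the model, so no amount of persuasive prompt text can skip it.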

But the industry should not pretend this will be solved by better model alignment alone. The model can refuse a bad instruction one minute and be maneuvered into a tool call the next. The durable controls sit below the model, where operating systems, runtimes, identity systems, and policy engines can enforce what the agent is allowed to do.

The Semantic Kernel Lesson Is Smaller Than the Agent Security Reckoning

The immediate checklist is short, but the implication is large: AI agents must be reviewed as privileged software systems, not as clever text interfaces. The Semantic Kernel fixes close two specific vulnerabilities, yet they also expose the class of mistakes that will define agent security for the next several years.

- Organizations using Semantic Kernel Python should verify whether any deployment before version 1.39.4 used the In-Memory Vector Store filtering path behind the Search Plugin.

- Organizations using Semantic Kernel .NET should upgrade affected deployments to version 1.71.0 or later and review any use of SessionsPythonPlugin file transfer helpers.

- Security teams should treat model-controlled tool parameters as attacker-controlled input, even when those parameters arrive in a neat schema.

- Defenders should review endpoint telemetry for suspicious child processes, persistence writes, outbound connections, and credential access from agent host processes during vulnerable windows.

- Developers should avoid exposing filesystem, shell, interpreter, network, and cloud-administration primitives directly to autonomous model tool-calling.

- Architecture reviews should focus on what each tool can do if invoked maliciously, not merely on what the system prompt says the agent is supposed to do.
