Teramind’s new product announcement marks a deliberate attempt to stitch enterprise-grade governance around the very behaviors that make modern AI useful — prompts, responses, and autonomous actions — and to do so across the entire spectrum of tools employees now use, from sanctioned copilots to the sprawling “shadow AI” ecosystem.

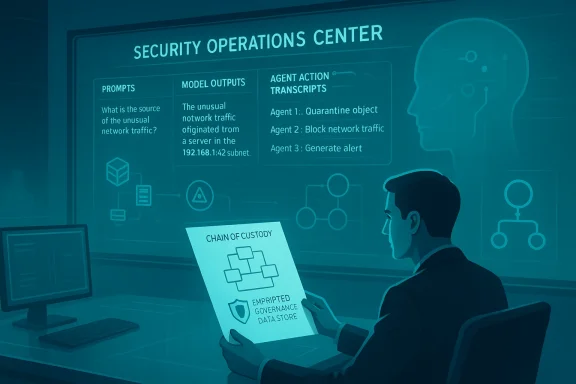

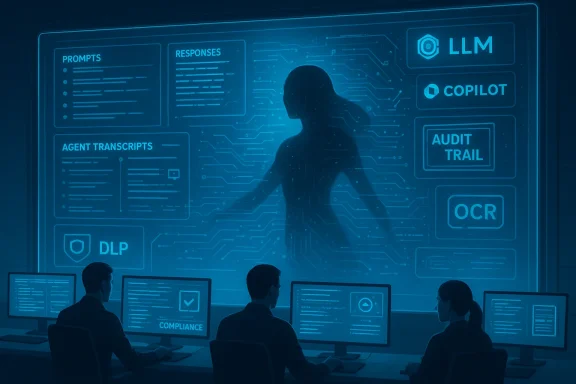

Background

The pace at which generative AI and agentic systems have entered everyday workflows has left many security and compliance teams scrambling. Workforce surveys and vendor research over the last 18 months show a consistent pattern: employee adoption of unsanctioned AI tools is widespread, experimentation with autonomous agents is accelerating, and governance frameworks are struggling to keep up. That gap, between rapid operational adoption and lagging oversight, is exactly the problem Teramind says its new offering, Teramind AI Governance, aims to close.

Teramind positions the product as a behavioral oversight layer that requires no new enterprise infrastructure and can capture evidence of AI use immediately: prompt/response logging, screen recordings with OCR, transcripts of agent activity, and behavioral detection of shadow AI based on execution patterns rather than signatures. The vendor pitches this as the first platform to provide a single-pane view across mainstream LLMs and copilots, plus the unsanctioned tools employees bring to the job.

To understand why vendors and customers are talking about this now, three industry realities matter:

- Worker access to AI expanded rapidly in 2025, driving broader enterprise exposure.

- A growing minority of organizations have moved from pilots into scaled, agentic deployments.

- Security teams report an uptick in incidents where sensitive data is exposed via AI interactions, raising questions about breach costs, compliance, and auditability.

What Teramind says it delivers

Teramind’s announcement emphasizes a few headline capabilities that are worth summarizing before we analyze their implications:

- Comprehensive prompt and response logging: recording the prompts employees send to LLMs and the responses those models return.

- Visual evidence capture (screen recording + OCR): capturing what actually appears on an employee’s screen — useful for web-based, browser-only, or native app interactions where API hooks are unavailable.

- Transcripts of autonomous agent activity: logging an agent's planning, sub-tasks, and execution steps when it operates across systems.

- Behavioral detection of shadow AI: identifying unauthorized AI use by how a tool behaves (execution patterns) rather than relying solely on static signatures or allow/block lists.

- Automatic enforcement of existing security policies: applying data loss prevention (DLP), access controls, and compliance rules directly against AI-driven activity.

- Out-of-the-box alignment with compliance regimes: Teramind claims the product produces continuous audit trails across SOX, HIPAA, CMMC, FedRAMP, SOC 2, ISO 27001, and the EU AI Act.

Why enterprises are hunting for this kind of solution

Several converging forces have made an AI governance product a commercially practical and operationally urgent idea:

- The rise of shadow AI: Large numbers of employees, from developers to executives, routinely use third-party AI services for work tasks. Organizations report that unauthorized AI usage is common, and security vendors have documented significant volumes of sensitive data being pasted into public LLMs.

- Agentic systems: Autonomous or agentic AI can rapidly execute multi-step actions; a misconfigured or malicious agent can multiply impact far faster than a single manual user action.

- Compliance and audit pressure: Regulators and auditors are increasingly focused on how AI is used in regulated workflows. The EU AI Act and sectoral rules (HIPAA, FedRAMP, etc.) push for traceability and risk controls around automated decision-making and data flows.

- Incident cost pressures: Early research and vendor analyses suggest that when sensitive data is exfiltrated via AI prompts or agent workflows, breach remediation costs can be materially higher, particularly where audit trails are missing.

- User demand vs. IT control: A classic adoption paradox — employees adopt tools that increase productivity if IT does not provide acceptable alternatives, and governance that simply blocks access often drives users deeper into shadow tooling.

How this product fits into the security stack

Teramind’s positioning is that AI governance is an extension of existing workforce monitoring, DLP, and insider-risk tooling. In practice, organizations will likely look to integrate an AI governance layer in several related places:

- Endpoint monitoring and DLP: capture copy/paste, file uploads, and the content of screen sessions that show sensitive information being shared with AI tools.

- Network filters and CASB: block or throttle calls to unapproved public AI APIs at the gateway; conversely, tag and allow sanctioned API usage for auditing.

- SIEM/SOAR: send AI-related logs and alerts into centralized security operations for correlation and automated response.

- Identity and access management: attach policy decisions to user identity and roles to enforce fine-grained controls.

- Model and platform governance: integrate with vendor contracts, model risk assessments, and procurement to ensure sanctioned AI services meet data-handling requirements.
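
To make the SIEM/SOAR integration point more concrete, here is a minimal sketch of how a single AI interaction might be turned into a JSON event for forwarding. The `build_ai_event` helper and every field name in it are hypothetical; they are not taken from Teramind's product or from any SIEM schema. The sketch also assumes one particular design choice: forwarding a digest of the prompt rather than the raw text.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_ai_event(user: str, tool: str, prompt: str, sanctioned: bool) -> str:
    """Return a JSON line describing one AI interaction (hypothetical schema)."""
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "sanctioned": sanctioned,
        # Forward a digest instead of the raw prompt so the SIEM can correlate
        # events without holding the sensitive text itself (an assumption).
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
    }
    return json.dumps(event, sort_keys=True)


if __name__ == "__main__":
    print(build_ai_event("alice", "example-chatbot", "Summarize the Q3 forecast", False))
```

Keeping the raw prompt in a separate, access-controlled evidence store and sending only the digest downstream is one way to limit how widely sensitive text spreads; whether that trade-off suits a given SOC is a local decision.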

Strengths and notable innovations

Teramind’s announcement highlights a number of pragmatic design choices that address real-world enforcement problems:

- Behavioral detection approach: Detecting shadow AI by execution patterns rather than signatures addresses evasion tactics (renamed binaries, custom wrappers) and the proliferation of new models and endpoints. This is a meaningful practical improvement over static allowlists alone.

- Prompt-level evidence capture: Storing prompts and model responses gives teams the ability to reconstruct exactly what data left the environment and what answers were returned — crucial for regulatory investigations and breach analysis.

- Transcripts of agent activity: Capturing multi-step agent plans and their actions introduces accountability into what would otherwise be opaque automation. This is especially valuable as organizations move from pilots to actual production agentic workflows.

- No new infrastructure claim: Minimizing deployment friction is a strong commercial play; security teams are more willing to trial tools that don’t require rip-and-replace of existing stacks.

- Policy enforcement across compliance frameworks: Packaging reporting and audit trails for a long list of standards (SOX, HIPAA, CMMC, FedRAMP, SOC 2, ISO 27001, EU AI Act) adds immediate value for regulated industries that must demonstrate continuous controls.

Risks, limitations, and unanswered questions

No product can be a silver bullet; the announcement raises several technical, legal, and organizational questions that buyers must weigh carefully.

Technical and operational limitations

- Encrypted and ephemeral agents: Agents that execute entirely on-premise models or within encrypted channels (e.g., private API credentials, self-hosted LLMs running in containers) may be harder to detect and log without deeper integration. Behavioral detection can help, but it’s not a guarantee.

- API-only interactions: When employees or agents interact with AI via backend API keys rather than through an observable UI, capturing the full prompt/response chain may require intercepting API traffic or integrating with the sanctioned platform’s telemetry APIs.

- Scale and noise: Recording prompts, screen captures, and agent transcripts across thousands of endpoints will create huge volumes of data. Without careful signal tuning, security teams risk being overwhelmed by false positives or benign activity logs.

- Accuracy of behavioral models: Behavioral detection systems must be trained and tuned; they can generate false positives (legitimate automation flagged) and false negatives (novel evasion). Vendors typically require time and data to reach acceptable maturity.

- Tamper resistance: Agents or users with admin privileges might be able to disable endpoint recording or obfuscate activity, complicating enforcement unless the governance layer is tightly integrated with endpoint management and hardened against tampering.

Legal, privacy, and workforce implications

- Employee privacy and labor law: Continuous screen recording and prompt logging raise legitimate privacy concerns. In some jurisdictions, recording employees without notice or consent can trigger legal and collective bargaining issues. Even where allowed, heavy-handed monitoring can erode trust and morale.

- Data sovereignty and regulatory boundaries: For companies operating across jurisdictions, storing prompts and responses that contain regulated data (PII, PHI) may create additional compliance obligations, especially under stringent privacy laws.

- Evidence handling and retention: Captured prompts and responses are potentially highly sensitive artifacts. Policies for retention, access control, encryption-at-rest, and lawful disclosure processes need to be robust and auditable.

- Overreach and chilling effects: Aggressive monitoring can discourage legitimate use of AI tools provided to improve productivity, unless governance is paired with enablement and sanctioned alternatives.
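
The retention point above can be illustrated with a small sketch that purges captured records once they outlive a window tied to their data classification. The `CapturedRecord` type, the classification labels, and the retention periods are all hypothetical placeholders; real values would come from legal and privacy review, not from this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per data classification (placeholder values).
RETENTION = {
    "regulated": timedelta(days=30),
    "internal": timedelta(days=90),
}
# Unknown classifications fall back to the shortest window.
DEFAULT_RETENTION = timedelta(days=30)


@dataclass
class CapturedRecord:
    record_id: str
    classification: str
    captured_at: datetime


def expired(record: CapturedRecord, now: datetime) -> bool:
    """True when the record has outlived its retention window."""
    window = RETENTION.get(record.classification, DEFAULT_RETENTION)
    return now - record.captured_at > window


def purge(records: list[CapturedRecord], now: datetime) -> list[CapturedRecord]:
    """Return only the records that are still within retention."""
    return [r for r in records if not expired(r, now)]
```

A production purge would also need to log each deletion for auditability and honor legal holds, neither of which is modeled here.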

Claims that require careful verification

The announcement cites specific numbers from Teramind’s internal research (e.g., “more than 80% of workers use unapproved AI tools,” “one-third have shared proprietary data with unsanctioned platforms,” “49% hide AI use from IT”) and asserts a concrete per-incident cost for AI-associated breaches (more than $650,000). Those points are consistent with broader independent vendor surveys that report widespread shadow AI use and elevated breach impact, but the exact figures should be treated as company-reported or vendor-specific unless cross-checked against independent, peer-reviewed data sets. Buyers should ask for underlying methodologies before relying on headline percentages for risk modeling.

Competitive and market context

Teramind is not alone in recognizing a governance gap at the intersection of AI, automation, and enterprise security. Several adjacent players and service providers are already offering policy-as-code, agent orchestration governance, or integrations that seek to codify risk controls into agent workflows.

- Large systems integrators and service providers are packaging policy-as-code for agentic workflows, enabling automated enforcement of organizational rules within agents.

- Established security vendors are enhancing DLP, CASB, and UEBA (user and entity behavior analytics) capabilities to better detect shadow AI and model-based exfiltration patterns.

- Cloud and platform providers have introduced audit tooling and logging features for their own model services, making it easier for enterprise customers to get telemetry from sanctioned services.

Adoption guidance: how to approach AI governance in practice

For security leaders and CIOs wrestling with shadow AI and agentic rollouts, a measured approach balances control and productivity:

- Start with data classification and policy: Identify the data types that must never leave controlled environments (e.g., PHI, regulated financial data, secrets). Translate those into machine-enforceable rules before deploying technical controls.

- Offer sanctioned alternatives: Employees often use unsanctioned AI because sanctioned tools don’t meet needs. Provide approved copilots and connectors that meet security requirements and make compliance the path of least resistance.

- Instrument and observe first: Deploy visibility features to map the problem space — what tools are people using, how often, and where sensitive data is exposed — before sweeping enforcement actions.

- Phase enforcement: Move from detection to soft enforcement (alerts, education) to hard enforcement (blocking, quarantining) to avoid shutting down legitimate productivity gains.

- Integrate into incident response: Ensure L1/L2 SOC playbooks include AI-related artifacts (prompts, transcripts) so that investigations have the right context.

- Protect the captured data: Treat recorded prompts and transcripts as highly sensitive logs. Enforce strict access controls, encryption, and retention policies.

- Engage legal, HR, and privacy early: Agree on acceptable monitoring terms, consent frameworks, and labor considerations. Where appropriate, negotiate changes with employee representatives.

- Measure outcomes: Track the net business impact — breach reductions, reduction in shadow AI incidents, developer productivity when using sanctioned tools, and business units’ satisfaction.
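
Two of the steps above, translating classifications into machine-enforceable rules and phasing enforcement, can be sketched together. This is an illustrative toy, not how any vendor implements policy: the `RULES` table, the `Phase` enum, and the `decide` function are hypothetical names, and the two regular expressions are simplistic stand-ins for real classifiers.

```python
import re
from enum import Enum


class Phase(Enum):
    OBSERVE = "observe"  # detection only: record the hit
    SOFT = "soft"        # soft enforcement: alert and educate
    HARD = "hard"        # hard enforcement: block the request


# Hypothetical rules: label -> pattern for data that must not leave.
RULES = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def classify(prompt: str) -> list[str]:
    """Return the labels of every rule the prompt matches."""
    return [label for label, pattern in RULES.items() if pattern.search(prompt)]


def decide(prompt: str, phase: Phase) -> str:
    """Map a prompt to an action for the current rollout phase."""
    if not classify(prompt):
        return "allow"
    if phase is Phase.OBSERVE:
        return "log"
    if phase is Phase.SOFT:
        return "alert"
    return "block"


if __name__ == "__main__":
    for phase in Phase:
        print(phase.value, decide("My SSN is 123-45-6789", phase))
```

Because the rules stay fixed while only the phase changes, a team can run in `OBSERVE` mode to measure how often rules fire before moving to `SOFT` or `HARD`.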

Practical checklist for evaluating an AI governance vendor

When vetting solutions like Teramind AI Governance, security and compliance teams should ask vendors to demonstrate:

- Exact telemetry sources and limitations (what is captured, what can’t be captured).

- How prompt and response data is stored, protected, and purged.

- Integration points with DLP, SIEM, SOAR, CASB, and cloud provider logs.

- Behavioral detection model performance metrics (false positive / false negative rates) and tuning requirements.

- Support for self-hosted/private LLM telemetry and how API-only agent interactions are audited.

- Policy enforcement modes (audit-only, quarantine, block) and how they can be scoped by role and data classification.

- Compliance reporting templates aligned to specific frameworks (SOC 2, HIPAA, EU AI Act clauses).

- Administrative controls and tamper resistance for endpoint agents.

- Privacy, retention, and data residency features.

- Pricing model for large-scale capture (e.g., cost per GB of recorded content or per endpoint).
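
When a vendor reports false positive and false negative rates, it helps to be able to reproduce them on a labeled pilot sample. The sketch below shows the standard calculation; the `detection_rates` function and its input format are assumptions made for illustration, and the sample data in the usage block is invented.

```python
def detection_rates(labels: list[bool], flagged: list[bool]) -> dict[str, float]:
    """Compute false positive and false negative rates.

    labels[i]:  True if sample i really was unsanctioned AI use.
    flagged[i]: True if the detector flagged sample i.
    """
    if len(labels) != len(flagged):
        raise ValueError("labels and flagged must have the same length")
    false_pos = sum(1 for y, p in zip(labels, flagged) if not y and p)
    false_neg = sum(1 for y, p in zip(labels, flagged) if y and not p)
    negatives = sum(1 for y in labels if not y)
    positives = len(labels) - negatives
    return {
        # Share of benign samples that were wrongly flagged.
        "false_positive_rate": false_pos / negatives if negatives else 0.0,
        # Share of real incidents that the detector missed.
        "false_negative_rate": false_neg / positives if positives else 0.0,
    }


if __name__ == "__main__":
    truth = [True, True, False, False, False, False]
    alerts = [True, False, True, False, False, False]
    print(detection_rates(truth, alerts))
```

Asking the vendor which population the rates were measured on matters as much as the numbers themselves, since rates from a lab data set may not carry over to a given environment.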

Longer-term implications for governance and operations

If tools like Teramind’s take hold, they may accelerate several structural shifts inside organizations:

- Policy as code for AI: More organizations will embed governance directly into agent workflows and model interfaces so policies are enforced at runtime rather than as an afterthought.

- AI Bills of Materials: Companies will start to catalog models, datasets, and dependencies the way they manage software supply chains.

- Agent attestation and identity: Agents will be given identities and certificates that bind them to policies and responsibilities, making it easier to trace audited actions back to a specific machine identity.

- Convergence of DLP + MRM + UEBA: Data loss prevention, model risk management, and behavioral analytics will become tightly integrated disciplines rather than siloed functions.

- Standards and regulation: As governance practices mature, industry standards and regulation (national AI acts, sectoral rules) will likely codify minimum auditability and logging requirements for agentic operations.

Conclusion

Teramind’s AI Governance announcement is a timely product move into a space many organizations describe as a pain point: the need for forensic-grade visibility and enforceable control over the flood of AI tools and the agentic systems emerging in production. Its emphasis on behavioral detection, prompt-level evidence, and agent transcripts addresses concrete gaps that existing DLP and endpoint tools have struggled to cover.

That said, the work of governance is as much organizational as it is technical. Continuous screen recording and prompt capture come with privacy, legal, and cultural trade-offs that security teams must manage deliberately. Technical limits (encrypted API traffic, self-hosted models, high-volume telemetry) mean no single product will entirely eliminate risk. The right approach starts with clear data-first policies, sanctioned tools that meet user needs, and phased enforcement that balances productivity and protection.

For enterprises moving from experiment to scale with agentic AI, an evidence-driven governance layer is not optional; it is a pragmatic guardrail. Products that combine visibility, policy-as-code, and operational integrations will be valuable building blocks — but success depends on integrating them into a broader program of classification, training, legal alignment, and continuous improvement. In short: governance can enable AI safely, but only when it is part of a wider strategy that recognizes both the promise and the pitfalls of agentic systems.

Source: Business Wire https://www.businesswire.com/news/h...vernance-Platform-for-the-Agentic-Enterprise/