The shift from keyword-driven web search to conversational, LLM-powered answers is no longer an abstract possibility — it’s already reshaping how people find information, how publishers earn attention, and how businesses capture customers. What used to be a linear path (query → list of links → click → site) is fragmenting into two distinct experiences: AI Overviews that summarize and cite web content directly inside search, and native LLM assistants that answer questions end-to-end inside a chat interface. Both remove friction for users — but they raise new hurdles for businesses that rely on organic traffic. This feature explains what’s changing, why it matters, and exactly how you should change your online presence to survive and thrive in an era when LLMs increasingly stand between users and your website.

Source: Harvard Business Review, “LLMs Are Overtaking Search. Here’s How to Adjust Your Online Presence.”

Background

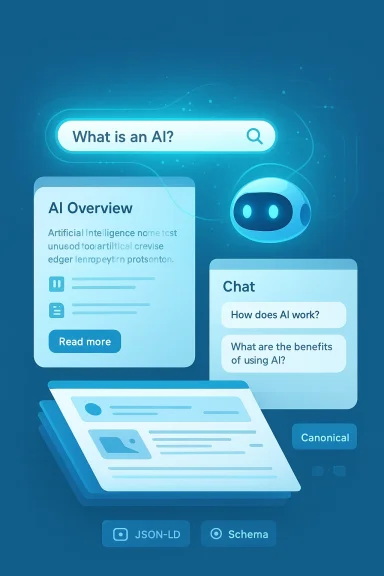

The last three years accelerated a transition that began with featured snippets and knowledge panels: models trained on large datasets and connected to live retrieval systems can synthesize concise answers, attribute sources to varying degrees, and in some cases complete transactions without sending users to publisher sites. Major platform owners have integrated conversational AI into core discovery surfaces, while native LLM products (chat assistants and consumer apps) compete for attention. The result is a bifurcated landscape:

- AI Overviews and search-integrated assistants that use generative models to summarize a small set of indexed sources at the top of the search experience.

- Native LLM assistants (standalone chatbots or integrated platform agents) that may use retrieval-augmented generation (RAG) to return answers backed by the live web or private connectors.

How LLMs Are Rewriting Search

Two distinct but overlapping patterns

- Aggregated answer layers in search results

  - These appear above or within traditional result lists and provide one consolidated answer, often with a small set of cited sources and a short “Read more” button. They compress the discovery funnel by giving users what they need without a click.

- Native, chat-first assistants

  - These are interfaces where users ask follow-up questions and receive iterative responses. They may incorporate commerce actions (book a flight, buy a product) or complete workflows inside the assistant without redirecting to the publisher’s site.

Why this matters for businesses

- Less traffic, not necessarily less value. Click-through visits from search may decline, but visitors who do arrive via AI assistants are often higher intent and convert differently. Some early analyses show AI-referred sessions converting at materially higher rates, but those results are uneven across sectors.

- New gatekeepers. Platforms mediate attention more than ever. Being “selected” as a cited source in an AI overview can replace ranking first on a result page.

- Attribution becomes blurry. Standard analytics that rely on referrer headers and UTM tags were built for link-by-link flows. Conversational answers break these assumptions, making it harder to measure the value of content the way marketers used to.

What the Harvard Business Review takeaway means for you

The argument that “LLMs are overtaking search” captures two practical truths: LLMs reduce discovery friction for users, and businesses must adopt new distribution, measurement, and content strategies. HBR’s framing, that AI helps consumers while adding friction for businesses, is accurate. Where businesses do have levers is in shaping the inputs LLMs use: structured data, reliable signals, and high-quality canonical content increase the odds of being cited and referenced. The challenge is that many of those levers require both technical work and negotiation with platform owners.

Immediate actions (next 30–90 days): tactical triage

These are practical, high-impact steps you can implement quickly to stabilize traffic and start feeding AI-friendly signals.

1. Create clear, answer-first content

- Start each page with a concise, self-contained answer or summary (50–150 words) that directly addresses the principal user intent.

- Use bullet points and short paragraphs to make extraction trivial for RAG systems.

- Add a visible last-updated date and an author line for verifiability.
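These three rules are easy to check automatically before a page ships. The sketch below is a hypothetical pre-publish lint, not a standard tool: it assumes your CMS can hand over the page summary, author, and last-updated date as plain fields (the `PageDraft` shape is invented for illustration) and uses the 50 to 150 word range from the first bullet.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class PageDraft:
    # Hypothetical page shape; map these fields from your own CMS.
    summary: str
    author: Optional[str]
    updated: Optional[date]


def lint_answer_first(page: PageDraft, min_words: int = 50, max_words: int = 150) -> list[str]:
    """Return a list of problems; an empty list means the page passes."""
    problems = []
    word_count = len(page.summary.split())
    if not min_words <= word_count <= max_words:
        problems.append(f"summary has {word_count} words; aim for {min_words}-{max_words}")
    if not page.author:
        problems.append("missing author line")
    if page.updated is None:
        problems.append("missing last-updated date")
    return problems


draft = PageDraft(summary="Too short.", author="Jane Doe", updated=None)
print(lint_answer_first(draft))
# ['summary has 2 words; aim for 50-150', 'missing last-updated date']
```

A word count is only a proxy; it cannot tell whether the summary actually answers the question, so treat a pass as necessary rather than sufficient.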

2. Add or audit structured data everywhere

- Implement JSON-LD schema for Article, FAQPage, Product, Organization, and WebSite where appropriate.

- Ensure schema fields include author, datePublished (and dateModified), image, publisher with logo, and the canonical URL (via url or mainEntityOfPage).

- Use product feeds and structured inventories for commerce sites (price, availability, GTIN).
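As a minimal sketch of the Article markup, assuming pages are rendered server-side so a script tag can be injected into the page head, the helper below builds a schema.org Article object using schema.org property names (`datePublished`, `dateModified`, `mainEntityOfPage`, `publisher.logo`). The function names and every value you pass in are illustrative placeholders.

```python
import json


def article_jsonld(headline, url, author_name, author_url, date_published,
                   date_modified, image_url, publisher_name, logo_url):
    """Build a schema.org Article object as a dict for JSON-LD embedding."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        # mainEntityOfPage ties the markup to the page's canonical URL.
        "mainEntityOfPage": {"@type": "WebPage", "@id": url},
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "datePublished": date_published,  # ISO 8601 string
        "dateModified": date_modified,
        "image": [image_url],
        "publisher": {
            "@type": "Organization",
            "name": publisher_name,
            "logo": {"@type": "ImageObject", "url": logo_url},
        },
    }


def to_script_tag(data):
    """Wrap JSON-LD in a script tag for the page <head>."""
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


print(to_script_tag(article_jsonld(
    "How to write a canonical answer", "https://example.com/guides/canonical-answer",
    "Jane Doe", "https://example.com/authors/jane-doe", "2024-05-01", "2024-05-03",
    "https://example.com/img/cover.png", "Example Co", "https://example.com/logo.png",
)))
```

Validate the output with a structured-data testing tool before rollout; search engines publish their own required and recommended properties, which can be stricter than schema.org itself.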

3. Publish succinct FAQ blocks and “answer cards”

- Add FAQ markup with direct Q→A pairs that mirror natural questions users ask.

- Use “What / How / Why / When” formats. Crisp Q&A pairs are easy for retrieval systems to surface directly.
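The Q→A pairs map directly onto schema.org’s FAQPage type, where each Question carries an acceptedAnswer. A minimal sketch, with placeholder questions and a hypothetical helper name:

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    ("What is an AI Overview?", "A generated summary shown at the top of search results, with cited sources."),
    ("How often is this page updated?", "We review it monthly and date every revision."),
])
print(json.dumps(faq, indent=2))
```

The markup should mirror Q&A text that is visible on the page. Whether a given search engine displays it as a rich result is the engine’s decision, so treat it as a machine-readable signal rather than a guaranteed display feature.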

4. Harden publisher identity signals

- Maintain consistent author profiles (name, bio, credentials) linked to site-wide identity pages.

- Use a prominent About, Contact, Editorial Policy, and Corrections page to establish transparency.

5. Instrument server logs and backend analytics

- Capture raw request logs to detect referrals that don’t surface in traditional analytics.

- Add event tracking for micro-conversions (time on task, scroll depth, clicks) and correlate them with revenue outcomes.
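A rough sketch of the log-side detection, assuming your web server writes the standard Apache/Nginx “combined” log format. The referrer domains and user-agent tokens below are an illustrative, incomplete list; verify current values against each vendor’s documentation before relying on them.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Illustrative lists only; vendors add and rename hosts and agents over time.
AI_REFERRER_DOMAINS = ("chatgpt.com", "perplexity.ai", "gemini.google.com", "copilot.microsoft.com")
AI_AGENT_TOKENS = ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "PerplexityBot", "ClaudeBot")

# Apache/Nginx "combined" log format.
LOG_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\S+) "(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def classify(line):
    """Label a log line: ai_agent, ai_referral, no_referrer, other, or unparsed."""
    m = LOG_RE.match(line)
    if not m:
        return "unparsed"
    agent, referrer = m["agent"], m["referrer"]
    if any(token in agent for token in AI_AGENT_TOKENS):
        return "ai_agent"  # automated fetch by an AI crawler or assistant
    host = urlparse(referrer).hostname or ""
    if any(host == d or host.endswith("." + d) for d in AI_REFERRER_DOMAINS):
        return "ai_referral"  # a human click from an assistant interface
    if referrer in ("", "-"):
        return "no_referrer"
    return "other"


def summarize(lines):
    return Counter(classify(line) for line in lines)


sample = [
    '203.0.113.5 - - [10/May/2024:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "https://chatgpt.com/" "Mozilla/5.0"',
    '198.51.100.7 - - [10/May/2024:10:01:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '192.0.2.9 - - [10/May/2024:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
]
print(summarize(sample))
```

Some assistant apps send no referrer at all, so the no_referrer bucket mixes AI-originated visits with direct traffic; trends in that bucket are more informative than its absolute size.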

Strategic roadmap (3–18 months): reshape content, product, and business models

Longer-term, you’ll need deeper changes to preserve brand reach and revenue.

1. Reorient content architecture for LLM consumption

- Design “canonical answers” for high-value questions — concise, authoritative pages that serve as the primary reference for a topic.

- Build layered content: a short canonical answer + supporting long-form content that provides evidence, examples, and depth.

- Keep canonical answers updated and clearly dated.

2. Offer machine-friendly endpoints and feeds

- Provide an official API or structured data endpoint for entities you want LLMs to cite (e.g., public product catalog, press kit, dataset).

- Maintain a machine-readable changelog and sitemaps with granular update metadata.
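For the sitemap half of this step, the sitemaps.org protocol already supports per-URL update metadata through the `<lastmod>` element. A minimal standard-library sketch, assuming you can list each canonical URL with its last substantive change date; the URLs are placeholders.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for (absolute_url, last_modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # <lastmod> takes W3C Datetime; a plain YYYY-MM-DD date is valid.
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap([
    ("https://example.com/guides/canonical-answer", date(2024, 5, 1)),
    ("https://example.com/products/widget", date(2024, 5, 3)),
]))
```

Only bump `lastmod` when content meaningfully changes; regenerating every date on each deploy makes the signal useless to crawlers.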

3. Negotiate platform relationships

- Explore formal partnerships with AI platforms that provide referral reporting or integration programs.

- Consider licensing content or participating in publisher programs that allow you to monetize AI referrals directly.

4. Rebuild SEO around named-entity authority

- LLMs favor authoritative, entity-rich results. Strengthen signals that prove you own the topic: published research, named authors, primary data, and exclusive assets.

- Use structured profiles for people, products, and organizations so models can map claims to verifiable identities.
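One way to make those identities machine-mappable is schema.org Person and Organization markup with stable `@id` values and `sameAs` links to profiles you control elsewhere. In the sketch below, the `#organization` / `#person` fragment scheme and all example URLs are assumptions about your site, not a requirement of the spec.

```python
import json


def organization_jsonld(name, url, logo_url, same_as):
    """schema.org Organization with sameAs links to external profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "@id": url + "#organization",  # hypothetical stable identifier
        "name": name,
        "url": url,
        "logo": logo_url,
        "sameAs": list(same_as),
    }


def person_jsonld(name, profile_url, job_title, org, same_as):
    """schema.org Person that points at the Organization by its @id."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "@id": profile_url + "#person",
        "name": name,
        "url": profile_url,
        "jobTitle": job_title,
        "worksFor": {"@id": org["@id"]},
        "sameAs": list(same_as),
    }


org = organization_jsonld("Example Co", "https://example.com/", "https://example.com/logo.png",
                          ["https://www.linkedin.com/company/example"])
author = person_jsonld("Jane Doe", "https://example.com/authors/jane-doe", "Senior Editor", org, [])
print(json.dumps([org, author], indent=2))
```

Reusing the same `@id` in every Article’s author and publisher fields lets a parser resolve each claim back to one identity instead of many near-duplicates.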

5. Diversify traffic and monetization

- Reduce dependency on any single discovery surface. Invest in first-party channels: newsletters, native apps, direct APIs, and community platforms.

- Test subscription and licensing models tailored to LLM consumption — e.g., API access to premium datasets or content bundles.

Technical recommendations: how to prepare your site for extraction and trust

LLM-driven systems rely on two capabilities: accurate retrieval and reliable synthesis. Your job is to make retrieval easy and its results trustworthy.

- Use semantic headings (H1–H3) and explicit question headings that mirror user queries.

- Provide succinct, excerpt-ready text near the top of pages. Many retrieval and summarization pipelines weight a page’s opening heavily, so put the core answer within the first 150–300 words.

- Make authoritative claims citable: include inline references (named sources, data points) with context and provenance.

- Publish machine-readable licenses and terms for content reuse. A clear, permissive license may increase the chance that platforms reuse your content with proper attribution; restrictive terms do not prevent scraping, but they do complicate legitimate commercial reuse.

- Add canonical tags and explicit pagination to avoid duplicate content confusion.

- Where appropriate, provide downloadable data (CSV, JSON) and structured attachments that are easier for RAG to ingest than HTML.
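Publishing the same records as CSV and JSON takes only the standard library. In this sketch the rows are placeholder values, and the `source` / `updated` wrapper fields are a hypothetical provenance convention, not a published standard.

```python
import csv
import io
import json

# Placeholder rows; in practice these come from your own database.
ROWS = [
    {"region": "North", "year": 2023, "median_price": 412000},
    {"region": "South", "year": 2023, "median_price": 318500},
]


def to_csv(rows):
    """Serialize a list of dicts to CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def to_json(rows, source_url, updated):
    """Wrap rows with provenance fields so a retriever can see origin and date."""
    return json.dumps({"source": source_url, "updated": updated, "rows": rows}, indent=2)


print(to_csv(ROWS))
print(to_json(ROWS, "https://example.com/data/prices", "2024-05-01"))
```

Link these files from the HTML page that discusses the data, so the narrative and the machine-readable version share one canonical home.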

Content strategy: write for humans, structure for machines

Good content for LLMs is still good content for humans. The difference is emphasis: concise answers plus verifiable depth.

- Lead with the bottom line: put the single-sentence answer up front.

- Then add three supporting bullets: quick facts, one-sentence evidence, and a timestamp.

- Follow with a long-form section that expands on nuance, methodology, and edge cases.

- Use example-rich content and real-world numbers to show provenance and usefulness.

- Publish short, platform-ready summaries that partners or assistants could display in a pinch — but keep your long-form content for deeper engagement.

Measurement and attribution in an AI-first world

Traditional referrer-based analytics will undercount AI-driven interactions. To adapt:

- Use server-side analytics and first-party logging to detect non-referrer visits.

- Monitor changes in conversion funnels, not just pageviews. LLM referrals may produce fewer pageviews but higher downstream conversion per session.

- Implement cohort analysis comparing users who started via AI versus other channels (email, direct, search).

- Request referral and performance reports from platform partners and track API-based referrals.

- Re-evaluate KPIs: engagement depth, assisted conversions, and first-touch lifetime value will matter more than raw traffic counts.
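The cohort comparison can start as a simple aggregation over session records tagged with their first-touch channel. The records below are toy data and the channel labels are assumptions; in practice you would feed in output from your own server-side logging.

```python
from collections import defaultdict

# Toy session records: (first-touch channel, converted?, revenue).
sessions = [
    ("ai_assistant", True, 120.0),
    ("ai_assistant", False, 0.0),
    ("search", True, 80.0),
    ("search", False, 0.0),
    ("search", False, 0.0),
    ("email", True, 60.0),
]


def cohort_metrics(records):
    """Per channel: session count, conversion rate, and revenue per session."""
    totals = defaultdict(lambda: {"sessions": 0, "conversions": 0, "revenue": 0.0})
    for channel, converted, revenue in records:
        t = totals[channel]
        t["sessions"] += 1
        t["conversions"] += int(converted)
        t["revenue"] += revenue
    return {
        channel: {
            "sessions": t["sessions"],
            "conversion_rate": t["conversions"] / t["sessions"],
            "revenue_per_session": t["revenue"] / t["sessions"],
        }
        for channel, t in totals.items()
    }


for channel, m in cohort_metrics(sessions).items():
    print(channel, m)
```

Small cohorts produce noisy rates, so add confidence intervals (or wait for enough sessions) before concluding that one channel converts better than another.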

Monetization and product design: capture value inside the assistant

As platforms enable in-assistant transactions, businesses have four basic strategies:

- Enable in-assistant fulfillment: Build connectors that let the assistant complete purchases or bookings on your behalf, while preserving brand integrity and conversion credit.

- License premium content: Offer paid data feeds or premium content endpoints to AI platforms in exchange for attribution and revenue share.

- Offer micro-services: Expose micro-APIs (availability checks, price quotes) that LLMs can call to resolve queries without sending users away.

- Leverage hybrid gating: Provide a short, crawlable summary for free and reserve premium depth for logged-in or paying users, while offering a lightweight API for verification.
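A micro-API of the kind described above can be very small. This sketch uses only Python’s built-in `http.server` and a hypothetical in-memory inventory; the `/availability?sku=` route, field names, and SKU are invented for illustration, and a production service would add authentication, rate limiting, and caching.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import parse_qs, urlparse

# Hypothetical inventory; a real service would query your stock system.
INVENTORY = {"SKU-123": {"in_stock": 4, "price": 19.99, "currency": "USD"}}


def availability(sku):
    """Return a small JSON-serializable answer for one SKU, or None if unknown."""
    item = INVENTORY.get(sku)
    if item is None:
        return None
    return {"sku": sku, "available": item["in_stock"] > 0, **item}


class AvailabilityHandler(BaseHTTPRequestHandler):
    # Serves GET /availability?sku=SKU-123 (a hypothetical route).
    def do_GET(self):
        parsed = urlparse(self.path)
        sku = parse_qs(parsed.query).get("sku", [""])[0]
        result = availability(sku) if parsed.path == "/availability" else None
        status = 200 if result else 404
        body = json.dumps(result or {"error": "not found"}).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


# To serve locally:
# HTTPServer(("127.0.0.1", 8000), AvailabilityHandler).serve_forever()
```

Keeping the lookup in a plain function separate from the HTTP handler makes the answer logic easy to test and to reuse behind whatever connector format a platform partner requires.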

Risks and dangers — and how to defend against them

LLM integration brings measurable benefits but also systemic and brand-level dangers.

Retrieval collapse and content pollution

- As more AI-generated content proliferates, the retrieval layer that powers LLM answers risks amplifying low-quality or self-referential content. This “retrieval collapse” can erode source diversity and promote poor answers.

- Defenses: maintain high-quality canonical content, publish data that stands out (unique datasets), and encourage trusted upstream citations.

Brand dilution and misinformation

- AI assistants may paraphrase or misattribute content, creating inaccuracies. Make your site the clearest, most verifiable source for your assertions: use author credentials, data sources, and images with captions that establish provenance.

Legal and policy risk

- Platforms’ reuse of content raises copyright and licensing questions. Evaluate legal strategies: updated terms of service, explicit machine-use licenses, and participation in industry-wide standards for attribution.

Technical scraping and model training

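- Robots.txt and traditional crawler controls map only partly onto LLM training and retrieval pipelines. Some AI vendors publish dedicated robots.txt tokens (for example, OpenAI’s GPTBot and Google’s Google-Extended), but coverage is uneven and compliance is voluntary. Consider machine-readable licenses, robots meta tags, and structured disclaimers. Engage in policy conversations with platforms and industry bodies to shape norms and tools for respecting publisher intent.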
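Before deploying a robots.txt policy aimed at AI crawlers, you can check how a standards-following parser reads it with Python’s `urllib.robotparser`. The policy below is only an example: it blocks GPTBot and the Google-Extended control token (which governs AI use rather than naming a separate crawler) while allowing everything else. Confirm current token names with each vendor first.

```python
from urllib.robotparser import RobotFileParser

# Example policy only; robots.txt is a request, not an enforcement mechanism.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for agent in ("GPTBot", "Google-Extended", "Googlebot"):
    print(agent, parser.can_fetch(agent, "https://example.com/guides/answer"))
# GPTBot False
# Google-Extended False
# Googlebot True
```

Blocking training crawlers can also reduce how often your pages are retrieved and cited, so weigh each token against the visibility goals described earlier.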

Measurement blindspots

- If AI referrals replace visits, revenue attribution models may break down. Redesign experiments and A/B tests so they measure downstream value independently of pageviews.

What to say to executives: three succinct messages

- This is not a fad — it’s an architectural shift. Expect sustained change in how discovery works; some industries will see large falls in organic pageviews, others will see higher conversion quality.

- Act now with pragmatic engineering and content moves. The fastest wins are structured data, canonical answers, and server-side analytics.

- Diversify downstream value capture. Don’t rely solely on organic clicks. Build first-party channels, APIs, and partnership strategies.

Checklist: a practical, prioritized action list

- Publish a one-line canonical answer on every key page.

- Add JSON-LD schema for Article, FAQPage, Product, Organization.

- Implement first-party server logging and event-based conversion tracking.

- Create machine-readable content feeds (sitemaps, product feeds, press kits).

- Strengthen author and publisher identity pages with credentials and timestamps.

- Build short-form summaries for AI consumption and long-form pages that justify clicks.

- Test paywall/hybrid gating with API access for partners.

- Reach out to platform partner programs for reporting and integration opportunities.

- Establish internal metric set prioritizing conversion lift and lifetime value, not raw pageviews.

- Participate in industry efforts to define attribution and licensing norms.

Final analysis: why some businesses will win and others will lose

Businesses that adapt early will treat LLMs as a new distribution channel and a new trust interface. Winners will:

- Build verifiable, canonical answers and expose machine-friendly endpoints.

- Capture value with API-based offerings and partnerships rather than relying only on click-through monetization.

- Upgrade analytics to measure the true contribution of AI-driven interactions.

There are also broader societal trade-offs to consider: a web dominated by synthesized answers can improve user speed and reduce cognitive load, but it can also concentrate power in platform curators and make independent verification harder. That’s why technical adaptation must be paired with industry collaboration on attribution, transparency, and standards.

Conclusion

The rise of LLM-influenced search is not merely a tweak to ranking algorithms; it’s a change to the very topology of the web’s attention economy. Your immediate priorities are technical and editorial: make your content extractable, verifiable, and valuable; instrument your systems to measure real business outcomes; and explore direct integration or licensing paths with platforms. Over time, the businesses that combine tight, machine-friendly canonical answers with rich, exclusive assets and diversified monetization will control the new forms of online discovery. Start by publishing answer-first pages, adding structured data, and rethinking KPIs; those three steps will give you the defensive posture and the offensive advantage needed to thrive when LLMs increasingly stand between users and your content.