Thurrott readers are reporting a familiar — and increasingly visible — set of problems with the site’s commenting layer after Thurrott integrated the OpenWeb commenting platform: slow or missing comment loads, inconsistent notifications, apparent moderation opacity, and sporadic UI breakage that together undermine the value of site conversation and reader engagement.

Source: Thurrott.com Issues with OpenWeb and comments.

Background

OpenWeb is a commercial commenting and community-engagement platform that sells moderation, analytics, and audience tools to publishers. Over the last few years it has promoted AI-powered moderation (Aida), publisher policy tooling, and deeper engagement features intended to replace legacy comment systems and reduce spam. OpenWeb’s public materials describe improved moderation accuracy and publisher controls, while its recent policy updates emphasize adopting machine learning for content safety and newly consolidated moderation standards.

Thurrott migrated its commenting infrastructure to OpenWeb to modernize comments, reduce spam, and gain new moderation controls. The site’s editorial team has been publicly candid about the migration’s trade-offs, writing about the moderation controls available in the OpenWeb admin console and promising transparency about how comments are handled. Paul Thurrott summarized moderation settings, described “Clarity mode” (a transparency-forward approach), and explicitly rejected a shadow-ban feature OpenWeb calls “Bozo mode.”

Despite those steps, a thread in the Thurrott community (and parallel reports on other platforms) shows readers experiencing persistent problems: comment panels failing to load, delays in comment rendering, broken notification links, unexplained rejections, and occasional inability to post images or follow threads. Those issues appear to affect multiple browsers and operating systems, and users describe them as intermittent but frequent enough to degrade daily use.

What users are actually seeing (symptoms and patterns)

Slow or missing comment loads

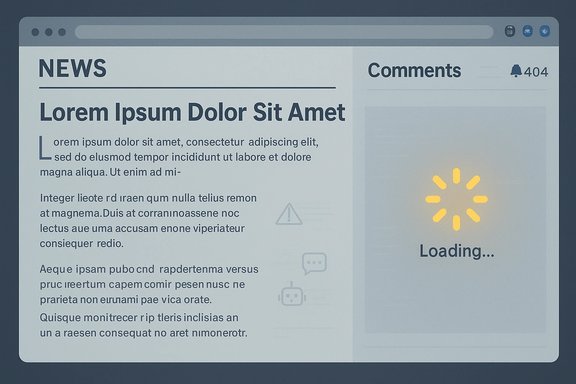

Many readers report that the comment box or the full comment list either loads late (after the article content) or not at all. That is not a cosmetic problem: when comments don’t load, threads, context, and ongoing conversations become invisible to most visitors, reducing time-on-page and community value. This behavior has been reported across browsers and devices, making a local extension or single-browser bug less likely as the only cause.

Broken notifications and “404s”

Users report notification links that either return a 404 error or take them to the article body rather than the specific comment. Notifications that fail to resolve to the relevant comment break conversational continuity and frustrate readers trying to follow replies. Thurrott staff previously acknowledged related notification work and noted complex interactions across site, CDN, and OpenWeb components during earlier integration phases.

Moderation opacity and perceived unfairness

Complaints range from “my comment disappeared” to “my posts are visible only to me” (a classic shadow-ban symptom). Thurrott’s editor explicitly stated that the site does not use OpenWeb’s “Bozo mode,” preferring a “Clarity mode” that shows comment status to the author, but readers still report rejections, inconsistent approvals, and opaque review processes. Across the web, OpenWeb users on other publishers’ sites have lodged similar complaints about rejections and appeals.

UI/UX failures: focus jumps, input loss, and image posting blocks

Beyond loading and moderation, users mention intermittent loss of focus while typing (cursor exits the textarea), inability to embed images without prior permissions, and UI elements that do not behave reliably across browsers. These issues are reported on both desktop and mobile clients. Community troubleshooting threads suggest a mix of client-side and server-side contributors, but the precise trigger(s) remain disputed among affected users.

Why this matters: engagement, trust, and the economics of commenting

A functioning comment layer is more than ornamentation; it multiplies engagement, extends article lifespan, and produces user-generated content that increases return visits. Publishers monetizing attention and building communities rely on reliable comment visibility, consistent notifications, and fair, explainable moderation.

- User retention risk: when comments don’t load or users can’t trust the moderation process, habitual commenters leave and lurkers disengage.

- Reputational risk: perceived or actual unfair moderation can damage the publisher’s credibility and fuel negative word-of-mouth.

- Operational cost: opaque or over-aggressive moderation increases support burdens as readers file appeals and complaints that editors must triage.

OpenWeb’s side of the story: product claims and company context

OpenWeb markets itself as a platform that both scales moderation and increases engagement. Its product messaging highlights AI-driven moderation, community tools, and publisher control panels that allow tuning of civility, toxicity, profanity, and other filters. OpenWeb has published policy updates outlining its approach to moderation and how Aida is being rolled out to partners. Those documents reinforce that moderation is configurable and that the company is attempting to balance safety and harm reduction with publisher autonomy.

The company itself has experienced high-profile governance turbulence, which can indirectly affect product stability and customer support during periods of executive turnover and legal friction. That instability, visible in media coverage of leadership disputes, is relevant because it can slow roadmap progress or strain trust-and-safety capacity during transitions. OpenWeb has since made public commitments to improved policy and enforcement structures.

Cross-check: are these issues unique to Thurrott?

No. Multiple publishers that use the same third-party commenting technology have fielded comparable complaints: notifications that don’t link correctly, comments that appear only to the author (or appear and disappear intermittently), and frequent support tickets about rejected comments with scant explanation. Independent review sites and social platforms show consistent user frustration with OpenWeb moderation and UX in recent years. That pattern suggests the problems are not purely Thurrott-specific configuration issues, although local integration choices, CDN behavior, rate limits, and publisher moderation settings play a decisive role in outcomes.

Historical context: forum platforms and site comment systems have long wrestled with similar symptoms (post body truncation, line-feed loss, image upload problems, and asynchronous UI glitches). Archived discussions from other long-running tech communities show that integrating new commenting or forum code, especially alongside ad blockers, privacy extensions, or strict browser privacy settings, often exposes edge-case behaviors. Those historical patterns reinforce that the interaction between client environment, CDN, and server-side moderation logic is complex and can produce intermittent failures.

Technical root causes: plausible vectors to investigate

While a full root-cause analysis requires developer-level logs and a forensic timeline, the patterns described by readers point to a small set of probable contributors:

- Client-side extensions and privacy tooling. Ad blockers, script blockers, and privacy-focused browsers (Brave, Firefox with strict tracking protection, or heavy uBlock/Privacy Badger setups) can block third-party scripts, analytics beacons, or API endpoints used to fetch comments. That can cause comments to fail silently or load only when certain requests succeed. Many early reports reference ad blockers or VPNs as potential correlates.

- Third-party CDN or resource timing. If comment assets load from an external domain with strict CORS or an intermittent CDN, the page may render content while the comment iframe or XHR is still pending. Slow or failed resource loads can cause comment modules to initialize improperly. Past operations notes on similar platforms suggest Cloudflare or CDN interactions historically introduced notification and asset-fetch issues for other publishers.

- Moderation engine latency or configuration. If moderation is set to “approval required” for links, images, or certain triggers, comments can be held in pending states until reviewed. Machine learning classifiers may also flag content for review, creating apparent “invisibility” to other users until a moderator clears the content. Misconfigured thresholds or incorrect taxonomy mapping can over-block legitimate comments.

- Account/identity verification and rate-limiting. Use of VPNs, shared IP pools, or suspicious rate patterns can trigger anti-bot heuristics. When accounts are flagged, platforms may silently restrict visibility or require additional verification steps, which can look like censorship from the user’s perspective.

- UI event-handling bugs. Reports of focus loss and cursor jumps while typing indicate client-side JavaScript timers or event handlers that re-render the textarea, steal focus, or perform frequent DOM operations. These bugs may be superficial in origin, but they can completely disrupt the experience.
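The “pending” state in the moderation bullet above explains why a held comment can look like a shadow ban. The sketch below is a hypothetical visibility model, not OpenWeb’s actual logic: the `Status` values, `visible_to`, and the `author_label` wording are all assumptions. It shows that a comment awaiting review is visible to its author and to moderators but to no one else, and that an author-facing status label (the Clarity-style approach) is what separates “held for review” from silent suppression.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"    # held by an approval rule or a classifier flag
    APPROVED = "approved"  # published to everyone
    REJECTED = "rejected"  # not published


@dataclass
class Comment:
    author_id: str
    text: str
    status: Status


def visible_to(comment: Comment, viewer_id: str, is_moderator: bool = False) -> bool:
    """Return True if the viewer should see this comment in the thread."""
    if is_moderator:
        return True  # moderators see the review queue too
    if comment.status is Status.APPROVED:
        return True  # approved comments are public
    # Pending or rejected: only the author sees it. Without a status label,
    # this looks exactly like a shadow ban from the author's side.
    return comment.author_id == viewer_id


def author_label(comment: Comment) -> str:
    """Clarity-style status text shown only to the author (hypothetical wording)."""
    labels = {
        Status.PENDING: "Awaiting review",
        Status.APPROVED: "Published",
        Status.REJECTED: "Not published",
    }
    return labels[comment.status]
```

For a pending comment by "alice", `visible_to(c, "alice")` is `True` while `visible_to(c, "bob")` is `False`; only the label tells Alice that her comment is queued rather than hidden.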
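The VPN and shared-IP point in the rate-limiting bullet can be illustrated with a generic sliding-window limiter keyed by IP address. This is a hypothetical sketch; OpenWeb’s real anti-abuse heuristics are not public, and the class name, limit, and window are assumptions. Because the key is the IP rather than the account, several readers behind one VPN exit address share a single budget.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` events per `window` seconds for each key (here, an IP)."""

    def __init__(self, limit: int, window: float) -> None:
        self.limit = limit
        self.window = window
        self._events = defaultdict(deque)  # key -> timestamps of accepted events

    def allow(self, key: str, now: float) -> bool:
        events = self._events[key]
        # Forget events that have aged out of the window.
        while events and now - events[0] >= self.window:
            events.popleft()
        if len(events) >= self.limit:
            return False  # over budget: a platform might block, hide, or challenge
        events.append(now)
        return True
```

With `limit=5` and `window=60.0`, six posts from one exit IP within a minute leave the sixth rejected, even if they came from three different readers posting twice each.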

Strengths worth acknowledging

- Better moderation tooling at scale. OpenWeb’s platform provides publishers with filters for civility, toxicity, and targeted rule sets that can drastically reduce spam and abusive behavior when tuned correctly. For many publishers, that capability is transformative and reduces human moderation load.

- Transparency efforts by publishers. Thurrott’s editorial team has been unusually transparent about moderation settings, not using shadow bans, and attempting to merge community guidelines with platform policy to reduce confusion. That level of disclosure is rare and constructive.

- Active company policy evolution. OpenWeb’s public policy updates and the rollout of Aida show that the company is iterating on moderation approaches and publicly committing to publisher standards and enforcement improvements. Those public-facing commitments create a pathway for measurable improvement.

Critical concerns and risks

- Perception of censorship and fairness. Even accurate enforcement can feel arbitrary without transparent appeals, clear notification, and visible moderation logs. Perception matters: readers who feel unfairly moderated are more likely to publicize grievances and abandon engagement.

- Vendor risks during governance turbulence. Company leadership disputes or slowdowns in trust-and-safety staffing can impair support responsiveness. High-profile executive drama can distract from product stability and customer support capacity.

- Over-reliance on opaque ML decisions. LLM- or classifier-driven moderation can create false positives that hide legitimate content. If publishers treat automation as a black box, they risk eroding community trust.

- Technical coupling to third parties. Relying on an external commenting provider increases exposure to CDN latency, cross-origin script blocking, and third-party outages beyond the publisher’s direct control.

Practical recommendations for Thurrott and similar publishers

- Publish a simple, public moderation dashboard or log.
  - Show aggregated counts of pending approvals, automated rejections, appeal outcomes, and average human review times. Even basic transparency (numbers and trends) reduces suspicion.

- Improve in-product messaging for authors.
  - When a comment is held or rejected, provide an immediate, human-readable reason and an avenue to request review. Avoid opaque “rejected” email messages with no next steps.

- Offer a safe, one-click diagnostic and bug-report tool for readers.
  - A “report a comment bug” workflow that auto-collects device, browser, and console logs (with user consent) will greatly reduce back-and-forth and speed up triage.

- Create a lightweight “recovery” path for comment visibility issues.
  - If comments fail to load, provide a cached or static fallback that shows the last 20 known comments, so the thread does not appear empty.

- Tune ML thresholds in stages and publish change notes.
  - When modifying classifier thresholds (e.g., profanity, toxicity), publish a short changelog describing the change and its expected impact so the community understands spikes in moderation.

- Engage a small community moderation cohort.
  - Add trusted readers as community moderators with limited powers and clear rules. This distributes load and demonstrates local accountability. Thurrott has noted interest in such roles, and its OpenWeb console offers the technical capability to enable them responsibly.

- Improve the appeals SLA.
  - Commit to explicit timelines for human review of appeals (e.g., 48–72 hours) and surface those SLAs publicly.
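A first version of the public moderation dashboard can be little more than a tally over moderation events. In this sketch the `ModerationEvent` record and its outcome strings are hypothetical; a real export from the OpenWeb console may be structured differently.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class ModerationEvent:
    # Hypothetical outcome labels, e.g. "pending", "auto_rejected",
    # "approved", "appeal_upheld", "appeal_overturned".
    outcome: str
    review_hours: Optional[float] = None  # set only when a human reviewed it


def dashboard_summary(events: list[ModerationEvent]) -> dict:
    """Aggregate counts per outcome plus the average human review time."""
    counts = Counter(e.outcome for e in events)
    reviewed = [e.review_hours for e in events if e.review_hours is not None]
    return {
        "counts": dict(counts),
        "avg_review_hours": round(mean(reviewed), 1) if reviewed else None,
    }
```

Publishing only these aggregates (never individual comments) keeps the dashboard privacy-safe while still showing trends such as a jump in automated rejections after a threshold change.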
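The cached-fallback recommendation pairs naturally with the CDN-timing concern raised earlier: if the live comment request stalls or is blocked, render the last known comments instead of an empty panel. The sketch assumes a hypothetical async `fetch` callable that returns comment strings and raises an `OSError` subclass on network failure; the 3-second timeout and 20-comment cache size are illustrative, not values from Thurrott or OpenWeb.

```python
import asyncio
from collections import deque
from typing import Awaitable, Callable


class CommentFallbackCache:
    """Remember the newest `size` comments from the last successful load."""

    def __init__(self, size: int = 20) -> None:
        self._recent = deque(maxlen=size)

    def update(self, comments: list[str]) -> None:
        self._recent.clear()
        self._recent.extend(comments)  # maxlen drops the oldest overflow

    def snapshot(self) -> list[str]:
        return list(self._recent)


async def load_comments(
    fetch: Callable[[], Awaitable[list[str]]],
    cache: CommentFallbackCache,
    timeout_s: float = 3.0,
) -> tuple[list[str], bool]:
    """Return (comments, is_live); fall back to the cache on timeout or network error."""
    try:
        comments = await asyncio.wait_for(fetch(), timeout=timeout_s)
    except (asyncio.TimeoutError, OSError):
        # Stalled CDN request, blocked endpoint, connection reset, etc.
        return cache.snapshot(), False
    cache.update(comments)
    return comments, True
```

Returning `is_live` lets the page label cached comments as possibly stale rather than presenting them as current.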
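A published appeals SLA is easier to keep honest if breaches are computed rather than estimated. This sketch flags appeals whose resolution time (or, for still-open appeals, the time elapsed so far) exceeds 72 hours, the upper end of the range suggested above; the tuple layout and function name are assumptions for illustration.

```python
from datetime import datetime, timedelta
from typing import Iterable, List, Optional, Tuple

APPEAL_SLA = timedelta(hours=72)  # upper end of the 48-72 hour example


def breached_appeals(
    appeals: Iterable[Tuple[str, datetime, Optional[datetime]]],
    now: datetime,
    sla: timedelta = APPEAL_SLA,
) -> List[str]:
    """Return IDs of appeals that took (or have been open) longer than the SLA."""
    late = []
    for appeal_id, filed_at, resolved_at in appeals:
        # Open appeals are measured up to the current time.
        finished = resolved_at if resolved_at is not None else now
        if finished - filed_at > sla:
            late.append(appeal_id)
    return late
```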

For readers: how to troubleshoot your side

- Temporarily whitelist the site or disable privacy extensions (for testing) to identify whether client-side blockers cause comment-loading issues.

- Switch browsers or try an incognito window to check for extension conflicts.

- If you use a VPN, test without it briefly to see if IP heuristics are affecting visibility.

- When reporting a problem, include the browser, version, OS, whether you used a VPN, and steps to reproduce — that information materially helps debugging.
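The details in the checklist above can be captured as a structured report so nothing has to be requested in follow-up. This is a hypothetical format, not a Thurrott or OpenWeb form: the field names simply mirror the checklist (browser, version, OS, VPN use, steps to reproduce), and `missing_fields` lists what a triager would still need to ask about.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class CommentBugReport:
    browser: str = ""
    browser_version: str = ""
    os: str = ""
    used_vpn: Optional[bool] = None  # None means the reporter didn't say
    steps_to_reproduce: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of fields a triager would have to ask about."""
        missing = [
            name
            for name in ("browser", "browser_version", "os")
            if not getattr(self, name).strip()
        ]
        if self.used_vpn is None:
            missing.append("used_vpn")
        if not self.steps_to_reproduce:
            missing.append("steps_to_reproduce")
        return missing

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)
```

Treating "did you use a VPN?" as unknown rather than defaulting to `False` matters here, since VPN use is one of the suspected contributors to visibility problems.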

What remains unverified and where to watch for confirmation

Several technical assertions remain speculative without access to server logs and system telemetry: the exact role of CDN timing vs. moderation latency vs. client-side script blocking in any particular incident needs engineering validation. Public indications and user reports point to a mix of causes, but a definitive root cause requires a coordinated incident review by Thurrott’s engineers and OpenWeb’s support team. Until then, treat precise blame assignments with caution.

Conclusion

Thurrott’s OpenWeb integration illustrates a common modern trade-off: third-party commenting platforms bring powerful moderation tools and operational scale, but they also introduce new failure modes, from third-party script loading and CDN interactions to opaque AI moderation decisions. Thurrott’s editorial transparency and stated commitment to Clarity mode are positive steps, but readers’ persistent reports of slow loads, broken notifications, and unexplained moderation actions show there’s work left to do.

For publishers and platform vendors alike, the imperative is clear: invest not only in automation that reduces spam but also in visible, accountable moderation processes and robust engineering to reduce UI fragility. For readers, pragmatic troubleshooting (disabling blockers, testing without VPN) and clear, evidence-rich bug reports will accelerate fixes. If the goal is a thriving comment ecosystem, one that boosts engagement, trust, and time-on-site, then reliability, fairness, and clear communication must be treated as product features, not afterthoughts.
