The advice industry is at an inflection point: firms are pouring resources into digital advice engines and AI-driven tools, yet multiple surveys show a notable majority of investors still want a human adviser involved in their financial life — a gap that is reshaping product roadmaps, adviser economics, and regulatory priorities.

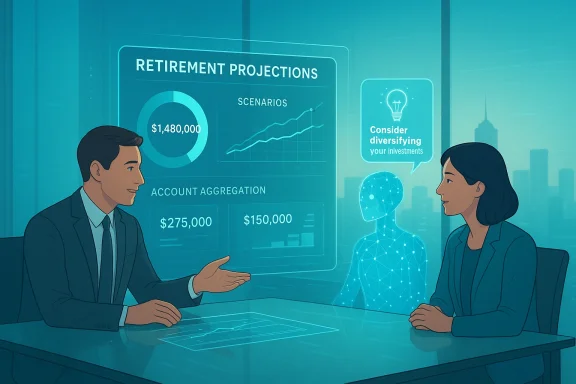

Background

The shift from simple robo‑advisers to full‑featured digital advice platforms has accelerated in the last three years. Early robo models focused on automated portfolio allocation; today’s digital advice platforms claim to deliver comprehensive strategy, personalised retirement pathways, and smooth handoffs to human advisers when needed. Vendors and platform teams increasingly present digital advice as a way to scale advice delivery and reduce the per‑client cost of service while preserving, or even enhancing, outcomes for retail clients.

At the same time, independent research houses and professional bodies continue to track investor sentiment and channel preferences. Those data points matter because they determine where firms should invest: front‑end client interfaces, adviser workflows, or regulatory and governance frameworks that keep AI and automation safe, explainable, and compliant. Recent releases from Cerulli Associates and Chartered Accountants ANZ are among the clearest signals yet that the market is embracing digital tools, but not at the expense of human judgement.

What the hard data says

Preference for human advisers remains strong across cohorts

Cerulli Associates’ latest U.S. Advisor Edition finds that, despite rising use of online investor tools, only a meaningful minority of investors prefer online‑only advice; across most age cohorts, the majority still want a human adviser involved. The report highlights that only 25% of investors in their 50s and a mere 9% of investors in their 70s prefer an online‑only investment adviser. Even among households that treat online goal‑tracking tools as essential, only 36% prefer online‑only advice, while 46% still prefer having a human adviser involved. These findings were released as part of the Cerulli Edge 1Q 2026 issue.

Those figures matter for how firms design digital offers. If older and mid‑career investors, who hold the lion’s share of investable assets, remain adviser‑centric, platforms that automate away the human relationship risk missing the higher‑value client segment entirely. Cerulli’s analysts also stress that a strong digital portal and account‑aggregation features complement an adviser relationship rather than substitute for it.

Rapid adoption of free AI tools by retail investors — especially younger cohorts

In Australia, a recent Chartered Accountants ANZ (CA ANZ) retail investor survey shows almost half of retail investors are already using AI tools such as ChatGPT or Microsoft Copilot to inform investment decisions. The CA ANZ preliminary results found that 48% of surveyed investors with more than $10,000 invested reported using AI platforms for investment guidance, and 81% of those users were at least somewhat satisfied with the information they received. Gen Z (18–29) investors were the most active adopters, with 78% saying they had used AI for financial or investing advice. The survey polled around 1,000 Australian investors.

This pattern (high use of low‑cost AI among younger, less affluent cohorts) suggests two simultaneous trends: digital tools are democratising access to financial ideas, and they are also exposing many retail investors to potentially unvetted guidance. That dynamic explains why industry professionals are both excited about scale and worried about misinformation and liability.
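
Headline percentages are easier to interpret as rough headcounts. The minimal Python sketch below converts the reported CA ANZ shares into approximate respondent counts and a conventional 95% margin of error. It assumes exactly 1,000 respondents (the source only says "around 1,000") and treats the panel as a simple random sample, which an online investor survey may not be; both are illustrative assumptions, not details of the survey's methodology.

```python
import math

# Assumption: exactly 1,000 respondents (the survey is described as "around 1,000").
SAMPLE_SIZE = 1_000
AI_USER_SHARE = 0.48    # share of surveyed investors who reported using AI tools
SATISFIED_SHARE = 0.81  # share of those AI users who were at least somewhat satisfied


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Normal-approximation margin of error for a proportion p estimated from n
    respondents, assuming a simple random sample (z = 1.96 for ~95% confidence)."""
    return z * math.sqrt(p * (1 - p) / n)


ai_users = round(SAMPLE_SIZE * AI_USER_SHARE)
satisfied_users = round(ai_users * SATISFIED_SHARE)
moe_usage = margin_of_error(AI_USER_SHARE, SAMPLE_SIZE)
# Satisfaction was measured only among AI users, so its base is the smaller group.
moe_satisfaction = margin_of_error(SATISFIED_SHARE, ai_users)

print(f"Approximate AI users: {ai_users}")
print(f"Approximate satisfied AI users: {satisfied_users}")
print(f"Usage: 48% +/- {moe_usage * 100:.1f} percentage points")
print(f"Satisfaction: 81% +/- {moe_satisfaction * 100:.1f} percentage points")
```

Under these assumptions, roughly 480 respondents used AI and about 389 of them were satisfied. The usage estimate carries a margin of roughly ±3.1 points. The satisfaction estimate is drawn from the smaller group of AI users, so its margin is wider at roughly ±3.5 points. Weighting or panel design in the actual survey could change these figures.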

Why human advisers still matter

Trust, nuance, and complexity

The data backs what frontline advisers have long argued: trust and human judgement are hard to automate. Cerulli points to affluent investors’ desire for a trained professional who can interpret a financial plan, adjust for life circumstances, and act as a sounding board when market swings or family events prompt emotionally charged decisions. Those qualitative values (trust, reassurance, accountability) persist even when clients use digital dashboards daily.

Fee transparency and perception of value

Costs and how clients pay for advice remain sticking points. Cerulli research has repeatedly flagged cost transparency as a driver of client onboarding and retention decisions; unclear fee models can make prospective clients hesitate to move from DIY to advised relationships. In practice, digital tools can lower delivery costs, but advisers still need to clearly communicate the value‑add they provide beyond a portfolio algorithm.

Data completeness and “held‑away” assets

Advisers also retain value because they can access and integrate complex, held‑away assets and tax considerations that many digital tools cannot reliably consolidate in every jurisdiction. Cerulli notes that account aggregation is widely regarded as essential by affluent investors; the tool is valuable when paired with adviser insight that translates aggregated data into actionable strategy.

How digital advice is actually being deployed

From robo to hybrid to adviser‑enabled workflows

Digital advice vendors and product teams are pitching a hybrid model: automation for routine, rule‑based decisions and human escalation for complex or emotionally charged issues. DASH, for example, positions modern digital advice as an engine that uses advanced algorithms to deliver quality strategic pathways and to triage effectively to an adviser when human judgement is required. DASH highlights retirement planning as an area where digital tools can simplify the math, showing clients what income they can sustainably draw and where gaps exist, while opening an easy path to full, personalised advice if the client needs it.

Key digital features being rolled out across platforms include:

- Account and balance aggregation to create a single financial picture for clients.

- Scenario modelling for retirement, cashflow, and tax outcomes.

- Guided questionnaires and nudges to standardise data capture for SOAs (statements of advice).

- AI‑assisted content generation for client communications and meeting notes.

- Human‑in‑the‑loop workflows that route complex cases to advisers.
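
Account aggregation is conceptually simple: balances from several providers are rolled up into one household view. The Python sketch below illustrates that roll-up. The `Account` record, the provider names, and the held-away flag are hypothetical stand-ins. A production system would also need data feeds, reconciliation, and currency handling, which are out of scope here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    provider: str      # hypothetical institution name
    asset_class: str   # e.g. "cash", "equities", "super"
    balance: float
    held_away: bool    # True if the account is not administered on the adviser's own platform


def aggregate(accounts: list[Account]) -> dict:
    """Roll individual account balances up into a single household view."""
    by_class: dict[str, float] = defaultdict(float)
    held_away_total = 0.0
    for acct in accounts:
        by_class[acct.asset_class] += acct.balance
        if acct.held_away:
            held_away_total += acct.balance
    total = sum(by_class.values())
    return {
        "total": total,
        "by_asset_class": dict(by_class),
        "held_away_share": held_away_total / total if total else 0.0,
    }


accounts = [
    Account("Platform A", "equities", 120_000.0, held_away=False),
    Account("Bank B", "cash", 30_000.0, held_away=True),
    Account("Super fund C", "super", 250_000.0, held_away=True),
]
summary = aggregate(accounts)
print(summary)
```

In this invented example, 70% of the household's assets are held away. That illustrates why a consolidated view matters before anyone, human or software, proposes a strategy.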

Use cases where automation delivers clear value

Digital tooling is especially effective for:

- Scaling low‑to‑moderate complexity advice (e.g., basic retirement drawdown plans).

- Pre‑meeting data collection and scenario visualisation to make adviser time more productive.

- Back‑office tasks: document generation, compliance checks, and meeting notes.
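
For a basic retirement drawdown plan, the core arithmetic is a level-payment (annuity) calculation. The annual income W that runs a balance B down to zero over n years at a fixed annual return r is W = B × r / (1 − (1 + r)^(−n)), with withdrawals taken at the end of each year. The Python sketch below applies that formula and checks it with a year-by-year projection. The balance, return, horizon, and target income are illustrative assumptions. The model ignores inflation, fees, tax, market volatility, and longevity risk, all of which a real digital advice engine would need to handle.

```python
def sustainable_annual_income(balance: float, annual_return: float, years: int) -> float:
    """Level end-of-year withdrawal that exhausts `balance` after `years` years,
    assuming a constant `annual_return` (ordinary annuity formula)."""
    if annual_return == 0:
        return balance / years
    return balance * annual_return / (1 - (1 + annual_return) ** -years)


def project_balances(balance: float, annual_return: float, years: int, withdrawal: float) -> list[float]:
    """Year-end balances: apply the return, then take the withdrawal."""
    path = []
    for _ in range(years):
        balance = balance * (1 + annual_return) - withdrawal
        path.append(balance)
    return path


# Illustrative inputs only.
starting_balance = 500_000.0
assumed_return = 0.05
horizon_years = 25
target_income = 40_000.0

income = sustainable_annual_income(starting_balance, assumed_return, horizon_years)
gap = max(0.0, target_income - income)
path = project_balances(starting_balance, assumed_return, horizon_years, income)

print(f"Sustainable income: {income:,.0f} per year")
print(f"Shortfall against target: {gap:,.0f} per year")
print(f"Balance after {horizon_years} years: {path[-1]:,.2f}")
```

With these inputs the sketch suggests an income of about 35,476 a year and a shortfall of about 4,524 against the 40,000 target, with the projected balance reaching approximately zero in the final year. This is the kind of gap a digital tool can surface before routing a client to an adviser.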

Vendor case studies and industry commentary point to operational gains in time‑to‑deliver and client throughput, but those gains depend on disciplined governance and measured pilots.

Business implications for advisories and platforms

Adviser economics and scalability

The traditional model caps advisers at roughly 85–150 clients, depending on the complexity of the book. Digital advice promises to increase that headroom dramatically by automating low‑value tasks and delivering “light‑touch” advice to segmented client groups. That can unlock new revenue from younger clients and the children of existing clients, but it requires reworking pricing and service tiers so advisers keep the capacity to serve high‑net‑worth and complex households. Vendor and industry analyses make a strong case that technology should expand an adviser’s reach rather than remove the adviser.

Product and go‑to‑market choices

Firms face trade‑offs among three broad approaches:

- Build a pure digital channel aimed at scale and low fees.

- Create a hybrid layer where digital tools feed advisers and trigger escalation.

- Integrate digital into the adviser’s toolkit to boost productivity and client engagement.

Regulatory and compliance burden shifts

Increased use of AI tools raises regulatory questions about accountability, record keeping, and representational accuracy. Advisers and product managers must document how AI outputs are generated and ensure clients are not misled by probabilistic or hallucinated assertions. Industry commentary treats governance (model cards, retraining cadences, human‑in‑the‑loop thresholds, and audit trails) as a prerequisite for scaling. These governance costs should be factored into any product roadmap.

The risks that deserve front‑page attention

Quality and provenance of AI outputs

When retail investors rely on free tools like ChatGPT, model outputs are only as good as the training data and prompts used. CA ANZ emphasised that AI’s usefulness depends on training it with high‑quality, reliable financial data, and highlighted trust as a limiting factor for non‑users. The risk is that unvetted or out‑of‑date guidance leads to poor investment decisions and downstream liability or regulatory scrutiny. Advisers and vendors must therefore treat AI‑derived guidance as assistive, not authoritative, unless it is supported by robust, auditable datasets.

Sample bias and representativeness in surveys

Survey headlines (e.g., “48% use AI”) can mask important segmentation: CA ANZ’s survey focused on retail investors with at least $10,000 invested and drew on a sample of around 1,000 people. That tells us about emerging behaviour in the Australian retail market, but it is not a universal truth across geographies or wealth segments. Similarly, Cerulli’s U.S. Edge report highlights affluent investor behaviour, and affluent U.S. investors may behave quite differently from younger, lower‑wealth cohorts in Australia. Treat cross‑country extrapolation with caution.

Human‑machine trust mismatch

Platforms regularly tout accuracy gains, but people judge systems by different metrics: explainability, predictability, and the ability to handle exceptions. When models err, a lack of explainability can erode client trust quickly and permanently. Building clear escalation paths and human oversight is not just best practice; it is a commercial necessity.

Practical playbook: how advisers and firms should respond

For advisers: adopt a hybrid-first mindset

- Embrace digital tools to automate repetitive work: meeting notes, portfolio rebalances, and routine KYC. This frees time for client conversations, where advisers add the most distinctive value.

- Standardise a triage flow: define which client signals (e.g., life events, account thresholds, low‑confidence model flags) must trigger human review.

- Communicate value clearly: explain fee structures and the adviser role relative to digital tools on the onboarding portal. Transparency reduces resistance to paid advice.
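
A triage flow like the one described above can be written down as explicit, testable rules. The Python sketch below shows one hypothetical encoding. The asset threshold and the list of escalating life events are illustrative placeholders that a firm would set in its own advice policy. The model-confidence score is assumed to be a value between 0 and 1 produced by the digital advice engine.

```python
from dataclasses import dataclass, field

# Hypothetical policy settings; each firm would define its own.
ASSET_REVIEW_THRESHOLD = 1_000_000.0
MIN_MODEL_CONFIDENCE = 0.8
ESCALATING_LIFE_EVENTS = {"bereavement", "divorce", "inheritance", "redundancy", "retirement"}


@dataclass
class ClientSignals:
    total_assets: float
    life_events: set[str] = field(default_factory=set)
    model_confidence: float = 1.0  # hypothetical engine confidence, between 0 and 1


def triage(signals: ClientSignals) -> tuple[str, list[str]]:
    """Return ("human_review", reasons) if any rule fires, else ("digital", [])."""
    reasons: list[str] = []
    if signals.total_assets >= ASSET_REVIEW_THRESHOLD:
        reasons.append("assets at or above review threshold")
    events = signals.life_events & ESCALATING_LIFE_EVENTS
    if events:
        reasons.append("life event: " + ", ".join(sorted(events)))
    if signals.model_confidence < MIN_MODEL_CONFIDENCE:
        reasons.append("low model confidence")
    return ("human_review" if reasons else "digital"), reasons


print(triage(ClientSignals(total_assets=150_000.0)))
print(triage(ClientSignals(total_assets=250_000.0, life_events={"inheritance"}, model_confidence=0.65)))
```

Returning the reasons alongside the route gives the adviser, and later the compliance team, a record of why each case was escalated.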

For platforms and product leaders: build trust into the product

- Invest in account aggregation and a user‑friendly portal — Cerulli highlights these as table stakes for affluent clients.

- Implement governance: model cards, audit trails, retraining policies, and human‑in‑the‑loop thresholds are necessary to scale responsibly.

- Offer tiered service models that match client needs and revenue potential: self‑service, guided/digital, and full‑service adviser tiers.
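
Governance artefacts such as model cards are easier to enforce when they are structured data rather than free-form documents. The Python sketch below shows one hypothetical shape for a model card. It records intended use, known limitations, data provenance, a retraining cadence, and a human-review threshold, and it adds two simple checks. The field names and example values are assumptions for illustration, not an industry standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ModelCard:
    name: str
    version: str
    intended_use: str
    known_limitations: list[str]
    training_data_sources: list[str]
    last_retrained: date
    retraining_cadence_days: int
    human_review_threshold: float  # outputs below this confidence go to an adviser

    def retraining_overdue(self, today: date) -> bool:
        """True if more than one retraining cadence has elapsed since the last retrain."""
        return today - self.last_retrained > timedelta(days=self.retraining_cadence_days)

    def governance_gaps(self) -> list[str]:
        """List missing or invalid governance fields."""
        gaps = []
        if not self.known_limitations:
            gaps.append("no documented limitations")
        if not self.training_data_sources:
            gaps.append("no documented data provenance")
        if not 0.0 < self.human_review_threshold <= 1.0:
            gaps.append("human-review threshold outside (0, 1]")
        return gaps


card = ModelCard(
    name="retirement-income-engine",  # hypothetical model name
    version="1.4.0",
    intended_use="Illustrative drawdown projections for low-complexity clients",
    known_limitations=["assumes constant returns", "ignores tax and fees"],
    training_data_sources=[],  # deliberately empty to show a governance gap
    last_retrained=date(2025, 1, 15),
    retraining_cadence_days=180,
    human_review_threshold=0.8,
)

print("Retraining overdue:", card.retraining_overdue(date(2025, 9, 1)))
print("Governance gaps:", card.governance_gaps())
```

Checks like these can run in a release pipeline so that a model with missing provenance or a lapsed retraining cadence is flagged before it reaches clients.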

For regulators and compliance teams: focus on explainability and recordkeeping

- Ensure digital advice outputs are logged alongside the inputs and data provenance.

- Demand that AI‑assisted recommendations be accompanied by clear caveats about model limitations and the role of human judgement.

- Prepare guidelines for acceptable marketing claims about AI accuracy and predictive power. These guardrails limit consumer harm and potential litigation.
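
Logging outputs alongside their inputs and data provenance can be made tamper-evident by chaining each record to the hash of the one before it. The Python sketch below uses only the standard library (`hashlib`, `json`, `datetime`). The record fields, model version string, and data-source labels are hypothetical. A real deployment would persist entries to durable, access-controlled storage rather than an in-memory list.

```python
import hashlib
import json
from datetime import datetime, timezone


class AdviceAuditLog:
    """Append-only log; each entry stores the hash of the previous entry (tamper-evident)."""

    GENESIS_HASH = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, inputs: dict, output: str, model_version: str, data_sources: list[str]) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS_HASH
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "inputs": inputs,
            "output": output,
            "model_version": model_version,
            "data_sources": data_sources,
            "prev_hash": prev_hash,
        }
        # Hash a canonical JSON serialisation of everything except the hash itself.
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link in the chain."""
        prev_hash = self.GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AdviceAuditLog()
log.record(
    inputs={"client_ref": "C-001", "age": 62, "balance": 500000},
    output="Suggested drawdown plan generated",
    model_version="1.4.0",
    data_sources=["hypothetical-capital-market-assumptions-2025"],
)
log.record(
    inputs={"client_ref": "C-002", "age": 45, "balance": 180000},
    output="Escalated to adviser: low model confidence",
    model_version="1.4.0",
    data_sources=["hypothetical-capital-market-assumptions-2025"],
)
print("Entries:", len(log.entries), "chain valid:", log.verify())
```

Each hash covers the previous entry's hash. As a result, editing any earlier record after the fact causes `verify()` to return False.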

Examples from the market

- DASH positions its “single advice engine” as a unifying layer that powers digital, hybrid, and adviser‑led experiences on one platform, emphasising adviser integration and triage when human judgement is needed. The company highlights retirement planning as a clear win area for digital advice.

- Altruist’s Hazel AI and other adviser‑facing AI tools show the industry move toward using AI for tax and document interpretation tasks. Industry commentary frames these developments as productivity tools that free up advisers for higher‑value client work rather than replacements for advisers.

- Cerulli’s and CA ANZ’s survey outputs serve as practical signposts: Cerulli for affluent U.S. investors’ channel preferences and CA ANZ for early adoption of free AI tools among Australian retail investors. Together they sketch a world where digital tools increase engagement but do not yet replace the human adviser for core, high‑value decisions.

How to measure success when deploying digital advice

- Client outcomes: is the tool improving retirement income sufficiency, goal attainment, or investment behaviour?

- Adviser productivity: does the platform reduce low‑value hours and increase meaningful adviser‑client time?

- Adoption and satisfaction: what share of clients use the tool and how satisfied are they versus traditional channels?

- Risk controls: are governance and audit mechanisms detecting and correcting erroneous outputs?

- Business KPIs: client acquisition cost, lifetime value, and retention among digitally served segments.
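
Several of these measures reduce to simple ratios that can be tracked for a pilot and compared with a baseline. The Python sketch below computes adoption, the share of adviser time spent with clients, retention, and client acquisition cost. The field names and figures are invented for illustration. Client outcomes and lifetime value are left out because they need firm-specific models.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    clients_at_start: int
    clients_retained: int      # clients at period start who were still clients at period end
    clients_using_tool: int    # clients at period end who used the digital tool
    clients_at_end: int
    new_clients: int
    acquisition_spend: float
    adviser_client_hours: float
    adviser_admin_hours: float


def kpis(m: PeriodMetrics) -> dict[str, float]:
    """Simple ratio KPIs for one reporting period."""
    return {
        "adoption_rate": m.clients_using_tool / m.clients_at_end,
        "client_time_share": m.adviser_client_hours / (m.adviser_client_hours + m.adviser_admin_hours),
        "retention_rate": m.clients_retained / m.clients_at_start,
        "acquisition_cost_per_client": m.acquisition_spend / m.new_clients,
    }


# Invented figures for a baseline period and a pilot period.
baseline = PeriodMetrics(
    clients_at_start=400,
    clients_retained=372,
    clients_using_tool=0,
    clients_at_end=412,
    new_clients=40,
    acquisition_spend=60_000.0,
    adviser_client_hours=800.0,
    adviser_admin_hours=1_200.0,
)
pilot = PeriodMetrics(
    clients_at_start=412,
    clients_retained=400,
    clients_using_tool=270,
    clients_at_end=450,
    new_clients=50,
    acquisition_spend=55_000.0,
    adviser_client_hours=1_100.0,
    adviser_admin_hours=900.0,
)

before, after = kpis(baseline), kpis(pilot)
for name in after:
    print(f"{name}: {before[name]:.2f} -> {after[name]:.2f}")
```

Reading the baseline and pilot side by side keeps the evaluation honest. A gain in adviser client time means little if retention or client-outcome measures slip over the same period.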

Conclusion

The evidence is clear and convergent: digital advice is maturing quickly and is already reshaping how advisory businesses operate, yet it is not rendering human advisers obsolete. Instead, digital advice is changing the economics and texture of the adviser role: automating routine tasks, improving client engagement at scale, and creating clearer pathways for younger or lower‑wealth clients to enter an advice relationship. Cerulli’s U.S. research and CA ANZ’s Australian survey together illustrate a bifurcated reality: tech adoption is uneven across cohorts, and trust, complexity, and perceived value still tether many clients to human advisers.

For advisory firms and platforms, the imperative is straightforward but demanding: invest in well‑governed, explainable digital tools that complement human judgement; design service tiers that match client willingness to pay and need for human contact; and treat governance, explainability, and client education as first‑class product features. Firms that balance scale with accountability will capture the biggest opportunity in the next decade: a hybrid future where software amplifies adviser reach while advisers preserve the human trust that clients continue to value.

Source: ifa.com.au https://www.ifa.com.au/human-advisers-still-preferred-as-digital-advice-push-gathers-pace/