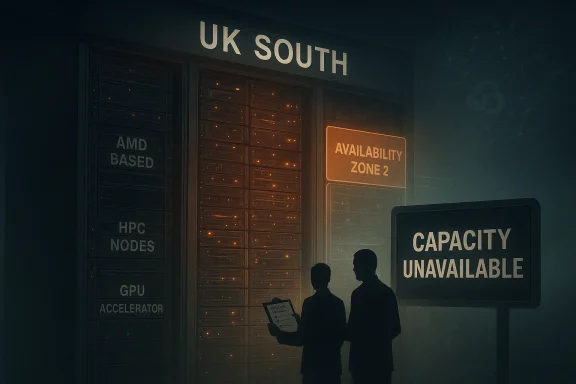

Microsoft’s UK South Azure region is under strain again, and this time the complaint from customers is not just about a slow VM request or an awkward quota ticket. The sharper accusation is that core cloud capacity has become scarce enough to block production workloads, especially AMD-based compute, HPC-oriented instances, and GPU-backed services that enterprises increasingly need for AI-adjacent work. In the background sits a broader frustration: many customers believe Microsoft is prioritizing its own AI expansion, including Copilot, while ordinary Azure buyers are left competing for the same scarce infrastructure.

That sentiment is not hard to understand. Microsoft’s own documentation shows that Azure capacity is not unlimited and that customers who need guaranteed compute can reserve it ahead of time, with reservations tied to a specific VM size, region, and possibly an availability zone. Microsoft also says that if Azure lacks capacity at the time of reservation, deployment fails, and users may need to try a different size, zone, or region. In other words, the system is designed to make scarcity visible, but not necessarily to make it easy to escape. (learn.microsoft.com)

The real problem for UK customers is that UK South is not just any region. It is one of the two principal Azure regions in the UK, and it supports Availability Zones, which makes it the preferred landing zone for workloads that need resilience, local data residency, and low latency. When that region fills up, customers who cannot move data outside the UK do not have many good alternatives. That is why the current complaints land so hard: they are not about convenience, but about operational continuity and compliance. (azure.microsoft.com)

Background

Azure’s UK footprint has always carried a special weight because British customers often choose Microsoft Cloud for a mix of residency, regulatory, and latency reasons. Microsoft’s UK G-Cloud material says its UK datacentres provide data residency in the UK and geo-redundant protection across multiple locations, while its global infrastructure page lists UK South and UK West as the two UK regions. That matters because regional choice is not merely a performance preference; for many organizations it is a legal or contractual requirement. (azure.microsoft.com)

The present friction is also not the first time UK South has shown signs of strain. Microsoft publicly acknowledged performance issues in UK South on January 21, 2025 for Azure Lab Services, saying some operations were taking longer than usual and could leave VMs stuck in starting or stopping states. The company later said it had identified a code bug in a dependency and deployed a fix on January 28, 2025. That episode was narrower than today’s complaints, but it is still important history: it shows that UK South has already experienced enough operational stress to trigger a formal Microsoft notice. (techcommunity.microsoft.com)

There is also a broader Azure pattern here. Capacity shortages are not unique to the UK, but the UK market tends to feel them more acutely because the country’s cloud estate is concentrated in fewer regions and often constrained by sovereignty expectations. Microsoft’s own capacity reservation docs explicitly acknowledge that Azure may not have the capacity a customer requests, and that quota alone is not enough; you need both quota and actual free infrastructure. That distinction is the heart of the issue. (learn.microsoft.com)

The Computer Weekly report, echoed by user complaints on Reddit, suggests the stress is not evenly distributed across SKUs either. Customers say AMD instances are especially scarce, while GPU and HPC capacity is harder still to obtain. That kind of imbalance is exactly what one would expect when a region is under pressure from mixed demand: general-purpose compute may remain available while the most desirable or specialized silicon disappears first. The result is not a total outage, but a more irritating failure mode: partial availability that is good enough to advertise and bad enough to derail migration plans.

What Microsoft’s Documentation Says About Capacity

Microsoft’s own guidance is clear that on-demand capacity reservation exists precisely because Azure regions can run short of ready-to-use compute. A reservation can be created for a region or availability zone, and once accepted, the capacity is held for the customer until it is deleted. That is a strong signal: if you want certainty, you pay for certainty. If you want flexibility, you accept the possibility of scarcity. (learn.microsoft.com)

Capacity is guaranteed only after reservation

The docs also make an important distinction between quota and actual capacity. You can have enough subscription quota on paper and still fail a deployment if Azure cannot satisfy the physical request. Microsoft says reservation creation itself fails if capacity is unavailable, and it warns that deployments beyond the reserved quantity are still subject to quota checks and Azure’s ability to fulfill extra capacity. That is the fine print many customers only confront when a migration stalls. (learn.microsoft.com)

This is why the UK South complaints are so disruptive to enterprise planning. A migration team may do everything right (secure approval, raise quota, and align architecture with Microsoft best practice) and still discover that the region simply will not give them the VM family they need. The gap between theoretical availability and practical allocation becomes a business problem, not a technical one. (learn.microsoft.com)
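The quota-versus-capacity distinction described here can be made concrete with a toy model. The sketch below is not Azure's allocation logic and calls no Azure API; `RegionState`, `check_deployment`, and every number in it are hypothetical, chosen only to show that a request has to clear two separate gates and that instances covered by an existing reservation bypass both.

```python
from dataclasses import dataclass


@dataclass
class RegionState:
    """Toy snapshot of one VM size in one region (all values are made up)."""
    quota_remaining: int   # instances the subscription may still create
    free_capacity: int     # instances the platform can physically place now
    reserved_unused: int   # instances already held by the customer's reservation


def check_deployment(state: RegionState, requested: int) -> str:
    """Explain whether a request for `requested` instances would go through."""
    # Instances covered by an existing reservation are already guaranteed.
    covered = min(requested, state.reserved_unused)
    overflow = requested - covered
    if overflow == 0:
        return "allowed: covered by reservation"
    # Gate 1: anything beyond the reservation is checked against quota.
    if overflow > state.quota_remaining:
        return "denied: quota"
    # Gate 2: quota alone is not enough; free hardware must exist too.
    if overflow > state.free_capacity:
        return "denied: capacity"
    return "allowed"


# Plenty of quota on paper, but no free hardware in the region.
full_region = RegionState(quota_remaining=10, free_capacity=0, reserved_unused=0)
print(check_deployment(full_region, 4))  # denied: capacity

# The same full region, but the customer reserved ahead of time.
reserved = RegionState(quota_remaining=0, free_capacity=0, reserved_unused=4)
print(check_deployment(reserved, 4))  # allowed: covered by reservation
```

The first case is the one migration teams describe: every administrative approval is in place, and the request still fails at the second gate.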

Specialized workloads feel scarcity first

The documentation also lists several GPU-accelerated and other specialized VM families as reservation candidates, including AMD-based GPU series. That is a reminder that capacity issues are rarely evenly spread. Higher-value or more compute-intensive workloads tend to collide with limited inventory first, which is why AI, graphics, HPC, and virtual desktop projects often feel regional constraints before general web hosting does. (learn.microsoft.com)

- General-purpose VMs may still be available when premium or specialized SKUs are not.

- GPU and HPC instances are more likely to show scarcity first.

- Reserved capacity can reduce uncertainty, but it does not eliminate regional shortages.

- Quota approval is necessary but not sufficient.

- Availability Zones help resilience, yet they do not magically create new compute.

Why UK South Matters So Much

UK South is more than a location on a map. It is one of the two UK regions, and it supports Availability Zones, which means customers can spread workloads across distinct datacentres for resilience. For enterprises running regulated services or latency-sensitive apps, that is the region they want first, and often the region they must use. (azure.microsoft.com)

The UK West fallback is not a perfect substitute

Microsoft does offer UK West, but the region is a different proposition. It is the secondary UK region, and it is frequently treated as a disaster-recovery or failover destination rather than the primary home for mainstream production. Customers may be able to move there, but the trade-off can be real: geography, architecture, and resilience design all have to be reconsidered if they leave UK South. (azure.microsoft.com)

That is why “just use another region” is not a satisfying answer for many buyers. If your data must remain in the UK, if your application needs the lowest possible latency, and if your resilience design assumes regional pairing, then shifting away from UK South can force architectural compromises. The moment that happens, capacity scarcity turns into compliance risk and redesign work. (azure.microsoft.com)

Availability Zones raise the expectation bar

Because UK South supports zones, customers expect it to behave like a mature enterprise region. Zones are supposed to absorb localized failure and spread risk. But they do not solve the bigger problem of regional saturation, and they certainly do not create extra AMD or GPU hardware on demand. If zone one is crowded and zone two is better, that is still an operational headache, not a solution. (azure.microsoft.com)

- Latency-sensitive apps are harder to move.

- Compliance-bound workloads cannot simply hop to another geography.

- Primary/secondary region designs become messy when the primary is full.

- Zone architecture helps availability, but not supply.

- Regional branding can outpace actual regional capacity, creating a mismatch between what is advertised and what can be deployed.
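The constraint running through this section, that fallback options shrink once data must stay in the UK, can be sketched as a simple ordered search. This is an illustration under stated assumptions, not a Microsoft-recommended procedure: `Placement`, `first_available`, the `try_allocate` callback, and the size labels are all hypothetical, and a real allocator would be a deployment attempt rather than a set lookup.

```python
from typing import Callable, Iterable, NamedTuple, Optional


class Placement(NamedTuple):
    """One candidate landing spot for a workload (labels are illustrative)."""
    region: str
    zone: Optional[str]
    vm_size: str


def first_available(
    candidates: Iterable[Placement],
    allowed_regions: Iterable[str],
    try_allocate: Callable[[Placement], bool],
) -> Optional[Placement]:
    """Return the first candidate that is both permitted and allocatable."""
    allowed = set(allowed_regions)
    for candidate in candidates:
        # Residency rule: never consider a region outside the allowed set.
        if candidate.region not in allowed:
            continue
        # Capacity rule: the platform must actually be able to place it.
        if try_allocate(candidate):
            return candidate
    return None


# Preference order: same size in another zone first, then a different
# region, then a different size. All availability data here is invented.
candidates = [
    Placement("uksouth", "1", "preferred-size"),
    Placement("uksouth", "2", "preferred-size"),
    Placement("westeurope", "1", "preferred-size"),
    Placement("ukwest", None, "fallback-size"),
]
has_capacity = {
    Placement("westeurope", "1", "preferred-size"),
    Placement("ukwest", None, "fallback-size"),
}

choice = first_available(candidates, {"uksouth", "ukwest"}, lambda p: p in has_capacity)
print(choice)
```

In this made-up scenario the preferred size is available outside the UK, but the residency filter removes that option, so the search settles on a different size in UK West. That is the architectural compromise the section describes.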

The Copilot and AI Question

The loudest accusation from customers is that Microsoft has poured scarce datacentre resources into Copilot AI and related GPU-heavy services, starving traditional Azure workloads in the process. That claim is hard to verify from public evidence alone, but it reflects a real strategic tension: AI services do consume a lot of specialized infrastructure, and Microsoft is undeniably in a race to expand its AI footprint across cloud and productivity products.

AI demand changes the shape of infrastructure demand

Unlike ordinary compute, AI workloads can be disproportionately hungry for high-end accelerators, memory bandwidth, and tightly managed clusters. That makes them a different class of infrastructure demand, one that can crowd out standard VM families if supply is finite. Even if Microsoft is not literally “stealing” capacity from customers, the market effect may look similar: more GPUs and premium silicon devoted to strategic AI use cases leaves fewer resources available for conventional regional demand.

This is why the complaints sound so emotional. Customers are not just upset that Azure is full; they are worried that the company’s new AI ambitions are distorting the economics of the platform they already pay for. The fear is not irrational. When a cloud vendor tries to do both frontier AI and mainstream enterprise hosting on the same supply chain, someone will feel the squeeze first. (learn.microsoft.com)

Microsoft’s own AI expansion is the subtext

Microsoft has been expanding Copilot and other AI infrastructure aggressively, and that broader push makes any shortage story more politically charged. If customers already suspect that Microsoft is reorganizing infrastructure for AI, every capacity denial will be read through that lens. Whether or not the suspicion is fair, it is now part of the customer narrative, and narratives matter in cloud buying decisions.

- AI consumes a different mix of hardware than ordinary enterprise hosting.

- GPU scarcity can ripple into unrelated services.

- Customers read capacity shortages as strategic choices, not just accidents.

- Cloud trust weakens when buyers think they are financing their own displacement.

- The more Microsoft markets AI, the more every shortage becomes a referendum on AI priorities.

Enterprise Impact vs Consumer Impact

For enterprises, the damage is immediate and concrete. A blocked VM deployment can stall migrations, delay virtual desktop rollouts, and force architects to redesign for less-preferred regions or less-suitable hardware. Microsoft’s own docs say capacity reservations can guarantee access, but only if the customer planned ahead and reserved the right size in the right place. That is useful for mature procurement teams, but it is cold comfort for organizations that discover the problem mid-project. (learn.microsoft.com)

Migration projects are the first to suffer

The most painful stories are from teams already partway through migration. Those customers have often made architectural commitments, moved staff, and absorbed the disruption of transition, only to find that the final stages cannot be completed because the region refuses to admit more instances. That is exactly the sort of friction that turns a cloud project from a modernization win into a budgeting problem.

For consumers, the effect is less visible but no less real when AI and productivity services are involved. Even if they are not directly buying UK South VM capacity, they still depend on the same broader infrastructure ecosystem. If Microsoft’s resource allocation becomes too tight, the spillover may show up as slower service launches, reduced regional choice, or more frequent “temporarily unavailable” messages in adjacent products.

Risk profiles differ by customer type

Enterprises usually have procurement leverage, support channels, and the ability to redesign. Consumers do not. Enterprises can sometimes use a mix of reserved capacity, alternate zones, or different SKU families. Consumers tend to experience the consequences indirectly, through service quality or the availability of features tied to regional backend capacity. That difference is why the complaint can sound like a cloud infrastructure story to one audience and a product-quality story to another. (learn.microsoft.com)

- Enterprises face migration delays and compliance trade-offs.

- Consumers experience the issue as feature delays or service unreliability.

- Reserved capacity helps planners, but not everyone plans far enough ahead.

- Mid-project stalls are more damaging than initial deployment failures.

- Regional scarcity can erode trust across both audiences, even if the symptoms differ.

What the Public Evidence Supports

Publicly, the strongest evidence is not that Microsoft has admitted to a UK South “full region” crisis, but that capacity limitation is a known and documented Azure reality. Microsoft states that capacity reservation may fail if the platform lacks available hardware, that quota is separate from capacity, and that some services have temporarily paused new deployments in UK South due to constraints. Those are not rumors; they are Microsoft’s own words. (learn.microsoft.com)

Source: Computer Weekly, “Azure customers up in arms over ‘full’ UK South region”