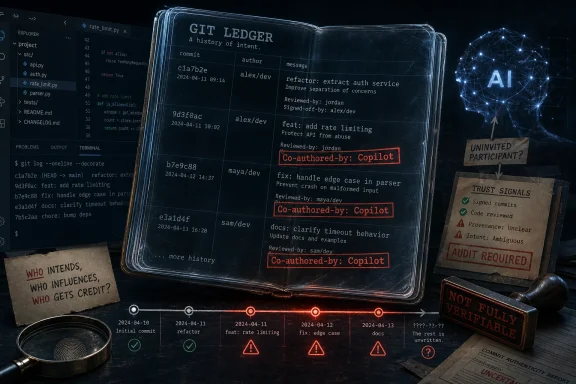

Visual Studio Code changed its Git behavior in April 2026 so that some users’ commits were automatically stamped with a “Co-authored-by: Copilot” trailer, even when developers said Copilot had not meaningfully authored the work. That is not a paperwork glitch. It is a trust problem in the most important ledger software developers keep: their Git history. Microsoft has already moved to reverse the default, but the incident is a useful warning about what happens when AI features stop behaving like tools and start behaving like participants.

Microsoft Turned Attribution Into a Default Setting

The offending setting, `git.addAICoAuthor`, was introduced earlier this year as a plausible piece of developer hygiene. If AI-generated code makes it into a commit, the editor can append a Git trailer that credits Copilot as a co-author. In a world where teams increasingly care about provenance, licensing, code review discipline, and policy compliance, that is not inherently absurd.

The problem is that attribution is not the same kind of preference as a minimap, theme, or tab size. A Git commit is part technical artifact, part authorship statement, part audit record. Changing the default from “off” to “all” quietly moved AI credit from an explicit disclosure mechanism to an ambient behavior baked into the editor.

That distinction matters because Git trailers travel. They appear in logs, CI output, repository history, GitHub contribution graphs, mirrors, compliance archives, release audits, and downstream forks. Once pushed, they are not just UI chrome; they become part of the project’s historical record.

Microsoft’s defenders can reasonably say this was not a sinister plot to steal anyone’s code. But the charitable version is still bad: a major developer tool changed the authorship metadata of commits without making that change visible enough to the people whose names, projects, and reputations were attached to those commits.

The Commit Message Editor Was the Wrong Place to Hide the Surprise

The sharpest complaint from developers was not merely that Copilot appeared in commit history. It was that the trailer could be appended after the user had already written or edited the commit message. That turns the commit box into a partial preview rather than the final truth.

For developers who live in the command line, this may sound like another reason to avoid IDE Git buttons. But VS Code’s integrated Git workflow is not a toy feature. It is used by students, professionals, open-source maintainers, and enterprise teams precisely because it lowers the friction of daily work.

If the final commit message differs from what the user approved, the editor has crossed a line. A commit is one of the few moments in software development where intent is supposed to be captured deliberately. The user selects the changes, writes the message, and creates the record. Sneaking in a trailer after that point feels less like assistance and more like post-processing the developer’s signature.

The fact that some users reported the behavior even with AI features disabled made the optics worse. A setting such as `chat.disableAIFeatures` carries an obvious expectation: if AI is disabled, AI machinery should not keep quietly annotating work. Microsoft’s fix explicitly addresses that gap, which is an implicit admission that the original behavior was broader than users would reasonably infer.

Copilot Became a Co-Author by Vibes

The best version of AI attribution is narrow and boring. If Copilot Chat or an agent materially edits a file, and the user accepts those edits into a commit, the system can offer to mark that contribution. The user should see the trailer before committing, and organizations should be able to enforce or suppress it through policy.

The controversial default was much broader. The “all” mode treated AI interaction as enough to trigger attribution, including workflows that developers may not consider co-authorship. Inline completions complicate this even further, because the boundary between autocomplete, suggestion, and authorship is blurry by design.

That blur is the business model of modern coding assistants. They are useful because they dissolve into the act of programming: a suggested variable name here, a completed line there, a test scaffold when prompted, a quick refactor in chat. But the more seamless the tool becomes, the more careful the attribution system must be.

Crediting Copilot on a commit that contains a few accepted suggestions may be defensible in some organizations and ridiculous in others. Crediting Copilot on a commit where the developer believes no Copilot-generated content was used is not defensible. It is noise masquerading as disclosure.

The Open-Source Paper Trail Saved Microsoft From a Worse Story

One reason this incident exploded quickly is that VS Code is developed in public. The pull request changing the default was visible. The one-line nature of the change was visible. The review and merge dates were visible. The later reversal was visible too.

That public trail cuts both ways for Microsoft. It prevents the company from pretending nothing happened, but it also makes the story less conspiratorial than some of the angrier reactions suggest. This looks less like a grand corporate authorship grab and more like a product culture failure: a small configuration change with big semantic consequences passed through review as if it were routine.

That is almost more worrying. If a change that alters commit authorship metadata can sail through because it is “just” a setting default, then the review process is not asking the right questions about AI integration. The problem is not that Microsoft has no process. The problem is that the process treated a provenance decision like a feature toggle.

The presence of Copilot itself in the review flow added an almost satirical note. An AI reviewing a pull request that makes AI co-authorship more aggressive is not proof of wrongdoing. But as symbolism goes, it was spectacularly bad. At minimum, it underlined how normalized AI has become inside the development workflow that ships the development workflow.

The Apology Was Necessary, but It Does Not Close the Case

Dmitriy Vasyura, the VS Code team member who approved the original change, publicly apologized and said the feature had been turned on by default without sufficient scrutiny. That matters. In a healthier software culture, maintainers should be able to say, plainly, that they made a mistake.

The fix now merged into the VS Code repository reverses the default back to `off` and tightens behavior around disabled AI features. That is the right immediate repair. It restores the original posture: AI co-author trailers should be opt-in unless the user or organization deliberately decides otherwise.

But a rollback does not erase pushed commits. Developers who used affected VS Code builds may already have repositories containing Copilot co-author lines they never intended to publish. Some will ignore them. Some will rewrite history in private branches. Some will be stuck with them in shared repositories where force-pushing is not an option.

That is why the incident should not be dismissed as internet overreaction. Git history is not sacred because developers are sentimental. It is sacred because teams rely on it when debugging regressions, assigning accountability, reviewing security-sensitive changes, preparing releases, and satisfying auditors. A tool that writes to that record has to behave conservatively.
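For the private-branch case mentioned above, the cleanup itself is a small text transformation on each commit message. The following is a minimal sketch, not a tested migration tool: it assumes the unwanted trailer is a line beginning with `Co-authored-by: Copilot` (the exact text your build wrote may differ, for example by including an email address), and the function name `strip_copilot_trailers` is made up for illustration.

```python
import re

# Assumption: the unwanted trailer starts with "Co-authored-by: Copilot".
# Anything after the name (such as an email address) is matched and dropped too.
COPILOT_LINE = re.compile(r"^co-authored-by:\s*copilot\b.*$", re.IGNORECASE)


def strip_copilot_trailers(message: str) -> str:
    """Return the commit message without Copilot co-author lines."""
    kept = [
        line
        for line in message.splitlines()
        if not COPILOT_LINE.match(line.strip())
    ]
    # Removing the trailer can leave blank lines dangling at the end.
    while kept and not kept[-1].strip():
        kept.pop()
    # Commit messages conventionally end with a single newline.
    return "\n".join(kept) + "\n"
```

Human `Co-authored-by` lines are left untouched. A function like this could be wrapped in a small script that reads a message on standard input and fed to a history-rewriting command such as `git filter-branch --msg-filter`. Rewriting changes commit hashes, which is exactly why it is only practical on branches nobody else has pulled.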

Enterprise IT Will See a Policy Failure, Not a Meme

For individual developers, the annoyance is obvious: nobody wants an assistant taking a victory lap in their commits. For enterprise IT, the issue is more concrete. Authorship metadata is part of software governance.

Many organizations are still writing their AI coding policies. Some require disclosure when AI-generated code is used. Some ban AI use for certain repositories. Some allow Copilot under enterprise terms but not consumer AI tools. Some distinguish between AI-generated code, AI-assisted explanations, and AI-written commit messages.

A default that adds Copilot as co-author across a wide range of activity can break those distinctions. It can make compliant work look noncompliant. It can make non-Copilot AI use look like Copilot use. It can introduce confusion into audits that depend on knowing which tools touched a codebase.

The irony is that Microsoft’s underlying instinct was not wrong. AI provenance is going to matter more, not less. But provenance systems must be accurate, inspectable, and governed. If they produce false positives, teams will disable them, strip them, or stop trusting them altogether.

False attribution is worse than no attribution in some settings. No attribution says, “We do not know.” False attribution says, “We know something that is not true.” The former is a gap. The latter is bad evidence.

The AI Feature Creep Is Now the Product Strategy

This episode landed because many developers already feel that VS Code has been pulled steadily toward AI-first defaults. Copilot is no longer a plugin sitting politely at the edge of the editor. It is increasingly part of the default surface area, the settings model, the chat system, the command palette, the release notes, and the development roadmap.

That does not make VS Code uniquely villainous. Every major developer-tool vendor is racing to embed AI into the workflow because the commercial stakes are enormous. JetBrains, GitHub, Microsoft, Google, Amazon, Sourcegraph, and a parade of startups all want the same thing: to become the interface between developer intent and code change.

The risk is that the tool stops asking whether the developer invited AI into a specific act. It merely assumes that AI is part of the room. That assumption may be convenient for demos and adoption metrics, but it is corrosive when applied to authorship.

Developers are not anti-automation. They use compilers, formatters, linters, refactoring tools, snippets, static analyzers, package managers, and CI bots all day. The issue is agency. A formatter does not append “Co-authored-by: Prettier” to every commit. A linter does not claim a share of authorship because it suggested a fix. A debugger does not become a collaborator because it helped find a bug.

Copilot is more capable than those tools, but that makes the need for explicit boundaries stronger, not weaker.

Git Trailers Are Small Text With Large Consequences

A `Co-authored-by` line looks humble. It is just a trailer at the bottom of a commit message. But Git culture has given those trailers meaning.

They are used to credit pair programmers, acknowledge contributors who supplied patches, preserve authorship when commits are squashed, and connect work to collaboration that would otherwise disappear. GitHub also interprets them in its own social and contribution systems. In other words, the line is not decorative.

That is why the Copilot trailer irritated so many developers. It borrowed a human collaboration convention and applied it to a machine assistant, sometimes inaccurately. The result felt like a category error: a tool being inserted into a slot normally reserved for people.

There may be a case for machine-readable AI provenance in commits. But it probably should not be disguised as conventional co-authorship. A dedicated trailer such as “AI-assisted-by” or repository-level metadata may be a better fit than co-opting a human credit mechanism. The more the industry cares about AI disclosure, the less acceptable it becomes to overload old fields with new meanings.

Microsoft and GitHub are in a particularly sensitive position here because they control both the editor and one of the dominant hosting platforms. A small VS Code default can ripple into GitHub’s representation of project history. That does not mean every integration is suspect. It does mean defaults deserve more scrutiny when one vendor owns multiple layers of the developer stack.
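To make the idea of a dedicated trailer concrete, here is a rough sketch of how tooling could keep human credit and machine provenance in separate buckets. It is deliberately simplified: it treats the last paragraph of a message as a trailer block only when every line looks like `Key: value`, which is stricter than Git's own parsing. The `AI-assisted-by` key is the hypothetical name floated above, not an established convention, and the value format in the usage example is invented.

```python
import re

# A trailer line has the shape "Key: value"; keys are letters, digits, hyphens.
TRAILER_LINE = re.compile(r"^([A-Za-z0-9-]+):\s*(.+)$")

HUMAN_KEY = "co-authored-by"
# Hypothetical key for machine assistance; not a Git or GitHub standard.
AI_KEY = "ai-assisted-by"


def parse_trailers(message):
    """Return (key, value) pairs from the final paragraph of a commit message."""
    paragraphs = [p for p in re.split(r"\n\s*\n", message.strip()) if p.strip()]
    if len(paragraphs) < 2:
        return []  # a lone subject line is never treated as a trailer block
    pairs = []
    for line in paragraphs[-1].splitlines():
        match = TRAILER_LINE.match(line.strip())
        if match is None:
            return []  # simplification: one non-trailer line disqualifies the block
        pairs.append((match.group(1), match.group(2).strip()))
    return pairs


def split_credit(message):
    """Separate human co-authors from tool provenance entries."""
    humans, tools = [], []
    for key, value in parse_trailers(message):
        if key.lower() == HUMAN_KEY:
            humans.append(value)
        elif key.lower() == AI_KEY:
            tools.append(value)
    return humans, tools
```

Given a message whose final paragraph contains `Co-authored-by: Ada Example <ada@example.com>` and `AI-assisted-by: Copilot (chat edit)`, `split_credit` returns the person in the first list and the tool in the second. The point of the separation is that a contribution graph or an audit script can then treat the two differently instead of guessing from a name.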

The Fix Should Be a Floor, Not a Finish Line

Reverting `git.addAICoAuthor` to `off` is the obvious first step. The next step is a better contract between developer tools and developers.

Any AI attribution feature should be visible at the moment of commit. If a trailer will be appended, show it in the message editor before the commit is created. If the user removes it, respect that removal unless an organization policy says otherwise. If a policy enforces it, say so clearly.

The detection model also needs to be explainable enough to debug. “Copilot touched this commit” is not useful if the user cannot determine whether that means a chat edit, an accepted completion, a generated commit message, or a stale internal flag. Developers do not need a philosophical theory of authorship in their editor, but they do need predictable behavior.

Enterprise controls should be first-class. Organizations should be able to set defaults for AI attribution, disable trailers, require explicit disclosure, and audit when AI-assisted changes are detected. Those controls should not depend on every developer discovering a setting after their Git log has already changed.

Most importantly, AI features should fail closed when disabled. If a user turns off AI features, related tracking and attribution paths should stop. Anything else teaches users that “off” means “less visible,” not actually off.
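The contract described in this section can be sketched as a single decision function. This is an illustration of the ordering of the rules, not VS Code's implementation: the setting names `git.addAICoAuthor` and `chat.disableAIFeatures` and the values “off” and “all” come from the reporting, while the boolean type of the disable switch, the `AiEvent` categories, the `policy_requires` flag, and the bare trailer text are assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class AiEvent(Enum):
    # Hypothetical categories, mirroring the kinds of activity named above.
    CHAT_EDIT = "chat edit"
    ACCEPTED_COMPLETION = "accepted completion"
    GENERATED_COMMIT_MESSAGE = "generated commit message"


@dataclass
class TrailerDecision:
    trailer: Optional[str]  # text to show in the message editor, or None
    reasons: List[str] = field(default_factory=list)  # human-readable explanation


def decide_trailer(settings, events, user_removed, policy_requires=False):
    """Decide whether to propose a trailer, and record why."""
    # Fail closed: disabling AI features wins over every other input.
    if settings.get("chat.disableAIFeatures", False):
        return TrailerDecision(None, ["AI features are disabled"])
    # Opt-in: a missing setting is treated the same as "off".
    if settings.get("git.addAICoAuthor", "off") == "off":
        return TrailerDecision(None, ["git.addAICoAuthor is off"])
    if not events:
        return TrailerDecision(None, ["no AI activity recorded for these changes"])
    # Respect the user's deletion unless an organization policy overrides it.
    if user_removed and not policy_requires:
        return TrailerDecision(None, ["user removed the trailer"])
    reasons = sorted({event.value for event in events})
    if policy_requires:
        reasons.append("required by organization policy")
    return TrailerDecision("Co-authored-by: Copilot", reasons)
```

Two properties matter more than the details. The function returns the trailer text before the commit exists, so the editor can display it rather than append it afterwards. And every outcome carries reasons, so a developer looking at a surprising trailer can tell a chat edit from an accepted completion.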

The Lesson Hidden in the Copilot Trailer

The immediate operational advice is simple, but the bigger lesson is about trust boundaries. VS Code is powerful because developers trust it with intimate access to their work: their files, terminals, repositories, secrets-adjacent workflows, and daily habits. That trust is not infinite.

- Developers using affected VS Code builds should check recent commits for unintended `Co-authored-by: Copilot` trailers before pushing or tagging releases.
- Teams that care about AI provenance should decide whether AI attribution belongs in Git commit trailers, separate metadata, pull request templates, or policy-controlled audit logs.
- Administrators should explicitly configure `git.addAICoAuthor` rather than relying on editor defaults that may change across releases.
- Organizations that disable AI features should verify that related telemetry, tracking, and attribution behavior are disabled as well.
- Microsoft should treat authorship metadata changes as user-facing release-note material, not as routine configuration churn.
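The first item on that list is easy to automate. The sketch below lists recent commits whose message contains a Copilot co-author line. It assumes `git` is on the PATH and that the trailer begins with `Co-authored-by: Copilot`. The helper names are invented for the example, and the match is a plain line search rather than a strict trailer parse, so a message that quotes such a line in its body would also be flagged.

```python
import re
import subprocess

# Assumption: the trailer's name field starts with "Copilot"; anything after
# it on the line is ignored.
COPILOT_TRAILER = re.compile(
    r"^co-authored-by:\s*copilot\b", re.IGNORECASE | re.MULTILINE
)


def has_copilot_trailer(message):
    """True if any line of the message starts with a Copilot co-author credit."""
    return COPILOT_TRAILER.search(message) is not None


def parse_log(raw):
    """Split `git log --format=%H%x1f%B%x1e` output into (hash, message) pairs."""
    commits = []
    for record in raw.split("\x1e"):  # \x1e separates commits
        record = record.strip()
        if not record:
            continue
        commit_hash, _, message = record.partition("\x1f")  # \x1f splits hash/body
        commits.append((commit_hash, message))
    return commits


def flagged_commits(repo=".", limit=50):
    """Return hashes of the most recent `limit` commits that credit Copilot."""
    result = subprocess.run(
        ["git", "-C", repo, "log", f"--max-count={limit}", "--format=%H%x1f%B%x1e"],
        check=True,
        capture_output=True,
        text=True,
    )
    return [h for h, msg in parse_log(result.stdout) if has_copilot_trailer(msg)]
```

Calling `flagged_commits(".")` from a repository root before pushing or tagging gives a list of hashes to inspect by hand. An empty list only means the pattern was not found in the commits that were scanned.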

Developers Remember Defaults Longer Than Apologies

Microsoft’s reversal will probably end the acute drama. A future VS Code build will ship with the safer default, most users will move on, and the Copilot trailer will become one more setting that power users know to check after a suspicious commit. That is how tool controversies usually end.

But the memory will linger because it fits a pattern developers increasingly resent: AI features arriving as defaults before the consent model is mature. Every time that happens, the next AI integration starts with less goodwill. The feature may be useful, the engineers may be sincere, and the fix may be quick. None of that changes the cumulative effect.

The right future is not an editor with no AI. It is an editor where AI assistance is powerful, visible, governable, and honest about what it did. If Microsoft wants VS Code to remain the default cockpit for modern software development, it has to treat the commit log as a boundary line, not a marketing surface.

Source: It's FOSS, “Typical Microsoft! Turns Out VS Code Was Adding Copilot as a Git Co-Author Without Telling Anyone”