Microsoft’s engineering teams have taken a familiar security posture—the Zero Trust mindset—and applied it to the physical fabric of their campuses, producing a dual‑domain optical architecture that pairs a production ROADM transport with a physically independent MUX‑based backup to deliver what the company calls a Zero Trust Optical Business Continuity / Disaster Recovery (BCDR) network. This design is intended to keep high‑throughput labs, datacenters, and cloud‑adjacent services running even during systemic failures, and it represents a practical synthesis of optical engineering, operational segregation, and Zero Trust principles.

Background / Overview

The problem Microsoft set out to solve is simple and existential for large engineering orgs: even brief outages at campus scale can ripple into delayed feature delivery, halted CI/CD pipelines, frustrated product teams, and measurable financial loss. For the Puget Sound metro region, Microsoft’s Digital team built a next‑generation optical network (deployed starting in 2021) to provide extremely high throughput (Microsoft reports paths capable of 400 Gbps single‑client connectivity) and used ROADM technology and a full‑mesh topology to deliver programmability and automation. But after a 2022 incident (a fiber cut plus hardware reboot) that produced a five‑minute outage, the team prioritized a stronger life‑raft than simple intra‑system redundancy. The result was the Zero Trust Optical BCDR design: two fully independent optical domains, unified at the Ethernet edge as a single logical port channel for seamless client failover.

This article dissects the architecture, explains why the combination of ROADM and a physically separate MUX transport matters, examines the operational and security implications of treating network resilience with a Zero Trust mentality, and highlights practical lessons, hidden risks, and hard trade‑offs teams should evaluate before adopting a similar approach.

What Microsoft built: the Zero Trust Optical BCDR in plain terms

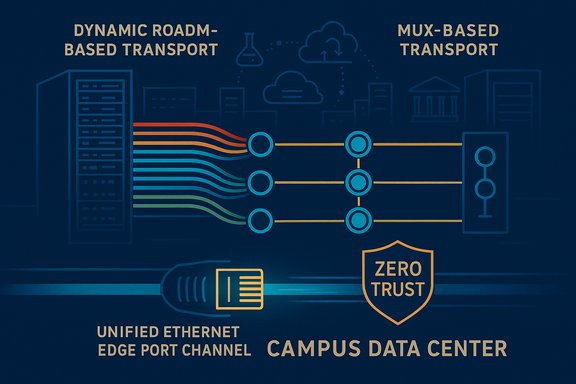

Two independent optical domains, unified at the edge

At a systems level, the architecture has four essential pieces:

- A primary ROADM‑based transport: a dynamic, wavelength‑routed Dense Wavelength Division Multiplexing (DWDM) layer built with colorless‑directionless‑contentionless (CDC) ROADM functionality for programmability, flexible grid support, and automated restoration. This layer handles the bulk of high‑throughput traffic.

- A secondary MUX‑based transport: a physically separate DWDM/multiplexer layer that uses independent fibers, power, and management software. It’s intentionally simpler and entirely autonomous so it can serve as a true life‑raft in case the ROADM domain—or its orchestration—fails.

- Physically independent fiber routing and infrastructure: primary and BCDR circuits avoid shared conduits, splices, and power, reducing correlated failure risk from a single cut, localized power loss, or third‑party construction damage.

- A unified Ethernet termination and logical port channel at the client-facing edge: both domains terminate into the same logical interface, enabling automatic load balancing and transparent failover for applications and users without changing their network configuration. This makes the backup domain effectively invisible to clients until it is needed.
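The edge behavior in the last item can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Microsoft’s implementation: `MemberLink`, `PortChannel`, the link names, and the CRC‑based flow hash are all hypothetical stand‑ins for what link aggregation hardware does when it spreads flows across member links and skips a failed one.

```python
from dataclasses import dataclass, field
from zlib import crc32


@dataclass
class MemberLink:
    """One member of the logical port channel, backed by one optical domain."""
    name: str
    up: bool = True


@dataclass
class PortChannel:
    """Hypothetical model of the single logical interface clients see."""
    members: list = field(default_factory=list)

    def pick_member(self, flow_id: str) -> MemberLink:
        # Hash each flow onto the currently healthy members only, so a
        # failed domain is skipped without any client-side change.
        healthy = [m for m in self.members if m.up]
        if not healthy:
            raise RuntimeError("no healthy member links")
        return healthy[crc32(flow_id.encode()) % len(healthy)]


roadm = MemberLink("roadm-primary")   # assumed name for the production domain
mux = MemberLink("mux-bcdr")          # assumed name for the backup domain
channel = PortChannel([roadm, mux])

flows = [f"build-agent-{i}" for i in range(8)]
before = {f: channel.pick_member(f).name for f in flows}

roadm.up = False  # simulate loss of the whole ROADM domain
after = {f: channel.pick_member(f).name for f in flows}

print(before)
print(after)  # every flow now maps to the MUX-backed member
```

The client‑side flow identifiers never change; only the member chosen underneath does, which is the sense in which the backup domain stays invisible until it is needed.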

Design pillars Microsoft used

Microsoft explains the architecture using five design pillars that shape the technical requirements:

- Independent optical systems

- Physically independent paths

- Separate control software and provisioning domains

- Unified client interface

- Survivability by design

Why this specific combination matters: ROADM + MUX explained

What a ROADM gives you (and what it costs)

Reconfigurable Optical Add/Drop Multiplexers (ROADMs) are the de facto building block for programmable, high‑capacity optical networks. Modern CDC ROADMs deliver three key capabilities (Colorless, Directionless, and Contentionless), which mean wavelengths can be placed on any add/drop port, routed in any direction, and reused without blocking, respectively. These capabilities enable flexible wavelength routing, dynamic restoration, and simplified provisioning at scale. ROADMs pair naturally with a control plane and automation to allow remote, near‑instant reconfiguration and wavelength restoration across ring or mesh topologies.

The trade‑offs are real: CDC ROADMs are complex, require more sophisticated wavelength selective switching equipment, and introduce operational complexity that must be managed, especially in high‑degree mesh networks where multi‑component failures and optical power balancing can complicate automated recovery. High‑degree ROADM deployments also increase the attack surface of the optical layer because they bring more active control elements, management APIs, and software stacks into play.

What a MUX‑based domain gives you

A MUX‑based DWDM domain, effectively a more static multiplex/demultiplex design, delivers a simpler, lower‑complexity transport plane. MUX designs typically have fewer programmable switching elements and fewer interacting subsystems, which reduces the number of failure modes and the dependence on complex control software. That makes a MUX domain an attractive backup: it can be engineered for predictable behavior, easier manual intervention, and straightforward OAM (operations, administration, and maintenance) when the production ROADM domain is degraded. Industry literature shows MUX solutions continue to evolve (lower insertion loss, better filter shapes), and they remain well suited for metro/regional spans where fiber distances are shorter and amplification is controllable.

Why logical coupling at Ethernet is the practical masterstroke

By logically coupling the two independent optical domains at the Ethernet edge, Microsoft achieves seamless client failover: clients see a single logical port channel; the underlying transport can shift in real time between ROADM and MUX without user reconfiguration. This approach converts physical diversity into operational transparency, minimizing user impact during restoration and enabling one common set of policies and monitoring for client services. That design choice is where the Zero Trust principle of “assume breach” meets high‑availability operational practice: assume any single domain can fail, then guarantee continuity with an independent, verified alternative.

The Zero Trust framing: what it buys beyond redundancy

Zero Trust typically refers to identity, device posture, and micro‑segmentation. Applied to network infrastructure, the mentality reframes resilience around separation of duties, least‑privilege control of management planes, and continuous verification of state across domains.

Key operational consequences of applying Zero Trust to optical networking:

- Management plane isolation: separate NMS, automation domains, and credential stores reduce the likelihood that a compromised script or accidental automation change in one domain disrupts both. This is the same “never trust the in‑band management” logic used across identity and endpoint Zero Trust implementations.

- Verification and observability: telemetry must be gathered from both optical domains and verified independently so automated failover decisions don't blindly trust a single set of signals. Having independent instrumentation reduces correlated false positives and enables cross‑validation of alarms.

- Least‑privilege operational tooling: access to provisioning APIs for the production and BCDR domains should be controlled and audited separately, so a single operator error (or compromised credential) cannot flip both networks. Microsoft’s Secure Future Initiative and Zero Trust guidance point to similar administrative segregation in other layers.

- AI‑assisted operations with guardrails: Microsoft is integrating Copilot and other AI tools into engineering workflows for credential rotation, incident enrichment, and provisioning validation. That can speed recovery and reduce human error—but it also requires careful governance to prevent an automated action from making a transient issue systemic. Microsoft explicitly calls out automation and AI as part of ongoing evolution.
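The verification point in the list above (do not let a failover decision trust a single set of signals) can be illustrated with a small quorum check. This is a hedged sketch: `HealthReport`, `domain_is_down`, the source names, and the quorum of two are assumptions made for illustration, not details from Microsoft’s write‑up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HealthReport:
    """Hypothetical health signal from one independently managed source."""
    source: str    # e.g. a domain's own NMS, or a probe on the edge switch
    domain: str    # "roadm" or "mux"
    healthy: bool


def domain_is_down(reports, domain, quorum=2):
    """Declare a domain down only when `quorum` distinct sources agree."""
    failing_sources = {
        r.source for r in reports if r.domain == domain and not r.healthy
    }
    return len(failing_sources) >= quorum


reports = [
    HealthReport("roadm-nms", "roadm", healthy=False),
    HealthReport("edge-switch-probe", "roadm", healthy=False),
    HealthReport("mux-nms", "mux", healthy=True),
    HealthReport("edge-switch-probe", "mux", healthy=True),
]

print(domain_is_down(reports, "roadm"))      # two independent sources agree
print(domain_is_down(reports[1:], "roadm"))  # one source only: do not act yet
```

Counting distinct sources rather than raw alarms means a single noisy or compromised monitor cannot trigger a failover by repeating itself.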

Strengths: what’s compelling about Microsoft’s approach

- True independence: Microsoft didn’t just add another fiber in the same conduit or another card in the same chassis; they engineered physically independent fibers, power, and management domains. That materially reduces correlated failures from fiber cuts, power events, and single‑vendor control plane issues.

- Operational simplicity at the user layer: unifying the domains into a single logical port channel is a pragmatic choice that preserves user experience. Engineers and product teams do not have to change their endpoints during failover.

- Practical cost/risk balance: ROADM gives programmability and spectral efficiency (supporting 400G and future 800G channels on flexible grids), while a simpler MUX backup reduces the operational surface area an emergency must navigate. This mix is a good pragmatic compromise between capability and survivability.

- Realistic SLO target: Microsoft states an internal goal of “less than six minutes of downtime per year,” which corresponds roughly to 99.999% (five‑nines) availability, a rigorous objective that quantifies expectations and drives engineering trade‑offs. Five nines maps to about 5.26 minutes of allowable annual downtime.
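The arithmetic behind the SLO figure, and the reason two independent domains help reach it, can be worked through directly. This is a sketch under explicit assumptions: the year is averaged to 365.25 days, the two domains are treated as failing independently with instantaneous failover, and the 99.9% per‑domain figure is purely illustrative rather than a number Microsoft has published.

```python
MINUTES_PER_YEAR = 365.25 * 24 * 60  # 525,960 minutes, averaging in leap years


def allowed_downtime_minutes(availability: float) -> float:
    """Downtime budget per year for a given availability fraction."""
    return (1.0 - availability) * MINUTES_PER_YEAR


def parallel_availability(a: float, b: float) -> float:
    """Availability when either of two independent domains can carry traffic."""
    return 1.0 - (1.0 - a) * (1.0 - b)


five_nines_budget = allowed_downtime_minutes(0.99999)
print(f"{five_nines_budget:.2f} minutes/year")  # 5.26 minutes/year

# Illustrative only: two domains at 99.9% each, failing independently.
combined = parallel_availability(0.999, 0.999)
print(f"{combined:.6f}")  # 0.999999
```

The independence assumption is the whole point of the physical and management separation: any shared conduit, power feed, or control plane makes the real combined figure lower than this idealized product.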

Risks, gaps, and hard operational trade‑offs

No architecture is risk‑free; the Microsoft design improves resilience but introduces new operational and threat surfaces that deserve attention.

1) Complexity transferred to cross‑domain orchestration and telemetry

Failover logic that stitches two different optical control planes together needs robust cross‑domain validation. If the decision system relies on incomplete telemetry, or if timing windows for failover are misaligned between domains, there can be transient packet loss, microbursts, or reordering that affect sensitive workloads (high‑frequency builds, distributed databases). Designing the orchestration engine, whether deterministic or AI‑assisted, requires careful testing and rollback semantics.

2) Management and software supply chain risks

Two separate control planes reduce correlated risk, but they also double the number of vendor stacks, patches, and firmware lifecycles to manage. Each management system must be hardened, credentialed, and monitored separately; if one domain’s management is poorly configured it becomes the weak link. Zero Trust principles mitigate this, but require sustained investment in identity governance and operational discipline.

3) Residual single points and shared dependencies

Physical separation reduces shared‑fiber risk, but not all dependencies can be fully diversified. Examples that must be audited:

- Datacenter power grids and building‑level UPS/generator dependencies.

- Shared physical infrastructure at POPs or carrier interconnects.

- Common inventory items (e.g., shared transponder types, common fiber handoffs at the street‑level).
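An audit of this kind reduces to intersecting the dependency sets of the primary and backup paths. The sketch below assumes an inventory already exists as sets per category; `shared_dependencies` and every conduit, power feed, and handoff name are hypothetical examples, not real inventory data.

```python
def shared_dependencies(primary, backup):
    """Return, per dependency category, the elements both paths rely on."""
    shared = {}
    for category, elements in primary.items():
        overlap = elements & backup.get(category, set())
        if overlap:
            shared[category] = overlap
    return shared


# Hypothetical inventory for one site pair.
primary_path = {
    "conduits": {"conduit-A", "conduit-B"},
    "power_feeds": {"ups-bldg1-east"},
    "handoffs": {"street-vault-17"},
}
backup_path = {
    "conduits": {"conduit-C", "conduit-D"},
    "power_feeds": {"ups-bldg1-west"},
    "handoffs": {"street-vault-17"},
}

print(shared_dependencies(primary_path, backup_path))
# {'handoffs': {'street-vault-17'}}
```

An empty result is the goal; any non‑empty category is a residual single point that either needs rerouting or an explicit, documented risk acceptance.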

4) Testing discipline and “unknown unknowns”

You cannot prove resilience without realistic chaos testing: announced and unannounced failover tests, simulated multi‑failures, and routine rehearsals that exercise human processes (incident triage, vendor escalation, cross‑team coordination). Microsoft’s story shows an absence of catastrophic outages since 2022, but any expansion to additional metros must scale testing programs proportionally. Overconfidence without frequent validation is a real hazard.

5) AI automation’s double‑edged sword

Copilot and other AI tools can accelerate remediation, but automation that has no human‑in‑the‑loop constraints can propagate mistakes rapidly. Microsoft’s plan to use AI for ticket enrichment, auto‑remediation, and capacity planning is promising, provided guardrails, approvals, and transparent audit trails exist. Explicitly log and require human confirmation for actions that can affect both domains.

Practical operational guidance: for engineering teams considering this model

If you’re evaluating an in‑house optical + BCDR program, consider these validated, practical steps distilled from Microsoft’s experience and industry practice:

- Inventory and threat model first. Map fiber paths, physical conduits, power feeds, management planes, and third‑party handoffs. Prioritize sites by criticality (AI compute, secure access workspaces, datacenters).

- Design for operational separation, not just physical diversity. Make the backup an independently operated product with its own NMS, logs, and runbooks. Avoid shared automation domains unless explicitly architected to fail safe.

- Unify the client-facing experience via logical termination. Use link aggregation / port channeling and intelligent L2/L3 edge devices to present a single interface for applications—this reduces downstream complexity and speeds incident response.

- Adopt incremental validation and chaos testing. Schedule regular failover drills, inject simulated fiber cuts, and validate that higher‑order services (IDP, CI/CD, storage) behave as expected during domain failover. Log lessons and update SLOs accordingly.

- Harden management and automation. Apply Zero Trust to control planes: MFA, short‑lived credentials, per‑system RBAC, separate automation pipelines, and tightly scoped service principals. Treat the optical management plane like any other sensitive cloud service.

- Build AI assist with conservative guardrails. Use AI for diagnostics and remediation recommendations first; escalate to semi‑automated or automated action only after robust verification, approvals, and staged rollout policies are in place.
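The guardrail guidance in the last two items can be expressed as a small policy gate. This is one possible shape, offered as an assumption‑laden sketch: `ProposedAction`, `evaluate`, the `VERIFIED_AUTOMATIONS` allowlist, and the action names are hypothetical, and a real deployment would back the audit log with durable, tamper‑evident storage.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN = "needs_human"


@dataclass(frozen=True)
class ProposedAction:
    """Hypothetical change proposed by automation or an AI assistant."""
    kind: str
    domains: frozenset       # optical domains the change would touch
    read_only: bool = False


# Assumed allowlist of single-domain automations that passed staged rollout.
VERIFIED_AUTOMATIONS = {"rotate_credential"}

audit_log = []  # every evaluation is recorded, whether allowed or not


def evaluate(action, human_approved=False):
    if action.read_only:
        decision = Decision.ALLOW            # diagnostics never change state
    elif len(action.domains) > 1:
        # Anything touching both domains always needs a human confirmation.
        decision = Decision.ALLOW if human_approved else Decision.NEEDS_HUMAN
    elif action.kind in VERIFIED_AUTOMATIONS or human_approved:
        decision = Decision.ALLOW
    else:
        decision = Decision.NEEDS_HUMAN
    audit_log.append(
        (action.kind, sorted(action.domains), human_approved, decision.value)
    )
    return decision


both = frozenset({"roadm", "mux"})
print(evaluate(ProposedAction("collect_diagnostics", both, read_only=True)))
print(evaluate(ProposedAction("rotate_credential", frozenset({"mux"}))))
print(evaluate(ProposedAction("rotate_credential", both)))
print(evaluate(ProposedAction("rotate_credential", both), human_approved=True))
```

Starting with a short allowlist and widening it only after each automation has been verified mirrors the staged escalation from recommendation to semi‑automated to automated action.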

Measuring success—and the math behind five nines

Microsoft’s stated service target, “less than six minutes of downtime per year,” is in effect a 99.999% (five nines) availability goal. Translating percentage availability into real time is straightforward: five nines equates to about 5.26 minutes of allowed downtime per year, a commonly accepted industry conversion. This SLO clarifies expectations and forces the right investments (physical diversity, fast restoration, predictive telemetry). However, five nines remains an aspirational target for many organizations because the marginal cost to shave minutes off availability rises rapidly. Decide whether five nines is mission‑critical for your workloads, and quantify the business value before committing capital and operational overhead.

Long‑term outlook: scale, AI, and where the industry is headed

- Optical hardware trends: flexible‑grid CDC ROADMs and coherent pluggables are becoming mainstream, enabling 400G and 800G coherent channels and maximizing spectral efficiency. That makes ROADM‑first deployments future‑proof but increases the need for sophisticated power, amplification, and control plane management.

- Network automation and AI: research into multi‑failure localization and AI‑driven restoration is active; promising work shows machine learning can improve fault localization and restoration times in ROADM mesh networks—but these techniques are sensitive to training datasets and observability quality. Operators should pilot ML approaches tightly and validate against adversarial scenarios.

- Operational convergence: Expect more organizations to adopt the same “treat the physical layer like software” approach—automation, policy‑driven provisioning, and Zero Trust for control planes. The hard part is cultural: operations teams must acquire software engineering practices, and software teams must learn fiber realities. Microsoft’s program explicitly targets that cross‑discipline growth.

Final assessment — value and caution

Microsoft’s Zero Trust Optical BCDR is a persuasive blueprint for enterprise environments that cannot tolerate even short outages. The combination of a programmable, high‑capacity ROADM domain and an independent, lower‑complexity MUX backup, logically unified at the edge, strikes a pragmatic compromise between capability and survivability. The strengths are clear: true physical independence, improved engineer experience, and a measurable SLO tied to business impact.

That said, the architecture is not a silver bullet. It transfers complexity into cross‑domain orchestration, increases management footprint, and requires continuous discipline around testing, software supply chain hygiene, and AI governance. Organizations that lack mature operations, rigorous testing regimes, or the budget to maintain two fully independent domains should weigh whether a scaled‑down variant (stronger path diversity, multi‑carrier strategies, or provider‑hosted optical protection) would deliver better cost/benefit for their needs.

For organizations that decide to proceed, the checklist is clear: inventory aggressively, design for independent management, unify the client interface, harden control planes to Zero Trust standards, and automate with conservatism and observable guardrails. Do those things and the “life‑raft” becomes a reliable lifeline rather than an untested safety net.

Microsoft’s experience shows a pragmatic path to enterprise optical resilience: treat the physical network with the same adversarial thinking you apply to identity and endpoints, insist on separation of control, and make failover transparent for users. The payoff—reduced risk of catastrophic outage, reduced operational firefighting, and preserved business momentum—is measurable. The catch is you must commit to the people, process, and tooling investments that make that promise real.

Key technical references and claims in this piece were cross‑checked against Microsoft’s published Inside Track blog on campus optical networks and Zero Trust guidance, descriptive vendor documentation describing CDC ROADM functionality, and industry uptime mathematics. Statements drawn directly from Microsoft’s Inside Track material and internal design descriptions reflect the company’s public write‑up of the Zero Trust Optical BCDR architecture; vendor and standards references were used to validate optical technology descriptions and availability calculations.

Source: Microsoft, “Keeping our in-house optical network safe with a Zero Trust mentality,” Inside Track Blog