OpenAI, Microsoft, Amazon, Epic, and a growing class of wearable and direct-to-consumer health companies are now racing in 2026 to build patient-facing AI copilots that interpret records, labs, device data, and care instructions for ordinary users. The contest is not really about who can make a chatbot sound medically fluent. It is about who gets to sit between the patient and the healthcare system when confusion, anxiety, lab results, symptoms, bills, and appointments collide. That makes the market enormous, but also unusually dangerous.

For years, the consumer AI pitch was productivity: write the email, summarize the meeting, search the web, draft the spreadsheet formula. Healthcare changes the stakes. A bad meeting summary wastes time; a bad health interpretation can delay care, trigger unnecessary care, or persuade a frightened person that the wrong thing matters.

That is why the emerging “health copilot” race is different from the familiar platform wars around Windows, Office, search, and cloud. The winners will not merely gain engagement minutes. They may gain a privileged position in the patient’s daily health loop: explaining abnormal labs, translating doctor-speak, suggesting questions for appointments, interpreting wearable trends, and nudging users toward care.

The premise is seductive because the current healthcare experience is so hostile to normal people. Records are fragmented, lab portals are opaque, clinical notes are full of abbreviations, and discharge instructions often arrive when patients are least able to process them. If AI can make that mess intelligible, it could become one of the few consumer AI use cases that feels less like novelty and more like infrastructure.

But the same feature that makes these tools valuable also makes them hard to govern. A patient-facing health copilot that only says “ask your doctor” is useless. A copilot that confidently tells users what to do becomes, in practical terms, a medical actor. The industry is trying to occupy the narrow space between those two extremes.

The new copilots orbit the patient. They start from the consumer’s question, not the clinic’s workflow. Why is my ALT high? What does metoprolol do? Is this sleep trend meaningful? What should I ask my pulmonologist? Can I upload this photo? Can you summarize everything before my appointment?

That inversion is why OpenAI, Microsoft, and Amazon are dangerous entrants. They do not need to win the hospital contract first. They can begin with consumer habit, layer in records and device data, then become the interface through which patients make sense of the system.

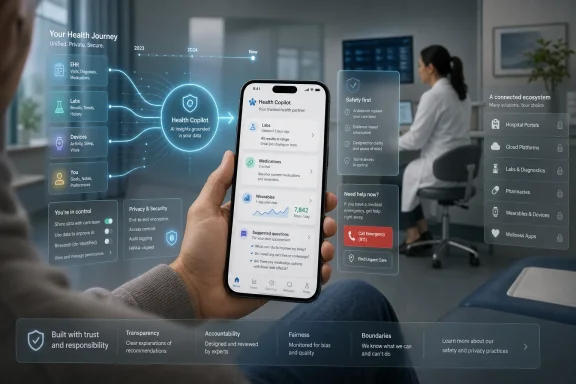

OpenAI’s ChatGPT Health, launched in early 2026, is framed as a dedicated health experience that can connect medical records through b.well, integrate wellness and lab data, and isolate health conversations from ordinary chats. Microsoft’s Copilot Health, announced in March 2026, follows a similar pattern with HealthEx record connectivity, wearable integrations, provider directories, insurance context, and physician-reviewed medical content. Amazon, with One Medical and its consumer reach, has obvious reasons to make the same move.

Epic is the counterweight. Its patient-facing assistant, Emmie, lives closer to the system of record and inside the MyChart universe where millions of patients already receive results, schedule care, and message clinicians. If Big Tech owns the general-purpose AI habit, Epic owns the institutional workflow. The race is therefore not just AI company versus AI company. It is consumer platform versus clinical platform.

That is why claims of a $100 billion patient engagement opportunity do not sound absurd, even if the exact number depends on how broadly one defines the category. The spend is scattered across care navigation, chronic disease management, remote patient monitoring, medication adherence, call centers, benefits navigation, digital therapeutics, portal tools, and administrative services. AI vendors see a chance to bundle many of those functions into a conversational layer.

The consumer demand is already visible. People ask general-purpose chatbots about symptoms because the alternative is often waiting, paying, searching, or guessing. People paste lab results into AI tools because portals deliver red numbers faster than doctors can explain them. People turn to wearables because healthcare still treats most data as episodic while bodies generate signals continuously.

This is where patient-facing copilots have a credible wedge. They do not need to cure cancer or replace doctors to be useful. They need to reduce the fog around healthcare enough that users return. Summarizing a hospital discharge packet, preparing questions for a specialist visit, explaining why a medication was prescribed, or translating a pathology report into plain English may be enough to create habit.

The danger is that habit can become reliance before the evidence catches up. A health copilot may begin as an explainer and end up as a de facto triage service because users will ask triage questions. The boundary between “education” and “advice” is not a product setting. It is a lived interaction.

That is why integrations matter more than demos. OpenAI’s b.well connection and Microsoft’s HealthEx partnership are not plumbing trivia; they are the strategic core. The consumer health assistant that can pull together EHR data, lab history, device metrics, and user-uploaded documents has a far better chance of becoming trusted.

The standards story is better than it used to be. FHIR APIs, interoperability rules, patient access mandates, and large-scale data networks have made record retrieval less science fiction than it once was. But anyone who has tried to assemble a complete medical history knows the remaining gap. Records are duplicated, missing, coded inconsistently, delayed, or scattered across institutions that barely talk to one another.

Wearables add a second kind of fragmentation. Apple Health, Fitbit, Oura, Garmin, WHOOP, continuous glucose monitors, smart scales, sleep trackers, and direct-to-consumer lab companies all generate signals with different levels of accuracy and clinical relevance. The copilot’s job is not merely to ingest those signals. It must decide which ones matter, which ones are noise, and when a trend should become a recommendation.

This is where the product problem becomes a clinical problem. A user wants a simple answer: am I okay? The system may have incomplete records, noisy wearable data, an outdated medication list, and no knowledge of a recent phone call with a nurse. The assistant must sound helpful without pretending it knows more than it does.

The key question is whether a copilot merely provides general information or influences diagnosis, treatment, or care decisions. Once a system starts interpreting symptoms, suggesting urgency, recommending changes, or ranking care options, regulators and plaintiffs’ lawyers will treat it differently. The “this is not medical advice” disclaimer may not be enough if the product experience functions like advice.

State lawmakers are already probing this boundary. New York’s SB 7263 has drawn attention because it would create liability for chatbot operators whose systems provide substantive responses that would constitute unauthorized professional practice if delivered by a human. Similar concerns are appearing elsewhere as states move faster than federal agencies.

That patchwork is bad news for scaling. A consumer AI company wants one national experience. Healthcare law may force fifty subtly different ones, especially if high-risk topics such as mental health, pediatrics, reproductive care, controlled substances, or emergency symptoms are handled differently by jurisdiction.

Regulation will also shape product tone. The more cautious the legal environment becomes, the more copilots will hedge, refuse, escalate, or redirect. That may protect companies, but it could erode usefulness. The winner will be the company that makes safety feel like competence rather than evasion.

This cuts both ways. A patient who arrives with a clear summary, medication list, timeline, and prepared questions is a gift. Many clinicians already spend precious minutes reconstructing basic facts that an assistant could organize in advance. In that scenario, AI reduces friction and makes the human encounter more productive.

But the reverse scenario is just as plausible. A patient receives an alarming explanation of a marginal lab abnormality, books an urgent appointment, sends multiple portal messages, or demands a specialist referral. The clinician now has to correct the model, reassure the patient, document the interaction, and absorb the extra workload.

This is not hypothetical in spirit. Every new consumer health information channel has produced downstream clinical demand, from online symptom checkers to direct-to-consumer genetic tests and wearable arrhythmia alerts. Some of that demand catches real problems. Some of it creates noise. Health systems live in the difference.

For hospitals, the strategic question is whether to fight these tools, integrate them, or build their own. Fighting them will not work if patients already use them. Building them requires resources and liability tolerance. Integrating them may be the most realistic path, but it creates a new dependency on vendors whose incentives are not identical to clinical priorities.

Imagine a copilot that notices a concerning trend, suggests a visit, surfaces nearby providers, checks insurance, and offers appointment scheduling. That is not just patient education. It is demand routing. In a fragmented, expensive system, whoever controls that routing layer can influence where patients go, which networks win, and which services get utilized.

This is where health systems should pay attention. Search engines and maps already influence provider discovery. Insurer directories influence network choice. Retail clinics, telehealth companies, and urgent care chains compete for low-acuity demand. A trusted AI assistant sitting on top of personal health data could become a more powerful referral gate than any of them.

There are obvious risks. If recommendations are shaped by paid placement, network contracts, or platform partnerships, patients will need transparency. If recommendations are purely algorithmic, the underlying ranking criteria still matter. If the assistant nudges users toward high-cost care too often, insurers will push back. If it misses serious cases, plaintiffs will.

The phrase “medical intelligence layer” sounds abstract until it becomes “the thing that told me where to go.” At that point, the health copilot is no longer an assistant in the background. It is part of the healthcare marketplace.

Consumers may not understand the difference between HIPAA-covered data, consumer health data, wellness data, uploaded documents, chatbot logs, and inferred health information. A wearable sleep score, a medication list, a fertility question, and a diagnosis code may travel through different legal regimes even though they feel equally sensitive to the user. That mismatch creates room for confusion and distrust.

The privacy issue is not only whether data is sold or used for training. It is also whether the user understands who can access it, how long it persists, whether it can be subpoenaed, whether it can be used for advertising, whether integrations can leak metadata, and whether deleting an account actually deletes derived information. In health, “trust us” has a short shelf life.

Security risk also rises with value. A general chatbot account is already sensitive. A chatbot account containing longitudinal medical records, lab results, reproductive health questions, psychiatric disclosures, insurance details, and wearable trends is a richer target. The more useful the product becomes, the more damaging a breach becomes.

For Microsoft and OpenAI, this is a particularly delicate reputational challenge. Both companies want to be trusted infrastructure providers for enterprises, governments, and health systems. A major health-data incident would not stay confined to a consumer feature. It would spill into the broader trust story around cloud, identity, and AI.

Startups can still win by owning narrower workflows with clearer accountability. A chronic kidney disease assistant, oncology navigation tool, maternal health coach, diabetes copilot, or medication adherence agent can be designed around specific clinical pathways, evidence, escalation rules, and reimbursement models. That may be less glamorous than a universal health assistant, but it can be easier to validate and sell.

Specialization also helps with liability. A tightly scoped tool can define what it does, measure outcomes, and build clinical governance around a narrower domain. A general-purpose assistant must handle the chaos of ordinary human health questions, including emergencies, rare diseases, comorbidities, and ambiguous symptoms.

The likely market structure is therefore layered. General assistants will become the front door for broad health understanding. EHR-native tools will handle portal-connected workflows. Specialized companies will manage high-value disease states, navigation problems, or administrative pain points. The question is how cleanly these layers will interoperate.

If they do not, the patient may end up with exactly what healthcare always produces: another fragmented stack. One assistant in the portal, another in the phone, another from the insurer, another from the employer, another from the wearable, and another from the pharmacy. The “copilot” could become five copilots arguing silently over an incomplete picture.

Escalation is the center of the product. When should the assistant tell a user to call emergency services? When should it recommend same-day care? When should it advise contacting a clinician within a week? When should it reassure? When should it refuse because the user is asking for something unsafe?

These decisions are not purely medical. They involve risk tolerance, jurisdiction, user context, access to care, age, pregnancy status, medication history, and sometimes social factors the model may not know. A user in a rural area at midnight is not in the same situation as a user across the street from an academic medical center.

Good escalation design will probably look less like chatbot cleverness and more like clinical operations. It will require protocols, guardrails, audit trails, human review, red-team testing, and feedback loops with providers. It may also require deliberate friction, the very thing consumer software companies usually try to eliminate.
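To make the operational framing concrete, the escalation tiers described above can be sketched as a small, auditable rule table. This is only an illustration under stated assumptions: the tier names, the symptom lists, the `Context` fields, and the "raise one tier when context is risky or missing" rule are all hypothetical placeholders, not clinical guidance and not any vendor's actual protocol.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    REASSURE = 0
    WITHIN_WEEK = 1
    SAME_DAY = 2
    EMERGENCY = 3


# Hypothetical symptom lists for illustration only; not clinical guidance.
EMERGENCY_FLAGS = frozenset({"chest pain", "trouble breathing"})
SAME_DAY_FLAGS = frozenset({"high fever", "persistent vomiting"})


@dataclass(frozen=True)
class Context:
    symptoms: frozenset[str]
    pregnant: bool = False
    records_complete: bool = True


def triage(ctx: Context) -> tuple[Tier, list[str]]:
    """Return an escalation tier plus the reasons behind it (the audit trail)."""
    reasons: list[str] = []
    emergency_hits = ctx.symptoms & EMERGENCY_FLAGS
    same_day_hits = ctx.symptoms & SAME_DAY_FLAGS

    if emergency_hits:
        tier = Tier.EMERGENCY
        reasons.append("emergency flag: " + ", ".join(sorted(emergency_hits)))
    elif same_day_hits:
        tier = Tier.SAME_DAY
        reasons.append("same-day flag: " + ", ".join(sorted(same_day_hits)))
    elif ctx.symptoms:
        tier = Tier.WITHIN_WEEK
        reasons.append("symptoms reported without a known flag")
    else:
        tier = Tier.REASSURE
        reasons.append("no symptoms reported")

    # Deliberate friction: risky or missing context raises the tier by one step.
    if tier in (Tier.WITHIN_WEEK, Tier.SAME_DAY) and (
        ctx.pregnant or not ctx.records_complete
    ):
        tier = Tier(tier + 1)
        reasons.append("raised one tier: pregnancy or incomplete records")

    return tier, reasons


tier, reasons = triage(Context(frozenset({"mild rash"}), records_complete=False))
print(tier.name, reasons)
```

The point of the design is that every decision carries its reasons, so a human reviewer can audit why a user was escalated. A real system would also need a refusal path for unsafe requests and protocols written and reviewed by clinicians.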

This is where health systems can add value if they move quickly. They understand escalation because they already run nurse lines, triage protocols, care management teams, and patient portals. If they can bring that operational knowledge into AI partnerships, they can shape the category instead of merely reacting to it.

Healthcare is a natural extension of that ambition. A user’s health data may live in records, PDFs, emails, calendar appointments, insurance documents, wearable apps, and browser searches. Microsoft’s platform story is that Copilot can sit across those surfaces and make them coherent. That is powerful if executed well and alarming if executed carelessly.

The enterprise implications are just as important. Employers, payers, hospitals, pharmaceutical companies, and benefits platforms all have reasons to adopt health AI interfaces. Microsoft already sells into many of those environments. If Copilot Health matures, it could become both a consumer feature and an enterprise wedge.

But Microsoft also carries the burden of being Microsoft. IT pros will ask about tenant boundaries, compliance, logging, identity, data residency, admin controls, third-party connectors, and model governance. In healthcare, those questions are not procurement theater. They are the difference between a pilot and production.

OpenAI faces a different version of the same issue. ChatGPT has massive consumer mindshare and a strong developer ecosystem, but healthcare buyers will demand institutional assurances. The company’s separation of health chats and stated privacy controls are important signals. The market will still judge the product by incidents, not intentions.

Trust in this market has several dimensions. Patients need to trust that the assistant will not exploit their data. Clinicians need to trust that it will not flood them with nonsense. Regulators need to trust that it will not practice medicine without accountability. Health systems need to trust that it will improve care rather than scramble workflows.

The companies building these copilots often speak as if trust can be engineered through model evaluations and privacy controls. Those are necessary, but trust will also be social and institutional. A tool recommended by a patient’s health system may be perceived differently from a tool launched by a consumer AI brand. A tool that documents its reasoning and cites source material may be perceived differently from one that simply responds.

The best products will likely be humble in visible ways. They will say what data they used. They will distinguish between general education and personal recommendation. They will admit uncertainty. They will make escalation easy. They will let users export summaries to clinicians without dumping raw chatbot transcripts into already overloaded inboxes.

The worst products will confuse personalization with authority. They will speak too confidently from incomplete data, bury disclaimers in onboarding screens, and optimize for engagement in a domain where engagement can become anxiety. In healthcare, the most addictive interface is not necessarily the best one.

Those goals can align, but they can also diverge. A subscription product may favor affluent, health-conscious users who already have access to care. An enterprise product may prioritize cost reduction for payers or employers. A provider-integrated product may reduce inbound messages by deflecting questions. A platform product may prioritize data gravity and ecosystem lock-in.

The ethical test is whether the assistant improves the patient’s actual path through care. Did it help the user ask better questions? Did it reduce confusion? Did it catch a dangerous pattern? Did it prevent an unnecessary visit? Did it avoid false reassurance? Did it make clinicians’ jobs easier rather than harder?

That test will require evidence beyond launch claims. We should expect studies comparing AI-assisted patients with control groups, analyses of portal message volume, measures of appointment appropriateness, patient comprehension scores, escalation accuracy, and adverse-event monitoring. The market is moving faster than that evidence base, which is normal for consumer technology and uncomfortable for medicine.

The next year will therefore be a live experiment. The products are arriving before the rules, the workflows, and the cultural norms are settled. That does not mean they should be dismissed. It means they should be watched with more seriousness than the average AI feature launch.

Source: HLTH The Patient-Facing Health Copilot Race

The Next Copilot War Moves From the Desktop to the Exam Room

For years, the consumer AI pitch was productivity: write the email, summarize the meeting, search the web, draft the spreadsheet formula. Healthcare changes the stakes. A bad meeting summary wastes time; a bad health interpretation can delay care, trigger unnecessary care, or persuade a frightened person that the wrong thing matters.

That is why the emerging “health copilot” race is different from the familiar platform wars around Windows, Office, search, and cloud. The winners will not merely gain engagement minutes. They may gain a privileged position in the patient’s daily health loop: explaining abnormal labs, translating doctor-speak, suggesting questions for appointments, interpreting wearable trends, and nudging users toward care.

The premise is seductive because the current healthcare experience is so hostile to normal people. Records are fragmented, lab portals are opaque, clinical notes are full of abbreviations, and discharge instructions often arrive when patients are least able to process them. If AI can make that mess intelligible, it could become one of the few consumer AI use cases that feels less like novelty and more like infrastructure.

But the same feature that makes these tools valuable also makes them hard to govern. A patient-facing health copilot that only says “ask your doctor” is useless. A copilot that confidently tells users what to do becomes, in practical terms, a medical actor. The industry is trying to occupy the narrow space between those two extremes.

Big Tech Has Found the Soft Underbelly of Patient Engagement

The most important shift is that patient engagement is escaping the hospital portal. For the last decade, health systems and EHR vendors tried to pull patients into controlled digital environments: MyChart messages, appointment reminders, discharge follow-ups, medication adherence prompts, and care management workflows. These tools matter, but they still orbit the provider.

The new copilots orbit the patient. They start from the consumer’s question, not the clinic’s workflow. Why is my ALT high? What does metoprolol do? Is this sleep trend meaningful? What should I ask my pulmonologist? Can I upload this photo? Can you summarize everything before my appointment?

That inversion is why OpenAI, Microsoft, and Amazon are dangerous entrants. They do not need to win the hospital contract first. They can begin with consumer habit, layer in records and device data, then become the interface through which patients make sense of the system.

OpenAI’s ChatGPT Health, launched in early 2026, is framed as a dedicated health experience that can connect medical records through b.well, integrate wellness and lab data, and isolate health conversations from ordinary chats. Microsoft’s Copilot Health, announced in March 2026, follows a similar pattern with HealthEx record connectivity, wearable integrations, provider directories, insurance context, and physician-reviewed medical content. Amazon, with One Medical and its consumer reach, has obvious reasons to make the same move.

Epic is the counterweight. Its patient-facing assistant, Emmie, lives closer to the system of record and inside the MyChart universe where millions of patients already receive results, schedule care, and message clinicians. If Big Tech owns the general-purpose AI habit, Epic owns the institutional workflow. The race is therefore not just AI company versus AI company. It is consumer platform versus clinical platform.

The Market Is Large Because the Problem Is Boring, Constant, and Unsolved

The phrase “patient engagement” has always sounded like consulting wallpaper, but the underlying problem is painfully real. Patients forget instructions, misunderstand medications, miss follow-ups, fail to complete referrals, and struggle to reconcile information from specialists, insurers, pharmacies, labs, and wearables. Every one of those gaps creates cost, harm, frustration, or all three.

That is why claims of a $100 billion patient engagement opportunity do not sound absurd, even if the exact number depends on how broadly one defines the category. The spend is scattered across care navigation, chronic disease management, remote patient monitoring, medication adherence, call centers, benefits navigation, digital therapeutics, portal tools, and administrative services. AI vendors see a chance to bundle many of those functions into a conversational layer.

The consumer demand is already visible. People ask general-purpose chatbots about symptoms because the alternative is often waiting, paying, searching, or guessing. People paste lab results into AI tools because portals deliver red numbers faster than doctors can explain them. People turn to wearables because healthcare still treats most data as episodic while bodies generate signals continuously.

This is where patient-facing copilots have a credible wedge. They do not need to cure cancer or replace doctors to be useful. They need to reduce the fog around healthcare enough that users return. Summarizing a hospital discharge packet, preparing questions for a specialist visit, explaining why a medication was prescribed, or translating a pathology report into plain English may be enough to create habit.

The danger is that habit can become reliance before the evidence catches up. A health copilot may begin as an explainer and end up as a de facto triage service because users will ask triage questions. The boundary between “education” and “advice” is not a product setting. It is a lived interaction.

The Winning Interface Will Be the One That Owns the Data Pipe

Every health copilot pitch eventually runs into the same problem: the assistant is only as useful as the data it can see. A chatbot without medical history produces generic guidance. A chatbot with longitudinal records, current medications, labs, claims, wearable trends, and appointment context can produce something that feels personal.

That is why integrations matter more than demos. OpenAI’s b.well connection and Microsoft’s HealthEx partnership are not plumbing trivia; they are the strategic core. The consumer health assistant that can pull together EHR data, lab history, device metrics, and user-uploaded documents has a far better chance of becoming trusted.

The standards story is better than it used to be. FHIR APIs, interoperability rules, patient access mandates, and large-scale data networks have made record retrieval less science fiction than it once was. But anyone who has tried to assemble a complete medical history knows the remaining gap. Records are duplicated, missing, coded inconsistently, delayed, or scattered across institutions that barely talk to one another.

Wearables add a second kind of fragmentation. Apple Health, Fitbit, Oura, Garmin, WHOOP, continuous glucose monitors, smart scales, sleep trackers, and direct-to-consumer lab companies all generate signals with different levels of accuracy and clinical relevance. The copilot’s job is not merely to ingest those signals. It must decide which ones matter, which ones are noise, and when a trend should become a recommendation.

This is where the product problem becomes a clinical problem. A user wants a simple answer: am I okay? The system may have incomplete records, noisy wearable data, an outdated medication list, and no knowledge of a recent phone call with a nurse. The assistant must sound helpful without pretending it knows more than it does.
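A small sketch shows what "not pretending to know more than it does" could look like at the data layer. It reads a lab result shaped like a FHIR `Observation` resource (the `valueQuantity` and `referenceRange` fields are part of that standard) and declines to classify the value when the record carries no reference range. The function name, the return labels, and the sample numbers are assumptions made for illustration; reference ranges vary by laboratory.

```python
from typing import Optional


def classify_observation(obs: dict) -> Optional[str]:
    """Return 'low', 'high', or 'in_range'; return None when data is missing."""
    value = obs.get("valueQuantity", {}).get("value")
    ranges = obs.get("referenceRange") or []
    if value is None or not ranges:
        return None  # incomplete record: do not guess

    low = ranges[0].get("low", {}).get("value")
    high = ranges[0].get("high", {}).get("value")
    if low is None and high is None:
        return None  # a range entry exists but carries no usable bounds

    if low is not None and value < low:
        return "low"
    if high is not None and value > high:
        return "high"
    return "in_range"


# Illustrative sample only; the numbers are placeholders, not a clinical reference.
sample_alt = {
    "resourceType": "Observation",
    "code": {"text": "ALT"},
    "valueQuantity": {"value": 72, "unit": "U/L"},
    "referenceRange": [{"low": {"value": 7}, "high": {"value": 56}}],
}

print(classify_observation(sample_alt))
print(classify_observation({"resourceType": "Observation"}))
```

Returning `None` rather than a default label forces the calling code to handle the "we do not know" case explicitly, which is the behavior the paragraph above asks of the assistant itself.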

The Regulatory Wall Is Not One Wall

The easiest mistake is to describe regulation as a single obstacle that will eventually be solved. In reality, patient-facing health copilots face a stack of overlapping legal regimes, each with different triggers. HIPAA is one layer, but it is not the whole universe. FDA software rules, state medical practice laws, consumer privacy statutes, unfair and deceptive practices enforcement, product liability, and professional licensing rules can all matter depending on what the tool does.

The key question is whether a copilot merely provides general information or influences diagnosis, treatment, or care decisions. Once a system starts interpreting symptoms, suggesting urgency, recommending changes, or ranking care options, regulators and plaintiffs’ lawyers will treat it differently. The “this is not medical advice” disclaimer may not be enough if the product experience functions like advice.

State lawmakers are already probing this boundary. New York’s SB 7263 has drawn attention because it would create liability for chatbot operators whose systems provide substantive responses that would constitute unauthorized professional practice if delivered by a human. Similar concerns are appearing elsewhere as states move faster than federal agencies.

That patchwork is bad news for scaling. A consumer AI company wants one national experience. Healthcare law may force fifty subtly different ones, especially if high-risk topics such as mental health, pediatrics, reproductive care, controlled substances, or emergency symptoms are handled differently by jurisdiction.

Regulation will also shape product tone. The more cautious the legal environment becomes, the more copilots will hedge, refuse, escalate, or redirect. That may protect companies, but it could erode usefulness. The winner will be the company that makes safety feel like competence rather than evasion.

Doctors Will Inherit the Side Effects

Patient-facing health copilots are marketed as empowering tools, but clinicians will feel the operational consequences first. A better-informed patient can improve a visit. A worried patient armed with misunderstood AI output can consume a visit.

This cuts both ways. A patient who arrives with a clear summary, medication list, timeline, and prepared questions is a gift. Many clinicians already spend precious minutes reconstructing basic facts that an assistant could organize in advance. In that scenario, AI reduces friction and makes the human encounter more productive.

But the reverse scenario is just as plausible. A patient receives an alarming explanation of a marginal lab abnormality, books an urgent appointment, sends multiple portal messages, or demands a specialist referral. The clinician now has to correct the model, reassure the patient, document the interaction, and absorb the extra workload.

This is not hypothetical in spirit. Every new consumer health information channel has produced downstream clinical demand, from online symptom checkers to direct-to-consumer genetic tests and wearable arrhythmia alerts. Some of that demand catches real problems. Some of it creates noise. Health systems live in the difference.

For hospitals, the strategic question is whether to fight these tools, integrate them, or build their own. Fighting them will not work if patients already use them. Building them requires resources and liability tolerance. Integrating them may be the most realistic path, but it creates a new dependency on vendors whose incentives are not identical to clinical priorities.

The Referral Layer Is Where the Money Gets Interesting

The most under-discussed part of the health copilot race is care navigation. Explaining lab results is useful. Steering a patient toward the next step is monetizable.

Imagine a copilot that notices a concerning trend, suggests a visit, surfaces nearby providers, checks insurance, and offers appointment scheduling. That is not just patient education. It is demand routing. In a fragmented, expensive system, whoever controls that routing layer can influence where patients go, which networks win, and which services get utilized.

This is where health systems should pay attention. Search engines and maps already influence provider discovery. Insurer directories influence network choice. Retail clinics, telehealth companies, and urgent care chains compete for low-acuity demand. A trusted AI assistant sitting on top of personal health data could become a more powerful referral gate than any of them.

There are obvious risks. If recommendations are shaped by paid placement, network contracts, or platform partnerships, patients will need transparency. If recommendations are purely algorithmic, the underlying ranking criteria still matter. If the assistant nudges users toward high-cost care too often, insurers will push back. If it misses serious cases, plaintiffs will.

The phrase “medical intelligence layer” sounds abstract until it becomes “the thing that told me where to go.” At that point, the health copilot is no longer an assistant in the background. It is part of the healthcare marketplace.

Privacy Is the Feature That Can Break the Product

Health AI companies know privacy is a central concern, which is why the launch language tends to emphasize separation, controls, non-training commitments, security, and physician review. That is necessary. It is not sufficient.

Consumers may not understand the difference between HIPAA-covered data, consumer health data, wellness data, uploaded documents, chatbot logs, and inferred health information. A wearable sleep score, a medication list, a fertility question, and a diagnosis code may travel through different legal regimes even though they feel equally sensitive to the user. That mismatch creates room for confusion and distrust.

The privacy issue is not only whether data is sold or used for training. It is also whether the user understands who can access it, how long it persists, whether it can be subpoenaed, whether it can be used for advertising, whether integrations can leak metadata, and whether deleting an account actually deletes derived information. In health, “trust us” has a short shelf life.

Security risk also rises with value. A general chatbot account is already sensitive. A chatbot account containing longitudinal medical records, lab results, reproductive health questions, psychiatric disclosures, insurance details, and wearable trends is a richer target. The more useful the product becomes, the more damaging a breach becomes.

For Microsoft and OpenAI, this is a particularly delicate reputational challenge. Both companies want to be trusted infrastructure providers for enterprises, governments, and health systems. A major health-data incident would not stay confined to a consumer feature. It would spill into the broader trust story around cloud, identity, and AI.

The Incumbents Have Distribution, but Startups Have Sharper Wedges

It is tempting to assume Big Tech and Epic will simply absorb the category. They have models, cloud, identity, consumer reach, enterprise relationships, and data partnerships. But healthcare rarely rewards horizontal scale alone.

Startups can still win by owning narrower workflows with clearer accountability. A chronic kidney disease assistant, oncology navigation tool, maternal health coach, diabetes copilot, or medication adherence agent can be designed around specific clinical pathways, evidence, escalation rules, and reimbursement models. That may be less glamorous than a universal health assistant, but it can be easier to validate and sell.

Specialization also helps with liability. A tightly scoped tool can define what it does, measure outcomes, and build clinical governance around a narrower domain. A general-purpose assistant must handle the chaos of ordinary human health questions, including emergencies, rare diseases, comorbidities, and ambiguous symptoms.

The likely market structure is therefore layered. General assistants will become the front door for broad health understanding. EHR-native tools will handle portal-connected workflows. Specialized companies will manage high-value disease states, navigation problems, or administrative pain points. The question is how cleanly these layers will interoperate.

If they do not, the patient may end up with exactly what healthcare always produces: another fragmented stack. One assistant in the portal, another in the phone, another from the insurer, another from the employer, another from the wearable, and another from the pharmacy. The “copilot” could become half a dozen copilots arguing silently over an incomplete picture.

The First Real Test Is Not Accuracy, but Escalation

The benchmark race matters. Physician-reviewed evaluations, medical benchmarks, and expert panels are useful signs that vendors are taking the domain seriously. But the hardest test for patient-facing health AI is not whether it can answer a textbook question. It is whether it knows when not to answer.

Escalation is the center of the product. When should the assistant tell a user to call emergency services? When should it recommend same-day care? When should it advise contacting a clinician within a week? When should it reassure? When should it refuse because the user is asking for something unsafe?

These decisions are not purely medical. They involve risk tolerance, jurisdiction, user context, access to care, age, pregnancy status, medication history, and sometimes social factors the model may not know. A user in a rural area at midnight is not in the same situation as a user across the street from an academic medical center.

Good escalation design will probably look less like chatbot cleverness and more like clinical operations. It will require protocols, guardrails, audit trails, human review, red-team testing, and feedback loops with providers. It may also require deliberate friction, the very thing consumer software companies usually try to eliminate.
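To make the "protocols plus audit trails" idea concrete, here is a minimal sketch of how escalation tiers might be encoded as explicit rules rather than left to model judgment. Everything in it is an assumption for illustration: the tier names follow the questions above, while the rule names, the context keys (`red_flag`, `pregnant`, `new_symptom`, `abnormal_lab`), and the default tier are hypothetical placeholders, not clinical guidance or any vendor's design.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Callable


class Escalation(IntEnum):
    """Tiers taken from the article's questions, ordered least to most urgent."""
    REASSURE = 0
    CLINICIAN_WITHIN_WEEK = 1
    SAME_DAY_CARE = 2
    EMERGENCY_SERVICES = 3


@dataclass
class Rule:
    name: str
    level: Escalation
    applies: Callable[[dict], bool]


@dataclass
class Decision:
    level: Escalation
    fired: list[str] = field(default_factory=list)


# Hypothetical placeholder rules; a real protocol would come from clinicians.
RULES = [
    Rule("red_flag_symptom", Escalation.EMERGENCY_SERVICES,
         lambda ctx: ctx.get("red_flag", False)),
    Rule("pregnant_with_new_symptom", Escalation.SAME_DAY_CARE,
         lambda ctx: ctx.get("pregnant", False) and ctx.get("new_symptom", False)),
    Rule("abnormal_lab_flag", Escalation.CLINICIAN_WITHIN_WEEK,
         lambda ctx: ctx.get("abnormal_lab", False)),
]


def decide(ctx: dict, audit_log: list) -> Decision:
    """Pick the most urgent tier among matching rules and record why."""
    fired = [rule for rule in RULES if rule.applies(ctx)]
    # Assumption: fall back to the lowest tier when no rule matches.
    level = max((rule.level for rule in fired), default=Escalation.REASSURE)
    decision = Decision(level, [rule.name for rule in fired])
    # The audit entry keeps the inputs and the rules that fired for later review.
    audit_log.append(
        {"context": dict(ctx), "level": level.name, "fired": decision.fired}
    )
    return decision
```

Calling `decide({"abnormal_lab": True, "red_flag": True}, log)` would select the emergency tier because the most urgent matching rule wins, and the log entry records both rules that fired. The design choice worth noticing is that the escalation decision is inspectable and reviewable by humans, which is closer to a nurse-line protocol than to free-form chatbot output.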

This is where health systems can add value if they move quickly. They understand escalation because they already run nurse lines, triage protocols, care management teams, and patient portals. If they can bring that operational knowledge into AI partnerships, they can shape the category instead of merely reacting to it.

WindowsForum Readers Should See the Platform Pattern

For Windows and Microsoft watchers, Copilot Health is part of a familiar strategic arc. Microsoft does not merely want Copilot to be a chat window. It wants Copilot to become a context layer across work, personal data, search, devices, cloud services, and regulated industries.

Healthcare is a natural extension of that ambition. A user’s health data may live in records, PDFs, emails, calendar appointments, insurance documents, wearable apps, and browser searches. Microsoft’s platform story is that Copilot can sit across those surfaces and make them coherent. That is powerful if executed well and alarming if executed carelessly.

The enterprise implications are just as important. Employers, payers, hospitals, pharmaceutical companies, and benefits platforms all have reasons to adopt health AI interfaces. Microsoft already sells into many of those environments. If Copilot Health matures, it could become both a consumer feature and an enterprise wedge.

But Microsoft also carries the burden of being Microsoft. IT pros will ask about tenant boundaries, compliance, logging, identity, data residency, admin controls, third-party connectors, and model governance. In healthcare, those questions are not procurement theater. They are the difference between a pilot and production.

OpenAI faces a different version of the same issue. ChatGPT has massive consumer mindshare and a strong developer ecosystem, but healthcare buyers will demand institutional assurances. The company’s separation of health chats and stated privacy controls are important signals. The market will still judge the product by incidents, not intentions.

The Race Will Be Won by Trust, Not Fluency

The least interesting thing about these systems is that they can explain a lab result in plain English. That is table stakes. The more important question is whether users, clinicians, regulators, and institutions can trust the assistant’s behavior over time.

Trust in this market has several dimensions. Patients need to trust that the assistant will not exploit their data. Clinicians need to trust that it will not flood them with nonsense. Regulators need to trust that it will not practice medicine without accountability. Health systems need to trust that it will improve care rather than scramble workflows.

The companies building these copilots often speak as if trust can be engineered through model evaluations and privacy controls. Those are necessary, but trust will also be social and institutional. A tool recommended by a patient’s health system may be perceived differently from a tool launched by a consumer AI brand. A tool that documents its reasoning and cites source material may be perceived differently from one that simply responds.

The best products will likely be humble in visible ways. They will say what data they used. They will distinguish between general education and personal recommendation. They will admit uncertainty. They will make escalation easy. They will let users export summaries to clinicians without dumping raw chatbot transcripts into already overloaded inboxes.

The worst products will confuse personalization with authority. They will speak too confidently from incomplete data, bury disclaimers in onboarding screens, and optimize for engagement in a domain where engagement can become anxiety. In healthcare, the most addictive interface is not necessarily the best one.

The Copilot That Patients Deserve Is Harder Than the One Investors Want

The commercial fantasy is simple: a universal AI health companion that users consult every day, connected to all records and devices, monetized through subscriptions, enterprise deals, navigation, or partnerships. The patient need is more modest and more demanding: a reliable interpreter that helps people understand what is happening without making the system worse.

Those goals can align, but they can also diverge. A subscription product may favor affluent, health-conscious users who already have access to care. An enterprise product may prioritize cost reduction for payers or employers. A provider-integrated product may reduce inbound messages by deflecting questions. A platform product may prioritize data gravity and ecosystem lock-in.

The ethical test is whether the assistant improves the patient’s actual path through care. Did it help the user ask better questions? Did it reduce confusion? Did it catch a dangerous pattern? Did it prevent an unnecessary visit? Did it avoid false reassurance? Did it make clinicians’ jobs easier rather than harder?

That test will require evidence beyond launch claims. We should expect studies comparing AI-assisted patients with control groups, analyses of portal message volume, measures of appointment appropriateness, patient comprehension scores, escalation accuracy, and adverse-event monitoring. The market is moving faster than that evidence base, which is normal for consumer technology and uncomfortable for medicine.

The next year will therefore be a live experiment. The products are arriving before the rules, the workflows, and the cultural norms are settled. That does not mean they should be dismissed. It means they should be watched with more seriousness than the average AI feature launch.

The Health Copilot Race Has Already Left the Waiting Room

The practical implications are becoming clear, even if the final market structure is not. Patients will use these tools because healthcare is confusing and the tools are convenient. Clinicians will encounter their output whether or not they asked for it. Health systems will have to decide whether to integrate, compete, or absorb the downstream demand.

- Patient-facing health copilots are moving from general medical Q&A toward personal health-data interpretation, which makes them more useful and more legally exposed.

- OpenAI and Microsoft are treating record connectivity and wearable integrations as core product infrastructure, not optional add-ons.

- Epic’s advantage is proximity to the clinical workflow, while Big Tech’s advantage is consumer habit and broader platform reach.

- The hardest product problem is safe escalation, because users will inevitably ask questions that cross from education into triage.

- Privacy, liability, and data fragmentation are not secondary obstacles; they are the factors that will determine which products can scale.

- Health systems that ignore consumer AI will still inherit its effects through portal messages, appointment demand, and patient expectations.

Source: HLTH The Patient-Facing Health Copilot Race