The arrival of clearly labeled, visually separated advertisements inside conversational AI marks the end of an era where chatbots were purely informational tools, and the start of a new, risk‑heavy commercial ecosystem for users, brands, and publishers alike. (https://openai.com/index/testing-ads-in-chatgpt/)

Source: Iosco County News Herald New world for users and brands as ads hit AI chatbots

Background

Chat interfaces transformed search and discovery by capturing richer intent than a single search query. What a user types into a chat (constraints, preferences, budgets, timelines) is precise context that marketers crave. That context is why a wave of companies began experimenting with ad formats tailored to conversation: the potential for high‑intent, moment‑of‑decision placements is enormous, but so are the risks to trust and privacy.

The industry turning point came in early 2026 when multiple firms publicly disclosed pilots or tests that put advertising into chat surfaces. OpenAI published a formal test plan to show ads to logged‑in adult users on free and lower‑cost “Go” tiers while keeping higher paid tiers ad‑free; the company emphasized principles such as answer independence, conversation privacy, and user controls. The announcement crystallized a cross‑industry pivot: monetization pressure at scale is forcing platforms to adapt ad stacks to the unique constraints of conversational UX.

That pivot has already sparked a public commercial and moral fight. Anthropic ran high‑profile Super Bowl creative that criticized in‑conversation ads and positioned its Claude assistant as ad‑free, triggering a public sparring match with OpenAI executives and widespread media coverage. The adverts (deliberately jarring dramatizations of a chatbot mid‑conversation pitching a product) underscore how sensitive users are to ad intrusions in a conversational context.

How ads are being implemented today

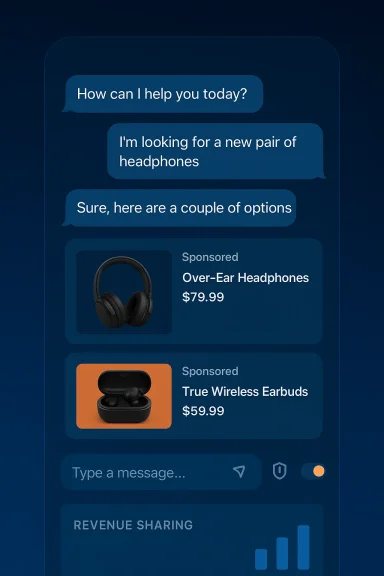

Ad formats in chat are experimental, but several patterns have emerged. Platforms have largely converged on features meant to preserve the integrity of model outputs while still surfacing commercial opportunities.

- Labeled cards or banners beneath answers. Ads are visually separated from the assistant’s generated text and labeled as “Sponsored” or similar to preserve answer independence. This is the most common early pattern.

- Sponsored follow‑up prompts. Services like Perplexity introduced sponsored follow‑ups — suggested next questions or prompts marked as sponsored — which invite users to explore a brand or product. These can appear after a synthesized answer.

- Shoppable cards and in‑chat commerce. Some prototypes show product tiles with price and a buy/learn CTA that keep users inside the chat flow rather than sending them out to a merchant. Platforms pitch this as friction reduction for purchase decisions.

- Contextual, limited personalization. Early pilots emphasize contextual targeting — matching ads to the immediate conversation topic — while promising to avoid selling raw chat transcripts. Platforms say they will exclude ads on sensitive topics like health or politics and not show ads to minors.
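
The contextual pattern described above (match on the current topic, skip sensitive topics and minors, always label the result) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any platform's actual logic: the keyword lists, the `Ad` and `SponsoredCard` types, and `select_ad` are all hypothetical, and a production system would presumably use a trained classifier rather than keyword matching.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical keyword lists; stand-ins for a real sensitive-topic classifier.
SENSITIVE_KEYWORDS = {
    "health": {"diagnosis", "symptom", "medication", "therapy"},
    "politics": {"election", "candidate", "ballot", "senator"},
}


@dataclass(frozen=True)
class Ad:
    advertiser: str
    headline: str
    keywords: frozenset


@dataclass(frozen=True)
class SponsoredCard:
    label: str  # always rendered, so the card is distinguishable from the answer
    advertiser: str
    headline: str


def tokens(text: str) -> set:
    """Lowercase the message and strip simple punctuation from each word."""
    return {word.strip(".,!?$").lower() for word in text.split()}


def is_sensitive(message: str) -> bool:
    """True if the message touches any excluded topic."""
    words = tokens(message)
    return any(words & keywords for keywords in SENSITIVE_KEYWORDS.values())


def select_ad(message: str, is_adult: bool, ads: list) -> Optional[SponsoredCard]:
    """Pick an ad using only the current message; return None when excluded."""
    if not is_adult or is_sensitive(message):
        return None
    words = tokens(message)
    best = max(ads, key=lambda ad: len(ad.keywords & words), default=None)
    if best is None or not (best.keywords & words):
        return None  # no topical match, so show nothing
    return SponsoredCard("Sponsored", best.advertiser, best.headline)


ads = [Ad("BlendCo", "Smoothie blenders from $99", frozenset({"blenders", "smoothies"}))]
print(select_ad("best blenders under $150 for smoothies", True, ads))
print(select_ad("which medication helps after a blender injury", True, ads))
```

Note that the selection function sees only the current message, never a stored profile or transcript, which is the property the "contextual" label is meant to convey.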

Who’s doing what: platform moves and positioning

OpenAI: test, label, promise

OpenAI’s public playbook is explicit: start small, test in the U.S. on Free and Go tiers, separate ads visually, and promise that answers remain independent from ads. The company also offers user controls to dismiss ads, delete ad data, and disable personalization. Those commitments are detailed in OpenAI’s testing announcement and policy notes.

Anthropic: ad‑free as a brand differentiator

Anthropic’s Super Bowl creative pivoted to a positioning play: call out the perceived harms of in‑conversation advertising and claim Claude will remain ad‑free. The campaign achieved two goals: generating press coverage and forcing a public debate about what responsible monetization should look like. But it also raised questions about whether ad‑free positioning is a durable competitive advantage for firms that must scale expensive infrastructure.

Microsoft: Copilot’s “ad voice” and ecosystem integration

Microsoft has been integrating sponsored content into Copilot‑style experiences since at least 2023 and has been explicit about an “ad voice” that explains why an ad is shown and how it connects to the user’s conversation. Microsoft frames ads as part of a seamless, contextual experience across Bing, Edge, and Copilot, emphasizing relevance and explanation.

Perplexity and publishers: revenue‑share experiments

Perplexity moved quickly to offer revenue sharing with publishers whose content is cited by answers. It added sponsored follow‑ups and a publishers’ program designed to give outlets a cut of ad revenue when their reporting underpins an AI answer. Perplexity’s approach is an early attempt to address the “zero‑click” problem that AI answers pose for journalism revenue.

Why this matters: the upside

Ads in chat can be monetarily potent and functionally useful in ways legacy ad surfaces cannot.

- High‑intent targeting. Chat captures explicit, sequential intent. A user who asks for “best blenders under $150 for smoothies” is a clearer purchase prospect than many keyword searches; ads served in that moment can convert at higher rates.

- Conversational commerce. When discovery, comparison, and checkout can happen inside the assistant, conversion funnels compress and brands can capture a larger share of value without reliance on intermediaries.

- Sustainable free access. For platforms, ad revenue can underwrite wider availability of powerful assistants without forcing all users onto paywalls. OpenAI frames its tests as a way to keep advanced capabilities accessible while charging for premium ad‑free tiers.

- New publisher revenue paths. Revenue‑share models like Perplexity’s create an alternative to referral traffic, directing ad dollars to content creators whose reporting informs AI answers. This could be one path to sustain independent journalism in an AI‑first world.

Major risks and why the backlash is credible

The flipside is stark: ads inside a medium people treat as private and helpful can erode the single most valuable asset for assistants: trust.

- Trust erosion and migration. Users are sensitive to ad intrusions in a conversational context; intrusive or poorly labeled ads can make assistants feel like another display network and push users toward paid, ad‑free alternatives or competitor products. Anthropic’s Super Bowl ads exploited this fear and turned it into a marketing message.

- Opaque targeting vectors. Platforms promise not to sell raw chat transcripts, but derived signals, aggregated models, or ephemeral features could still be used to target ads. That technical nuance matters to privacy regulators and to privacy‑conscious users, and promises alone will not suffice without independent audits.

- Publisher disintermediation. If assistants provide end‑to‑end answers and commerce without sending traffic to source sites, publishers lose the referral economics that fund reporting. Revenue‑share pilots help, but they may not scale widely enough or protect niche publishing models.

- Measurement and fraud gaps. Ad tech built for web pages doesn’t map neatly to conversational surfaces. Without new verification methods, advertisers may pay for low‑value impressions or metrics that are easy to game.

- Regulatory exposure. Consumer protection, advertising transparency, profiling rules, and data protection laws could all be triggered by in‑chat advertising. Regulators will scrutinize how personal data and derived features are used in ad selection and whether users receive actionable consent.

Measurement, verification, and new technical primitives

Conversational advertising requires different measurement and verification tools than display or search.

- Session‑level attribution. Attribution should respect session continuity rather than pageviews. Platforms must develop auditable session IDs and privacy‑preserving signals that link ad exposure to downstream conversion without revealing chat content.

- Impression validation. What counts as a valid impression in chat? Is a “sponsored follow‑up” that never gets tapped equivalent to a banner view? The industry needs standard definitions and third‑party validators.

- Anti‑fraud and bot detection. Conversational surfaces are particularly susceptible to automated or scripted query patterns that can distort measurement; new anti‑fraud tooling is required.

- Privacy‑first targeting. Techniques such as on‑device scoring, differential privacy, or aggregated cohort signals can limit exposure of raw conversations while enabling some personalization. Platforms should publish technical attestations of the methods they use and allow audits.
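
To make the "differential privacy" and "aggregated cohort signals" ideas in the list above concrete, here is a minimal sketch of a noisy cohort‑level conversion report using the Laplace mechanism. It is illustrative only and assumes several things not stated in the article: each session contributes at most one event (so the sensitivity of each count is 1), the cohort list is fixed and public, and the names `noisy_cohort_report`, `epsilon`, and `min_count` are hypothetical.

```python
import random
from collections import Counter


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_cohort_report(events, cohorts, epsilon, min_count=20, rng=None):
    """Return noisy conversion counts per cohort.

    events:  iterable of (cohort, converted) pairs, one per session (assumed).
    cohorts: fixed, public list of cohort names, including possibly empty ones.
    epsilon: privacy parameter; smaller values add more noise.
    """
    rng = rng or random.Random()
    true_counts = Counter(cohort for cohort, converted in events if converted)
    report = {}
    for cohort in cohorts:
        # Noise is added to every cohort, even those with a true count of zero.
        noisy = true_counts[cohort] + laplace_noise(1.0 / epsilon, rng)
        # Suppressing small values only post-processes already-noisy numbers.
        if noisy >= min_count:
            report[cohort] = round(noisy)
    return report


events = (
    [("kitchen", True)] * 200
    + [("kitchen", False)] * 300
    + [("travel", True)] * 3
)
report = noisy_cohort_report(
    events, ["kitchen", "travel", "garden"], epsilon=1.0, rng=random.Random(7)
)
print(report)
```

An advertiser receiving this report sees approximate cohort totals but no per‑session rows and no chat text; the exact guarantees depend on how sessions are bounded and how often reports are issued, which this sketch does not address.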

Practical guidance for stakeholders

For users

- Check and exercise controls. Turn off ad personalization, prefer contextual-only ads, and use ad data deletion options where available. Platforms say these controls exist; verify them in product settings.

- Prefer paid tiers when privacy matters. If you need ad‑free, uncompromised assistance for sensitive tasks, paid tiers remain the most reliable guarantee in the short term.

- Be skeptical of sponsored suggestions. Always verify claims the assistant makes, especially for health, legal, or financial decisions; sponsored prompts should be treated like any other ad.

For brands and marketers

- Design conversational‑first creative. Utility‑driven sponsored prompts or clear product cards work better than repurposed display creatives.

- Invest in GEO (Generative Engine Optimization). Structure content with FAQs, schema, and canonical answers so AI assistants can cite and attribute your content organically.

- Demand transparent measurement. Insist on auditable reporting, anti‑fraud safeguards, and clear definitions of what constitutes an impression or conversion in chat.
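
As one illustration of the "FAQs, schema, and canonical answers" advice in the list above, the sketch below generates schema.org `FAQPage` structured data as JSON‑LD. The `FAQPage`, `Question`, and `Answer` types are part of the schema.org vocabulary; the helper name `faq_jsonld` and the sample question are hypothetical, and whether any given assistant actually consumes this markup is an assumption rather than something the article confirms.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    ("Is the blender dishwasher safe?", "Yes, the jar and lid are top-rack safe."),
])
# Embed the output in a <script type="application/ld+json"> tag on the page.
print(json.dumps(faq, indent=2))
```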

For publishers

- Negotiate clear revenue‑share terms. If platforms surface your work and then monetize it, secure transparent, auditable compensation — Perplexity’s publishers’ program is an early example but not the only model.

- Harden canonical content. Make it easy for assistants to identify and attribute your reporting: clear Q&As, structured metadata, and robust paywall/redirection strategies can help preserve attribution and referral value.

For platforms and product teams

- Ship labeling and controls at launch. Clear visual distinction and accessible opt‑outs must be baked into the UX, not tacked on later. (https://openai.com/index/testing-ads-in-chatgpt/)

- Publish independent audits. Third‑party attestations of privacy promises and “answer independence” are critical to win trust.

- Exclude sensitive contexts programmatically. Technical enforcement must ensure ads do not appear in health, mental health, political, or other sensitive conversations.

- Create publisher partnerships. Revenue sharing, APIs, and referral mechanisms can soften the impact on journalism and secure content supply.

Ethical and regulatory guardrails to press for now

Policymakers and civil society should press platforms for a baseline of protections:

- Mandatory transparency reports. Platforms should publish ad volumes, categories excluded, revenue‑share arrangements, and auditing summaries on a regular cadence.

- Independent audits of targeting logic. External verification that advertisers cannot access raw chats and that derived signals are bounded by declared policy must be required.

- Age and sensitivity protections. Exclusions for minors and for queries touching medical, mental health, or political advice should be enforceable and verifiable.

- Ad disclosure standards. Uniform labeling and prominence requirements to ensure users can always distinguish ads from assistant outputs.

A tactical checklist (for product managers and policy teams)

- Require a single, auditable ad labeling component across conversational surfaces.

- Default ad personalization to off for new users; make opt‑in explicit.

- Implement cryptographic session tokens for privacy‑preserving attribution.

- Publish a third‑party audit within six months of any ad launch.

- Build a publisher compensation pipeline and public ledger of payments.

- Enforce programmatic exclusions for sensitive‑topic taxonomies.

- Share anonymized, aggregated ad performance data with an independent watchdog.
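
The checklist item on cryptographic session tokens could take many forms. One minimal sketch, assuming a platform‑held secret key and opaque random session IDs, is an HMAC that binds a session to an ad without carrying any chat content. The function names and token layout below are hypothetical; a real design would also need expiry, key rotation, and replay protection, none of which are shown.

```python
import hashlib
import hmac
import secrets


def issue_attribution_token(key: bytes, session_id: str, ad_id: str) -> str:
    """Bind an opaque session ID to an ad ID; no conversation text is included."""
    message = f"{session_id}|{ad_id}".encode("utf-8")
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_conversion(key: bytes, session_id: str, ad_id: str, token: str) -> bool:
    """Check a token returned with a conversion event, in constant time."""
    expected = issue_attribution_token(key, session_id, ad_id)
    return hmac.compare_digest(expected, token)


platform_key = secrets.token_bytes(32)  # held by the platform only (assumed)
session_id = secrets.token_hex(16)      # random, not derived from user identity
token = issue_attribution_token(platform_key, session_id, "ad-123")

print(verify_conversion(platform_key, session_id, "ad-123", token))  # True
print(verify_conversion(platform_key, session_id, "ad-999", token))  # False
```

Because the token is a keyed hash, an advertiser can pass it back with a conversion event but cannot forge tokens for other ads or recover anything about the conversation from it.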

Conclusion

The integration of advertising into AI chat is not an inevitability the public must meekly accept; it is a design and policy choice open to governance, scrutiny, and technical innovation. Done thoughtfully, in‑chat ads can fund broad access, create new revenue streams for publishers, and surface genuinely useful offers at the moment of intent. Done badly, they will corrode trust, hollow out referral economics, and invite swift regulatory action, consequences that will damage users, brands, and platforms alike. The coming months will determine whether this new ad frontier becomes a sustainable funding model for inclusive AI or a short‑term revenue play that fractures the trust on which conversational assistants depend. Platforms, advertisers, publishers, and regulators each have clear tasks: prioritize transparency, insist on independent verification, and treat conversational advertising as a governance challenge as much as a product opportunity.