Energy from Microsoft Ignite landed as more than a keynote crescendo — it was a practical roadmap for the agentic enterprise, and a call to action for cloud architects, developers, and Windows administrators to translate platform promises into governed, measurable systems that deliver real business outcomes.

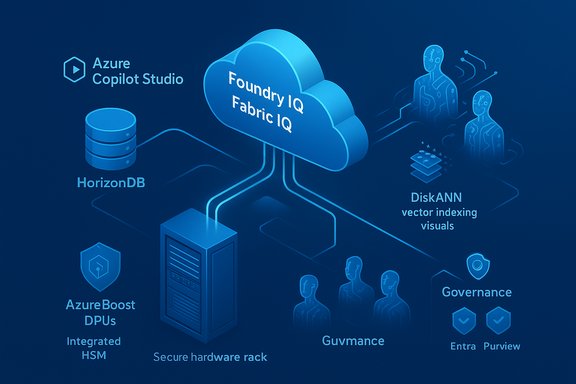

Background / Overview

Microsoft used Ignite to present a tightly integrated stack built around three converging ideas: model choice at scale, semantic grounding for agents, and identity-first governance for agentic automation. That stack stitches together Microsoft Foundry (the model and agent runtime), IQ layers across Foundry/Fabric/Work, a new PostgreSQL-native service (Azure HorizonDB), Azure Copilot as an operations orchestrator, and next-generation Azure hardware (custom silicon and DPUs) designed to make large-model workloads feasible and secure in production. These announcements are explicitly framed to move AI from isolated experiments into production services with lifecycle, observability, and compliance baked in. The rest of this piece parses the five headlined themes from Microsoft’s Ignite messaging, verifies the most consequential technical claims against public Microsoft documentation and independent reporting, and, most importantly, translates those innovations into five practical ways you can build agentic systems on Azure without creating untenable risk.

1. Model choice: Claude and multi-vendor Foundry

What changed

Microsoft announced that Anthropic’s Claude models (including Sonnet and Opus variants) are now available through Microsoft Foundry and Copilot Studio alongside OpenAI models, giving builders selectable frontier models for different workloads. Microsoft positions this as a practical solution for model diversity, letting teams route reasoning tasks to the model best suited for safety, tone, or specialized reasoning. Microsoft’s product posts and Copilot updates show Claude Sonnet 4.5 and Claude Opus 4.1 appearing in Foundry and Copilot Studio rollouts.

Why it matters

Model choice matters because different architectures and training regimes yield different trade-offs for hallucination rates, safety, latency, and cost. Giving developers multiple frontier model options inside a single orchestration layer simplifies A/B testing, policy-driven routing, and resilience engineering. The industry reaction at Ignite emphasized this operational flexibility: developers can now treat model selection as a first-class parameter in production systems rather than a one-time vendor decision.

Caveats and verification

Microsoft’s messaging claims Azure becomes “the only cloud” offering both Claude and GPT frontier models in a unified experience. That is a vendor claim and should be treated as such. Independent press coverage corroborates the availability of Anthropic models in Microsoft-hosted products, but competing clouds and partner integrations evolve quickly, so verify SLAs, regional availability, and legal terms for your compliance needs before presuming exclusivity.

2. IQ Revolution: Foundry IQ, Fabric IQ, and Work IQ

What Microsoft announced

Microsoft introduced a trio of “IQ” layers designed to eliminate the brittle pieces of RAG (Retrieval-Augmented Generation) at enterprise scale:

- Foundry IQ: a managed knowledge retrieval service powered by Azure AI Search that indexes SharePoint, OneLake/Fabric, custom apps, and the web into reusable knowledge bases with permissions-aware retrieval. It aims to remove per-agent rework on chunking, embedding, and connector plumbing.

- Fabric IQ: a new semantic intelligence layer in Microsoft Fabric that exposes ontologies, semantic models, and a graph engine so agents can reason over business entities (customers, orders, assets) rather than raw tables. Fabric IQ’s ontology and data-agent features unify analytics and operational data.

- Work IQ: the people- and workspace-aware layer that supplies Copilot and agents with context from mail, meetings, Teams, and user preferences to generate work-aware recommendations.

Practical benefits

- Removes repeat engineering: knowledge bases in Foundry IQ can be reused by many agents rather than rebuilding retrieval stacks for each project.

- Faster time-to-value: Fabric IQ lets data teams expose business entities once, and agents immediately reason across analytics and operations.

- Permission-aware grounding: Foundry IQ and Purview integration limit accidental overexposure of sensitive content during retrieval and generation.

What to validate in your context

- Confirm indexing behavior and refresh cadence (how current the indexed content is relative to what your latency-sensitive workflows can tolerate).

- Verify that Purview labels and RBAC are enforced end-to-end in retrieval and in agent outputs.

- Pilot ontology bindings for a bounded domain (e.g., orders or assets) to quantify schema-mapping cost savings.
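The validation points above can be turned into a small acceptance test. The following is a minimal sketch of what end-to-end enforcement should look like from the consumer side: results are trimmed by group membership, sensitivity label, and index freshness. It does not call any Foundry IQ or Purview API; the `Chunk` shape, the label names, and `trim_results` are assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Chunk:
    """A retrieved passage plus the metadata needed for trimming (hypothetical shape)."""
    text: str
    allowed_groups: frozenset  # groups permitted to read the source document
    label: str                 # sensitivity label, e.g. "General" or "Confidential"
    indexed_at: datetime       # when the index last refreshed this chunk


def trim_results(chunks, user_groups, allowed_labels, max_age, now):
    """Keep only chunks the caller may read, with a cleared label, that are fresh enough."""
    kept = []
    for chunk in chunks:
        if not (chunk.allowed_groups & user_groups):
            continue  # caller shares no group with the source ACL
        if chunk.label not in allowed_labels:
            continue  # label is not cleared for this agent
        if now - chunk.indexed_at > max_age:
            continue  # index entry is staler than the workflow tolerates
        kept.append(chunk)
    return kept


now = datetime(2025, 12, 1, tzinfo=timezone.utc)
chunks = [
    Chunk("Q3 order backlog", frozenset({"sales"}), "General", now - timedelta(hours=1)),
    Chunk("Payroll export", frozenset({"hr"}), "Confidential", now - timedelta(hours=1)),
    Chunk("Old price list", frozenset({"sales"}), "General", now - timedelta(days=30)),
]
visible = trim_results(chunks, frozenset({"sales"}), {"General"}, timedelta(days=7), now)
print([c.text for c in visible])
```

In a pilot, the same three checks can be run against real retrieval responses for test users with known permissions; any chunk that survives when it should not is a finding to raise before widening the rollout.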

3. Azure HorizonDB: PostgreSQL at modern cloud scale

The announcement in short

Azure HorizonDB is a new fully managed, Postgres-compatible database service introduced in preview. Microsoft positions it for cloud-native transactional and AI workloads with built-in vector indexing and scale-out compute/storage designed for high throughput and low-latency commits. Microsoft’s announcement describes up to 3x transactional throughput versus open-source Postgres, auto-scaling storage up to 128 TB, and scale-out compute up to 3,072 vCores, plus sub-millisecond multi-zone commit latency. DiskANN-based vector indexing with predicate pushdown is a highlighted capability for semantic search and RAG patterns without moving data to separate vector stores.

Why HorizonDB matters to builders

- Operational simplicity: integrated vector search and Postgres compatibility mean many AI apps can be built without introducing separate vector databases.

- Performance and scale: those throughput and latency claims suggest HorizonDB targets mission-critical OLTP and low-latency AI inference close to the data plane.

- Ecosystem fit: integrations with Foundry, Fabric, and developer tooling (VS Code + Copilot migration helpers) smooth migration and model-grounding workflows.
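To make "filtered vector search" concrete, here is a minimal in-memory sketch of the semantics: apply the relational predicate, then rank the surviving rows by vector similarity. This is exact brute-force search, not DiskANN and not HorizonDB syntax; the row layout and function names are assumptions. An exact baseline like this is mainly useful as ground truth when measuring the recall of an approximate index on your own data.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def filtered_search(rows, query_vec, predicate, k):
    """Exact top-k by cosine similarity over the rows that satisfy the predicate."""
    candidates = [r for r in rows if predicate(r)]  # relational filter first
    ranked = sorted(
        candidates,
        key=lambda r: cosine(r["embedding"], query_vec),
        reverse=True,
    )
    return ranked[:k]


# Toy rows with two-dimensional embeddings (illustrative values only).
rows = [
    {"id": 1, "region": "EU", "embedding": [1.0, 0.0]},
    {"id": 2, "region": "US", "embedding": [0.9, 0.1]},
    {"id": 3, "region": "EU", "embedding": [0.0, 1.0]},
    {"id": 4, "region": "EU", "embedding": [0.8, 0.2]},
]
top = filtered_search(rows, [1.0, 0.0], lambda r: r["region"] == "EU", k=2)
print([r["id"] for r in top])  # [1, 4]
```

Row 2 is close to the query but is excluded by the region predicate, which is the behavior a filtered index is expected to reproduce at scale without scanning every row.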

Validation and independent confirmation

Microsoft technical posts and multiple independent outlets (InfoWorld, MSDynamicsWorld, Redmond Magazine) reported the HorizonDB specs and DiskANN integration, giving reasonable confidence that the service exists in preview with the advertised capabilities. Still, performance claims (e.g., “3x throughput”) are derived from vendor testing; verify with your own benchmarks on representative workloads before making architecture or procurement decisions. DiskANN as an integrated option for filtered vector search is documented and available in Azure Database for PostgreSQL and SQL Server 2025 previews, which supports Microsoft’s assertion that vector indexing is now converging into the managed database fabric.

4. Azure Copilot: Agents that orchestrate cloud ops

What Azure Copilot brings

Azure Copilot is being repositioned from a conversational helper to an agent orchestration platform that runs specialized agents across the cloud lifecycle: Migration, Deployment, Observability, Optimization, Resiliency, and Troubleshooting. A private preview includes an Operations Center and an Agent Mode UX that proposes plan-first playbooks, enforces human approvals, and logs agent actions for audit. The Migration Agent automates discovery, inventory, and IaC generation to accelerate modernization. Microsoft Learn docs and the preview pages describe tenant-controlled rollout and per-action approvals.

Why enterprise IT should take notice

- Agents can materially reduce manual effort for discovery and migration; the migration agent is explicitly designed to shorten weeks-long manual processes to days or hours.

- Identity-bound execution (agents with Entra-based Agent IDs) and integration with Azure Policy and Purview help make agent actions auditable and policy-compliant.

- Copilot’s plan-first UX gives operators a visible playbook and an approval gate — a key UX pattern for safely introducing automation.

Operational and governance concerns

- Treat agents as production services: add them to access reviews, incident playbooks, and change-control processes.

- Start with monitor-only pilots and enforce role-based approval gates for any agent that can perform write or destructive actions.

- Expect a shift in operational SLAs and cost management: always-on agents and long-context models change billable patterns and telemetry volumes. Industry coverage and community threads emphasize this shift from assistant to operator and the need for operator discipline.
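The monitor-only and approval-gate guidance above can be expressed as a small state machine. The sketch below is an assumption-laden illustration, not Azure Copilot's implementation: `Step`, `Plan`, the `ops-approver` role name, and the status strings are all hypothetical, and "executing" a step here only records a log entry.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    description: str
    mutating: bool  # True for writes or destructive operations


@dataclass
class Plan:
    agent_id: str
    steps: list
    approvals: set = field(default_factory=set)  # indices of approved steps


def approve(plan, step_index, approver_roles, required_role="ops-approver"):
    """Record approval for one step if the approver holds the required role."""
    if required_role not in approver_roles:
        raise PermissionError(f"role {required_role!r} is required to approve")
    plan.approvals.add(step_index)


def execute(plan, monitor_only=True):
    """Return an audit log; mutating steps proceed only when approved and not in monitor mode."""
    log = []
    for i, step in enumerate(plan.steps):
        if not step.mutating:
            log.append((step.description, "executed"))
        elif monitor_only:
            log.append((step.description, "proposed-only"))
        elif i in plan.approvals:
            log.append((step.description, "executed-with-approval"))
        else:
            log.append((step.description, "blocked-awaiting-approval"))
    return log


plan = Plan("agent-demo", [Step("List idle VMs", False), Step("Deallocate idle VMs", True)])
print(execute(plan))                       # monitor-only pilot: the write is only proposed
approve(plan, 1, {"ops-approver"})
print(execute(plan, monitor_only=False))   # the write proceeds after a role-checked approval
```

Defaulting `monitor_only` to `True` makes the safe mode the one you get by accident, which matches the advice to start pilots without write permissions.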

5. Azure hardware and custom silicon: DPUs, HSM, and Blackwell-class GPUs

The hardware story

Ignite emphasized full-stack infrastructure work: NVIDIA Blackwell Ultra-class GPUs in Azure AI racks, new Azure custom silicon (Cobalt CPUs and Maia AI accelerators), the first Microsoft DPU (Azure Boost), and an on-server Azure Integrated HSM for enhanced key protection. Microsoft’s infrastructure posts describe DPU/Boost as an offload that can greatly increase storage/network throughput and reduce server power for data-centric workloads; the Integrated HSM is positioned as a hardware root of trust that will be installed in new servers. Independent coverage and regional press corroborate both the DPU and Integrated HSM launches.

Why this matters for ML and operations

- Offloading storage and networking tasks into a DPU is intended to keep CPUs and GPUs focused on model compute, improving effective utilization for training and inference.

- Hardware HSMs installed per server reduce the blast radius for key exposure and enable higher-assurance cryptographic operations for tenant data.

- High-throughput fabrics (Azure Boost + Fairwater sites) reduce bottlenecks for distributed training and make multi-site synchronous jobs more achievable.

Real-world validation

Microsoft’s technical and press posts describe cooling innovations, GB-series GPU deployments, and DPU power/performance targets; independent reporting confirms these initiatives, though exact production availability and per-region capacity vary. As with performance claims for services, architects should validate throughput and latency with benchmark runs tailored to their workload profiles.

Five practical ways to build agentic systems now

Below are actionable patterns and steps to help teams turn Ignite announcements into safe, scalable solutions.

1. Start with one high-value, low-risk pilot (and instrument everything)

- Pick a domain with clear rollback semantics (e.g., cost-optimization, observability triage, or read-only analytics).

- Use Foundry IQ to build a knowledge base for that domain and validate permissions and retention behavior.

- Run the agent in monitor mode: collect proposals, logs, and telemetry without permitting writes.

- Iterate on model routing (for example, Claude versus GPT models) to optimize accuracy and cost.
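One way to make the routing iteration measurable is to score each candidate model on a labeled pilot set and choose the cheapest one that clears an accuracy bar. The sketch below assumes you have already collected those results yourself; the model labels and all numbers are placeholders, not measurements of any real model, and `pick_model` is a hypothetical helper.

```python
def pick_model(results, min_accuracy):
    """Choose the cheapest candidate whose pilot accuracy meets the threshold.

    `results` maps a model label to (correct, total, cost_per_1k_requests).
    Ties on cost are broken in favor of higher accuracy.
    """
    eligible = []
    for name, (correct, total, cost) in results.items():
        accuracy = correct / total if total else 0.0
        if accuracy >= min_accuracy:
            eligible.append((cost, -accuracy, name))
    if not eligible:
        return None  # no candidate is good enough; keep a human in the loop
    return min(eligible)[2]


# Placeholder pilot results: (correct answers, total questions, cost per 1k requests).
pilot = {
    "model-a": (92, 100, 4.0),
    "model-b": (95, 100, 9.0),
    "model-c": (80, 100, 1.0),
}
print(pick_model(pilot, min_accuracy=0.90))  # model-a
print(pick_model(pilot, min_accuracy=0.99))  # None
```

Returning `None` when nothing qualifies keeps the decision explicit: the pilot either extends its evaluation set or stays in monitor mode instead of silently falling back to the cheapest model.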

2. Treat agents as identities and integrate them into governance

- Create Entra Agent IDs, conditional access policies, and lifecycle reviews for agents.

- Add agents to access review cycles and include their telemetry in SIEM/SOAR playbooks.

- Require signed artifacts and approval gates for any agent that can produce executable IaC or runbook changes.
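The signed-artifact requirement can be prototyped with Python's standard library. This is a simplified sketch that uses a shared-secret HMAC to show the control flow; production pipelines would more likely use asymmetric signatures with keys held in a managed key store. The function names, status strings, and demo key are assumptions for illustration.

```python
import hashlib
import hmac


def sign_artifact(content: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over the artifact bytes."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()


def verify_artifact(content: bytes, signature: str, key: bytes) -> bool:
    """Constant-time comparison of the expected and presented signatures."""
    expected = sign_artifact(content, key)
    return hmac.compare_digest(expected, signature)


def apply_if_trusted(content: bytes, signature: str, key: bytes, approved: bool) -> str:
    """Gate an agent-generated artifact on both signature validity and human approval."""
    if not verify_artifact(content, signature, key):
        return "rejected: signature mismatch"
    if not approved:
        return "held: awaiting approval"
    return "ready to apply"


key = b"demo-only-key"  # assumption: a real key would come from a secret store
template = b'resource "example" {}'  # stand-in for agent-generated IaC
signature = sign_artifact(template, key)

print(apply_if_trusted(template, signature, key, approved=False))
print(apply_if_trusted(template, signature, key, approved=True))
print(apply_if_trusted(template + b" # tampered", signature, key, approved=True))
```

Checking the signature before the approval flag means a tampered artifact is rejected even if someone has already approved an earlier version of it.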

3. Ground reasoning with semantic layers and managed retrieval

- Use Fabric IQ to model core business entities (start with 1–2 ontologies).

- Create Foundry IQ knowledge bases to centralize retrieval and avoid ad-hoc embeddings across projects.

- Ensure Purview label enforcement is end-to-end for retrieval and agent outputs.

4. Use HorizonDB and DiskANN when your application requires low-latency, vector-enabled OLTP

- Evaluate HorizonDB if you need tight coupling between transactional workloads and semantic search or RAG patterns.

- Benchmark typical OLTP workloads against open-source Postgres and HorizonDB preview to verify throughput/latency claims for your schema and index patterns.

- Use DiskANN for large filtered vector searches — particularly when predicate pushdown or scale beyond RAM is necessary.
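For the benchmarking step, a small harness that reports throughput and tail latency is often enough to compare two targets on the same workload. The sketch below is database-agnostic and makes no assumptions about HorizonDB itself: `placeholder_transaction` is a stand-in you would replace with a real transaction against each system, and the nearest-rank percentile is one of several reasonable definitions.

```python
import math
import time


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an already sorted, non-empty list."""
    rank = max(1, math.ceil(pct / 100 * len(sorted_values)))
    return sorted_values[rank - 1]


def benchmark(run_once, iterations):
    """Time a callable repeatedly; return throughput plus p50 and p99 latency in ms."""
    latencies = []
    started = time.perf_counter()
    for _ in range(iterations):
        t0 = time.perf_counter()
        run_once()
        latencies.append((time.perf_counter() - t0) * 1000)
    elapsed = time.perf_counter() - started
    latencies.sort()
    return {
        "ops_per_sec": iterations / elapsed if elapsed else float("inf"),
        "p50_ms": percentile(latencies, 50),
        "p99_ms": percentile(latencies, 99),
    }


def placeholder_transaction():
    """Stand-in workload; replace with a real query against each target."""
    sum(range(1000))


result = benchmark(placeholder_transaction, iterations=200)
print(result)
```

Run the same callable shape against each system with identical schema, indexes, and concurrency, and compare p99 as well as the median, since tail latency is where commit-path differences tend to show up.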

5. Implement cost and observability controls early

- Apply tenant-level quotas, agent-level caps, and message meters to avoid runaway inference cost.

- Instrument agent proposals, approvals, and actions with structured metadata (model version, tool invocation, knowledge-base provenance).

- Route logs and traces into existing APM/SIEM systems and require retention policies consistent with your compliance controls.
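The caps and structured metadata described above can be prototyped before any platform feature is switched on. This is a minimal sketch under stated assumptions: token counts stand in for whatever billing unit applies to you, and `AgentBudget`, `audit_record`, and the field names are hypothetical rather than part of any Azure API.

```python
import json
from datetime import datetime, timezone


class BudgetExceeded(Exception):
    """Raised when a charge would push an agent past its hard cap."""


class AgentBudget:
    """Tracks usage per agent against a hard cap (token units are illustrative)."""

    def __init__(self, cap_tokens):
        self.cap_tokens = cap_tokens
        self.used = {}

    def charge(self, agent_id, tokens):
        total = self.used.get(agent_id, 0) + tokens
        if total > self.cap_tokens:
            raise BudgetExceeded(f"{agent_id} would exceed {self.cap_tokens} tokens")
        self.used[agent_id] = total
        return total


def audit_record(agent_id, action, model_version, tool, knowledge_base, tokens):
    """Build one structured log line suitable for shipping to a SIEM or APM pipeline."""
    return json.dumps(
        {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,                  # e.g. proposal, approval, execution
            "model_version": model_version,
            "tool": tool,
            "knowledge_base": knowledge_base,  # provenance of retrieved grounding
            "tokens": tokens,
        },
        sort_keys=True,
    )


budget = AgentBudget(cap_tokens=1000)
budget.charge("agent-demo", 600)
print(audit_record("agent-demo", "proposal", "model-a-v1", "cost-report", "kb-finance", 600))
try:
    budget.charge("agent-demo", 500)
except BudgetExceeded as exc:
    print(f"blocked: {exc}")
```

The cap is checked before usage is recorded, so a rejected call leaves the running total unchanged and the agent can still make smaller requests within its remaining budget.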

Strengths: what Microsoft got right

- Systems thinking: integrating model choice, semantic layers, managed retrieval, agent orchestration, and infra advances reduces integration friction and offers a credible path from POC to production.

- Governance-first framing: identity-bound agents, Agent 365, and Purview/Defender integration bring practical guardrails to an otherwise chaotic automation surface.

- Data-to-model proximity: HorizonDB, Fabric IQ, and Foundry IQ lower latency and reduce data movement for RAG and inference patterns, offering better privacy and simpler architectures.

- Developer ergonomics: Copilot Studio, Foundry tooling, and migration agents shorten the ramp for developer teams and system integrators to build and ship agentic workflows.

Risks and open questions

- Vendor claims vs. customer reality: performance numbers (e.g., HorizonDB 3x throughput, Azure Boost throughput targets) originate in vendor tests; independent benchmarking in your environment is required. Treat these as promises to verify, not procurement guarantees.

- Expanded attack surface: agent identities, MCP connectors, and tool integrations create new vectors for prompt and connector exploitation. Security teams must expand threat models to include inter-agent communications and off-platform connectors.

- Cost model uncertainty: always-on agents with long context windows can increase inference spend dramatically; cost controls and quota management are essential.

- Interoperability and lock-in: while Microsoft emphasizes open protocols (MCP), organizations with multi-cloud strategies should validate cross-vendor interoperability early. Proof-of-concept cross-cloud workflows are recommended.

An operational checklist for the first 90 days

- Inventory candidate workflows and rank by value / risk.

- Build one pilot using Foundry IQ + Copilot Agent in monitor-only mode.

- Add agents to the tenant access review and configure Entra policies.

- Enable Purview policies for indexed content and verify RBAC for retrieval endpoints.

- Run controlled load tests against HorizonDB preview (if your workload is OLTP + vector-heavy).

- Establish cost alerts and hard caps on agent inference spend.

- Integrate agent telemetry into SIEM and incident response playbooks.

Conclusion

Ignite 2025 marked a pivot from “AI features” to a coherent, production-focused architecture for agentic systems. Microsoft’s announcements (Foundry’s multi-model catalog and IQ layers, HorizonDB’s Postgres-scale ambitions, Azure Copilot’s agentized operations, and custom hardware like the Azure Boost DPU and Integrated HSM) together lower many of the engineering barriers that hindered agent deployments. But lowered barriers do not eliminate responsibilities: careful pilots, identity-centered governance, provenance-aware retrieval, and disciplined cost management are the work that turns platform potential into repeatable, safe value.

Treat Microsoft’s claims as the starting line: verify performance and behavior in your environment, pilot with limited scope, instrument exhaustively, and bake governance into the agent lifecycle. Doing so converts Ignite’s announcements from marketing milestones into sustainable, measurable improvements in how your teams operate, automate, and deliver outcomes at scale.

Source: Microsoft Azure Microsoft Ignite 2025 Recap: Agentic AI, Foundry, and Azure Innovations