Artificial intelligence has moved past the novelty phase, and Microsoft’s latest framing makes that transition explicit: the real test is no longer whether AI can impress in a demo, but whether it changes work in ways leaders can measure. In a recent Microsoft feature spotlighting Doug Schrock of Crowe, the message is clear that AI value is operational, not theatrical. That may sound obvious, but in an industry still flooded with hype, it is a meaningful correction. The organizations that win will be the ones that turn trust, governance, and workflow design into repeatable outcomes.

Overview

The core argument behind Microsoft’s “When AI delivers real value, not just potential” is that AI has matured into an enterprise discipline. Schrock’s perspective is blunt: AI only matters when it produces a result a company could not otherwise achieve. That line is important because it shifts the conversation from aspiration to accountability.

This is not just a philosophical tweak. It reflects a market that has learned, sometimes painfully, that pilots are easy and scale is hard. Companies can quickly generate excitement around Copilot, agents, and automation, but the real question is whether those tools improve throughput, quality, and decision speed inside daily business operations. Microsoft’s own documentation now mirrors that same practical stance, emphasizing security, governance, deployment controls, and measurable impact rather than raw experimentation alone. (learn.microsoft.com)

Crowe’s example is especially relevant because it demonstrates a familiar enterprise pattern: innovation succeeds faster when it is placed in a structure that allows for speed without destabilizing the core business. By positioning Crowe Studio as a standalone innovation arm, the firm created room to experiment, refine, and scale without forcing every new idea through the same operational machinery as the rest of the business. That is a common enterprise lesson, but AI makes it more urgent.

The other major theme is trust. Microsoft’s platform advantage is not simply that it offers models and tooling, but that it sits inside the productivity stack people already use every day. Schrock’s point—that executives are more comfortable introducing AI through Microsoft because it is already part of the work environment—reflects a practical truth: adoption accelerates when AI arrives through systems users recognize. Microsoft’s own guidance underscores that Copilot works within existing permissions and security controls, and that oversharing or poor governance can undermine results. (learn.microsoft.com)

Background

The broader AI market has gone through a predictable cycle. First came the public fascination with generative AI’s apparent versatility. Then came the enterprise scramble to test it in support centers, document workflows, sales processes, HR, and coding. Now, in 2026, the market is entering the harder phase: proving business value under real operational constraints.

Microsoft has spent the last two years positioning itself as the practical platform for that phase. Its Microsoft 365 Copilot strategy has repeatedly emphasized enterprise readiness, permissions-aware access, and integration with the software employees already use. The company’s security guidance states plainly that Copilot is built on Microsoft 365 identity and access controls and aligns with Zero Trust principles. That matters because the AI era is not only about intelligence; it is about safely surfacing knowledge without creating new exposure. (learn.microsoft.com)

At the same time, Microsoft has been broadening Copilot Studio and agent capabilities so organizations can move beyond generic assistants toward task-specific systems. The 2026 release wave documentation says Copilot Studio will make it easier to create and operate agents, extend agents built with Agent Builder in Microsoft 365 Copilot, and support evaluations and high-value workflow actions. That is a clear sign that Microsoft now sees agent evaluation and governance as first-class enterprise requirements, not optional extras. (learn.microsoft.com)

Crowe’s perspective fits neatly into that evolution. The firm is not treating AI as a branding exercise or a side project. Instead, it is trying to wire AI into the actual work of a professional services organization, where speed and consistency matter, but accuracy and trust matter even more. That distinction is crucial because consulting and advisory firms live or die by credibility.

The timing also matters. In 2026, many organizations have already tried enough AI experiments to know where the bodies are buried. They understand that a proof of concept can be impressive and still fail in production because of weak permissions, poor change management, or no clear way to measure whether the tool helps. Microsoft’s deployment blueprint explicitly calls out security and governance concerns, deployment complexity, and visibility gaps as the main obstacles to scaling agents. That is exactly the environment Schrock is describing. (learn.microsoft.com)

Why the narrative shifted

The narrative shifted because the market’s tolerance for vague AI ambition is shrinking. Executives increasingly want evidence tied to cost reduction, revenue lift, cycle-time improvement, or employee productivity. In practical terms, they want AI to shorten tasks, reduce friction, and help people make fewer mistakes.

They also want AI to fit into existing operating rhythms rather than disrupt them for the sake of novelty. Microsoft’s documentation on choosing between Agent Builder in Microsoft 365 Copilot and Copilot Studio reflects that split, with Agent Builder suited to lightweight Q&A inside familiar work contexts and Copilot Studio designed for broader, more governed, more complex scenarios. That mapping is useful because it shows how Microsoft is segmenting AI maturity by use case, not by buzzword. (learn.microsoft.com)

What Microsoft is really selling

Microsoft is not merely selling AI features. It is selling a managed path from experimentation to operationalization. That path includes data readiness, permissions, governance, telemetry, and evaluation.

That is why the company’s language now sounds increasingly like enterprise software rather than consumer hype. The value proposition is not “look what the model can do,” but “look what happens when the model is embedded safely into the work you already do.” In that sense, Microsoft’s story has become less about invention and more about industrialization.

Outcomes Over Theater

Schrock’s line that AI has no inherent value until it delivers an outcome is one of the most important enterprise AI principles a leader can hear. It strips away the performance aspect that often surrounds new technology and forces a basic question: what business result will change if this works?

That framing is especially useful because it demystifies the technology. AI is not the goal; it is a means to speed, quality, or scale. The same is true of automation generally, but AI has a broader and more ambiguous surface area, which makes discipline even more important. Without a defined outcome, AI becomes an expensive experiment with uncertain payback.

The outcome lens

An outcome lens changes how projects are chosen. Instead of asking, “Where can we use AI?”, teams should ask, “Where are we losing time, quality, or consistency that AI could help recover?” That question is more demanding, but it produces better investments.

It also changes executive oversight. Leaders can evaluate AI initiatives the same way they evaluate any other business transformation: by defining the baseline, tracking the delta, and deciding whether the result justifies continued investment. This is not the same as simply counting pilot completions or internal demos.

From pilot culture to production culture

Many companies have developed a pilot culture that celebrates activity over impact. AI has amplified that risk because it is easy to test and hard to standardize. A team can build a convincing prototype in days, but it may take months to make that prototype trustworthy, measurable, and supportable.

That is where Microsoft’s current product direction becomes relevant. The company is increasingly offering the scaffolding needed to take AI beyond the pilot stage: governance controls, deployment blueprints, evaluation features, and admin-level oversight. It is a recognition that the true enterprise challenge is not creating a demo; it is creating a durable capability. (learn.microsoft.com)

Practical outcomes leaders care about

- Faster first drafts for knowledge work

- Better consistency in recurring business processes

- Reduced time spent searching across files and systems

- More reliable triage for employee or customer support

- Shorter approval and routing cycles

- Improved decision-making with less manual synthesis

- Lower cognitive load on frontline teams

Where AI Changes Work

The most useful part of Schrock’s view is his distinction between culture and behavior. AI does not change what a company believes about itself, but it does change what people do every day. That is the level where productivity lives or dies.

In practice, AI affects work by reducing friction. It helps people generate a first draft, summarize a meeting, synthesize a policy, or route a request without forcing them to rebuild context from scratch. Those are small changes individually, but they become meaningful when repeated thousands of times across an organization.

Daily work, not abstract transformation

This is why the phrase “embedded in the flow of work” matters. AI tools that require users to leave their environment, copy data into another system, and rebuild context are far less likely to scale. Tools that live inside Microsoft 365, Teams, SharePoint, or a connected business app reduce the cognitive cost of adoption.

Microsoft’s own documentation supports that view. It describes Agent Builder in Microsoft 365 Copilot as a way to create lightweight Q&A agents for individuals or small teams, while Copilot Studio is aimed at complex, broader, governed scenarios. That product split effectively encodes the idea that AI should meet users where they already work. (learn.microsoft.com)

Small friction, big consequences

AI’s value often appears in the invisible parts of work. A few minutes saved on a single task may not look revolutionary, but the cumulative effect across dozens of processes can be substantial. That is particularly true in professional services, operations, HR, and support, where repetitive knowledge handling consumes expensive human attention.

The hidden opportunity is not replacing judgment. It is preserving judgment for the moments when it matters most. AI can handle the first pass, the retrieval, the summary, or the routing, while people focus on exceptions, relationships, and strategic decisions.

What changes at the team level

- Less time spent on repetitive drafting

- More time available for analysis and review

- Fewer handoffs caused by manual triage

- Better process consistency across teams

- Faster onboarding for new employees

- More confidence that routine tasks follow a standard

- Higher leverage for subject-matter experts

Trust as the Real Foundation

Trust has become the most underappreciated factor in enterprise AI. People do not simply need to trust the model; they need to trust the environment the model operates in, the data it can see, and the controls around it. That is why Microsoft’s ecosystem matters so much to organizations already standardized on its stack.

Microsoft’s security guidance makes the trust issue concrete. Copilot uses existing identity and access controls, respects authorized access, and operates within compliance and privacy commitments. It also warns that overshared or poorly governed content can affect results and increase risk. In other words, trust is not a branding slogan; it is an architectural condition. (learn.microsoft.com)
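The idea of an assistant working inside existing permissions can be illustrated with a small sketch. This is not Microsoft’s implementation; the `Document` type, the group names, and both helper functions are hypothetical, and the only assumption is that each document carries a set of groups allowed to open it. The sketch shows two things: content is trimmed to what the user could already see, and sensitive content shared with a broad group surfaces as an oversharing risk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    allowed_groups: frozenset[str]  # groups that may open the document
    sensitive: bool = False         # hypothetical sensitivity flag


def visible_documents(user_groups: set[str], docs: list[Document]) -> list[Document]:
    """Keep only documents the user is already allowed to open."""
    return [d for d in docs if d.allowed_groups & user_groups]


def overshared(docs: list[Document], broad_groups: set[str]) -> list[Document]:
    """Flag sensitive documents that are shared with a broad audience."""
    return [d for d in docs if d.sensitive and d.allowed_groups & broad_groups]


# Hypothetical content library.
LIBRARY = [
    Document("Travel policy", frozenset({"all-employees"})),
    Document("Acquisition draft", frozenset({"deal-team"}), sensitive=True),
    Document("Salary bands", frozenset({"hr", "all-employees"}), sensitive=True),
]

if __name__ == "__main__":
    analyst_groups = {"all-employees"}
    # The assistant may only ground its answer in these documents.
    print([d.title for d in visible_documents(analyst_groups, LIBRARY)])
    # Sensitive items a governance review should look at before rollout.
    print([d.title for d in overshared(LIBRARY, {"all-employees"})])
```

In this toy library the salary document is visible to every employee only because it was shared too widely, which is the kind of condition the guidance warns about: the assistant respects permissions, so the quality of the permissions determines the quality and safety of the answers.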

Familiarity as an accelerant

Schrock’s comment about executives being comfortable with Microsoft is revealing. Familiarity lowers the psychological barrier to adoption, especially when the stakes involve proprietary information, compliance obligations, and employee productivity. If users already rely on Microsoft 365, then AI introduced through that channel feels like an extension of the normal work environment, not an external system intruding on it.

That matters because enterprise AI adoption is as much about behavior as tooling. If users are unsure where data is going, who can see it, or how it is governed, they will hesitate. Microsoft’s governance and deployment guidance is designed to remove that uncertainty. (learn.microsoft.com)

Security is not a blocker; it is a design input

Too many AI projects still treat security reviews as a final hurdle. The more mature approach is to make security part of the design from day one. Microsoft’s documentation on secure and governed foundations for Copilot reinforces that point by positioning data readiness, access control, and governance as prerequisites for accurate and relevant results.

That approach does more than reduce risk. It improves quality. Clean permissions and well-governed data mean the model has a better chance of returning useful, context-appropriate answers. In AI, good governance is not just about preventing failure; it is also about enabling better output.

Trust-building principles

- Start within a familiar productivity environment

- Preserve existing permissions and access rules

- Limit exposure through governance and auditing

- Measure behavior, not just deployment counts

- Use secure foundations before broad rollout

- Keep humans responsible for final decisions

- Treat data quality as part of trust

Crowe’s Operating Model

Crowe’s decision to position Crowe Studio as a standalone innovation group is more than a structural footnote. It reflects a common organizational truth: breakthrough work often requires a different pace, different incentives, and different tolerance for uncertainty than the core business can provide.

That choice matters because AI initiatives frequently fail when they are forced to operate at the same speed, with the same rules, and under the same short-term pressure as line-of-business functions. A dedicated innovation arm can take longer views, test more aggressively, and move faster without destabilizing the organization around it.

Why separation can help

Separation is useful when innovation must coexist with reliability. If the central business is responsible for billing, compliance, and client delivery, then it often cannot absorb every experiment without increasing operational risk. A standalone group creates a controlled environment where new ideas can mature before being folded into the broader business.

This approach also helps with leadership buy-in. Executives often support innovation more readily when they know it is bounded, measured, and not going to interrupt core operations. That is especially true when the technology is as visible and as misunderstood as AI.

The risk of isolation

The downside, of course, is that innovation can drift away from real operational needs if it becomes too detached. A standalone group must remain close enough to the business to solve meaningful problems. Otherwise it can create impressive prototypes that nobody wants to operationalize.

That is why the best model is usually not isolation but separation with linkage. The innovation group needs autonomy, but it also needs strong feedback loops with the business units it serves. In AI, relevance is everything.

What this structure enables

- Faster experimentation without immediate enterprise disruption

- Better alignment of AI projects with business outcomes

- Clearer separation between test and production environments

- More room to build trust with leadership

- Easier prioritization of high-value use cases

- Better control over risk during early development

The Microsoft Platform Advantage

Microsoft’s advantage in enterprise AI is not based on novelty. It comes from distribution, familiarity, and the ability to place AI inside systems people already use. That makes the company less of an AI vendor in the abstract and more of a workflow platform with AI embedded throughout.

Its documentation shows the company is leaning into that advantage by differentiating between simple, internal agents and more sophisticated enterprise agents. Agent Builder is for quick creation in Microsoft 365, while Copilot Studio supports broader audiences, advanced workflows, custom integrations, and lifecycle management. That distinction maps directly to how enterprises actually adopt technology: starting small, then expanding into governed scale. (learn.microsoft.com)

Why the platform matters

For many organizations, the hardest part of AI adoption is not model quality. It is plumbing. Connecting identity, permissions, data sources, audit logs, and workflow systems is where deployments often slow down. Microsoft’s stack is attractive precisely because it already sits close to those enterprise layers.

The company is also pushing evaluation and management capabilities more aggressively. Copilot Studio’s release wave notes emphasize support for evaluations and workflows with high-value actions, while Microsoft Learn’s guidance on agent evaluation and secure governance suggests a more mature lifecycle model is emerging. That is the kind of infrastructure enterprises need if they want AI to become dependable rather than experimental. (learn.microsoft.com)

The competitive angle

This also has implications for competitors. Standalone AI startups can still win on specialization, but they face a harder distribution challenge inside large companies. Microsoft, by contrast, can attach AI to an existing productivity base and a familiar security model.

That does not guarantee success, because enterprises will still compare outcomes and costs. But it does mean Microsoft can win by reducing adoption friction. In enterprise software, friction is destiny more often than technology purity.

Competitive advantages in practice

- Existing footprint in productivity and collaboration

- Familiar administrative and governance models

- Direct connection to identity and permissions

- Broad distribution through Microsoft 365

- Stronger path from pilot to enterprise scale

- Easier user adoption because tools feel native

- Support for both simple and complex agent scenarios

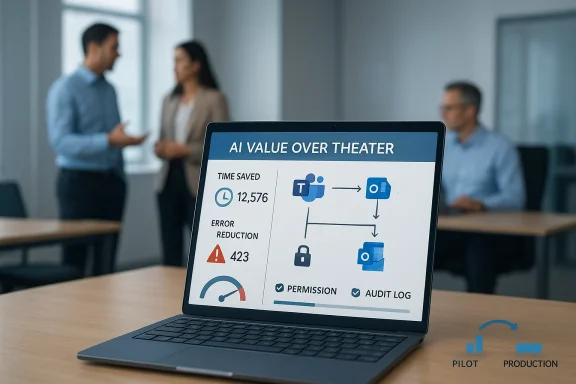

Measuring Real Value

If AI is to be judged by outcomes, then measurement becomes the most important part of the stack. Microsoft’s deployment blueprint says organizations need to manage access and costs and measure adoption and impact. That is the right framing because adoption without impact is just activity. (learn.microsoft.com)

In the current market, many organizations still struggle to define the right metrics. Usage alone is not enough. A tool can be heavily used and still fail to improve productivity. A pilot can attract enthusiastic users and still not justify broader rollout.

What to measure instead

The best metrics are tied to specific workflows. Did AI reduce average handling time? Did it improve first-pass accuracy? Did it cut the time required to prepare a draft, respond to a ticket, or summarize a report? Those are the questions that separate real value from digital theater.

There is also a managerial dimension. Leaders need to know whether AI is freeing up capacity, shifting labor to higher-value work, or merely creating another layer of complexity. Without that evidence, even strong adoption numbers can be misleading.

Why evaluation is becoming a discipline

Microsoft’s release plans now refer to support for evaluations in Copilot Studio, and Microsoft Learn has expanded guidance on designing and operationalizing agent evaluation. That is a sign the market is moving toward a more formalized discipline around AI quality. In plain English, organizations are learning that they need testing models for AI behavior, not just software correctness. (learn.microsoft.com)

This matters because agents can behave unpredictably in edge cases. A system that seems reliable in a demo may struggle when data is messy, instructions conflict, or a workflow spans multiple systems. Evaluation turns that uncertainty into something manageable.
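To make the notion of testing AI behavior more tangible, here is a minimal, generic sketch of an evaluation harness. It does not use or represent Copilot Studio’s evaluation features; `EvalCase`, `run_evals`, and `stub_agent` are hypothetical names, and the stub stands in for whatever agent call an organization actually uses. The point is only that expected behavior is written down as cases, including awkward edge cases, and scored as a pass rate.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    must_include: list[str]                                     # phrases a good answer contains
    must_not_include: list[str] = field(default_factory=list)   # phrases that signal failure


def passes(answer: str, case: EvalCase) -> bool:
    """Case-insensitive phrase check; a deliberately simple scoring rule."""
    text = answer.lower()
    has_required = all(p.lower() in text for p in case.must_include)
    has_forbidden = any(p.lower() in text for p in case.must_not_include)
    return has_required and not has_forbidden


def run_evals(agent: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Return the fraction of cases the agent passes (0.0 for an empty suite)."""
    if not cases:
        return 0.0
    return sum(passes(agent(c.prompt), c) for c in cases) / len(cases)


def stub_agent(prompt: str) -> str:
    """Hypothetical stand-in for a real agent; replace with an actual client call."""
    if "expense" in prompt.lower():
        return "Submit expenses within 30 days via the finance portal."
    return "I am not sure; please contact the help desk."


CASES = [
    EvalCase("What is the expense deadline?", ["30 days"]),
    EvalCase("Can I share client data externally?", ["help desk"], ["yes"]),
    # Edge case: the stub ignores the contractor context, so this one fails.
    EvalCase("What is the expense policy for contractors?", ["contractor"]),
]

if __name__ == "__main__":
    print(f"pass rate: {run_evals(stub_agent, CASES):.2f}")
```

Phrase matching is a crude scoring rule chosen for brevity. A real suite would likely need richer checks, but even this form makes regressions visible when prompts, data, or models change.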

Measurement checklist

- Define one business outcome per use case.

- Establish a baseline before deployment.

- Track time saved, error reduction, or throughput change.

- Monitor adoption and abandonment separately.

- Review governance and audit data regularly.

- Decide whether to expand, refine, or retire each use case.

- Tie cost measurement to actual business impact.
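The checklist above can be sketched as a small calculation. Everything here is illustrative: the `UseCase` fields, the 10 percent improvement threshold, and the sample figures are hypothetical assumptions, not values from Microsoft or Crowe. The sketch compares handling time before and after deployment, nets the value of time saved against cost, and maps the result to expand, refine, or retire.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class UseCase:
    name: str
    baseline_minutes: list[float]  # per-task handling time before rollout
    post_minutes: list[float]      # per-task handling time after rollout
    tasks_per_month: int
    cost_per_minute: float         # hypothetical loaded labor cost
    monthly_ai_cost: float         # hypothetical license and run cost


def review(uc: UseCase, min_improvement: float = 0.10) -> dict:
    """Compare post-rollout handling time with the baseline and suggest a next step."""
    baseline = mean(uc.baseline_minutes)
    post = mean(uc.post_minutes)
    improvement = (baseline - post) / baseline  # fraction of handling time saved
    monthly_value = (baseline - post) * uc.tasks_per_month * uc.cost_per_minute
    net_value = monthly_value - uc.monthly_ai_cost

    if improvement >= min_improvement and net_value > 0:
        decision = "expand"
    elif improvement > 0:
        decision = "refine"   # some gain, but not enough to justify scale yet
    else:
        decision = "retire"   # no measurable gain over the baseline
    return {"improvement": improvement, "net_value": net_value, "decision": decision}


if __name__ == "__main__":
    # Hypothetical sample data for a ticket-triage use case.
    triage = UseCase(
        name="ticket triage",
        baseline_minutes=[20.0, 22.0, 18.0],
        post_minutes=[15.0, 16.0, 14.0],
        tasks_per_month=1000,
        cost_per_minute=1.0,
        monthly_ai_cost=2000.0,
    )
    print(review(triage))
```

Keeping adoption figures out of this calculation is deliberate: usage can be tracked separately, but the decision here rests only on the change against the baseline and its cost.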

Strengths and Opportunities

Microsoft’s current AI posture is strongest where it aligns with enterprise reality: secure foundations, familiar workflows, and a clear path from lightweight usage to governed scale. Schrock’s comments underline the market opportunity for organizations that are willing to move beyond experimentation and build AI into actual operating processes.

Where the upside is clearest

- Faster productivity gains from embedded assistants

- Lower adoption friction because users stay in familiar tools

- Better governance through Microsoft 365 controls and admin visibility

- Scalable agent design via Copilot Studio for broader scenarios

- Improved quality when data and permissions are well managed

- Operational resilience when innovation is separated from core execution

- Measurable business impact through evaluation and deployment blueprints

Risks and Concerns

The biggest risk in this phase of AI adoption is complacency. Organizations may assume that because a tool is available inside a trusted platform, it is automatically ready for broad use. That is not true, and Microsoft’s own documentation makes clear that permissions, governance, and data quality still matter greatly. (learn.microsoft.com)

There is also the danger of confusing activity with transformation. Teams can deploy agents, celebrate usage, and still fail to change meaningful business metrics. That is why so many AI programs stall after the excitement phase.

The main concerns

- Overshared content can weaken result quality and increase exposure

- Poor governance can turn useful tools into compliance headaches

- Pilot sprawl can create duplicated effort and unclear ownership

- Measurement gaps can hide whether AI is actually helping

- Integration complexity can slow enterprise-wide rollout

- User overreliance can create false confidence in outputs

- Innovation drift can separate AI projects from real business needs

Looking Ahead

The next phase of enterprise AI will be defined by discipline. Companies that succeed will not be those with the most demos, but those with the clearest workflows, the cleanest governance, and the most credible measurement of value. Microsoft’s current Copilot and Copilot Studio direction suggests the company understands that shift and is building for it. (learn.microsoft.com)

Schrock’s warning is also a prediction: leaders will likely regret moving too slowly more than they regret moving too quickly. That does not mean they should rush blindly. It means the cost of waiting may soon exceed the cost of acting with discipline. In AI, inaction is not neutral; it is a strategic choice with compounding consequences.

What to watch next

- Broader enterprise adoption of Copilot Studio for governed agents

- More formal evaluation tooling for real business workflows

- Expanded use of Microsoft 365 Copilot inside daily productivity tasks

- Greater emphasis on data readiness and permissions hygiene

- Tighter connections between AI usage and business outcome reporting

Source: Microsoft When AI delivers real value, not just potential - Microsoft in Business Blogs
