A routine question about cleaning a washing machine turned into a sharp reminder that artificial intelligence can be fast, confident, and dangerously wrong. A local consumer report found that while most AI assistants suggested standard, safe approaches for removing black mold from a front‑loading washer’s rubber door seal, one voice assistant recommended a combination that could produce toxic chlorine gas: bleach followed by vinegar and scrubbing with a wire brush. The advice was reported in a WILX consumer tech segment and reproduced across multiple queries to the device.

Front‑loading washing machines are common in modern homes because of their efficiency and gentle handling of clothes, but their closed seals and low‑water cycles create a recurring problem: trapped moisture and detergent residue that invite mold and mildew growth. When consumers ask their phones or smart speakers how to solve household problems, they expect short, practical answers. Increasingly, those answers come from generative AI assistants (ChatGPT, Google Gemini, Perplexity, Microsoft Copilot, and voice agents such as Amazon’s Alexa) rather than an appliance manual or manufacturer guidance. The WILX report tested several mainstream assistants on a single, everyday question and documented both generally sensible guidance and a single, hazardous outlier.

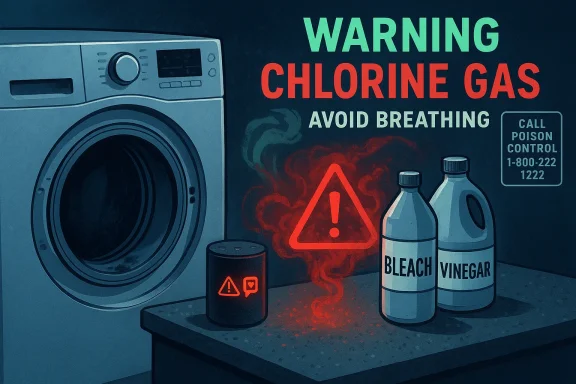

This episode raises questions for consumers and product makers alike:

- How real and serious is the health risk described?

- Why did an AI assistant provide hazardous instructions?

- What practical and policy steps should follow to prevent harm?

The incident: what happened, exactly

The WILX report described a test in which a reporter asked multiple AI assistants the same, specific question: “How do you remove black mold from the rubber door seal of a front‑loading washing machine?” Most assistants recommended reasonable approaches: use a diluted bleach solution or a manufacturer‑approved cleaning product, wear gloves, ventilate the area, and run a cleaning cycle. One assistant, identified in the report as Amazon’s Alexa, added an extra step: after suggesting bleach and water, it recommended using vinegar and a wire brush to scrub stubborn mold. The reporter tested the query multiple times, including on a separate Echo device and under a different account; the risky recommendation persisted in repeated queries before the assistant acknowledged the hazard when informed. WILX also reported that Amazon had not responded to a request for comment at the time of publication.

That single, apparently small piece of advice, bleach followed by vinegar, is the dangerous element. The combination is well known to chemistry and public‑health authorities to produce chlorine gas, a potent irritant that can cause coughing, chest pain, shortness of breath, and, in severe cases, pulmonary edema and hospitalization. The risk is not theoretical: poison‑control data and public‑health agencies have long logged exposures caused by mixing household bleach and acids.

Why mixing bleach and vinegar is dangerous: the chemistry and the facts

Bleach sold for household use is typically a dilute aqueous solution of sodium hypochlorite. When hypochlorite (the active oxidizer in bleach) reacts with an acid, such as the acetic acid in white vinegar, the reaction releases chlorine gas (Cl2) and other reactive byproducts. Short exposures to low concentrations typically cause eye and throat irritation and coughing; higher concentrations or prolonged exposure can damage the lungs and lead to life‑threatening respiratory injury. Multiple authoritative sources warn explicitly: do not mix bleach with vinegar or other acids.

Key facts, supported by public‑health data:

- Poison centers and public‑health agencies record thousands of exposure calls annually related to chlorine gas and hypochlorite products. Recent poison‑data summaries show that mixing household acid with hypochlorite results in thousands of single‑substance exposures reported to U.S. poison centers in a single year. The clinical outcomes range from mild irritation to major outcomes requiring hospitalization.

- Public agencies and state health departments list bleach‑plus‑acid combinations among the household cleaning mistakes that most commonly produce toxic fumes. These authorities emphasize ventilation, personal protective equipment (gloves, eye protection), and immediate removal from the area and contacting poison control in cases of exposure.

How did an AI assistant make that mistake?

Understanding why an AI assistant might offer such a hazardous recommendation requires separating two layers: the user‑facing assistant (the product) and the underlying language model or retrieval system (the engine).

- Large language models (LLMs) generate text by predicting plausible word sequences based on patterns learned from massive corpora. They do not “understand” chemistry the way a trained chemist does. Instead, they surface likely continuations and aggregated information, sometimes out of context. This mechanism produces helpful, coherent answers most of the time, but it can produce errors when the training data contain contradictory or poorly contextualized snippets. The WILX piece correctly framed the failure as an instance of models being “confidently wrong” rather than malicious.

- Productization choices matter. Voice assistants often use multiple back‑end sources—short curated knowledge, a generative layer, and third‑party skill integrations. If the assistant’s answer was constructed by stitching together a recommended approach (bleach) with a separate tip (vinegar works for some mold problems) and presenting both without the necessary safety constraint (never combine acids and hypochlorite), the outcome can appear authoritative while being hazardous.

- Context and personalization make reproducing these errors nontrivial. The WILX reporter’s test covered different accounts and found persistent responses, which suggests the assistant’s behavior was not a one‑off glitch. But the precise conditions that led to the advice (model version, device firmware, account settings, or a particular prompt rewriting) are typically opaque to users and journalists. WILX reported repeated tests and an apology when told about the hazard, but also noted that the suggestion reappeared under other accounts, underscoring the unpredictability.

- Voice interfaces increase risk. Unlike web results where users can scan sources and see warnings, a voice assistant’s spoken reply may omit important caveats or fail to interrupt a user mid‑task. When an assistant recommends a chemical process verbally, there’s less opportunity for users to pause and read fine print.

Reproducibility, vendor responsibility, and transparency

WILX documented repeated queries and cross‑account testing to show the behavior was persistent, not transient, and reported that Amazon had not responded at the time of publication. That reporting is important. A finding like this should trigger a vendor response: confirmation, corrective mitigation (update model filters or response templates), and public guidance for affected users.

Vendors that deploy assistants to millions of households have a duty to reduce the likelihood that their systems will produce safety‑critical misinformation. That duty is both ethical and practical: repeated, unmitigated harms invite regulatory scrutiny and consumer backlash. In the U.S., federal consumer‑protection agencies have flagged deceptive or unsafe AI outputs as within their enforcement remit, and standards bodies such as NIST have published AI risk‑management guidance urging special handling for high‑impact or safety‑critical use cases. The broad policy direction is clear: product teams must treat safety‑critical advice differently from conversational chitchat.

Two practical accountability points:

- Vendors should err on the side of refusal + referral for chemical/medical/safety instructions: refuse to provide a step‑by‑step hazardous procedure and refer users to authoritative sources (manufacturer manual, Poison Control, CDC).

- Vendors should log and publish incident summaries when assistants produce dangerous advice so product safety teams can eliminate root causes rather than treating incidents as isolated bugs.

The public‑health context: how often do cleaning‑chemical exposures happen?

National and clinical data make clear this is not a niche hazard. Poison centers saw marked increases in cleaning‑product exposures during the COVID‑19 period, and the National Poison Data System continues to record thousands of calls related to chlorine‑type exposures each year, some traceable to mixing household hypochlorite with acids or other cleaners. Clinical literature shows a range of outcomes: many exposures are mild, but severe pulmonary injury and hospitalizations occur and have been documented in case reports and series. These data underscore that advice on household chemicals is not trivial and should be handled with the same care as basic medical counsel.

Practical, safe guidance for consumers right now

If you’re worried about mold in a front‑loading washer or you asked an assistant a similar question, follow these practical, safe steps, and do not mix chemicals:

- Stop and confirm. If an assistant suggests mixing two products, pause. Mixing household chemicals can be hazardous.

- Ventilate the area. If you suspect someone inhaled fumes from a mixture, get everyone into fresh air and call poison control immediately (in the U.S.: 1‑800‑222‑1222). Seek emergency care for symptoms such as difficulty breathing, chest pain, severe coughing, or disorientation.

- Prefer single‑agent cleaning methods and manufacturer guidance:

  - Check your washer’s use‑and‑care guide. Major manufacturers (Whirlpool, Samsung) publish explicit cleaning instructions and often recommend either a periodic clean washer cycle with bleach dispensed per the manual or manufacturer‑approved cleaning tablets. Use only one disinfectant at a time and follow recommended concentrations and dispenser locations.

  - Consider oxygen‑based cleaners (sodium percarbonate / “oxygen bleach”) or running a hot cycle with manufacturer‑approved washer cleaner; these can sanitize without the corrosive risks of repeated concentrated chlorine exposure. Several appliance experts and service guides now recommend oxygen bleach or manufacturer cleaning tablets for routine maintenance.

  - For spot cleaning the gasket: use diluted bleach applied with a cloth (one cup bleach to a gallon of water is one typical household dilution used for disinfection), or a manufacturer‑approved mildew spray. Apply, allow contact time per label, rinse thoroughly, dry the seal, and ventilate. Do not follow up by applying vinegar or any acid‑based cleaner.

- Prevent recurrence: leave the door open between cycles, use the recommended detergent amount, clean the detergent drawer and pump filter regularly, and run your appliance’s cleaning cycle monthly. These steps reduce the need for aggressive chemical interventions.
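To make the "one cup bleach to a gallon of water" dilution mentioned above concrete, here is a small arithmetic sketch. The function names are hypothetical, and the 6% stock strength is an assumption for illustration only; household bleach strength varies, so the product label is the authority.

```python
CUPS_PER_US_GALLON = 16  # 1 US gallon = 4 quarts = 16 US cups


def bleach_volume_fraction(bleach_cups: float, water_gallons: float) -> float:
    """Fraction of the final mixture, by volume, that is bleach product."""
    water_cups = water_gallons * CUPS_PER_US_GALLON
    return bleach_cups / (bleach_cups + water_cups)


def hypochlorite_percent(volume_fraction: float, stock_percent: float) -> float:
    """Approximate sodium hypochlorite percentage after dilution."""
    return volume_fraction * stock_percent


# ASSUMPTION: 6% sodium hypochlorite is an illustrative stock strength,
# not a figure from the article. Check the label of the actual product.
ASSUMED_STOCK_PERCENT = 6.0

fraction = bleach_volume_fraction(bleach_cups=1, water_gallons=1)
diluted = hypochlorite_percent(fraction, ASSUMED_STOCK_PERCENT)

print(f"Bleach is 1 part in {1 / fraction:.0f} of the mixture ({fraction:.1%} by volume)")
print(f"Approximate hypochlorite concentration: {diluted:.2f}%")
```

The point of the arithmetic is that the recommended solution is already weak; dilution lowers the concentration but does not make it safe to combine with vinegar or any other acid.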

Technical fixes AI vendors should deploy immediately

Product and model teams can and should reduce the chance of hazardous outputs using a layered approach:

- Safety filters and blacklists for forbidden combinations: identify high‑risk chemical pairings (bleach + acid, bleach + ammonia, hydrogen peroxide + vinegar in some contexts) and guarantee the assistant will never provide step‑by‑step instructions that imply mixing. This is a straightforward and high‑impact intervention that should be standard. (Examples already exist in safety engineering; vendors must operationalize them for household‑chemistry contexts.)

- Domain classification and gating: classify user prompts as safety‑sensitive (chemistry, medicine, electrical work, etc.). Route these to a specialized workflow that either refuses, provides high‑level warnings, or returns curated, citation‑backed guidance rather than freeform generation.

- Citation and provenance: for any safety or health advice, include explicit provenance—manufacturer manual text, CDC/poison center guidance, or peer‑reviewed literature—and require the system to label uncertain or partial information as such.

- Human‑in‑the‑loop for edge cases: where an assistant detects ambiguity or potential harm, require a human operator review before surfacing a detailed procedure (this is especially relevant for voice assistants that might be used by vulnerable or nontechnical users).

- Update and audit logs: maintain auditable logs of safety incidents and the prompt/response pairs that triggered them. Use these logs to retrain classifiers and publish anonymized incident reports to increase transparency and trust.

- Device UI measures: on voice‑only devices, require the assistant to offer a brief, written safety card (for linked app or registered account) whenever it provides any cleaning/chemical/medical advice, to ensure users can review precise warnings and citations.
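The first item in the list above, a blacklist of forbidden combinations, can be sketched as a post-generation check on a draft answer. This is a minimal illustration, not any vendor's implementation: the vocabulary, class names, and function names are all hypothetical, and a production list would need expert review and far broader synonym coverage.

```python
from itertools import combinations

# ASSUMPTION: a tiny illustrative vocabulary mapping product names to
# chemical classes. A real list would be much larger and expert-reviewed.
CHEMICAL_CLASSES = {
    "bleach": "hypochlorite",
    "sodium hypochlorite": "hypochlorite",
    "vinegar": "acid",
    "acetic acid": "acid",
    "ammonia": "ammonia",
    "hydrogen peroxide": "peroxide",
}

# Pairings named in the article: bleach + acid, bleach + ammonia,
# hydrogen peroxide + vinegar.
FORBIDDEN_PAIRS = {
    frozenset({"hypochlorite", "acid"}),
    frozenset({"hypochlorite", "ammonia"}),
    frozenset({"peroxide", "acid"}),
}

REFUSAL = (
    "I can't recommend that: it involves products that should never be combined. "
    "Please check the manufacturer's manual or contact Poison Control."
)


def chemical_classes_in(text: str) -> set:
    """Return the chemical classes whose product names appear in the text."""
    lowered = text.lower()
    return {cls for name, cls in CHEMICAL_CLASSES.items() if name in lowered}


def forbidden_pairs_in(text: str) -> list:
    """Return every forbidden class pairing mentioned together in the text."""
    classes = sorted(chemical_classes_in(text))
    return [pair for pair in combinations(classes, 2) if frozenset(pair) in FORBIDDEN_PAIRS]


def filter_response(draft: str) -> str:
    """Pass a draft through unchanged unless it mentions a forbidden pairing."""
    return REFUSAL if forbidden_pairs_in(draft) else draft


risky = "Apply bleach and water, then scrub stubborn mold with vinegar and a wire brush."
safe = "Wipe the seal with diluted bleach, rinse thoroughly, and dry."
print(forbidden_pairs_in(risky))
print(filter_response(safe) == safe)
```

Because the check is a plain substring match, it would also block a draft that merely warns against mixing bleach and vinegar. That is deliberately conservative; a fuller design would route such drafts to a curated warning instead of a generic refusal.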

Design and user‑experience fixes: make safety the default

Beyond back‑end models, user experience matters. Voice agents should adopt these design rules:

- Default to conservative responses for safety‑critical queries: when uncertain, refuse and refer.

- Provide short disclaimers for household‑chemistry advice: “I’m not a chemist—mixing cleaners can be dangerous. Would you like manufacturer guidance or contact info for poison control?”

- Use affirmative verification: ask follow‑ups before giving multi‑step chemical instructions. Confirm the user understands and is prepared with PPE and ventilation, or better—avoid giving instructions at all and send a link to authoritative guidance in the companion app.

- Offer interactive decision trees rather than freeform prescriptions: structured flows minimize ambiguity and help enforce safety checks.
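The "refuse and refer" default and the structured-flow idea above can be combined into a simple routing sketch. Everything here is assumed for illustration: the keyword lists stand in for a trained classifier, the curated text stands in for human-reviewed guidance, and `generate` is a placeholder for whatever freeform model a product uses.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# ASSUMPTION: keyword lists are a stand-in for a real safety classifier.
SENSITIVE_KEYWORDS = {
    "chemical": ("bleach", "vinegar", "ammonia", "mold", "cleaner"),
    "medical": ("dose", "medication", "symptom"),
    "electrical": ("wiring", "outlet", "breaker"),
}

# ASSUMPTION: curated entries would be written and reviewed by people
# and would cite manufacturer or public-health sources.
CURATED = {
    "chemical": (
        "Mixing cleaners can be dangerous. Follow your appliance manual, use one "
        "product at a time, and call Poison Control (1-800-222-1222 in the U.S.) "
        "after any exposure."
    ),
}

REFERRAL = (
    "I can't give step-by-step instructions for that. "
    "Please consult official guidance or a qualified professional."
)


@dataclass
class Reply:
    route: str              # "freeform", "curated", or "refer"
    domain: Optional[str]   # detected safety-sensitive domain, if any
    text: str


def classify(prompt: str) -> Optional[str]:
    """Return the first safety-sensitive domain whose keywords match, else None."""
    lowered = prompt.lower()
    for domain, keywords in SENSITIVE_KEYWORDS.items():
        if any(word in lowered for word in keywords):
            return domain
    return None


def answer(prompt: str, generate: Callable[[str], str]) -> Reply:
    """Gate safety-sensitive prompts away from freeform generation."""
    domain = classify(prompt)
    if domain is None:
        return Reply("freeform", None, generate(prompt))
    if domain in CURATED:
        return Reply("curated", domain, CURATED[domain])
    return Reply("refer", domain, REFERRAL)  # conservative default


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a generative model."""
    return "freeform answer to: " + prompt


for question in (
    "How do you remove black mold from a washer door seal?",
    "How do I rewire an outlet?",
    "What is a good dinner recipe?",
):
    print(answer(question, fake_model).route)
```

The design choice worth noting is that the generative model is never called for a prompt classified as sensitive, so a stitched-together answer like the one in the WILX test cannot be produced on that path.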

Policy, liability, and the regulatory landscape

AI vendors deploying consumer assistants operate at the intersection of product engineering, consumer safety law, and emerging AI regulation. Regulators have signaled increasing attention:

- U.S. consumer‑protection agencies (the FTC and state attorneys general) have asserted broad authority to pursue unfair or deceptive practices that cause consumer harm, and AI outputs that provide dangerous or misleading safety advice could fall squarely within that authority. Vendors should anticipate enforcement if they fail to adopt reasonable safety measures for foreseeable harms.

- Standards bodies and national guidance (NIST’s AI Risk Management Framework and its generative‑AI extensions) are moving from voluntary to expected practices: classification of high‑impact uses, logging, human oversight, and safety gating for sensitive domains. Product teams should map these practices into engineering requirements.

- Transparency obligations are likely to expand: consumers and regulators will demand more clarity about when AI is used, its limitations, and whether an answer comes from a human‑verified source or a generative model.

Why this matters beyond one household tip

This episode is a microcosm of a much broader challenge: generative AI systems are increasingly embedded in everyday contexts where mistakes matter. When the stakes are trivial, such as writing a dinner recipe or drafting a friendly email, an incorrect suggestion is inconvenient. When the stakes involve toxic gases, medical advice, or structural safety, the tolerance for error must be near zero.

Two structural trends make this problem urgent:

- AI is moving from optional to ambient. Voice assistants live in kitchens, bathrooms, and cars. An assistant that occasionally makes hazardous factual errors is not merely embarrassing—it is a public‑health liability.

- The scale of deployment amplifies risk. A single bad suggestion behind millions of devices is not a bug; it’s a potential mass‑exposure vector.

Recommendations: immediate actions for stakeholders

For consumers

- Treat AI assistants as helpers, not final authorities, especially for health, chemicals, or repairs.

- Verify safety‑critical information with the appliance manual, manufacturer support, or poison control.

- Never mix cleaning products; follow manufacturer concentration and dispensing instructions.

For vendors

- Deploy safety blacklists for hazardous chemical combinations today.

- Gate safety‑sensitive queries to curated, citation‑backed responses and include clear warnings and manufacturer references.

- Publish anonymized safety incident reports and the changes made to address them.

For regulators and policymakers

- Require minimal safety controls (classification, gating, logging) for consumer AI assistants that provide any procedural, medical, or chemical advice.

- Push for interoperable incident reporting standards so researchers and public‑health officials can track where agents fail and how quickly vendors remediate.

For journalists and researchers

- Continue reproducibility testing (different accounts, device versions) and insist vendors publicly confirm remediation steps when an unsafe output is found.

Conclusion

The WILX consumer tech test was a simple experiment with an outsized lesson: when an assistant offers household advice it can be more than merely wrong; it can be dangerous. The chemistry is well understood, the health risks are documented, and the fix is not purely academic: it is a combination of product design, model safety engineering, manufacturer guidance, and public‑health outreach. Vendors who build assistants into homes must treat safety‑critical domains as special cases, with conservative defaults, explicit provenance, and human oversight. Consumers must continue to treat AI as a tool, not an unquestionable authority, especially when a suggested action involves chemicals, heat, electrical systems, or medical care.

In an era where an answer is only a speech bubble or a spoken sentence away, that boundary between convenience and risk matters more than ever. The technology is powerful; the responsibility to use it safely belongs to the companies that deploy it, the regulators who oversee the market, and the users who depend on it.

Source: WILX What The Tech? When AI is wrong