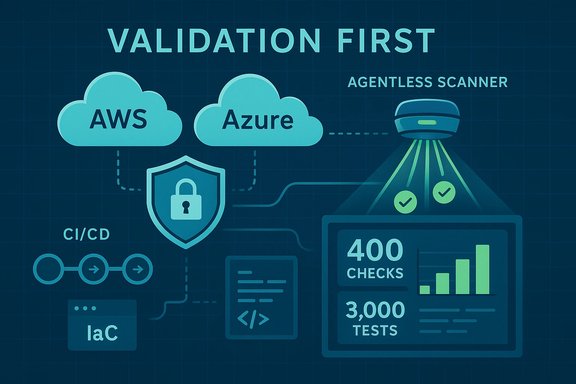

Astra’s new Cloud Vulnerability Scanner promises to turn noisy cloud posture data into actionable, validated risk by combining continuous, agentless discovery with an “offensive‑grade” validation engine that attempts exploit paths and confirms whether reported misconfigurations and weaknesses are actually exploitable in real environments.


Source: SecurityBrief Asia https://securitybrief.asia/story/astra-unveils-cloud-scanner-to-cut-misconfig-alert-noise/

Background


Cloud security tooling has long been split between discovery-first posture products (CSPM) that surface configuration issues and more intensive offensive testing (pentests) that prove exploitability. Astra argues that this split creates a practical gap: cloud environments change faster than quarterly audits, and teams drown in findings that are high in quantity but low in operational signal. The company’s response is a validation-first scanner that blends continuous posture checks with automated attack-path testing to produce a smaller set of verified, high‑impact findings. Astra’s announcement positions its scanner as specifically tuned for modern multi‑cloud operations: AWS, Microsoft Azure and Google Cloud Platform are supported via agentless, read‑only API or key connections. The vendor says the product runs more than 400 cloud‑specific checks and 3,000 automated vulnerability tests mapped to industry benchmarks such as the OWASP Top 10 and SANS Top 25, and that it triggers reanalysis whenever it detects configuration drift.

Why this matters now

Cloud environments are highly dynamic: teams add services, change IAM roles, and create temporary exceptions as part of normal development velocity. That creates two chronic problems for SecOps:

- A high volume of findings with unclear exploitability; and

- Short windows between change and potential compromise that make periodic scanning insufficient.
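The difference between periodic scanning and change-triggered reanalysis can be illustrated with a small sketch. This is not Astra's implementation; it assumes a hypothetical snapshot format (a dict mapping resource IDs to configuration dicts) and uses content hashing to spot drift and pick the resources worth re-testing. All function and resource names are illustrative.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Return a stable SHA-256 digest of a resource configuration."""
    # sort_keys makes the digest independent of key order in the snapshot.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def detect_drift(previous: dict, current: dict) -> dict:
    """Compare two snapshots ({resource_id: config}) and classify changes."""
    prev_fp = {rid: fingerprint(cfg) for rid, cfg in previous.items()}
    curr_fp = {rid: fingerprint(cfg) for rid, cfg in current.items()}
    return {
        "added": sorted(curr_fp.keys() - prev_fp.keys()),
        "removed": sorted(prev_fp.keys() - curr_fp.keys()),
        "changed": sorted(
            rid
            for rid in curr_fp.keys() & prev_fp.keys()
            if curr_fp[rid] != prev_fp[rid]
        ),
    }


def resources_to_retest(drift: dict) -> list:
    """New or modified resources are the candidates for re-validation."""
    return sorted(set(drift["added"]) | set(drift["changed"]))


if __name__ == "__main__":
    # Hypothetical snapshots; the field names are made up for illustration.
    before = {
        "bucket/logs": {"public": False, "encryption": "aws:kms"},
        "role/deploy": {"trust": ["ci"], "policies": ["ReadOnly"]},
    }
    after = {
        "bucket/logs": {"encryption": "aws:kms", "public": True},  # drifted
        "role/deploy": {"policies": ["ReadOnly"], "trust": ["ci"]},  # key order only
        "bucket/tmp": {"public": True, "encryption": None},  # new resource
    }
    print(resources_to_retest(detect_drift(before, after)))
```

In this sketch only the drifted bucket and the new bucket are queued for re-testing; the role whose keys were merely reordered is left alone, which is the point of hashing a canonical form rather than comparing raw text.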

What Astra’s scanner does — technical overview

Agentless discovery and continuous mapping

Astra deploys an agentless integration model that connects via read‑only keys or cloud provider APIs. Once connected, the scanner auto-discovers resources, identities, keys, service principals, network controls, storage buckets, and permissions that define the live attack surface. This design reduces deployment friction in complex multi‑tenant or production environments and makes it easier to add coverage across many accounts or projects quickly.

Validation‑first testing: offensive security engine

Rather than marking a finding “high” based only on static policy checks, Astra says its Offensive Security Engine runs attacker‑mode tests to determine whether an identified misconfiguration can be chained into a practical exploit path. This can include privilege escalation sequences, identity chaining, network reachability proofs, and data exfiltration attempts, all executed in safe, read‑only or non‑destructive ways that demonstrate impact. The claimed result is fewer false positives and a prioritized list of “fixes that matter.”

Checks and tests: scope and alignment

Astra reports more than 400 cloud‑specific checks (misconfigurations, permissions, policy drift) and 3,000 automated offensive test patterns mapped to common standards (OWASP, SANS) and compliance frameworks (SOC 2, ISO 27001, PCI). That breadth aims to cover both configuration hygiene and classic vulnerability classes that matter in cloud contexts.

CI/CD and toolchain integrations

The scanner integrates with CI/CD pipelines and developer tooling (GitHub, GitLab, popular CI systems), plus collaboration platforms and ticketing systems, enabling security findings to flow into developers’ workflows. That shift‑left orientation is designed to reduce friction for developers while keeping security contextualized in the same toolchain used to ship code and infra changes.

Key strengths

1. Validation reduces triage overhead

By proving exploitability rather than just listing potential misconfigurations, Astra’s model promises to cut the triage queue dramatically. Early adopters quoted in vendor and independent coverage report fewer findings to action and quicker remediation prioritization. This addresses the largest operational pain point for many teams: signal-to-noise.

2. Continuous, change‑triggered reanalysis

The scanner is built to re-evaluate resources after configuration changes, which better matches modern DevOps cadences than quarterly audits. Continuous validation helps reduce the window of exposure caused by drift or transient exceptions.

3. Developer‑friendly, agentless deployment

Agentless, read‑only integration and CI/CD hooks make it realistic to deploy in production and across many accounts without heavy operational overhead, a boon for large, distributed engineering teams.

4. Offensive testing lineage

Astra’s pedigree as a continuous pentest platform feeds this product: the firm says its test cases were developed from thousands of pentest outcomes and real exploitation patterns, which can improve the realism of validation compared with purely rule‑based CSPM solutions.

Potential weaknesses and operational risks

1. Agentless tradeoffs: telemetry limits

Agentless discovery is attractive operationally, but it relies on control‑plane metadata and management‑plane APIs. That limits the depth of runtime telemetry (processes, host‑level file changes, memory artifacts) compared with agent-based approaches. For purely control‑plane misconfigurations and IAM chains this is sufficient, but for runtime detection or container/host memory artifacts, agents or additional runtime sensors remain necessary. Teams should treat this scanner as posture + validation rather than a full runtime detection platform.

2. Validation safety and scope

Astra emphasizes offensive‑grade testing, but safety is critical when performing active validation in production. The vendor notes a read‑only model, but customers must verify the scanner’s exact test behaviors, throttling, and safeguards in their environment. Organizations should demand a clear explanation of non‑destructive testing modes and SLAs for false positives or unexpected side‑effects.

3. Coverage vs. visibility guarantees

No single scanner can prove every exploit path across highly complex, multi‑account topologies, especially when ephemeral workloads, third‑party services, or shadow accounts are involved. Validation engines can reduce noise but cannot fully replace holistic architecture reviews, manual pentests of custom logic, or runtime detection. The scanner should be considered a meaningful acceleration, not a panacea.

4. Pricing and scale questions

Astra claims a predictable, transparent pricing model without scale‑based fees, but the vendor did not publish detailed pricing at launch. Buyers should obtain clear cost models for multi‑account, multi‑region organizations and evaluate incremental cost as environments grow. Lack of published pricing means procurement teams must ask for reference pricing or pilots to estimate TCO.

How the scanner compares to CSPM, CNAPP and manual pentesting

- CSPM (Cloud Security Posture Management): primarily discovery and rules‑based alerting. High recall but widely variable precision. Astra’s scanner overlays a validation layer on top of discovery to improve precision.

- CNAPP (Cloud Native Application Protection Platform): aims to converge posture, workload protection, CIEM, SCA — often includes runtime protection via agents. Astra’s scanner addresses the posture + exploitability slice and integrates into DevOps; it does not claim to replace full workload runtime agents.

- Manual pentesting / red team: still necessary for business‑logic flaws, custom integrations, and adversary emulation. Astra’s validation engine automates large volumes of test cases and can prove many attack paths, but manual testing remains essential for nuanced, higher‑effort exploits. Astra’s heritage in continuous pentesting is a differentiator because the same human‑driven learnings feed the automated validations.
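The recall-versus-precision contrast in this comparison can be made concrete with a little arithmetic. The counts below are invented purely for illustration and are not vendor or benchmark figures; the sketch assumes a validation step that discards unconfirmed findings but may also drop a few genuinely exploitable ones.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Share of reported findings that are genuinely exploitable."""
    reported = true_positives + false_positives
    return true_positives / reported if reported else 0.0


def recall(true_positives: int, false_negatives: int) -> float:
    """Share of genuinely exploitable issues that were reported."""
    actual = true_positives + false_negatives
    return true_positives / actual if actual else 0.0


if __name__ == "__main__":
    # Hypothetical environment with 55 genuinely exploitable issues.
    # Discovery only: 1,000 findings, 50 of them exploitable, 5 issues missed.
    print(f"discovery precision: {precision(50, 950):.1%}")
    print(f"discovery recall:    {recall(50, 5):.1%}")

    # After validation: 60 findings remain, 48 of them exploitable,
    # so 7 exploitable issues are now missed in total.
    print(f"validated precision: {precision(48, 12):.1%}")
    print(f"validated recall:    {recall(48, 7):.1%}")
```

With these made-up numbers, precision rises from 5% to 80% while recall slips from roughly 91% to 87%. That trade is worth measuring during a pilot: a shorter queue is only a win if few real issues fall out of it.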

Practical advice for security teams evaluating Astra’s scanner

- Define scope and objectives. Decide whether you want the scanner for continuous CI/CD validation, perimeter posture, or audit readiness. Map desired outcomes (e.g., reduce triage time by X%, reduce mean time to remediate) and measure against them.

- Ask for an architecture walkthrough. Verify agentless modes, key management practices, throttle limits, and exactly which tests run in production vs. a staging environment. Confirm read‑only credentials and audit logs for any active checks.

- Run a targeted pilot. Start with non‑production or staging accounts, then expand to production with clear rollback and monitoring. Use the pilot to validate the scanner’s signal quality and safety.

- Validate integrations. Check CI/CD, ticketing, and SIEM connectors (e.g., GitHub, GitLab, Jira, Slack, Splunk) and ensure findings land where engineers will act.

- Confirm remediation validation. One of Astra’s selling points is verifying fixes after they’ve been applied. Ensure that re‑scans are triggered reliably and that fixes are validated in a timely manner.

- Ask for references and runbooks. Obtain case studies for environments similar to yours and examples of high‑impact exploit chains the scanner identified. Demand clarity on SLAs and incident handling if a validation test has an operational impact.
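The advice to confirm read‑only credentials can be partly automated before a pilot. The sketch below assumes an AWS‑style IAM policy document and treats actions whose verb starts with Get, List or Describe as read‑only. That prefix rule is a simplifying assumption, not an official classification, and the function name is hypothetical. Note that a read‑only action is not automatically harmless: `secretsmanager:GetSecretValue`, for instance, passes this check yet returns secret material.

```python
READ_ONLY_PREFIXES = ("get", "list", "describe")  # heuristic, see caveat above


def _as_list(value):
    """IAM allows a single string or a list for Action; normalise to a list."""
    if value is None:
        return []
    return [value] if isinstance(value, str) else list(value)


def non_read_only_actions(policy: dict) -> list:
    """Return allowed actions that do not look read-only under the heuristic."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement object is also valid
        statements = [statements]

    flagged = set()
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        # NotAction allows everything except the listed actions, so flag it.
        if "NotAction" in statement:
            flagged.add("NotAction")
        for action in _as_list(statement.get("Action")):
            _service, _sep, verb = action.partition(":")
            # IAM action names are case-insensitive; "*" has no verb part.
            if not verb.lower().startswith(READ_ONLY_PREFIXES):
                flagged.add(action)
    return sorted(flagged)


if __name__ == "__main__":
    # Hypothetical policy attached to the scanner's integration role.
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["ec2:Describe*", "s3:GetBucketPolicy", "s3:PutBucketPolicy"],
                "Resource": "*",
            },
            {"Effect": "Deny", "Action": "iam:*", "Resource": "*"},
        ],
    }
    print(non_read_only_actions(policy))
```

Here the only flagged entry is `s3:PutBucketPolicy`; anything the check surfaces is a question to put to the vendor during the architecture walkthrough.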

Governance, compliance and audit considerations

Astra maps many of its checks to compliance frameworks (SOC 2, ISO 27001, PCI‑DSS). For regulated organizations, two practical advantages stand out:

- Continuous evidence: validated findings plus proof of remediation produce stronger audit artifacts than static reports alone.

- Reduced false positives: auditors and compliance owners care less about theoretical risk and more about validated residual risk. A validation‑first approach can streamline evidence collection.

Realistic expectations and open questions

- Expect measurable triage reduction, not elimination: a validation engine reduces noise but won’t remove all investigative effort, especially for complex identity‑based chains.

- Confirm the scanner’s behavior in cross‑account and cross‑org topologies; multi‑account trust and delegated access introduce complexity in discovery and exploit validation. Ask for clear documentation on how Astra models and simulates assume‑role and cross‑tenant flows.

- Verify who owns remediation: in many organizations, remediation actions hit developer teams. Ensure the scanner’s output is consumable by SRE and engineering workflows, with clear, actionable remediation steps and the ability to attach context (blast radius, impacted assets).
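When asking how assume‑role and cross‑account flows are modelled, it helps to have a mental model of your own. One common way to reason about identity chaining, which may or may not resemble Astra's engine, is a directed graph in which an edge means "this principal can assume or act as that one". The sketch below uses breadth‑first search to find the shortest chain; every principal name is hypothetical.

```python
from collections import deque


def find_escalation_path(trust_edges: dict, start: str, target: str):
    """Breadth-first search over 'can assume' edges.

    Returns the shortest chain of principals from start to target,
    or None when the target is unreachable.
    """
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for nxt in trust_edges.get(node, ()):
            if nxt not in visited:  # avoid revisiting principals (handles cycles)
                visited.add(nxt)
                queue.append(path + [nxt])
    return None


if __name__ == "__main__":
    # Hypothetical trust relationships spanning two accounts.
    trust_edges = {
        "acct-a/dev-user": ["acct-a/ci-role"],
        "acct-a/ci-role": ["acct-b/deploy-role", "acct-a/logs-reader"],
        "acct-b/deploy-role": ["acct-b/prod-admin"],
        "acct-a/logs-reader": [],
    }
    path = find_escalation_path(trust_edges, "acct-a/dev-user", "acct-b/prod-admin")
    print(" -> ".join(path) if path else "no path")
```

A graph like this only shows that a chain is possible on paper. Validation in the sense the article describes would additionally confirm that each hop works in the live environment, which is exactly the behaviour worth asking the vendor to document.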

The vendor foothold: Astra’s credentials and credibility

Astra positions itself as a continuous pentesting platform founded in 2018. The company reports thousands of pentests, millions of detected vulnerabilities historically, and a customer base that spans hundreds (by company claims, 800–1,000+) of global organisations. Astra also highlights certifications and accreditations that include ISO 27001 and industry‑facing approvals common to pentest service providers. These vendor claims are consistent across the company’s product pages and independent press reporting, though buyers should always validate reference customers and compliance claims during procurement.

The stats debate: misconfigurations as “73%” of cloud breaches (verify before you cite)

Multiple outlets repeat that a large majority of cloud breaches stem from misconfigurations. However, the exact figure (for example, “73%”) varies by study, dataset and definition (misconfiguration vs. human error vs. error‑related incidents). Verizon’s DBIR and respected vendor analyses repeatedly show that configuration errors and human elements are dominant contributors to cloud incidents, but different reports calculate percentages with different scopes and timeframes. Buyers and journalists should avoid repeating a single percentage as canonical without pointing to the dataset and methodology; instead, emphasize the broader, robust finding: configuration and human‑driven errors dominate cloud risk.

Strategic recommendation for WindowsForum readers (CISOs, DevOps and security engineers)

- Treat validation‑first scanning as a complementary layer. Use Astra’s scanner to convert posture noise into validated, prioritized remediation tasks. Don’t expect it to replace runtime agents or manual red‑team exercises for bespoke logic flaws.

- Make remediation part of the CI/CD pipeline. Integrations that push fix tasks into developer workflows reduce friction and shrink time‑to‑remediate.

- Demand transparency on safety and telemetry. Before rolling out any active testing tool in production, document test modes, read‑only controls, and audit trails. Require a pilot and a runbook for “what if” operational impacts.

- Validate pricing and TCO. Get a clear pricing model for multi‑account, cross‑region coverage and estimate the operational savings from reduced triage overhead.
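Making remediation part of the pipeline usually comes down to a gate that blocks a release when validated, high‑severity findings are open. The sketch below shows one way such a gate could look. The finding schema (`id`, `severity`, `validated`) is hypothetical and not Astra's export format, so the field names would need to be mapped to whatever the scanner actually emits.

```python
import sys

SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def blocking_findings(findings: list, min_severity: str = "high") -> list:
    """Keep only findings that are validated and at or above the threshold."""
    threshold = SEVERITY_RANK[min_severity]
    return [
        f
        for f in findings
        if f.get("validated") is True
        and SEVERITY_RANK.get(str(f.get("severity", "")).lower(), 0) >= threshold
    ]


def gate(findings: list, min_severity: str = "high") -> int:
    """Return a process exit code: 1 blocks the pipeline, 0 lets it continue."""
    blockers = blocking_findings(findings, min_severity)
    for f in blockers:
        print(f"BLOCKING {f.get('id', '<unknown>')}: {f.get('severity')}", file=sys.stderr)
    return 1 if blockers else 0


if __name__ == "__main__":
    # Hypothetical scanner output; a real job would load this from a report file.
    sample = [
        {"id": "F-1", "severity": "critical", "validated": True},
        {"id": "F-2", "severity": "high", "validated": False},  # unproven, not blocking
        {"id": "F-3", "severity": "medium", "validated": True},  # below threshold
    ]
    print("exit code:", gate(sample))
```

In a real job the return value would be passed to `sys.exit`. Gating only on validated findings keeps the pipeline from stalling on theoretical issues, which is the practical payoff of the validation‑first model.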

Conclusion

Astra’s Cloud Vulnerability Scanner takes a timely and pragmatic approach to a persistent cloud problem: too many findings, too little proof of impact. By combining continuous discovery with offensive‑mode validation and developer‑centric integrations, the product aims to reduce alert noise and make cloud posture actionable. The approach is well aligned with the operational realities of multi‑cloud DevOps environments, but it also raises familiar tradeoffs, particularly around agentless visibility limits, safe testing in production, and the need for complementary runtime telemetry.

For security leaders, the choice is not binary: validation‑first scanners like Astra’s are a practical way to accelerate risk reduction and prioritize scarce remediation resources, provided teams scrutinize safety, coverage, integration, and pricing during pilots. Misconfigurations remain a dominant cloud risk; tools that prove exploitability will help teams focus on real threats rather than theory.
