Atos has made its Autonomous Data & AI Engineer available on Microsoft Azure, a packaged agentic DataOps solution built on the newly introduced Atos Polaris AI Platform and delivered for enterprise analytics through prebuilt integrations with Azure Databricks and Snowflake on Azure.

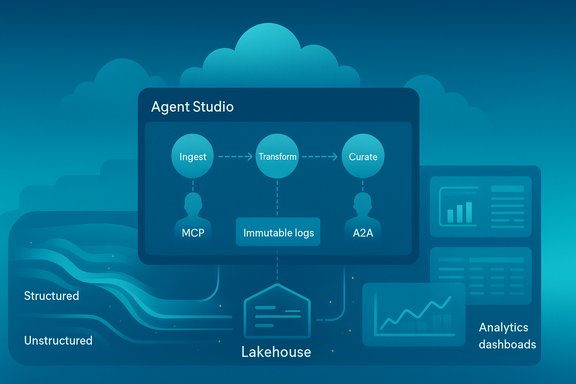


Background / Overview


Atos’ announcement positions the Autonomous Data & AI Engineer as a purpose-built, agentic automation layer for the end-to-end data engineering lifecycle: ingestion, cleansing, transformation, curation and hand-off to analytics or downstream AI agents. The offering is marketed as a ready-to-deploy solution on the Microsoft Azure ecosystem and is already listed in the Microsoft Marketplace with separate entries for Databricks and Snowflake implementations.

The product is powered by the Atos Polaris AI Platform, Atos’ agentic framework introduced earlier in 2025, which provides an Agent Studio (no-code orchestration), prebuilt agents, and agent operations features intended to enable development, test, deployment and governance for multi-agent workflows. Atos has explicitly framed Polaris as a platform for “automation of automation,” enabling agents to plan, reason, act and learn. Atos states the Autonomous Data & AI Engineer was demonstrated at Microsoft Ignite and will be available via the Azure Marketplace, reflecting a partner-first go-to-market strategy that emphasizes fast proofs-of-value for Azure-first enterprises.

What the Autonomous Data & AI Engineer claims to do

Atos’ public materials and marketplace listings describe a cohesive set of capabilities intended for enterprise DataOps and analytics teams:

- Autonomous ingestion of structured and unstructured sources into Azure Databricks or Snowflake on Azure.

- Automated data quality checks, schema reconciliation and transformation into analytics-ready views.

- A no-code Atos Polaris Agent Studio for composing and orchestrating multi-agent workflows that can be configured by both technical and non-technical users.

- Integration with Large Language Models (LLMs) and tooling using open agent protocols such as the Model Context Protocol (MCP) and Agent-to-Agent (A2A) patterns.

- Handoff and composition with visualization or conversational insight agents to make curated data accessible to business users.

Technical fit and standards: why Azure, Databricks and Snowflake?

Atos targets two common enterprise analytics back-ends on Azure (Databricks and Snowflake), which makes operational sense for organizations that have standardized on those platforms for lakehouse or data-warehouse workloads. Marketplace packaging for each platform implies Atos has built reference connectors and deployment templates rather than delivering only bespoke consultancy engagements.

A core technical theme of the announcement is interoperability with Microsoft’s evolving agent ecosystem. The Atos materials call out open integration approaches such as the Model Context Protocol (MCP) and Agent-to-Agent (A2A) patterns, protocols that Microsoft’s Copilot Studio and Azure agent tooling have adopted to enable controlled tool access and cross-agent collaboration. The availability of MCP support in Copilot Studio and the general move toward standard tool protocols materially lowers friction when composing agents that must call external systems in enterprise environments.

The integration points called out in Atos’ marketplace listings include Azure Data Factory, Power BI, Databricks, Snowflake and Azure AI Services. This creates clear pathways for agents to ingest from canonical sources, transform inside Databricks or Snowflake, and surface outputs into visualization or conversational surfaces.

Atos Polaris AI Platform: the orchestrator beneath the product

Atos Polaris is the foundational platform behind the Autonomous Data & AI Engineer. Polaris is described as a full-stack agent framework with:

- A no-code Agent Studio for building, composing and testing multi-agent workflows;

- Pre-built agent templates for common functions (DataOps, IT support, QA and others);

- AgentOps features for compliance, cost and performance management;

- Support for open agent orchestration patterns and runtime interoperability.

Responsible AI and governance: vendor claims vs. operational reality

Atos states the solution is grounded in Microsoft Responsible AI principles and will run under Azure governance primitives. Microsoft’s responsible AI guidance and tooling are indeed designed for agentic systems and emphasize six core principles (fairness, reliability & safety, privacy & security, inclusiveness, transparency and accountability) as well as specific operational practices for agentic workloads (audit trails, least-privilege identities, circuit-breakers).

These capabilities exist in Microsoft’s platform (Entra for identity, Azure Policy and Purview for governance, observability via Azure Monitor and logging), but buyers must confirm the Atos runtime wires them into agent execution flows and not only into high-level marketing descriptions.

Key governance controls to demand in any production deployment include:

- Agent identity as first-class principals in Entra ID with least-privilege permissions and credential rotation.

- Immutable action logs capturing agent plans, tool calls, inputs and outputs for provenance and auditability.

- Shadow or propose-only modes for validating agent proposals before write operations occur.

- Circuit-breakers and fail-safe behavior (rate limits, human-in-loop gates) for any high-impact transformations.

- Integration with SIEM/SOAR and evidence of independent test harnesses, red-team results or third-party validation.
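To make the "immutable action logs" requirement above more concrete, the following is a minimal sketch of a hash-chained, append-only log in which each entry commits to the hash of the previous one, so later tampering becomes detectable. It is not Atos' or Microsoft's implementation: the `ActionLog` class, its field names and the sample agent name are hypothetical, and a real deployment would also need write-once storage and access controls, which this sketch does not provide.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over a JSON-serializable entry body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


@dataclass
class ActionLog:
    """Hypothetical tamper-evident log of agent plans and tool calls."""
    entries: list = field(default_factory=list)

    def append(self, agent_id: str, action: str, payload: dict) -> str:
        # Each entry records the hash of the entry before it.
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "agent_id": agent_id,
            "action": action,
            "payload": payload,
            "prev_hash": prev_hash,
        }
        entry_hash = _digest(body)
        self.entries.append({**body, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        # Recompute every hash and check the chain links in order.
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

An auditor could call `verify()` periodically; the chain only proves that recorded entries were not altered afterwards, not that every agent action was recorded in the first place.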

Practical capabilities and developer ergonomics

The Autonomous Data & AI Engineer appears designed to reduce friction for both engineers and business users through:

- A no-code Agent Studio that lets domain experts and engineers compose multi-agent flows without building everything from scratch.

- Pre-built connectors and templates for common enterprise sources and destinations (Databricks, Snowflake, Azure Data Factory, Power BI).

- MCP-compatible tool integration so agent toolsets can be updated and discovered dynamically by the orchestration layer.

- Agent-to-Agent cooperation so specialized agents (ingest, QA, transform, curator) can coordinate and hand off tasks inside a larger workflow.

What to validate in a pilot — a recommended checklist

- Scope and success metrics

  - Choose one high-value ingestion source and one analytics consumer.

  - Define KPIs: time-to-ingest, time-to-curate, ticket-handling time, impact on downstream model accuracy.

- Security and identity

  - Confirm agent identities are managed in Entra with least privilege and rotation.

  - Validate network controls (VNet integration, private endpoints) and region/data residency constraints.

- Governance and auditability

  - Enable immutable logging of agent plans, inputs/outputs and tool invocations.

  - Run in propose-only shadow mode until confidence thresholds are met.

- Testing, reproducibility and rollback

  - Require unit tests, schema checks and automated dry-runs for transformations.

  - Validate rollback procedures and circuit-breaker triggers.

- Cost modelling

  - Estimate inference, orchestration and durable-state storage costs under expected concurrency.

  - Confirm billing visibility and tagging for cost allocation.

- Interoperability and portability

  - Test MCP-based tool connectivity and Agent-to-Agent communications.

  - Ask for exportable agent definitions (so agents are not locked into a single vendor runtime).
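For the cost-modelling item in the checklist above, a back-of-the-envelope estimate can be sketched as follows. Every name and number here is an assumption for illustration: the `CostAssumptions` fields, the flat per-token price and the per-run orchestration charge are hypothetical and do not reflect actual Azure or Atos pricing, which should be taken from current price sheets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostAssumptions:
    """Hypothetical inputs for a rough monthly cost estimate."""
    runs_per_day: int                  # agent workflow runs per day
    llm_calls_per_run: int             # LLM calls made by one run
    tokens_per_call: int               # prompt + completion tokens per call
    price_per_1k_tokens: float         # assumed blended price, in your currency
    orchestration_cost_per_run: float  # assumed compute/orchestration cost per run
    state_gb: float                    # durable agent state retained, in GB
    price_per_gb_month: float          # assumed storage price per GB-month


def monthly_cost(a: CostAssumptions, days: int = 30) -> dict:
    """Return a rough breakdown of monthly inference, orchestration and storage cost."""
    runs = a.runs_per_day * days
    tokens = runs * a.llm_calls_per_run * a.tokens_per_call
    inference = tokens / 1000 * a.price_per_1k_tokens
    orchestration = runs * a.orchestration_cost_per_run
    storage = a.state_gb * a.price_per_gb_month
    return {
        "inference": inference,
        "orchestration": orchestration,
        "storage": storage,
        "total": inference + orchestration + storage,
    }
```

Re-running the estimate with higher `runs_per_day` or `llm_calls_per_run` gives a quick sense of how concurrency and chatty agents move the total before any real billing data exists.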

Strengths — where Atos’ approach brings immediate value

- Packaged, Azure-native delivery: Marketplace listings for Databricks and Snowflake simplify procurement and jump-start PoC deployments.

- Standards orientation: Adoption of MCP and A2A patterns increases the chance that agent workflows will be portable and auditable across Azure agent tooling.

- No-code orchestration: Agent Studio reduces the barrier for domain experts to compose agents, which can accelerate iteration and alignment between business and engineering.

- End-to-end narrative: Combining ingestion, curation and insight hand-offs into a single packaged offering reduces integration complexity for teams building end-to-end analytics or RAG pipelines.

Risks and caveats — what can go wrong, and how to mitigate it

- Vendor-reported ROI needs independent validation. Headline figures such as “up to 60% faster” or “up to 35% lower costs” are plausible in well-scoped, repeatable scenarios but depend on your baseline, data quality and governance maturity. Treat these as vendor targets until proven in your environment.

- Silent data-change risk. Automated transformations that lack schema guards, unit tests and provenance tracing can silently degrade downstream analytics and models. Require layered testing and immutable provenance.

- Expanded attack surface. Agentic systems introduce new identity and execution principals; improperly scoped agent identities, poorly configured MCP servers, or lax A2A authentication can enable escalation or lateral movement. Treat MCP integrations as untrusted inputs and enforce execution privileges in the infrastructure layer.

- Cost unpredictability. Agents that make repeated LLM calls, persist state, or spawn many concurrent workflows can generate higher-than-expected consumption on Azure. Model and simulate costs with your Microsoft account team before scaling.

- Protocol and standards maturity. MCP and A2A are powerful interoperability patterns but depend on ecosystem adoption and careful security design. Do not assume portability until connectors and agent artifacts are demonstrably exportable.
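The silent data-change risk described above is usually addressed with a schema guard that runs before a transformation's output is accepted. The sketch below shows one simple form of such a check; the `EXPECTED_SCHEMA` columns and the `schema_violations` helper are hypothetical, and production pipelines on Databricks or Snowflake would more likely rely on the platform's own schema enforcement or a dedicated data-quality tool.

```python
# Hypothetical expected schema for a curated table: column name -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def schema_violations(rows: list, expected: dict) -> list:
    """Return human-readable problems; an empty list means the batch passed."""
    problems = []
    for i, row in enumerate(rows):
        # Columns that disappeared or appeared unexpectedly.
        for col in sorted(expected.keys() - row.keys()):
            problems.append(f"row {i}: missing column '{col}'")
        for col in sorted(row.keys() - expected.keys()):
            problems.append(f"row {i}: unexpected column '{col}'")
        # Columns whose values changed type.
        for col, expected_type in expected.items():
            if col in row and not isinstance(row[col], expected_type):
                actual = type(row[col]).__name__
                problems.append(
                    f"row {i}: column '{col}' expected "
                    f"{expected_type.__name__}, got {actual}"
                )
    return problems
```

A pipeline step could refuse to publish a batch whenever the returned list is non-empty, turning a silent drift into an explicit, reviewable failure.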

How this compares to alternatives

Several systems integrators and platform vendors are racing to productize agentic DataOps stacks. The meaningful differentiators for buyers will be:

- Runtime governance: Does the product make it easy to configure identity, approvals, and immutable provenance?

- Standards compliance: Is MCP/A2A used in a way that supports portability?

- Reproducible outcomes: Can the vendor provide reproducible PoC results on representative data shapes?

- Operational SLAs: Does the vendor accept operational responsibility if an agent’s action causes production impact?

For architects: integration patterns and hardening checklist

- Treat agents as directory objects: register, tag, assign cost centers and include them in identity reviews.

- Apply least privilege and ephemeral credentials for agent identities; enable conditional access and rotation.

- Require agent action plans and store prompts, plan graphs and tool outputs as immutable artifacts for audits.

- Implement shadow/propose-only modes for new agents and enforce dry-run testing before enabling writes.

- Use Azure Policy and Purview as gating inputs to prevent agents from touching classified or restricted resources.

- Red-team agent behaviors and run adversarial prompt-injection tests against MCP/A2A connectors.
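Two items in the checklist above, propose-only mode and circuit-breakers, can be combined in a thin wrapper around any write an agent wants to perform. The following is a minimal sketch under stated assumptions: `GuardedExecutor`, its status strings and the failure threshold are hypothetical names chosen for illustration, not part of Atos Polaris or any Azure SDK.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GuardedExecutor:
    """Hypothetical gate placed in front of agent write operations."""
    propose_only: bool = True   # start in shadow mode: record, never write
    max_failures: int = 3       # trip the breaker after this many failed writes
    failures: int = 0
    proposals: list = field(default_factory=list)

    @property
    def tripped(self) -> bool:
        return self.failures >= self.max_failures

    def submit(self, description: str, write: Callable[[], None]) -> str:
        """Return 'blocked', 'proposed', 'applied' or 'failed'."""
        if self.tripped:
            # Circuit breaker is open: refuse all further writes.
            return "blocked"
        if self.propose_only:
            # Shadow mode: keep the proposal for human review only.
            self.proposals.append(description)
            return "proposed"
        try:
            write()
        except Exception:
            self.failures += 1
            return "failed"
        return "applied"
```

Flipping `propose_only` to `False` would be the explicit, auditable step that moves an agent from shadow mode to live writes; resetting a tripped breaker is deliberately left as a manual action in this sketch.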

Procurement and contract recommendations

- Insist on measurable acceptance criteria for PoCs (throughput, accuracy, MTTR reductions, downstream model impact).

- Require exportable agent definitions, artifact snapshotting and evidence of MCP/A2A interoperability.

- Negotiate incident response SLAs that clearly define vendor responsibilities for agent-driven production incidents.

- Demand transparency on cost drivers (model choice, concurrency, durable storage of agent state) and chargeback reporting.

Final assessment — who should evaluate the offering now

- Azure-first organizations using Databricks or Snowflake that have clear, repeatable ingestion patterns and an urgent backlog can benefit from a marketplace-ready agentic solution to accelerate PoCs. The packaging and connectors reduce integration friction.

- Organizations with mature governance, identity and observability practices will be in the best position to pilot agentic automation safely and to validate Atos’ ROI claims.

- Regulated sectors (finance, healthcare, public sector) must proceed with caution: insist on shadow-mode validation, strong human-in-loop gating and contractual protections for data residency and auditability.

Quick reference: action plan for a 12-week pilot and follow-on rollout

- Week 0–2: Scoping — pick one dataset and analytics consumer; define KPIs and rollback thresholds.

- Week 2–4: Sandbox deploy — install the Atos solution in a sandbox tenant; connect sample data sources; enable propose-only mode.

- Week 4–8: Validation & testing — run dry-runs, schema checks and unit tests; measure quality metrics vs baseline.

- Week 8–12: Shadow mode & human-in-loop — allow agents to propose; collect false-positive/false-negative rates and decision traces.

- Months 3–6: Limited production — enable low-risk, idempotent automations under approval gates and monitor telemetry.

- Months 6+: Scale & iterate — expand sources and automation once SLOs and governance prove reliable.
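The shadow-mode phase in the plan above calls for collecting false-positive and false-negative rates. One way to compute them, assuming each agent proposal is later labelled by a human reviewer, is sketched below; the `shadow_mode_rates` function and the pair-of-booleans input format are hypothetical conventions for this example.

```python
def shadow_mode_rates(decisions: list) -> dict:
    """Compute error rates from (agent_proposed_change, reviewer_says_needed) pairs."""
    tp = fp = tn = fn = 0
    for proposed, needed in decisions:
        if proposed and needed:
            tp += 1   # agent proposed a change the reviewer agreed with
        elif proposed and not needed:
            fp += 1   # agent proposed an unnecessary change
        elif not proposed and needed:
            fn += 1   # agent missed a change the reviewer wanted
        else:
            tn += 1   # agent correctly left the data alone
    return {
        # Share of "no change needed" cases where the agent acted anyway.
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        # Share of "change needed" cases the agent missed.
        "false_negative_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }
```

Tracking these two rates week over week gives a concrete basis for the confidence thresholds that decide when an agent may leave propose-only mode.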

Atos’ product announcement is timely: Microsoft’s own agent tooling, MCP support and responsible AI investments make the Azure ecosystem a natural place to productize agentic DataOps. The Atos Polaris AI Platform adds a systems-level orchestration layer and marketplace packaging that can accelerate enterprise evaluation. The compelling business case is shorter time-to-insight and reduced data engineering toil — but the practical reality for safe, reliable automation depends on disciplined pilot design, explicit governance integration and contractual protections that align vendor commitments with enterprise risk tolerances. This move by Atos shifts the conversation from architectural possibility to operational readiness: the question for IT leaders is no longer whether agentic DataOps can be built, but whether their organization is ready to safely operate, govern and economically sustain it at scale.

Source: Telecompaper