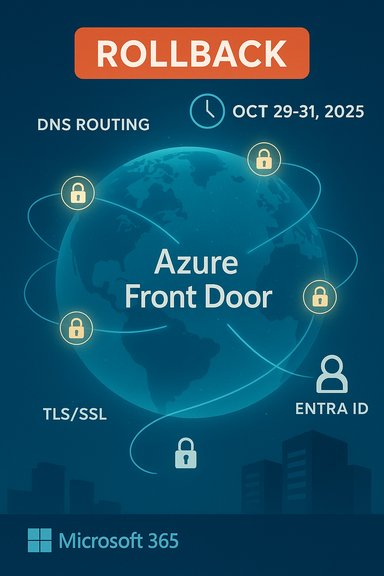

On October 29–31, 2025, Microsoft’s cloud experienced a high‑visibility disruption that left Microsoft 365 users, game players, retailers and several high‑profile consumer services intermittently unreachable. Engineers traced the proximate trigger to a configuration error in Azure Front Door, Microsoft’s global edge and application delivery fabric, and recovery proceeded via a rollback to a “last known good” configuration and targeted node recovery.

Source: DesignTAXI Community Is Microsoft 365 down? [October 31, 2025]

Background / Overview

Microsoft 365 is not a single monolithic server; it is an ecosystem of services that rely on multiple Azure infrastructure layers. Two architectural components are central to the October incident:

- Azure Front Door (AFD): a global Layer‑7 edge, TLS termination and routing fabric that fronts many Microsoft first‑party endpoints and thousands of customer workloads.

- Microsoft Entra ID (formerly Azure AD): the centralized identity control plane used for sign‑in, token issuance and authentication across Microsoft 365, Xbox, and other services.

What happened (concise timeline)

Detection and first public signals

- External monitors and user reports spiked on October 29, 2025 beginning around 16:00 UTC as elevated latencies, DNS anomalies and gateway failures were observed for endpoints fronted by AFD. Social feeds and outage trackers (Downdetector) showed rapid surges in user complaints for Azure, Microsoft 365, Outlook and Teams.

Microsoft’s immediate actions

- Microsoft identified an inadvertent configuration change in Azure Front Door as the proximate trigger and initiated containment steps: block further AFD configuration rollouts, deploy a rollback to the most recent validated configuration (“last known good”), fail the Azure Portal away from AFD to restore management access where possible, and begin recovering edge nodes and re‑routing traffic through healthy Points‑of‑Presence (PoPs). Public status messages described a staged rollback and progressive recovery.
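The containment pattern described above (freeze further rollouts, then revert to the most recent validated configuration) can be sketched in a few lines. This is a toy model under stated assumptions, not Microsoft's implementation: the `ConfigStore` class, its method names and the sample configurations are all hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass, field


@dataclass
class ConfigStore:
    """Toy control-plane store that tracks a validated fallback configuration."""
    active: dict
    last_known_good: dict
    rollouts_blocked: bool = False
    history: list = field(default_factory=list)

    def apply(self, candidate: dict) -> bool:
        """Deploy a candidate unless rollouts are frozen."""
        if self.rollouts_blocked:
            return False
        self.history.append(self.active)
        self.active = candidate
        return True

    def mark_validated(self) -> None:
        """Promote the active configuration once it has proven healthy."""
        self.last_known_good = dict(self.active)

    def contain(self) -> dict:
        """Freeze further rollouts, then revert to the validated configuration."""
        self.rollouts_blocked = True
        self.history.append(self.active)
        self.active = dict(self.last_known_good)
        return self.active


# Hypothetical configurations, for illustration only.
good = {"version": 41, "routes": {"/": "origin-a"}}
store = ConfigStore(active=dict(good), last_known_good=dict(good))
store.apply({"version": 42, "routes": {}})   # faulty change reaches the fleet
restored = store.contain()                   # freeze rollouts and roll back
blocked = store.apply({"version": 43, "routes": {"/": "origin-b"}})
print(restored["version"], blocked)
```

The ordering inside `contain` is the point of the sketch: rollouts are frozen before the revert, so a queued change cannot overwrite the restored configuration.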

Recovery window

- The rollback and node recovery actions produced visible improvement over several hours; user report volumes on outage trackers fell as traffic rebalanced. Because DNS TTLs, CDN caches and ISP routing converge at different speeds, some tenant‑specific or geographic residual effects lingered into the following day. Microsoft reported progressive restoration while engineering teams continued monitoring.
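The lingering tail can be reasoned about with simple arithmetic: a cache that fetched a bad answer shortly before the fix keeps serving it until its TTL expires. The sketch below computes that tail for a handful of caches. Every timestamp and TTL is hypothetical, and the `stale_tail` helper is an illustration, not a measurement of this incident.

```python
from datetime import datetime, timedelta, timezone


def stale_tail(fix_time, cache_entries):
    """Return how long after fix_time the slowest cache keeps its stale answer.

    cache_entries: iterable of (fetched_at, ttl_seconds) pairs.
    """
    expiries = [fetched + timedelta(seconds=ttl) for fetched, ttl in cache_entries]
    latest = max(expiries)
    return max(latest - fix_time, timedelta(0))


# Hypothetical fix time and cache states.
fix = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
entries = [
    (fix - timedelta(seconds=30), 60),     # short TTL: stale for 30 s more
    (fix - timedelta(seconds=10), 300),    # 5-minute TTL: stale for 290 s more
    (fix - timedelta(minutes=5), 3600),    # resolver holding the record for 1 hour
]
print(stale_tail(fix, entries))
```

In this example the one‑hour entry dominates: the fix is already in place, yet one population of clients sees the old answer for another 55 minutes.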

Who and what were affected

The incident had a broad blast radius due to how many endpoints are fronted by AFD:

- Microsoft 365 web apps (Outlook on the web, Teams web, SharePoint, and admin center): users experienced sign‑in failures, blank or partially rendered admin blades, and interrupted meetings.

- Azure Portal and management surfaces: some blades failed to load; administrators reported limited GUI access (programmatic APIs often remained available).

- Gaming and consumer identity flows: Xbox Live, Minecraft, Game Pass storefronts and cloud gaming experienced sign‑in or storefront issues where Entra ID tokens were impacted.

- Third‑party customer sites: numerous enterprise and retail sites using AFD for routing and TLS termination reported 502/504 gateway errors; airlines and large retail chains reported check‑in and point‑of‑sale problems. Downdetector aggregated tens of thousands of user reports across Azure and Microsoft 365 at peak.

Microsoft’s public explanation and operational choices

Microsoft’s public messaging during the incident centered on three themes:

- Acknowledgement and investigation: the company confirmed an issue with Azure Front Door and said engineering teams were rolling back the control plane configuration.

- Containment actions: blocking further AFD changes, failing the portal away from impacted fabrics, and deploying the “last known good” configuration.

- Progressive restoration: Microsoft reported improvement as recovery actions completed and traffic was routed through healthy PoPs.

Technical anatomy: why an edge control‑plane problem cascades widely

AFD’s role is to act as the global ingress and routing glue for secure HTTP(S) traffic: it terminates TLS, performs Layer‑7 routing, applies WAF rules, and forwards requests to origin services. When a control‑plane configuration change is applied across a distributed fleet of PoPs and something in that change behaves incorrectly, the distributed effect is immediate and global. Key failure modes include:

- DNS and routing anomalies that lead browsers and clients to unexpected PoPs or to failed TLS hostnames.

- Authentication token issuance failures when Entra ID’s fronting or routing is affected, producing mass sign‑in failures for downstream apps.

- Management‑plane coupling where the same edge fabric fronts both user‑facing services and admin portals; when both are impacted, administrators lose GUI tools that would normally assist triage.
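One way to make the cascade concrete is to model services as a dependency graph and ask which of them transitively depend on a failed component. The map below is hypothetical and heavily simplified (the service names are placeholders, not Microsoft's real topology); it only illustrates why a fault in a shared edge layer reaches identity and, through identity, everything that signs in.

```python
from collections import deque

# Hypothetical dependency map: each service lists what it depends on.
DEPENDS_ON = {
    "edge-fabric": [],
    "identity": ["edge-fabric"],
    "admin-portal": ["edge-fabric", "identity"],
    "web-mail": ["edge-fabric", "identity"],
    "game-signin": ["identity"],
    "batch-api": [],
}


def blast_radius(failed, depends_on):
    """Return every service that transitively depends on the failed component."""
    # Invert the map so we can walk from a component to its dependents.
    dependents = {name: [] for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents[dep].append(service)

    seen, queue = set(), deque([failed])
    while queue:
        for child in dependents[queue.popleft()]:
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


print(sorted(blast_radius("edge-fabric", DEPENDS_ON)))
```

Note that `game-signin` never touches the edge directly in this toy map, yet it still falls inside the radius because its identity dependency does. `batch-api`, which has no path to the edge, is unaffected.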

Immediate user guidance (what to do if you were affected)

- Check Microsoft’s official status channels (Microsoft 365 Service Health and Azure status updates) for tenant‑specific advisories.

- For mission‑critical email access, switch to local/desktop Outlook clients if already configured for cached mode; desktop clients can service offline mail and queued send operations while web sign‑in flows are unavailable.

- If you are an admin, use programmatic management APIs (PowerShell, REST API) where possible — programmatic back‑end APIs are often unaffected when front‑end portals are degraded. Collect logs and error identifiers to open a support case.

- Preserve screenshots, timestamps and affected tenant IDs; these help both Microsoft support and post‑incident reconciliation.
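Evidence collection is easier when each observation is captured as a structured, timestamped record rather than a screenshot alone. The following is a minimal sketch using only the Python standard library. The `record_probe` helper, the field names, the example URL and the placeholder tenant ID are assumptions for illustration and are not part of any Microsoft tooling.

```python
import json
import urllib.error
import urllib.request
from datetime import datetime, timezone


def http_status(url, timeout=10):
    """Fetch a URL and return its HTTP status code (error responses included)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # e.g. 502 or 504 returned by a gateway


def record_probe(url, tenant_id, fetch=http_status):
    """Return one timestamped evidence record for a probed endpoint."""
    entry = {
        "utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "tenant_id": tenant_id,
        "url": url,
    }
    try:
        entry["status"] = fetch(url)
    except Exception as err:  # DNS, TLS and timeout failures land here
        entry["error"] = f"{type(err).__name__}: {err}"
    return entry


# Demo with a stand-in fetcher so the sketch runs without network access.
def fake_gateway_error(url):
    return 504


entry = record_probe(
    "https://portal.example.com/",
    "00000000-0000-0000-0000-000000000000",  # placeholder tenant ID
    fetch=fake_gateway_error,
)
print(json.dumps(entry))
```

Appending these records to a file, one JSON object per line, gives a timeline that can be attached to a support case as is.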

Enterprise resilience: practical mitigations and recommendations

The October outage is a reminder that cloud convenience brings concentration risk. Here are concrete steps IT teams should prioritize now:

- Validate and exercise incident runbooks specifically for identity and edge fabric failures: simulate scenarios where Entra ID and front‑end routing are impaired.

- Harden identity fallbacks:

  - Ensure service accounts and automation have appropriate refresh token lifetimes and fallback credentials where safe.

  - Evaluate conditional access policies that could prevent logins during abnormal token delays.

- Design multi‑path management access:

  - Establish out‑of‑band admin paths (separate network egress, vendor console alternatives) so teams can manage resources if portals are affected.

  - Shorten DNS TTLs strategically for critical endpoints where faster failover makes sense, but balance with cache pressure and DDoS considerations.

- Reduce blast radius of a single control‑plane:

  - Where possible, avoid over‑centralizing public fronting on a single provider product for mission‑critical public endpoints.

  - Consider active‑passive or multi‑provider routing for critical customer‑facing services (e.g., API Gateway in front of multiple clouds or CDNs).

- Test desktop/offline workflows for business continuity (email, document editing), and ensure staff are aware of alternate collaboration channels for outages.

- Demand and track post‑incident reports (PIRs) from cloud providers — these should include root cause, remediation actions, and steps to prevent recurrence.
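The active‑passive idea in the list above can be sketched as a small routing decision: send traffic to the primary frontend unless it has failed several consecutive health checks. This is a simplified illustration. The class names, the threshold of three failures and the frontend labels are hypothetical, and a production setup would normally implement the switch in DNS or a traffic manager rather than in application code.

```python
from dataclasses import dataclass


@dataclass
class Frontend:
    name: str
    consecutive_failures: int = 0


class ActivePassiveRouter:
    """Prefer the primary frontend; fail over after repeated failed health checks."""

    def __init__(self, primary, secondary, failure_threshold=3):
        self.primary = primary
        self.secondary = secondary
        self.failure_threshold = failure_threshold

    def report(self, frontend, healthy):
        """Record one health-check result for a frontend."""
        if healthy:
            frontend.consecutive_failures = 0
        else:
            frontend.consecutive_failures += 1

    def current(self):
        """Return the frontend that should receive traffic right now."""
        if self.primary.consecutive_failures >= self.failure_threshold:
            return self.secondary
        return self.primary


router = ActivePassiveRouter(Frontend("primary-edge"), Frontend("secondary-cdn"))
for _ in range(3):
    router.report(router.primary, healthy=False)
during_outage = router.current().name
router.report(router.primary, healthy=True)   # primary recovers
after_recovery = router.current().name
print(during_outage, after_recovery)
```

Requiring consecutive failures before switching avoids flapping on a single bad probe; the trade‑off is a slower failover, which is the same tension the DNS TTL recommendation describes.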

What Microsoft did well — and where concerns remain

Strengths:

- Rapid containment and rollback: The company moved quickly to block further AFD configuration changes and to deploy a known‑good configuration. That rapid action is the correct immediate containment tactic for a control‑plane regression.

- Visible status updates: Microsoft used its status channels and public updates to acknowledge the incident and to provide progressive mitigation updates, which helps tame speculation and reduces duplicate incident handling by customers.

Concerns:

- Control‑plane fragility and blast radius: AFD is powerful, but that power translates to single‑point blast potential when global changes can touch many PoPs. Oversight and gating models for global control‑plane changes may need strengthening.

- Operational transparency gap: Multiple commentators and customer forums noted that Microsoft had not, at the time of early reporting, published a fully detailed post‑incident review with itemized root cause and mitigation timeline. That makes supplier risk assessments and vendor trust rebuilding harder for enterprises. Treat any precise technical assertions about the exact nature of the configuration change as provisional until Microsoft issues a formal PIR.

- Residual tail effects: Even after rollback, DNS TTLs and global caches produce an operational tail — some customers may experience lingering symptoms well after the core issue is fixed. Organizations must plan for this “long tail” in communications and SLAs.

Cross‑checks and source corroboration

Key claims in this article were cross‑checked with independent reporting and contemporaneous outage telemetry:

- The proximate trigger (an Azure Front Door configuration change) and Microsoft’s rollback to a last‑known‑good configuration are described in Microsoft’s status updates and corroborated by Reuters and Cybernews reporting.

- Downdetector and major outlets (Sky News, Economic Times) reported large spikes in user reports (Azure in the tens of thousands, Microsoft 365 thousands at peak) during the incident window — those independent sources align on the broad scale of user complaints. Note that Downdetector is a crowdsourced signal and should be interpreted as indicative rather than an authoritative count.

- Windows‑community reconstructions and forum threads (including DesignTAXI and Windows Forum posts) provide on‑the‑ground user reports, admin accounts and a technical reconstruction that matches public reporting on edge+identity coupling and remediation actions. These community records document the symptoms admins and users experienced during the incident.

Long‑term implications for customers and the cloud industry

This outage drives home several structural lessons:

- Concentration risk is real. As hyperscalers sit at the junction of identity and edge routing, a single control‑plane failure can cascade into multi‑industry disruption.

- Operational rigor matters for changes that touch global fleets — staged rollouts, stronger rollout gating and automated rollback triggers are essential at hyperscale.

- Contract and procurement decisions should consider not just SLAs but transparency guarantees (timely PIRs), runbooks for cross‑tenant communications, and technical options for multi‑path routing.

- Customers should demand richer signals from providers when control‑plane changes are scheduled or when abnormal telemetry suggests degraded edge behavior.
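The staged‑rollout lesson above can be illustrated with a short sketch: a change is applied to a canary stage first, a health gate is checked after each stage, and a failed gate reverts every node. All names here (`staged_rollout`, the PoP labels, the 1% error threshold, the simulated fault) are hypothetical, and the sketch makes no claim about how any hyperscaler actually gates its changes.

```python
def staged_rollout(stages, new_config, old_config, error_rate, max_error_rate=0.01):
    """Apply new_config stage by stage; revert everything if a stage fails its gate.

    stages: list of lists of node names, ordered from canary to full fleet.
    error_rate: callable(node, config) -> observed error rate for that node.
    Returns (node -> config mapping, outcome string).
    """
    deployed = {node: old_config for stage in stages for node in stage}
    for stage in stages:
        for node in stage:
            deployed[node] = new_config
        # Health gate: the worst node in the stage must stay under the threshold.
        worst = max(error_rate(node, new_config) for node in stage)
        if worst > max_error_rate:
            for node in deployed:          # automated rollback trigger
                deployed[node] = old_config
            return deployed, "rolled_back"
    return deployed, "completed"


stages = [
    ["pop-canary"],
    ["pop-eu-1", "pop-us-1"],
    ["pop-eu-2", "pop-us-2", "pop-asia-1"],
]


def simulated_error_rate(node, config):
    # Hypothetical fault: config "v2" breaks every node it lands on.
    return 0.5 if config == "v2" else 0.0


fleet, outcome = staged_rollout(stages, "v2", "v1", simulated_error_rate)
print(outcome, set(fleet.values()))
```

In this run the faulty `v2` configuration is stopped at the single canary node, so the larger stages never receive it. The gate is only as good as the signal behind `error_rate`, which is why the telemetry point in the list above matters.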

Practical checklist for administrators (post‑incident actions)

- Document the incident window for your tenant (timestamps, error codes, affected geographies).

- File a support ticket with Microsoft including tenant ID and append diagnostic logs.

- Request a Post Incident Review (PIR) and timeline from your Microsoft account team.

- Review your Entra ID and conditional access policies for resilience and failover settings.

- Update your incident runbook to include edge and identity failure playbooks and perform a tabletop exercise within 30 days.

- Evaluate whether critical public endpoints need a multi‑path fronting model or shorter DNS TTLs.

- Communicate with internal stakeholders and customers about mitigation steps taken and expected follow‑up.

Final assessment and conclusion

By October 31, 2025, the immediate crisis window from the October 29 Azure Front Door control‑plane regression had passed: Microsoft’s rollback and node recovery actions restored the majority of affected traffic, and public symptom volumes fell considerably. However, the event exposed structural fragility where an edge control‑plane misconfiguration produces outsized collateral damage to identity‑dependent services.

The technical and procurement lessons are clear: test identity fallbacks, harden change‑control for global fabrics, and build multi‑path resiliency where mission‑critical availability is required. Community threads and outage trackers captured the immediate user experience and the operational confusion that accompanies such events; those community accounts remain a valuable time‑series record of the outage’s lived impact even as vendors prepare formal PIRs.

For administrators, the practical imperative is straightforward: treat this incident as a call to action. Validate your runbooks, demand post‑incident transparency from platform vendors, and harden identity and edge fallbacks so the next global fabric hiccup has a smaller operational footprint.

Is Microsoft 365 “down” on October 31, 2025? The short answer: the major outage that began October 29 was contained and largely mitigated by October 30, but residual, tenant‑specific symptoms and the broader implications of the event persisted into October 31. Organizations should treat the incident as resolved for most users but must still take the operational follow‑up steps above and await Microsoft’s full post‑incident report for the final, authoritative technical closure.
