Microsoft Azure is not experiencing a single, platform‑wide blackout on February 9, 2026, but the cloud did suffer a string of high‑impact incidents over the preceding week: a VM control‑plane failure and a follow‑on Managed Identities overload on February 2–3, and a localized West US datacenter power disruption on February 7. Together they left many tenants asking the same urgent question: “Is Azure down?” Here is a verified, step‑by‑step account of what happened, why it mattered, who was affected, and what administrators should do now to limit damage and harden for the future.

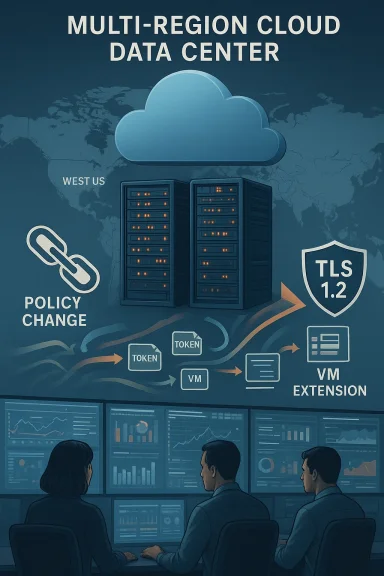

Source: DesignTAXI Community Is Microsoft Azure down? [February 9, 2026]

Background / Overview

Cloud outages rarely arrive as a single, simple failure. Modern hyperscale clouds are highly layered and interdependent: control planes, storage backends, identity/token services, and regional physical infrastructure each play a role in everyday operations. A narrow failure in one shared component can cascade through orchestration logic and dependent services, amplifying impact far beyond the initial fault.

Over the past week Microsoft posted active incidents that illustrate that pattern. A Virtual Machines management incident (tracking ID FNJ8‑VQZ) began at 19:46 UTC on February 2 and produced errors for VM create/update/scale and other lifecycle operations. Hours later, a Managed Identities platform issue (tracking ID M5B‑9RZ) surfaced in East US and West US as mitigations and backlogged operations produced an overload. Microsoft’s public incident posts, community telemetry and independent reporting all align on the basic timeline and the proximate trigger: an unintended policy change that blocked public read access to Microsoft‑managed storage accounts hosting VM extension packages, followed by mitigation steps that overloaded the token issuance system.

A separate event occurred on February 7, when a power interruption at a West US datacenter area produced localized outages and slowdowns for storage and some compute workloads. Microsoft’s status updates described phased recovery and emphasized that dependent services would return to normal only after health checks and traffic rebalancing had completed. Community reports noted consumer‑facing disruptions during that recovery window, including Microsoft Store and Windows Update slowdowns for some West‑coast users.

Collectively, these incidents provide a useful case study in control‑plane fragility, artifact dependencies, and the operational complexity of restoring service at hyperscale.

What actually happened — verified timeline

1. Virtual Machines management incident (FNJ8‑VQZ)

- Start: 19:46 UTC, February 2, 2026 — Microsoft logged an active Virtual Machines service incident. Customers reported errors on VM management operations including create, update, scale, start and stop.

- Root trigger (authoritative): a configuration/policy change unintentionally applied to a subset of Microsoft‑managed storage accounts that host VM extension packages. That change blocked public read access to artifacts that VM agents download during provisioning and extension installation. Because extension retrieval is part of many VM lifecycle flows, blocking read access produced widespread provisioning and scaling failures.

2. Mitigation, cascading retries and community impact

- Engineers rolled out region‑by‑region mitigations to restore access, but dependent services and orchestration logic caused long tails of failed operations and retry storms. Developer pipelines, hosted runners, AKS node pools and other services that depend on those extension packages experienced queuing and failures; GitHub Actions and Azure DevOps reported degraded performance in the same time window. Community telemetry corroborated broad disruption across CI/CD and VM orchestration.

3. Managed Identities overload (M5B‑9RZ)

- Around 00:10–06:05 UTC, February 3, 2026 — a surge of queued operations seeking tokens and identity validation overwhelmed the Managed Identities platform in East US and West US. This created a second outage window where identity‑dependent operations (create/update/delete, token acquisition) failed or timed out until infrastructure nodes recovered and Microsoft ramped traffic back slowly to allow backlog drain. Microsoft declared mitigation complete at about 06:05 UTC after backlog processing.

4. West US power interruption (February 7)

- Start: Shortly after 08:00 UTC, February 7, 2026 — Microsoft reported a utility power interruption at one West US datacenter area. Automatic backup power engaged, but recovery for dependent services continued in phases as traffic rebalanced and health checks completed. Some consumer services and Windows Update delivery were affected for a subset of users. Microsoft’s message emphasized phased recovery and ongoing stabilization.

Why a simple storage policy change cascaded into a major outage

At the heart of the February 2–3 incident is a deceptively small dependency: VM extension packages. These are small, Microsoft‑hosted artifacts used to bootstrap, configure and maintain virtual machines (for example, to install monitoring or backup agents). By default those artifacts are stored in Microsoft‑managed Azure Storage endpoints and are fetched over public read access during provisioning unless customers opt into private endpoints or vendor‑provided artifact caching.

When a policy change blocked public read access to those storage accounts, any VM lifecycle operation that expects to fetch an extension failed. Because VM orchestration logic assumes extension fetch steps will complete within predictable windows, failures caused orchestration flows to retry, queue, and eventually create high loads on coordination and identity systems. Attempts to mitigate the initial storage access issue produced further traffic spikes to the Managed Identities backplane when many operations simultaneously re‑attempted token acquisition, producing the secondary identity outage. The net effect: a narrow change to storage access produced a broad, time‑staggered outage that touched compute provisioning, identity, CI/CD pipelines and downstream application deployments.
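
The amplification described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not a model of Azure internals: the operation count, retry interval, backoff bounds and time horizon are invented numbers, and `peak_load`, `fixed_interval_retries` and `jittered_backoff_retries` are hypothetical helpers. It compares the worst one-second burst produced when every queued operation retries on the same fixed schedule with the burst produced when each operation uses randomized, exponentially growing delays.

```python
import random
from collections import Counter

def peak_load(retry_times):
    """Return the largest number of retries landing in any one-second bucket."""
    buckets = Counter(int(t) for t in retry_times)
    return max(buckets.values())

def fixed_interval_retries(n_ops, interval, horizon):
    """Every failed operation retries on the same fixed schedule."""
    attempts = int(horizon // interval)
    return [k * interval for _ in range(n_ops) for k in range(1, attempts + 1)]

def jittered_backoff_retries(n_ops, base, cap, horizon, rng):
    """Each operation waits a random delay below an exponentially growing bound."""
    times = []
    for _ in range(n_ops):
        t, attempt = 0.0, 0
        while True:
            bound = min(cap, base * 2 ** attempt)
            t += rng.uniform(0, bound)
            if t >= horizon:
                break
            times.append(t)
            attempt += 1
    return times

# Illustrative numbers only (assumptions, not measurements from the incident).
rng = random.Random(0)
fixed = fixed_interval_retries(n_ops=1000, interval=5, horizon=60)
jittered = jittered_backoff_retries(n_ops=1000, base=5.0, cap=30.0, horizon=60, rng=rng)

print("peak retries/sec, fixed interval:", peak_load(fixed))
print("peak retries/sec, jittered backoff:", peak_load(jittered))
```

With a fixed schedule, all 1,000 operations hit the dependency in the same second on every retry round; spreading the delays lowers the peak, which is the property a recovering token service needs.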

Who and what were affected

- Virtual Machine control plane operations: create, update, scale, start/stop flows across multiple regions.

- Azure Kubernetes Service (AKS) provisioning (node pools that rely on extensions), VM scale sets, and other infra that rely on the VM agent to install extensions.

- Developer CI/CD pipelines and hosted runners (GitHub Actions/Azure DevOps) which saw queuing and degraded performance while runners failed to provision or register.

- Managed‑identity–dependent services that rely on token acquisition (examples reported in community telemetry include Azure Synapse, Databricks, Container Apps, AI indexing services). Microsoft identified the identity platform impact specifically in East US and West US.

- A subset of consumer‑facing services and Windows Update / Microsoft Store deliveries in the West US during the power incident on Feb 7.

How Microsoft responded (public, verifiable actions)

Microsoft posted incident notices with tracking IDs, provided progressively detailed updates, and executed region‑by‑region mitigations. For the VM incident, engineers rolled back or adjusted the configuration that blocked public read access, then staged fixes and verified them in test regions before broader rollout. For the Managed Identities overload, they intentionally removed traffic from overloaded identity nodes, repaired nodes offline, and gradually ramped traffic back to prevent further overload while draining the backlog. For the West US power event, Microsoft described the engagement of backup power and phased recovery driven by health checks and software rebalancing. These actions match Microsoft’s timeline entries and incident messages that form the primary public record.

Short‑term remediation steps for administrators (actionable, prioritized)

If you are managing Azure workloads and were affected or are concerned about residual issues, follow this prioritized checklist now:

- Check your subscription‑level Service Health and Resource Health entries in the Azure portal to see targeted notices for your resources. These are the most authoritative, tenant‑specific messages. Do this first.

- Validate whether your VMs rely on the public Microsoft artifact endpoints for extension packages. If so, consider:

  - Using private endpoints or service endpoints for critical provisioning flows.

  - Hosting a cached copy of required extension packages in a customer‑controlled storage account, ideally in the same region as the compute workloads.

  - Implementing pre‑baked images that include required agents to reduce runtime extension pulls.

- For identity‑dependent services, check for token‑acquisition failures and retry storms:

  - Throttle client retries and add exponential backoff to limit surge traffic when identity services recover.

  - Audit managed identity usage to identify high‑volume issuance patterns that might amplify during recovery.

- If you run CI/CD pipelines that depend on hosted runners, enable self‑hosted runners or parallelized fallbacks where available to avoid single points of provisioning failure.

- Confirm TLS compatibility and storage client configuration if you use legacy devices — Microsoft enforced TLS 1.2 minimum on Azure Storage as of February 3, 2026; any client still negotiating TLS 1.0/1.1 will fail to connect and should be upgraded or routed through a modernization gateway. This enforcement can cause unrelated connectivity failures during the same recovery window and should be checked immediately.

- Communicate internally and externally: log incident impact, track recovery metrics, and record costs and business interruptions for post‑incident review and any potential SLA claims.
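
The retry throttling recommended in the checklist above can be sketched as a small wrapper. This is a minimal illustration, assuming a caller-supplied `acquire` function that raises a hypothetical `TokenUnavailable` exception when the identity service is overloaded; neither name comes from an Azure SDK, and the attempt limit and delay bounds are arbitrary. It uses capped exponential backoff with "full jitter", so clients that failed together do not retry together.

```python
import random
import time

class TokenUnavailable(Exception):
    """Hypothetical error raised when the identity service cannot issue a token."""

def acquire_with_backoff(acquire, max_attempts=6, base=0.5, cap=30.0,
                         sleep=time.sleep, rng=random.random):
    """Call acquire() until it succeeds, sleeping a jittered, capped delay between tries."""
    for attempt in range(max_attempts):
        try:
            return acquire()
        except TokenUnavailable:
            if attempt == max_attempts - 1:
                raise  # retry budget exhausted: surface the failure instead of looping
            bound = min(cap, base * 2 ** attempt)
            sleep(rng() * bound)  # full jitter: uniform delay in [0, bound)

# Example: a fake token source that fails twice, then succeeds.
calls = {"n": 0}

def flaky_acquire():
    calls["n"] += 1
    if calls["n"] < 3:
        raise TokenUnavailable("identity endpoint busy")
    return "fake-token"

delays = []  # record the delays instead of actually sleeping
token = acquire_with_backoff(flaky_acquire, sleep=delays.append)
print(token, len(delays))
```

Bounding the number of attempts matters as much as the delay: an unbounded retry loop keeps adding load for as long as the platform stays degraded.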

Long‑term resilience recommendations (architectural changes)

To reduce blast radius from similar control‑plane or artifact failures, architects should consider the following patterns:

- Decentralize artifact distribution: keep a customer‑controlled cache of critical extension packages, scripts and installers in region‑local storage accounts to remove single‑provider artifact dependencies for provisioning.

- Infrastructure immutability: bake required agents into VM images rather than relying on post‑boot extension installs whenever possible.

- Private artifact hosting and private endpoints: publish extension artifacts to storage accounts behind private endpoints or VNet service endpoints to avoid reliance on public read access that can be affected by provider‑side configuration changes outside your control.

- Graceful retry and circuit breakers: implement robust client‑side retry limits, exponential backoff and circuit‑breaker patterns for token acquisition and other control‑plane interactions, to prevent amplifying congestion during platform recovery.

- Multi‑region and multi‑cloud fallbacks: critical services should have tested failover paths to other regions or clouds. This matters especially for regulatory or mission‑critical workloads.

- Runbook drills and post‑incident capture: document incident response steps and practice them; verify that your team can switch to secondary artifact sources, rotate identity authorizations, and rehydrate services without requiring vendor action.

- Observability and alerting for control‑plane metrics: instrument and alert on artifact download failures, prolonged VM provisioning times, token‑acquisition latencies and unusual retry patterns so you detect early signals before queues create a wall of work.
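
The circuit‑breaker pattern named in the list above can be sketched in a few lines. This is a simplified illustration rather than a production implementation: the `CircuitBreaker` class, its thresholds and the `failing` function are all hypothetical, and real deployments would typically add per-dependency state, metrics and thread safety. After a set number of consecutive failures the breaker stops calling the dependency for a cool‑down period, then allows a single trial call.

```python
import time

class CircuitOpenError(Exception):
    """Raised when a call is rejected without contacting the dependency."""

class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("dependency marked unhealthy; not calling it")
            # Cool-down elapsed: fall through and allow one trial call (half-open).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        self.failures = 0
        self.opened_at = None
        return result

# Example with a fake clock so the cool-down can be shown without waiting.
now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_after=10.0, clock=lambda: now[0])

def failing():
    raise TimeoutError("token endpoint timed out")

outcomes = []
for _ in range(3):
    try:
        breaker.call(failing)
    except CircuitOpenError:
        outcomes.append("rejected")
    except TimeoutError:
        outcomes.append("failed")

now[0] = 11.0  # cool-down has passed
recovered = breaker.call(lambda: "ok")
print(outcomes, recovered)
```

The rejected call is the point of the pattern: once the dependency is known to be unhealthy, the client fails fast locally instead of adding to the backlog the provider is trying to drain.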

Communications, transparency and the trust question

A recurring operational challenge when cloud providers suffer incidents is the mismatch between customer experience and public dashboard signals. Past outages have shown that dashboards may remain misleadingly optimistic for resources not tagged or sampled correctly, leaving tenants scrambling without clear status updates. During the February incidents Microsoft published tracking IDs and updates, but community reporting underscores that some customers still felt communication lacked granularity about who was affected and for how long. For enterprise customers, timely, subscription‑level communication and clear escalation paths to account teams are crucial. If you were left in the dark, escalate formally and document your impacts.

What this means for enterprises and smaller teams

- For enterprises: expect heightened board‑level scrutiny and insurance claims — regulatory reporting requirements and contractual SLAs may be implicated by multi‑hour outages in production zones. Use the incident as a trigger to validate your DR/BCP posture and confirm inter‑dependencies across identity, control plane, and artifact hosting.

- For SMBs and dev teams: prioritize simple mitigations — cache critical installers, enable local runners and have manual intervention scripts ready to reprovision services. The extra work to decouple from a single control‑plane assumption pays off when minutes matter.

Risks and caveats — what we can and cannot verify

- Confirmed and verifiable: Microsoft’s incident tracking IDs, the VM management outage start at 19:46 UTC on February 2 (FNJ8‑VQZ), and the Managed Identities impact window around 00:10–06:05 UTC on February 3 (M5B‑9RZ) are recorded in Microsoft’s status posts and corroborated by independent reporting. The West US power event on February 7 and the February 3 global enforcement of TLS 1.2 on Azure Storage are likewise publicly described and verifiable.

- Unverified and provisional: precise counts of affected customers, the total number of failed provisioning operations, and exact economic impact figures were not released in the public incident messages and remain unverified in this public‑facing account. Treat any third‑party or social feed numbers as provisional until Microsoft issues a Post‑Incident Review with concrete metrics.

- Attribution nuance: the initial trigger is documented as a policy change that blocked public read access for specific Microsoft‑managed storage accounts. Whether that change was human error, automated policy drift, or an internal process failure is not exhaustively documented in the public posts; Microsoft’s full RCA (if released) will provide more detail. Until then, avoid conclusive statements about root cause beyond the configuration fact Microsoft published.

Practical checklist for the next 24–72 hours

- Audit Service Health and Resource Health for your tenant right now and capture all incident notifications for later review.

- For any VM provisioning failures, check extension download logs for HTTP 403/404/timeout indicators and verify whether your VMs are targeting Microsoft‑hosted artifact endpoints. If so, move critical packages into a customer storage account and re‑run provisioning.

- For token failures, inspect managed identity error codes and add retry throttling in your deployment scripts. Contact Microsoft support if token acquisition continues to fail beyond the published mitigation windows.

- Verify that your storage clients negotiate TLS 1.2+ following the February 3 enforcement. Update legacy agents, SDKs or embedded devices that still use older TLS stacks.

- If you use hosted CI/CD runners, temporarily enable self‑hosted runners or alternate pipelines to drain backlogs.

- Escalate to your Microsoft account team with documented impact if SLAs or regulatory requirements were affected.
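
The TLS item in the checklist above can be spot‑checked from any machine with Python's standard `ssl` module. The sketch below is an assumption‑laden illustration: `tls12_only_context` and `negotiated_tls_version` are hypothetical helper names, and the host in the commented usage line is a placeholder to replace with your own storage endpoint. It only tests the TLS stack of the machine running the script, so legacy agents or embedded devices still need to be checked on the devices themselves.

```python
import socket
import ssl

def tls12_only_context():
    """Build a client context that refuses anything older than TLS 1.2."""
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx

def negotiated_tls_version(host, port=443, timeout=5.0):
    """Connect to host and return the negotiated protocol name, e.g. 'TLSv1.3'."""
    ctx = tls12_only_context()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            return tls.version()

# Usage (placeholder host name, not a real account):
# print(negotiated_tls_version("<your-account>.blob.core.windows.net"))
```

If the handshake raises `ssl.SSLError`, the client and endpoint could not agree on TLS 1.2 or newer, which is the failure mode to look for on older clients.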

Lessons for the cloud era — control plane humility

The past week’s incidents are a reminder that control planes and shared artifacts are a central operational risk in hyperscale systems. The convenience of managed services comes with an implicit coupling: a single internal policy or storage misconfiguration at the provider level can ripple into developer pipelines, orchestration systems, and user‑facing application uptime. The goal for resilient modern systems therefore should not be to eliminate reliance on managed primitives, which is impractical, but to architect around them with caches, fallbacks, private endpoints and tested runbooks that limit failure amplification.

Conclusion

If you woke up on February 9, 2026 and asked “Is Microsoft Azure down?” the short answer is no, not globally. But that doesn’t mean the platform was free of trouble over the past week. A control‑plane storage policy change triggered widespread VM provisioning failures on February 2–3 and a mitigation‑related surge produced a managed‑identity overload. A separate West US power hiccup on February 7 added regional pain for some customers. These events are important not because they are unprecedented, but because they expose persistent, solvable architectural risks: dependency on public artifact endpoints, brittle retry behavior, and the need for robust tenant‑level fallbacks.

For administrators: act now. Check Service Health for tenant‑specific notices, verify TLS and extension‑artifact dependencies, implement caching or private endpoints where practical, and harden retry logic for identity operations. For architects: treat this incident as a prompt to reduce single‑point artifact dependencies and to invest in immutable images and multi‑region failover strategies. And for Microsoft and other cloud providers: clarity in communication, subscription‑level transparency and public post‑incident reviews with concrete metrics will help customers verify impact, learn lessons, and rebuild trust.

This account consolidates the verified timeline, Microsoft’s published incident notes, and independent community telemetry to provide a factual, operational picture of why many users asked “Is Azure down?” — and what to do next.
