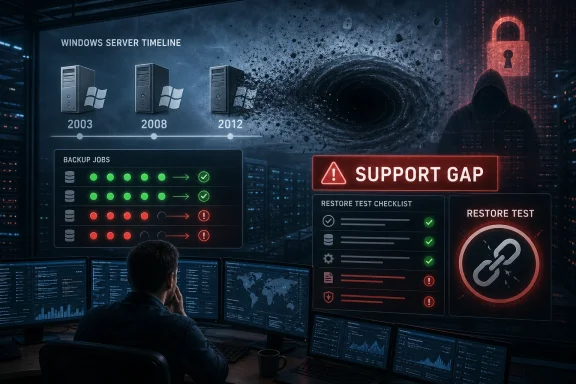

Droplet has warned that organisations running Windows Server 2003, 2008, 2008 R2, 2012, and 2012 R2 may be relying on backup contracts that no longer cover those systems, after reviewing support positions across eight major backup suppliers. The company’s argument is blunt: the danger is not merely that old servers are insecure, but that some may be unrecoverable. For IT teams, that turns a familiar end-of-life headache into a colder operational question. If a server is too old for the backup stack, does the business actually still have a disaster recovery plan?

The Backup Gap Is Where Legacy Risk Stops Being Theoretical

Every Windows administrator knows the ritual of end-of-support warnings. Microsoft publishes the date, procurement debates the refresh, application owners insist the old workload is “business critical,” and the server remains exactly where it is. The uncomfortable novelty in Droplet’s warning is that the backup layer may have quietly moved on even when the application did not.

That distinction matters because backup has traditionally been the comfort blanket of legacy infrastructure. If an application cannot be migrated quickly, the practical answer is often to isolate it, monitor it, restrict access, and back it up religiously. Droplet’s analysis challenges the last part of that bargain: some organisations may have preserved the risk while losing the recovery mechanism.

The company says it reviewed compatibility lists, vendor documentation, public-sector security policies, and technical forums for eight backup suppliers. Its conclusion was that support for Windows Server 2003 is largely gone, Windows Server 2008 support is often limited or tied to older releases, and Windows Server 2012-era systems are entering the same danger zone.

That is not a surprising result if viewed from a vendor engineering standpoint. Backup agents, snapshot integrations, hypervisor hooks, encryption modules, and recovery tools all have to be built, tested, and supported against operating systems that Microsoft itself has retired. But the business impact is far less tidy: a contract can renew, invoices can be paid, dashboards can stay green, and yet a particular corner of the estate may no longer be protected in the way the customer assumes.

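End Of Support Is Not A Date, It Is A Chain Reaction

Microsoft’s lifecycle calendar is often treated as a compliance milestone, but in real infrastructure it behaves more like a series of falling dominoes. Windows Server 2008 and 2008 R2 reached the end of extended support in January 2020. Windows Server 2012 and 2012 R2 reached the end of extended support in October 2023. Windows Server 2016 is now the next major server release on the clock, with extended support due to end on January 12, 2027.

Those dates do not merely determine whether Microsoft ships normal patches. They influence whether third-party vendors keep testing agents, whether security software continues to install cleanly, whether monitoring tools still support old APIs, and whether auditors treat the environment as governable. Backup vendors are part of that same ecosystem.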
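To make the lifecycle chain concrete, here is a minimal sketch that encodes Microsoft’s published end-of-extended-support dates for the releases discussed in this piece and flags which servers in an inventory are already past them. The inventory format, the hostnames, and the helper names are hypothetical. Paid Extended Security Updates are deliberately not modelled, because ESU eligibility says nothing about third-party backup support.

```python
from datetime import date

# End of extended support, per Microsoft's lifecycle pages.
# ESU (paid Extended Security Updates) is intentionally ignored here.
END_OF_EXTENDED_SUPPORT = {
    "2003": date(2015, 7, 14),
    "2003 R2": date(2015, 7, 14),
    "2008": date(2020, 1, 14),
    "2008 R2": date(2020, 1, 14),
    "2012": date(2023, 10, 10),
    "2012 R2": date(2023, 10, 10),
    "2016": date(2027, 1, 12),
}


def past_end_of_support(inventory: dict[str, str], as_of: date) -> list[str]:
    """Return hostnames whose OS release is past end of extended support.

    `inventory` maps a (hypothetical) hostname to a release string such as
    "2008 R2". Releases missing from the table are reported as well, because
    an unrecognised OS is itself an inventory gap.
    """
    flagged = []
    for host, release in sorted(inventory.items()):
        eos = END_OF_EXTENDED_SUPPORT.get(release)
        if eos is None or as_of > eos:
            flagged.append(host)
    return flagged


def days_until_end_of_support(release: str, as_of: date) -> int:
    """Days remaining for a known release (negative once support has ended)."""
    return (END_OF_EXTENDED_SUPPORT[release] - as_of).days


if __name__ == "__main__":
    estate = {"hr-app-01": "2008 R2", "sched-02": "2016", "lab-legacy": "2003"}
    print(past_end_of_support(estate, date(2025, 6, 1)))      # ['hr-app-01', 'lab-legacy']
    print(days_until_end_of_support("2016", date(2026, 1, 12)))  # 365
```

The table is only the first domino. A date check tells you which servers have left Microsoft’s support, not whether your backup vendor still covers them.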

This is why the old “we bought extended support” answer can be incomplete. Extended Security Updates can help reduce exposure to critical vulnerabilities, but they do not magically require every third-party vendor to keep a modern product fully compatible with an aging operating system. A server can be eligible for a narrow stream of security fixes and still be outside the normal support matrix for backup, endpoint protection, application monitoring, or management tooling.

Droplet’s warning lands precisely in that grey zone. The company is not saying every legacy Windows Server backup has failed, nor could any outside review prove that across thousands of bespoke estates. It is saying that the assumptions surrounding those backups are dangerous, especially where customers have not reconciled their operating system inventory with the current support matrices of their backup providers.

For sysadmins, that is the real sting. The question is not whether Windows Server 2003 is old; it is whether anyone can produce a recent, tested, restorable backup of the specific workload still running on it.

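A Green Backup Console Can Hide A Red Estate

The backup industry has spent years selling centralised visibility. That has been a genuine improvement over the old world of scattered scripts, tape jobs, and undocumented local agents. But centralisation also creates a subtle psychological hazard: the status of the platform can be mistaken for the status of every protected asset.

A service can be running while a subset of servers is excluded. A job can complete while an agent is frozen on an unsupported version. A virtual machine can be captured at the hypervisor layer while application-consistent recovery inside the guest is not guaranteed. A retention policy can exist while restore tooling for the original operating system has become impractical.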
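One way to stop platform status from standing in for asset status is to compute protection per server from raw job data. The sketch below assumes a hypothetical export of job records (`JobRun` with hostname, agent version, completion time, and outcome) and an inventory of hostnames. It reports servers that no job covers, servers whose newest successful run is older than a chosen threshold, and servers whose latest agent version appears on a locally maintained unsupported list. None of these field names correspond to a specific vendor’s API.

```python
from collections.abc import Collection
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class JobRun:
    # Hypothetical fields from a backup job export; real products differ.
    host: str
    agent_version: str
    finished: datetime
    succeeded: bool


def asset_exceptions(inventory: Collection[str], runs: list[JobRun], now: datetime,
                     max_age: timedelta = timedelta(days=2),
                     unsupported_agents: Collection[str] = frozenset()) -> dict[str, list[str]]:
    """Return {host: [reasons]} for every inventory host that is not
    demonstrably protected, even if every job in the console is green.
    Hosts that appear in job data but not in the inventory are ignored."""
    latest_ok: dict[str, datetime] = {}
    latest_agent: dict[str, tuple[datetime, str]] = {}
    for run in runs:
        # Agent version reported by each host's most recent run, whatever the outcome.
        seen = latest_agent.get(run.host)
        if seen is None or run.finished > seen[0]:
            latest_agent[run.host] = (run.finished, run.agent_version)
        # Most recent successful run, tracked separately.
        if run.succeeded and run.finished > latest_ok.get(run.host, datetime.min):
            latest_ok[run.host] = run.finished

    report: dict[str, list[str]] = {}
    for host in sorted(inventory):
        reasons = []
        if host not in latest_agent:
            reasons.append("not in any backup job")
        else:
            if host not in latest_ok:
                reasons.append("no successful run on record")
            elif now - latest_ok[host] > max_age:
                reasons.append("last success older than threshold")
            if latest_agent[host][1] in unsupported_agents:
                reasons.append("agent on unsupported version")
        if reasons:
            report[host] = reasons
    return report


if __name__ == "__main__":
    now = datetime(2025, 6, 1, 6, 0)
    runs = [
        JobRun("web-01", "12.1", now - timedelta(hours=5), True),
        JobRun("legacy-08", "9.5", now - timedelta(days=40), True),
    ]
    print(asset_exceptions({"web-01", "legacy-08", "sched-03"}, runs, now,
                           unsupported_agents={"9.5"}))
```

Here `sched-03` never appears in any job, so a console that only lists jobs would show nothing wrong with it.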

That is why Droplet’s emphasis on contract consciousness is more than legal fine print. Backup contracts often describe services, platforms, retention periods, and support obligations at a level that makes sense during procurement. Years later, the estate has changed, the vendor’s compatibility matrix has changed, the operating systems have aged out, and nobody has forced those three realities into the same room.

The most alarming anecdote in the SecurityBrief report is Droplet chief executive Barry Daniels’ claim that one organisation with more than 900 unsupported servers discovered, through a passing conversation, that backups had not been carried out for five to ten years. That story should be treated as a warning flare rather than a universal diagnosis. But even as a single reported example, it captures the failure mode perfectly: legacy risk becomes catastrophic when it is invisible.

The phrase “backup black hole” sounds dramatic, but it is technically apt. Data enters the organisation’s risk register as something supposedly protected, yet no usable recovery point may exist on the other side. The black hole is not the old operating system by itself. It is the gap between what the business believes is happening and what the tooling can actually do.

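The Vendor Matrix Has Become Part Of The Attack Surface

Droplet’s supplier review names several familiar players in enterprise backup and recovery: Veeam, Commvault, Cohesity, Rubrik, BackupAssist, Veritas, Dell EMC, and Arcserve. The company’s broad finding is that current products generally do not support Windows Server 2003, while Windows Server 2008 support is partial, constrained, or dependent on older product versions and specific deployment models.

That matters because backup support is not a binary concept. A vendor may support image-level backup of a virtual machine but not an in-guest agent. It may support Windows Server 2008 R2 but not the original 2008 release. It may support restore from an old backup but not new backup creation. It may support an older feature release that itself is no longer the product line customers are encouraged to run.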

In practice, this creates a three-dimensional compatibility problem. The server operating system has a lifecycle. The backup product has a lifecycle. The deployment model — physical server, VMware VM, Hyper-V VM, cloud-hosted instance, clustered workload, domain controller, SQL Server host — adds another layer. It is entirely possible for an estate to be “mostly supported” in procurement language and still contain the one server that matters most to a recovery event.
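The three-dimensional problem can be written down as a lookup keyed on operating system, backup product version, and backup method, returning a non-binary status rather than a yes/no. The matrix entries below are invented placeholders, not any vendor’s real support position. The point is the shape of the check: any combination that is not explicitly listed defaults to unsupported, never to protected.

```python
from enum import Enum


class Support(Enum):
    FULL = "backup and restore supported"
    RESTORE_ONLY = "existing restore points only; no new backups"
    IMAGE_ONLY = "hypervisor image backup; in-guest consistency not guaranteed"
    UNSUPPORTED = "not supported"


# Hypothetical matrix: (os_release, product_version, method) -> Support.
# Real entries must come from the vendor's current compatibility documentation.
MATRIX = {
    ("2008 R2", "11", "vmware-image"): Support.IMAGE_ONLY,
    ("2008 R2", "11", "agent"): Support.RESTORE_ONLY,
    ("2012 R2", "12", "agent"): Support.FULL,
    ("2016", "12", "agent"): Support.FULL,
}


def support_status(os_release: str, product_version: str, method: str) -> Support:
    """Missing combinations are treated as unsupported, never assumed covered."""
    return MATRIX.get((os_release, product_version, method), Support.UNSUPPORTED)


def can_create_new_backups(status: Support) -> bool:
    # Restore-only support keeps old recovery points readable but adds no new ones.
    return status in (Support.FULL, Support.IMAGE_ONLY)


if __name__ == "__main__":
    # The original 2008 release is absent even though 2008 R2 is listed.
    print(support_status("2008", "11", "agent"))  # Support.UNSUPPORTED
```

Defaulting to `UNSUPPORTED` mirrors the article’s argument. The failure mode is not a missing row in a spreadsheet; it is a missing row that everyone silently read as “covered”.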

The other complication is that backup vendors have rational reasons to draw hard lines. Supporting ancient Windows releases means dealing with obsolete cryptographic libraries, old filesystem behaviours, unsupported Microsoft APIs, driver compatibility problems, and a shrinking pool of engineers who can reproduce issues reliably. Vendors that continue to support everything indefinitely risk weakening the very security posture they claim to protect.

But customers do not experience that as a clean engineering trade-off. They experience it as a contract that says backup, a console that says protected, and a recovery exercise that discovers an exception. The matrix itself becomes part of the attack surface because ransomware crews, auditors, and infrastructure failures all find their way to whatever is least tested.

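The Public Sector Problem Is Bigger Than Procurement

Droplet points to essential services such as utilities, transport, healthcare, and the public sector as areas where the issue is especially acute. That is credible because these environments often carry the worst combination of incentives: long-lived applications, regulated change windows, bespoke integrations, and procurement cycles that punish rapid replacement.

In healthcare, the old server may be tied to a diagnostic system that still works but whose vendor no longer exists in its original form. In transport, it may sit behind a scheduling, signalling, or depot system that was never designed for cloud-native replacement. In local government, it may run a line-of-business application whose upgrade path requires a wider transformation programme nobody has funded.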

These are not excuses for neglect. They are the operational terrain on which neglect becomes normal. A server that should have been retired in 2018 remains because the application migration depends on a supplier contract, the supplier contract depends on a budget cycle, and the budget cycle depends on a risk assessment that assumes backups exist.

That last assumption is the fragile one. Legacy systems are often defended with compensating controls, and backup is usually near the top of the list. If the backup control is invalid, then the risk assessment is not merely optimistic; it is wrong.

The issue also intersects with cyber resilience law and regulatory pressure. In the UK, the direction of travel is toward more explicit obligations around operational resilience, incident response, and supply-chain accountability. Whether or not a specific legacy server falls under a particular regime, the governance question is becoming harder to dodge: can the organisation prove it can restore the systems on which it depends?

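Ransomware Made Restore Testing A Board-Level Issue

A decade ago, backup failure was often discussed as an IT operations problem. A disk array failed, a tape was missing, a restore took too long, and the postmortem stayed mostly within the technical organisation. Ransomware changed the politics of recovery.

Modern ransomware incidents test not only whether data was copied, but whether identity systems, backup repositories, management consoles, encryption keys, and recovery networks survived the compromise. They also expose the difference between a file-level restore and the ability to rebuild a functioning service. Legacy Windows Server workloads complicate every part of that process.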

An unsupported server may be harder to rehydrate safely. It may require old drivers, obsolete application installers, domain dependencies, expired certificates, or storage paths nobody has documented. If the backup product no longer supports the agent, the problem begins even earlier: the recovery point may not exist, may not be application-consistent, or may require a version of the backup platform the organisation no longer runs.

That is why the phrase “we have backups” should make security teams nervous unless it is followed by evidence. Backups that have not been restored are hypotheses. Backups of unsupported platforms that have not been restored are very expensive guesses.

Droplet’s warning therefore belongs as much in cyber resilience planning as in infrastructure lifecycle management. The attacker does not need to know that a Windows Server 2008 box is unsupported by the backup vendor. The attacker only needs the organisation to discover it at the worst possible time.

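Windows Server 2016 Is The Next Test Of Institutional Memory

The looming Windows Server 2016 deadline gives this story its practical urgency. January 2027 is close enough that serious organisations should already be inventorying workloads, testing migration paths, and mapping dependencies. It is also far enough away that some will postpone the work until the familiar panic window.

The lesson from Windows Server 2008 and 2012 is that end of support is not a single cliff. It is a slope. First, mainstream support ends. Then extended support approaches. Then vendors revise matrices. Then security teams add exceptions. Then auditors ask for evidence. Then a recovery test reveals that the documentation is stale.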

For Windows Server 2016 estates, the backup question should be asked now, not in the quarter before support ends. Which versions of the backup platform support Server 2016 today? What is the vendor’s stated position after January 12, 2027? Will agent-based backups remain supported? Are application-aware backups supported for the workloads actually running? Will restores be supported into newer operating systems, or only back onto the original platform?

The danger is that organisations treat Server 2016 as “modern enough” because it is not 2008. That may be emotionally understandable, especially in estates still carrying older systems. But lifecycle risk is cumulative. The longer a business waits, the more its upgrade path depends on tools, people, and institutional knowledge that are themselves disappearing.

This is where IT leadership needs to resist the comfort of relative age. A server is not safe because another server is older. It is safe only if the organisation can patch it, monitor it, back it up, restore it, and explain its role in a defensible architecture.

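Containers Are A Bridge, Not A Pardon

Droplet’s Daniels argues that some organisations are looking at secure containers as a quicker way to protect critical applications and data while they narrow the gap between legacy dependency and modern security expectations. That is a plausible mitigation, but it deserves careful framing. Containers, application wrapping, isolation layers, and virtualisation strategies can reduce risk, but they do not erase the underlying lifecycle problem.

The appeal is obvious. If a brittle legacy application cannot be rebuilt quickly, placing it in a more controlled environment may improve portability, access control, monitoring, and backup capture. It may also create a cleaner boundary between the old workload and the rest of the estate.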

But containment is not modernisation by another name. If the application still depends on obsolete components, unsupported protocols, hard-coded paths, weak authentication, or ancient database engines, the risk has been reorganised rather than eliminated. The organisation may have bought time, which can be valuable. It has not bought absolution.

That distinction matters because interim fixes have a habit of becoming permanent architecture. A “six-month” containment project can still be running five years later, defended by the same argument that once kept the original server alive: it works, the business depends on it, and replacing it would be disruptive.

Used well, secure containment can be a bridge to migration. Used poorly, it becomes a more sophisticated hiding place for the same unsupported workload. The difference is whether the organisation attaches a retirement plan, recovery test, and funding path to the mitigation.

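The Contract Is Not The Control

One of the most useful parts of Droplet’s warning is its focus on the gap between contractual coverage and technical reality. Many organisations buy backup as a service outcome: protect these systems, retain data for this long, restore within these objectives. But the service outcome is only as strong as the compatibility, configuration, and testing beneath it.

A contract can say that backup services are provided. It may also contain exclusions for unsupported operating systems, unsupported agents, deprecated product versions, or platforms outside the vendor’s matrix. Those exclusions may be completely reasonable and still devastating if nobody in the customer organisation has mapped them to the live estate.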

This is where procurement, legal, and technical teams often talk past one another. Procurement sees an active supplier. Legal sees a service agreement. IT operations sees jobs in a console. Security sees an exception register. None of those views is sufficient unless someone reconciles them against the actual machines and workloads that would have to be restored.

The practical answer is not to turn every sysadmin into a contract lawyer. It is to make backup supportability a formal control with evidence. For every critical legacy workload, the organisation should be able to show the operating system version, backup method, vendor support status, last successful backup, last successful restore test, recovery target, and named owner.
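As a rough sketch of what “a formal control with evidence” could look like, the record below carries the fields listed in the paragraph above, plus a check that reports which pieces of evidence are missing or stale. The field names, the one-day backup window, the 90-day restore-test window, and the sample workload are assumptions for illustration; each organisation would set its own thresholds.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class BackupControl:
    # One record per critical legacy workload; field names are illustrative.
    workload: str
    os_version: str
    backup_method: str
    vendor_support_status: str               # e.g. "supported", "restore-only", "unsupported"
    last_successful_backup: Optional[date]
    last_successful_restore_test: Optional[date]
    recovery_target_hours: Optional[float]   # recovery time objective
    owner: Optional[str]


def evidence_gaps(c: BackupControl, today: date,
                  max_backup_age: timedelta = timedelta(days=1),
                  max_restore_test_age: timedelta = timedelta(days=90)) -> list[str]:
    """List every missing or stale piece of evidence for one workload."""
    gaps = []
    if c.vendor_support_status != "supported":
        gaps.append(f"vendor status: {c.vendor_support_status}")
    if c.last_successful_backup is None:
        gaps.append("no successful backup recorded")
    elif today - c.last_successful_backup > max_backup_age:
        gaps.append("backup older than policy")
    if c.last_successful_restore_test is None:
        gaps.append("never restore-tested")
    elif today - c.last_successful_restore_test > max_restore_test_age:
        gaps.append("restore test out of date")
    if c.recovery_target_hours is None:
        gaps.append("no recovery target")
    if not c.owner:
        gaps.append("no named owner")
    return gaps


if __name__ == "__main__":
    rec = BackupControl("payroll", "Windows Server 2008 R2", "in-guest agent",
                        "restore-only", date(2025, 5, 31), None, 24.0, None)
    print(evidence_gaps(rec, date(2025, 6, 1)))
    # ['vendor status: restore-only', 'never restore-tested', 'no named owner']
```

An empty list is the only passing result. Note that a recent successful backup does not clear the record on its own: the restore test and the vendor status are checked independently.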

That sounds bureaucratic until the day it is needed. Then it sounds like the minimum viable map out of a disaster.

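The Most Dangerous Servers Are The Ones Everyone Has Stopped Seeing

Legacy infrastructure survives partly because it becomes invisible. The people who built it leave. The business process it supports becomes routine. The server name appears in monitoring, but only as one more item in a long list. If it does not fail loudly, nobody wants to touch it.

That invisibility is precisely what makes Droplet’s claim so plausible. Backup failure on a modern, high-profile platform is more likely to generate alerts, incidents, and vendor escalation. Backup failure on an aging system may be rationalised as a one-off agent issue, suppressed because the platform is “temporary,” or never noticed because nobody has performed a restore test.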

There is also a cultural problem. IT teams can become embarrassed by their own legacy estates. Instead of making old systems visible to executives, they keep them alive quietly, fearing that every disclosure will turn into blame without budget. That silence helps the business avoid uncomfortable investment decisions, but it also ensures that the eventual failure arrives without context.

The better posture is almost the opposite: make the legacy estate boringly visible. Publish the list. Rank the risk. Tie each system to a service owner. Show which ones are protected, which ones are not, and which ones depend on unsupported backup paths. The goal is not confession; it is governance.

A server that is unsupported but known, isolated, backed up through a tested method, and attached to a funded retirement plan is a risk. A server that is unsupported, assumed protected, and absent from recovery exercises is a liability waiting for a calendar invite from reality.
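The distinction drawn above between a managed risk and a liability can be encoded almost literally as a rule, which also gives the “publish the list, rank the risk” advice a concrete form. The sketch below is a hypothetical triage helper, not a standard scoring model. A legacy server counts as a managed risk only if it is known (in the inventory), isolated, backed up through a method that has passed a restore test, and attached to a funded retirement plan. Otherwise it is a liability, and the register lists liabilities first.

```python
from dataclasses import dataclass


@dataclass
class LegacyServer:
    # Illustrative fields mirroring the conditions in the text.
    name: str
    service_owner: str
    in_inventory: bool
    isolated: bool
    restore_tested: bool
    funded_retirement_plan: bool


def classify(s: LegacyServer) -> str:
    # All four conditions must hold; any single gap makes it a liability.
    managed = (s.in_inventory and s.isolated and s.restore_tested
               and s.funded_retirement_plan)
    return "managed risk" if managed else "liability"


def ranked_register(servers: list[LegacyServer]) -> list[tuple[str, str, str]]:
    """Rows of (name, owner, classification): liabilities first, then by name."""
    rows = [(s.name, s.service_owner, classify(s)) for s in servers]
    return sorted(rows, key=lambda r: (r[2] != "liability", r[0]))


if __name__ == "__main__":
    estate = [
        LegacyServer("dx-imaging", "Radiology", True, True, True, True),
        LegacyServer("depot-sched", "Operations", True, False, False, False),
    ]
    for row in ranked_register(estate):
        print(row)
```

Tying each row to a service owner is deliberate: the register is meant to be read by people who can fund a fix, not only by the team that runs the servers.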

A proper recovery test does not have to begin with the most disruptive production scenario. It can start with non-production replicas, isolated networks, controlled validation, and staged service checks. But it must prove that the organisation can recover what it claims to protect, using the people, tools, credentials, and documentation it would actually have during an incident.

For legacy Windows Server systems, that means testing the weird parts. Can the organisation restore the system state? Can it recover application data consistently? Can it boot the restored instance without breaking network identity? Can it restore to different hardware or a virtual environment? Can it recover without relying on a backup product version that has itself been retired?
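The questions above translate naturally into a restore-test record in which every check must be explicitly passed. A check that was skipped counts as unproven, not as passed. The check names below paraphrase the paragraph above; the structure is a hypothetical sketch rather than a feature of any backup product.

```python
from enum import Enum


class Result(Enum):
    PASSED = "passed"
    FAILED = "failed"
    NOT_RUN = "not run"


# Checks paraphrased from the questions in the text; names are illustrative.
LEGACY_RESTORE_CHECKS = (
    "system state restored",
    "application data consistent",
    "restored instance boots with correct network identity",
    "restores to different hardware or a virtual environment",
    "restore uses a currently supported backup product version",
)


def summarise(results: dict[str, Result]) -> dict[str, list[str]]:
    """Group checks by outcome; any check missing from `results` is NOT_RUN."""
    summary: dict[str, list[str]] = {r.value: [] for r in Result}
    for check in LEGACY_RESTORE_CHECKS:
        summary[results.get(check, Result.NOT_RUN).value].append(check)
    return summary


def restore_proven(results: dict[str, Result]) -> bool:
    # Proven only when every check passed; skipped checks do not count.
    return all(results.get(c) is Result.PASSED for c in LEGACY_RESTORE_CHECKS)


if __name__ == "__main__":
    run = {
        "system state restored": Result.PASSED,
        "application data consistent": Result.PASSED,
        "restored instance boots with correct network identity": Result.FAILED,
    }
    print(restore_proven(run))        # False
    print(summarise(run)["not run"])
```

Keeping `NOT_RUN` separate from `FAILED` matters for the decision that follows: a failed check is ugly knowledge, while an unrun check is still a hypothesis.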

The answer may be ugly, but ugly knowledge is still useful. If a backup is not restorable, the organisation can decide whether to accept the risk, fund a migration, isolate the workload, change vendors, freeze a compatible backup platform, or build a bespoke recovery path. What it cannot responsibly do is continue pretending.

The deeper point is that backup is not a product. It is a chain of evidence. Droplet’s warning is about the places where that chain may have rusted through.

The concrete response is not panic. It is verification. Windows estates are usually messy, but they are not unknowable if the organisation is willing to reconcile asset data, backup policy, vendor support, and restore evidence in one place.
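Reconciling the four sources can start as a simple join on hostname. The sketch below assumes each source has already been exported to a dictionary keyed by hostname; the source labels and sample hosts are hypothetical. For every host seen in any source, it lists which of the four has no record of it. A host that appears in backup policy but not in the asset inventory is as telling as the reverse.

```python
SOURCES = ("asset inventory", "backup policy", "vendor support", "restore evidence")


def reconcile(asset: dict, policy: dict, support: dict, restores: dict) -> dict[str, list[str]]:
    """Return {host: [sources with no record of that host]} for every host seen anywhere."""
    tables = dict(zip(SOURCES, (asset, policy, support, restores)))
    all_hosts = set().union(*tables.values())  # union of the dict keys
    gaps: dict[str, list[str]] = {}
    for host in sorted(all_hosts):
        missing = [name for name, table in tables.items() if host not in table]
        if missing:
            gaps[host] = missing
    return gaps


if __name__ == "__main__":
    print(reconcile(
        asset={"hr-01": "2008 R2", "sched-02": "2016"},
        policy={"hr-01": "nightly agent", "ghost-07": "weekly image"},
        support={"hr-01": "restore-only", "sched-02": "supported"},
        restores={"sched-02": "2025-04-01"},
    ))
```

Here `ghost-07` is backed up by policy yet unknown to the asset inventory, while `sched-02` has restore evidence but no backup policy. Both are the kind of mismatch that only shows up when the sources sit side by side.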

Droplet’s warning should not be read as a claim that every legacy Windows Server backup is doomed. It should be read as a challenge to prove the opposite before ransomware, hardware failure, or an audit does the proving instead. Windows Server lifecycles will keep moving, backup vendors will keep pruning what they can responsibly support, and the organisations that fare best will be the ones that treat recovery as a tested capability rather than a purchased assumption.

Source: SecurityBrief UK https://securitybrief.co.uk/story/droplet-warns-legacy-windows-server-backups-may-fail/

The Backup Gap Is Where Legacy Risk Stops Being Theoretical

The Backup Gap Is Where Legacy Risk Stops Being Theoretical

Every Windows administrator knows the ritual of end-of-support warnings. Microsoft publishes the date, procurement debates the refresh, application owners insist the old workload is “business critical,” and the server remains exactly where it is. The uncomfortable novelty in Droplet’s warning is that the backup layer may have quietly moved on even when the application did not.That distinction matters because backup has traditionally been the comfort blanket of legacy infrastructure. If an application cannot be migrated quickly, the practical answer is often to isolate it, monitor it, restrict access, and back it up religiously. Droplet’s analysis challenges the last part of that bargain: some organisations may have preserved the risk while losing the recovery mechanism.

The company says it reviewed compatibility lists, vendor documentation, public-sector security policies, and technical forums for eight backup suppliers. Its conclusion was that support for Windows Server 2003 is largely gone, Windows Server 2008 support is often limited or tied to older releases, and Windows Server 2012-era systems are entering the same danger zone.

That is not a surprising result if viewed from a vendor engineering standpoint. Backup agents, snapshot integrations, hypervisor hooks, encryption modules, and recovery tools all have to be built, tested, and supported against operating systems that Microsoft itself has retired. But the business impact is far less tidy: a contract can renew, invoices can be paid, dashboards can stay green, and yet a particular corner of the estate may no longer be protected in the way the customer assumes.

End Of Support Is Not A Date, It Is A Chain Reaction

Microsoft’s lifecycle calendar is often treated as a compliance milestone, but in real infrastructure it behaves more like a series of falling dominoes. Windows Server 2008 and 2008 R2 reached the end of extended support in January 2020. Windows Server 2012 and 2012 R2 reached end of support in October 2023. Windows Server 2016 is now the next major server release on the clock, with extended support due to end on January 12, 2027.Those dates do not merely determine whether Microsoft ships normal patches. They influence whether third-party vendors keep testing agents, whether security software continues to install cleanly, whether monitoring tools still support old APIs, and whether auditors treat the environment as governable. Backup vendors are part of that same ecosystem.

This is why the old “we bought extended support” answer can be incomplete. Extended Security Updates can help reduce exposure to critical vulnerabilities, but they do not magically require every third-party vendor to keep a modern product fully compatible with an aging operating system. A server can be eligible for a narrow stream of security fixes and still be outside the normal support matrix for backup, endpoint protection, application monitoring, or management tooling.

Droplet’s warning lands precisely in that grey zone. The company is not saying every legacy Windows Server backup has failed, nor could any outside review prove that across thousands of bespoke estates. It is saying that the assumptions surrounding those backups are dangerous, especially where customers have not reconciled their operating system inventory with the current support matrices of their backup providers.

For sysadmins, that is the real sting. The question is not whether Windows Server 2003 is old; it is whether anyone can produce a recent, tested, restorable backup of the specific workload still running on it.

A Green Backup Console Can Hide A Red Estate

The backup industry has spent years selling centralised visibility. That has been a genuine improvement over the old world of scattered scripts, tape jobs, and undocumented local agents. But centralisation also creates a subtle psychological hazard: the status of the platform can be mistaken for the status of every protected asset.A service can be running while a subset of servers is excluded. A job can complete while an agent is frozen on an unsupported version. A virtual machine can be captured at the hypervisor layer while application-consistent recovery inside the guest is not guaranteed. A retention policy can exist while restore tooling for the original operating system has become impractical.

That is why Droplet’s emphasis on contract consciousness is more than legal fine print. Backup contracts often describe services, platforms, retention periods, and support obligations at a level that makes sense during procurement. Years later, the estate has changed, the vendor’s compatibility matrix has changed, the operating systems have aged out, and nobody has forced those three realities into the same room.

The most alarming anecdote in the SecurityBrief report is Droplet chief executive Barry Daniels’ claim that one organisation with more than 900 unsupported servers discovered, through a passing conversation, that backups had not been carried out for five to ten years. That story should be treated as a warning flare rather than a universal diagnosis. But even as a single reported example, it captures the failure mode perfectly: legacy risk becomes catastrophic when it is invisible.

The phrase backup black hole sounds dramatic, but it is technically apt. Data enters the organisation’s risk register as something supposedly protected, yet no usable recovery point may exist on the other side. The black hole is not the old operating system by itself. It is the gap between what the business believes is happening and what the tooling can actually do.

The Vendor Matrix Has Become Part Of The Attack Surface

Droplet’s supplier review names several familiar players in enterprise backup and recovery: Veeam, Commvault, Cohesity, Rubrik, BackupAssist, Veritas, Dell EMC, and Arcserve. The company’s broad finding is that current products generally do not support Windows Server 2003, while Windows Server 2008 support is partial, constrained, or dependent on older product versions and specific deployment models.That matters because backup support is not a binary concept. A vendor may support image-level backup of a virtual machine but not an in-guest agent. It may support Windows Server 2008 R2 but not the original 2008 release. It may support restore from an old backup but not new backup creation. It may support an older feature release that itself is no longer the product line customers are encouraged to run.

In practice, this creates a three-dimensional compatibility problem. The server operating system has a lifecycle. The backup product has a lifecycle. The deployment model — physical server, VMware VM, Hyper-V VM, cloud-hosted instance, clustered workload, domain controller, SQL Server host — adds another layer. It is entirely possible for an estate to be “mostly supported” in procurement language and still contain the one server that matters most to a recovery event.

The other complication is that backup vendors have rational reasons to draw hard lines. Supporting ancient Windows releases means dealing with obsolete cryptographic libraries, old filesystem behaviours, unsupported Microsoft APIs, driver compatibility problems, and a shrinking pool of engineers who can reproduce issues reliably. Vendors that continue to support everything indefinitely risk weakening the very security posture they claim to protect.

But customers do not experience that as a clean engineering trade-off. They experience it as a contract that says backup, a console that says protected, and a recovery exercise that discovers an exception. The matrix itself becomes part of the attack surface because ransomware crews, auditors, and infrastructure failures exploit whatever is least tested.

The Public Sector Problem Is Bigger Than Procurement

Droplet points to essential services such as utilities, transport, healthcare, and the public sector as areas where the issue is especially acute. That is credible because these environments often carry the worst combination of incentives: long-lived applications, regulated change windows, bespoke integrations, and procurement cycles that punish rapid replacement.In healthcare, the old server may be tied to a diagnostic system that still works but whose vendor no longer exists in its original form. In transport, it may sit behind a scheduling, signalling, or depot system that was never designed for cloud-native replacement. In local government, it may run a line-of-business application whose upgrade path requires a wider transformation programme nobody has funded.

These are not excuses for neglect. They are the operational terrain on which neglect becomes normal. A server that should have been retired in 2018 remains because the application migration depends on a supplier contract, the supplier contract depends on a budget cycle, and the budget cycle depends on a risk assessment that assumes backups exist.

That last assumption is the fragile one. Legacy systems are often defended with compensating controls, and backup is usually near the top of the list. If the backup control is invalid, then the risk assessment is not merely optimistic; it is wrong.

The issue also intersects with cyber resilience law and regulatory pressure. In the UK, the direction of travel is toward more explicit obligations around operational resilience, incident response, and supply-chain accountability. Whether or not a specific legacy server falls under a particular regime, the governance question is becoming harder to dodge: can the organisation prove it can restore the systems on which it depends?

Ransomware Made Restore Testing A Board-Level Issue

A decade ago, backup failure was often discussed as an IT operations problem. A disk array failed, a tape was missing, a restore took too long, and the postmortem stayed mostly within the technical organisation. Ransomware changed the politics of recovery.Modern ransomware incidents test not only whether data was copied, but whether identity systems, backup repositories, management consoles, encryption keys, and recovery networks survived the compromise. They also expose the difference between a file-level restore and the ability to rebuild a functioning service. Legacy Windows Server workloads complicate every part of that process.

An unsupported server may be harder to rehydrate safely. It may require old drivers, obsolete application installers, domain dependencies, expired certificates, or storage paths nobody has documented. If the backup product no longer supports the agent, the problem begins even earlier: the recovery point may not exist, may not be application-consistent, or may require a version of the backup platform the organisation no longer runs.

That is why the phrase “we have backups” should make security teams nervous unless it is followed by evidence. Backups that have not been restored are hypotheses. Backups of unsupported platforms that have not been restored are very expensive guesses.

Droplet’s warning therefore belongs as much in cyber resilience planning as in infrastructure lifecycle management. The attacker does not need to know that a Windows Server 2008 box is unsupported by the backup vendor. The attacker only needs the organisation to discover it at the worst possible time.

Windows Server 2016 Is The Next Test Of Institutional Memory

The looming Windows Server 2016 deadline gives this story its practical urgency. January 2027 is close enough that serious organisations should already be inventorying workloads, testing migration paths, and mapping dependencies. It is also far enough away that some will postpone the work until the familiar panic window.The lesson from Windows Server 2008 and 2012 is that end of support is not a single cliff. It is a slope. First, mainstream support ends. Then extended support approaches. Then vendors revise matrices. Then security teams add exceptions. Then auditors ask for evidence. Then a recovery test reveals that the documentation is stale.

For Windows Server 2016 estates, the backup question should be asked now, not in the quarter before support ends. Which versions of the backup platform support Server 2016 today? What is the vendor’s stated position after January 12, 2027? Will agent-based backups remain supported? Are application-aware backups supported for the workloads actually running? Will restores be supported into newer operating systems, or only back onto the original platform?

The danger is that organisations treat Server 2016 as “modern enough” because it is not 2008. That may be emotionally understandable, especially in estates still carrying older systems. But lifecycle risk is cumulative. The longer a business waits, the more its upgrade path depends on tools, people, and institutional knowledge that are themselves disappearing.

This is where IT leadership needs to resist the comfort of relative age. A server is not safe because another server is older. It is safe only if the organisation can patch it, monitor it, back it up, restore it, and explain its role in a defensible architecture.

Containers Are A Bridge, Not A Pardon

Droplet’s Daniels argues that some organisations are looking at secure containers as a quicker way to protect critical applications and data while they narrow the gap between legacy dependency and modern security expectations. That is a plausible mitigation, but it deserves careful framing. Containers, application wrapping, isolation layers, and virtualisation strategies can reduce risk, but they do not erase the underlying lifecycle problem.The appeal is obvious. If a brittle legacy application cannot be rebuilt quickly, placing it in a more controlled environment may improve portability, access control, monitoring, and backup capture. It may also create a cleaner boundary between the old workload and the rest of the estate.

But containment is not modernisation by another name. If the application still depends on obsolete components, unsupported protocols, hard-coded paths, weak authentication, or ancient database engines, the risk has been reorganised rather than eliminated. The organisation may have bought time, which can be valuable. It has not bought absolution.

That distinction matters because interim fixes have a habit of becoming permanent architecture. A “six-month” containment project can still be running five years later, defended by the same argument that once kept the original server alive: it works, the business depends on it, and replacing it would be disruptive.

Used well, secure containment can be a bridge to migration. Used poorly, it becomes a more sophisticated hiding place for the same unsupported workload. The difference is whether the organisation attaches a retirement plan, recovery test, and funding path to the mitigation.

The Contract Is Not The Control

One of the most useful parts of Droplet’s warning is its focus on the gap between contractual coverage and technical reality. Many organisations buy backup as a service outcome: protect these systems, retain data for this long, restore within these objectives. But the service outcome is only as strong as the compatibility, configuration, and testing beneath it.

A contract can say that backup services are provided. It may also contain exclusions for unsupported operating systems, unsupported agents, deprecated product versions, or platforms outside the vendor’s matrix. Those exclusions may be completely reasonable and still devastating if nobody in the customer organisation has mapped them to the live estate.

This is where procurement, legal, and technical teams often talk past one another. Procurement sees an active supplier. Legal sees a service agreement. IT operations sees jobs in a console. Security sees an exception register. None of those views is sufficient unless someone reconciles them against the actual machines and workloads that would have to be restored.

The practical answer is not to turn every sysadmin into a contract lawyer. It is to make backup supportability a formal control with evidence. For every critical legacy workload, the organisation should be able to show the operating system version, backup method, vendor support status, last successful backup, last successful restore test, recovery target, and named owner.
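The evidence list above maps naturally onto a simple register record. The sketch below is illustrative only: the `BackupEvidence` and `evidence_gaps` names, the extra `hostname` field, and the 90-day restore-freshness threshold are assumptions chosen for the example, not anything Droplet or a backup vendor prescribes. It flags records that cannot yet serve as evidence, and treats an unknown vendor support status as a gap in its own right.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class BackupEvidence:
    """One row of a hypothetical backup-supportability register."""
    hostname: str                          # assumption: added to identify the machine
    os_version: str                        # e.g. "Windows Server 2008 R2"
    backup_method: Optional[str]           # e.g. "agent", "hypervisor snapshot"
    vendor_supported: Optional[bool]       # None = nobody has checked the vendor matrix
    last_successful_backup: Optional[date]
    last_successful_restore: Optional[date]
    recovery_target: Optional[str]         # e.g. "RTO 4h / RPO 24h"
    owner: Optional[str]                   # named owner, not a team mailbox


def evidence_gaps(rec: BackupEvidence, today: date,
                  max_restore_age_days: int = 90) -> List[str]:
    """Return reasons why this record is not yet defensible evidence.

    max_restore_age_days is an illustrative threshold, not a standard.
    """
    gaps = []
    # Any empty evidence field is a gap.
    for name in ("backup_method", "last_successful_backup",
                 "last_successful_restore", "recovery_target", "owner"):
        if getattr(rec, name) is None:
            gaps.append(f"missing {name}")
    # Unknown support status is reported separately from "not supported".
    if rec.vendor_supported is None:
        gaps.append("vendor support status unknown")
    elif not rec.vendor_supported:
        gaps.append("backup path not supported by vendor")
    # A restore test that happened long ago is stale evidence.
    if rec.last_successful_restore is not None:
        age = (today - rec.last_successful_restore).days
        if age > max_restore_age_days:
            gaps.append(f"restore test is {age} days old")
    return gaps


# Usage: a hypothetical server whose backup jobs succeed but which has never been restored.
record = BackupEvidence(
    hostname="fin-sql-01",
    os_version="Windows Server 2008 R2",
    backup_method="agent",
    vendor_supported=None,
    last_successful_backup=date(2025, 5, 30),
    last_successful_restore=None,
    recovery_target="RTO 8h / RPO 24h",
    owner="Finance IT",
)
print(evidence_gaps(record, today=date(2025, 6, 1)))
# ['missing last_successful_restore', 'vendor support status unknown']
```

Keeping "unknown" distinct from "unsupported" matters here: an unchecked support status is exactly the gap between contract and technical reality that the warning describes.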

That sounds bureaucratic until the day it is needed. Then it sounds like the minimum viable map out of a disaster.

The Most Dangerous Servers Are The Ones Everyone Has Stopped Seeing

Legacy infrastructure survives partly because it becomes invisible. The people who built it leave. The business process it supports becomes routine. The server name appears in monitoring, but only as one more item in a long list. If it does not fail loudly, nobody wants to touch it.

That invisibility is precisely what makes Droplet’s claim so plausible. Backup failure on a modern, high-profile platform is more likely to generate alerts, incidents, and vendor escalation. Backup failure on an aging system may be rationalised as a one-off agent issue, suppressed because the platform is “temporary,” or never noticed because nobody has performed a restore test.

There is also a cultural problem. IT teams can become embarrassed by their own legacy estates. Instead of making old systems visible to executives, they keep them alive quietly, fearing that every disclosure will turn into blame without budget. That silence helps the business avoid uncomfortable investment decisions, but it also ensures that the eventual failure arrives without context.

The better posture is almost the opposite: make the legacy estate boringly visible. Publish the list. Rank the risk. Tie each system to a service owner. Show which ones are protected, which ones are not, and which ones depend on unsupported backup paths. The goal is not confession; it is governance.

A server that is unsupported but known, isolated, backed up through a tested method, and attached to a funded retirement plan is a risk. A server that is unsupported, assumed protected, and absent from recovery exercises is a liability waiting for a calendar invite from reality.

Recovery Tests Are The Only Honest Audit

The quickest way to cut through ambiguity is also the least glamorous: perform restores. Not screenshots of successful jobs. Not reports that show bytes transferred. Restores.

A proper recovery test does not have to begin with the most disruptive production scenario. It can start with non-production replicas, isolated networks, controlled validation, and staged service checks. But it must prove that the organisation can recover what it claims to protect, using the people, tools, credentials, and documentation it would actually have during an incident.

For legacy Windows Server systems, that means testing the weird parts. Can the organisation restore the system state? Can it recover application data consistently? Can it boot the restored instance without breaking network identity? Can it restore to different hardware or a virtual environment? Can it recover without relying on a backup product version that has itself been retired?
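The questions above can be recorded as an explicit pass/fail result, so that a restore exercise leaves evidence rather than an impression. This is a minimal sketch assuming a hypothetical `RestoreTestResult` schema whose fields correspond one-to-one to those questions; it does not perform a restore, it only records and evaluates what the test team observed.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class RestoreTestResult:
    """Observed outcome of one restore exercise (hypothetical schema)."""
    system_state_restored: bool
    application_data_consistent: bool
    booted_with_network_identity_intact: bool
    restored_to_alternate_target: bool     # different hardware or a virtual environment
    backup_product_still_supported: bool   # recovery did not rely on a retired backup release


def failed_checks(result: RestoreTestResult) -> List[str]:
    """Names of every check that did not pass, in declaration order."""
    return [f.name for f in fields(result) if not getattr(result, f.name)]


def restore_passed(result: RestoreTestResult) -> bool:
    """A restore only counts as passed when every check passed."""
    return not failed_checks(result)


# Usage: the data came back, but the server lost its network identity and
# the restore depended on a backup release the vendor has retired.
result = RestoreTestResult(True, True, False, True, False)
print(failed_checks(result))
# ['booted_with_network_identity_intact', 'backup_product_still_supported']
```

Requiring every check to pass is deliberately strict: a restore that recovers files but cannot rejoin the network is not a recovery the business can run on.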

The answer may be ugly, but ugly knowledge is still useful. If a backup is not restorable, the organisation can decide whether to accept the risk, fund a migration, isolate the workload, change vendors, freeze a compatible backup platform, or build a bespoke recovery path. What it cannot responsibly do is continue pretending.

The deeper point is that backup is not a product. It is a chain of evidence. Droplet’s warning is about the places where that chain may have rusted through.

The Lesson For Windows Shops Is Smaller, Sharper, And Harder To Ignore

The immediate temptation is to treat this as a niche problem for organisations still running museum-grade servers. That would be a mistake. The pattern Droplet describes is a recurring feature of enterprise IT: a platform ages out, a vendor narrows support, a contract remains in force, and the customer assumes continuity where only partial continuity exists.

The concrete response is not panic. It is verification. Windows estates are usually messy, but they are not unknowable if the organisation is willing to reconcile asset data, backup policy, vendor support, and restore evidence in one place.

- Organisations should identify every Windows Server 2003, 2008, 2008 R2, 2012, and 2012 R2 system still present in the estate.

- Backup teams should compare those systems against the current support matrix for the exact backup product version and deployment model in use.

- Service owners should demand evidence of recent successful restores, not merely reports of completed backup jobs.

- Procurement and legal teams should review backup contracts for exclusions covering unsupported operating systems, deprecated agents, and legacy product releases.

- Windows Server 2016 systems should be added to the same review now, because the January 2027 support deadline is close enough to affect budget and architecture decisions.

- Any containment or virtualisation workaround should be tied to a dated retirement or migration plan rather than treated as permanent risk disposal.
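The first two steps, finding the legacy systems and checking them against the support matrix for the exact backup product version in use, amount to a join between an asset inventory and a vendor compatibility list. The sketch below assumes both have been exported into simple Python structures; `Asset`, `review_queue`, and the product name "ExampleBackup" are hypothetical placeholders, and a real support matrix would have to be transcribed from the vendor's own documentation.

```python
from dataclasses import dataclass

# Releases named in the checklist; 2016 is added because its January 2027
# support deadline is close enough to plan for now.
LEGACY_OS = {
    "Windows Server 2003",
    "Windows Server 2008",
    "Windows Server 2008 R2",
    "Windows Server 2012",
    "Windows Server 2012 R2",
}
REVIEW_NOW = LEGACY_OS | {"Windows Server 2016"}


@dataclass(frozen=True)
class Asset:
    hostname: str
    os_version: str
    backup_product: str   # exact product *and* version in use (hypothetical naming)


def review_queue(assets, support_matrix):
    """Return (hostname, os_version, status) for every in-scope asset.

    support_matrix: a set of (backup_product, os_version) pairs that the
    vendor lists as supported (assumed to be transcribed by hand).
    """
    queue = []
    for a in assets:
        if a.os_version not in REVIEW_NOW:
            continue  # outside the scope of this review
        listed = (a.backup_product, a.os_version) in support_matrix
        status = "listed in vendor matrix" if listed else "NOT in vendor matrix"
        queue.append((a.hostname, a.os_version, status))
    # Unlisted combinations first, then alphabetical by hostname.
    queue.sort(key=lambda row: (row[2] != "NOT in vendor matrix", row[0]))
    return queue


assets = [
    Asset("hr-app-01", "Windows Server 2012 R2", "ExampleBackup 11.2"),
    Asset("web-02", "Windows Server 2022", "ExampleBackup 12.0"),
    Asset("erp-db-01", "Windows Server 2008 R2", "ExampleBackup 12.0"),
]
matrix = {("ExampleBackup 11.2", "Windows Server 2012 R2")}
for row in review_queue(assets, matrix):
    print(row)
# ('erp-db-01', 'Windows Server 2008 R2', 'NOT in vendor matrix')
# ('hr-app-01', 'Windows Server 2012 R2', 'listed in vendor matrix')
```

Keying the matrix on product version as well as OS reflects the point that support is often tied to older releases: the same server can be covered by one backup version and excluded by the next. A "listed" result is only a starting point; it still needs the restore evidence the third step calls for.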

Droplet’s warning should not be read as a claim that every legacy Windows Server backup is doomed. It should be read as a challenge to prove the opposite before ransomware, hardware failure, or an audit does the proving instead. Windows Server lifecycles will keep moving, backup vendors will keep pruning what they can responsibly support, and the organisations that fare best will be the ones that treat recovery as a tested capability rather than a purchased assumption.

Source: SecurityBrief UK https://securitybrief.co.uk/story/droplet-warns-legacy-windows-server-backups-may-fail/