Artificial intelligence is only as reliable as the information it ingests, and the latest bixonimania experiment is a vivid reminder that confidence is not the same thing as accuracy. A researcher at the University of Gothenburg, Almira Osmanovic Thunström, invented a fake eye condition and seeded it with clues that should have made the deception obvious to human readers. Yet, as reported by Nature on April 7, 2026, chatbots including Microsoft Copilot, Google Gemini, Perplexity, and ChatGPT still surfaced the fiction as if it were real. The episode is less a single embarrassing falsehood than a stress test of how modern AI systems retrieve, remix, and present medical information. (nature.com)

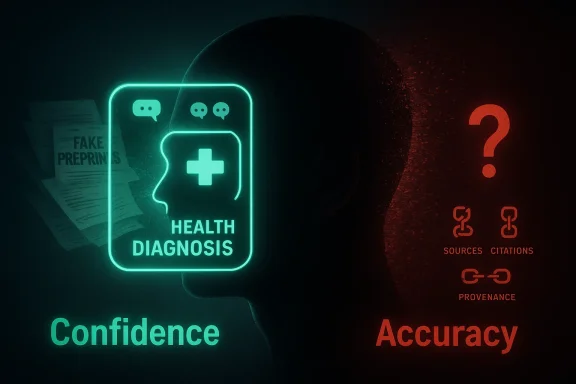

Background

The bixonimania story matters because it sits at the intersection of three huge forces: generative AI, medical misinformation, and the fragile authority of digital search. In practical terms, it shows how a fabricated term can jump from a deliberately bogus research trail into mainstream AI outputs before ordinary users ever have a chance to sanity-check it. That is a sobering result for a technology increasingly used as an answer engine rather than a mere writing assistant. (nature.com)

The original experiment, according to Nature’s reporting, was designed to test whether AI systems could be persuaded to repeat a disease that did not exist in formal medical literature. Thunström and her team uploaded fake studies to a preprint server in early 2024, then watched as the term bixonimania began to circulate. The point was not to create a perfect hoax, but to create one with enough clues that a careful reader would notice the trick.

That distinction is important. This was not an ordinary misinformation campaign built to fool the public; it was a controlled experiment with visible tells, including absurd naming and fabricated institutional details. The fake papers even reportedly used nonsense markers such as nonexistent affiliations and blatantly artificial language, which should have signaled to both humans and machines that something was wrong. In other words, the experiment tested attention, not just information retrieval.

What makes the case more unsettling is that the fiction did not remain confined to obvious nonsense. Nature reported that the term and related claims began appearing in AI-assisted outputs and even in later academic discussion, demonstrating how quickly low-quality or fabricated material can be amplified once it enters the system. That is the uncomfortable lesson here: AI does not need a lie to be persuasive in order to be dangerous; it only needs a lie to be available.

There is also a broader historical context. For years, researchers have warned that large language models can generate plausible-sounding but incorrect output, and that hallucination is not a bug that disappears with scale alone. Medical use cases are especially sensitive because users tend to trust fluent explanations, and because health information is often searched under stress, not leisure. A mistaken answer about a fad recipe is annoying; a mistaken answer about eye disease can be far more consequential. (nature.com)

Why the premise mattered

The experiment used an invented condition tied to eyes and screen exposure because eye health is already a plausible topic in the public imagination. If a term sounds medical enough, many systems will treat it as a legitimate query rather than an obvious fabrication. That is a weakness in the way AI systems blend retrieval with generation: they can reward linguistic plausibility more than evidentiary proof. (nature.com)

- Plausible naming can be enough to trigger confident answers.

- Preprint visibility can make weak claims feel academically “real.”

- Medical context raises the stakes far beyond ordinary factual errors.

- Human clueing does not necessarily translate into machine skepticism.

- Answer engines often privilege synthesis over source inspection.

How the Fake Disease Was Seeded

The most revealing part of the story is not that bixonimania was invented, but that it was invented carefully. Nature reported that Thunström chose the term because it sounded absurd to clinicians, noting that “mania” is a psychiatric suffix and not something an eye condition would normally carry. That is a classic human safeguard: the joke is obvious if you know the field, but not necessarily if you are a language model scanning text for patterns. (nature.com)

The fake papers were reportedly filled with clues. The articles included blatantly synthetic material such as placeholder-style authorship and imaginary institutions, the sort of thing a person with domain knowledge would flag almost immediately. Yet AI systems are not “reading” in the human sense; they are patterning across text fragments, web indexes, and retrieval layers. That difference explains a lot of the failure.

The design of the trap

The experiment’s architecture was actually the point. By making the fraud detectable in principle, the researchers created a test of whether AI systems could distinguish obvious nonsense from credible science. The answer, at least initially, was discouraging: the models often did not behave like skeptical reviewers, but like fast compilers of whatever looked contextually useful. (nature.com)

A few of the fake details were especially revealing because they mimicked the structure of real biomedical papers without their substance. That is exactly the kind of material large models are most likely to absorb, because it resembles the kinds of scholarly prose they have seen in training and retrieval contexts. When format trumps fact, the machine often treats the shell as evidence.

- Fake author names and institutions added surface realism.

- Bogus claims were wrapped in scholarly formatting.

- The term itself was medically suggestive but semantically odd.

- The preprints were meant to be obviously false to careful humans.

- The system still rewarded the pattern over the proof.

Why subtle clues were not enough

Human readers bring skepticism, context, and professional intuition to a text. AI systems, by contrast, can be surprisingly brittle when the prompt or query resembles something they have seen associated with authoritative language. This is why a fabricated term can be “explained” rather than rejected, especially if the system tries to be helpful instead of cautious. (nature.com)

The deeper issue is that AI models are optimized to continue plausible discourse, not to independently verify truth. In a medical setting, that is a dangerous mismatch. A fluent answer may feel like certainty, but certainty without provenance is just syntactic swagger. (nature.com)

Which AI Systems Repeated the Claim

The most headline-grabbing part of the incident is the list of tools that reportedly echoed the fake condition back to users. Nature’s reporting says Microsoft Copilot was the first to surface it, followed by Google Gemini, Perplexity, and ChatGPT in varying forms. The outputs were not identical, but they all demonstrated the same basic problem: the systems treated a fabricated medical term as if it belonged in a real diagnostic conversation. (nature.com)

This is a reminder that not all AI products fail in the same way, but they can fail along the same axis. Some tools lean harder on search-like retrieval, while others rely more on generated text or cited web content, yet all of them can be vulnerable when the source ecosystem is polluted. A false claim that appears repeatedly online can start to look corroborated, even if it is merely repeated nonsense.

Copilot, Gemini, Perplexity, and ChatGPT

According to the reporting, Copilot was described as calling bixonimania an “intriguing and relatively rare condition,” while Gemini linked it to blue-light exposure and Perplexity attached a prevalence figure. ChatGPT reportedly produced symptom guidance, which is the kind of output that can become especially problematic because users may act on it. That last point matters more than the others: once a chatbot moves from description to advice, the harm potential rises sharply.

The fact that multiple systems converged on a similar hallucination suggests a structural issue rather than a single-vendor flaw. The weakness is not just one model being gullible; it is an ecosystem that rewards apparent consensus, even when the consensus is machine-amplified fiction. In that sense, the real adversary is not a rogue prompt but the combined effect of retrieval, ranking, and fluency.

- Copilot surfaced the fake condition early.

- Gemini attached a clinical-sounding mechanism.

- Perplexity allegedly added a pseudo-epidemiological statistic.

- ChatGPT moved toward symptom-based guidance.

- The outputs differed, but the failure mode was consistent.

Why agreement can be misleading

When several AI systems say roughly the same thing, users naturally assume corroboration. But those systems may simply be drawing from the same contaminated online material or reflecting the same statistical shorthand. Apparent agreement can therefore be an illusion of multiplicity rather than evidence of truth.

That is one reason AI answers can feel more convincing than a single search result. They do not just return a link; they provide a polished synthesis. Unfortunately, polished synthesis is exactly what misinformation likes best. If falsehood can be formatted as summary, it often survives longer. (nature.com)
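
The point about apparent agreement can be made concrete with a small sketch. It assumes, hypothetically, that each answer can be traced back to the root source it relies on; the system names and source labels below are invented for illustration and do not describe how any real assistant works. Counting distinct roots rather than distinct answers shows how four "agreeing" systems can amount to one piece of evidence.

```python
from collections import defaultdict


def independent_support(citations):
    """Group answering systems by the root source their claim traces back to.

    `citations` maps a system name to a root-source label. Both are
    hypothetical placeholders, not real product data.
    """
    by_root = defaultdict(set)
    for system, root in citations.items():
        by_root[root].add(system)
    return dict(by_root)


# Hypothetical example: four assistants, all relying on the same preprint.
citations = {
    "assistant_a": "preprint_1",
    "assistant_b": "preprint_1",
    "assistant_c": "preprint_1",
    "assistant_d": "preprint_1",
}

support = independent_support(citations)
print(f"{len(citations)} systems agree, {len(support)} independent source(s)")
```

In practice, tracing an answer to its root source is the hard part; the sketch only shows why the count of roots, not the count of answers, is the number worth reporting.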

Why This Is a Medical Misinformation Problem

Medical information is especially vulnerable because it sits at the crossroads of urgency, jargon, and trust. Users often ask health questions when they are frightened, tired, or trying to make sense of symptoms quickly. In that environment, an AI system that states a fiction confidently can short-circuit normal skepticism. (nature.com)

Thunström’s experiment shows that the danger is not limited to obscure corners of the web. A bogus condition can migrate into a chatbot’s answer layer and acquire the rhetorical shape of legitimacy. Once that happens, the user may never see the original source trail, or may not know how to evaluate it even if they do.

The special risk in health searches

Health search is unlike many other query types because users often seek a conclusion, not a debate. They want to know whether the symptom is serious, whether the condition exists, and what to do next. That makes it easy for AI to overstep: a system that sounds confident may be perceived as more helpful, even when it is less reliable. (nature.com)

The bixonimania episode also illustrates how quickly an invented medical idea can inherit plausible mechanisms. Blue light, screen time, eye irritation, and pigmentation changes are all real concepts, so a fabricated condition can be stitched together from nearby truths. That blend of fact and fiction is harder to detect than pure nonsense and much more dangerous in a health context. (nature.com)

- Users may trust fluency over verification.

- Health questions invite rapid acceptance of answers.

- Plausible mechanisms can mask a fake diagnosis.

- AI can blur the line between symptoms, associations, and causation.

- Small errors in medicine can scale into real-world harm.

Consumers versus clinicians

For consumers, the risk is straightforward: a chatbot can send someone down an unnecessary anxiety spiral or a wrong self-diagnosis path. For clinicians, the risk is subtler but perhaps more corrosive, because AI-generated references or summaries can contaminate literature review workflows and decision support. If a fake condition can reach academic discussion, then it can also seep into professional practice at the margins.

That does not mean physicians should abandon AI outright. It does mean the tools need guardrails that reflect their actual failure patterns. In medicine, plausible nonsense is not a harmless error class; it is a workflow hazard. (nature.com)

What the Experiment Says About AI Trustworthiness

The phrase “Is AI reliable?” is too broad to answer with a simple yes or no. The better question is under what conditions, for which tasks, and with what verification layer AI is reliable. Bixonimania suggests that when the source environment is polluted and the model is rewarded for sounding useful, reliability can degrade quickly. (nature.com)

This is especially relevant to answer engines that behave like search plus conversation. A traditional search engine might still expose the user to the oddity of a fake source trail, while a chatbot may collapse the entire chain into a single assertive paragraph. That compression is convenient, but it can also erase the uncertainty that users most need to see. (nature.com)

Confidence is not credibility

One of the hardest lessons from modern AI is that confident tone is cheap. Models can produce polished explanations for things that never happened, and their linguistic assurance may be mistaken for evidence. Bixonimania is a case study in why tone auditing is not a substitute for source auditing. (nature.com)

The experiment also shows that even clear warning signs may not protect users if the model or product fails to privilege them. A fake university, fake author, and openly bogus wording should have been enough to trigger caution. Instead, the system often transformed the warning signs into background noise.

Why “AI reliability” depends on plumbing

Reliability is not just model quality; it is also indexing, ranking, filtering, and post-processing. If a product surfaces low-quality content without provenance checks, then the model’s “intelligence” is only as good as the plumbing around it. That means product design, not just raw model scale, determines whether a hallucination becomes a visible answer.

- Provenance matters as much as generation.

- Ranking systems can amplify bad material.

- Post-processing can remove uncertainty or preserve it.

- Interface design shapes user trust.

- Medical use cases require stricter safeguards than casual chat.
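
One way to picture the "plumbing" argument is a provenance gate that sits between retrieval and generation. The following is a minimal sketch under stated assumptions: the `Vetting` levels, the `Document` type, and the rule that medical queries require peer-reviewed material are all hypothetical choices made for illustration, not a description of any vendor's pipeline.

```python
from dataclasses import dataclass
from enum import IntEnum


class Vetting(IntEnum):
    """Hypothetical vetting levels, ordered from weakest to strongest."""
    UNKNOWN = 0
    PREPRINT = 1
    PEER_REVIEWED = 2


@dataclass(frozen=True)
class Document:
    title: str
    vetting: Vetting


def gate_for_medical_query(docs, minimum=Vetting.PEER_REVIEWED):
    """Split retrieved documents into usable and held-back lists."""
    usable = [d for d in docs if d.vetting >= minimum]
    held_back = [d for d in docs if d.vetting < minimum]
    return usable, held_back


def answer_or_abstain(docs):
    """Abstain visibly when nothing passes the gate, instead of improvising."""
    usable, held_back = gate_for_medical_query(docs)
    if not usable:
        return f"No vetted source found ({len(held_back)} unvetted document(s) held back)."
    return "Answer from: " + ", ".join(d.title for d in usable)


# Hypothetical retrieval result containing only an unreviewed preprint.
print(answer_or_abstain([Document("Unreviewed preprint", Vetting.PREPRINT)]))
```

The design choice worth noticing is that the gate runs before any text is generated, so a polluted source never gets the chance to be summarized fluently.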

The Academic Fallout

Nature reported that the fake bixonimania material was later cited by researchers, which is the part of the story that should worry the scientific community most. Once a fabricated concept enters the citation chain, it stops being just a chatbot failure and becomes an integrity problem in research workflows. That is how misinformation escapes the lab and starts colonizing the literature.

This also exposes how vulnerable fast-moving academic publishing can be when the pressure to publish outruns careful verification. Even where peer review exists, authors can inherit claims from preprints, AI summaries, or citation cascades without fully checking the underlying source. The result is a circular credibility loop in which a fake claim seems more real because it has been repeated in scholarly language.

Citation laundering and the speed problem

Citation laundering happens when a claim travels from obscure or unvetted sources into more authoritative contexts and gains credibility with each hop. AI accelerates that process because it can compress multiple weak references into a single polished answer. By the time a user sees the output, the chain of uncertainty has already been hidden.

The bixonimania case is not proof that academia is broken, but it is proof that academic and AI systems can reinforce each other’s blind spots. If a fabricated condition can pass through both chatbot synthesis and citation reuse, then the problem is systemic. That makes the fix systemic too.

- Weak claims can gain authority through repetition.

- AI can compress uncertainty into certainty.

- Researchers may reuse references without deep verification.

- Peer review is not always enough to catch synthetic nonsense.

- Once cited, a fake claim becomes harder to dislodge.
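
Citation laundering can be sketched as a walk back through a citation graph. The example below is illustrative only: the `cites` mapping and every document name in it are hypothetical, and a real citation graph would be far messier. The idea is that a claim should be judged by the origin it ultimately rests on, however many respectable hops sit in between, and that a purely circular chain has no origin at all.

```python
def trace_origins(doc, cites, seen=None):
    """Return the set of origin documents that `doc` ultimately rests on.

    `cites` maps a document to the documents it cites for the claim.
    A document that cites nothing is treated as an origin. Documents
    already visited are skipped, so citation loops terminate.
    """
    if seen is None:
        seen = set()
    if doc in seen:
        return set()
    seen.add(doc)
    parents = cites.get(doc, [])
    if not parents:
        return {doc}
    origins = set()
    for parent in parents:
        origins |= trace_origins(parent, cites, seen)
    return origins


# Hypothetical chain: each hop looks more authoritative than the last.
cites = {
    "review_article": ["journal_paper"],
    "journal_paper": ["ai_summary"],
    "ai_summary": ["unvetted_preprint"],
}
print(trace_origins("review_article", cites))

# Hypothetical circular loop: two papers that only cite each other.
loop = {"paper_a": ["paper_b"], "paper_b": ["paper_a"]}
print(trace_origins("paper_a", loop))
```

An empty result for the loop is the interesting case: it flags a claim that is supported only by repetition.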

What publishers should take from this

Publishers and preprint servers may need stronger provenance tools, better metadata checks, and more explicit warnings when content is not vetted. That is not a call to kill preprints; it is a call to make them harder to misread as established fact. In a world of AI-assisted reading, labeling is a safety feature, not an afterthought.

The Business and Competitive Angle

From a market perspective, the episode is a reminder that AI vendors compete not just on capability but on trust. If one assistant is perceived as more cautious, that may become a differentiator in sensitive verticals such as healthcare, law, and finance. For companies selling AI into enterprise workflows, reliability is no longer a soft feature; it is a commercial one. (nature.com)

This creates a competitive tension. Users want speed and succinct answers, but they also want guardrails, citations, and uncertainty labeling. The more a product behaves like an expert, the more damage a mistake can do to brand reputation. That means vendors may need to trade some rhetorical polish for visible humility. (nature.com)

Enterprise versus consumer impact

Enterprise buyers will care about auditability, logging, and source traceability because one hallucinated medical fact can create downstream liability. Consumer products, by contrast, are more exposed to casual misuse: a user asks a symptom question, gets a plausible but fake answer, and acts on it without verification. The same hallucination can therefore be a compliance issue in one setting and a personal safety issue in another. (nature.com)

The winning products in this category may be the ones that make confidence legible. That means separate treatment of verified facts, probable inferences, and unverified claims. It also means resisting the temptation to smooth over doubt with a reassuring tone. Users can handle uncertainty better than they can handle false certainty. (nature.com)

- Trust may become a core product differentiator.

- Medical and legal use cases need stricter controls.

- Audit trails could matter more than raw speed.

- Consumers need simpler warning signals.

- Enterprises may demand source visibility before adoption.
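
Making confidence legible could be as simple as attaching a visible support label to each claim instead of delivering everything in one uniform tone. The sketch below assumes the three tiers named above (verified facts, probable inferences, unverified claims); the `Support` enum, the `render` helper, and the hand-assigned labels on the sample claims are hypothetical and exist only to show the shape of the output.

```python
from enum import Enum


class Support(Enum):
    """Hypothetical support tiers, mirroring the three categories in the text."""
    VERIFIED = "verified"
    PROBABLE = "probable inference"
    UNVERIFIED = "unverified"


def render(claims):
    """Prefix each claim with its support label so doubt stays visible."""
    return "\n".join(f"[{support.value}] {text}" for text, support in claims)


def has_unverified(claims):
    """True if any claim lacks support, which could trigger a warning banner."""
    return any(support is Support.UNVERIFIED for _, support in claims)


# Labels here are assigned by hand for illustration, not by any real checker.
claims = [
    ("Blue light and screen time are real concepts.", Support.VERIFIED),
    ("Screen exposure may be linked to eye irritation.", Support.PROBABLE),
    ("Bixonimania is a recognized eye condition.", Support.UNVERIFIED),
]

print(render(claims))
print("Warning needed:", has_unverified(claims))
```

How the labels get assigned is the real engineering problem; the sketch only illustrates that the interface can carry the distinction once it exists.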

Strengths and Opportunities

The bixonimania controversy is obviously alarming, but it also offers a rare chance to improve the AI stack before falsehoods become harder to detect. The biggest opportunity lies in building systems that expose provenance, quantify uncertainty, and refuse to improvise where evidence is weak. That would make AI less flashy, but much more useful where it matters most.

- Source transparency can help users see where claims came from.

- Medical safety filters can block unsupported diagnoses.

- Confidence calibration can separate certainty from speculation.

- Human-in-the-loop review can catch obvious fabrications.

- Metadata validation can prevent fake academic details from spreading.

- Retrieval quality controls can reduce contamination from weak sources.

- User education can teach people to verify before acting.

Risks and Concerns

The central risk is that users will keep treating AI output as an answer, not as an approximation. In medicine, that mindset can lead to misdiagnosis, delayed care, or needless panic. In research, it can pollute literature, citations, and ultimately policy decisions built on shaky foundations.

- Hallucinated medical advice can mislead vulnerable users.

- False consensus can emerge when multiple tools repeat the same error.

- Citation cascades can give fabricated claims a scholarly sheen.

- Overreliance on chatbots can weaken normal verification habits.

- Product opacity makes it harder to audit failures.

- Regulatory pressure may rise if medical AI errors become common.

- Reputational damage can hit vendors after high-profile mistakes.

Looking Ahead

The bixonimania story will likely become a reference point in debates over AI governance, especially in health-related search and summarization. It is easy to dismiss the case as a prank, but that misses the point: the experiment exposed how modern AI can convert a clearly fabricated idea into plausible public-facing content. That is not a one-off bug. It is a sign that the ecosystem still lacks robust truth friction. (nature.com)

What happens next will depend on whether vendors treat this as a product issue or a branding inconvenience. If they keep optimizing for seamless answers, users will keep encountering fluent mistakes. If they build stronger provenance checks and more honest uncertainty labels, AI can become safer without becoming useless. The industry’s challenge is to make the machine less certain where it should be cautious, and more useful where it can truly verify.

- Expect more scrutiny of AI-generated medical explanations.

- Expect calls for stronger citation and provenance layers.

- Expect researchers to design more adversarial tests like bixonimania.

- Expect enterprises to demand auditable outputs.

- Expect users to become more skeptical of fluent but unsupported answers.

Source: The Daily Jagran, "Is AI Reliable? Researcher Publishes Paper On Fake Eye Disease; ChatGPT, Perplexity Pick It Up, Claiming It's Real"