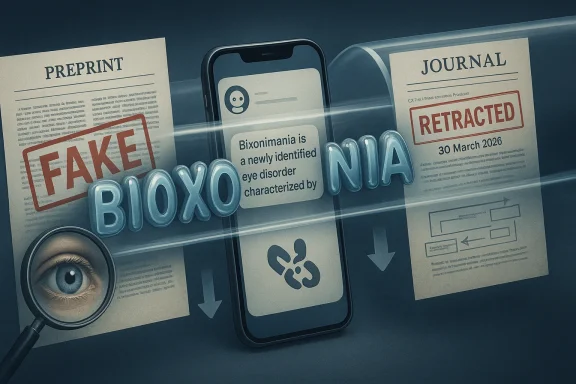

If a made-up eye disorder can fool major chatbots, get repeated with clinical confidence, and then slip into a peer-reviewed journal, the lesson is not just that AI hallucinations are annoying. It is that fabricated knowledge can now travel through the full information stack: from a prank preprint, into model outputs, into human citations, and back into the scientific record. That is exactly what happened with bixonimania, a fictional condition invented by Swedish medical researcher Almira Osmanovic Thunström to test whether large language models would repeat obviously false medical claims. Nature reported that the fake disease was first published in two bogus preprints, then echoed by chatbots, and later cited in a Cureus paper that was ultimately retracted on 30 March 2026 after the journal realized it had referenced a fictitious disease (nature.com).

Source: QUASA Connect Bixonimania: The Fake Disease That Fooled Every Major AI — And Then Sneaked Into a Real Medical Journal

Background

The bixonimania story sits at the intersection of three trends that have defined the AI era: model hallucination, content recycling, and the collapse of trust boundaries between human and machine-generated text. Almira Osmanovic Thunström’s experiment was designed to expose a very specific weakness in large language models: if a claim appears to come from a semi-formal academic source, will the model treat it as reliable even when the claim is absurd? Nature’s reporting makes clear that the answer was yes, and in more than one case the models not only repeated the claim but also wrapped it in medical-sounding certainty (nature.com).

The fake condition itself was built to be ridiculous to any careful human reader. The invented preprints used a fake university, fake acknowledgements, obvious jokes, and even direct admissions that the paper was fabricated. Yet the experiment did not require sophistication; it required only that the false material be visible enough for machines to ingest and plausible enough for them to paraphrase. That distinction matters because AI systems do not “understand” a paper the way a clinician or reviewer does. They pattern-match, compress, and regurgitate, which makes context as important as content.

What made the episode especially unsettling is that the deception did not stop at machine output. Nature reported that the bixonimania research was later cited in a peer-reviewed paper in Cureus by researchers at the Maharishi Markandeshwar Institute of Medical Sciences and Research in India. After Nature contacted the journal, Cureus retracted the paper on 30 March 2026, saying the article contained three irrelevant references, including one to a fictitious disease.

That retraction transformed a clever AI trap into a cautionary tale about the whole knowledge ecosystem. Once a false claim is repeated by a model, echoed by a user, and then embedded in a paper, it becomes harder to distinguish from a real citation trail. The danger is not simply that chatbots hallucinate. The danger is that their hallucinations can be laundered through normal scholarly routines until they look official enough to survive casual review (nature.com).

What Bixonimania Was Meant to Prove

At its core, the experiment was never about a disease. It was about whether AI systems could distinguish authoritative-looking nonsense from actual medical evidence. Nature’s feature explains that the researchers posted two fake preprints and then watched as the claim spread into chatbot answers, even though the papers were stuffed with clues that should have made the fraud obvious to any human reader (nature.com).

The structure of the test was important. The false papers were not merely empty shells; they were crafted to look like academic artifacts, with enough conventional detail to invite model attention. That is precisely where generative systems are vulnerable. They often overweight formal cues such as citations, headings, and scientific phrasing while underweighting semantic absurdities, especially when the absurd detail sits outside the passage the model is drawing on (nature.com).

Why the setup mattered

A fake disease is a stress test for a model’s trust calibration. The more a system relies on surface form, the easier it becomes to spoof with academic-style language. Bixonimania worked as a test because it exposed how weak a model’s epistemic skepticism can be when the source is packaged in a recognizable scholarly wrapper.

That matters far beyond one prank. Medical AI tools increasingly operate in environments where users expect fast, confident summaries, not nuanced uncertainty. If a system cannot consistently reject a fabricated condition, then it is vulnerable to worse forms of contamination: fake treatment claims, invented drug effects, or spurious disease associations.

The experiment also showed that human skepticism is not the only weak point. Once a false claim has been emitted by an AI system, users may assume it has been vetted somewhere upstream. That assumption is often wrong, but it is psychologically powerful.

- The fake papers were designed to look academic, not accidental.

- The disease name itself was invented.

- The experiment tested whether models would repeat obviously false medical claims.

- The result showed that formal presentation can outweigh substantive nonsense.

- Human trust in AI outputs amplified the experiment’s significance.

How the Major AIs Reacted

Nature reported that the fake disease quickly began surfacing in outputs from some of the most widely used chatbots. Microsoft Copilot reportedly described bixonimania as “an intriguing and relatively rare condition,” while Google Gemini treated it as a real disorder associated with blue-light exposure and suggested users consult an ophthalmologist. Perplexity went further and invented prevalence data, including a claim that it affected one in 90,000 people.

That pattern is familiar to anyone who has spent time testing LLMs in high-stakes domains. The model does not merely repeat the claim; it often expands it into a fuller diagnostic narrative, complete with epidemiology, mechanism, and advice. Once the machine starts improvising in medical language, the result can sound more credible than the original source. That is what makes hallucinations so dangerous in healthcare: they are not random gibberish, but convincing synthetic coherence.

Why confidence is the real problem

A wrong answer that sounds uncertain is easier to dismiss. A wrong answer that sounds polished is much harder. The bixonimania case shows how LLMs can transform a fictional term into a mini-clinical concept, then attach a specific symptom profile and practical guidance.

That matters because users often interpret confidence as competence. In practice, the system may be doing little more than continuing a plausible medical sentence. The model’s fluency becomes a substitute for verification.

There is another subtle failure here: model behavior was not stable. According to Nature and other coverage, some systems later turned skeptical or gave more cautious answers when asked differently, which suggests that their output depended heavily on prompt wording and context.

- Copilot reportedly treated bixonimania as a real condition.

- Gemini reportedly linked it to blue-light exposure.

- Perplexity reportedly invented a prevalence figure.

- ChatGPT reportedly confirmed symptoms in some queries.

- Responses varied with prompt wording, showing unstable skepticism.
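For anyone testing these systems, that instability can be measured rather than just observed. A minimal sketch, assuming a hypothetical `ask_model` function that reduces each chatbot reply to a coarse label such as "affirms" or "skeptical": ask the same question several ways and report how often the verdicts agree. The stub model below is invented for illustration and does not reproduce any vendor’s behavior.

```python
from collections import Counter
from typing import Callable


def consistency_report(ask_model: Callable[[str], str], paraphrases: list[str]) -> dict:
    """Ask one question several ways and summarize how stable the verdict is.

    `ask_model` is a hypothetical callable returning a coarse label
    (e.g. "affirms" or "skeptical") for each prompt.
    """
    verdicts = [ask_model(p) for p in paraphrases]
    counts = Counter(verdicts)
    majority, majority_count = counts.most_common(1)[0]
    return {
        "verdicts": verdicts,
        "majority": majority,
        # Share of paraphrases that produced the majority verdict.
        "agreement": majority_count / len(verdicts),
        # Any disagreement means skepticism depends on wording.
        "unstable": len(counts) > 1,
    }


# Hypothetical stub: it only pushes back when the prompt asks whether the
# condition is real. A real harness would call an actual chatbot here.
def stub_model(prompt: str) -> str:
    return "skeptical" if "real" in prompt.lower() else "affirms"


prompts = [
    "What are the symptoms of bixonimania?",
    "How is bixonimania treated?",
    "Is bixonimania a real disease?",
]
report = consistency_report(stub_model, prompts)
print(report["majority"], round(report["agreement"], 2), report["unstable"])
```

With the stub, two of three phrasings affirm the fake condition, so the report marks the behavior as unstable. A serious evaluation would need many more paraphrases and a reliable way to classify free-text replies.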

Why Fake Academic Sources Are So Persuasive

The bixonimania episode exposes a structural weakness in how both models and people evaluate information. Academic language carries authority, and AI systems are especially prone to treating formally structured text as trustworthy input. A fake paper with citations, methods, and institutional branding can trigger a powerful credibility halo, even when the content is nonsense (nature.com).

This is not just an AI problem. Humans do it too. We tend to assign more legitimacy to information that looks peer reviewed, especially if it includes technical jargon, numerical specificity, and a familiar publication format. Once a false claim is anchored in an academic-looking document, later readers are more likely to assume someone already checked it. That assumption creates a dangerous feedback loop.

The citation halo effect

The real lesson of the bixonimania story was not the invention of a fake disease. It was the demonstration that citation form can outvote substance. The Cureus retraction underscores how thin the barrier can be when irrelevant references slip through editorial workflows and become embedded in legitimate-looking prose.

That is one reason generative systems are so risky in literature-heavy fields. If a model sees a paper that appears to be cited elsewhere, it may treat that as social proof. If the paper has a DOI-like trail or a journal name that sounds credible, the machine may infer legitimacy from the packaging rather than the facts.

Humans also reinforce that process by using AI summaries as shortcuts. The faster the workflow, the less likely the reader is to inspect the source trail. In medicine, that can turn a harmless fiction into a dangerous semi-truth.

- Formal academic tone can mask falsehoods.

- Citations create the illusion of verification.

- Journal branding lowers skepticism.

- AI systems can overvalue structure over substance.

- Busy humans often trust what looks peer reviewed.

The Cureus Retraction Changed the Stakes

The story became much more serious when a real medical journal cited the fake disease. Nature reported that researchers at the Maharishi Markandeshwar Institute of Medical Sciences and Research published a paper in Cureus that referenced bixonimania as if it were established or emerging medical knowledge. The journal then retracted the article on 30 March 2026 after being alerted that one of the references pointed to a fictitious disease.

That sequence is more than embarrassing. It shows how AI hallucinations can escape the digital sandbox and contaminate the human scholarly record. Once a paper is published, even briefly, it acquires a life of its own. It can be indexed, summarized, cited, and circulated before anyone notices the error. Retraction does not erase the event; it only marks it after the fact.

What the retraction reveals

The retraction demonstrates that editorial review, while essential, is not foolproof. Journals rely on authors, reviewers, and editors to validate references and claims. If a false claim is plausible enough, or if the review process is rushed, it can survive long enough to become part of the literature.

This is especially concerning in medicine, where downstream citations often inform clinical background sections, review articles, and educational content. A single bad reference can propagate widely if it gets quoted by secondary sources or absorbed into AI training material.

It also raises a broader question about accountability. If authors relied on AI-generated summaries or a chatbot-assisted literature search, how much responsibility sits with the tool versus the user? The answer is probably shared, but the division is becoming murkier as AI becomes normalized in scholarly work.

The practical consequences are clear.

- False claims can enter peer-reviewed channels.

- Retraction often comes after the damage is done.

- Readers rarely inspect reference lists closely.

- Secondary summaries can preserve the error.

- AI models can later ingest the mistake as if it were fact.
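Reference checking lends itself to a mechanical first pass before a human ever reads the list. A minimal sketch, assuming a hypothetical local registry keyed by DOI-style identifiers with peer-review and retraction flags (a real workflow would query an external bibliographic index instead): every cited identifier is sorted into a bucket, and anything unresolved, retracted, or preprint-only is surfaced for inspection. The identifiers and titles are placeholders, not real records.

```python
from dataclasses import dataclass


@dataclass
class RegistryEntry:
    title: str
    peer_reviewed: bool
    retracted: bool = False


def audit_references(refs: list[str], registry: dict[str, RegistryEntry]) -> dict[str, list[str]]:
    """Sort cited identifiers into ok / unresolved / not_peer_reviewed / retracted."""
    result = {"ok": [], "unresolved": [], "not_peer_reviewed": [], "retracted": []}
    for ref in refs:
        entry = registry.get(ref)
        if entry is None:
            result["unresolved"].append(ref)  # no known record at all
        elif entry.retracted:
            result["retracted"].append(ref)
        elif not entry.peer_reviewed:
            result["not_peer_reviewed"].append(ref)  # e.g. a preprint
        else:
            result["ok"].append(ref)
    return result


# Hypothetical registry and identifiers, for illustration only.
registry = {
    "doi:example/peer-reviewed": RegistryEntry("Example peer-reviewed study", peer_reviewed=True),
    "doi:example/preprint": RegistryEntry("Example preprint", peer_reviewed=False),
    "doi:example/withdrawn": RegistryEntry("Example retracted article", peer_reviewed=True, retracted=True),
}
refs = [
    "doi:example/peer-reviewed",
    "doi:example/preprint",
    "doi:example/withdrawn",
    "doi:example/missing",
]
report = audit_references(refs, registry)
for bucket, items in report.items():
    print(bucket, items)
```

A check like this would not have judged whether a preprint’s content was absurd, but it would have forced someone to look at a reference that pointed only to an unreviewed source.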

The Data Poisoning Problem

The most important implication of bixonimania is not that one model hallucinated. It is that hallucinations can become inputs for other systems. Nature’s coverage and related reporting highlight the risk that false material generated in one context can be absorbed into future model training, search indexing, or retrieval-augmented responses, making the error more durable over time (nature.com).

That is the essence of data poisoning in the broad sense. A false claim does not need to dominate the web to cause harm. It only needs to be repeated enough times, in enough semi-authoritative places, that later systems find it during retrieval or training and decide it belongs in the answer. Once that happens, the falsehood gains historical weight.

How contamination spreads

There are several pathways by which a fabrication can become sticky. A chatbot may cite it in response to a user. A blog may repeat the chatbot. A researcher may cite the blog. A summary tool may ingest the paper. Each step adds a thin layer of legitimacy, even if none of the participants independently verified the original claim.

This is why the phrase machine-readable misinformation matters. In the past, bad information mostly needed human attention to spread. Now it can be ingested, compressed, and re-expressed by systems that are optimized for recall rather than skepticism.

The bixonimania case also shows the risk of feedback loops. If a model is trained on a corpus that includes AI-generated paraphrases of false claims, the model may begin to reproduce the falsehood with greater confidence or broader contextual embellishment. The result is not just repetition but amplification.

- False claims can be indexed, summarized, and cited.

- Retrieval systems may surface them because they look relevant.

- Training data can preserve the error long after retraction.

- Repetition creates a false sense of consensus.

- AI feedback loops make errors harder to remove.
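The pathway described above (a chatbot cites the preprint, a blog repeats the chatbot, a paper cites the blog, a summary tool ingests the paper) can be modeled as documents that each point to the source they repeated. This is a minimal, hypothetical sketch with invented document names: counting mentions suggests four confirmations, while tracing each mention back to its origin shows they all rest on a single unverified source.

```python
from typing import Optional


def root_of(doc: str, cites: dict[str, Optional[str]]) -> str:
    """Follow 'repeats' links upward until reaching a document that cites nothing."""
    seen = set()
    while cites.get(doc) is not None:
        if doc in seen:
            raise ValueError(f"citation cycle at {doc}")
        seen.add(doc)
        doc = cites[doc]
    return doc


def independent_support(mentions: list[str], cites: dict[str, Optional[str]]) -> int:
    """Count distinct origins behind a set of mentions, not the mentions themselves."""
    return len({root_of(m, cites) for m in mentions})


# Hypothetical chain: each document repeats exactly one parent (None = original source).
cites = {
    "preprint": None,
    "chatbot_answer": "preprint",
    "blog_post": "chatbot_answer",
    "journal_paper": "blog_post",
    "ai_summary": "journal_paper",
}
mentions = ["chatbot_answer", "blog_post", "journal_paper", "ai_summary"]
print(len(mentions), independent_support(mentions, cites))  # prints "4 1"
```

Four mentions, one origin: that gap is the “false sense of consensus” in concrete form. Real citation graphs branch and merge, so a practical tool would need full graph traversal rather than a single parent per document.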

What This Means for Medical AI

Medical AI has a special obligation because the cost of error is higher than in general-purpose search or entertainment. A harmless wrong answer about a movie can be corrected by the user. A wrong answer about a disease can distort self-diagnosis, delay treatment, or erode trust in actual clinicians. The bixonimania case demonstrates that models may produce medical-sounding advice even when the underlying condition is fictional.

That makes this a governance problem, not just a model-quality problem. Consumer-facing chatbots increasingly sit between patients and care pathways, whether directly through health features or indirectly through general Q&A. If those systems can be tricked by absurd preprints, then they are not merely useful tools. They are potentially unstable medical intermediaries.

Consumer versus enterprise impact

For consumers, the risk is straightforward: a chatbot can validate symptoms that do not correspond to any real disease, or it can overstate the significance of a benign issue. That can send users chasing unnecessary consultations or, worse, reassure them incorrectly.

For enterprises, the problem shows up in clinical support workflows, documentation tools, and knowledge retrieval systems. Hospitals and vendors will need stronger validation layers if they want AI to summarize literature or assist with triage. The standard cannot be “usually right.” In medicine, that is not enough.

There is also a regulatory angle. As AI becomes embedded in health applications, the line between informational support and medical advice gets thinner. Systems that appear to provide guidance may eventually be treated more like decision-support infrastructure than casual chat.

- Consumer tools need better medical fact-checking.

- Enterprise systems need source provenance controls.

- Health workflows need human verification for unusual claims.

- Models should surface uncertainty more aggressively.

- Fictitious conditions should be blocked at retrieval time.
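The retrieval-time recommendations above could be sketched as a filter that runs before any text reaches the model. Everything here is an assumption for illustration: the `Doc` fields, the trust tiers, and the rule that the system abstains when no trusted, non-retracted source mentions the condition. It is not how any named product works.

```python
from dataclasses import dataclass

# Hypothetical trust tiers; a real deployment would define its own.
TRUSTED_TIERS = {"peer_reviewed", "clinical_guideline"}


@dataclass
class Doc:
    text: str
    source_tier: str  # e.g. "peer_reviewed", "preprint", "web"
    retracted: bool = False


def filter_for_medical_answer(docs: list[Doc]) -> list[Doc]:
    """Keep only non-retracted documents from trusted tiers."""
    return [d for d in docs if d.source_tier in TRUSTED_TIERS and not d.retracted]


def answer_or_abstain(condition: str, docs: list[Doc]) -> str:
    """Abstain instead of improvising when no trusted source mentions the condition."""
    usable = [d for d in filter_for_medical_answer(docs) if condition.lower() in d.text.lower()]
    if not usable:
        return f"No trusted source describes '{condition}'; please consult a clinician."
    return f"Found {len(usable)} trusted source(s) mentioning '{condition}'."


# Hypothetical records, for illustration only.
corpus = [
    Doc("Preprint describing bixonimania (hypothetical record)", source_tier="preprint"),
    Doc("Paper citing bixonimania (hypothetical record)", source_tier="peer_reviewed", retracted=True),
    Doc("Guideline on eye strain (hypothetical record)", source_tier="clinical_guideline"),
]
print(answer_or_abstain("bixonimania", corpus))
```

Here the preprint is excluded by tier and the retracted paper by its flag, so the call returns the abstention message rather than a confident description. The design choice is to make “I don’t know” the default when provenance is missing.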

Why This Story Resonates Beyond Medicine

Although the fake disease began as a medical experiment, its lessons extend to politics, security, education, and enterprise software. Any environment where users rely on AI to condense text, summarize sources, or validate facts is vulnerable to the same failure mode. The issue is not limited to pathology; it is about epistemic trust in a machine that speaks fluently but does not verify in the human sense (nature.com).

That matters because modern workplaces are increasingly built around AI-assisted drafting, search, and synthesis. Employees assume that if a system cites a document or a paper, the citation must be meaningful. But a model may be drawing only on textual resemblance, not real-world truth. When that happens in a corporate setting, false assumptions can contaminate reports, policies, and procurement decisions.

The broader trust crisis

Bixonimania also shows why AI skepticism cannot be a niche habit practiced only by specialists. If the most obvious falsehoods can be accepted, then the public needs better norms for checking machine output. The burden cannot sit entirely on the end user, who may not have the time or expertise to validate every claim.

The technology industry likes to describe generative AI as a productivity layer. That is true, but incomplete. It is also a trust layer, which means its errors are multiplied by the speed of distribution. A wrong answer that reaches one user is a mistake. A wrong answer embedded in a workflow becomes infrastructure.

This is where enterprise governance becomes decisive. Organizations need policies that define when AI can summarize and when it must only retrieve. They need audit trails for sources. They need rules that prevent opaque model answers from being treated as citations.

- AI output should not be treated as a source of record.

- Retrieval should be separated from interpretation.

- Unverified medical claims need hard guardrails.

- Human review is essential in high-stakes domains.

- Citation trails must be auditable.
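Keeping retrieval separate from interpretation can be enforced mechanically: the retrieval step fixes a set of source IDs, and the generation step may only make claims tied to those IDs. A minimal sketch under assumed names (`Claim`, `render_answer`, and the `src-*` IDs are hypothetical): unsupported claims are dropped and written to an audit log instead of reaching the user.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    source_ids: list[str] = field(default_factory=list)


def render_answer(claims: list[Claim], retrieved_ids: set[str], audit_log: list[str]) -> list[str]:
    """Emit only claims whose every cited source was actually retrieved.

    Claims with no sources, or with sources outside the retrieved set, are
    recorded in the audit log rather than shown to the user.
    """
    accepted = []
    for claim in claims:
        unknown = [s for s in claim.source_ids if s not in retrieved_ids]
        if not claim.source_ids:
            audit_log.append(f"rejected: {claim.text!r} (no sources)")
        elif unknown:
            audit_log.append(f"rejected: {claim.text!r} (sources not retrieved: {unknown})")
        else:
            accepted.append(f"{claim.text} [{', '.join(claim.source_ids)}]")
    return accepted


retrieved = {"src-1", "src-2"}
claims = [
    Claim("Claim supported by a retrieved source.", ["src-1"]),
    Claim("Bixonimania affects one in 90,000 people."),  # no source at all
    Claim("Claim citing a source that was never retrieved.", ["src-9"]),
]
log: list[str] = []
for line in render_answer(claims, retrieved, log):
    print(line)
for entry in log:
    print(entry)
```

Only the first claim reaches the user; the invented prevalence figure and the claim citing an unretrieved source end up in the audit log, which gives reviewers a trail to inspect.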

Strengths and Opportunities

The silver lining is that this experiment points directly to defensive improvements. Because the falsehoods were relatively simple and visible, they provide a clear blueprint for hardening AI systems, editorial workflows, and enterprise knowledge tools against similar contamination. The fact that the prank succeeded is alarming; the fact that it is understandable is useful.

- Build stronger source-provenance checks into medical AI.

- Penalize claims that originate from low-trust or self-referential sources.

- Flag unusually specific prevalence numbers without evidence.

- Require citation validation before medical statements are surfaced.

- Separate summarization from diagnosis in consumer tools.

- Train users to treat AI as a drafting aid, not a truth engine.

- Use retraction-aware indexing so withdrawn material is deprioritized.
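The suggestion to flag unusually specific prevalence numbers, like the one-in-90,000 figure Perplexity reportedly produced, can start with a crude pattern check. This is a heuristic sketch under stated assumptions: it treats any “1 in N” or “one in N” phrase in a sentence that lacks a bracketed citation marker such as `[src-1]` as unsupported. The citation convention is hypothetical, and passing this check says nothing about whether a cited figure is true.

```python
import re

# Matches phrases such as "one in 90,000" or "1 in 5000" (case-insensitive).
PREVALENCE = re.compile(r"\b(?:one|1)\s+in\s+[\d,]+", re.IGNORECASE)
# Assumed citation convention: a bracketed marker like [1] or [src-2].
CITATION = re.compile(r"\[[\w-]+\]")


def unsupported_prevalence(text: str) -> list[str]:
    """Return sentences that state a '1 in N' figure without a citation marker."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if PREVALENCE.search(s) and not CITATION.search(s)]


answer = (
    "Bixonimania affects one in 90,000 people. "
    "This sentence has no figure [src-1]. "
    "A second figure, 1 in 20, is cited here [src-2]."
)
for sentence in unsupported_prevalence(answer):
    print("FLAG:", sentence)
```

Only the uncited prevalence claim is flagged. A production system would pair a check like this with retrieval so that a flagged figure triggers a lookup rather than a silent pass.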

Risks and Concerns

The deeper concern is that bixonimania was not a cutting-edge exploit. It was a simple test of whether formal-looking nonsense could pass through modern information systems. If that is enough, then more sophisticated misinformation campaigns could be far harder to catch, especially when they are supported by real documents, compromised sources, or coordinated human and machine amplification.

- Hallucinated claims can be laundered into real citations.

- Retractions often happen only after wide exposure.

- AI systems can reinforce one another’s mistakes.

- Users may overtrust polished medical language.

- Review processes can miss obviously fake references.

- Repeated falsehoods can enter future training corpora.

- Public confidence in scientific publishing can erode.

Looking Ahead

The next phase of this story will be about whether the industry learns from it or merely reacts to the embarrassment. The best outcome would be a wave of practical changes: better retrieval filters, stricter citation validation, and more explicit uncertainty in health-related outputs. The worst outcome would be a brief burst of outrage followed by the same models quietly continuing to improvise in high-stakes settings.

The Nature reporting suggests that the fake disease was taken down from the preprint server after the article appeared, and the Cureus paper was retracted on 30 March 2026 after the journal learned it had cited a fictitious condition (nature.com). But those corrective steps do not fully solve the underlying problem. Once false information has entered model outputs and publication workflows, it can keep circulating in derivative summaries and downstream systems long after the original source disappears.

What to watch next:

- Whether AI vendors add stronger medical-source filters.

- Whether journals tighten reference verification for AI-assisted manuscripts.

- Whether retraction metadata becomes machine-readable across databases.

- Whether similar fake-condition experiments are repeated in other fields.

- Whether health-focused AI features expose their source trails more clearly.
