AI, authorship, and the uneasy comedy of asking a machine to sound human

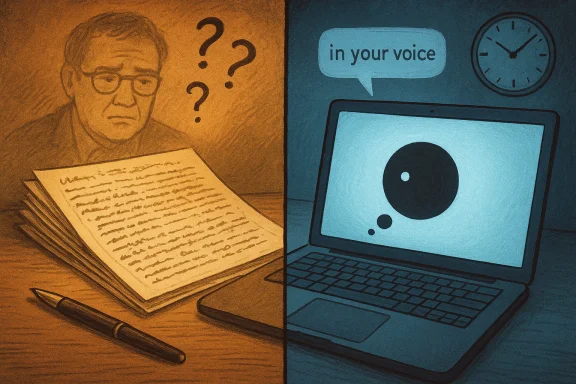

A recent Irish Mirror column about asking ChatGPT to write in the voice of Billy Scanlan lands as a joke first, but it works because it is also a small, revealing media experiment. The piece is funny about its own vanity, but underneath the humour it is really about authorship, style, and the odd emotional theatre of waiting for a machine to think. What begins as a throwaway stunt becomes a surprisingly useful case study in how generative AI behaves when asked to imitate a recognisable human columnist, and why the result feels at once impressive, unsettling, and faintly banal. The article’s own self-awareness is the point: it shows that the biggest change AI brings to writing is not just speed, but the new way it makes us notice our own voice.

Background

The Irish Mirror piece sits in a wider moment when newsrooms, bloggers, and independent writers are testing what AI can actually do with language. The experiment is simple enough: prompt a model to produce an 18-sentence column in a specific journalistic style, then compare the output with the human writer’s own habits and instincts. That kind of test matters because it is not asking AI to do hard analysis or original reporting; it is asking it to perform tone, which is exactly where many people assume humans still have the upper hand. Yet the result suggests that style imitation is already good enough to be convincing in short bursts, especially when the source material is formulaic or strongly patterned.

There is a reason this kind of experiment keeps resurfacing. Generative AI has become a routine part of the writing conversation, and not just in technology circles. University guidance, newsroom case studies, and public-facing articles increasingly frame the issue in terms of productive use versus replacement use. The University of Waterloo, for example, distinguishes between using GenAI to shortcut the work and using it to refine human thought, arguing that the tool can help learning only when the person doing the work still owns the reasoning. That principle applies just as much to journalism as it does to education: a machine can draft, but it cannot be responsible for the idea.

What makes the Irish Mirror column interesting is that it is not a polished corporate demonstration or an abstract policy debate. It is messy, comic, and personal, which is exactly why it feels honest. The writer describes staring at the “throbbing” dot, wondering whether the internet knows who he is, and then finally prompting the system to do the thing more explicitly. That narrative frame matters because it shifts the piece from “look what AI can write” to “look what it feels like to ask AI to impersonate me.” In other words, the subject is not merely output; it is self-consciousness.

The broader media environment helps explain why this kind of content resonates. Across the forum’s recent AI coverage, the recurring themes are governance, transparency, and the limits of machine-generated language. Posts about Copilot in news contexts, AI in classrooms, and model behavior in public communication all return to the same basic tension: AI is useful when it assists human judgment, but risky when it substitutes for it. The Irish Mirror experiment occupies a lighter register, but it lands in the same conceptual territory. It is journalism about journalism, with a machine sitting awkwardly in the middle.

What the experiment actually demonstrates

The most obvious lesson is that AI can mimic a columnist’s cadence well enough to pass a first glance. The story says the generated text appeared to be about arguing with a parking meter, which the writer treats as recognisably his own territory. That is important because it shows how stylistic fingerprints work: not through grand literary flourishes, but through repeated angles, habitual complaints, and the tiny rhythms of everyday observation. A model trained on vast text can reproduce those patterns quickly, even if it cannot genuinely inhabit the life behind them.

But the more interesting lesson is that the model’s performance is not just a function of language quality. It is also a function of expectation. The writer already suspects that ChatGPT may be offering “rehashes” of previous columns or borrowing from a recognizable archive of his own voice, and that suspicion creates a strange feedback loop. We read the machine differently when we are told to imagine it as an imitator rather than a creator. That is why the same output can feel either uncanny or generic depending on how closely we think it touches a living writer’s habits.

Style is easier to copy than judgment

This is the crucial distinction. AI is getting very good at the surface features of writing: sentence length, rhetorical setup, comic pacing, and conversational transitions. What it still struggles to replicate reliably is judgment, which includes timing, restraint, and the social knowledge that tells a columnist when to stop. The Irish Mirror piece is funny partly because it treats the machine like a slacker colleague who may have done the job adequately but not meaningfully. That is a real fear in media work, because adequacy can be more disruptive than obvious failure.

The experiment also makes a useful point about authorial identity. A columnist’s voice is not just a string of stylistic tics; it is the product of timing, taste, memory, and the accumulated relationship with readers. A model can reproduce the accent, but not the biography. That is why readers may laugh at the generated paragraph while still sensing a gap between imitation and authorship. The machine can sound like the writer, but it cannot be the writer.

- The AI appears to capture surface style more easily than deeper intent.

- The human writer’s biographical context remains outside the model.

- The result is convincing enough to be amusing, but not enough to erase authorship.

- Timing and restraint still matter more than generic fluency.

- Readers can feel the difference between imitation and lived judgment.

Why the waiting is part of the story

One of the smartest things about the column is that it turns the delay into a character of its own. The writer watches the black dot “throb” for nine minutes, then begins to feel embarrassed for assuming the system would know who he was. That waiting period is not filler; it is the emotional architecture of the piece. It captures what it feels like to sit in front of a model and hope it will do something both personal and useful, only to discover that the machine’s silence is just another kind of performance.

The dot is also a reminder that AI interactions are not neutral. They are staged through interfaces that encourage projection, anticipation, and a little bit of anthropomorphism. When the writer describes the dot as “thinking,” he is joking, but he is also describing the user experience exactly as designed: the machine seems almost alive, just opaque enough to invite imagination. That is part of why AI is so sticky in everyday culture. It gives people not only answers, but a feeling of being in conversation with something that is almost, but not quite, a mind.

The interface is part of the editorial experience

Newsrooms and independent writers are beginning to understand that AI adoption is not only a question of output quality. It is also about workflow psychology, because the model’s delay, confidence, and sometimes bizarre phrasing affect how writers think and work. The Irish Mirror column captures that perfectly by making the waiting itself funny. The joke is that the writer is supposed to be saving time, yet the process has become a little ritual of doubt and self-correction.

This matters because AI often arrives in editorial settings with the promise of efficiency. But if the tool creates uncertainty about whether text is truly yours, or whether it has merely been mechanically assembled from old patterns, then the time saved comes with costs that are psychological, reputational, and sometimes ethical. In that sense, the “throbbing dot” is a vivid metaphor for the modern writing desk: something between a typewriter, a search engine, and a colleague who may or may not be reliable.

- Waiting changes how the writer frames the task.

- The interface invites projection onto the machine.

- Delay creates a sense of suspended authorship.

- Efficiency gains can be offset by emotional friction.

- The user experience itself becomes part of the narrative.

What this means for local journalism

For local and regional journalism, the Irish Mirror stunt is more than a joke about a columnist feeding prompts into a chatbot. It is a reminder that AI can already manufacture copy in the textures that make local writing feel familiar: grievance, gossip, weather, parking, bureaucracy, and everyday absurdity. That is precisely the territory where local outlets have traditionally relied on a human voice to create intimacy with readers. If a model can approximate that voice on demand, then the bar for distinctive human writing rises immediately.

Local journalism has always depended on proximity, not merely information. Readers return because they trust a columnist’s angle, not because the sentences are grammatically tidy. AI can produce tidy sentences all day long, but that does not automatically translate into civic value. The deeper question is whether local journalism becomes more valuable when it leans harder into reporting, verification, and personality, or whether it becomes more vulnerable because commodity commentary can now be automated cheaply.

The human edge is narrowing, but not disappearing

This does not mean AI is about to replace every columnist. Human writers still control the awkwardly important things: lived detail, local specificity, and the social instinct for what will actually land with readers. But the gap between possible and publishable is shrinking. A model does not need to write a masterpiece to create pressure; it only needs to produce something good enough that an editor, manager, or freelancer wonders whether the human draft is worth the extra time.

That pressure is already visible in adjacent media experiments. News organizations have used AI to draft sports predictions, rewrite summaries, and generate promotional copy, often with a mix of amusement and caution. The common thread is that AI is strongest in repeatable formats with predictable structures. Columns, especially light columns, are sometimes more structured than they look. That makes them a tempting target for automation and a useful test case for where editorial identity still matters.

- Local voice is easier to imitate than to replace entirely.

- Cheap commentary may become a commodity.

- Verification and lived detail become more valuable, not less.

- Editors may demand more specificity from human writers.

- AI pressures writers toward stronger personal originality.

The ethics of style imitation

Style imitation is not automatically unethical, but it becomes sensitive fast when the style belongs to a living journalist. The Irish Mirror piece is transparent about the prompt, which is important, because transparency changes the moral texture of the experiment. Readers know this is a stunt, not a secret substitution. That disclosure keeps the article in the realm of commentary rather than deception, and it preserves the writer’s right to laugh at his own possible replaceability.

Still, the experiment raises a serious question: when does “in the style of” become a form of appropriation? If a machine is trained on public writing and asked to imitate a named columnist, the result may be legally and ethically murky even when it is technically easy. The issue is not just copyright, but whether the machine is exploiting the recognizability of a human craft without consent or compensation. That concern is becoming more salient as AI systems are used to mimic voices in journalism, marketing, and entertainment.

Transparency is the line that matters

The safest editorial principle is simple: if AI is involved, say so. That principle is echoed in education guidance that insists students should not submit AI-generated work as their own, and it maps neatly onto journalism. A newsroom can experiment with AI, but it should not hide AI’s role behind the illusion of effortless authorship. Once readers feel tricked, the damage is greater than any time saved.

There is also a subtler point about consent and context. A writer’s style is not a free-floating asset detached from identity. It is tied to reputation, audience relationships, and the labour that went into building a recognisable voice. The closer AI gets to copying that voice, the more important it becomes to ask who benefits, who decides, and who is accountable if the result is misleading or lazy.

- Transparency is the minimum ethical requirement.

- Style imitation is not the same as respectful quotation.

- Public writing is accessible, but not morally ownerless.

- Readers deserve to know when a machine has been asked to mimic a person.

- Consent matters more as synthetic voice gets more convincing.

Why the joke works

The column works because it does not pretend AI has solved anything profound. It turns the whole exercise into a scene of mild humiliation, especially the bit where the writer worries that asking the internet to know him might have been presumptuous. That self-deprecation is what keeps the piece from sounding like either a tech demo or a moral panic. It is a column about the social awkwardness of trying something new and possibly foolish, which is one of the oldest and best forms of newspaper writing.

There is also a classic comic structure here: setup, delay, frustration, anticlimax, and then a small victory that may or may not actually be a victory. The generated text appears, the writer recognises his own preoccupations, and then he concludes that perhaps he has done the work himself after all. That conclusion is sly because it leaves open two interpretations at once. Either the model successfully copied him, or the model merely reminded him of the way he already writes. Both readings are flattering and faintly alarming.

Comedy as a diagnostic tool

Humour is surprisingly useful for understanding AI because it exposes where the machine is predictable and where it is not. When a column about AI can end up sounding like a column about parking meters, readers immediately understand the limits of novelty. The model can generate fluent material, but it still struggles to produce the exact texture of an individual’s comic worldview. That’s why the story is memorable: it lets readers feel the difference between generic wit and earned wit.

The joke also does a good editorial job of lowering the temperature around a contentious issue. Instead of declaring that AI is destroying writing, the piece lets readers watch a writer negotiate with a tool in real time. That makes the point more persuasive. People are more willing to confront unsettling technology when it arrives through self-mockery than through sermonizing. In that sense, the article is not just funny; it is strategically funny.

- The humour depends on self-awareness, not cynicism.

- The ending leaves multiple interpretations open.

- Comedy makes the AI question feel human rather than abstract.

- The joke reduces panic without removing the stakes.

- The piece succeeds because it never forgets the writer is the punchline too.

The broader media lesson

What this story ultimately reveals is that AI does not erase editorial identity; it forces it to become more visible. If a machine can draft a passable imitation of a columnist in minutes, then what separates the human writer is no longer just style. It is judgment, specificity, accountability, and the willingness to stand behind a sentence when it matters. In a world full of synthetic prose, those human qualities become editorial assets.

That lesson has consequences for editors as well as writers. Editors may need to ask not only whether a piece is polished, but whether it carries a real point of view that was lived rather than assembled. They may also need to value process more explicitly, because the source of the prose may matter as much as the prose itself. A clean sentence generated by a machine is not automatically interchangeable with a sentence earned through observation and revision.

Strengths and Opportunities

The strongest thing about the Irish Mirror experiment is that it makes a large, abstract debate feel small, personal, and readable. Instead of treating AI as an all-purpose threat or miracle, it shows exactly where the friction begins: in the writer’s doubt, the model’s latency, and the odd recognition that output can be both familiar and alien. That gives editors and readers a concrete way to think about the tool without collapsing into slogans.

- It demonstrates how style imitation works in practice.

- It makes AI’s latency emotionally legible.

- It highlights the importance of transparency.

- It shows that humour can carry serious editorial analysis.

- It encourages writers to define what makes their voice distinct.

- It opens a conversation about authorship without sounding preachy.

- It offers a model for low-stakes public experimentation.

Risks and Concerns

The same experiment also shows why AI-assisted writing needs guardrails. A machine can imitate a voice convincingly enough to blur the line between homage, parody, and substitution. If a newsroom grows comfortable with that blurring, it risks cheapening both the individual writer’s craft and the reader’s trust. The danger is not just bad text; it is misleadingly adequate text that quietly displaces the human work behind it.

- AI can blur the line between inspiration and impersonation.

- Readers may not always know when a machine has been used.

- Style imitation can cheapen the value of a columnist’s voice.

- Routine AI use may normalize shallow editorial judgment.

- Fast drafting can conceal weak reporting or weak thinking.

- Overreliance can make human writing feel interchangeable.

- Trust erodes quickly when disclosure is unclear or inconsistent.

What to Watch Next

The next phase of this story will not be whether AI can imitate more writers. That question is already answered in the affirmative, at least for many mainstream styles and formats. The real issue is what newsrooms and writers choose to preserve as distinctly human once imitation becomes cheap and easy. That is where the editorial and commercial stakes begin to separate.

We should expect more experiments like this, but also more backlash against them. Some outlets will use AI to accelerate drafting or ideation, while others will lean harder into transparency and human authenticity as differentiators. The market will likely reward outlets that are clear about process, because clarity is now part of the product. A reader who knows how a piece was made is better able to decide whether to trust it.

Likely developments

- More public AI-writing experiments from newspapers and magazines.

- Stronger disclosure norms around machine-assisted content.

- Greater emphasis on personal voice, sourcing, and original reporting.

- More scrutiny of “in the style of” prompts and voice imitation.

- Faster editor detection of generic AI prose.

- Increased reader sensitivity to formulaic content.

- Better internal policies on when AI can and cannot be used.

The Irish Mirror column ends as a comic shrug, but its real significance is more serious: it shows that when a machine learns to sound like us, we are forced to define what it means to be worth reading. That is not just a technology story. It is a journalism story, a labour story, and, in the end, a story about whether readers still want the friction, personality, and responsibility that only a human writer can bring.

Source: Irish Mirror 'I asked AI to write this column and the results, as they say, surprised me'
