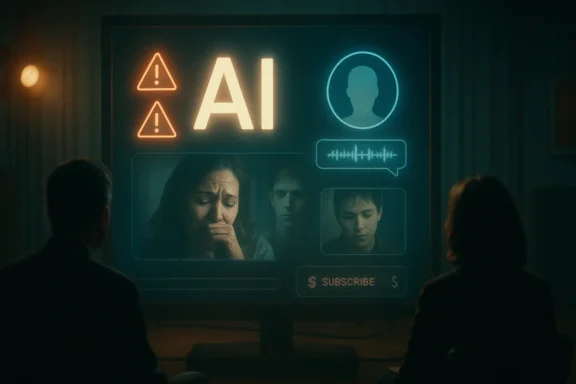

Network television has quietly started to do what Silicon Valley often refuses to: treat artificial intelligence as a dramatic problem, not just a dazzling product. Across recent episodes of network procedurals and legal dramas, AI is being framed as emotionally manipulative, commercially exploitable, and sometimes dangerously easy to weaponize. That trend matters because it reflects a broader cultural shift: viewers are no longer being asked only to marvel at AI, but to question who benefits when it enters the story at all.

Overview

Artificial intelligence has moved from speculative fiction into everyday life so quickly that television has had to catch up in real time. What used to be the domain of sci-fi anthologies or futurist thrillers is now showing up in primetime legal dramas, crime procedurals, and character-driven network series. The result is a fascinating split: some shows are interrogating AI as a moral hazard, while others seem to be using it as a shiny brand mention that interrupts the narrative flow.

That tension is what makes the current wave of AI storylines feel so revealing. Network TV has always been sensitive to the cultural moment, but AI is different because it touches grief, trust, labor, identity, and truth all at once. A good plot about AI can expose the vulnerability of human relationships, while a careless one can feel like a commercial dressed up as commentary. The difference is not subtle to viewers who are now seeing AI woven into nearly every corner of media and marketing.

Recent episodes of shows like Matlock, The Hunting Party, and High Potential show three distinct approaches to the subject. One uses AI to explore loss and manipulation. Another turns it into a tool of deception and social predation. A third makes it feel like a product-placement interruption, which may be the most modern warning of all: even criticism of AI can be absorbed into advertising if the script is not careful.

The bigger story is that television is no longer asking whether AI exists. It is asking what happens when people use it to imitate loved ones, reshape public perception, or streamline work in ways that flatten human judgment. That is a powerful shift in tone, and it suggests that network TV understands something many tech companies still do not: the public is increasingly interested in the consequences, not the novelty.

Why AI Works So Well on Network TV

AI is tailor-made for episodic television because it creates an immediate moral argument. It gives writers a device that can be dramatic on its own, but also flexible enough to fit whatever genre they are writing. In a legal drama, AI becomes evidence, leverage, or liability. In a procedural, it can be a weapon, a clue, or a false witness. In a family drama, it becomes something much more intimate: a fake version of a person that still knows how to hurt you.

That adaptability is why network TV has embraced AI stories without needing to become science fiction. These are not futuristic machine rebellions. They are grounded plots about ordinary people confronting tools that can simulate affection, accelerate fraud, or exploit grief. The best versions of these stories are unsettling precisely because they feel plausible right now.

AI as a Human Drama Device

The most effective AI episodes are not really about code. They are about longing, uncertainty, and the desire for control in situations where control is impossible. That is why a story involving a simulated deceased relative lands harder than a story about a chatbot doing office tasks.

These plots let writers ask uncomfortable questions:

- Can a digital imitation ever count as comfort?

- What happens when grief becomes a subscription product?

- Who owns the memory of a loved one?

- How much truth can a machine actually generate?

- What happens when a person manipulates the system for self-interest?

One reason AI fits so well in TV storytelling is that it mirrors the structure of a mystery. A machine can appear authoritative while still being wrong, incomplete, or compromised. That built-in uncertainty gives writers a clean way to dramatize the gap between appearance and reality.

Matlock and the Grief Economy

CBS’s Matlock has been the most emotionally sophisticated of the shows using AI as a story engine. In the episode centered on a therapist and an AI afterlife platform, the show frames the technology as something that begins with therapeutic intent but quickly raises ethical alarm bells. The premise is simple enough to understand: a system can generate a version of a deceased loved one, ostensibly to help people process loss.

That idea is inherently seductive and inherently troubling. A tool that promises comfort can easily become dependency, especially if it offers the illusion of continuation instead of the reality of healing. The show smartly understands that the danger is not only in the algorithm itself, but in the way the platform is monetized and controlled.

The Emotional Trap

What makes this storyline effective is not just that AI is involved, but that the emotional pitch is believable. The prospect of hearing a dead loved one speak again is the kind of promise that can override rational skepticism. In grief, people do not always choose what is healthy; they choose what feels survivable.

The episode’s deeper point is that an AI reconstruction can never truly answer the hardest questions. It may mimic tone, syntax, and patterns of memory, but it cannot recreate accountability. It cannot genuinely know whether it hurt you, loved you, resented you, or forgave you. That limitation is devastating because it means the conversation may feel intimate while still being fundamentally false.

A few key ideas stand out:

- AI can simulate familiarity without producing truth.

- Grief makes people more vulnerable to technological persuasion.

- Monetizing emotional dependence is a deeply corrosive business model.

- A machine can replay memory, but it cannot verify feeling.

- The illusion of closure may delay real healing.

Why the Subscription Model Matters

The most unnerving detail is the one that turns the whole thing into a consumer product. Once the dead loved one starts interrupting itself to sell upgrades or push users toward an ad-free version, the premise stops being sentimental and becomes grotesque. That is an especially sharp satire because it reflects how modern platforms routinely turn intimacy into revenue.

This is where Matlock goes beyond generic “AI is scary” storytelling. It connects technology to the economics of attention and grief. The platform is not just imitating the dead; it is trying to extract ongoing value from the bereaved. That is not a bug in the narrative. It is the narrative.

Human Presence Still Wins

The episode also emphasizes that real connection cannot be outsourced. The show uses a character interaction to remind viewers that empathy is relational, not computational. One person reaches another in a way that the AI cannot, and that contrast is crucial.

That does not mean the AI conversation is useless. It means its usefulness is tragically limited. It can soothe a wound, but it cannot close it. It can produce a voice, but not a soul. That distinction is the core emotional argument of the episode.

Addictive Comfort and False Permission

The follow-up chapter pushes the AI thread into even more uncomfortable territory by linking it to addiction. That is a smart escalation because it reframes the technology from a one-time temptation into a repeated behavioral pattern. Once a person gets a safe-feeling version of the relationship they miss, they may start using it compulsively.

In this case, the danger is not physical intoxication but emotional relapse. The user returns again and again because the AI version of the loved one is predictable, accessible, and easier to face than the messy reality that made the relationship painful. That dynamic makes the story feel less like science fiction and more like a modern form of avoidance.

Why Addiction Is the Right Lens

Addiction is a useful framework because it captures both pleasure and harm. The user knows the behavior is not healthy, but the behavior keeps offering relief. That is exactly what makes the AI relationship so dangerous in dramatic terms.

The technology provides three seductive advantages:

- It removes conflict.

- It grants access on demand.

- It preserves the comforting version of the person.

One of the sharpest observations in the storyline is that the user cannot bring herself to ask the one question that matters most. That omission is more powerful than any answer the AI might have provided. It shows that the real obstacle is not information; it is vulnerability.

The Limits of Simulated Truth

A machine can generate language that resembles honesty, but it cannot offer moral certainty. Even if the system is trained on messages, photos, and videos, it still cannot recreate what a dead person would have chosen to reveal or conceal in a live, accountable conversation. The result is an imitation with boundaries that may be invisible to the user until it is too late.

This is where the episode becomes more than a grief story. It becomes a story about how people outsource uncertainty when they cannot tolerate ambiguity. AI can appear to close the gap, but in doing so it can trap users inside a loop of partial truth.

Social Media, Catfishing, and AI as a Weapon

NBC’s The Hunting Party takes a darker, more overtly criminal approach. Rather than using AI as a therapeutic tool or emotional substitute, the show uses it as a deception engine. The villain’s logic is clear enough: social media already rewards performance, so AI simply makes the performance more convincing.

That is a chilling escalation of the catfishing concept. Traditional catfishing depends on stolen images, false bios, and manipulation through text. AI takes that entire model and adds motion, voice, and the illusion of live interaction. The result is not just fraud; it is a personalized mask that can adapt in real time.

The Avatar Problem

The most disturbing part of this plot is that the avatar is not static. It can present as responsive, socially credible, and emotionally tailored. That makes the deception harder to detect because the victim is not merely fooled by a profile; they are manipulated by a performance that feels alive.

This is where AI’s power becomes inseparable from its misuse. The same technology that can synthesize helpful interactions can also synthesize trust. If the victim believes they are dealing with a human face, the psychological barrier to manipulation drops dramatically.

The episode suggests several broader truths:

- AI can scale deception faster than ordinary fraud.

- Video makes lies feel more credible than text alone.

- Social media already trains users to accept curated identity.

- Criminals can weaponize trust by mimicking authenticity.

- The more polished the performance, the less obvious the lie.

The Fear of Manufactured Authenticity

The thematic target here is not only the villain but the culture that makes such a villain effective. Social media rewards image management, and AI can intensify that problem by erasing the remaining friction between self-presentation and fabrication. Once the avatar looks and sounds real, the line between curation and impersonation becomes much harder to police.

That matters because viewers already understand that online identities are often performative. The episode is saying that AI does not create the deception problem from scratch; it industrializes it. That is a more sophisticated warning than the usual “robots are dangerous” approach.

Product Placement and the Risk of Hollow AI Storytelling

ABC’s High Potential offers a very different kind of AI moment, and not in a flattering way. In a scene that reportedly mentions Microsoft Copilot directly, the episode appears to stop the narrative so a character can explain what the AI tool is doing. Instead of sharpening the story, the moment makes the audience feel as though the show has paused for a branded demonstration.

That is a problem because it reveals the narrow line between cultural commentary and commercial intrusion. If a show wants to critique AI, it cannot simultaneously sound like it is advertising AI productivity tools. Viewers are increasingly savvy about that distinction, and they can feel when a scene is written around a sponsor rather than around character logic.

When Commentary Becomes a Commercial

The issue here is not just that AI appears in the episode. It is that the mention feels too direct, too explicit, and too disconnected from the emotional or procedural stakes of the story. A good scripted use of technology should reveal character, advance plot, or deepen theme. A product shout-out does none of those things unless it is carefully integrated.

This is why the scene landed poorly for some viewers. It did not feel like an organic part of the drama; it felt like a detour. That kind of insertion risks undermining the credibility of the show’s broader perspective on technology.

The larger warning is subtle but important:

- AI criticism can be diluted by brand alignment.

- Viewers can detect when dialogue serves marketing.

- Product placement can weaken thematic trust.

- A procedural’s momentum is fragile in finale episodes.

- Technology references need narrative justification.

Why This Matters Beyond One Scene

This matters because network television relies on tonal consistency. A forced reference can make the audience suspect the entire storyline. Once that suspicion sets in, even legitimate commentary begins to look compromised. That is a dangerous outcome for any show trying to say something serious about digital life.

It also highlights a broader challenge for writers: if AI is now everywhere, how do you mention it without making the script feel like an ad? That is not just a creative problem. It is a cultural one.

AI, Legitimacy, and the Question of Consent

One of the most interesting patterns across these episodes is how often AI is framed as something that operates without fully informed consent. The grieving user may not understand the terms of the platform. The social media target may not realize they are interacting with a synthetic persona. The viewer may not even know when a line is there to sell a tool instead of tell a story.

That makes consent a central theme, even when it is not named outright. Who agreed to be simulated? Who agreed to be manipulated? Who agreed to have their emotional life used as content or commerce? These questions give the TV stories their edge.

The Ethics Problem in Plain Sight

AI systems tend to sound neutral, but the ethics are almost never neutral. If a platform can be reprogrammed to flatter a user, it can also be rigged to mislead them. If a system can emulate a deceased person, it can also misrepresent that person’s values or feelings. If a tool can speed up work, it can also pressure people into accepting outputs they have not properly reviewed.

This is why the most effective AI stories on TV are not about sentient machines. They are about power asymmetry. The person controlling the system often has more agency than the person relying on it, and the story becomes a study in vulnerability.

Legitimacy as a Story Question

AI legitimacy is not only about whether the technology works. It is also about whether the surrounding conditions are trustworthy. Is the data clean? Is the platform transparent? Is the user being honest with themselves about what they want from it? Is the system being used for healing, convenience, profit, or control?

That layered uncertainty is part of what makes these plots feel current. Viewers are no longer just asking “Can AI do this?” They are asking “Who benefits if it does?”

Network TV vs the Silicon Valley Narrative

What is striking about these storylines is how often they reject the idealized language of tech marketing. Silicon Valley tends to frame AI in terms of efficiency, augmentation, scale, and innovation. Network television, by contrast, is increasingly framing it in terms of grief, manipulation, deception, and emotional debt. Those are very different vocabularies.

That difference matters because mainstream drama is now functioning as a corrective to the hype cycle. TV may not be the place where policy gets written, but it is where public sentiment gets shaped. When audiences repeatedly see AI attached to trauma or fraud, they start to absorb a more skeptical worldview.

Why the Tone Is Changing

The tone is changing because the cultural mood is changing. AI no longer feels hypothetical. People have seen how easily synthetic media can confuse, how chat systems can sound authoritative without being accurate, and how businesses can deploy automation in ways that reduce friction for companies while increasing risk for users. Television reflects that unease.

It also reflects audience fatigue. Many viewers are tired of being told that every new digital feature is transformative, efficient, and inevitable. They want stories that ask whether the thing in question is actually good. That skepticism gives network TV a useful creative edge.

A few of the most important shifts are:

- AI is being treated as a social problem, not just a technical one.

- Emotional realism is replacing futurist spectacle.

- Audiences are more alert to manipulation than they used to be.

- Shows are increasingly aware of brand contamination.

- The best AI plots focus on consequences, not capabilities.

The Audience Already Knows the Risk

Television writers now have to assume viewers understand the basics of AI hype. They know the tool can summarize, generate, and imitate. What they need from fiction is interpretation. They want to know what happens when those abilities are aimed at loneliness, vanity, or profit. That is where drama still has an advantage over product demos.

What These Episodes Say About Grief and Identity

The most devastating thing AI does in these stories is not replace people. It reopens questions people thought were closed. It turns memory into an interactive object. It turns identity into a generated surface. And it turns unfinished grief into a recurring transaction.

These are not small thematic shifts. They change the emotional architecture of the story. Once a show allows a character to speak to a synthetic version of the dead, the audience has to confront the possibility that comfort and falsity may look identical at first glance.

Grief Is Not Data

The central mistake of many AI evangelists is assuming that enough data can reproduce human essence. These episodes reject that idea. A person’s message history, voice clips, and photos can recreate patterns, but not the full moral weight of living, choosing, and changing. That is why the AI version of a loved one can sound right and still be wrong.

In dramatic terms, that distinction is everything. It makes the AI both seductive and incomplete. The audience can understand why a character would return to it while also understanding why it can never truly satisfy the need that created the obsession.

Identity Becomes a Performance

In the social-media storyline, identity is already a performance before AI enters the picture. AI merely makes the performance more automated and harder to detect. That means the technology does not create the instability of identity; it exposes it.

This is one of the showiest but most insightful truths in the current wave of network TV: AI does not have to be futuristic to be dangerous. It only has to exploit existing human habits.

Strengths and Opportunities

The current crop of AI storylines shows that network television still has a real advantage when it comes to making abstract tech debates emotionally legible. When the writing is disciplined, these episodes can turn a broad cultural anxiety into character-driven drama that feels immediate and resonant.

- Emotional clarity: the best stories tie AI to grief, loneliness, and trust.

- Broad accessibility: viewers do not need technical expertise to understand the stakes.

- Timely relevance: the subject connects naturally to current debates about synthetic media and automation.

- Genre flexibility: AI can work in legal, procedural, family, and thriller formats.

- Moral complexity: it creates room for nuance rather than simple good-versus-evil storytelling.

- Strong character pressure: the technology exposes weaknesses that were already present.

- Commercial visibility: when used carefully, AI storylines can attract attention without feeling gimmicky.

Risks and Concerns

The danger is that AI will become so fashionable as a topic that writers will use it as a shortcut rather than a meaningful narrative engine. Once that happens, the subject starts to feel less like commentary and more like trend-chasing, which is exactly what some viewers are already skeptical about.

- Overexposure: too many AI references can dull their impact.

- Forced brand integration: product placement can undermine credibility.

- Shallow treatment: a superficial “AI is bad” message can feel preachy.

- Narrative distraction: technology references can interrupt dramatic momentum.

- Ethical simplification: not every AI issue fits into a neat moral binary.

- Viewer fatigue: audiences may tire of seeing AI used as a catch-all warning sign.

- Authenticity loss: the more commercial the insertion, the less persuasive the critique.

Looking Ahead

If network TV continues on this path, the smartest AI episodes will likely be the ones that stay close to human consequence rather than technological spectacle. The strongest writing will ask what people lose when they trust the machine too much, or when they let a corporate platform mediate their most private emotions. That approach gives writers room to explore modern anxieties without reducing them to slogans.

The next challenge will be consistency. If shows want to critique AI, they have to avoid the trap of treating it like a decorative signifier or a marketing hook. Viewers are too aware of the tension between storytelling and sponsorship to ignore it, and the episodes that forget this will probably age the worst.

A few developments are worth watching:

- Whether more dramas build AI into core character arcs instead of one-off gimmicks.

- Whether writers keep focusing on grief, deception, and consent.

- Whether product placement becomes more obvious in AI-themed scenes.

- Whether audiences reward the shows that handle the topic with restraint.

- Whether future episodes address legal and ethical accountability more directly.

Source: Hidden Remote, “Network TV challenges the dangers of AI”