The AI security gap is no longer a theoretical footnote. It is a definable risk vector that sits between the workflows enterprises want to automate and the controls security teams need to enforce, and closing it is the central challenge Mark Polino addressed on the AI Agent & Copilot Podcast. Cutting through marketing hype, Polino maps out where visibility and governance fail in real-world Copilot and agent deployments, why traditional perimeter controls no longer suffice, and what pragmatic technical steps organizations must take today to prevent autonomous assistants from becoming an accidental insider threat.

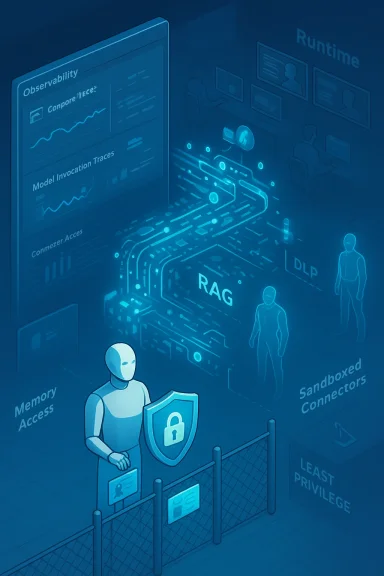

Source: Cloud Wars AI Agent & Copilot Podcast: Mark Polino on Closing the AI Security Gap

Background

The acceleration of agentic AI (Copilots, assistants, and automated agents that act on behalf of users and systems) promises dramatic productivity gains across IT, sales, finance, and security. Yet that very autonomy expands the attack surface. Agents routinely touch corporate data, invoke third-party APIs, access identity platforms and cloud storage, and act on business-critical systems. When those activities are not observed, controlled, and constrained, they create a visibility gap and a privilege gap that adversaries (and accidental misuse) can exploit.

Mark Polino’s discussion places this problem in the context of current enterprise toolchains: Copilot-style assistants integrated with Microsoft 365, RAG (retrieval-augmented generation) pipelines pulling context from corporate Graphs and content stores, and Copilot Studio/agent frameworks that can stitch models, connectors, and runtime code together rapidly. The result is a new operational model where agentic workloads are functionally first-class elements of IT, yet are often treated like ephemeral applications, not security subjects.

Why the AI security gap matters

Enterprises have spent decades maturing identity, endpoint, network, and data controls. Many of those controls assume human actors, interactive sessions, and well-scoped credentials. Agentic AI breaks those assumptions in three core ways:

- Agents operate autonomously and persistently, executing sequences of actions without continuous human supervision.

- Agents aggregate context: they combine user prompts, historical memory, corporate documents, and external APIs into actionable outputs—raising the risk of data exfiltration or unintended data sharing.

- Agent ecosystems are dynamic: teams create new agents, attach new connectors, and iterate on prompts and tools faster than security processes can keep up.

Overview of the podcast’s central recommendations

Mark Polino’s prescription is both principled and practical. The podcast distilled a clear set of priorities for closing the gap:

- Treat agents as first-class security subjects with identity, observable telemetry, and lifecycle management.

- Enforce least-privilege access and avoid granting broad data permissions to Copilots and agents by default.

- Implement runtime protection and agent-level DLP (data loss prevention) to intercept exfiltration attempts at the agent boundary.

- Use observability and audit logging to reconstruct agent actions and detect anomalous behavior.

- Apply governance controls—approved models, approved connectors, and change control—to slow risky deployments while enabling innovation.

The technical anatomy of the gap

Identity and lifecycle

Agents are often provisioned using service principals, API keys, or user tokens. Polino highlights a key problem: many teams issue broad-scoped credentials to accelerate development. The result is an agent that has more access than any human operator should. Without lifecycle controls (creation, rotation, revocation), those credentials become persistent liabilities.

Observability and telemetry

Visibility must be granular. High-level logs are insufficient. Security teams need structured telemetry that includes:

- Agent identity (who created it, which service principal it uses)

- Model invocation traces (which model and prompt template were used)

- Connector calls and data accessed (file IDs, API endpoints)

- Action execution logs (commands run, external systems modified)

- Memory access patterns (what was retrieved and returned)
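
To make the telemetry list above concrete, here is a minimal sketch of one structured event record, assuming JSON lines are shipped to a log pipeline. The class name, field names, and sample values are all hypothetical; no platform is known to emit this exact schema.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class AgentTelemetryEvent:
    """One structured record per agent action (hypothetical schema)."""
    agent_id: str            # stable identifier for the agent
    created_by: str          # who provisioned the agent
    service_principal: str   # credential the agent runs under
    model: str               # model that was invoked
    prompt_template: str     # template name, not the raw prompt text
    connector_calls: list[str] = field(default_factory=list)  # file IDs or API endpoints touched
    actions: list[str] = field(default_factory=list)          # commands run, systems modified
    memory_reads: list[str] = field(default_factory=list)     # keys retrieved from memory
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize to a single JSON line suitable for log shipping."""
        return json.dumps(asdict(self), sort_keys=True)


# Illustrative values only.
event = AgentTelemetryEvent(
    agent_id="agent-invoice-triage",
    created_by="alice@example.com",
    service_principal="sp-invoice-triage",
    model="example-model-v1",
    prompt_template="triage_v3",
    connector_calls=["files/doc-123"],
    actions=["ticket.create"],
    memory_reads=["vendor:contoso"],
)
print(event.to_json())
```

Recording the prompt template name rather than the raw prompt is one way to keep the record useful for correlation while limiting how much sensitive text lands in logs.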

Runtime controls and guardrails

A set of runtime protections is necessary to stop risky behavior at the moment it happens, including:

- Agent-level DLP that inspects outbound content and blocks or redacts sensitive fields.

- Runtime guardrails for third-party connectors that enforce allowed operations only.

- Policy enforcement for model outputs (for example, banning transmission of PII or corporate secrets).

- Throttling and sandboxing to prevent agents from escalating their reach through chained API calls.
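
Two of the guardrails above, outbound redaction and connector operation allow-lists, can be sketched in a few lines. This is an illustration under stated assumptions, not a production DLP engine: the regex patterns, connector names, and function names are hypothetical, and real deployments would rely on the organization's own classifiers and policy store.

```python
import re

# Hypothetical detectors; real DLP uses far richer classification.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical allow-list: connector name -> operations an agent may perform.
ALLOWED_OPERATIONS = {
    "sharepoint": {"read"},
    "ticketing": {"read", "create"},
}


def redact_outbound(text):
    """Replace sensitive matches with labeled placeholders; report what was found."""
    findings = []
    for label, pattern in SENSITIVE_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{label}]", text)
        if count:
            findings.append(label)
    return text, findings


def connector_allowed(connector, operation):
    """Default deny: unknown connectors and unlisted operations are rejected."""
    return operation in ALLOWED_OPERATIONS.get(connector, set())


clean, found = redact_outbound("Contact jane.doe@example.com, SSN 123-45-6789.")
print(clean)
print(found)
print(connector_allowed("ticketing", "delete"))
```

The default-deny lookup means a newly attached connector does nothing until someone explicitly lists its permitted operations.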

Memory and RAG safety

Retrieval-Augmented Generation brings rich context to models but also expands the surface for leakage. Polino points to the danger of memory poisoning and inadvertent retrieval of confidential fragments. Securing RAG requires authenticated, policy-driven retrieval layers and deterministic redaction before model input.

Practical steps for IT and security teams

Mark Polino’s recommendations are designed to be applied pragmatically. Below is a prioritized, actionable roadmap enterprises can adopt.

- Inventory and classify agents
  - Identify all Copilots, assistants, and agent processes running in your environment.
  - Classify each by purpose, owner, data access needs, and risk profile.

- Enforce least privilege
  - Use scoped service principals and short-lived tokens.
  - Apply role-based access to connectors and data sources.
  - Avoid granting blanket Graph or broad storage permissions to agents.

- Implement agent observability
  - Ingest structured agent telemetry into your SIEM and correlation engines.
  - Log model inputs and outputs (with redaction where necessary) to enable forensic reconstruction.

- Deploy runtime DLP and data classification
  - Place DLP at the agent boundary to intercept outputs before they leave your environment.
  - Classify data sources feeding RAG layers and apply contextual access controls.

- Harden model and prompt pipelines
  - Approve models and model variants centrally.
  - Vet and sanitize third-party plugins and connectors before production use.
  - Use prompt templates with parameter constraints to reduce the chance of injection.

- Automate detection and response
  - Create SOAR playbooks that can disable compromised agents, rotate credentials, and quarantine affected connectors.
  - Use anomaly detection focused on agent behavior patterns (sudden expansion of scope, unusual data retrieval).

- Governance and change control
  - Require approval for agent deployment to production.
  - Maintain versioning and controlled rollback mechanisms for agents and their memory stores.
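
The roadmap item on classifying the data sources that feed RAG layers can be sketched as a filter that runs between retrieval and prompt assembly. This is a simplified illustration: the sensitivity labels, the `Chunk` type, and the `filter_for_agent` function are assumptions, not part of any specific RAG framework.

```python
from dataclasses import dataclass

# Hypothetical sensitivity labels, ordered from least to most restricted.
LEVELS = {"public": 0, "internal": 1, "confidential": 2}


@dataclass(frozen=True)
class Chunk:
    source_id: str        # where the text was retrieved from
    classification: str   # label assigned when the source was classified
    text: str


def filter_for_agent(chunks, agent_clearance):
    """Keep only chunks at or below the agent's clearance level.

    Chunks with missing or unknown labels are dropped (fail closed).
    """
    ceiling = LEVELS[agent_clearance]
    allowed = []
    for chunk in chunks:
        level = LEVELS.get(chunk.classification)
        if level is not None and level <= ceiling:
            allowed.append(chunk)
    return allowed


retrieved = [
    Chunk("wiki/holidays", "public", "Office closed on Friday."),
    Chunk("finance/q3", "confidential", "Draft earnings figures."),
    Chunk("scratch/notes", "unlabeled", "Unclassified scratch notes."),
]
for chunk in filter_for_agent(retrieved, "internal"):
    print(chunk.source_id)
```

Filtering before the model sees the text keeps the decision deterministic and auditable, rather than relying on the model to withhold what it has already read.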

Strengths and positive signals in the ecosystem

The market is responding. Vendors and platform providers are producing features and integrations that map to Polino’s recommendations:

- Security-first Copilot frameworks and agent orchestration platforms are beginning to expose more telemetry, enabling integration with existing SOC tooling.

- Some runtime protection offerings now advertise the ability to inspect copilot outputs and enforce DLP at the agent level, which directly addresses the RAG leakage vector.

- Platform providers are starting to offer more granular permissions for connectors and more robust identity primitives for service accounts—helpful for least-privilege adoption.

Key risks and unresolved challenges

Polino is careful to call out what remains hard or unsolved. These are the areas where enterprises must exercise caution:

- Observability completeness: Many platforms still do not generate the rich, structured telemetry required for deep investigations. Partial logs can impede response.

- Performance vs. security: Runtime inspection and DLP can add latency and cost. Organizations must balance user experience with security needs.

- False positives in agent DLP and policy enforcement: Aggressive blocking of model outputs can break workflows, prompting users to seek workarounds that increase risk.

- Supply-chain and third-party risk: Plugins and third-party connectors are a major vector. Vetting and continuous monitoring of those dependencies is operationally heavy.

- Human factors and shadow agents: Business teams will create agents to accelerate work. Without cultural and process changes, security controls risk being bypassed.

A deeper look at technical attack scenarios

Understanding specific attacks helps justify the recommended controls.

- Prompt injection chains: Malicious input or compromised connectors can inject instructions into agent prompts, causing them to leak data or perform harmful actions. Mitigation: sanitize and normalize connector content before it feeds prompts, and enforce output policies.

- Memory poisoning: An agent’s memory store can be corrupted by adversarial or erroneous inputs, causing repeated bad behavior. Mitigation: version and validate memories, apply strong write controls, and audit memory access.

- Zero-click exfiltration: Agents that produce outputs to external services (email, external APIs, or unmanaged storage) can exfiltrate data with no human interaction. Mitigation: implement runtime DLP, block outbound connectors by default, and require approvals for any external write operations.

- Credential lifetime abuse: Long-lived tokens issued to agents can be stolen and reused. Mitigation: adopt short-lived credentials and automated rotation, bind tokens to agent identities and behavior, and restrict replayability.
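
The mitigation for credential lifetime abuse (short-lived tokens bound to an agent identity, with revocation) can be illustrated with a small in-memory model. It stands in for a real identity provider and is not one: `AgentTokenIssuer`, its methods, and the scope strings are hypothetical, and the `now` parameter exists only to make expiry easy to demonstrate.

```python
import secrets
import time


class AgentTokenIssuer:
    """In-memory stand-in for an identity provider (illustrative only)."""

    def __init__(self, ttl_seconds=900):
        self.ttl_seconds = ttl_seconds
        self._tokens = {}  # token -> (agent_id, scopes, expires_at)

    def issue(self, agent_id, scopes, now=None):
        """Mint a random token bound to one agent and an explicit scope set."""
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(32)
        self._tokens[token] = (agent_id, frozenset(scopes), now + self.ttl_seconds)
        return token

    def check(self, token, agent_id, scope, now=None):
        """Valid only if the token is known, unexpired, owned by this agent, and in scope."""
        now = time.time() if now is None else now
        entry = self._tokens.get(token)
        if entry is None:
            return False
        owner, scopes, expires_at = entry
        return owner == agent_id and scope in scopes and now < expires_at

    def revoke_agent(self, agent_id):
        """Drop every token held by an agent, e.g. from a response playbook."""
        stale = [t for t, (owner, _, _) in self._tokens.items() if owner == agent_id]
        for t in stale:
            del self._tokens[t]
        return len(stale)


issuer = AgentTokenIssuer(ttl_seconds=900)
token = issuer.issue("agent-a", {"files.read"}, now=1000.0)
print(issuer.check(token, "agent-a", "files.read", now=1500.0))   # within lifetime
print(issuer.check(token, "agent-a", "files.read", now=1900.0))   # at expiry
print(issuer.check(token, "agent-b", "files.read", now=1500.0))   # wrong agent
```

Binding the token to an agent identity means a stolen token is useless to any other caller, and the short lifetime bounds how long it is useful even to the right one.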

Operationalizing governance without killing innovation

One of the central tensions Polino highlights is that heavy-handed controls can stifle adoption. Security teams must avoid becoming the friction that drives teams to shadow IT. To strike a practical balance:

- Implement a staged approval model: allow low-risk agents to self-service in test sandboxes, with telemetry capture and quotas, while high-risk agents require formal approvals.

- Offer secure developer tooling: provide templates, vetted connectors, and approved model bundles so developers have a safe path to build.

- Provide clear, lightweight policies that map to business risk: e.g., a one-page “Agent Security Contract” that an agent owner signs, acknowledging responsibilities.

- Build a fast feedback loop: when a policy blocks a legitimate workflow, the security team should be able to quickly evaluate and whitelist that use case with compensating controls.
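
The staged approval model in the first item above could be encoded as a simple routing rule. The risk criteria here are assumptions chosen for illustration; the podcast summary does not define what makes an agent high-risk, and each organization would set its own thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRequest:
    name: str
    environment: str              # "sandbox" or "production"
    touches_sensitive_data: bool  # reads classified or regulated data
    writes_externally: bool       # email, external APIs, unmanaged storage


def approval_path(request):
    """Route a deployment request to self-service or formal review.

    Assumed rule: anything in production, touching sensitive data, or
    writing outside the organization counts as high-risk.
    """
    high_risk = (
        request.environment == "production"
        or request.touches_sensitive_data
        or request.writes_externally
    )
    return "formal-review" if high_risk else "self-service"


print(approval_path(AgentRequest("faq-bot", "sandbox", False, False)))
print(approval_path(AgentRequest("payroll-helper", "sandbox", True, False)))
```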

Measuring success: KPIs and operational metrics

Polino suggests tracking pragmatic, measurable indicators to show progress and prioritize investments:

- Number of agents inventoried and classified

- Percentage of agents with scoped credentials and short-lived tokens

- Mean time to detect (MTTD) anomalous agent behavior

- Mean time to respond (MTTR) to agent incidents (credential rotation, agent quarantine)

- Percentage of agent outputs inspected by runtime DLP

- Number of governance violations detected in production
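
Several of these indicators reduce to simple arithmetic over an agent inventory and an incident log. The sketch below uses made-up sample records and hypothetical field names, and it assumes MTTD is measured from the start of anomalous behavior to detection and MTTR from detection to completed response.

```python
from statistics import mean

# Sample inventory records (fabricated for illustration).
agents = [
    {"id": "a1", "scoped_credentials": True, "short_lived_tokens": True},
    {"id": "a2", "scoped_credentials": True, "short_lived_tokens": False},
    {"id": "a3", "scoped_credentials": False, "short_lived_tokens": False},
    {"id": "a4", "scoped_credentials": True, "short_lived_tokens": True},
]

# Sample incidents, with times in minutes from the start of the anomaly.
incidents = [
    {"started": 0, "detected": 30, "resolved": 90},
    {"started": 0, "detected": 10, "resolved": 50},
]


def percentage(items, predicate):
    """Share of items satisfying the predicate, as a percentage (0.0 if empty)."""
    if not items:
        return 0.0
    return 100.0 * sum(1 for item in items if predicate(item)) / len(items)


hardened_pct = percentage(
    agents, lambda a: a["scoped_credentials"] and a["short_lived_tokens"]
)
mttd_minutes = mean(i["detected"] - i["started"] for i in incidents)
mttr_minutes = mean(i["resolved"] - i["detected"] for i in incidents)

print(f"Agents with scoped, short-lived credentials: {hardened_pct:.0f}%")
print(f"MTTD: {mttd_minutes} min, MTTR: {mttr_minutes} min")
```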

Where vendors and platforms must improve

Polino’s conversation also outlines expectations from platform vendors:

- Standardized telemetry schemas for agent actions and RAG pipelines.

- Native support for short-lived, auditable agent credentials bound to identity providers.

- Built-in runtime policy enforcement points that integrate with enterprise DLP and SIEMs.

- Formalized model provenance and approval workflows for enterprise deployments.

- SDKs and templates for safe-by-design agent creation.

Cautionary notes and unverifiable areas

Not every claim about agent risk can be proven outside of controlled research. Specific exploits, like novel zero-click memory poisoning or particular supply-chain compromises, are plausible and have been demonstrated in research settings, but enterprises should treat such claims as indicative of risk patterns rather than as deterministic inevitabilities. Where concrete vulnerabilities are mentioned in vendor blogs or podcast conversations, security teams must validate against independent, reproducible advisories and CVE entries before taking irreversible action.

Additionally, balancing privacy and audit requirements will be difficult: logging full model inputs and outputs can aid investigations but can itself create privacy and compliance concerns. Organizations must design redaction, retention, and access controls carefully.

The bottom line for Windows-focused IT and security leaders

- Assume agents will exist in your environment. Treat that as fact, not a “maybe.”

- Inventory, scope, and observe every agent. If you cannot see it, you cannot secure it.

- Enforce least privilege and short-lived credentials from day one.

- Apply runtime DLP and connector policies to stop exfiltration at the boundary.

- Prioritize human-centered governance: fast approval paths, developer-friendly controls, and clear ownership.

- Work with platform vendors to demand telemetry, identity primitives, and runtime policy hooks.

Conclusion

Mark Polino’s podcast contribution is a practical wake-up call: the AI security gap is surmountable, but only if organizations treat agentic AI as an operational and security-first concern. The engineering and vendor ecosystems are beginning to provide the building blocks (scoped identity, observability, runtime DLP, and governance templates), but the hard work remains in integrating these primitives into day-to-day operations without killing innovation.

Security leaders must adopt the mindset that agents are neither magic nor mere applications; they are new actors in the enterprise with privileges, actions, and lifecycles that deserve rigorous controls. Do that, and enterprises will reap the benefits of Copilots and agents while keeping sensitive data, systems, and reputation intact.
