Cognition’s rollout of Codemaps for the Windsurf platform is a deliberate push to make codebase comprehension a first-class feature of the developer workflow, pairing high-speed software-engineering models with interactive, shareable maps that link directly into source code and agent contexts.

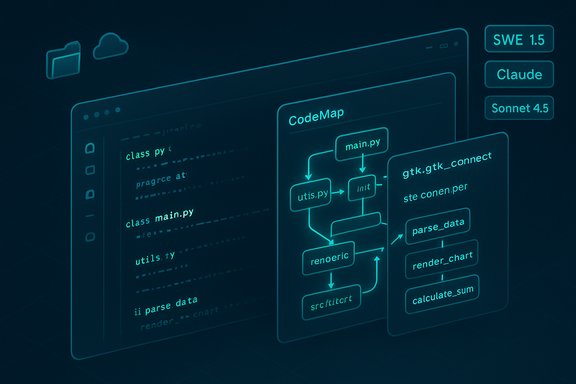

Source: testingcatalog.com Windsurf is getting Codemaps for AI-assisted coding

Background


Cognition announced Codemaps as a new Windsurf capability that generates structured, AI-annotated maps of a repository to help engineers form mental models of how files, functions, and execution paths fit together. The feature is powered by Cognition’s own SWE-1.5 model alongside Anthropic’s Claude Sonnet 4.5, and is designed either to be invoked on demand for a specific problem or to be created automatically from task suggestions and recent navigation history. Windsurf itself joined Cognition earlier in 2025 when Cognition acquired the product and its team; Codemaps is the clearest sign yet of how Cognition intends to fuse Windsurf’s agentic IDE concepts with its own model stack and broader product suite (DeepWiki, Cascade, Ask Devin).

What Codemaps is: the essentials

- What it produces: AI-generated, hierarchical maps of a codebase that show relationships (call/flow, file-to-file links), execution order, and salient code locations. Each node is clickable and jumps the user to the exact file and function in the editor.

- How it’s generated: Users can ask for a codemap for a specific task or choose from suggested topics (based on navigation history). Codemaps are created by an agent that scans the repository, resolves symbols and call paths, and synthesizes explanations and navigation nodes.

- Model plumbing: The feature leverages Cognition’s SWE-1.5 for fast agentic reasoning and Anthropic’s Sonnet 4.5 for deeper analysis; users can pick “fast” or “smart” generation modes depending on the task.

- IDE and agent integration: Codemaps appear inside the Windsurf interface (Activity Bar or command palette) and can be referenced inside Cascade conversations with @-mentions so agent prompts receive codemap context. Maps are also shareable as web-view links and cross-referenced with DeepWiki artifacts.
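To make the "hierarchical map with clickable nodes" idea concrete, here is a minimal sketch of how such a map could be modeled. The `CodemapNode` class, its field names, and the example paths are all hypothetical illustrations; Cognition has not published the actual Codemaps schema in the material summarized here.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Optional, Tuple


@dataclass
class CodemapNode:
    """One node in a hypothetical codemap tree (not Windsurf's real schema)."""
    title: str                     # short label shown in the map
    explanation: str = ""          # AI-written annotation for this node
    file: Optional[str] = None     # repository-relative path, if the node links to code
    line: Optional[int] = None     # 1-based line to jump to in the editor
    children: List["CodemapNode"] = field(default_factory=list)

    def walk(self, depth: int = 0) -> Iterator[Tuple[int, "CodemapNode"]]:
        """Yield (depth, node) pairs in display order (pre-order traversal)."""
        yield depth, self
        for child in self.children:
            yield from child.walk(depth + 1)


def jump_targets(root: CodemapNode) -> List[str]:
    """Collect 'file:line' strings for every node that links into source."""
    return [f"{n.file}:{n.line}" for _, n in root.walk() if n.file and n.line]


# Made-up example: a small map of a login flow.
login_flow = CodemapNode(
    title="Login request flow",
    children=[
        CodemapNode("Route handler", file="api/routes.py", line=42),
        CodemapNode(
            "Credential check",
            file="auth/service.py",
            line=17,
            children=[
                CodemapNode("Password hash compare", file="auth/hashing.py", line=8),
            ],
        ),
    ],
)

for depth, node in login_flow.walk():
    print("  " * depth + node.title)
```

The tree shape is what lets a single artifact serve both audiences: a person reads it top-down, while a tool can flatten it into a list of precise source locations with `jump_targets`.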

Why this matters: the productivity and onboarding problem

Modern engineering organizations spend a surprising amount of time, and therefore money, on orientation, chasing down dependency chains, and reconstructing execution paths. Cognition frames Codemaps as a way to reduce the cognitive tax of discovery: instead of reading dozens of files and following breadcrumbs, engineers get a structured, explorable model of the code relevant to the work at hand. Experienced engineers create mental models by reading code; Codemaps externalizes and encodes those models. For teams, that means:

- Faster ramp for new hires and rotating engineers.

- A reusable artifact that documents the why and the how for feature areas and subsystems.

- Better context handed to automated agents (Cascade, Ask Devin), which can operate more accurately when fed a targeted codemap rather than an entire repo.

The technical pillars: SWE-1.5, Sonnet 4.5, and the agent harness

SWE-1.5: speed-focused agent model

SWE-1.5 is Cognition’s newest model optimized for software engineering tasks. According to Cognition, SWE-1.5 hits near-state-of-the-art engineering performance while emphasizing inference speed (reported at up to ~950 tokens/second through a Cerebras partnership). That speed is integral to delivering low-latency, interactive agent experiences inside an IDE.

Anthropic Sonnet 4.5: complementary depth

Sonnet 4.5 (Anthropic’s Claude lineage) is used alongside SWE-1.5 to provide deeper “thinking” capability where quality and nuance matter more than raw token throughput. In practice, Cognition says users get a fast but competent SWE path and a slower, more deliberative Sonnet path to balance throughput and contextual depth.

The agent harness and Cascade integration

Codemaps are produced by a specialized Codemap agent that crawls a repository, builds symbol and call graphs, and outputs a navigable artifact. Those artifacts can be @-mentioned into Cascade conversations so agents have just-in-time codemap context, improving the relevance of automated tasks like targeted code edits or debugging. This is a significant departure from strictly file- or line-level context injection.

How Codemaps fits into Windsurf and Cognition’s product stack

Cognition has been steadily building developer tooling around two themes: (1) making documented context available (DeepWiki), and (2) making agentic automation reliable (Cascade, Ask Devin). Codemaps bridges those lanes by creating an artifact that’s both human-readable and agent-consumable. It’s accessible from the Windsurf UI, created from Cascade prompts, and can be surfaced within DeepWiki pages as a complementary view. This design reflects Cognition’s stated thesis: automation without shared understanding is fragile; conversely, agents with better, localized context produce higher-value outcomes.

What engineers will actually see and do: a practical walkthrough

- Open the Codemaps panel from the Activity Bar or via the command palette in Windsurf.

- Choose a suggested topic (prompts are derived from recent navigation), type a custom prompt, or create the map directly from a Cascade conversation.

- The Codemap agent scans the repository, resolves symbols, and builds a hierarchical map with nodes representing files, functions, and execution traces.

- Click any node to jump to the corresponding file and line; expand trace guides for a narrated explanation of the relevant execution path.

- Share the codemap as a browser-view link with teammates, or @-mention it in a Cascade conversation so agents can use the map as context for targeted automation.
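The "scan, resolve symbols, build a map" step in the walkthrough can be illustrated with plain static analysis. The sketch below uses Python's standard `ast` module on a tiny made-up source string; it is a stand-in for the general technique, not a description of how the Codemap agent is implemented, and it only resolves direct calls to functions defined in the same file.

```python
import ast
from typing import Dict, List, Union

# Made-up source standing in for a repository file.
SOURCE = '''
def load(path):
    return open(path).read()

def parse(text):
    return text.split()

def main(path):
    text = load(path)
    return parse(text)
'''

NodeInfo = Dict[str, Union[int, List[str]]]


def build_call_map(source: str) -> Dict[str, NodeInfo]:
    """Map each top-level function to its line and the local functions it calls."""
    tree = ast.parse(source)
    functions = [n for n in tree.body if isinstance(n, ast.FunctionDef)]
    defined = {fn.name for fn in functions}
    call_map: Dict[str, NodeInfo] = {}
    for fn in functions:
        callees = set()
        for node in ast.walk(fn):
            # Only calls by bare name are resolved; methods and imports are ignored.
            if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
                if node.func.id in defined:
                    callees.add(node.func.id)
        call_map[fn.name] = {"line": fn.lineno, "calls": sorted(callees)}
    return call_map


call_map = build_call_map(SOURCE)
for name, info in call_map.items():
    print(f"{name} (line {info['line']}) -> {info['calls']}")
```

A real agent would also need cross-file symbol resolution and the LLM-written narration on top; the point here is only that the navigable skeleton (names, lines, call edges) is something that can be checked mechanically.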

Strengths and opportunities

- Speed with scale: SWE-1.5’s emphasis on inference speed enables interactive experiences that older large models couldn’t provide without perceptible lag. For iterative tasks and live agent sessions, speed matters.

- Actionable artifacts: Unlike simple textual summaries, Codemaps are clickable artifacts that pair explanation with precise navigation. That reduces friction when moving from understanding to coding.

- Agent synergy: Providing structured maps to agents (Cascade, Ask Devin) reduces the chances of irrelevant or hallucinated edits by narrowing the agent’s operational context. This is particularly useful for targeted debugging and refactor tasks.

- Team knowledge capture: Codemaps create shareable, versionable artifacts that preserve the reasoning behind system structure, which is useful for onboarding, handoffs, and asynchronous collaboration.

Risks, limits, and the things teams must watch

- Vendor-reported benchmarks need independent verification. Cognition reports SWE-1.5 throughput figures (up to ~950 tok/s) and favorable benchmark positions, and independent outlets have repeated these claims in coverage. Treat speed and accuracy numbers as vendor-provided until you have validated them with reproducible tests in your own environment.

- Hallucination and overreach. Codemaps synthesize high-level explanations about flows and relationships. Those narrations are generated by LLMs and can hallucinate or miss edge cases—it’s vital that engineers verify assumptions before applying sweeping code changes suggested by an agent operating on a codemap.

- Data residency and telemetry. Shared codemaps and agent operations can surface sensitive architecture details. For enterprise customers, Cognition’s docs note that server-side storage and sharing are opt-in; teams with strict compliance regimes need to validate storage, telemetry, and access controls before enabling sharing.

- Operational overhead and drift. Codemaps are snapshots. Repositories evolve; stale maps can mislead if not refreshed. Teams must decide refresh cadence and integrate codemap generation into their maintenance workflows.

- False sense of security from automation. Early experience with AI-assisted code tools (for example, mainstream Copilot and other assistants) shows that automation can increase throughput but also surface security and licensing risks if outputs are not carefully reviewed. Maintaining human review gates and code-review discipline remains essential.
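For the first risk above, one simple way to check a throughput claim is to record output token counts and wall-clock durations from your own sessions and compare the observed rate with the reported figure. The sketch below assumes you can collect such samples; the sample values are invented, and only the 950 tokens/second figure comes from the reporting.

```python
from statistics import median
from typing import List, Tuple

CLAIMED_TOKENS_PER_SECOND = 950.0  # vendor-reported upper bound cited above


def observed_throughput(samples: List[Tuple[int, float]]) -> float:
    """Median tokens/second over (output_tokens, elapsed_seconds) samples."""
    rates = [tokens / seconds for tokens, seconds in samples if seconds > 0]
    if not rates:
        raise ValueError("need at least one sample with a positive duration")
    return median(rates)


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to stream a response of the given size at a steady rate."""
    return tokens / tokens_per_second


# Invented measurements: (output tokens, seconds of wall-clock time).
samples = [(1200, 2.0), (900, 1.2), (1500, 2.5)]
rate = observed_throughput(samples)

print(f"observed median: {rate:.0f} tok/s")
print(f"share of claimed rate: {rate / CLAIMED_TOKENS_PER_SECOND:.0%}")
print(f"1,900 tokens at the claimed rate: {seconds_for(1900, CLAIMED_TOKENS_PER_SECOND):.1f} s")
```

Using the median rather than the mean keeps one unusually slow or fast request from dominating the comparison.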

Cross-comparison with existing tooling

Codemaps is not the first attempt to use AI for code comprehension, but its distinguishing design points are artifact-first mapping and agent integration.

- GitHub Copilot and other assistants excel at inline completions and chat-based reasoning inside editors; Codemaps focuses on generating navigable artifacts that represent structure, not just suggestions. This makes it complementary rather than competitive in many workflows.

- Previous tools that attempted mapping or visualization often required manual graph construction or relied on static analysis alone; Codemaps, as described, takes a hybrid approach that pairs repository analysis (symbol and call-path resolution) with LLM synthesis to provide execution paths and rationales. That introduces both power and new failure modes (LLM misinterpretation).

- Compared with earlier Windsurf features (Cascade agents, DeepWiki), Codemaps is the “map” that agents and wikis can reference—moving from scattered documentation to tightly linked context artifacts.

Recommendations for teams evaluating Codemaps

- Pilot with a representative subsystem. Choose a non-critical but realistic component and generate codemaps to evaluate accuracy, navigation fidelity, and agent behavior.

- Measure time-to-understanding improvements. Run a small study: measure how long new contributors take to complete onboarding tasks with and without codemaps.

- Validate model outputs. Compare codemap explanations and trace guides to human-constructed call graphs and architectural docs to quantify hallucination rates. Re-run tests over several commits to gauge drift.

- Decide sharing and storage policies. For enterprises, opt into server storage only after reviewing Cognition’s enterprise controls and encryption/ACL options. Keep sensitive subsystems out of shared codemaps until policy is set.

- Keep human gates. Require code review and targeted testing for any agent-applied edits that were informed by codemap context. Automation should speed verification, not replace it.
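The "validate model outputs" recommendation can be made measurable by treating both the codemap and a human-built call graph as sets of caller/callee edges. The sketch below is one possible scoring scheme, with invented edge names; it assumes you have a way to export or transcribe the edges a codemap asserts, which the reporting does not confirm is available as a built-in feature.

```python
from typing import Dict, Set, Tuple

Edge = Tuple[str, str]  # (caller, callee)


def compare_edges(generated: Set[Edge], reference: Set[Edge]) -> Dict[str, float]:
    """Score codemap edges against a trusted, human-constructed call graph."""
    confirmed = generated & reference
    precision = len(confirmed) / len(generated) if generated else 0.0
    recall = len(confirmed) / len(reference) if reference else 0.0
    return {
        "precision": precision,                                   # share of claimed edges that are real
        "recall": recall,                                         # share of real edges the map found
        "unsupported_rate": 1.0 - precision if generated else 0.0,  # rough hallucination proxy
    }


# Invented example: edges asserted by a codemap vs. a reviewed reference graph.
generated = {
    ("handle_login", "check_credentials"),
    ("check_credentials", "compare_hash"),
    ("handle_login", "issue_token"),
    ("issue_token", "send_email"),  # not in the reference graph
}
reference = {
    ("handle_login", "check_credentials"),
    ("check_credentials", "compare_hash"),
    ("handle_login", "issue_token"),
    ("issue_token", "sign_jwt"),
    ("handle_login", "log_attempt"),
}

scores = compare_edges(generated, reference)
print(scores)
```

Re-running the same comparison across several commits gives a simple drift signal: falling precision or recall suggests the map no longer matches the code.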

What remains to be proven

- Real-world onboarding impact: Cognition cites extended ramp times and positions codemaps as a reducer of the onboarding tax, but empirical peer-reviewed or independent enterprise case studies are limited at announcement time. Early customer feedback is positive, but teams should treat the reduction in "time-to-productivity" as an outcome to measure, not an assumption.

- Agent safety at scale: Codemaps improve agent context, but whether that reliably reduces unsafe edits or unintended refactors in complex microservice landscapes requires longer-term usage data and independent red-team testing.

- Interoperability with non-Windsurf workflows: The immediate value is clearest inside Windsurf and Cascade; wider IDE or CI/CD integrations will determine how broadly Codemaps change large-team practices. Documentation indicates shareable links and some external views, but fuller ecosystem integration is a natural next step to watch.

Business and market implications

Cognition’s approach (combining proprietary fast models such as SWE-1.5 with established models such as Sonnet 4.5, and shipping features that are explicitly agent-aware) signals a broader vendor strategy: make agents more dependable by improving the context they operate over, not merely the models themselves. That’s a notable pivot from a singular focus on completion quality toward engineering workflows and artifacts.

For platform competitors (from IDE vendors to GitHub and cloud providers), Codemaps underscores that the next battleground is context orchestration: who owns the canonical, machine-readable representation of a codebase’s structure and how that representation is surfaced to humans and agents.

Enterprises will evaluate Codemaps on two axes: does it reduce onboarding/maintenance cost materially, and can it do so without introducing compliance, security, or intellectual-property risk? The answer will depend on each organization’s control posture and how well Cognition executes on enterprise guardrails.

Final assessment

Codemaps is a thoughtful, pragmatic step in evolving AI-assisted development from ephemeral completions to durable, shareable context artifacts. By centering on navigable maps that agents can consume, Cognition addresses a real pain point, understanding code, and ties that understanding back into the automation loop. The use of SWE-1.5 emphasizes low-latency interactivity, while Sonnet 4.5 supplies deeper reasoning where necessary. That said, the feature is new and vendor-led: performance claims should be validated in situ, and teams must guard against hallucination, stale artifacts, and unintended disclosure when sharing maps. For forward-looking engineering shops experimenting with agentic workflows, Codemaps is worth piloting, but with clear measurement plans, human review gates, and an explicit policy for map sharing and retention.

Quick checklist for a safe, productive trial

- [ ] Pick a non-critical repo and baseline onboarding time for 2–3 tasks.

- [ ] Generate codemaps for those tasks and measure time saved reading and locating code.

- [ ] Validate explanations against human-authored architecture notes.

- [ ] Configure enterprise sharing and storage settings before sharing externally.

- [ ] Integrate codemap refresh into CI or release cadence to avoid stale context.

- [ ] Require code-review gates for any agent-suggested edits drawn from codemaps.
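The refresh item in the checklist implies some way to detect that a map has gone stale. One minimal approach, sketched below under the assumption that you record which files a codemap references, is to store a content hash per file when the map is generated and compare it against the current tree in CI. The helper names and file contents are hypothetical; this is not a Windsurf feature.

```python
import hashlib
from typing import Dict, List


def digest(content: bytes) -> str:
    """SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(content).hexdigest()


def snapshot(files: Dict[str, bytes]) -> Dict[str, str]:
    """Record a digest for each file a codemap references, at generation time."""
    return {path: digest(data) for path, data in files.items()}


def stale_paths(recorded: Dict[str, str], current: Dict[str, bytes]) -> List[str]:
    """Return referenced paths that changed or disappeared since the snapshot."""
    changed = []
    for path, old_digest in recorded.items():
        data = current.get(path)
        if data is None or digest(data) != old_digest:
            changed.append(path)
    return sorted(changed)


# Invented example: file contents when the map was generated vs. now.
at_generation = {
    "auth/service.py": b"def check(): ...\n",
    "auth/hashing.py": b"def compare(): ...\n",
    "api/routes.py": b"def login(): ...\n",
}
now = {
    "auth/service.py": b"def check(): ...\n",            # unchanged
    "auth/hashing.py": b"def compare_constant_time(): ...\n",  # edited
    # api/routes.py was deleted
}

recorded = snapshot(at_generation)
print("regenerate map for:", stale_paths(recorded, now))
```

In a CI job, a non-empty result could fail the check or simply open a reminder to regenerate the affected maps.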
