Microsoft’s Copilot call delegation for Teams Phone became available in April 2026 for Frontier-enrolled organizations with Microsoft 365 Copilot licensing, letting an AI voice agent answer incoming calls, assess urgency, attempt transfers, and offer voicemail or Microsoft Bookings follow-ups. It sounds like a small convenience feature, but it is really Microsoft’s agent strategy crossing the membrane from documents and calendars into live business conversation. The question is no longer whether Copilot can summarize work after the fact. It is whether enterprises are ready to let it stand at the front door.

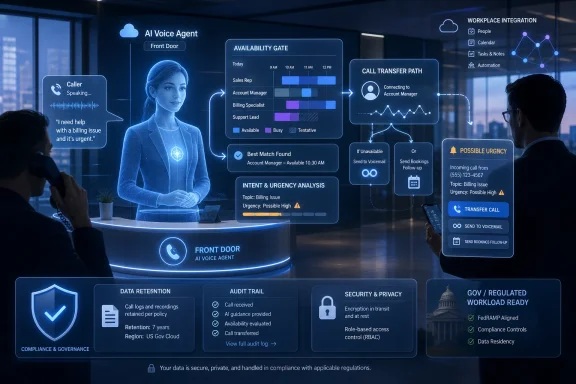

Administrators will also want failure visibility. How often did Copilot attempt a transfer? How often did the caller abandon the interaction? How often did users override, correct, or complain about urgency classification? Without metrics, organizations will be relying on vibes.

Security teams will ask a different set of questions. Can a caller manipulate the agent by claiming urgency? Can prompt-injection-style phrases influence summaries or next steps? Can the agent be tricked into revealing calendar details, availability, internal names, or other information? How much context does it expose during the screening conversation?

Those are not science-fiction concerns. Any agent that converses with untrusted external parties becomes part of the attack surface. The fact that the interaction happens over voice rather than chat does not make it immune to adversarial behavior. It merely changes the interface.

The pilot should measure more than user satisfaction. It should examine call abandonment, transfer accuracy, summary quality, complaint rates, booking completion, and false urgency outcomes. It should also review the generated summaries as records, not just as productivity aids.
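A minimal sketch of how a pilot team might tally those measures, assuming the team can assemble its own log of call outcomes. The outcome labels and the `pilot_metrics` helper are hypothetical and invented for this example; nothing here reflects an actual Teams or Copilot reporting API.

```python
from collections import Counter

# Hypothetical outcome labels a pilot team might assign to each screened call.
OUTCOMES = {
    "transferred",
    "message_taken",
    "booking_offered",
    "booking_completed",
    "abandoned",
}


def pilot_metrics(outcomes):
    """Summarise a list of outcome labels as rates over all screened calls."""
    unknown = set(outcomes) - OUTCOMES
    if unknown:
        raise ValueError(f"unrecognised outcomes: {sorted(unknown)}")
    total = len(outcomes)
    if total == 0:
        return {}
    counts = Counter(outcomes)
    # Every caller who reached the booking step, whether or not they finished it.
    offered = counts["booking_offered"] + counts["booking_completed"]
    return {
        "abandonment_rate": counts["abandoned"] / total,
        "transfer_rate": counts["transferred"] / total,
        "booking_completion_rate": (
            counts["booking_completed"] / offered if offered else None
        ),
    }


# Made-up sample log for illustration only.
log = [
    "transferred", "abandoned", "booking_offered", "booking_completed",
    "message_taken", "abandoned", "booking_completed", "transferred",
]
metrics = pilot_metrics(log)
print(metrics)
```

Rates like these say nothing about summary quality or complaint handling, which still need human review, but they give the pilot a baseline that is harder to argue with than anecdotes.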

Enterprises should test edge cases deliberately. Have callers speak with accents. Have them provide ambiguous reasons. Have them express frustration without stating urgency. Have them state urgency dishonestly. Have them mention sensitive personal data. Have them request information the agent should not disclose.

The point is not to prove that Copilot fails. The point is to discover how it fails before customers, patients, partners, or regulators do. Mature AI adoption is not optimism with a license assigned; it is controlled exposure with evidence.

Microsoft’s Frontier label should encourage that mindset. Early access is an invitation to learn, not a permission slip to deploy everywhere.

The deeper risk is that Copilot’s decision becomes invisible infrastructure. Once users trust it, they may stop reviewing missed-call summaries carefully. Once managers like the productivity gains, they may encourage broader use without revisiting caller experience. Once records accumulate, legal and compliance teams may discover the artifact trail after the fact.

This is the familiar Microsoft 365 pattern. A feature begins as convenience, becomes workflow, and then becomes governance debt. Teams chat, meeting recordings, Loop components, shared files, Planner tasks, and Copilot outputs all follow the same gravitational pull: useful things become records because people use them to do real work.

Call delegation adds an emotional dimension because phone calls still carry a sense of immediacy that chat does not. When a person calls, they are often asking to break through the asynchronous fog. If the first response is an AI agent, the organization is making a statement about access.

That statement may be reasonable. It should not be accidental.

Source: UC Today, “Could Microsoft Copilot Call Delegation Change How Teams Phone Users Handle Incoming Calls?”

Microsoft Turns the Receptionist Into an Agent

Copilot call delegation is easy to describe because the pitch is so clean. A user turns it on in Teams call settings, an incoming caller reaches Copilot instead of the human recipient, and Copilot asks enough questions to decide whether the call deserves interruption. If the call is urgent, it tries to transfer it live. If not, it can take a voicemail-style message or offer a follow-up through Microsoft Bookings.

That flow is not revolutionary in telecom terms. Auto attendants, call queues, executive assistants, voicemail transcription, and contact-center bots have existed for years. What is new is Microsoft’s attempt to make the screening function personal, contextual, and embedded inside the Microsoft 365 work graph rather than bolted onto the PBX.

The feature therefore matters less as a standalone Teams Phone upgrade than as a signal. Microsoft is moving Copilot from “help me write this email” to “act on my behalf while I am unavailable.” That is a much larger claim, and it lands in one of the most sensitive corners of office life: the phone call that happens when someone wants a human being now.

The historical bargain of enterprise communications has been that automation routes calls, while people make judgments. Copilot call delegation blurs that division. It does not merely route by menu choice; it interprets intent, weighs urgency, and creates a record of what happened.

The Calendar Is Now a Gatekeeper

The most interesting part of call delegation is not that Copilot answers the phone. It is that the system treats a user’s attention as a scarce enterprise resource that can be algorithmically guarded. In Microsoft’s worldview, the meeting you are in, the availability on your calendar, and the caller’s stated reason can become inputs into a decision about whether your work should be interrupted.

That is a plausible productivity story. Anyone who has spent a day in Teams knows the pain of overlapping pings, meetings, call pop-ups, and urgent-but-not-really-urgent requests. A competent screening agent could reduce noise, especially for managers, sellers, consultants, clinicians, lawyers, and support leads whose calendars are constantly being punctured by inbound calls.

But productivity tools that decide what matters tend to accumulate power quietly. The first version is a helpful buffer. The second version becomes a workflow dependency. The third version is the thing everyone complains about but cannot switch off because the organization has rebuilt its etiquette around it.

That is the path Teams itself has already traveled. Presence indicators, meeting chat, read receipts, and channel notifications all began as coordination aids. Over time, they became social signals, compliance artifacts, and managerial dashboards. Copilot call delegation could follow the same arc.

If the AI gatekeeper is accurate, polite, and transparent, users may wonder how they lived without it. If it is wrong in subtle ways, the damage will be hard to measure. A missed urgent call is visible. A caller who stops trying because they dislike being screened by a synthetic attendant is not.

Frontier Means Early Access, Not Enterprise Maturity

Microsoft is making this available through Frontier, its early-access program for Microsoft 365 Copilot features. That distinction matters. Frontier is not the same thing as a slow, conservative general availability rollout aimed at risk-averse tenants. It is a channel for organizations willing to test agentic features before the edges have been sanded down.

That makes call delegation both exciting and awkward. The organizations most likely to experiment with it are often the same organizations with complex compliance, privacy, and customer-experience obligations. Large enterprises want the efficiency benefit first, but they also have the most to lose if the tool mishandles a client conversation or creates records they are not prepared to govern.

The Microsoft 365 Copilot license requirement also frames this as part of the premium AI stack, not a basic Teams Phone feature. In practical terms, Microsoft is teaching customers that the future of telephony intelligence lives behind Copilot licensing, not merely Teams Phone licensing. That is commercially logical, but it will shape adoption.

For sysadmins, the immediate issue is not whether the demo looks good. It is who gets to enable it, whether the tenant can centrally control it, what policies apply to summaries, and how the feature interacts with existing call recording, retention, eDiscovery, and consent settings. A screening agent is not just a user preference when it answers calls from customers, patients, citizens, opposing counsel, or suppliers.

The Caller Is Part of the System Too

The most common mistake in evaluating this feature is to look only at the Teams user. From the user’s perspective, Copilot call delegation is a personal productivity shield. From the caller’s perspective, it is an unexpected substitution: they placed a call to a person and reached an AI representative of that person or organization.

That substitution changes the social contract of the call. A human assistant can explain, improvise, apologize, escalate, and understand when a caller is frustrated for reasons that are not captured in a neat intent classification. An AI attendant can do some of that, but callers will test its boundaries quickly.

Microsoft’s documentation says callers are told they are speaking with an attendant rather than a person. That is the minimum viable disclosure. It prevents the worst version of the feature, in which callers unknowingly interact with a synthetic agent that sounds like a colleague or employee.

But disclosure is not the same as acceptance. Some callers will appreciate the efficiency. Others will hear the announcement and conclude that the recipient is hiding behind automation. In high-trust professions, that perception may matter as much as the technical outcome.

There is also a class issue inside business communications that vendors rarely acknowledge. Senior executives have long had assistants screen calls. Junior staff usually have not. AI makes executive-style filtering scalable, which could be liberating for overloaded workers. It could also normalize a world in which everyone is harder to reach, and every spontaneous conversation must negotiate with software first.

Urgency Detection Is Where the Product Becomes Risky

The word “urgent” does a lot of work here. If Copilot simply asked, “Is this urgent?” and passed along the answer, the feature would be a conversational voicemail assistant. If it independently judges urgency from the caller’s words, context, or voice, it becomes a decision system.

That distinction is the heart of the product. A screening agent that cannot triage is not very useful. A screening agent that can triage badly is dangerous.

False positives are annoying. Copilot interrupts you for a call that could have waited, and the value of the feature erodes. False negatives are worse. A customer escalation, safety issue, legal deadline, medical concern, outage report, or executive request gets converted into a summary and a booking link while the human recipient remains in a meeting.

Microsoft can tune models, improve prompts, and gather feedback, but urgency is not a universal property. In one organization, “the server is slow” is routine. In another, it is the first sign of a revenue-impacting outage. In one legal matter, “just following up” is administrative. In another, it is tied to a filing deadline. The same sentence can be noise or alarm depending on the relationship, timing, and stakes.

That is why enterprises should resist treating Copilot’s urgency label as objective. It is an operational guess. It may be a useful guess, but it is still a guess made inside a model that will not fully understand every business context unless the surrounding systems, policies, and user feedback loops are designed carefully.
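One way to treat the urgency label as a guess rather than a fact is to score it against human review during a pilot. The sketch below is illustrative only: the `ScreenedCall` record, its field names, and the sample data are assumptions made for this example, not anything Microsoft exposes. It counts false positives (interruptions that could have waited) and false negatives (urgent calls that were deflected).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScreenedCall:
    """Hypothetical pilot record: the agent's label plus a human reviewer's verdict."""
    call_id: str
    agent_said_urgent: bool
    reviewer_said_urgent: bool


def triage_error_rates(calls):
    """Return false-positive and false-negative counts and rates.

    A rate is None when no reviewed call falls into its denominator.
    """
    fp = sum(1 for c in calls if c.agent_said_urgent and not c.reviewer_said_urgent)
    fn = sum(1 for c in calls if not c.agent_said_urgent and c.reviewer_said_urgent)
    truly_urgent = sum(1 for c in calls if c.reviewer_said_urgent)
    truly_routine = len(calls) - truly_urgent
    return {
        "false_positives": fp,
        "false_negatives": fn,
        "false_positive_rate": fp / truly_routine if truly_routine else None,
        "false_negative_rate": fn / truly_urgent if truly_urgent else None,
    }


# Made-up sample data for illustration only.
sample = [
    ScreenedCall("c1", agent_said_urgent=True, reviewer_said_urgent=True),
    ScreenedCall("c2", agent_said_urgent=True, reviewer_said_urgent=False),
    ScreenedCall("c3", agent_said_urgent=False, reviewer_said_urgent=True),
    ScreenedCall("c4", agent_said_urgent=False, reviewer_said_urgent=False),
    ScreenedCall("c5", agent_said_urgent=False, reviewer_said_urgent=False),
]
rates = triage_error_rates(sample)
print(rates)
```

Because the two error types carry different costs, an organization would likely set separate tolerances for each rather than tracking a single accuracy figure.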

Compliance Is Not a Checkbox at the Start of the Call

The first privacy question is obvious: the caller is speaking to an AI agent that processes speech and produces a summary. In many jurisdictions, that triggers obligations around notice, lawful basis, retention, access, and sometimes recording consent. In the European Union and the United Kingdom, the analysis starts with personal data. A voice conversation about a business matter can include names, contact details, commercial information, health information, employment information, and other sensitive material.

Microsoft’s announcement-style disclosure helps, but it does not answer the whole compliance question. Organizations still need to know what data is processed, where it is stored, how long summaries persist, who can access them, whether administrators can audit them, and whether the original audio is retained or merely processed transiently. The summary may be more discoverable and more durable than the call itself.

That last point is important. AI summaries often feel lightweight because they are shorter than transcripts. Legally and operationally, they can be heavier. A summary distills a conversation into asserted facts, topics, and next steps. If it is wrong, incomplete, or misleading, it can still become part of the enterprise record.

Regulated industries should be especially cautious. Financial services firms may need to preserve certain communications. Healthcare organizations may need to assess whether protected health information is involved. Law firms may need to consider privilege and confidentiality. Public-sector bodies may need to think about records requests and statutory retention.

The deployment question is therefore not “Does Copilot disclose itself?” It is “Can the organization explain the full lifecycle of the data created by the call?” If the answer is no, the feature belongs in a pilot, not in broad production.

Europe’s AI Rules Turn Voice Into a Hard Boundary

The EU AI Act adds another layer because it treats some AI practices as unacceptable, not merely high-risk. One of the most relevant prohibitions concerns emotion recognition in workplace and educational settings, with narrow exceptions. The critical issue for Copilot call delegation is how it determines urgency.

If urgency is inferred only from the semantic content of the caller’s words, the regulatory analysis may be more straightforward. A caller saying “the production system is down” can be classified as urgent without analyzing their emotional state. Text-based classification of intent is not the same thing as biometric emotion inference.

If, however, the system relies on acoustic features such as tone, pitch, stress, pace, or vocal agitation to infer urgency, the analysis changes. Voice can be biometric data, and workplace emotion inference is exactly the category European lawmakers have treated with suspicion. The EU’s concern is not merely privacy but reliability, discrimination, and power imbalance.

This is where Microsoft owes customers more technical specificity. Enterprises do not need marketing language about intelligent screening; they need to know whether the system analyzes what callers say, how callers sound, or both. That distinction could determine whether a deployment is acceptable in some European contexts.

The recipient matters too. A work call routed through Teams Phone is not legally detached from the workplace simply because the caller is external. The employee being protected from interruption is in a workplace context, and the organization is deploying the system as part of work infrastructure. If voice-derived emotional inference is involved, the compliance problem may not be limited to the caller.

For now, the prudent approach is simple: ask Microsoft directly, document the answer, and do not assume that “urgency detection” is legally neutral. The phrase sounds operational. Depending on the model design, it may be regulatory dynamite.

The Agentic Copilot Strategy Finally Has a Phone Number

Call delegation fits neatly into Microsoft’s broader Copilot story. The company has spent the last two years moving from chat interfaces toward agents that can operate across files, calendars, inboxes, meetings, and business workflows. The slogan has shifted from assistance to delegation.

That shift is not cosmetic. A chat assistant waits for a prompt. An agent accepts an objective, monitors context, and takes steps toward completion. Copilot call delegation brings that model into real-time communications, where the action is immediate and the cost of a wrong step is felt by another human being.

The phone is a particularly revealing test bed because it resists the illusion of perfect automation. Documents can be redrafted. Slides can be regenerated. Email suggestions can be ignored. A live caller cannot be un-annoyed, and a missed escalation cannot always be recovered by reading a summary later.

This is why the feature feels more consequential than yet another Copilot panel in an Office app. It is one thing for AI to help after a meeting. It is another for AI to decide whether a meeting should be interrupted by the outside world.

Microsoft is betting that users will accept that trade because attention is broken. The company is probably right. The modern knowledge worker’s day is a stack of interruptions pretending to be collaboration, and any tool that promises fewer interruptions will find eager testers.

Teams Phone Becomes a Data Product

Teams Phone has always been more than a cloud PBX, but Copilot call delegation pushes it further into data-product territory. Calls become structured events. Caller intent becomes metadata. Summaries become searchable artifacts. Follow-ups become workflow objects.

That may be extremely useful. A salesperson can return from a meeting with a clean summary of missed calls and next actions. A manager can avoid context switching while still catching true emergencies. A consultant can give clients an orderly path to book time rather than leaving voicemail in a void.

But the more structure Microsoft extracts from calls, the more governance matters. Voice used to be ephemeral unless recorded. Now even unrecorded calls can produce durable summaries and action items. That changes what administrators must think about when configuring Teams Phone.

The default enterprise instinct will be to ask whether the data stays inside Microsoft 365 compliance boundaries. That is necessary, but not sufficient. The harder question is whether existing policies were written for this kind of artifact in the first place. Many retention schedules distinguish between voicemail, chat, email, meeting recordings, and call records. AI-generated call summaries may not fit neatly into any of them.

The same problem will appear in eDiscovery. A summary of a screened call may be relevant to litigation or investigation, especially if it influenced a business decision. If summaries are retained, searchable, and tied to users, they are not casual notes. They are records.

The Human Factors Will Decide Adoption

Technology buyers often underestimate caller psychology because it is difficult to model in a procurement spreadsheet. The success of Copilot call delegation will depend heavily on how people feel when they encounter it. The best implementation will feel like a polished assistant. The worst will feel like a polite wall.

Voice design matters. So does latency. So does the phrasing of the disclosure. So does the handoff when Copilot decides a call is urgent. A caller who has already explained the issue to an AI agent should not have to repeat everything to the human recipient. If that happens often, the system will feel like another IVR maze with better branding.

Internal culture matters too. In some organizations, using AI to screen calls will be seen as efficient and modern. In others, it may be interpreted as aloof or evasive. That perception will differ by role. An executive using it may seem protected. A support engineer using it during an incident may seem unreachable.

There is also a risk of etiquette fragmentation. If some employees enable call delegation and others do not, callers will learn inconsistent rules. If one department uses it for external clients and another bans it, the organization may project confusion. If managers expect staff to remain directly reachable while using the feature themselves, the politics will be obvious.

This is why the rollout should not be left to individual enthusiasm. Copilot call delegation needs organizational norms: who may use it, when it is appropriate, what caller categories are excluded, and how missed urgent calls are reviewed.

The Admin Console Is Where the Real Story Will Play Out

For WindowsForum readers who live in Microsoft 365 admin centers, the practical concern is control. A feature like this should not arrive as a user-level novelty that quietly creates compliance obligations. It needs tenant-level governance, clear policy hooks, and reporting that shows how the agent is being used.

Administrators will want to know whether call delegation can be enabled or blocked by group, geography, role, license, or calling policy. They will want audit events. They will want retention integration. They will want a way to distinguish between a human-answered call, a Copilot-screened call, a voicemail, and a Bookings outcome.

They will also want failure visibility. How often did Copilot attempt a transfer? How often did the caller abandon the interaction? How often did users override, correct, or complain about urgency classification? Without metrics, organizations will be relying on vibes.
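As a rough sketch of what that reporting could look like, the Python snippet below computes those rates from a list of call records. The record shape, the outcome categories, and every field name are hypothetical. The feature's reporting format is not described here, so this illustrates the metrics themselves, not an integration with any real Teams interface.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    # Hypothetical outcome categories, mirroring the distinctions admins will want.
    HUMAN_ANSWERED = "human_answered"
    COPILOT_TRANSFERRED = "copilot_transferred"
    VOICEMAIL = "voicemail"
    BOOKING = "booking"
    ABANDONED = "abandoned"


@dataclass
class CallRecord:
    outcome: Outcome
    transfer_attempted: bool = False  # the agent tried a live transfer
    user_overrode: bool = False       # recipient later flagged the urgency decision as wrong


def screening_metrics(records):
    """Return simple rates over the calls that the agent screened."""
    screened = [r for r in records if r.outcome is not Outcome.HUMAN_ANSWERED]
    total = len(screened)
    if total == 0:
        return {"screened_calls": 0}
    counts = Counter(r.outcome for r in screened)
    return {
        "screened_calls": total,
        "transfer_attempt_rate": sum(r.transfer_attempted for r in screened) / total,
        "abandonment_rate": counts[Outcome.ABANDONED] / total,
        "override_rate": sum(r.user_overrode for r in screened) / total,
    }


# Made-up sample data, for illustration only.
records = [
    CallRecord(Outcome.HUMAN_ANSWERED),
    CallRecord(Outcome.COPILOT_TRANSFERRED, transfer_attempted=True),
    CallRecord(Outcome.VOICEMAIL, transfer_attempted=True, user_overrode=True),
    CallRecord(Outcome.BOOKING),
    CallRecord(Outcome.ABANDONED),
]
print(screening_metrics(records))
```

Human-answered calls are excluded from the denominator so that the rates describe only the interactions the agent actually handled. Whether a real deployment can separate those categories this cleanly is exactly the question administrators should put to Microsoft.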

Security teams will ask a different set of questions. Can a caller manipulate the agent by claiming urgency? Can prompt-injection-style phrases influence summaries or next steps? Can the agent be tricked into revealing calendar details, availability, internal names, or other information? How much context does it expose during the screening conversation?

Those are not science-fiction concerns. Any agent that converses with untrusted external parties becomes part of the attack surface. The fact that the interaction happens over voice rather than chat does not make it immune to adversarial behavior. It merely changes the interface.

A Pilot Should Look Like a Governance Exercise, Not a Demo

The right first deployment is narrow. Pick users whose call patterns are high-volume but not safety-critical. Avoid privileged legal, medical, regulatory, or incident-response workflows until the organization understands the feature’s behavior. Tell callers clearly what is happening. Tell users just as clearly what Copilot can and cannot be trusted to decide.

The pilot should measure more than user satisfaction. It should examine call abandonment, transfer accuracy, summary quality, complaint rates, booking completion, and false urgency outcomes. It should also review the generated summaries as records, not just as productivity aids.
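Transfer accuracy and false urgency outcomes become concrete once a human reviewer labels a sample of screened calls after the fact. The sketch below assumes such a review process exists. The `ReviewedCall` type and its fields are invented for illustration and do not correspond to any documented Copilot output.

```python
from dataclasses import dataclass


@dataclass
class ReviewedCall:
    copilot_urgent: bool   # what the agent decided (hypothetical field)
    reviewer_urgent: bool  # what a human reviewer decided afterwards


def urgency_report(calls):
    """Compare the agent's urgency decisions against human review."""
    tp = sum(c.copilot_urgent and c.reviewer_urgent for c in calls)
    fp = sum(c.copilot_urgent and not c.reviewer_urgent for c in calls)
    fn = sum(not c.copilot_urgent and c.reviewer_urgent for c in calls)
    tn = sum(not c.copilot_urgent and not c.reviewer_urgent for c in calls)
    truly_urgent = tp + fn
    truly_routine = fp + tn
    return {
        "missed_urgent": fn,   # urgent calls the agent did not escalate
        "false_urgency": fp,   # routine calls the agent escalated anyway
        "missed_urgent_rate": fn / truly_urgent if truly_urgent else None,
        "false_urgency_rate": fp / truly_routine if truly_routine else None,
    }


# Made-up review sample, for illustration only.
sample = [
    ReviewedCall(copilot_urgent=True, reviewer_urgent=True),
    ReviewedCall(copilot_urgent=True, reviewer_urgent=False),
    ReviewedCall(copilot_urgent=False, reviewer_urgent=True),
    ReviewedCall(copilot_urgent=False, reviewer_urgent=False),
    ReviewedCall(copilot_urgent=False, reviewer_urgent=False),
]
print(urgency_report(sample))
```

The two error types are reported separately because they cost different things: a false urgency wastes the recipient's attention, while a missed urgent call is the failure a summary cannot repair later.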

Enterprises should test edge cases deliberately. Have callers speak with accents. Have them provide ambiguous reasons. Have them express frustration without stating urgency. Have them state urgency dishonestly. Have them mention sensitive personal data. Have them request information the agent should not disclose.

The point is not to prove that Copilot fails. The point is to discover how it fails before customers, patients, partners, or regulators do. Mature AI adoption is not optimism with a license assigned; it is controlled exposure with evidence.

Microsoft’s Frontier label should encourage that mindset. Early access is an invitation to learn, not a permission slip to deploy everywhere.

The Risk Is Not That Copilot Answers the Phone

The instinctive critique of Copilot call delegation is that AI should not answer calls meant for people. That is too simple. Organizations have used non-human call handling for decades, and many callers prefer a quick automated path to a delayed human one. A well-designed AI attendant could be better than voicemail, better than a ringing phone, and better than a harried receptionist guessing who matters.

The deeper risk is that Copilot’s decision becomes invisible infrastructure. Once users trust it, they may stop reviewing missed-call summaries carefully. Once managers like the productivity gains, they may encourage broader use without revisiting caller experience. Once records accumulate, legal and compliance teams may discover the artifact trail after the fact.

This is the familiar Microsoft 365 pattern. A feature begins as convenience, becomes workflow, and then becomes governance debt. Teams chat, meeting recordings, Loop components, shared files, Planner tasks, and Copilot outputs all follow the same gravitational pull: useful things become records because people use them to do real work.

Call delegation adds an emotional dimension because phone calls still carry a sense of immediacy that chat does not. When a person calls, they are often asking to break through the asynchronous fog. If the first response is an AI agent, the organization is making a statement about access.

That statement may be reasonable. It should not be accidental.

The Fine Print That Should Shape Every Pilot

Copilot call delegation is promising enough to test and sensitive enough to slow down. The organizations that get value from it will be the ones that treat it as a communications policy change, not a shiny Teams setting.

- Microsoft has made Copilot call delegation available through Frontier for Microsoft 365 Copilot-licensed organizations, which means it should be treated as early-access functionality rather than settled enterprise plumbing.

- The feature changes Teams Phone from a call-routing service into an AI-mediated screening layer that can judge urgency, attempt live transfer, and generate summaries.

- Caller disclosure is necessary but not sufficient, because organizations still need retention, access, audit, and data-processing answers for the summaries and any speech processing involved.

- European deployments should clarify whether urgency detection uses only semantic content or also analyzes acoustic voice characteristics, because that distinction may matter under workplace emotion-recognition restrictions.

- Admins should demand policy controls, auditability, and usage metrics before allowing broad adoption, especially in regulated or client-facing environments.

- A serious pilot should test false negatives, caller abandonment, summary accuracy, sensitive-data handling, and adversarial caller behavior before expanding the feature.

Source: UC Today, “Could Microsoft Copilot Call Delegation Change How Teams Phone Users Handle Incoming Calls?”