Microsoft’s Copilot has moved beyond calendar fixes and document drafting into the most intimate ledger most people own: their medical record, wearable telemetry, and lab results. Today the company is previewing Copilot Health, a privacy‑segmented Copilot experience that promises to synthesize electronic health records (EHRs), continuous device data, and laboratory findings into plain‑language insights that help users prepare for clinical visits and better understand personal health trends.

Source: Digital Watch Observatory, “AI-powered Copilot Health platform introduced by Microsoft”

Background / Overview

Microsoft’s consumer Copilot strategy has been expanding rapidly across productivity, search, and specialized verticals. Copilot Health represents the company’s clearest move yet into consumer‑facing healthcare: a feature that collects and normalizes data from EHRs, wearable devices, and direct‑to‑consumer lab services, then uses generative and clinical AI tools to describe patterns, highlight anomalies, and produce “appointment prep” briefings and suggested questions for clinicians. Microsoft positions the product as an aid for understanding, not a replacement for professional medical care.

This step is both strategic and predictable. Platforms that become the place users trust to store and query their personal information (calendars, documents, email) are natural candidates to add health as another domain. Microsoft already runs several clinically oriented efforts (for example, Dragon Copilot for clinicians and a slate of healthcare partnerships), and Copilot Health stitches those threads into a consumer‑oriented workspace where wearable telemetry and patient records can be queried conversationally.

What Copilot Health says it does

Copilot Health’s public preview (U.S. rollout, waitlist model) is built around four core capabilities:

- Data aggregation: Bring together EHRs, lab results, and wearable streams into a single, personal health profile.

- Pattern detection and explanation: Use AI to surface trends (sleep, activity, heart rate variability, lab drift) and explain their likely significance in accessible language.

- Appointment preparation: Generate a concise summary and prioritized questions to take to your clinician.

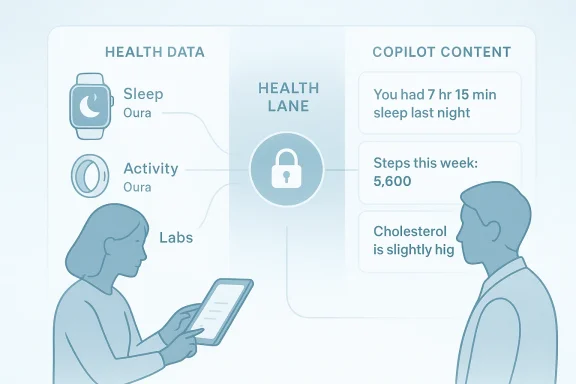

- Privacy segmentation and governance: Keep health conversations and stored records in a separate, encrypted “health lane” inside Copilot that Microsoft says will not be used to train its foundation models.
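The “lab drift” idea in the pattern‑detection bullet can be illustrated with a minimal Python sketch. It is not Microsoft’s method. It fits an ordinary least‑squares slope to dated values for one analyte and reports drift only when the change per year exceeds a caller‑chosen threshold. The function names, the sample values, and the threshold are all hypothetical.

```python
from datetime import date


def lab_drift_per_year(samples: list[tuple[date, float]]) -> float:
    """Ordinary least-squares slope of value versus time, in units per year.

    `samples` is a list of (collection_date, value) pairs for a single analyte.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate a trend")
    origin = samples[0][0]
    # Express each collection date as fractional years since the first sample.
    xs = [(d - origin).days / 365.25 for d, _ in samples]
    ys = [v for _, v in samples]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("samples must span more than one date")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def describe_drift(analyte: str, samples: list[tuple[date, float]],
                   threshold_per_year: float) -> str:
    """Plain-language summary; the threshold is a hypothetical, caller-chosen cutoff."""
    slope = lab_drift_per_year(samples)
    if abs(slope) < threshold_per_year:
        return f"{analyte}: no meaningful drift ({slope:+.2f}/yr)"
    direction = "rising" if slope > 0 else "falling"
    return (f"{analyte}: {direction} about {abs(slope):.2f} units/yr; "
            "worth raising with your clinician")
```

A real system would also need reference ranges, awareness of assay changes between labs, and uncertainty on the slope. A slope alone says nothing about clinical significance.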

How the ingestion pipeline is described

Microsoft’s public materials describe a connector model: device and app integrations (Apple Health, Fitbit APIs, vendor connectors), EHR access through HealthEx‑style record locators and FHIR‑based APIs, and lab feeds from consumer testing platforms. Data is normalized into timelines and contextualized with visit summaries and clinical notes when available. This lets the generative layer reference both continuous device telemetry and episodic clinical events. Microsoft also indicates the feature draws on its research into clinical AI systems, notably the Microsoft AI Diagnostic Orchestrator (MAI‑DxO) and related research programs, to ground outputs in clinical reasoning patterns rather than free‑form speculation.

Why Microsoft built Copilot Health: the strategic logic

There are three converging incentives powering Copilot Health:

- Product differentiation: Health is one of the few deeply personal spaces where an end‑to‑end assistant can add recurring, high‑value interactions (daily telemetry reviews, prep for periodic visits).

- Data lock‑in and ecosystem expansion: If users aggregate sensitive medical history and device telemetry inside Copilot, Microsoft strengthens the platform’s centrality across devices and subscriptions.

- Market momentum: Competitors (OpenAI’s health features, Amazon’s health chatbot expansions, and several health‑AI start‑ups) are racing to own patient‑facing interfaces — Microsoft sees Copilot as the most natural consumer front door.

Privacy, security, and governance: Microsoft’s promises and limits

Microsoft emphasizes three privacy controls around Copilot Health:

- Segregated storage and access controls: Health data and conversations will be stored separately from general Copilot interactions and protected by encryption and fine‑grained access controls. Microsoft’s Copilot privacy documentation reiterates that files and uploaded content are handled with explicit retention and deletion policies. It also states that specific Copilot boundaries exist to limit model training.

- Not used for model training: Microsoft has repeated that content and conversations in the health lane will not be used to train its foundation models. For enterprise and Microsoft 365 products the company has long published opt‑out controls and contractual commitments; Copilot Health inherits that design principle and the product documentation highlights user controls.

- Third‑party certification and governance: Microsoft points to ISO/IEC 42001 (AI Management Systems) alignment and related audits as evidence of governance maturity for Copilot services — Microsoft says its Copilot family has attained ISO/IEC 42001 certification at the product level, and the company frames this as a structural safeguard for responsible AI operations.
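The segregation and no‑training commitments are policy statements, but the general shape of such a control is easy to picture. The following Python sketch is purely illustrative and does not describe Microsoft’s implementation. Each record carries a lane tag, and reads require an explicit per‑lane grant. The health lane is excluded from any training export, and users can delete a lane’s records. Encryption, auditing, and retention timers are deliberately left out. Every name here (`Lane`, `SegregatedStore`, `training_export`) is hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Lane(Enum):
    GENERAL = "general"
    HEALTH = "health"


@dataclass(frozen=True)
class Record:
    user_id: str
    lane: Lane
    content: str


@dataclass
class SegregatedStore:
    """Toy store that keeps each lane in its own partition (hypothetical design)."""

    partitions: dict[Lane, list[Record]] = field(
        default_factory=lambda: {lane: [] for lane in Lane}
    )

    def put(self, record: Record) -> None:
        self.partitions[record.lane].append(record)

    def read(self, user_id: str, lane: Lane, granted: set[Lane]) -> list[Record]:
        # Fine-grained access control: the caller needs an explicit grant for this lane.
        if lane not in granted:
            raise PermissionError(f"no grant for the {lane.value} lane")
        return [r for r in self.partitions[lane] if r.user_id == user_id]

    def training_export(self) -> list[Record]:
        # Policy sketch: health-lane records are never eligible for model training.
        exported: list[Record] = []
        for lane, records in self.partitions.items():
            if lane is not Lane.HEALTH:
                exported.extend(records)
        return exported

    def delete(self, user_id: str, lane: Lane) -> int:
        # User-initiated deletion; returns how many records were removed.
        before = len(self.partitions[lane])
        self.partitions[lane] = [r for r in self.partitions[lane] if r.user_id != user_id]
        return before - len(self.partitions[lane])
```

The point of the sketch is structural. If exclusion is enforced at the storage boundary rather than left to each downstream consumer, a "no training" promise becomes something an auditor can actually check.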

The clinical and safety challenge

Copilot Health is explicitly designed to support conversations with clinicians, not to replace them. That caveat appears throughout Microsoft’s consumer guidance: the assistant is for education and preparation, not diagnosis or treatment. Yet the technical and social dynamics of consumer health AI complicate that boundary:

- Wearable data and consumer lab results vary widely in clinical quality. Devices designed for step counting or sleep tracking are useful for trends but are not substitutes for clinical diagnostics. Integrating these streams into a clinical narrative requires careful uncertainty calibration and provenance transparency, so that users understand where signals come from.

- Generative models can produce plausible but incorrect explanations — the classic hallucination problem — which is especially dangerous in health contexts where confident text can override patient intuition about uncertainty. Microsoft’s use of grounded clinical content and the MAI‑DxO research program seeks to lower this risk, but independent evaluation is necessary.

- Clinical liability and the clinician‑patient relationship: If Copilot Health produces a list of “urgent” items or a suggested medication question, who is responsible for errors or downstream mismanagement? Microsoft frames outputs as preparatory; the real world makes those outputs part of care conversations, and legal and regulatory frameworks are still catching up.

Triaging accuracy: a practical checklist

Clinicians, health systems, and informed users should apply a short triage before relying on Copilot Health outputs:

- Confirm provenance: check whether a flagged lab came from a certified lab or a consumer panel.

- Assess device accuracy: treat single‑point wearable anomalies cautiously; emphasize trends over isolated readings.

- Use Copilot outputs as questions for clinicians, not treatment plans.

- Keep human verification: any recommendation that would change medication, order imaging, or initiate invasive tests should be validated by a licensed clinician.
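Two checklist items, keeping provenance visible and favoring trends over isolated readings, can be combined in a short Python sketch. This is one simple heuristic chosen for illustration and not how Copilot Health triages data. It computes a z‑score against a personal baseline and requires several consecutive outliers before calling something a trend. The window sizes, the threshold, and the source labels are assumptions.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass(frozen=True)
class Reading:
    value: float
    source: str  # provenance label, e.g. "wearable" or "certified_lab" (hypothetical labels)


def _z_scores(baseline: list[float], values: list[float]) -> list[float]:
    """Absolute z-scores of `values` against the baseline mean and sample stdev."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        # Perfectly flat baseline: treat any change as maximally unusual.
        return [0.0 if v == mu else float("inf") for v in values]
    return [abs(v - mu) / sigma for v in values]


def triage_signal(name: str, readings: list[Reading], baseline_window: int = 14,
                  z_threshold: float = 2.0, min_consecutive: int = 3) -> str:
    """Prefer sustained trends over isolated spikes, and report where the data came from."""
    if len(readings) < baseline_window + min_consecutive:
        return f"{name}: not enough history to judge a trend"
    values = [r.value for r in readings]
    baseline = values[-(baseline_window + min_consecutive):-min_consecutive]
    recent = values[-min_consecutive:]
    scores = _z_scores(baseline, recent)
    sources = sorted({r.source for r in readings[-min_consecutive:]})
    if all(s > z_threshold for s in scores):
        return (f"{name}: sustained change over the last {min_consecutive} readings "
                f"(sources: {', '.join(sources)}); raise it with your clinician")
    if scores[-1] > z_threshold:
        return f"{name}: single unusual reading; recheck before drawing conclusions"
    return f"{name}: no notable change"
```

Requiring several consecutive outliers trades sensitivity for fewer false alarms. A clinically validated rule would tune these parameters per signal and per device.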

Interoperability and ecosystem partners — the plumbing that matters

A headline strength of Microsoft’s pitch is that Copilot Health is designed as a connector platform:

- Device connectors: Apple Health, Fitbit, Oura, and other partner APIs provide continuous telemetry streams that Copilot Health can ingest and summarize. Microsoft’s consumer pages and press reporting list support for dozens of wearable device sources.

- EHR and provider directory connectivity: Microsoft points to HealthEx and related record‑locator services as the route to provider data across thousands of hospitals and clinics — a pragmatic approach that relies on existing FHIR and TEFCA‑style infrastructures to assemble a patient’s longitudinal record. Healthcare IT reporting shows HealthEx’s ability to surface Epic‑sourced patient records and act as a patient‑directed access layer.

- Laboratory partners: Consumer lab vendors such as Function (a direct‑to‑consumer testing company) are already providing patient‑facing panels and APIs; Microsoft’s preview materials list lab result ingestion as part of the Copilot Health profile. Function and similar services are a double‑edged sword: they broaden the pool of available data but introduce variability in clinical interpretation and lab standards.
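To make the connector idea concrete, here is a minimal Python sketch that maps a FHIR R4 `Observation` and a wearable sample into one provenance‑tagged timeline. The `Observation` uses the standard `code.coding`, `valueQuantity`, and `effectiveDateTime` elements. The wearable payload shape, the source labels, and the function names are hypothetical. Real connectors such as Apple Health, Fitbit, or HealthEx expose their own formats, which this sketch does not reproduce.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class TimelineEvent:
    when: datetime
    name: str
    value: float
    unit: str
    source: str  # provenance label kept with every data point


def from_fhir_observation(obs: dict, source: str) -> TimelineEvent:
    """Map a FHIR R4 Observation (JSON) carrying valueQuantity and effectiveDateTime."""
    if obs.get("resourceType") != "Observation":
        raise ValueError("expected a FHIR Observation resource")
    coding = obs["code"]["coding"][0]
    qty = obs["valueQuantity"]
    return TimelineEvent(
        # Sketch assumes timestamps without UTC offsets (see note below).
        when=datetime.fromisoformat(obs["effectiveDateTime"]),
        name=coding.get("display", coding["code"]),
        value=float(qty["value"]),
        unit=qty.get("unit", ""),
        source=source,
    )


def from_wearable_sample(sample: dict, source: str) -> TimelineEvent:
    """Map a hypothetical vendor sample shaped like {metric, value, unit, timestamp}."""
    return TimelineEvent(
        when=datetime.fromisoformat(sample["timestamp"]),
        name=sample["metric"],
        value=float(sample["value"]),
        unit=sample["unit"],
        source=source,
    )


def build_timeline(events: list[TimelineEvent]) -> list[TimelineEvent]:
    """Merge events from all connectors into one chronological timeline."""
    return sorted(events, key=lambda e: e.when)
```

Keeping `source` on every event is what later lets a summary say whether a value came from a certified lab, a consumer panel, or a device. The sketch assumes timestamps without UTC offsets. Mixing offset‑aware and naive timestamps would make the sort fail, so a real pipeline would normalize time zones first.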

Governance, certification, and external oversight

Microsoft cites ISO/IEC 42001 certification as a governance milestone for Copilot products. This is meaningful because 42001 is the new international standard for AI management systems and offers a framework for risk assessment, lifecycle controls, and accountability. Microsoft’s public compliance materials and community posts indicate that Microsoft 365 Copilot has achieved ISO/IEC 42001 certification. They also indicate that the company is applying similar governance processes across Copilot experiences. That said, ISO/IEC 42001 is a management‑system standard: it raises the floor on governance controls but does not certify product safety or clinical efficacy in a narrow technical sense.

Two important governance points to track as Copilot Health scales:

- Independent clinical evaluation: external validation studies that compare Copilot Health’s outputs to clinician review will be essential to quantify accuracy, false positives, and missed findings.

- Regulatory posture: consumer health tools that remain in the “information” or “education” bucket can avoid medical‑device classification, but that boundary is fragile. If Copilot Health’s outputs begin to recommend specific clinical actions, regulators may require medical‑device level validation. Microsoft’s public guidance currently frames the product as non‑diagnostic, but product changes and feature creep will continuously test that boundary.

User experience and real‑world utility

In practical terms, Copilot Health’s potential value to typical users is straightforward:

- Reduce confusion: a patient with fragmented records (a hospitalist note, a telehealth lab, and two months of smartwatch telemetry) currently faces a messy synthesis task. Copilot Health promises to condense these items into a readable timeline with flagged concerns.

- Improve clinician prep: well‑structured patient summaries and prioritized questions can help clinicians focus visits, possibly making brief appointments more productive.

- Ongoing monitoring: when configured appropriately, trends (sleep, resting heart rate, activity) might help users and clinicians detect early changes that merit attention.

To deliver that value reliably, the experience should:

- Surface provenance and confidence scores,

- Let users correct errors (link a different provider, fix device attribution), and

- Provide explicit next‑step pathways (e.g., “Share this summary with your clinician” with a secure export workflow).
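The three design requirements above can be sketched in a few lines of Python. Each finding carries a source and a confidence score. The user can reattribute a finding without mutating the original. The output is plain text suitable for a secure export. The `Finding` fields, the ranking rule (priority first, then confidence), and the sample questions are assumptions made for illustration, not Copilot Health’s format.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Finding:
    text: str
    source: str        # provenance label shown to the user
    confidence: float  # 0.0 to 1.0; how the score is produced is out of scope here
    priority: int      # 1 = raise first in the visit


def correct_source(findings: list[Finding], index: int, new_source: str) -> list[Finding]:
    """Return a new list with one finding reattributed (e.g. wrong device or provider)."""
    updated = list(findings)
    updated[index] = replace(findings[index], source=new_source)
    return updated


def render_visit_prep(findings: list[Finding], max_items: int = 5) -> str:
    """Plain-text summary, ordered by priority and then by descending confidence."""
    ranked = sorted(findings, key=lambda f: (f.priority, -f.confidence))[:max_items]
    lines = ["Questions for my clinician:"]
    for i, f in enumerate(ranked, start=1):
        lines.append(f"{i}. {f.text} [source: {f.source}; confidence: {f.confidence:.0%}]")
    return "\n".join(lines)
```

Showing the source and confidence inline keeps every question traceable when the summary is shared. Returning a new list on correction preserves the original attribution for any audit trail.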

Risks and open questions

No product launch eliminates tradeoffs. For Copilot Health, the most important unanswered questions are:

- How will Microsoft verify and label the clinical quality of consumer lab panels and third‑party device streams?

- What are the precise retention windows, and how will emergency disclosures (for example, flagged critical lab values) be managed in consumer workflows?

- How will Microsoft measure and report accuracy, bias, and missed‑event rates in Copilot Health outputs?

- What legal and regulatory exposures might arise when the assistant’s suggestions affect clinical decisions?

How clinicians, health systems, and regulators should respond

For clinicians and health leaders anticipating Copilot Health adoption among their patients, a pragmatic three‑step approach will reduce risk:

- Update intake processes: ask patients whether they used consumer AI summaries before visits and include that context in the history‑taking workflow.

- Define clear verification standards: create protocols for validating consumer lab results and device‑derived signals before acting on them.

- Advocate for transparency: require vendors to publish evaluation metrics, data‑use policies, and error‑reporting procedures.

What to watch next

As Copilot Health moves from preview to broader availability, watch for the following milestones. They will define whether this product is transformative or merely fashionable:

- Publication of independent evaluations or white papers describing accuracy, false‑positive rates, and clinical concordance. Microsoft has already signaled that research outputs will follow; independent peer review will matter.

- Real‑world integration stories from health systems that accept patient‑shared Copilot summaries into workflows, including how clinicians reconcile AI‑generated histories with charted EHR data.

- Feature changes that move Copilot Health from “explain and prepare” toward “recommend and triage” — the regulatory and safety bar rises dramatically at that point.

- The transparency of training and governance decisions: Microsoft’s assertion that health lane data won’t be used for model training is meaningful; ongoing audits and clear, user‑accessible opt‑out mechanisms will be a test of trust.

Bottom line

Copilot Health is a consequential product. It packages wearable telemetry, lab results, and clinical records into a single conversational assistant and couples that experience with Microsoft’s broad cloud, identity, and governance infrastructure. That combination could materially improve patient understanding and clinician communication. It will do so only if Microsoft and its partners deliver strong provenance, meaningful independent evaluation, and clear behavioral guards to prevent over‑reliance on imperfect AI outputs.

Microsoft’s promises (segregated storage, no training on health lane data, ISO/IEC 42001 governance) are meaningful steps toward safer consumer health AI, and the company’s scale makes this an influential experiment for the industry. Still, the real measure of success will be whether Copilot Health reduces clinical confusion without adding new vectors for error. It must also prove useful across diverse patient populations and devices. Finally, regulators, clinicians, and patients need to see the transparency and independent evidence required to trust the results.

Practical guidance for readers today

If you’re curious to try Copilot Health when the preview expands, keep these pragmatic rules in mind:

- Treat AI summaries as preparation tools, not diagnoses.

- Verify provenance on every flagged lab or clinically actionable suggestion.

- Share Copilot summaries with your clinician as a discussion aid, not as a prescription.

- Use built‑in privacy controls and understand retention settings before uploading sensitive records.
