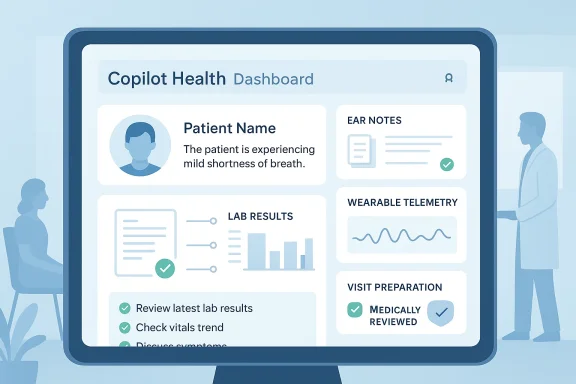

Microsoft’s latest Copilot push has a new and arguably personal ambition: to become the place where you hand over your medical records and wearable data and ask for an intelligible summary, a second opinion, or a prep sheet for your next doctor’s visit. The company’s Copilot Health preview promises to pull together electronic health records, lab results and continuous telemetry from consumer wearables (Apple Health, Fitbit, Oura and the like), synthesize that data with grounded medical guidance, and return personalized, conversational insights. It also tries to stake out a safer, more “grounded” approach to health queries than earlier chatbot experiments (https://microsoft.ai/news/health-check-how-people-use-copilot-for-health/).

Background: why Microsoft is putting Copilot inside your health data


Microsoft’s Copilot family has expanded rapidly from office assistant to platform: it now spans Windows, Edge, Microsoft 365 and several vertical copilots for specialized workflows. Over the past two years Microsoft has layered health-oriented features and partnerships on top of that core: enterprise-facing clinical assistants like Dragon Copilot for hospitals, licensing arrangements with medically reviewed publishers, and product-level health connectors for consumer Copilot experiences. The move to a consumer-facing Copilot Health is the logical extension of that trajectory, aiming to make Copilot the everyday front door for the health questions people already ask their phones and PCs.

Industry reporting and previews frame Copilot Health as a “space” inside Copilot where users can upload or link personal medical files and wearable data, then ask natural-language questions such as “Why does my resting heart rate trend up after late-night flights?” or “Do my recent labs show anything my doctor should see before the appointment?” The feature is being rolled out gradually through limited previews and, in many cases, is currently limited to U.S. users on specific platforms (web and iOS in early availability).

What exactly is Copilot Health? A practical overview

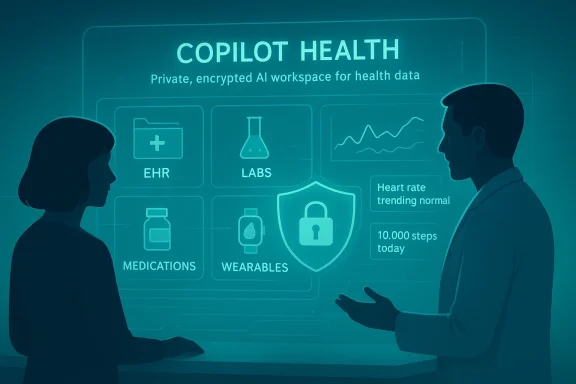

Copilot Health is positioned as a user-controlled, permissioned environment inside Copilot that attempts three things at once:

- Data ingestion and normalization: take in electronic health records (EHRs), lab PDFs, and continuous streams from wearables and map them into a form the assistant can reason across.

- Grounded medical guidance: surface answers that are anchored to licensed, medically reviewed content (Microsoft has said Copilot Health will use sources such as Harvard Health Publishing to reduce hallucinations).

- Personalized explanations and next steps: synthesize signals across data sources and present a readable summary, risk flags, and suggested actions (e.g., “Book a follow-up with cardiology,” or “Review this lab with your GP”).

Supported inputs and connectors (what Microsoft has said so far)

Public reporting and company previews indicate Copilot Health will accept several classes of inputs:

- Consumer wearable data: Apple Health, Fitbit, Oura and other device ecosystems that let users export or link activity, heart rate, sleep and similar signals.

- Electronic health records and lab reports: EHR exports, PDF lab reports and clinical notes uploaded or connected through PHR (personal health record) bridges or third-party connectors. Some vendors and intermediaries are already being named as partners for record transfer.

- Manually uploaded documents: scanned PDFs, images and patient-facing documents that the Copilot can OCR, parse and summarize.
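
To make the normalization idea concrete, here is a minimal Python sketch of mapping two differently shaped inputs onto one common record type so they can sit on a single timeline. Everything in it is an assumption made for illustration: the `Observation` type, the field names, the input shapes and the sample values are hypothetical and do not reflect Microsoft's data model or any connector's real export format.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Observation:
    """Hypothetical common record for one measurement."""
    source: str        # e.g. "wearable" or "lab_report"
    metric: str        # normalized metric name
    value: float
    unit: str
    taken_at: datetime


def from_wearable_sample(sample: dict) -> Observation:
    # Assumed input shape: {"bpm": 58, "ts": <Unix seconds>}
    return Observation(
        source="wearable",
        metric="resting_heart_rate",
        value=float(sample["bpm"]),
        unit="bpm",
        taken_at=datetime.fromtimestamp(sample["ts"], tz=timezone.utc),
    )


def from_lab_row(row: dict) -> Observation:
    # Assumed input shape: {"test": ..., "result": ..., "units": ..., "date": "YYYY-MM-DD"}
    return Observation(
        source="lab_report",
        metric=row["test"].strip().lower().replace(" ", "_"),
        value=float(row["result"]),
        unit=row["units"],
        taken_at=datetime.fromisoformat(row["date"]).replace(tzinfo=timezone.utc),
    )


# Made-up sample inputs; the values carry no clinical meaning.
timeline = sorted(
    [
        from_lab_row({"test": "LDL Cholesterol", "result": "131",
                      "units": "mg/dL", "date": "2026-01-05"}),
        from_wearable_sample({"bpm": 58, "ts": 1767225600}),
    ],
    key=lambda o: o.taken_at,
)

for obs in timeline:
    print(obs.taken_at.date(), obs.source, obs.metric, obs.value, obs.unit)
```

The design choice worth noting is that every record keeps its `source` and `unit`, so later steps can still tell a lab value from a wearable reading instead of treating all numbers alike.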

How Copilot Health works in practice (what you can expect)

The user experience Microsoft and reporters describe typically follows these steps:

- User grants permission to link or upload data (wearable connector, EHR export, PDFs).

- Copilot ingests and normalizes the inputs, extracting numeric values and timeline trends.

- The assistant answers questions using a mix of your data and grounded medical content, and it cites or attributes where guidance comes from when possible.

- The output includes a plain‑English summary, an interpretation of key changes or flags, and suggested next steps (e.g., “consider retesting,” “contact your provider if symptoms persist”).
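
The attribution step described above can be sketched in a few lines of Python. This is an illustrative structure only, not Copilot Health's actual output format: the `Provenance` labels, the `Statement` type and the `render` helper are hypothetical names, and the statement texts are placeholders.

```python
from dataclasses import dataclass
from enum import Enum


class Provenance(Enum):
    PERSONAL_DATA = "from your data"
    GENERAL_GUIDANCE = "general guidance"


@dataclass(frozen=True)
class Statement:
    text: str
    provenance: Provenance
    reference: str  # which upload or which licensed source backs the statement


def render(statements: list[Statement]) -> str:
    """Render one labelled line per statement so its origin stays visible."""
    return "\n".join(
        f"[{s.provenance.value}: {s.reference}] {s.text}" for s in statements
    )


# Placeholder content; neither line is real medical guidance.
answer = [
    Statement("Placeholder: a trend computed from the user's linked readings.",
              Provenance.PERSONAL_DATA, "wearable export"),
    Statement("Placeholder: a general explanation taken from reviewed content.",
              Provenance.GENERAL_GUIDANCE, "licensed medical content"),
]

print(render(answer))
```

Keeping the label on each statement, rather than on the answer as a whole, is what lets a reader separate what came from their own records from what is generic advice.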

Strengths: where Copilot Health could genuinely help

- Lowering the friction to understanding personal data. Many people leave lab PDFs unread or misinterpret trends from wearable dashboards. Copilot Health promises to translate that raw output into digestible insights—a clear win for health literacy.

- Bridging consumer and clinical contexts. If summaries are accurate, they can help patients prepare better questions for visits and reduce clinic time wasted on basic explanations. This may especially benefit people who have chronic conditions and frequent lab follow-ups.

- Grounding answers in licensed, medically reviewed sources. Microsoft’s move to license content from established medical publishers is a practical mitigation for the common problem of LLM hallucinations in health contexts. That editorial grounding could improve safety and credibility.

- Ecosystem leverage. Microsoft can combine Copilot Health functionality with its enterprise health tools (e.g., Dragon Copilot) and cloud infrastructure to scale features and potentially integrate with provider workflows where regulatory and contractual frameworks permit.

Risks and unresolved questions: a clear-eyed appraisal

For all its promise, Copilot Health raises several nontrivial issues that have to be managed responsibly.

- Privacy and data governance. Health data is among the most sensitive categories imaginable. Even with opt-in connectors, the question of how long Microsoft retains copies, how it uses them to improve models, and whether data is stored in consumer versus clinical systems is central. Early previews suggest Microsoft will offer “privacy-focused” modes, but the exact retention, processing and model-training policies require explicit, auditable detail.

- Regulatory and liability ambiguity. Consumer-facing AI that interprets medical data sits in a contested regulatory gray zone. Microsoft has spent heavily on enterprise healthcare compliance and partnerships, but a consumer Copilot that summarizes EHRs and suggests next steps creates a potential vector for harm if advice is incorrect or misleading. Who bears liability if a suggestion is acted on and leads to an adverse outcome: the user, Microsoft, or a clinician who relies on AI output? Current guidance from Microsoft stresses that Copilot is not a replacement for professional medical advice, but the landscape remains unsettled.

- Accuracy of sensor and model interpretation. Wearable signals are noisy, prone to false positives (arrhythmia flags that are benign, for example), and heavily context-dependent. Automated trend detection and causal inferences—“your heart rate spiked because of X”—are challenging even for clinical-grade analytics. Consumers may trust polished-sounding AI explanations more than they should. That trust gap is dangerous in health contexts.

- Data provenance and source-mixing. Copilot Health’s value depends on mixing personal data with editorial content. If the assistant fails to clearly attribute which statements are derived from your specific data versus general medical guidance, users could misinterpret generic advice as tailored clinical recommendations. Microsoft’s licensing of reputable sources is a mitigation, but implementation details matter.

- Equity and access. The initial U.S.-only rollout and platform limitations (iOS/web early availability) risk reinforcing a two-tiered system where only certain users can access AI-mediated health literacy tools, potentially widening existing health information gaps.
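
The point about noisy wearable signals can be illustrated with a small Python sketch. It compares a naive rule that flags any single reading above a limit with a rule that flags only when the median of the last few readings is above it. This is not a clinical algorithm and not how Copilot Health works: the function names, the limit of 90, the window of 3 and the sample values are all made up for illustration.

```python
from statistics import median


def naive_flags(readings: list[float], limit: float) -> list[int]:
    """Flag every index whose single reading exceeds the limit."""
    return [i for i, r in enumerate(readings) if r > limit]


def sustained_flags(readings: list[float], limit: float, window: int) -> list[int]:
    """Flag an index only when the median of the last `window` readings exceeds the limit."""
    flags = []
    for i in range(window - 1, len(readings)):
        if median(readings[i - window + 1 : i + 1]) > limit:
            flags.append(i)
    return flags


# Made-up heart rate samples (bpm): one isolated spike at index 3,
# then a sustained rise from index 7 onward.
samples = [60, 61, 59, 110, 60, 62, 61, 95, 97, 96, 98]

print(naive_flags(samples, limit=90))                # [3, 7, 8, 9, 10]
print(sustained_flags(samples, limit=90, window=3))  # [8, 9, 10]
```

The naive rule raises an alert on the isolated spike, which may be nothing more than sensor noise, while the median rule ignores it at the cost of reacting one reading later to the sustained rise. Neither rule says anything about why the readings changed, which is the harder causal question the article raises.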

How Microsoft appears to be mitigating danger (and where it may fall short)

Microsoft’s playbook for safer Copilot Health includes several visible elements:

- Licensing trusted medical content to ground responses and reduce hallucination risk. That editorial layer is intended to make answers safer and auditable.

- A dedicated “Health” space inside Copilot that separates medical conversations from general queries, enabling different UX guardrails and data handling rules.

- Limited previews and staged rollouts to U.S. users and selected platforms—an opportunity to iterate policies and controls before wider release.

That playbook still leaves several gaps:

- Model training and reuse transparency. It is unclear to outside observers whether and how Microsoft will use uploaded personal health data to train or refine models, and what opt-outs truly mean in practice. This is a core governance question that must be answered publicly.

- Independent verification. Promises of grounding and safety are only meaningful with external audits and peer-reviewed evaluations of error modes in real-world scenarios—especially for alert generation from wearables. So far, independent results are limited.

- Clear clinical workflow integration. Helping patients prepare for appointments is valuable only if clinicians can quickly interpret the AI’s outputs; that requires standardized export formats and clinical governance agreements, which are not yet fully documented for the consumer preview.

Practical guidance for users who want to try Copilot Health—or avoid it

If you’re considering Copilot Health in preview, here are practical, platform-agnostic steps and considerations:

- Review permission prompts carefully. Only connect the minimum datasets you’re comfortable sharing (e.g., upload a PDF lab report rather than linking your entire EHR store).

- Favor ephemeral or local-first workflows when available. If the app offers an option to keep processing on your device or to delete uploads after analysis, prefer that mode. Confirm data retention timelines in the app’s privacy settings.

- Treat Copilot’s output as informational—not a diagnosis. Use summaries to prepare questions for your clinician, not as a replacement for clinical judgment. Microsoft itself warns Copilot is not a substitute for professional medical advice.

- Keep a copy of the original outputs. If you intend to bring AI-generated summaries to a clinician, save the AI’s report and the raw inputs (PDFs, wearable CSVs) so a clinician can verify the bases of those claims.

- Check service scope and availability. Early previews can limit platform and region; if you’re outside the U.S. or not on the supported client, you may not have access yet.

The competitive and policy landscape: where Copilot Health sits in the market

Copilot Health is not happening in a vacuum. Large AI vendors and healthcare platforms are all pursuing consumer and clinician-facing health tools: OpenAI launched ChatGPT Health, Anthropic has built health connectors into Claude, and other cloud vendors are advancing clinical copilots for hospitals. Microsoft’s distinct advantage is scale: consumer front-ends across Windows and Office, enterprise relationships with health systems, and licensing deals with medical publishers. But that same scale amplifies the risk profile and regulatory scrutiny.

Policy makers are watching. The presence of consumer health AI raises questions about HIPAA boundaries (when a developer is a “business associate”), cross-border data flows, and whether consumer-facing tools should be subject to medical-device or software-as-a-medical-device scrutiny when they make diagnostic assertions. Early Microsoft messaging emphasizes caution and “not a replacement for professional medical advice,” but regulators will likely want deeper technical and clinical evaluations.

What to watch next: four signals that will determine how this plays out

- Transparency on data use and retention. Will Microsoft publish clear, machine-readable policies about how uploaded personal health data is stored and whether it is used in model training? Public, auditable promises would be a major trust signal.

- Independent audits and safety studies. Peer-reviewed assessments of Copilot Health’s error rates, false alarms from wearable data, and clinical concordance would move this from marketing to credible product.

- Regulatory engagement and compliance artifacts. Explicit statements about HIPAA applicability, data processing agreements, and medical‑device assessment (if any) would clarify legal exposure for providers and users.

- Provider adoption patterns. If health systems begin to accept and incorporate Copilot‑generated summaries into workflows (with verification steps), that indicates the product is crossing from consumer novelty to clinical utility. Conversely, clinician pushback would highlight gaps that still need to be closed.

Final analysis: an inherently useful idea that demands disciplined execution

Copilot Health sits at a compelling intersection: most people want help making sense of labs and wearables, and modern LLMs plus clinical content partnerships can plausibly narrow that gap. Microsoft’s advantage (horizontal reach across consumer and enterprise products, plus relationships inside healthcare) gives it a plausible pathway to build a meaningful product. Reporting so far describes a carefully staged rollout with editorial grounding and privacy-first messaging, which is the right starting posture for an inherently risky domain.

That said, success will be measured less by marketing and more by measurable safety, transparency and governance. The real test for Copilot Health is not whether it can summarize a wearable trend (it probably can), but whether it can do so reliably, explainably and with controls that protect people’s most sensitive data. Without independent audits, stronger transparency about training and retention, and clear clinical integration pathways, Copilot Health risks being a well-engineered convenience that nevertheless leaves unresolved questions about liability, fairness and privacy.

For readers: treat early previews as experiments, keep control over your data permissions, and use AI outputs as a conversation starter with trained clinicians—not as a replacement. Microsoft’s preview is a major step in consumer health AI; if executed with ongoing transparency and third‑party validation, it could raise the baseline for how ordinary people access and understand their own health information. If executed without those guardrails, it could amplify existing problems of misinformation and privacy risk. Either way, this is one of the most consequential consumer AI experiments to watch in 2026—and it deserves a rigorous, skeptical public conversation as it scales.

Source: PCWorld, “Now Copilot wants to check your vitals, too”

Source: Digital Trends, “Microsoft reveals Copilot Health, an AI to make sense of your wearable and medical reports”